The IBM POWER8 Review: Challenging the Intel Xeon

by Johan De Gelas on November 6, 2015 8:00 AM EST - Posted in

- IT Computing

- CPUs

- Enterprise

- Enterprise CPUs

- IBM

- POWER

- POWER8

Floating Point: C-ray

Shifting over from integer to floating point benchmarks, we have C-ray. C-ray is an extremely simple ray tracer that is not representative of any real-world ray-tracing application. In fact, it is essentially a floating point benchmark that runs out of the L1 cache. Luckily, it is not as synthetic and meaningless as Whetstone, as you can actually use the software to do simple ray tracing. That is not the kind of benchmark we like to use for evaluating server CPUs, but since our first efforts to port some of our favorite applications to OpenPOWER failed, we settled for something easier. We knew we would have the POWER8 system for only a few weeks, so we had to play it safe.

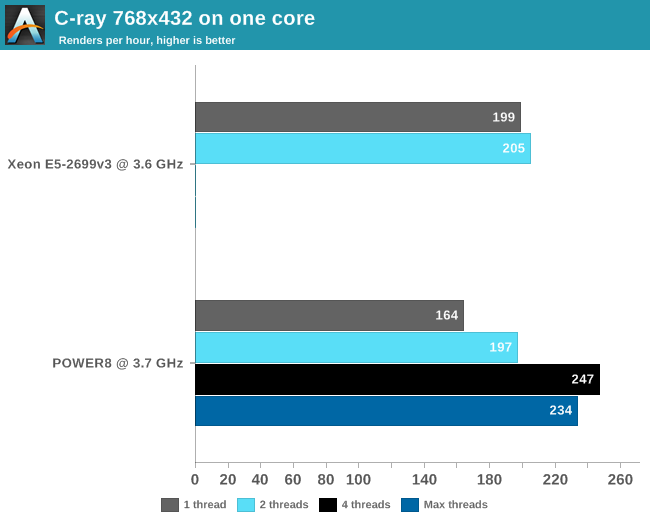

First we compiled the multi-threaded version of C-ray with -O3 -ffast-math. To better understand single-core performance, we used taskset to limit C-ray to one or two threads (CPUs 0 and 18) on the Haswell-based Xeon and to one through eight threads on the POWER8. We also kept the output resolution at 768x432 to keep render times in check. The "sphfract" scene file was used as input.
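The setup above can be sketched as a few command lines. This is a minimal Python sketch that only builds the commands (it does not run them); the binary and source names, the c-ray-mt options (-t, -s, -i, -o), the output file name, and the assumption that logical CPUs 0-7 are the eight SMT threads of the first POWER8 core are all illustrative assumptions, not a record of the exact commands used for this review.

```python
import shlex

# Hypothetical helpers that only BUILD the command lines for a pinned C-ray run.
# The binary/source names and the c-ray-mt options (-t, -s, -i, -o) are assumptions.

def compile_cmd(src="c-ray-mt.c", out="c-ray-mt"):
    # Optimisation flags from the text; -lm for libm, -pthread for the worker threads
    return ["gcc", "-O3", "-ffast-math", "-pthread", "-o", out, src, "-lm"]

def run_cmd(cpus, width=768, height=432, scene="sphfract",
            output="out.ppm", binary="./c-ray-mt"):
    cpus = list(cpus)
    # taskset -c restricts the process to the listed logical CPUs;
    # we start exactly one render thread per pinned CPU
    return ["taskset", "-c", ",".join(str(c) for c in cpus), binary,
            "-t", str(len(cpus)), "-s", f"{width}x{height}",
            "-i", scene, "-o", output]

if __name__ == "__main__":
    print(shlex.join(compile_cmd()))
    # Haswell Xeon: one thread on CPU 0, then two threads on CPUs 0 and 18
    for cpus in ([0], [0, 18]):
        print(shlex.join(run_cmd(cpus)))
    # POWER8: 1 to 8 threads; assumes logical CPUs 0-7 are the SMT8 threads of core 0
    for n in range(1, 9):
        print(shlex.join(run_cmd(range(n))))
```

Matching the thread count to the number of pinned CPUs keeps each render thread on its own hardware thread, which is what makes the one-core comparison meaningful.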

Real floating point intensive applications tend to put the memory subsystem under pressure, and running a second thread only makes it worse. So we are used to seeing many HPC applications perform worse with multi-threading enabled. But since C-ray runs mostly out of the L1 cache, we get different behavior. Still, 8 threads of floating point work seem to be too much: the POWER8 delivers its best FP performance at 4 threads. At that point, the POWER8 core delivers 20% higher floating point performance than the Haswell Xeon core.
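Since C-ray reports a render time, "performance" here is the inverse of that time. The minimal Python sketch below shows how the best thread count and the relative advantage fall out of such timings; the helper names are hypothetical and the timings in the usage part are made up purely for illustration, they are NOT measurements from this review.

```python
# Hypothetical helpers: performance is taken as inversely proportional to render time.

def best_thread_count(times):
    """times maps thread count -> render time in seconds; return the fastest setting."""
    return min(times, key=times.get)

def relative_speedup(t_ref, t_test):
    """How much faster t_test is than t_ref, as a fraction (0.2 means 20% faster)."""
    return t_ref / t_test - 1.0

if __name__ == "__main__":
    # Made-up timings for illustration only, NOT data from this article
    power8 = {1: 40.0, 2: 24.0, 4: 15.0, 8: 16.5}
    haswell_best = 18.0
    n = best_thread_count(power8)
    print(n, f"{relative_speedup(haswell_best, power8[n]):.0%}")
```

Note that a 20% higher throughput corresponds to a render time that is about 17% shorter (1/1.2 ≈ 0.83), not 20% shorter.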

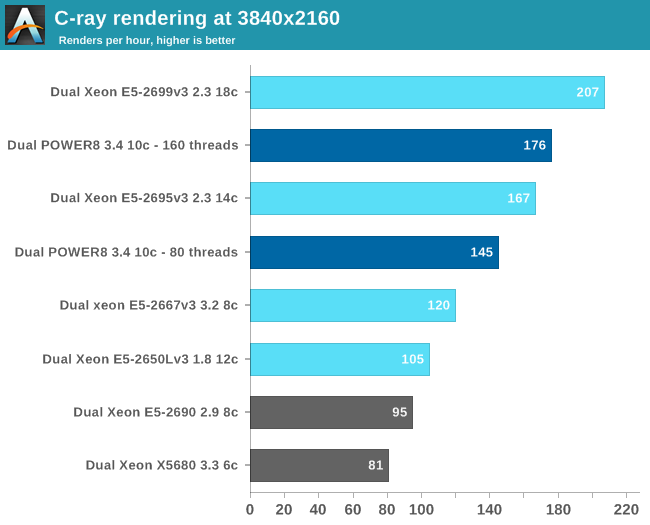

Next we used all 160 (20 cores x 8 SMT threads) or 72 (36 cores x 2 SMT threads) threads and increased the resolution to 3840x2160.
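The thread counts and the growth in workload can be checked with a few lines of arithmetic. A minimal Python sketch; the function names are purely illustrative:

```python
def total_threads(cores, smt):
    # Hardware threads = physical cores x SMT threads per core
    return cores * smt

def pixels(width, height):
    return width * height

if __name__ == "__main__":
    print(total_threads(20, 8))   # POWER8: 20 cores x SMT8 -> 160
    print(total_threads(36, 2))   # Xeon E5-2699 v3: 36 cores x 2 -> 72
    # 3840x2160 versus 768x432: both dimensions grow 5x, so 25x more pixels
    print(pixels(3840, 2160) / pixels(768, 432))   # 25.0
```

Because each frame now has 25 times as many pixels, there is far more independent work per run, which is what keeps 160 or 72 threads busy.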

With a core count that is 80% higher, there is nothing stopping the Xeon E5-2699 v3 from taking the top spot. Still, the POWER8 delivers solid performance and outperforms the slower Xeon E5-2695 v3 by 5%. Although the real-world relevance of this benchmark is small, we now have an idea of how good the "basic FP" performance is. In real-world applications, by contrast, the use of AVX2/VSX and the available memory bandwidth will also play a role.
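The 80% figure follows from the core counts used in the full-load run, 36 Haswell cores in the Xeon E5-2699 v3 system versus 20 POWER8 cores:

$$\frac{36 - 20}{20} = \frac{16}{20} = 0.8 = 80\%$$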

146 Comments


psychobriggsy - Friday, November 6, 2015

So you are complaining that your job's selection of hardware has made you earn twice as much?

dgingeri - Friday, November 6, 2015

No, because I don't earn twice as much. I'm not fully trained in AIX, so I have to muddle my way through dealing with the test machines we have. We don't use them as full production machines, just for testing software for our customers. (Which means I have to reinstall the OS on at least one of those machines about every month or so. That is a BIG pain in the behind due to the boot procedure. Where it takes a couple of hours to reinstall Windows or Linux, it takes a full day to do it on an AIX machine.)

I'm trying to advise people NOT to use AIX. It's an awful operating system. I'm also advising people NOT to use IBM Power-based machines because they are extremely aggravating to work on. Overall, it costs much more to run IBM Power machines, even if they aren't running AIX, than it does to run x86 machines. The up-front cost might look competitive, but the maintenance costs are huge. Running AIX on them makes it an order of magnitude more expensive.

serpint - Friday, November 6, 2015

I suggest reading the NIM A-Z handbook. It shouldn't take you more than 10 minutes to deploy an AIX system, fully built and installed with software. As with Linux, it also shouldn't take more than about 10 minutes to install and fully deploy a server if you have any experience scripting installs.

The developerWorks community inside IBM is possibly the best free resource you could hope for. So is the redbooks.ibm.com site.

Compared to most *NIX flavors, AIX is UNIX for dummies.

agtcovert - Tuesday, November 10, 2015

If you had a NIM server set up and were using LPARs, loading a functional image of AIX should take 10 minutes flat on a 1G network.

If you're loading AIX on a physical machine without using the virtualization, you're wasting the server.

agtcovert - Tuesday, November 10, 2015

I've worked on AIX platforms extensively for about the same amount of time. First, most of these purchases go through a partner and yours must've sucked because we got great support from our IBM partner -- free training, access to experts, that sort of thing.

Second, I always love the complaining about the cost of the hardware, etc. If you're buying big iron Power servers, the maintenance cost should be near irrelevant. And again, your partner should take care to negotiate that into the deal for 3-5 years ensuring you have access to updates.

The other thing no one ever talks about is *why* you buy these servers. Why do they take so long to boot? Well, for the frame itself, it's a deep POST. But then, mine were never rebooted in 4 years, and that's with firmware upgrades (online) and a couple of interface card swaps (also done online with no service disruption). Do that on x86. So reason #1 -- RAS, at the hardware level. Seriously, how often did you need to reboot the frame?

Reason #2 -- for large enterprises, you can do so much with these with relatively few cores that they lead to huge licensing savings on Oracle and IBM software. For us, it was over $1m a year, ongoing. And no, switching to other software was not an option. We could run an Oracle RAC on 4 cores of POWER7 (at the time) versus the 32 x86 cores it ran on previously. That saves a lot of $.

The machine reviewed does not run AIX. It's Linux only. So the maintenance, etc. you mention isn't even relevant.

There are still things that are annoying, I suppose. AIX is steeped in legacy to some degree, and certainly not as easy to manage as a Linux box. But there are a lot of guides out there for free -- it took me about a month to be fully productive. And the support costs you pay for -- well, if I ran into a wall, I just opened a PMR. IBM was always helpful.

nils_ - Wednesday, November 11, 2015

I'm mostly working in Linux DevOps now, but I remember dreading having to use all the "classic" Unix machines at my first "real" job 12 years ago. We ran a few IRIX and AIX boxes which were ancient even back then. Hell, even the first thing I did on my work MacBook was to replace the BSD userland with GNU wherever possible.

It's hard to find any information on them, and any learning materials are expensive and usually on dead trees. They pretty much want to sell training, consulting etc. along with the often non-competitive hardware prices, since these companies don't actually WANT to sell hardware. They want to sell everything that surrounds it.

retrospooty - Friday, November 6, 2015

The problem with server chips is that it's about platform stability. IBM (and others) dropped off the face of the Earth, and as mentioned above, Intel now has 95% of the market. This chip looks great, but will companies buy into it en masse? What if IBM makes another choice to drop off the face of the Earth again and your platform is dead-ended? I would have to think long and hard about going with them at this point.

FunBunny2 - Friday, November 6, 2015

Not likely. The mainframe z machines are built using POWER blocks.

Kevin G - Friday, November 6, 2015

POWER and System Z are two different architectures. Case in point, POWER is a RISC design introduced in the 1990s, whereas the System Z mainframes can trace their roots to a CISC design from the 1960s (and it is still possible to run some of that 1960s code unmodified).

They do share a handful of common parts (think the CDIMMs) to cut down on support costs.

plonk420 - Friday, November 6, 2015

can you run an x264 benchmark on it?? x)