The IBM POWER8 Review: Challenging the Intel Xeon

by Johan De Gelas on November 6, 2015 8:00 AM EST

Posted in: IT Computing, CPUs, Enterprise, Enterprise CPUs, IBM, POWER, POWER8

Database Performance: MySQL

Neither MySQL nor PostgreSQL scales well enough to make use of 72 threads (Dual Xeon E5), let alone 160 threads (Dual POWER8). We installed Percona MySQL Server 5.6, which is the most scalable InnoDB-based MySQL server.

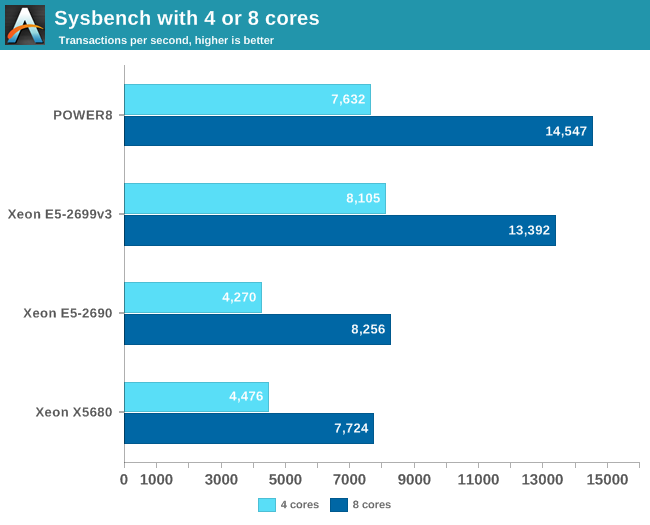

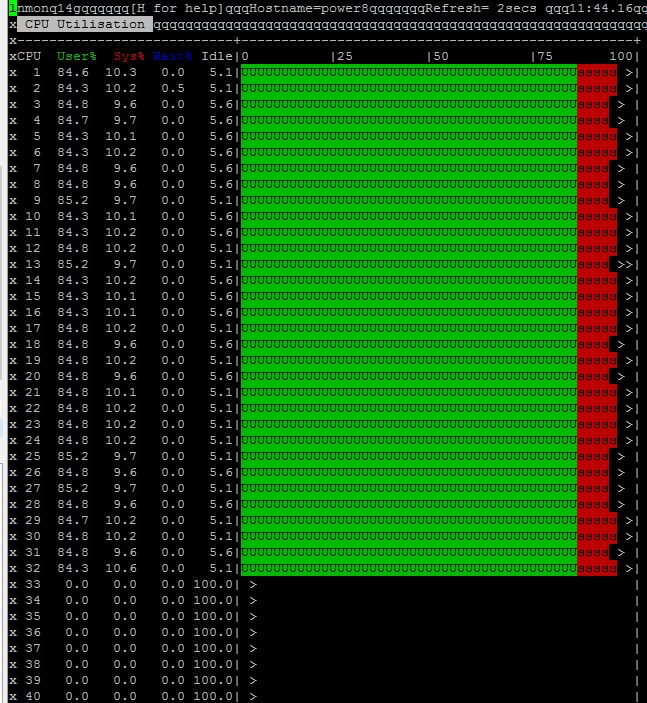

We used the MySQL Sysbench benchmark, but we limited MySQL with taskset to run on 4 or 8 physical cores. We verified that this was actually the case by running "nmon" on top of the IBM server.

You can clearly see that the first 32 threads are used (CPU 0 - 7).
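To make the pinning concrete, here is a minimal sketch of how a CPU list for `taskset -c` could be derived. It assumes that the hardware threads of each core are numbered contiguously (with SMT-8, core 0 owns logical CPUs 0 to 7), which is consistent with 4 cores showing up as the first 32 threads. The helper name `cpu_list` and the `<mysqld command>` placeholder are illustrative and not necessarily the exact commands used for this review.

```python
def cpu_list(cores: int, threads_per_core: int) -> str:
    """Return a taskset-style CPU range covering the first `cores` cores.

    Assumption: logical CPUs are numbered contiguously per core, so core 0
    owns CPUs 0..threads_per_core-1, core 1 owns the next block, and so on.
    """
    if cores < 1 or threads_per_core < 1:
        raise ValueError("cores and threads_per_core must be positive")
    last = cores * threads_per_core - 1
    return f"0-{last}"


# POWER8 with SMT-8: 4 cores cover 32 hardware threads, 8 cores cover 64.
for cores in (4, 8):
    print(f"taskset -c {cpu_list(cores, 8)} <mysqld command>")
```

On systems where sibling threads are not numbered contiguously, the range has to be built from the actual topology (for example as reported by `lscpu`) rather than from this simple formula.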

Sysbench allows us to place an OLTP load on a MySQL test database, and you can choose the regular test or the read-only test. We chose read-only because, even with solid state storage, Sysbench is quickly disk I/O limited.

We tested with 10 million records and 100,000 requests. The main reason why we tested with Sysbench is to get a huge number of queries that only select very small parts (a few rows or just one) of the tables. Sysbench allows you to test with any number of threads you like, but there is no "think time" feature. That means all queries fire off as quickly as possible, so you cannot simulate "light" and "medium" loads.

The response times are very small, which is typical for an OLTP test. To take them into account, we are showing you the highest throughput achieved at a response time of around 3-4 ms. As the results tend to vary a bit, we give you the average of three runs.

With only 4 cores active, the Xeon E5-2699 v3 is still running at 3.3 GHz. Once we use 8 cores, the clock speed drops to 2.9 GHz, and the POWER8 outperforms the best Xeon by a small margin. However, we are only testing a part of each CPU, similar to running only one VM. Extrapolating linearly to all cores, total performance works out as follows:

- the POWER8 will be around 36k (14400/8 * 20 cores)

- the Xeon E5-2699 v3 will be around 60k (13400/8 * 36 cores)

- the Xeon E5-2695 v3 will be around 45k (13000/8 * 28 cores)
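The extrapolation in the list above is simple linear scaling, and it can be reproduced with a short sketch. The throughput figures and core counts are the ones quoted in the list; the function name `extrapolate` is just illustrative, and the calculation assumes perfect scaling from 8 cores to the full dual-socket system, which real hardware is unlikely to match exactly.

```python
# 8-core Sysbench throughput and total core count per dual-socket system,
# taken from the figures quoted in the list above.
systems = {
    "POWER8": (14400, 20),
    "Xeon E5-2699 v3": (13400, 36),
    "Xeon E5-2695 v3": (13000, 28),
}


def extrapolate(throughput_8_cores: float, total_cores: int) -> float:
    """Scale an 8-core result linearly to all cores (assumes perfect scaling)."""
    return throughput_8_cores / 8 * total_cores


for name, (throughput, cores) in systems.items():
    print(f"{name}: about {extrapolate(throughput, cores):,.0f}")
```

The per-core throughput of the POWER8 (1800) is the highest of the three, so the gap in the totals comes entirely from the core count.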

So the current MySQL performance on top of POWER8 is good, but MySQL still runs a lot better on the Xeons.

146 Comments


usernametaken76 - Thursday, November 12, 2015 - link

Technically this is not true. IBM had a working version of AIX running on PS/2 systems as late as the 1.3 release. Unfortunately support was withdrawn and future releases of AIX were not compiled for x86 compatible processors. One can still find a copy of this release if one knows where to look. It's completely useless to anyone but a museum or curious hobbyist, but it's out there.

zenip - Friday, November 13, 2015 - link

...>--click here-

Steven Perron - Monday, November 23, 2015 - link

Hello Johan,

I was reading this article, and I found it interesting. Since I am a developer for the IBM XL compiler, the comparisons between GCC and XL were particularly interesting. I tried to reproduce the results you are seeing for the LZMA benchmark. My results were similar, but not exactly the same.

When I compared GCC 4.9.1 (I know, a slightly different version than yours) to XL 13.1.2 (I assume this is the version you used), I saw XL consistently ahead of GCC, even when I used -O3 for both compilers.

I'm still interested in trying to reproduce your results, so I can see what XL can do better, so I have a couple questions on areas that could be different.

1) What version of the XL compiler did you use? I assumed 13.1.2, but it is worth double checking.

2) Which version of the 7-zip software did you use? I picked up p7zip 15.09.

3) Also, I noticed when the Power 8 machine was running at full capacity (for me that was 192 threads on a 24 core machine), the results would fluctuate a bit. How many runs did you do for each configuration? Were the results stable?

4) Did you try XL at the less aggressive and more stable options like "-O3" or "-O3 -qhot"?

Thanks for your time.

Toyevo - Wednesday, November 25, 2015 - link

Other than the ridiculous price of CDIMMs the power efficiency just doesn't look healthy. For data centers leasing their hardware like Amazon AWS, Google AppEngine, Azure, Rackspace, etc, clients who pay for hardware yet fail to use their allocation significantly help the bottom line of those companies by reduced overheads. For others high usage is a mandatory part of the ROI equation during its period as an operating asset, thus power consumption is a real cost. Even with our small cluster of 12 nodes the power efficiency is a real consideration, let alone companies standardizing toward IBM and utilising 100s or 1000s of nodes that are arguably less efficient.

Perhaps you could devise some sort of theoretical total cost of ownership breakdown for these articles. My biggest question after all of this is, which one gets the most work done with the lowest overheads. Don't get me wrong though, I commend you and AnandTech on the detail you already provide.

AstroGuardian - Tuesday, December 8, 2015 - link

It's good to have someone challenging Intel, since AMD crap their pants on regular basis

dba - Monday, July 25, 2016 - link

Dear Johan:

Can you extrapolate how much faster the Sparc S7 will be in your Cluster Benchmarking, if the 2 on Die Infiniband ports are Activated, 5, 10, 20% ???

Thank You, dennis b.