The Mobile CPU Core-Count Debate: Analyzing The Real World

by Andrei Frumusanu on September 1, 2015 8:00 AM EST. Posted in: Smartphones, CPUs, Mobile, SoCs

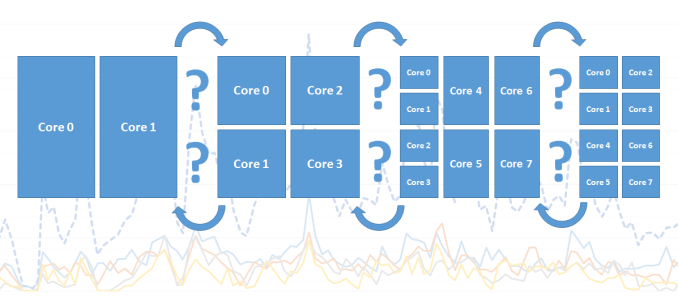

Over the last 5 years the mobile space has seen a dramatic change in terms of performance of smartphone and tablet SoCs. The industry has seen a move from single-core to dual-core to quad-core processors to today’s heterogeneous 6-10 core designs. This was a natural evolution similar to what the PC space has seen in the last decade, only at a much more accelerated pace. While ILP (Instruction-level parallelism) has certainly also gone up with each new processor architecture, with designs such as ARM’s Cortex A15 or Apple’s Cyclone processor cores bringing significant single-threaded performance boosts, it’s the increase in the number of CPU cores that has been the simplest way of increasing overall computing power.

This increase in core counts has prompted many discussions about just how much sense such designs make in real-world use. I can still remember that when the first quad-cores were introduced, users were arguing about the benefit of 4 cores in mobile workloads and claiming that these increases were done just for the sake of marketing. I can draw parallels between those discussions from a few years ago and today’s arguments about 6 to 10-core SoCs based on big.LITTLE.

While there have been some attempts to analyse the core-count debate, I was never really satisfied with the methodology and results of these pieces. The existing tools for monitoring CPUs just don’t cut it when it comes to accurately analysing the fine-grained events that dictate the management of multi-core and heterogeneous CPUs. To try to finally have a proper analysis of the situation, for this article, I’ve tried to approach this issue from the ground up in an orderly and correct manner, and not relying on any third-party tools.

Methodology Explained

I should start with a disclaimer: because the tools required for such an analysis rely heavily on the Linux kernel, this analysis is constrained to the behaviour of Android devices and doesn't necessarily represent the behaviour of devices on other operating systems, in particular Apple's iOS. As such, any comparisons between such SoCs should be limited purely to theoretical scenarios where a given CPU configuration would be running Android.

The Basics: Frequency

Traditionally when wanting to log what the CPU is doing, most users would think of looking at the frequency which it is currently running at. Usually this gives a rough idea to see if there is some load on the CPU and when it kicks into high gear. The issue with this is the way one captures the frequency: the readout sample will always be a single discrete value at a given point in time. To be able to accurately get a good representation of the frequency one would need to have a sample rate of at least twice as fast as the CPU’s DVFS mechanism. Mobile SoCs now can switch frequency at intervals of down to 10-20ms, and even have unpredictable finer-grained switches which can be caused by QoS (Quality of Service) requests.

Sampling at anything under twice the DVFS switching rate can lead to inaccurate data, for example with periodic short high bursts. Take a sample period of 1s: imagine that we read the frequency out at 0.1s and 1.1s in time. Each of these readouts would show either a high or a low frequency. What happens in-between, though, is not captured, and because the switching speed is so high, we can miss out on 90%+ of the true frequency behaviour of the CPU.
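To make the sampling problem concrete, here is a minimal sketch of a purely hypothetical CPU (the frequencies, burst length and period are invented for illustration, not measured) that bursts to a high frequency for 20ms out of every 200ms. Reading the frequency once per second at 0.1s, 1.1s and so on never sees a single burst:

```python
def frequency_at(t_ms):
    """Hypothetical CPU: 1500 MHz for the first 20 ms of every 200 ms, else 400 MHz."""
    return 1500 if t_ms % 200 < 20 else 400


duration_ms = 10_000

# Ground truth: share of time at the high frequency, evaluated every millisecond.
true_high = sum(frequency_at(t) == 1500 for t in range(duration_ms)) / duration_ms

# Discrete readouts once per second, at 0.1 s, 1.1 s, 2.1 s, ...
samples = [frequency_at(t) for t in range(100, duration_ms, 1000)]
sampled_high = samples.count(1500) / len(samples)

print(f"true share at 1500 MHz:    {true_high:.0%}")
print(f"sampled share at 1500 MHz: {sampled_high:.0%}")
```

In this contrived case the readouts report a CPU that never leaves its lowest frequency, even though it spends a tenth of its time at the highest one.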

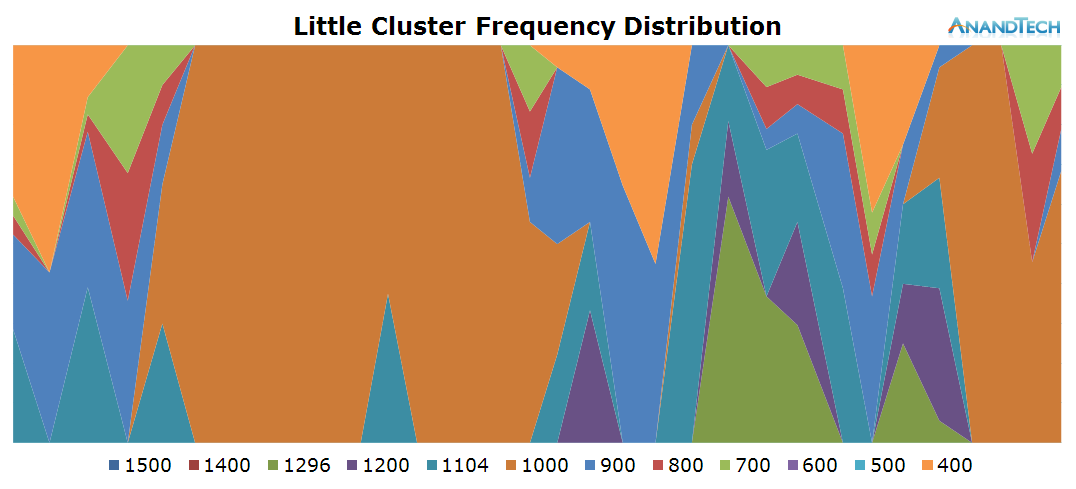

Instead of going the route of logging the discrete frequency at a very high rate, we can do something far more accurate: Log the cumulative residency time for each frequency on each readout. Since Android devices run on the Linux kernel, we have easy access to this statistic provided by the CPUFreq framework. The time-in-state statistics are always accurate because they are incremented by the kernel driver asynchronously at each frequency change. So by calculating the deltas between each readout, we end up with an accurate frequency distribution within the period between our readouts.
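As a rough sketch of this delta approach: on mainline Linux kernels the CPUFreq statistics are typically exposed in `/sys/devices/system/cpu/cpu0/cpufreq/stats/time_in_state`, with one `<frequency in kHz> <time in 10ms units>` pair per line. The helper names and the two readouts below are made up for illustration; on a device one would read the file contents instead of using hard-coded strings.

```python
def parse_time_in_state(text):
    """Parse cpufreq 'time_in_state' text into {frequency_khz: cumulative_ticks}."""
    residency = {}
    for line in text.strip().splitlines():
        freq_khz, ticks = line.split()
        residency[int(freq_khz)] = int(ticks)
    return residency


def frequency_distribution(previous, current):
    """Return the share of time spent at each frequency between two readouts."""
    deltas = {freq: current[freq] - previous.get(freq, 0) for freq in current}
    total = sum(deltas.values())
    if total == 0:
        return {freq: 0.0 for freq in deltas}
    return {freq: delta / total for freq, delta in deltas.items()}


# Two invented readouts (not measured data), taken roughly 200 ms apart.
first = parse_time_in_state("400000 1000\n800000 500\n1500000 200\n")
second = parse_time_in_state("400000 1005\n800000 510\n1500000 205\n")

distribution = frequency_distribution(first, second)
for freq_khz, share in sorted(distribution.items()):
    print(f"{freq_khz // 1000} MHz: {share:.0%}")
```

Because only the cumulative counters are compared, the result does not depend on when exactly within the window the frequency changes happened.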

What we end up with is a stacked time distribution graph such as this:

The Y-axis of the graph is a stacked percentage of each CPU’s frequency state. The X-axis represents the distribution in time, always depending on the scenario’s length. For readability’s sake in this article, I chose an effective ~200ms sample period (Due to overhead on scripting and time-keeping mechanisms, this is just a rough target) which should give enough resolution for a good graphical representation of the CPU’s frequency behaviour.

With this, we now have the first part of our tools to accurately analyse the SoC’s behaviour: frequency.

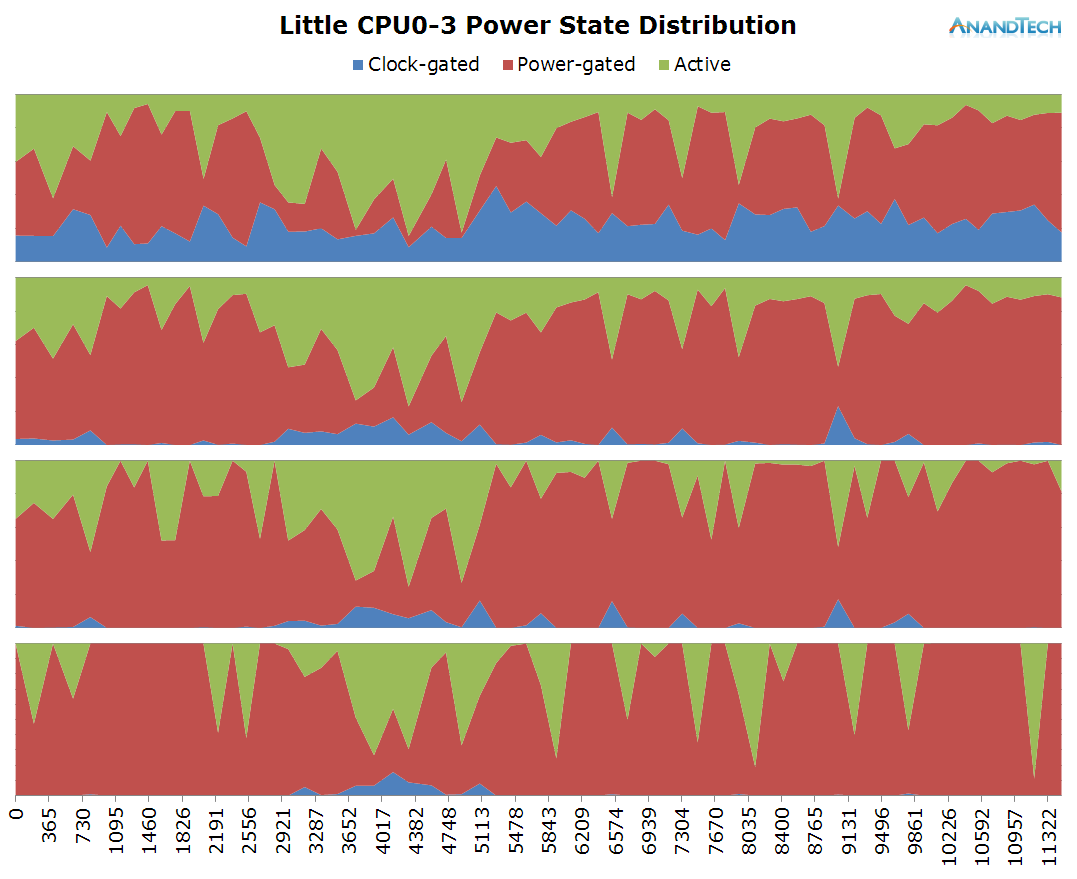

The Details: Power States

While frequency is one of the first metrics that comes to mind when trying to monitor a CPU’s behaviour, there’s a whole other hidden layer that rarely gets exposure: CPU idle states. For readers looking for a more in-depth explanation of how CPUIdle works, I’ve touched upon it, and on how power management of modern SoCs works in general, in our deep dive of the Exynos 7420. These explanations are valid for basically all of today's SoCs based on ARM CPU IP, so they apply to SoCs from MediaTek and ARM-based Qualcomm chipsets as well.

To keep things short, a simplified explanation is that beyond frequency, modern CPUs are able to save power by entering idle states that either turn off the clock or the power to the individual CPU cores. At this point we’re talking about switching times of ~500µs to upwards of 5ms. It is rare to find SoC vendors exposing APIs for live readout of the power states of the CPUs, so this is a statistic one couldn’t even realistically log via discrete readouts. Luckily CPU idle states are still arbitrated by the kernel, which again, similarly to the CPUFreq framework, provides us with aggregate time-in-state statistics for each power state on each CPU.

This is an important distinction to make in today’s ARM CPU cores as (except for Qualcomm’s Krait architecture) all CPUs within a cluster run on the same synchronous frequency plane. So while one CPU can be reported to be running at a high frequency, this doesn’t really tell us what it’s doing and could as well be fully power-gated while sitting idle.

Using the same method as for frequency logging, we end up with an idle power-state stacked time-distribution graph for all cores within a cluster. I’ve labelled the states as “Clock-gated”, “Power-gated” and “Active”. In technical terms, the first two represent the WFI (Wait-For-Interrupt) C1 and power-collapse C2 idle states, while “Active” is the difference between their combined time and the wall-clock, i.e. the time in which the CPU isn’t in any power-saving state.
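The "Active" calculation can be sketched as follows. On mainline Linux the cumulative idle residency is typically available per CPU and per state in `/sys/devices/system/cpu/cpuN/cpuidle/stateM/time`, in microseconds. The function name and the numbers below are illustrative assumptions, not data from the article.

```python
def idle_state_shares(previous_us, current_us, wall_clock_us):
    """Split a sampling window into idle-state shares plus 'Active' time.

    previous_us / current_us map an idle-state label to its cumulative
    residency (microseconds) at the start and end of the window.
    """
    shares = {}
    idle_total_us = 0
    for state, value in current_us.items():
        delta = value - previous_us.get(state, 0)
        idle_total_us += delta
        shares[state] = delta / wall_clock_us
    # Whatever part of the window was not spent in an idle state is active time.
    shares["Active"] = max(wall_clock_us - idle_total_us, 0) / wall_clock_us
    return shares


# Invented cumulative counters for one CPU, 200 ms (200,000 us) apart.
before = {"Clock-gated": 1_000_000, "Power-gated": 4_000_000}
after = {"Clock-gated": 1_050_000, "Power-gated": 4_100_000}

shares = idle_state_shares(before, after, wall_clock_us=200_000)
for state, share in shares.items():
    print(f"{state}: {share:.0%}")
```

The clamp to zero is a defensive choice for the case where the counters and the wall-clock timestamps are read at slightly different moments.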

The Intricacies: Scheduler Run-Queue Depths

One metric that I don’t think has ever been discussed in the context of mobile is the depth of the CPU’s run-queue. In the Linux kernel scheduler the run-queue is a list of processes (the actual implementation involves a red-black tree) currently residing on that CPU. This is at the core of the preemptive scheduling nature of the CFS (Completely Fair Scheduler) process scheduler in the Linux kernel. When multiple processes run on the same CPU, the scheduler is in charge of fairly distributing processing time between the threads based on time-slices and process priority.

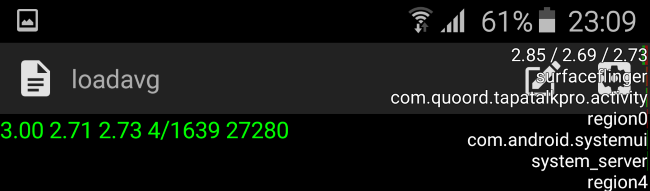

The kernel and Android are able to expose some information on the run-queue through one of the kernel’s procfs nodes. On Android this can be enabled through the “Show CPU Usage” option in the developer options. This gives you three numerical parameters as well as a list of the read-out active processes. The numerical values are the so-called “load average” of the scheduler. It represents the load of the whole system, and it can be used to read how many threads in a system are in use. The three values represent averages for different time-windows: 1 minute, 5 minutes and 15 minutes. Each value can be read as a percentage, so for example 2.85 represents 285%. The way to interpret this is that if we were to consolidate all processes onto as few CPUs as possible, we would theoretically have two CPUs with a load of 100% each (summing up to 200%) as well as a third with a load of up to 85%.
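The 2.85 example can be worked through in a few lines. The sample string follows the format of Linux's `/proc/loadavg` (1, 5 and 15 minute averages first); only the 2.85 comes from the text, the remaining values and the `consolidate_load` helper are made up for illustration.

```python
def consolidate_load(load_average):
    """Spread a load average over as few CPUs as possible.

    Returns per-CPU loads in percent, e.g. 2.85 -> [100, 100, 85].
    """
    total_percent = round(load_average * 100)
    full_cpus, remainder = divmod(total_percent, 100)
    loads = [100] * full_cpus
    if remainder:
        loads.append(remainder)
    return loads


# Invented readout; only the first three fields are the load averages.
sample = "2.85 1.90 1.20 3/812 12345"
one_min, five_min, fifteen_min = (float(value) for value in sample.split()[:3])

print(consolidate_load(one_min))
```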

Now this is very odd, how can the phone be fully using almost 3 cores while I was doing nothing more than idling on the screen with the CPU statistics on? Sadly the kernel scheduler suffers from the same sampling rate issue as explained in our frequency logging methodology. Truth is that the load average statistic is only a snapshot of the scheduler’s run-queues which is updated only in 5-second intervals and the represented value is a calculated load based on the time between snapshots. Unfortunately this statistic is extremely misleading and in no way represents the actual situation of the run-queues. On Qualcomm devices this statistic is even more misleading as it can show load-averages of up to 12 in idle situations. Ultimately, this means it’s basically impossible to get accurate RQ-depth statistics on stock devices.

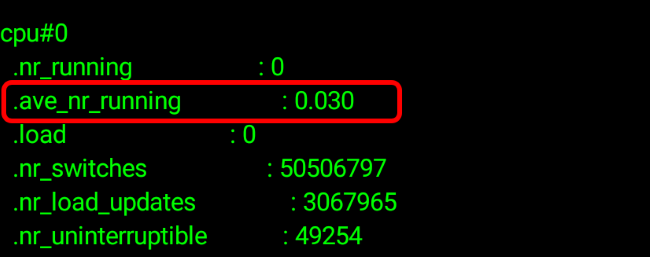

Luckily, I stumbled upon the same issue a few years ago and was aware of a patch authored by Nvidia, which I had used in the past, that introduces detailed rq-depth statistics. It tracks the run-queues accurately and atomically each time a process enters or leaves a run-queue, enabling it to expose a sliding-window average of the run-queue depth of each CPU over a period of 134ms.
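The idea of an event-driven, sliding-window average can be illustrated in user space. To be clear, this is not Nvidia's patch nor kernel code; it is a simplified sketch with hypothetical names that records every enqueue and dequeue with a timestamp and computes a time-weighted average of the depth over the last 134ms. It assumes timestamps are passed in non-decreasing order.

```python
from collections import deque


class RunQueueDepthAverage:
    """Time-weighted average of a run-queue depth over a sliding window."""

    def __init__(self, window_ms=134):
        self.window_ms = window_ms
        self.depth = 0
        # Each entry is (timestamp_ms, depth from that timestamp onwards).
        self.changes = deque([(0, 0)])

    def enqueue(self, now_ms):
        """A thread entered the run-queue."""
        self.depth += 1
        self.changes.append((now_ms, self.depth))

    def dequeue(self, now_ms):
        """A thread left the run-queue."""
        self.depth -= 1
        self.changes.append((now_ms, self.depth))

    def average(self, now_ms):
        """Average depth over the window that ends at now_ms."""
        start_ms = now_ms - self.window_ms
        # Drop entries that were superseded before the window began.
        while len(self.changes) > 1 and self.changes[1][0] <= start_ms:
            self.changes.popleft()
        entries = list(self.changes)
        weighted = 0.0
        for index, (timestamp, depth) in enumerate(entries):
            begin = max(timestamp, start_ms)
            end = entries[index + 1][0] if index + 1 < len(entries) else now_ms
            weighted += depth * max(end - begin, 0)
        return weighted / self.window_ms


rq = RunQueueDepthAverage()
rq.enqueue(1000)   # first thread becomes runnable at t = 1000 ms
rq.enqueue(1067)   # second thread joins halfway through the window
print(rq.average(1134))
```

Because every change is recorded when it happens, polling the average at a low rate does not lose the short-lived spikes that a periodic snapshot would miss.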

Now we have a live pollable average for the scheduler’s run-queues and we can accurately log the number of threads running on the system.

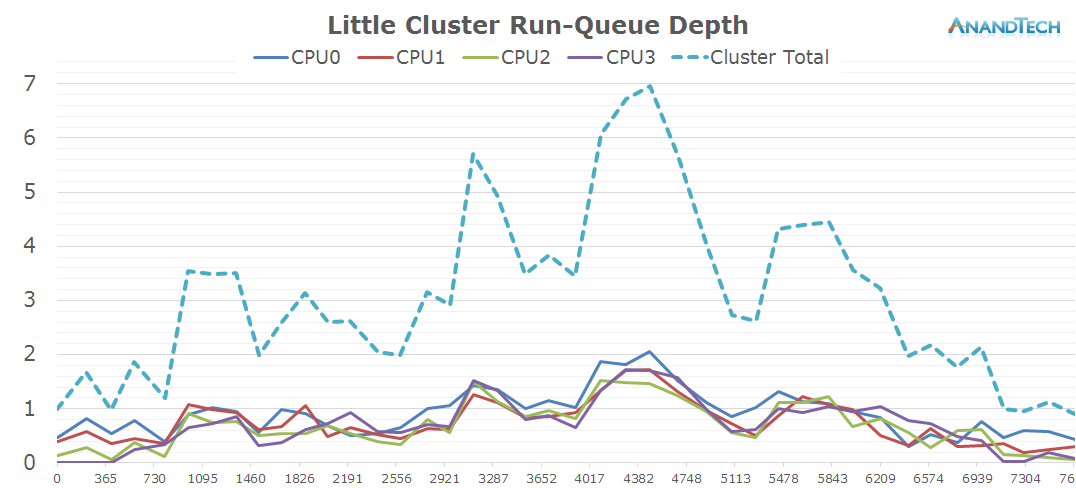

Again, the X-axis throughout the graphs represents the time in milliseconds. This time the Y-axis represents the rq-depth of each CPU. I also included the sum of the rq-depths of all CPUs in a cluster, as well as the sum of both clusters for the system total, in a separate graph.

The values can be interpreted similarly to the load-average metrics, only this time we have a separate value for each CPU. A run-queue depth of 1 means the CPU is loaded 100% of the time, 0.2 means the CPU is loaded by only 20%. Now the interesting metric comes for values above 1: For anything above a rq-depth of 1 it means that the CPU is preempting between multiple processes which cumulatively exceed the processing power of that CPU. For example in the above graph we have some per-CPU peaks of ~2. It means the CPU has at least two threads on that CPU and they each share 50% of the compute-time of that CPU, i.e. they’re running at half speed.
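This reading of the rq-depth values can be written down as a small helper. The function name is hypothetical, and it assumes equal-priority threads that would each keep a CPU fully busy if they had it to themselves, which is a simplification of what the scheduler actually does.

```python
def interpret_rq_depth(depth):
    """Return (cpu_load, per_thread_speed) as fractions for an average rq-depth."""
    # Up to a depth of 1 the value is simply the CPU's load.
    cpu_load = min(depth, 1.0)
    # Above 1 the runnable threads have to share the CPU's time.
    per_thread_speed = 1.0 if depth <= 1.0 else 1.0 / depth
    return cpu_load, per_thread_speed


for depth in (0.2, 1.0, 2.0):
    load, speed = interpret_rq_depth(depth)
    print(f"rq-depth {depth}: CPU loaded {load:.0%}, each thread at {speed:.0%} speed")
```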

The Data And The Goals

On the following pages we’ll have a look at about 20 different real-world often encountered use-cases where we monitor CPU frequency, power states and scheduler run-queues. What we are looking for specifically is the run-queue depth spikes for each scenario to see just how many threads are spawned during the various scenarios.

The tests are run on Samsung's Galaxy S6 with the Exynos 7420 (4x Cortex A57 @ 2.1GHz + 4x Cortex A53 @ 1.5GHz) which should serve well as a representation of similar flagship devices sold in 2015 and beyond.

Depending on the use-cases, we'll see just how many of the cores on today's many-core big.LITTLE systems are used. Together with having power management data on both clusters, we'll also see just how much sense heterogeneous processing makes and just how much benefit one can gain from it.

157 Comments


lilmoe - Tuesday, September 1, 2015

"If 4 threads running on 4 small cores at 50% FMax can be done by one big core at FMin without wasting any cycles, the advantage actually goes to the big core configuration."

That's hardly a real-world or even valid comparison. Things aren't measured that way.

On the chip level, it all boils down to a direct comparison, which by itself isn't telling much because the core configuration of two different chips isn't usually the only difference. Other metrics start to kick in. Those arguing dual-core wide cores are thinking iOS, which by itself invalidates the comparison. We're talking Android here.

On the software side, real life scenarios aren't easy to quantify.

This article simply states the following:

- Android currently has relatively good parallelism capabilities for common workloads,

- Therefore, there is merit in 8 small core and 4x4 big.LITTLE configurations from an efficiency perspective. The latter being beneficial for comparable performance with custom core designs when needed.

Most users are either browsing, texting, or on social media. Most of the games played, BY FAR, are the less demanding ones that usually don't trigger the big cores.

I've said this before in reply to someone else. When QC and Samsung release their custom quad core designs, which do you honestly believe would be more power efficient: those chips as-is, or the same chips with the addition of little cores in big.LITTLE (provided they can be properly configured that way)?

A wise man once said: "efficiency is king".

lilmoe - Tuesday, September 1, 2015

You guys are deliberately stretching the scope to which the findings of this article apply.

Just stop.

It has been clearly stated that this only applies to how Android (and Android Apps) manage to benefit from more cores in terms of efficiency. It was clearly stated that this doesn't apply to other operating systems "iOS in particular".

Nenad - Tuesday, September 1, 2015

I agree.

Especially since any app designed for performance will launch as many threads as needed to use available cores. So looking if "there are more than 4 threads active on 4+4 core CPU" can be misleading. If you run those tests on 2 core CPU, would number of threads remain same or be reduced? How about 10 core CPU?

In other words, only comparing performance and power usage (and not number of threads) would tell us if 4+4 is better than 4 or than 2 cores. Problem with that is finding different CPUs on same technology platform (to ensure only number of cores is different, and not 20nm vs 28nm vs different process etc).

Barring that, comparison of power performance per cost among 4+4 vs 4 vs 2 is also better indicator than comparing number of threads.

TL;DR: it is 'easy' to have more threads than CPU cores, but that indicates neither performance nor power usage.

ThisIsChrisKim - Tuesday, September 1, 2015

The question being answered was this, "On the following pages we’ll have a look at about 20 different real-world often encountered use-cases where we monitor CPU frequency, power states and scheduler run-queues. What we are looking for specifically is the run-queue depth spikes for each scenario to see just how many threads are spawned during the various scenarios."

It was simply assessing if multiple cores were actually used in the little-big design. Not a comparison of different designs.

Aenean144 - Tuesday, September 1, 2015

Yes, that was what the article was trying to find out, but it didn't answer the question of whether 4-core and 8-core designs are better than 2 core or 3 core designs. That's been the contention of the "can't use that many cores" mantra.

All this article has explained is that the OS scheduler can distribute threads across a lot of cores, something hardly anyone has a problem with.

What I'd like to see is the performance or user experience difference between 2-core, 4-core, and 8-core designs, all using the same SoC. There's nothing magic about this. In PCs today, we've largely settled on 2-core and 4-core designs for consumer systems. 6-core and 8-core systems for gaming rigs, but that's largely an artifact of Intel's SKUs.

So, if I believe the marketing, these smartphones really need to have 8-core designs when my laptop or desktop, capable of handling an order of magnitude more computation needs, with just 2-cores or 4-cores?

prisonerX - Tuesday, September 1, 2015

You're missing the point. Your desktop is faster because it uses much more power. Mobile phones have more cores because it's more efficient to use more lower power cores than fewer high power cores.

Aenean144 - Tuesday, September 1, 2015

My desktop and laptop are power limited at their respective TDPs, and it's been this way for a very long time. If more cores were the answer, why are we sitting at mostly 2-core and 4-core CPUs in the PC space?

All this stuff isn't new whatsoever, and the PC space went through the same core-count race 10 years ago. There has to be something systematically different such that Intel went down this path while ARM smartphones are in the midst of a core-count race.

I've read that it could be an economics thing as ARMH gets money on a per-core basis while spending money on complicated DVFS schemas and high IPC cores isn't worth it for them. Maybe in the PC space, we don't need the performance anymore.

mkozakewich - Wednesday, September 2, 2015

It's because we're always fighting for quicker single-thread work. A lot of things can be parallelized, but there are also a lot of things today that aren't. I agree that Intel should try out some kind of big.LITTLE thing with a couple Atom cores and a Core M, just to see how it runs.

prisonerX - Wednesday, September 2, 2015

It's Intel's backward looking strategy. They're competing in high power/high single thread performance because they can win that with legacy desktop software and a legacy CPU architecture.

Meanwhile, the rest of the world is going low power multithreaded, because that's the future. Going forward it's the only way to increase performance with low power. Google are correctly pushing an aggressively multithreaded software architecture.

Intel have already hit the wall with single threaded performance. They can win the present but not the future. Desktops aren't moving forward because no-one cares about them except gamers, and gamers largely don't care about power usage because they don't run on batteries.

Frihed - Friday, September 4, 2015

In the desktop, the cost of making a chip matters much more, as the bigger chips are sometimes more expensive than a whole mobile device. The cost of putting more cores in the chip counts there.