The Mobile CPU Core-Count Debate: Analyzing The Real World

by Andrei Frumusanu on September 1, 2015 8:00 AM EST

Posted in: Smartphones, CPUs, Mobile, SoCs

S-Browser - AnandTech Article

We start off with some browser-based scenarios such as website loading and scrolling. Since our device is a Samsung one, this is a good opportunity to verify the differences between the stock browser and Chrome, as we've previously identified large performance discrepancies between the two applications.

To give readers an idea of the actions logged, I've also recorded recreations of those actions. These recordings are not the actual events represented in the data, as I didn't want the recording to affect the CPU behaviour.

We start off by loading an article on AnandTech and quickly scrolling through it. It's mostly at the beginning of the events that we're seeing high computational load as the website is being loaded and rendered.

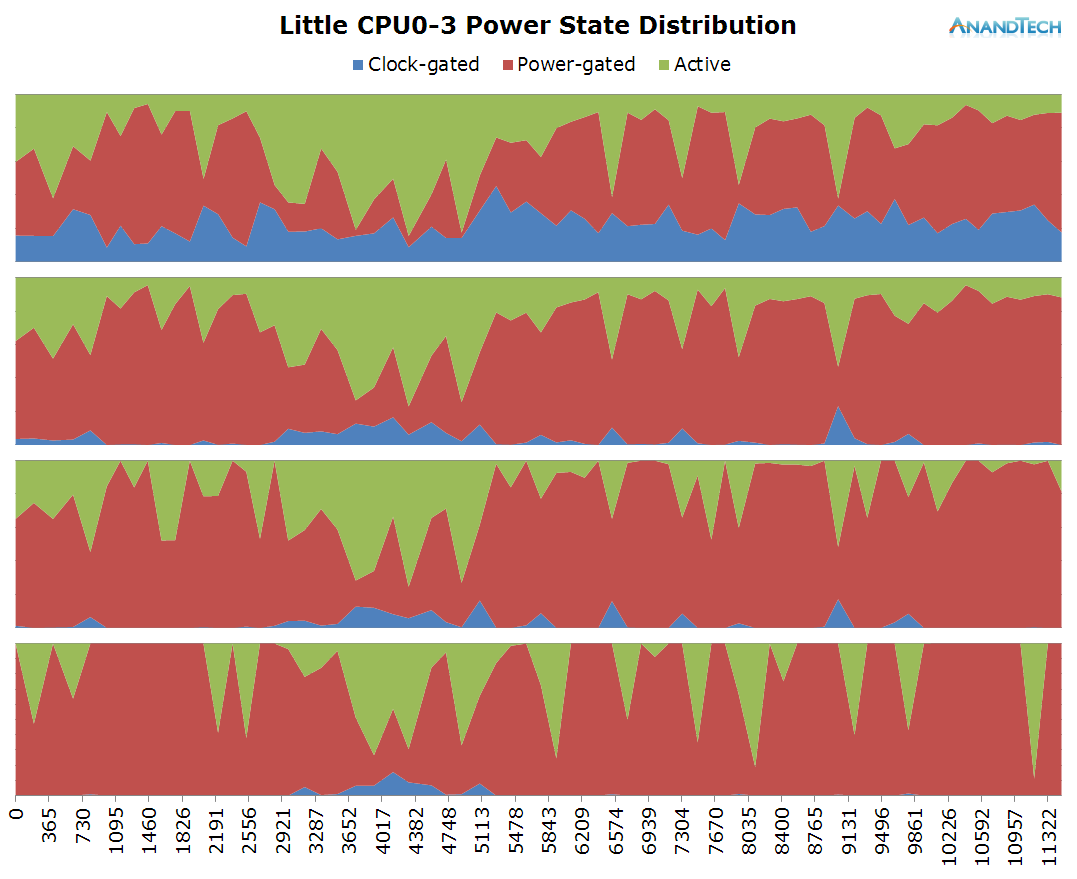

Starting off with a look at the little cluster's behaviour:

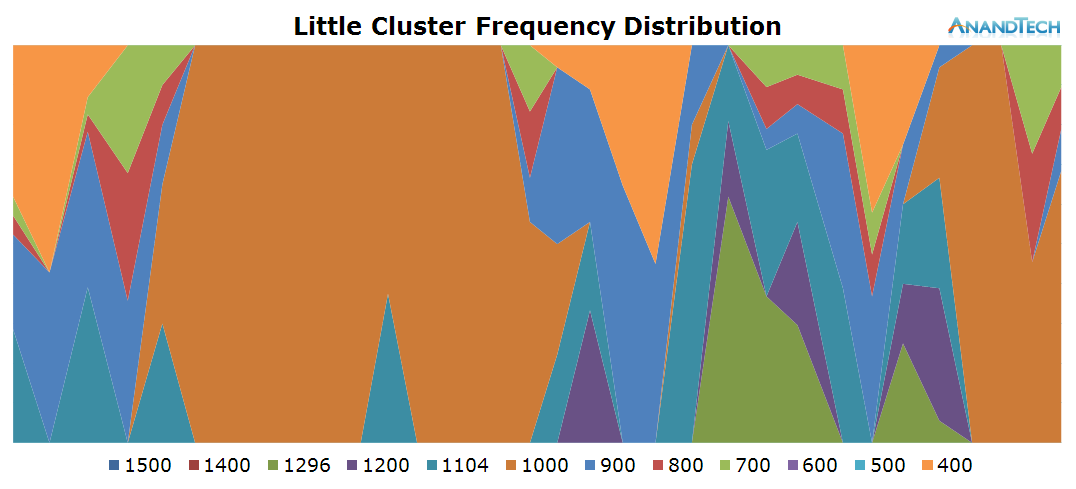

The time period of the data is 11.3s, as represented on the x-axis of the power state distribution chart. During the rendering of the page there doesn't seem to be any particularly high load on the little cores in terms of threads, as we only see about 1 little thread use up around 20% of the CPU's capacity. Still, this causes the cluster to remain at around the 1000MHz mark and causes the little cores to mostly stay in their active power state.

Once the website is loaded, around the 6s mark, threads begin to migrate back to the little cores. Here we actually see them being used quite extensively, with peaks of 70-80% usage. There are bursts where it may seem like the total number of concurrent threads on the little cluster exceeds 4, but the cluster is still not dramatically overloaded.

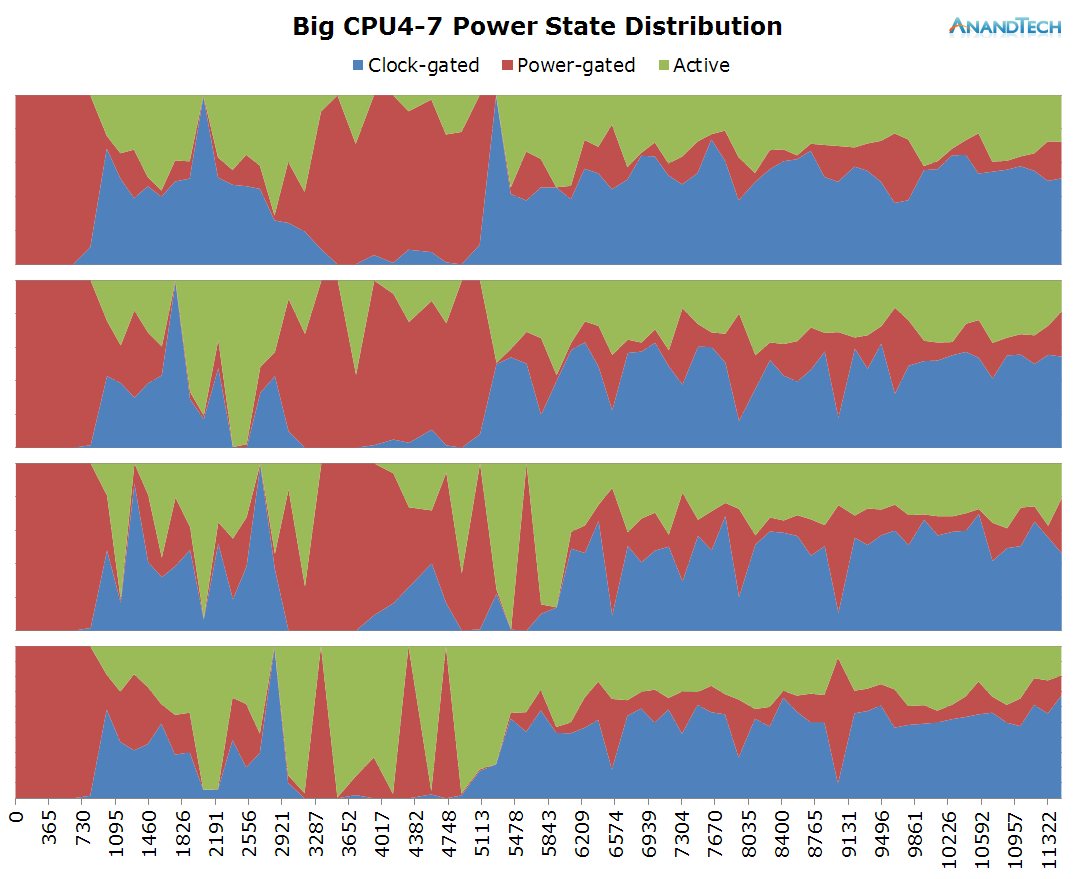

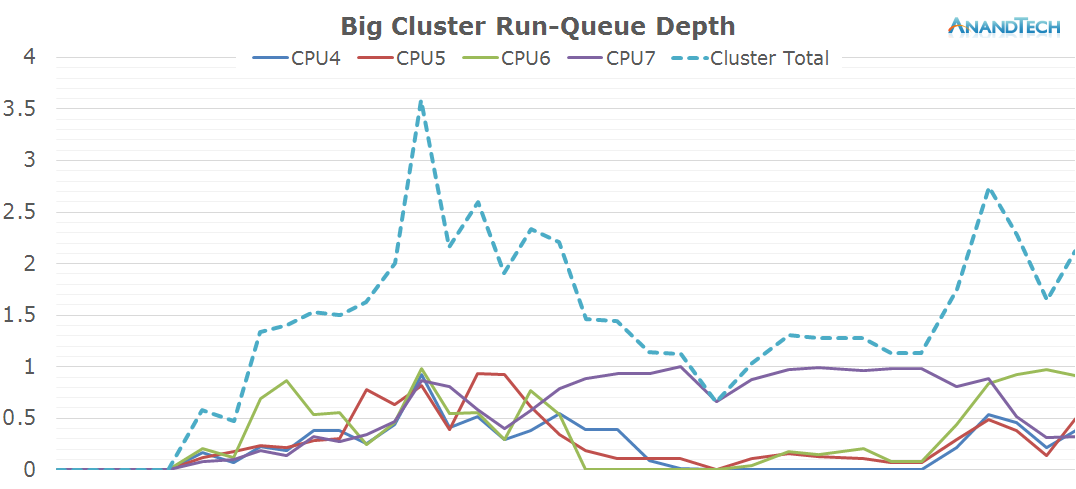

Moving on to the big cluster:

On the big cluster, we see an inversion of the run-queue graph. Where the little cores didn't have many threads placed on them, we see large activity on the big cluster. The initial web site rendering is clearly done by the big cluster, and it looks like all 4 cores have working threads on them. Once the rendering is done and we're just scrolling through the page, the load on the big cluster is mostly limited to 1 large thread.

What is interesting to see here is that even though it's mostly just 1 large thread that requires performance on the big cores, most of the other cores still have some sort of activity on them which causes them to not be able to fall back into their power-collapse state. As a result, we see them stay within the low-residency clock-gated state.
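The power states discussed here (active, clock-gated, power-collapsed) are charted as residency, meaning the share of time a core spends in each state. As a rough illustration of how such a figure could be derived from logged samples, here is a minimal Python sketch. The state names, the fixed sampling interval, and the trace itself are assumptions made up for the example; they are not the logging tooling or the data used for this article.

```python
from collections import Counter

def state_residency(samples):
    """Return the fraction of samples spent in each power state.

    `samples` is a sequence of state labels taken at a fixed interval,
    so the share of samples approximates the share of time.
    """
    if not samples:
        raise ValueError("need at least one sample")
    counts = Counter(samples)
    total = len(samples)
    return {state: n / total for state, n in counts.items()}

# Hypothetical trace for a single big core (labels are illustrative).
trace = ["active"] * 3 + ["clock_gated"] * 5 + ["power_collapse"] * 2
residency = state_residency(trace)

for state, share in sorted(residency.items()):
    print(f"{state}: {share:.0%}")
```

A core that keeps getting small bits of work, as described above, would show up in such a summary with most of its time in the clock-gated bucket and little in power-collapse.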

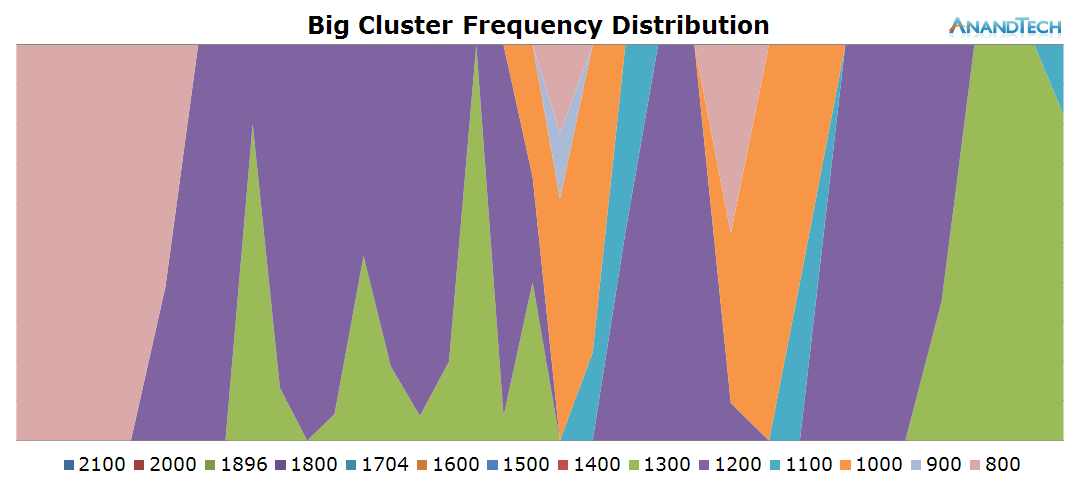

On the frequency side, the big cores scale up to 1300-1500 MHz while rendering the initial site and sit at 1000-1200 MHz while scrolling around the loaded page.

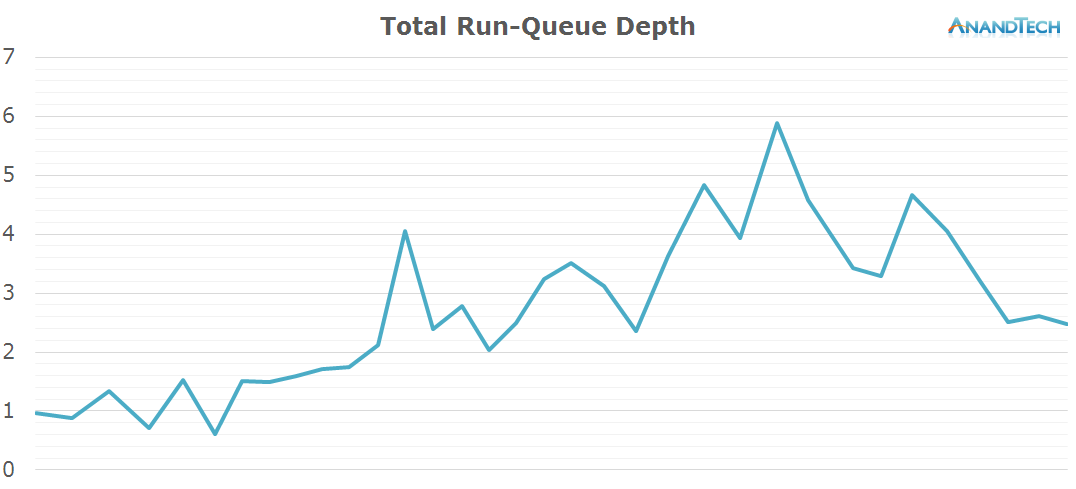

When looking at the total number of threads on the system, we can see that the S-Browser makes good use of at least 4 CPU cores, with some peaks of up to 5 threads. All in all, this is a scenario which doesn't necessarily make use of 8 cores per se; however, the 4+4 setup of big.LITTLE SoCs does seem to be fully utilized for power management, as the computational load shifts between the clusters depending on the needed performance.
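To make the "total threads" view concrete, the sketch below sums per-cluster run-queue depth sample by sample and reports how often the total reaches a given count. It is only an illustration in Python: the function names and the sample values are hypothetical and were chosen to resemble the shape described above (big cluster busy first, little cluster later), not taken from the measured data.

```python
def total_threads(little_rq, big_rq):
    """Per-sample sum of runnable threads across both clusters."""
    if len(little_rq) != len(big_rq):
        raise ValueError("both traces must have the same number of samples")
    return [l + b for l, b in zip(little_rq, big_rq)]

def share_at_least(little_rq, big_rq, k):
    """Fraction of samples in which at least k threads were runnable."""
    totals = total_threads(little_rq, big_rq)
    return sum(t >= k for t in totals) / len(totals)

# Made-up run-queue depths, one value per sampling interval.
little_rq = [1, 1, 0, 1, 2, 3, 3, 4]
big_rq = [4, 4, 4, 3, 1, 1, 1, 1]

print("peak threads:", max(total_threads(little_rq, big_rq)))
for k in (4, 5, 8):
    print(f">= {k} threads: {share_at_least(little_rq, big_rq, k):.1%} of samples")
```

Note that a count of runnable threads says nothing about how demanding each thread is, so a summary like this indicates how many cores could be kept occupied, not how much performance they deliver.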

157 Comments


R0H1T - Tuesday, September 1, 2015 - link

Seems like Android has Windows' number as far as "multi-threading" is concerned, kudos to Google for this & seems like the tired old argument of developers getting a free pass (for poor MT implementation on desktops) needs to change asap!

Impulses - Tuesday, September 1, 2015 - link

Ehh, I think you're ignoring some key differences in clock speed and single threaded performance, not to mention how easily Intel can ramp clock speed up and back down, and then there's Hyper Threading which allows you to span more threads per core.

Laptops might be the outlier, but I dunno what benefit a desktop (which have commonly run quads for years) would see from a lower powered core cluster. Development just works very differently by nature of the environment.

Also things that benefit a ton from parallelization on the desktop often end up using the GPU instead... And/or specialized instructions that aren't available at all on mobile. It's not even apples and oranges IMO, it's apples and watermelons.

R0H1T - Tuesday, September 1, 2015 - link

You're missing the point, which is that Google & Android have shown (even with the vast number of SoC's it runs on) that MT & load management, when implemented properly, on the supported hardware & complementing software, makes great use of x number of cores even in a highly constrained environment like a smartphone.

On desktops we ought to have had affordable octa cores available for the masses by now, but since Intel has no real competition & they price their products through the roof, we're seeing what or how windows & the x86 platform has stagnated. Granted that more people are moving to small, portable computing devices but there's no reason why the OS & the platform as a whole has to slow down, also the clock speed, IPC argument is getting old now. If anything DX12, Mantle, Vulkan et al have shown us is that if there's good hardware & the willingness to push it to its limits developers, with the right tools at hand, will make use of it. Not to mention giving them a free pass for badly coded programs, remember the "ST performance is king" argument, is the wrong way to go as it not only wastes the (great) potential of desktops but it also slows down the progress of PC as a platform.

Now I know MT isn't a cakewalk especially on modern systems but if anything it should be more widespread because desktops & notebooks give a lot of thermal headroom, as compared to tablets & smartphones, besides the 30+ years of history behind this particular industry should make the task easier. Also not all compute tasks can be offloaded to GPU, that's why it's even more imperative that the users push developers to make use of more cores & not get the free ride that GPGPU has been giving them over the last few years, as it is the GPU industry is also slowing down massively & then we'll eventually be back to square one & zero growth.

metafor - Tuesday, September 1, 2015 - link

Yes and no. Google and Android are able to show that things like app updates, web page loads and general system upkeep is able to take advantage of multiple threads. But that's been true for a while. In a smartphone, those happen to be the performance dominating tasks. On a desktop, those tasks are noise.

Desktop workloads that actually stress the CPU (and users care about performing well) are very different. That's not to say they're not threadable, but they may not be as threadable as Chrome, which basically eats RAM and processes.

That being said, heterogenous MT could make a lot of sense for laptop processors as well. Having threadable workloads run on smaller Atoms instead of big Sky Lakes would probably improve efficiency. But it may not be as dramatic depending on the perf/W of Sky Lake at lower frequencies.

niva - Tuesday, September 1, 2015 - link

OK can we talk about this for a bit. I for one found the webpage CPU usage extremely disturbing. I'm running an old phone, Galaxy Nexus, and browsing has become by far the task my phone struggles with the most. Why is that? What is it about modern websites that causes them to be so CPU heavy? Is that acceptable? It does seem that much of the internet is filled with websites running shady scripts in the background and automatically playing video or sound which is annoying at the very least, but detrimental to performance always. Whatever happened to website optimization for minimizing data usage and actually making websites accessible?

Secondly, what is the actual throughput of CPUs in desktops compared to the latest state of the line arm APUs? Just because desktop workloads might be different, does that mean that a mobile APU cannot handle it or is that simply due to the usage mode of the device in question? What I'm seeing out of mobile/phone chips is that they are extremely capable, to the point I'm starting to wonder if I'll ever need another desktop rig to replace my old Phenom X2 machine.

metafor - Tuesday, September 1, 2015 - link

I would guess that websites are just more complicated nowadays. Think about a dynamic website like Twitter, which has to have live menus and notifications/updates. That's basically a program more than just a web page. We've slowly migrated what used to be stand-alone programs to load-on-demand web programs. And added many many inefficient layers of script interpreters in between.

emn13 - Thursday, September 3, 2015 - link

Somewhat ironically, the more modern a web-page the *less* friendly it is likely to be to multithreading. After all, modern features tend to include heavy javascript usage (which is almost purely single-threaded), and a CPU usage that is bottlenecked by a path through javascript (typically layout not actually the JS itself, but that layout affects JS and hence needs fine-grained interaction).

Jaybus - Tuesday, September 1, 2015 - link

It is the more extensive use of client-side processing, in a nutshell, JavaScript and JSON. On older websites, the dynamic stuff was processed server-side and the client simply did page reloads. The modern sites require less bandwidth, but at the expense of increasing CPU usage.

Also, modern sites are higher res and more image intensive, or in other words more GPU heavy as well. Some of the Nexus struggle can be attributed to GPU load.

mkozakewich - Wednesday, September 2, 2015 - link

Most of it has to do with using multiple JavaScript libraries. It's not strange to need to download over 50 different files on a website today. Anandtech.com took 123 requests over four seconds to load. Mostly fonts, ads, and Twitter stuff, but it adds up.

name99 - Tuesday, September 1, 2015 - link

You are totally misinterpreting these results.

The mere existence of a large number of runnable threads does not mean that the cores are being usefully used. Knowing that there are frequently four runnable threads is NOT the same thing as knowing that four cores are useful, because it is quite possible that those threads are low priority, and that sliding them so as to run consecutively rather than simultaneously would have no effect on perceived user performance.

There is plenty of evidence to suggest that this interpretation is correct.

Within the AnandTech data, the fact that these threads are usually on the LITTLE cores, and running those at low frequency, suggests they are not high priority threads.

This paper from MS research confirms the hypothesis:

http://research.microsoft.com:8082/en-us/um/people...

Now there is a whole lot of tribalism going on in this thread. I'm not interested in that; I'm interested in the facts. What the MS paper states (confirmed, IMHO, by these AnandTech results) is that there is a reasonable (around 20%) throughput improvement in going from one to two threads, along with a small (around 10%) energy drop, and that going from two to three or four cores buys you only very slight further energy and performance boosts.

In one sense this means there's no harm in having octacores around --- they don't seem to be burning energy, and in principle they could deliver extra snappiness (though the lousiness of the scheduling in these AnandTech results suggests that's more a hope than a reality). But there's a world of difference between the claim "doesn't hurt energy, may occasionally be slightly useful" and the claim "pretty much always useful because apps are so deeply threaded these days".