The Intel 6th Gen Skylake Review: Core i7-6700K and i5-6600K Tested

by Ian Cutress on August 5, 2015 8:00 AM EST

Skylake's iGPU: Intel Gen9

Both the Skylake processors here use Intel’s HD 530 graphics solution. When I first heard the name, alarm bells went off in my head with questions: why is the name different, has the architecture changed, and what does this mean fundamentally?

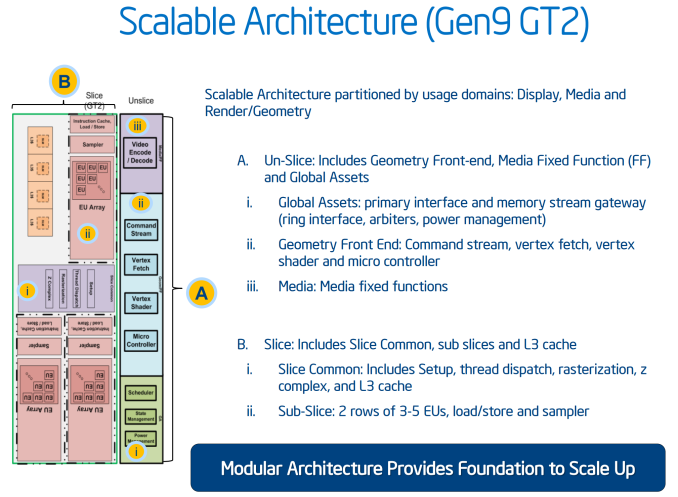

Not coming up with many details, we did the obvious thing – we checked what information comes directly out of the processor. Querying HD 530 via Intel's OpenCL driver reports a 24 EU design running at 1150 MHz. This differs from what GPU-Z indicates, which points to a 48 EU design instead, although GPU-Z is often incorrect on newer graphics modules before launch day. We can confirm that this is a 24 EU design, and this most likely follows on from Intel’s 8th Generation graphics in the sense that we have a base GT2 design featuring three sub-slices of 8 EUs each.
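As a quick sanity check on the two competing EU counts, the arithmetic for a GT2-style layout can be sketched as below. The three sub-slices of 8 EUs and the 1150 MHz clock come from the text above. The figure of 16 FP32 operations per EU per clock (two SIMD-4 FPUs, each issuing a fused multiply-add) is an assumption taken from Intel's public Gen9 architecture material rather than from our testing, and the function names are purely illustrative.

```python
# Back-of-envelope check of EU count and theoretical FP32 throughput.
# ASSUMPTION (not from this review): each EU retires 16 FP32 ops per clock,
# i.e. 2 FPUs x 4 SIMD lanes x 2 ops for a fused multiply-add.
FP32_OPS_PER_EU_PER_CLOCK = 2 * 4 * 2


def eu_count(sub_slices: int, eus_per_sub_slice: int) -> int:
    """Total execution units for a given sub-slice layout."""
    return sub_slices * eus_per_sub_slice


def peak_gflops(eus: int, clock_mhz: float) -> float:
    """Theoretical peak FP32 GFLOPS at the given clock (illustrative only)."""
    return eus * FP32_OPS_PER_EU_PER_CLOCK * clock_mhz / 1000.0


if __name__ == "__main__":
    reported = eu_count(sub_slices=3, eus_per_sub_slice=8)  # OpenCL-reported layout
    print("EUs:", reported)
    print("Peak FP32 GFLOPS at 1150 MHz:", peak_gflops(reported, 1150))
    # The 48 EU figure from GPU-Z would imply twice the throughput at the same clock.
    print("Peak FP32 GFLOPS if 48 EUs:", peak_gflops(48, 1150))
```

Under that assumption, the two candidate EU counts imply theoretical throughput figures a factor of two apart, which is why the discrepancy matters.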

As far as we can tell, Intel calls the HD 530 graphics part of its 9th Generation (i.e. Gen9). We have been told directly by Intel that they have changed their graphics naming scheme from a four digit (e.g. HD 4600) to a three digit (HD 530) arrangement in order "to minimize confusion" (direct quote). Personally we find that it adds more confusion, because the HD 4600 naming is not directly linked to the HD 530 naming. You could argue that 5 is more than 4, but we already have HD 5200, HD 5500, Iris 6100 and others. So which is better, HD 530 or HD 5200? At this point it will already create a miasma of uncertainty, probably exaggerated until we get a definite explanation of the stack nomenclature.

Naming aside, Generation 9 graphics comes with some interesting enhancements. The slice and un-slice now have individual power and clock domains, allowing for a more efficient use of resources depending on the load (e.g. some un-slice not needed for some compute tasks). This lets the iGPU better balance power usage between fixed-function operation and programmable shaders.

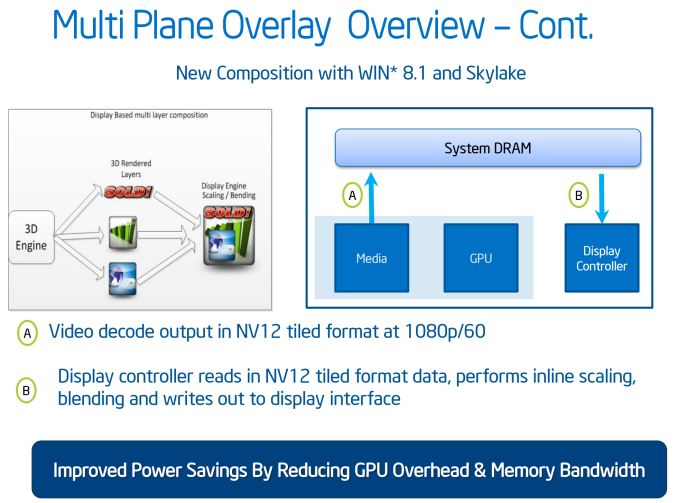

Generation 9 will support a feature called Multi Plane Overlay, which is a similar feature to AMD’s video playback path adjustments in Carrizo. The principle here is that when a 3D engine has to perform certain operations on an image (blend, resize, scale), the data has to travel from the processor into DRAM then to the GPU to be worked on, then back out to DRAM before it hits the display controller, a small but potentially inefficient operation in mobile environments. What Multi Plane Overlay does is add fixed function hardware to the display controller to perform this without ever hitting the GPU, minimizing power consumption from the GPU and taking out a good portion of DRAM data transfers. This comes at a slight hit for die area overall due to the added fixed function units.

As shown above, this feature will be supported on Win 8.1 with Skylake’s integrated graphics. That being said, not all imaging can be moved in this way, but where possible the data will take the shorter path.
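To put a rough number on the DRAM traffic involved in Multi Plane Overlay, the two paths can be compared for a hypothetical case. Everything in this sketch is an assumption for illustration: a 1920x1080 output at 60 Hz, 4 bytes per pixel, two full-screen source planes, and a simplified model in which GPU composition reads every source plane, writes one composed frame, and the display controller then reads that frame back, whereas the overlay path has the display controller read the source planes directly. Real hardware behavior (caching, compression, partial planes) will differ.

```python
# Simplified DRAM traffic model for GPU composition vs. display-side overlay.
# ASSUMPTION: all sizes, rates and the traffic model itself are illustrative,
# not measurements or Intel-provided figures.

def frame_bytes(width: int, height: int, bytes_per_pixel: int = 4) -> int:
    """Size of one uncompressed plane in bytes."""
    return width * height * bytes_per_pixel


def gpu_composition_traffic(plane_sizes, out_bytes: int, fps: int) -> int:
    """Bytes/s: GPU reads all planes, writes the result, display reads it back."""
    return (sum(plane_sizes) + 2 * out_bytes) * fps


def overlay_traffic(plane_sizes, fps: int) -> int:
    """Bytes/s: display controller reads the source planes directly."""
    return sum(plane_sizes) * fps


if __name__ == "__main__":
    plane = frame_bytes(1920, 1080)
    planes = [plane, plane]  # e.g. a video plane plus a UI plane
    gpu = gpu_composition_traffic(planes, plane, fps=60)
    mpo = overlay_traffic(planes, fps=60)
    print(f"GPU composition: {gpu / 1e6:.1f} MB/s")
    print(f"Overlay path:    {mpo / 1e6:.1f} MB/s")
    print(f"Saved:           {(gpu - mpo) / 1e6:.1f} MB/s")
```

In this model the saving is exactly one write and one read of the composed frame per refresh, which is the "shorter path" described above.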

To go along with the reduced memory transfer, Gen9 has support for memory color stream compression. We have seen this technology come into play for other GPUs, where fixed function hardware and lossless algorithms mean that smaller quantities of image and texture data are transferred around the system, again saving power and reducing bandwidth constraints. The memory compression is also used with a scaler and format conversion pipe to reduce the encoding pressure on the execution units, reducing power further.
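The general idea behind lossless compression of color data can be illustrated with a stand-in. The sketch below uses zlib from the Python standard library, which is not the algorithm Gen9 uses (Intel has not detailed that here); it only shows that a flat-colored tile shrinks considerably and is recovered bit-for-bit. The tile size and color are arbitrary assumptions.

```python
import zlib

# ASSUMPTION: zlib is a stand-in for illustration only; it is NOT the
# hardware compression scheme used by Gen9.
TILE_W, TILE_H = 64, 64
PIXEL = bytes([30, 144, 255, 255])  # one arbitrary RGBA color


def make_flat_tile() -> bytes:
    """A tile filled with a single color, the best case for compression."""
    return PIXEL * (TILE_W * TILE_H)


def round_trip(data: bytes):
    """Compress and decompress, returning (compressed, restored)."""
    compressed = zlib.compress(data)
    restored = zlib.decompress(compressed)
    return compressed, restored


if __name__ == "__main__":
    tile = make_flat_tile()
    compressed, restored = round_trip(tile)
    print("raw bytes:       ", len(tile))
    print("compressed bytes:", len(compressed))
    print("lossless:        ", restored == tile)
```

Fewer bytes moved for the same pixels is the whole point: the data that crosses the memory bus shrinks while the image itself is unchanged.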

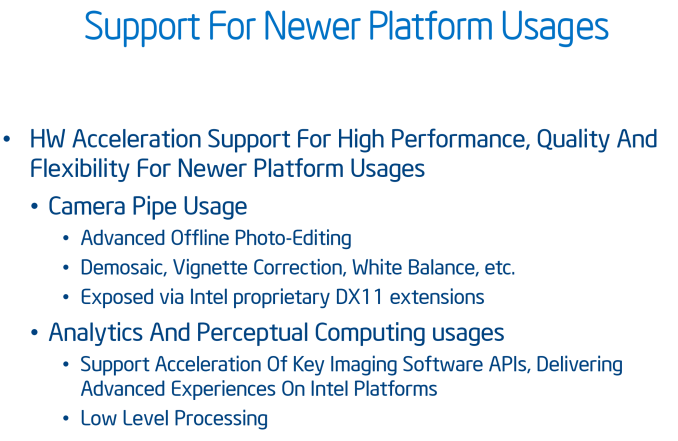

Adding into the mix, we have learned that Gen9 includes a feature called the ‘Camera Pipe’ for quick standard adjustments to images via hardware acceleration. This adjusts the programmable shaders to work in tandem for specific DX11 extensions on common image manipulation processes beyond resize/scale. The Camera Pipe is teamed with SDKs to help developers connect into optimized imaging APIs.

Media Encoding & Decoding

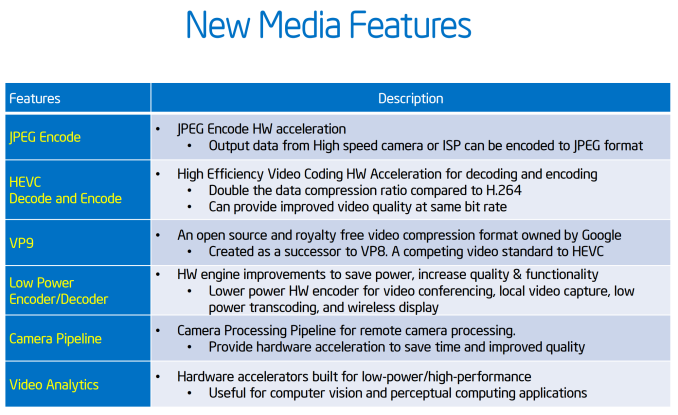

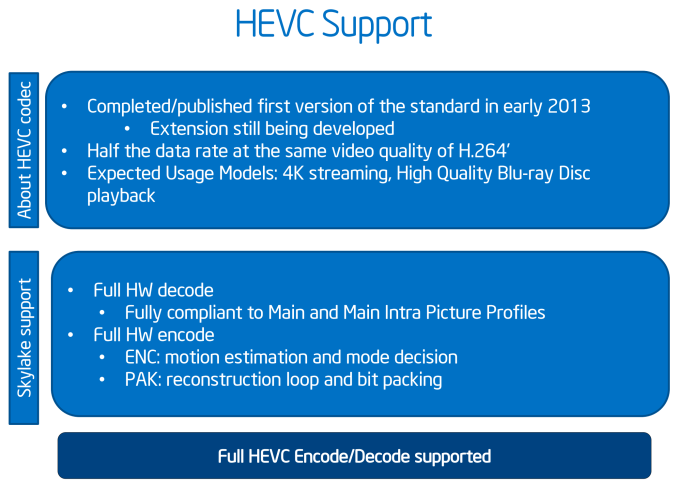

In the world of encode/decode, we get the following:

Whereas Broadwell implemented HEVC decoding in a "hybrid" fashion using a combination of CPU resources, GPU shaders, and existing GPU video decode blocks, Skylake gets a full, low power fixed function HEVC decoder. For desktop users this shouldn't impact things by too much - maybe improve compatibility a tad - but for mobile platforms this should significantly cut down on the amount of power consumed by HEVC decoding and increase the size and bitrate that the CPU can decode. Going hand-in-hand with HEVC decoding, HEVC encoding is now also an option with Intel's QuickSync encoder, allowing for quicker HEVC transcoding, or more likely real-time HEVC uses such as video conferencing.

Intel is also hedging its bets on HEVC by implementing a degree of VP9 support on Skylake. VP9 is Google's HEVC alternative codec, with the company pushing it as a royalty-free option. Intel calls VP9 support on Skylake "partial" for both encoding and decoding, indicating that VP9 is likely being handled in a hybrid manner similar to how HEVC was handled on Broadwell.

Finally, JPEG encoding is new for Skylake and set to support images up to 16K*16K.

Video Support

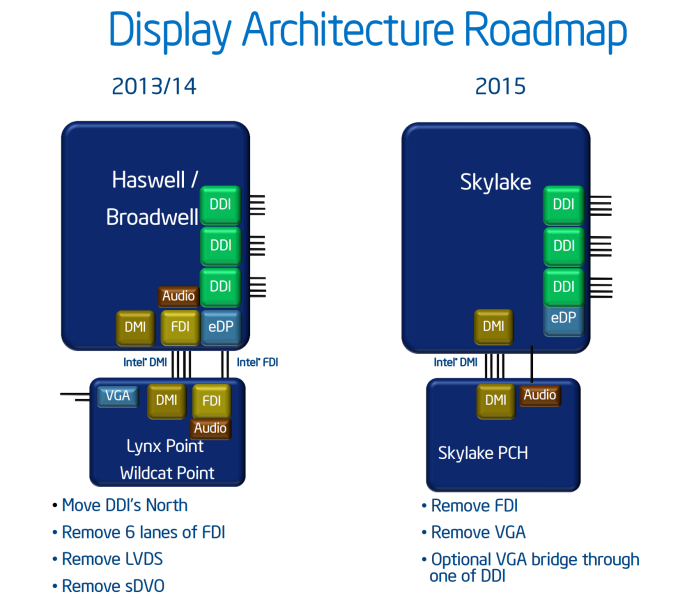

The analog (VGA) video connector has now been completely removed from the CPU/chipset combination, meaning that any VGA/D-Sub video connection has to be provided via an active digital/analog converter chip. This has been a long time coming, and is part of a previous commitment made by Intel several years ago to remove VGA by 2015. Removing analog display functionality will mean added cost for legacy support in order to drive analog displays. Arguably this doesn’t mean much for Z170 as the high end platform is typically used with a discrete graphics card that has HDMI or DisplayPort, but we will see motherboards with VGA equipped in order to satisfy some regional markets with specific requirements.

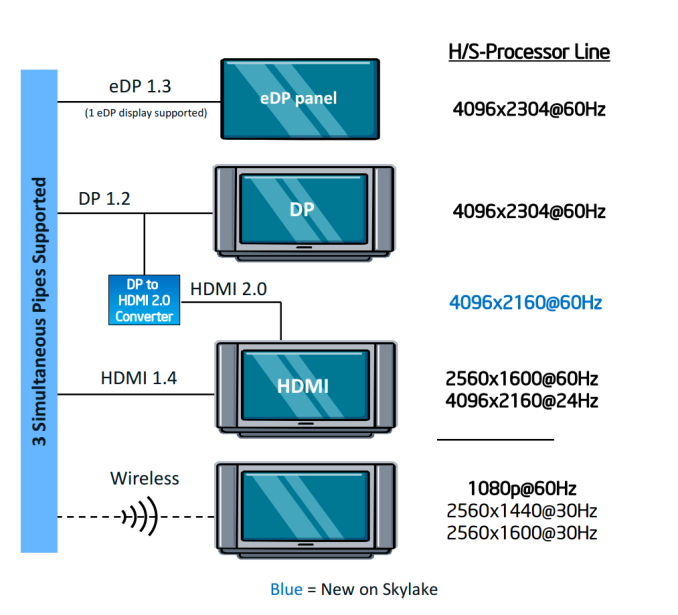

HDMI 2.0 is not supported by default, and only the following resolutions are possible on the three digital display controllers:

A DP to HDMI 2.0 converter, specifically an LS-Pcon, is required to do the adjustments, be it on the motherboard itself or as an external adapter. We suspect that there will not be many takers buying a controller to do this, given the capabilities and added benefits listed by the Alpine Ridge controller.
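For context on why a converter is needed for the highest modes, the required pixel clock can be compared against the link limits of each HDMI generation. The sketch below assumes the standard CTA-861 total timing of 4400 x 2250 for a 3840 x 2160 picture, 8 bits per channel with no chroma subsampling (so the TMDS clock equals the pixel clock), and maximum TMDS clocks of 340 MHz for HDMI 1.4 and 600 MHz for HDMI 2.0. None of these figures come from Intel's material above, and the function names are illustrative.

```python
# Which HDMI generation can carry a given video mode?
# ASSUMPTIONS: CTA-861 total timings (including blanking), 8-bit RGB/4:4:4,
# and the commonly quoted maximum TMDS clocks per HDMI version.
HDMI_MAX_TMDS_MHZ = {"HDMI 1.4": 340.0, "HDMI 2.0": 600.0}


def pixel_clock_mhz(h_total: int, v_total: int, refresh_hz: int) -> float:
    """Pixel clock in MHz from total (active + blanking) timings."""
    return h_total * v_total * refresh_hz / 1e6


def supported_by(clock_mhz: float):
    """HDMI versions whose TMDS limit covers the given pixel clock."""
    return [name for name, limit in HDMI_MAX_TMDS_MHZ.items() if clock_mhz <= limit]


if __name__ == "__main__":
    for refresh in (30, 60):
        clock = pixel_clock_mhz(4400, 2250, refresh)
        print(f"3840x2160 @ {refresh} Hz: {clock:.0f} MHz ->", supported_by(clock))
```

Under these assumptions 4K at 30 Hz fits within an HDMI 1.4-class link while 4K at 60 Hz does not, which is where the LS-Pcon comes in.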

477 Comments

jwcalla - Wednesday, August 5, 2015 - link

I kind of agree. I think I'm done with paying for a GPU I'm never going to use.

jardows2 - Wednesday, August 5, 2015 - link

If you don't overclock, buy a Xeon E3. i7 performance at i5 price, without integrated GPU.

freeskier93 - Wednesday, August 5, 2015 - link

Except the GPU is still there, it's just disabled. So yes, the E3 is a great CPU for the price (I have one) but you're still paying for the GPU because the silicon is still there, you're just not paying as much.

MrSpadge - Wednesday, August 5, 2015 - link

Dude, an Intel CPU does not get cheaper if it's cheaper to produce. Their prices are only weakly linked to the production costs.

AnnonymousCoward - Saturday, August 8, 2015 - link

That is such a good point. The iGPU might cost Intel something like $1.

Vlad_Da_Great - Wednesday, August 5, 2015 - link

Haha, nobody cares about you @jjj. Integrating GPU with CPU saves money not to mention space and energy. Instead of paying $200 for the CPU and buy dGPU for another 200-300, you get them both on the same die. OEM's love that. If you don't want to use them just disable the GPU and buy 200W from AMD/NVDA. And it appears now the System memory will come on the CPU silicon as well. INTC wants to exterminate everything, even the cockroaches in your crib.

Flunk - Wednesday, August 5, 2015 - link

Your generational tests look like they could have come from different chips in the same series. Intel isn't giving us much reason to want to upgrade. They could have at least put out a 8-core consumer chip. It isn't even that much more die space to do so.

BrokenCrayons - Wednesday, August 5, 2015 - link

With Skylake's Camera Pipeline, I should be able to apply a sepia filter to my selfies faster than ever before while saving precious electricity that will let me purchase a little more black eyeliner and those skull print leg warmers I've always wanted. Of course, if it doesn't, I'm going to be really upset with them and refuse to run anything more modern than a 1Giga-Pro VIA C3 at 650 MHz because it's the only CPU on the market that is gothic enough pending the lack of much needed sepia support in Skylake.

name99 - Wednesday, August 5, 2015 - link

And BrokenCrayons wins the Daredevil award for most substantial lack of vision regarding how computers can be used in the future.

For augmented reality to become a thing we need to, you know, actually be able to AUGMENT the image coming in through the camera...

Today on the desktop (where it can be used to prototype algorithms, and for Surface type devices). Tomorrow in Atom, and (Intel hopes), giving them some sort of edge over ARM (though good luck with that --- I expect by the time this actually hits Atom, every major ARM vendor will have something comparable but superior).

Beyond things like AR, Apple TODAY uses CoreImage in a variety of places to handle their UI (eg the Blur and Vibrancy effects in Yosemite). I expect they will be very happy to use new GPU extensions that do this with lower power, and that same lower power will extend to all users of the CI APIs.

Without knowing EXACTLY what Camera Pipeline is providing, we're in no position to judge.

BrokenCrayons - Friday, August 7, 2015 - link

I was joking.