AMD Dives Deep On High Bandwidth Memory - What Will HBM Bring AMD?

by Ryan Smith on May 19, 2015 8:40 AM EST

History: Where GDDR5 Reaches Its Limits

To really understand HBM we’d have to go all the way back to the first computer memory interfaces, but in the interest of expediency and sanity, we’ll condense that lesson down to the following. The history of computer and memory interfaces is a consistent cycle of moving between wide parallel interfaces and fast serial interfaces. Serial ports and parallel ports, USB 2.0 and USB 3.1 (Type-C), SDRAM and RDRAM: there is a continual process of developing faster interfaces, then developing wider interfaces, and switching back and forth between them as conditions call for.

So far in the race for PC memory, the pendulum has swung far in the direction of serial interfaces. Through 4 generations of GDDR, memory designers have continued to ramp up clockspeeds in order to increase available memory bandwidth, culminating in GDDR5 and its blistering 7Gbps+ per pin data rate. GDDR5 in turn has been with us on the high-end for almost 7 years now, longer than any previous memory technology, and in the process has gone farther and faster than initially planned.

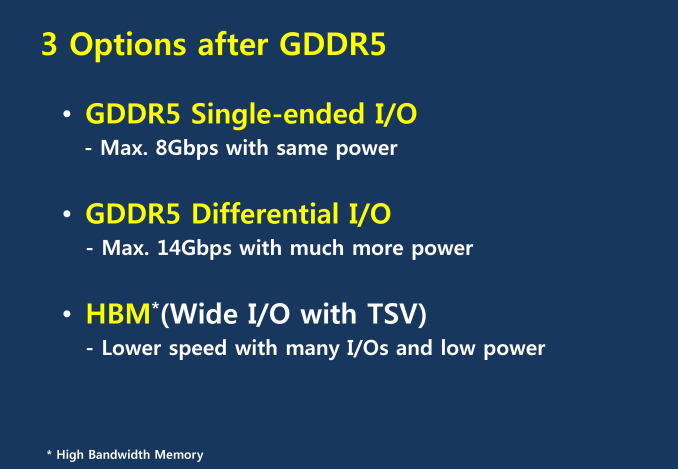

But in the cycle of interfaces, the pendulum has finally reached its apex for serial interfaces when it comes to GDDR5. Back in 2011 at an AMD video card launch I asked then-graphics CTO Eric Demers about what happens after GDDR5, and while he expected GDDR5 to continue on for some time, it was also clear that GDDR5 was approaching its limits. High speed buses bring with them a number of engineering challenges, and while there is still headroom left on the table to do even better, the question arises of whether it’s worth it.

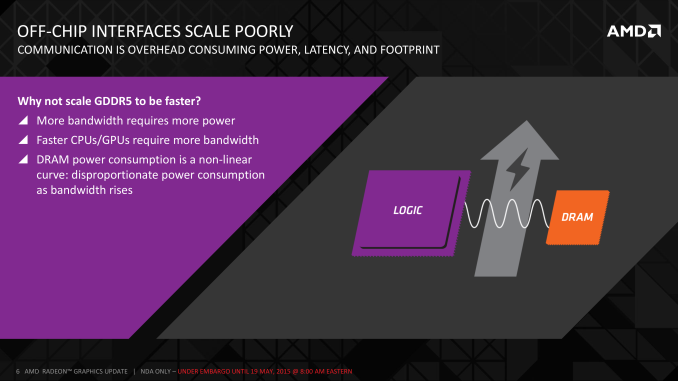

AMD 2011 Technical Forum and Exhibition

The short answer in the minds of the GPU community is no. GDDR5-like memories could be pushed farther, both with existing GDDR5 and theoretical differential I/O based memories (think USB/PCIe buses, but for memory); however, doing so would come at the cost of great power consumption. In fact even existing GDDR5 implementations already draw quite a bit of power; thanks to the complicated clocking mechanisms of GDDR5, a lot of memory power is spent merely on distributing and maintaining GDDR5’s high clockspeeds. Any future GDDR5-like technology would only ratchet up the problem, along with introducing new complexities such as a need to add more logic to memory chips, a somewhat painful combination as logic and dense memory are difficult to fab together.

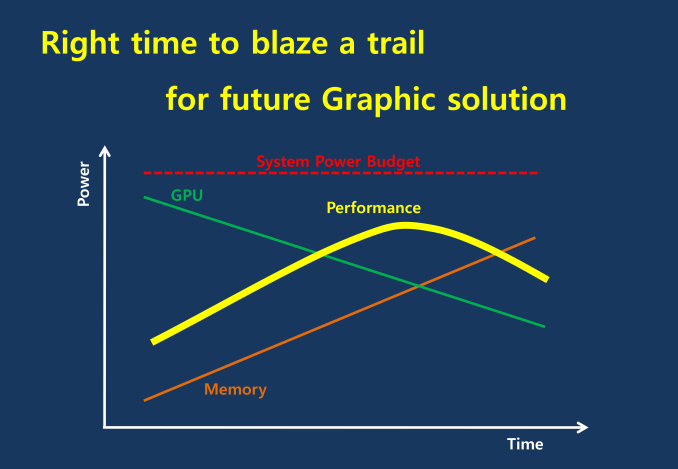

The current GDDR5 power consumption situation is such that by AMD’s estimate 15-20% of Radeon R9 290X’s (250W TDP) power consumption is for memory. This is even after the company went with a wider, slower 512-bit GDDR5 memory bus clocked at 5GHz so as to better contain power consumption. So using a faster, more power-hungry memory standard would only serve to exacerbate that problem.
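To put rough numbers on those figures, here is a back-of-the-envelope sketch in Python. It assumes the 5GHz clock quoted above corresponds to an effective 5Gbps per pin, and that peak bandwidth is simply bus width times per-pin data rate. The function names are ours, and the 384-bit, 7Gbps comparison point is an illustrative pairing of numbers mentioned in this article, not a claim about any specific card.

```python
def peak_bandwidth_gb_s(bus_width_bits, data_rate_gbps):
    """Peak memory bandwidth in GB/s.

    Assumes every pin of the bus transfers data_rate_gbps gigabits
    per second; dividing by 8 converts bits to bytes.
    """
    return bus_width_bits * data_rate_gbps / 8


def memory_power_range_w(tdp_w, low_pct, high_pct):
    """Watts attributed to memory, given a low/high percentage of TDP."""
    return (tdp_w * low_pct / 100, tdp_w * high_pct / 100)


# R9 290X figures from the text: 512-bit bus, 5Gbps per pin (assumed
# equivalent to the quoted 5GHz), 250W TDP, 15-20% of power for memory.
wide_slow = peak_bandwidth_gb_s(512, 5.0)

# Illustrative narrower, faster bus: 384-bit at 7Gbps per pin.
narrow_fast = peak_bandwidth_gb_s(384, 7.0)

low_w, high_w = memory_power_range_w(250, 15, 20)

print(f"512-bit @ 5Gbps: {wide_slow:.0f} GB/s")
print(f"384-bit @ 7Gbps: {narrow_fast:.0f} GB/s")
print(f"Memory power at 250W TDP: {low_w:.1f}W to {high_w:.1f}W")
```

Under these assumptions the wide, slow bus works out to 320GB/s, within about 5% of the narrower 7Gbps configuration, while AMD’s 15-20% estimate puts memory at roughly 37.5W to 50W of the card’s 250W budget.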

All the while power consumption for consumer devices has been on a downward slope as consumers (and engineers) have made power consumption an increasingly important issue. The mobile space, with its fixed battery capacity, is of course the prime example, but even in the PC space power consumption for CPUs and GPUs has peaked and since come down some. The trend is towards more energy efficient devices – the idle power consumption of a 2005 high-end GPU would be intolerable in 2015 – and that throws yet another wrench into faster serial memory technologies, as power consumption would be going up exactly at the same time as overall power consumption is expected to come down, and individual devices get lower power limits to work with as a result.

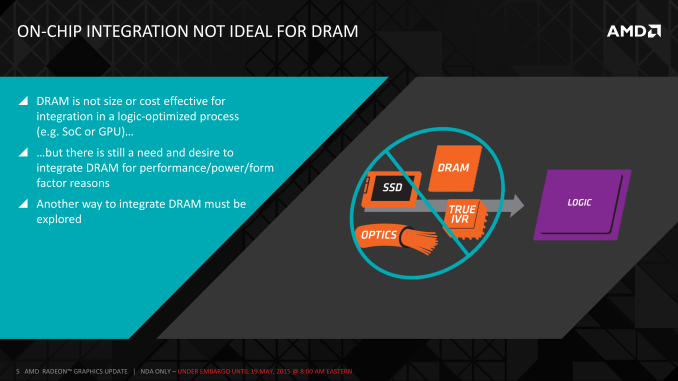

Finally, coupled with all of the above have been issues with scalability. We’ll get into this more when discussing the benefits of HBM, but in a nutshell GDDR5 also ends up taking a lot of space, especially when we’re talking about 384-bit and 512-bit configurations for current high-end video cards. At a time when everything is getting smaller, there is also a need to further miniaturize memory, something that GDDR5 and potential derivatives wouldn’t be well suited to resolve.

The end result is that in the GPU memory space, the pendulum has started to swing back towards parallel memory interfaces. GDDR5 has been taken to the point where going any further would be increasingly inefficient, leading to researchers and engineers looking for a wider next-generation memory interface. This is what has led them to HBM.

163 Comments

Flunk - Tuesday, May 19, 2015 - link

This opens up a lot of possibilities. AMD could produce a CPU with a huge amount of on-package cache like Intel's Crystalwell, but higher density. For now it reinforces my opinion that the 10nm-class GPUs that are coming down the pipe in the next 12-16 months are the ones that will really blow away the current generation. The 390 might match the Titan in gaming performance (when not memory-constrained) but it's not going to blow everything away. It will be comparable to what the 290X did to the 7970, just knock it down a peg instead of demolishing it.

Kevin G - Tuesday, May 19, 2015 - link

Indeed and that idea hasn't been lost with other companies. Intel will be using a similar technology with the next Xeon Phi chip. See: http://www.eetimes.com/document.asp?doc_id=1326121

Yojimbo - Tuesday, May 19, 2015 - link

CPUs demand low latency memory access, GPUs can hide the latency and require high bandwidth. Although I haven't seen anything specifically saying it, it seems to me that HMC is probably lower latency than HBM, and HBM may not be suitable for that system.

Kevin G - Tuesday, May 19, 2015 - link

I'll agree that CPUs need a low latency path to memory. Where I'll differ is on HMC. That technology does some serial-to-parallel conversion which adds latency into the design. I'd actually fathom that HBM would be the one with the lower latency.

nunya112 - Tuesday, May 19, 2015 - link

I don't see this doing very well at all for AMD. Yields are said to be low, and this is 1st gen, so there are bound to be issues. Compound that with the fact we haven't seen an OFFICIAL driver in 10 months, and poor performing games at release. I want to back the little guy. But I just can't. There are far too many risks.

Plus the 980 will come down in price when this comes out, making it a great deal. Coupled with 1-3 months for new drivers from NV, you have yourself a much better platform. And as others mentioned, HBM2 will be where it is at, as 4GB on a 4K capable card is pretty bad. So no point buying this card if 4GB is max. It's a waste. 1440p fills 4GB. So it looks like AMD will still be selling 290X rebrands and doing poorly on the flagship product, and Nvidia will continue to dominate discrete graphics.

The 6GB 980 Ti is looking like a sweet option till Pascal.

And for me, my R9 280 just doesn't have the horsepower for my new 32" Samsung 1440p monitor, so I have to get something.

jabber - Tuesday, May 19, 2015 - link

Maybe should have waited on buying the monitor?

WithoutWeakness - Tuesday, May 19, 2015 - link

No this is clearly AMD's fault.

xthetenth - Tuesday, May 19, 2015 - link

I'd expect that the reason for the long wait in drivers is getting the new generation's drivers ready. Also, what settings does 1440P fill 4 GB on? I don't see 980 SLI or the 295X tanking in performance, as they would if their memory was getting maxed.

Xenx - Tuesday, May 19, 2015 - link

DriverVer=11/20/2014, 14.501.1003.0000, with the catalyst package being 12/8/2014 - That would be 6mo. You're more than welcome to feel 6mo is too long, but it's not 10.

Kevin G - Tuesday, May 19, 2015 - link

Typo page 2: "Tahiti was pushing things with its 512-bit GDDR5 memory bus." That should be Hawaii with a 512 bit wide bus or Tahiti with a 384 bit wide bus.