Intel Xeon D Launched: 14nm Broadwell SoC for Enterprise

by Ian Cutress on March 9, 2015 8:00 PM EST

Posted in: CPUs, Intel, 10G Ethernet, Enterprise, SoCs, 14nm, Xeon-D, Broadwell-DE

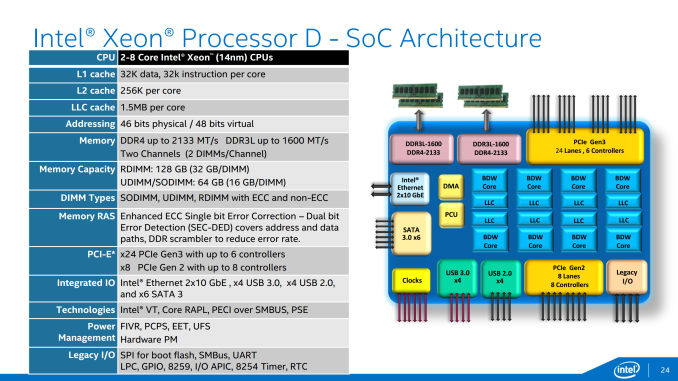

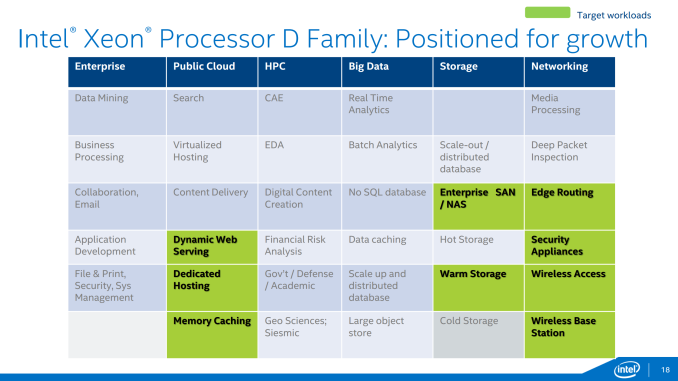

It is very rare for Intel to come out and announce a new integrated platform. Today this comes in the form of Xeon D, best described as the meeting point between Xeon E3 and Atom SoCs, taking the best bits of both to target the low-end server market, where efficiency and networking are the priorities. Xeon D, also known as Broadwell-DE, combines up to eight high performance Broadwell desktop cores and the PCH onto a single die, shrinks both to 14 nm to reduce power consumption and die area, and offers an array of server features normally found with the Xeon/Avoton line. This is being labeled as the first proper Intel Xeon SoC platform.

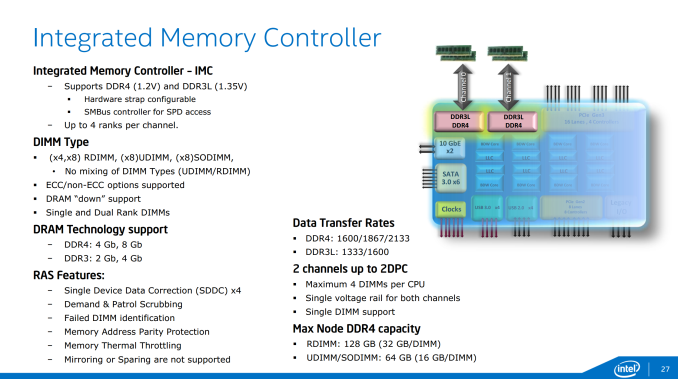

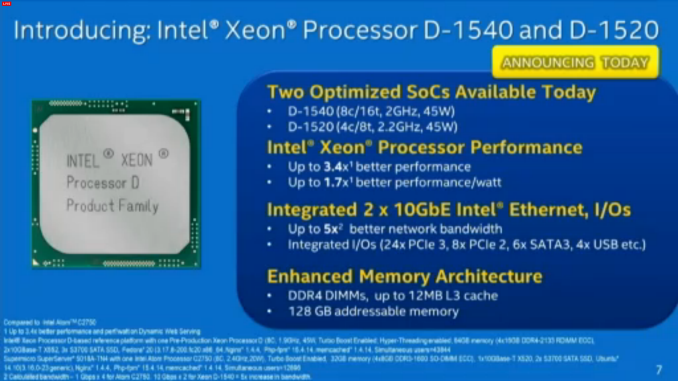

This is the slide currently doing the rounds from Intel’s pre-briefings on Xeon D. It shows the current top-of-the-line Xeon D-1540, with eight Broadwell cores for a total of sixteen threads. Each core has access to 32KB/32KB of L1 cache, similar to Xeon E3 v3, as well as 256KB of L2 and 1.5 MB of L3 per core. The SoC supports both DDR3L and DDR4, with memory capacity listed as 64GB in UDIMM and 128GB in RDIMM – both ECC and non-ECC are supported.

Note that the DRAM is limited to four DIMMs per CPU, which means two channels at 2 DIMMs per channel, rather than anything quad channel which remains the realm of the Xeon E5 v3 line. Also the limitations of 64GB/128GB in UDIMM/RDIMM are for DDR4 only – I might expect the DDR3L limits to be half that unless Intel has fixed the issue with supporting 16GB DDR3 UDIMMs on non-Atom platforms.
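As a quick sanity check on those limits, the per-DIMM capacity implied by the quoted maximums can be worked out from the two-channel, two-DIMMs-per-channel layout. This is only a minimal sketch of the arithmetic; the function name is made up for illustration.

```python
# Memory topology as described in the text: two channels, two DIMMs per channel.
CHANNELS = 2
DIMMS_PER_CHANNEL = 2

# Maximum capacities quoted for DDR4, in GB.
MAX_CAPACITY_GB = {"UDIMM": 64, "RDIMM": 128}


def per_dimm_capacity_gb(total_gb):
    """Capacity each DIMM must provide to reach total_gb with every slot filled."""
    slots = CHANNELS * DIMMS_PER_CHANNEL
    return total_gb / slots


if __name__ == "__main__":
    for kind, total in MAX_CAPACITY_GB.items():
        per_dimm = per_dimm_capacity_gb(total)
        print(f"{kind}: {total} GB total -> {per_dimm:.0f} GB per DIMM")
```

In other words, the quoted maximums assume 16GB UDIMMs and 32GB RDIMMs in all four slots.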

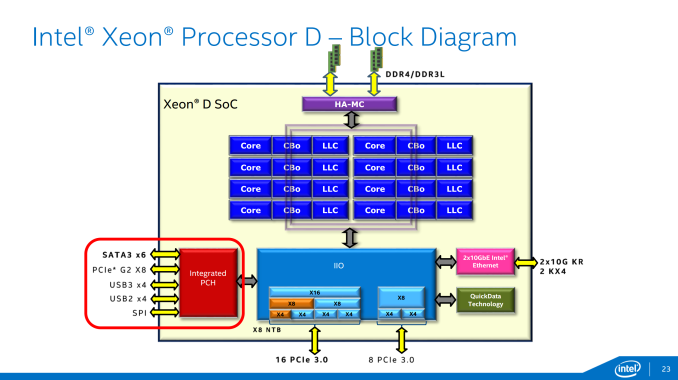

The core arrangement will be in a ring rather than a crossbar, as this tends to be where Intel’s strengths lie when it comes to processor design.

The SoC will have 24 PCIe lanes, up from 16 on the Xeon E3 v3 range. This is normally supported as x16/x8, although it can be split into x4/x4/x4/x4/x4/x4 depending on the features required on the platform. The integrated IO hub also has bandwidth for eight lanes of PCIe 2.0, which will support eight separate x1 devices such as ASPEED controllers, Ethernet or more storage.
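To put the lane counts in perspective, here is a rough sketch that checks whether a given split uses all 24 lanes and estimates per-direction bandwidth. It assumes the 24 CPU lanes run at PCIe 3.0 speeds (the text above does not state the generation, although a PCIe 3.0 x4 M.2 slot is mentioned later) and uses the standard transfer rates and line encodings for each generation. The helper names are hypothetical, and the figures ignore protocol overhead.

```python
TOTAL_CPU_LANES = 24  # lane count quoted for the SoC

# Raw transfer rate (GT/s) and line-encoding efficiency per PCIe generation.
PCIE_GEN = {
    2: (5.0, 8 / 10),     # 8b/10b encoding
    3: (8.0, 128 / 130),  # 128b/130b encoding
}


def lane_bandwidth_gbytes(gen):
    """Approximate usable bandwidth of one lane, per direction, in GB/s."""
    rate_gt, efficiency = PCIE_GEN[gen]
    return rate_gt * efficiency / 8  # 8 bits per byte


def uses_all_lanes(widths, total=TOTAL_CPU_LANES):
    """True if the link widths in a split add up to exactly the lane budget."""
    return sum(widths) == total


if __name__ == "__main__":
    for split in ([16, 8], [4] * 6):
        label = "/".join(f"x{w}" for w in split)
        print(f"{label}: uses all lanes = {uses_all_lanes(split)}")
    # Assumption: the CPU lanes are PCIe 3.0; the IO hub lanes are PCIe 2.0.
    print(f"24 lanes of PCIe 3.0: ~{24 * lane_bandwidth_gbytes(3):.1f} GB/s per direction")
    print(f"8 lanes of PCIe 2.0:  ~{8 * lane_bandwidth_gbytes(2):.1f} GB/s per direction")
```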

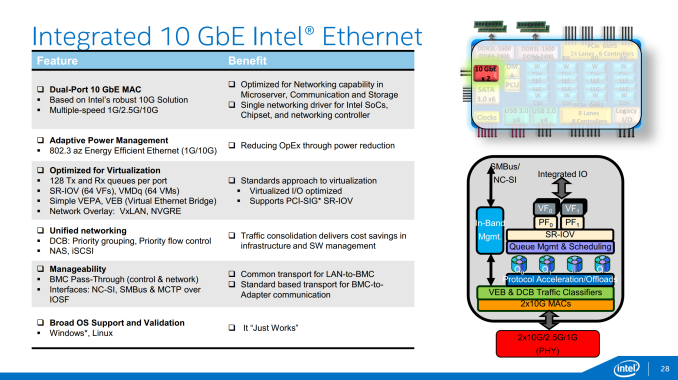

Speaking of networking, the SoC will have bandwidth for two direct 10GbE connections, which can also operate in 1G and 2.5G modes. These are optimized for virtualization, allowing 128 Tx and Rx queues per port as well as SR-IOV and VMDq enhancements. With the integration on board, driver support should also be easier to manage than with external controller solutions.

Integrating Ethernet onto the SoC is rather interesting because the SoC is rated at a 45W TDP. Normally a server chipset is rated for around 13W, with 10G Ethernet at 7-13W. Thus the storage and networking additions alone can come to 20W, leaving 25W for the cores themselves. As a result, the cores are clocked at 2.0 GHz base for the 8-core D-1540, and 2.2 GHz for the 4-core D-1520. Both SKUs will turbo up to 2.5 GHz when needed.
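The power budget reasoning above is simple subtraction, sketched here using only the figures quoted in the text. Treating these ratings as strictly additive is a simplification, and the names are illustrative.

```python
SOC_TDP_W = 45            # rated TDP of the SoC
CHIPSET_W = 13            # typical server chipset rating quoted above
ETHERNET_10G_W = (7, 13)  # quoted range for 10G Ethernet


def core_budget_w(tdp_w, uncore_w):
    """Power left for the cores after subtracting chipset and networking."""
    return tdp_w - uncore_w


def core_budget_range():
    """(worst case, best case) core budget across the quoted Ethernet range."""
    low_eth, high_eth = ETHERNET_10G_W
    worst = core_budget_w(SOC_TDP_W, CHIPSET_W + high_eth)
    best = core_budget_w(SOC_TDP_W, CHIPSET_W + low_eth)
    return worst, best


if __name__ == "__main__":
    worst, best = core_budget_range()
    print(f"Core budget: {worst}W to {best}W out of {SOC_TDP_W}W")
```

The 20W figure in the text corresponds to the low end of the Ethernet range (13W + 7W). At the high end, the cores would be left with closer to 19W.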

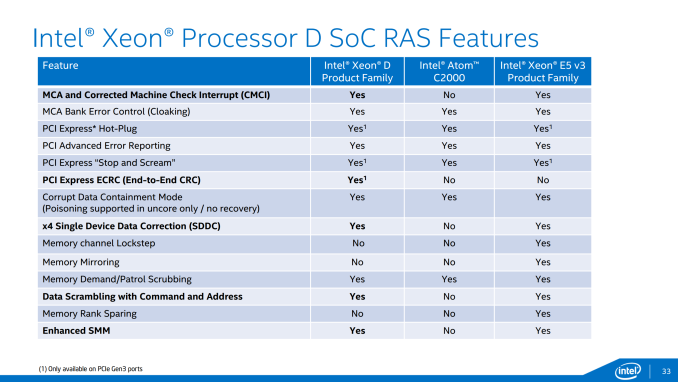

The SoC also supports the more common server and enterprise aspects normally associated with this product range – virtualization, separate external system control and RAS (reliability, availability and serviceability).

The RAS features are a big jump up from the Atom C2000 range, although there are one or two missing relating to memory from the Xeon E5 v3 counterparts.

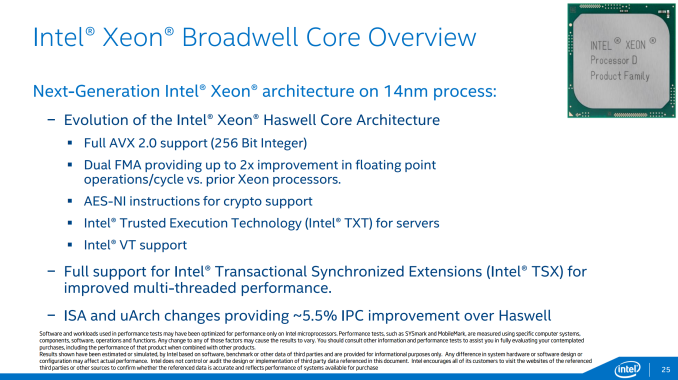

Intel is making some interesting claims on performance, specifically 3.4x better performance for the high end SKU compared to the Atom C2750 (eight core Silvermont) and 1.7x better performance per watt. Breaking down these comparisons, we have Silvermont against Broadwell, which is a significant difference in architecture, and then an eight-core/eight-thread C2750 against the eight-core/sixteen-thread D-1540, which should improve performance when software can take advantage of the threading. A performance per watt improvement should have been expected moving from 22nm Silvermont to 14nm Broadwell. Bundle these together, and the 3.4x / 1.7x numbers seem reasonable. The more pertinent number perhaps is the 5.5% IPC increase over Haswell due to microarchitecture improvements.
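The two headline ratios can also be combined: since performance per watt is performance divided by power, dividing the performance ratio by the performance-per-watt ratio gives the power ratio implied by Intel's comparison. A minimal sketch, assuming both ratios come from the same workload and measurement (the function name is illustrative):

```python
PERF_RATIO = 3.4           # D-1540 vs C2750, as claimed
PERF_PER_WATT_RATIO = 1.7  # D-1540 vs C2750, as claimed


def implied_power_ratio(perf_ratio, perf_per_watt_ratio):
    """perf/W = perf / power, so power ratio = perf ratio / (perf/W ratio)."""
    return perf_ratio / perf_per_watt_ratio


if __name__ == "__main__":
    ratio = implied_power_ratio(PERF_RATIO, PERF_PER_WATT_RATIO)
    print(f"Implied power ratio (D-1540 vs C2750): {ratio:.1f}x")
```

Put differently, the claims imply the D-1540 drew roughly twice the power of the C2750 in whatever test Intel used.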

What Xeon D also brings to the table is fixed silicon for Transactional Synchronization Extensions (TSX), which were disabled due to an obscure bug found in the middle of last year. This is combined with full AVX 2.0 support, dual FMA and AES-NI instructions.
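On a Linux system, one way to see whether these instruction set features are exposed is to look at the `flags` line in `/proc/cpuinfo`. The sketch below assumes the usual Linux flag names (`rtm` and `hle` for the two halves of TSX, plus `avx2`, `fma` and `aes`). The helper names are made up for illustration, and nothing here is specific to Xeon D.

```python
from pathlib import Path

# Feature name -> flag name as it appears in /proc/cpuinfo on x86 Linux.
FEATURE_FLAGS = {
    "TSX (RTM)": "rtm",
    "TSX (HLE)": "hle",
    "AVX2": "avx2",
    "FMA": "fma",
    "AES-NI": "aes",
}


def parse_flags(cpuinfo_text):
    """Return the set of CPU flags from the first 'flags' line found."""
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() == "flags":
            return set(value.split())
    return set()


def feature_report(flags):
    """Map each feature of interest to whether its flag is present."""
    return {name: flag in flags for name, flag in FEATURE_FLAGS.items()}


if __name__ == "__main__":
    cpuinfo = Path("/proc/cpuinfo")
    if cpuinfo.exists():
        report = feature_report(parse_flags(cpuinfo.read_text()))
        for name, present in report.items():
            print(f"{name}: {'yes' if present else 'no'}")
    else:
        print("No /proc/cpuinfo on this system")
```

Note that a flag can be absent even on capable silicon if firmware or a microcode update has disabled the feature.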

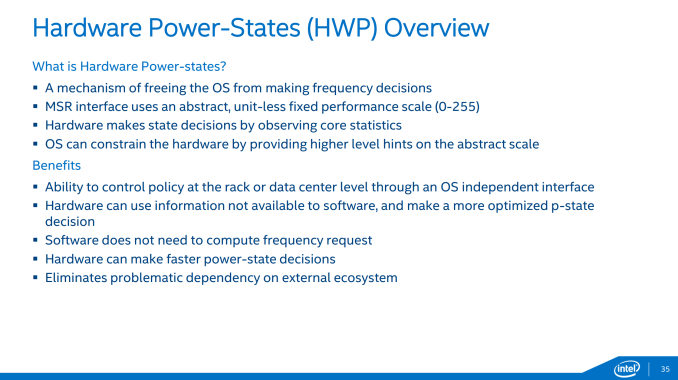

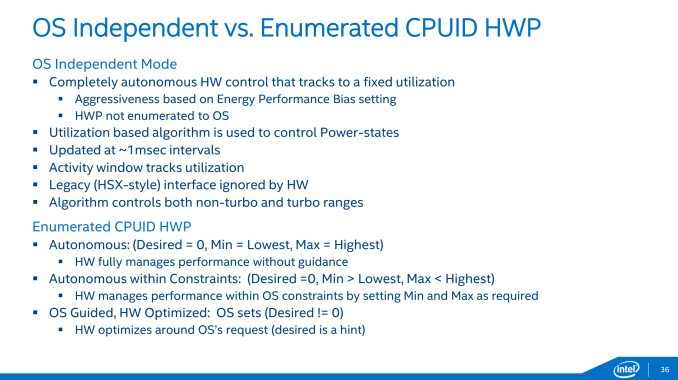

The other element of the equation is power, with Xeon D implementing Hardware Power States – a function that allows the hardware to adjust performance based on core metrics, independent of the operating system. This allows for quicker load-to-idle times by monitoring the CPU at a level that might not be possible in software, and for adjusting behavior on the fly with finer granularity depending on load and power consumption.

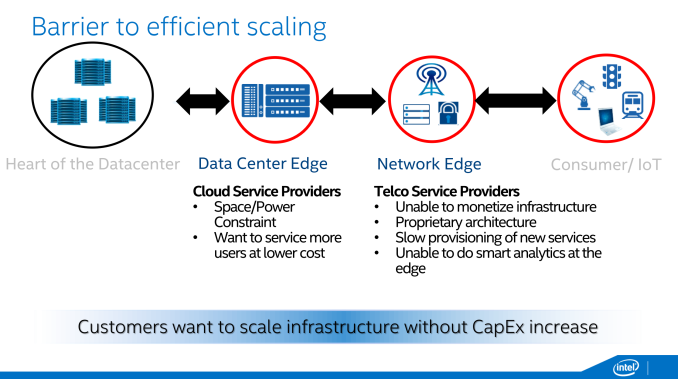

Xeon D is aimed at the ‘Data Center Edge’ for the start of 2015, moving into the ‘Networking Edge’ during the second half of the year. These edge-case scenarios are meant to tackle a region where sometimes the C2750 line was not powerful enough, or the Xeon E3 line was not as cost effective as it could be – Xeon D aims to satisfy both sides of the equation.

IoT related implementations are being considered, again for the second half of the year. We probed Intel on the likelihood of seeing any consumer oriented implementations of such a platform, and although they recognized that there might be some niche situations they had not considered where Xeon D might be appropriate, they were bearish on actually aiming anything at the consumer. Xeon D is purely an enterprise play. That being said, so was the Atom C2750, but it made its way into the hands of the consumer via products such as ASRock Rack’s C2750D4I which we reviewed last year.

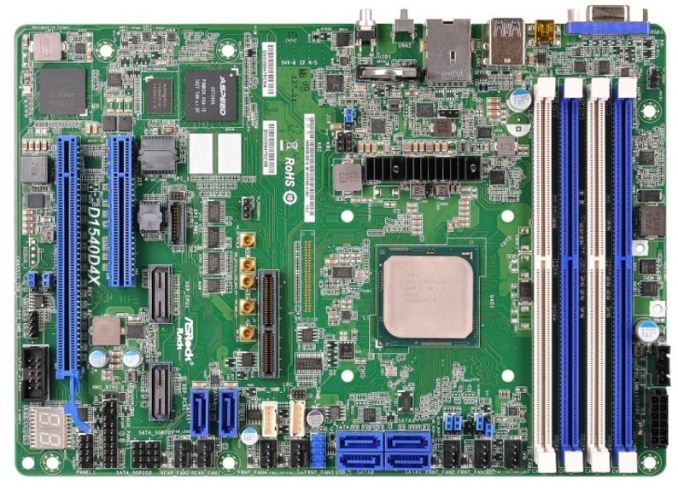

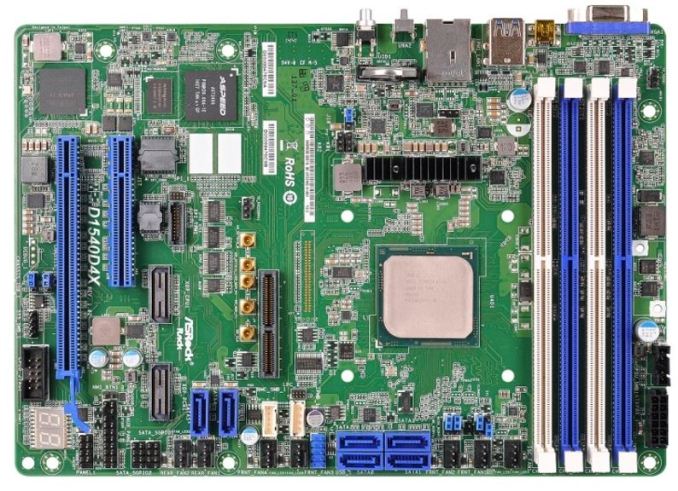

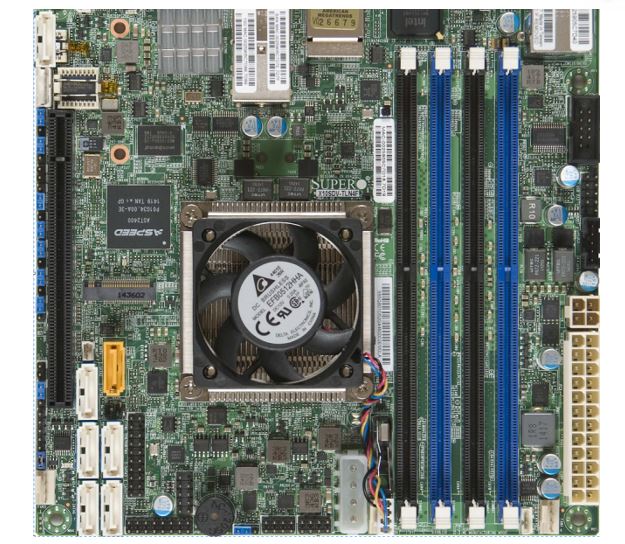

Current indications of products coming to market are by way of ASRock and also Supermicro. Patrick at ServeTheHome caught these two in his sights:

The ASRock Rack D1540D4X is a server build, as shown by the limited power delivery as well as the storage and PCIe arrangement. Networking is via a selected PHY card, supporting either dual 10GBase-T or SFP+. ASRock Rack is showing this setup at CeBIT, hopefully in action.

The Supermicro X10SDV-TLN4F comes in a regular mini-ITX form factor, supporting dual 10GBase-T along with two I350-AM2 GbE ports and IPMI 2.0 with KVM. The PCIe lanes of Xeon D come into effect here, giving a PCIe 3.0 x4 M.2 slot for 2242 and 2280 sized M.2 storage cards.

Estimated prices for the motherboards put them in the $800-$1000 bracket with the CPU, perhaps matching or besting the equivalent Avoton platform + X540-T2 bundling. Interestingly Patrick also caught wind that Tyan is not going to play in this market, with no products planned.

Despite the official launch today, we are more likely to see product available in volume in April, although a few select partners might be able to ask their regular distributors about testing today. Both Johan and I are looking into sampling a few of these for AnandTech reviews, so stay tuned for those. We have some deeper information on the architecture to pore through between now and then which we will go into for the reviews. Johan recently compared the Xeon E3 v3, Atom C2000 and X-Gene 1 platforms, to which Xeon D is another piece of the puzzle.

Source: Intel, ServeTheHome

38 Comments


Lonearchon - Tuesday, March 10, 2015

It's the main power input. ASRock has used a smaller power input connector before, such as on the MT-C224 board.

DanNeely - Tuesday, March 10, 2015

My question was about its pinout/etc. While the MT-C224 uses an 18 pin connector, 4 of the pins aren't used; so it's possible this is just a rationalized version of that one. That said, it appears to be playing a bit fast and loose with the ATX spec. The MT-C224 identifies 9 ground pins and 5 for DC in. Looking at the pinout on the ATX PSU adapter cable from Newegg's gallery, 4 of the DC in pins are for 12V, and the 5th appears to be the PS-ON signal. This means it's playing a bit fast and loose with the ATX spec, because it's ignoring the power-good (gray wire) signal, which is normally used by the PSU to signal when its voltages have stabilized after powering on and to signal an operational fault (hopefully soon enough to keep the mobo from smoking itself). It can (probably) get away with that by waiting past the maximum in spec wait for voltage stabilization before attempting to post and by using an onboard power monitoring circuit.

f0d - Tuesday, March 10, 2015

what makes you think this is atom class? it uses broadwell cores at a low clock

this isnt baytrail or cherrytrail - its the full fat broadwell cores

"Xeon D, also known as Broadwell-DE, combines up to eight high performance Broadwell desktop cores and the PCH onto a single die"

as far as i can tell its broadwell-e with the southbridge on the same substrate

f0d - Tuesday, March 10, 2015

was supposed to be response to michael bay but something got messed up

Ktracho - Tuesday, March 10, 2015

I could use this in a combination NAS/PC/mini-workstation, since I unfortunately don't play games. I could run Windows for personal use and Linux for the server side, and not feel too guilty about leaving it turned on 24 hours a day.

My other reaction is that combined with, say, an NVIDIA K80 or two, this would provide fantastic computing per watt, much more efficiently than a single node in the Titan at Oak Ridge National Laboratory, which uses AMD 12-16 core CPUs if I remember correctly, running at around the same frequency as this CPU, and NVIDIA Tesla cards on a PCIe Gen. 2 interface. The networking on those nodes may be a bit faster perhaps, but 10G is not bad.

MrHorizontal - Tuesday, March 10, 2015

OK, so they've built the chip, it's now time to ramp up pressure on Intel to package this for laptops, and finally get rid of the 16GB RAM, no ECC and 4-core limits artificially imposed on mobile workstations.

I've never understood why the Xeon E3 wasn't repackaged into a mobile form factor much like a Core i7 is...

DanaGoyette - Tuesday, March 17, 2015

It seems odd that they list both VT and SR-IOV, yet don't list VT-d.

How useful is SR-IOV without VT-d? (I've used VT-d, but not SR-IOV.)

Supercell99 - Monday, March 30, 2015

I really don't see anything great here. 10GbE is still expensive because of the switches, not so much the adapters. A chip that runs 8 x 2.0GHz is really not that impressive in the server world at these prices; if it could do SMP or something then it would get some attention. But as another commenter pointed out, Intel is only competing with itself in the high end server market and they aren't going to under-cut themselves by offering something at a much lower price point with the same computational horsepower as current Xeons.