Samsung SM951 (512GB) PCIe SSD Review

by Kristian Vättö on February 24, 2015 8:00 AM EST

AnandTech Storage Bench - The Destroyer

The Destroyer has been an essential part of our SSD test suite for nearly two years now. It was crafted to provide a benchmark for very IO-intensive workloads, which is where you most often notice the difference between drives. It's not necessarily the most relevant test for an average user, but for anyone with a heavier IO workload The Destroyer should do a good job of characterizing performance.

AnandTech Storage Bench - The Destroyer

| Workload | Description | Applications Used |
|---|---|---|
| Photo Sync/Editing | Import images, edit, export | Adobe Photoshop CS6, Adobe Lightroom 4, Dropbox |
| Gaming | Download/install games, play games | Steam, Deus Ex, Skyrim, Starcraft 2, BioShock Infinite |
| Virtualization | Run/manage VM, use general apps inside VM | VirtualBox |
| General Productivity | Browse the web, manage local email, copy files, encrypt/decrypt files, backup system, download content, virus/malware scan | Chrome, IE10, Outlook, Windows 8, AxCrypt, uTorrent, AdAware |
| Video Playback | Copy and watch movies | Windows 8 |
| Application Development | Compile projects, check out code, download code samples | Visual Studio 2012 |

The table above describes the workloads of The Destroyer in a bit more detail. Most of the workloads are run independently in the trace, but obviously there are various operations (such as backups) in the background.

| AnandTech Storage Bench - The Destroyer - Specs | |
|---|---|
| Reads | 38.83 million |
| Writes | 10.98 million |
| Total IO Operations | 49.8 million |
| Total GB Read | 1583.02 GB |
| Total GB Written | 875.62 GB |
| Average Queue Depth | ~5.5 |
| Focus | Worst case multitasking, IO consistency |

The name Destroyer comes from the sheer fact that the trace contains nearly 50 million IO operations. That's enough IO operations to effectively put the drive into steady-state and give an idea of the performance in worst case multitasking scenarios. About 67% of the IOs are sequential in nature with the rest ranging from pseudo-random to fully random.
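As a rough cross-check of the specs table, the short sketch below recomputes the total IO count and derives the average transfer size per read and per write. The variable names are illustrative, and the conversion assumes decimal units (1 GB = 1,000,000 KB), which the table does not state.

```python
# Figures copied from the specs table above.
reads_millions = 38.83
writes_millions = 10.98
gb_read = 1583.02
gb_written = 875.62

# Reads plus writes should match the "Total IO Operations" row (49.8 million).
total_ios_millions = reads_millions + writes_millions


def average_io_size_kb(total_gb: float, ios_millions: float) -> float:
    """Average transfer size in KB, ASSUMING 1 GB = 1,000,000 KB (decimal units)."""
    total_kb = total_gb * 1_000_000
    total_ios = ios_millions * 1_000_000
    return total_kb / total_ios


avg_read_kb = average_io_size_kb(gb_read, reads_millions)
avg_write_kb = average_io_size_kb(gb_written, writes_millions)
read_share_pct = 100 * reads_millions / total_ios_millions

print(f"Total IOs: {total_ios_millions:.2f} million")
print(f"Average read size: {avg_read_kb:.1f} KB")
print(f"Average write size: {avg_write_kb:.1f} KB")
print(f"Reads as share of all IOs: {read_share_pct:.1f}%")
```

Under that unit assumption, reads average roughly 41 KB and writes roughly 80 KB, so the trace is read-heavy by count while its writes are the larger transfers on average.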

AnandTech Storage Bench - The Destroyer - IO Breakdown

| IO Size | <4KB | 4KB | 8KB | 16KB | 32KB | 64KB | 128KB |
|---|---|---|---|---|---|---|---|
| % of Total | 6.0% | 26.2% | 3.1% | 2.4% | 1.7% | 38.4% | 18.0% |

I've included a breakdown of the IOs in the table above, which accounts for 95.8% of total IOs in the trace. The remaining IOs are of relatively rare in-between sizes, none of which has a significant (>1%) share on its own. Over half of the transfers are large IOs, and about one fourth are 4KB in size.
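The shares quoted above can be re-added from the table in a few lines. This is only an arithmetic check; treating "large" as the 64KB and 128KB buckets is an assumption made here for illustration, not a definition given in the text.

```python
# Percentages copied from the IO breakdown table above.
io_size_share = {
    "<4KB": 6.0,
    "4KB": 26.2,
    "8KB": 3.1,
    "16KB": 2.4,
    "32KB": 1.7,
    "64KB": 38.4,
    "128KB": 18.0,
}

# Share of the trace covered by the listed sizes, and what is left over.
listed_total = sum(io_size_share.values())
unlisted_total = 100.0 - listed_total

# ASSUMPTION: "large IOs" means the 64KB and 128KB buckets.
large_share = io_size_share["64KB"] + io_size_share["128KB"]

print(f"Listed sizes cover {listed_total:.1f}% of IOs")
print(f"Other sizes cover {unlisted_total:.1f}% of IOs")
print(f"64KB + 128KB IOs: {large_share:.1f}%")
print(f"4KB IOs: {io_size_share['4KB']:.1f}%")
```

With that reading of "large", the 64KB and 128KB buckets together make up 56.4% of IOs, which is consistent with "over half", while 4KB IOs at 26.2% are close to one fourth.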

AnandTech Storage Bench - The Destroyer - QD Breakdown

| Queue Depth | 1 | 2 | 3 | 4-5 | 6-10 | 11-20 | 21-32 | >32 |
|---|---|---|---|---|---|---|---|---|
| % of Total | 50.0% | 21.9% | 4.1% | 5.7% | 8.8% | 6.0% | 2.1% | 1.4% |

Despite the average queue depth of 5.5, half of the IOs happen at a queue depth of one, and scenarios where the queue depth is higher than 10 are rather infrequent.
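To make "rather infrequent" concrete, the sketch below accumulates the queue depth shares from the table. It is plain arithmetic on the published percentages and assumes the final ">32" entry is 1.4%.

```python
# Percentages copied from the QD breakdown table above, in bucket order.
qd_share = [
    ("1", 50.0),
    ("2", 21.9),
    ("3", 4.1),
    ("4-5", 5.7),
    ("6-10", 8.8),
    ("11-20", 6.0),
    ("21-32", 2.1),
    (">32", 1.4),  # ASSUMPTION: read as 1.4%
]

# Running total: share of IOs issued at or below each bucket's queue depth.
cumulative = []
running = 0.0
for bucket, share in qd_share:
    running += share
    cumulative.append((bucket, round(running, 1)))

# Share of IOs issued at a queue depth above 10 (the last three buckets).
share_above_10 = sum(share for _, share in qd_share[5:])

for bucket, total in cumulative:
    print(f"QD {bucket:>5}: {total:5.1f}% cumulative")
print(f"IOs at QD > 10: {share_above_10:.1f}%")
```

Only 9.5% of IOs are issued at a queue depth above 10, and 71.9% are issued at a queue depth of one or two. An average of about 5.5 alongside that distribution suggests the average is pulled up by a small number of deep-queue bursts.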

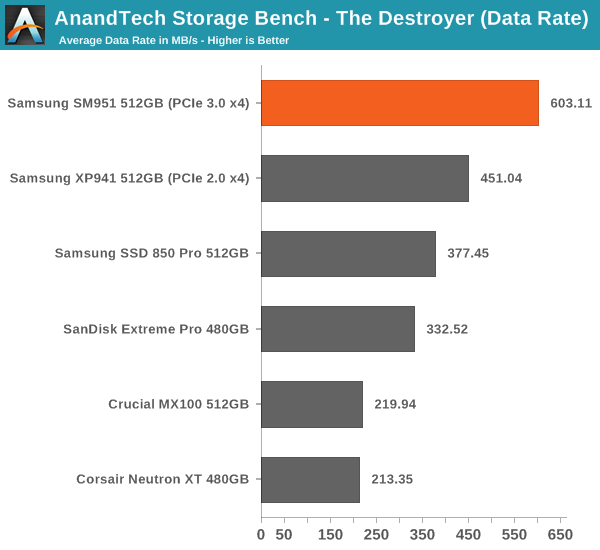

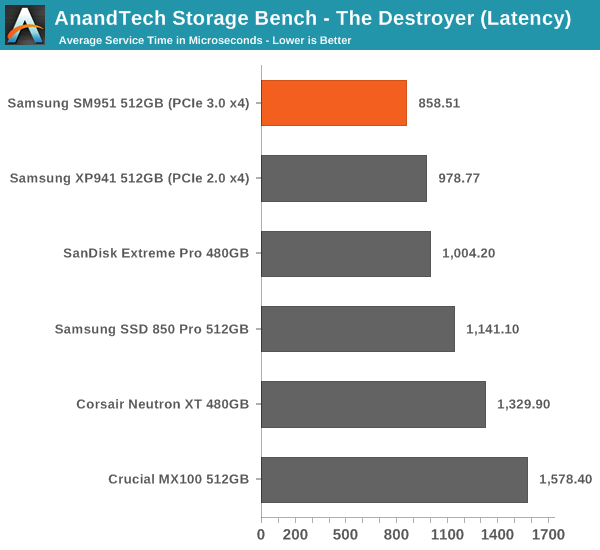

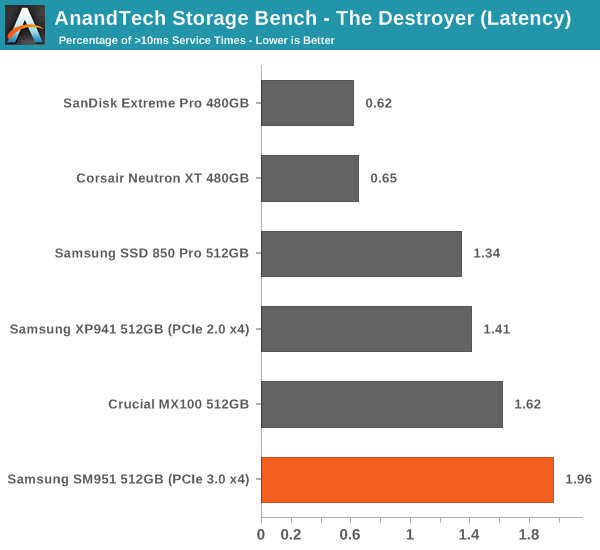

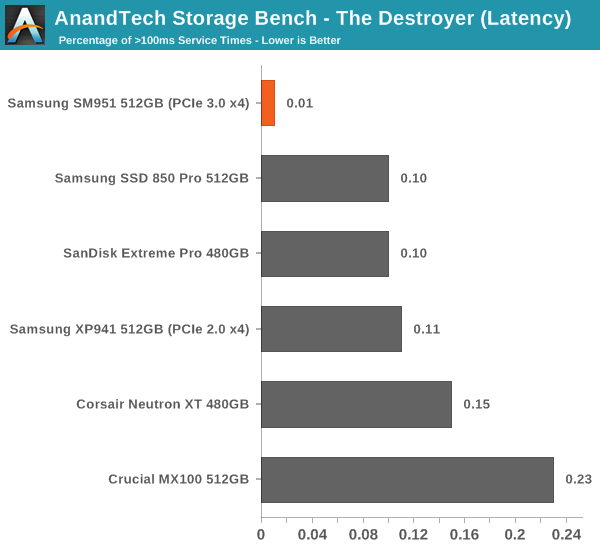

The two key metrics I'm reporting haven't changed, and I'll continue to report both data rate and latency because the two have slightly different focuses. Data rate measures the speed of the data transfer, so it emphasizes large IOs, which simply account for a much larger share of the total amount of data. Latency, on the other hand, ignores the IO size, so all IOs are given the same weight in the calculation. Both metrics are useful, although in terms of system responsiveness I think latency is more critical. As a result, I'm also reporting two new stats that provide very good insight into high-latency IOs: the share of >10ms and >100ms IOs as a percentage of the total.
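The difference between the two metrics can be illustrated with a minimal sketch. The `IO` record, the `summarize` helper, the sample values, and the choice to divide total bytes by a busy time are all hypothetical; the article does not describe how its own figures are computed.

```python
from dataclasses import dataclass


@dataclass
class IO:
    """One completed IO from a trace (hypothetical record layout)."""
    size_bytes: int
    latency_ms: float


def summarize(ios: list[IO], busy_time_s: float) -> dict[str, float]:
    """Compute the four reported stats for a list of IOs.

    ASSUMPTION: data rate is total bytes divided by the drive's busy time.
    """
    count = len(ios)
    total_bytes = sum(io.size_bytes for io in ios)
    return {
        # Byte-weighted: large IOs dominate this number.
        "data_rate_mbps": total_bytes / busy_time_s / 1e6,
        # Count-weighted: every IO contributes equally, whatever its size.
        "avg_latency_ms": sum(io.latency_ms for io in ios) / count,
        "pct_over_10ms": 100 * sum(io.latency_ms > 10 for io in ios) / count,
        "pct_over_100ms": 100 * sum(io.latency_ms > 100 for io in ios) / count,
    }


# Made-up sample: two small 4KB IOs and two large 128KB IOs.
sample = [
    IO(size_bytes=4096, latency_ms=0.1),
    IO(size_bytes=4096, latency_ms=12.0),
    IO(size_bytes=131072, latency_ms=0.5),
    IO(size_bytes=131072, latency_ms=150.0),
]
stats = summarize(sample, busy_time_s=1.0)
for name, value in stats.items():
    print(f"{name}: {value:.3f}")
```

In this made-up sample the two 128KB IOs carry about 97% of the bytes, so they dominate the data rate, yet each of the four IOs counts equally toward the average latency and the >10ms and >100ms shares.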

The SM951 takes the lead easily and provides a ~34% increase in data rate over the XP941. The advantage over some of the slower SATA 6Gbps drives is nearly threefold, which speaks for the performance benefit that PCIe and especially PCIe 3.0 provide.

The latency benefit isn't as significant, which suggests that the SM951 provides a substantial boost in large IO performance, but that its performance at small IO sizes isn't dramatically better.

Despite having the lowest average latency, the SM951 actually has the most >10ms IOs, with nearly 2% of the IOs having a latency higher than 10ms. I did some thermal throttling testing (see the dedicated page for full results) and the SM951 seems to throttle fairly aggressively, so my hypothesis is that the high number is due to throttling, which momentarily limits the drive's throughput (and hence increases the latency) to cool down the drive.

However, the SM951 has the fewest >100ms IOs, which means that despite the possible throttling, the service times of its slow IOs mostly stay between 10ms and 100ms.

128 Comments

DanNeely - Tuesday, February 24, 2015 - link

"In any case, I strongly recommend having a decent amount of airflow inside the case. My system only has two case fans (one front and one rear) and I run it with the side panels off for faster accessibility, so mine isn't an ideal setup for maximum airflow."

With the space between a pair of PCIe x16 slots appearing to have become the most popular spot to put M2 slots I worry that thermal throttling might end up being worse for a lot of end user systems than on your testbench because it'll be getting broiled by GPUs. OTOH even with a GPU looming overhead, it should be possible to slap an aftermarket heatsink on using thermal tape. My parts box has a few I think would work that I've salvaged from random hardware (single wide GPUs???) over the years; if you've got anything similar lying around I'd be curious if it'd be able to fix the throttling problem.

Kristian Vättö - Tuesday, February 24, 2015 - link

I have a couple Plextor M6e Black Edition drives, which are basically M.2 adapters with an M.2 SSD and a quite massive heatsink. I currently have my hands full because of upcoming NDAs, but I can certainly try to test the SM951 with a heatsink and the case fully assembled before it starts to ship.

DanNeely - Tuesday, February 24, 2015 - link

Ok, I'd definitely be interested in seeing an update when you've got the time. Thanks.

Railgun - Tuesday, February 24, 2015 - link

While I can see it's a case of something is better than nothing, given the mounting options of an M.2 drive, a couple of chips will not get any direct cooling benefit. In fact, they're sitting in a space where virtually zero airflow will be happening.

The Plextor solution and any like it is all well and good, but for those that utilize a native M.2 port on any given mobo, they're kind of out of luck. As it turns out, I also have a GPU blocking just above mine for any decent sized passive cooling; 8cm at best. Maybe that's enough, but the two chips on the other side have the potential to simply cook.

DanNeely - Tuesday, February 24, 2015 - link

Depends if it's the flash chips or the ram/controller that're overheating. I think the latter two are on top and heat sinkable.

jhoff80 - Tuesday, February 24, 2015 - link

It'd be even worse too for many of the mini-ITX boards that are putting the M.2 slot underneath the board.

I mean, something like M.2 is ideal for these smaller cases where cabling can become an issue, so having the slot on the bottom of the board combined with a drive needing airflow sounds like grounds for a disaster.

extide - Tuesday, February 24, 2015 - link

Yeah I bet it's the controller that is being throttled, because IT is overheating, not the actual NAND chips.

ZeDestructor - Tuesday, February 24, 2015 - link

I second this motion. Preferably as a separate article so I don't miss it (I only get to AT via RSS nowadays)

rpg1966 - Tuesday, February 24, 2015 - link

Maybe a dumb question, but: the 512GB drive has 4 storage chips (two on the front, two on the back), therefore each chip stores 128GB. If the NAND chips are 64Gbit (8GB), that means there are 16 packages in each chip - is that right?

Kristian Vättö - Tuesday, February 24, 2015 - link

That is correct. Samsung has been using 16-die packages for quite some time now in various products.