The DirectX 12 Performance Preview: AMD, NVIDIA, & Star Swarm

by Ryan Smith on February 6, 2015 2:00 PM EST

Posted in: GPUs, AMD, Microsoft, NVIDIA, DirectX 12

Frame Time Consistency & Recordings

Last but not least, we also wanted to look at frame time consistency across Star Swarm, our two vendors, and the various APIs available to them. Next to CPU efficiency gains, one of the other touted benefits of low-level APIs like DirectX 12 is that developers can better control frame time pacing, because the API and driver are doing fewer things under the hood and behind an application’s back. Inefficient memory management operations, resource allocation, and shader compiling in particular can result in unexpected and undesirable momentary drops in performance. However, while low-level APIs can improve on this aspect, that doesn’t necessarily mean high-level APIs are bad at it. The important distinction is whether a low-level API takes frame pacing from bad to good, or merely from good to better.

On a technical note, these frame times are measured within (and logged by) Star Swarm itself. So these are not “FCAT” results that are measuring the end of the pipeline, nor is that possible right now due to the lack of an overlay option for DirectX 12.
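To make "consistency" concrete, here is a minimal sketch of how a list of logged frame times could be summarized. It assumes the frame times have already been extracted as milliseconds per frame; the function name `frame_time_summary` and the choice of metrics are illustrative assumptions, not Star Swarm's log format or the method behind the graphs in this article.

```python
import statistics


def frame_time_summary(frame_times_ms):
    """Summarize per-frame render times given in milliseconds.

    Hypothetical helper for illustration; it does not parse any
    particular benchmark's log file.
    """
    if len(frame_times_ms) < 2:
        raise ValueError("need at least two frame times")

    ordered = sorted(frame_times_ms)
    mean = statistics.fmean(ordered)

    # Nearest-rank 99th percentile, using integer math to avoid float rounding:
    # rank = ceil(0.99 * n), then take the value at that 1-based rank.
    rank = (99 * len(ordered) + 99) // 100
    p99 = ordered[rank - 1]

    # Largest change between consecutive frames, a rough proxy for visible stutter.
    worst_swing = max(
        abs(later - earlier)
        for earlier, later in zip(frame_times_ms, frame_times_ms[1:])
    )

    return {
        "mean_ms": mean,
        "p99_ms": p99,
        "stdev_ms": statistics.stdev(frame_times_ms),
        "worst_swing_ms": worst_swing,
        "avg_fps": 1000.0 / mean,
    }


if __name__ == "__main__":
    # Made-up numbers: a slow but steady run versus a faster run with one hitch.
    slow_but_steady = [50.0] * 100
    fast_with_hitch = [15.0] * 99 + [60.0]
    for name, run in (("steady", slow_but_steady), ("hitch", fast_with_hitch)):
        print(name, frame_time_summary(run))
```

A run can be slow and still consistent: a high mean frame time with a small standard deviation and small frame-to-frame swings is the "slow, but at least consistent" case, while a low mean with large swings is the case that low-level APIs are supposed to help avoid.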

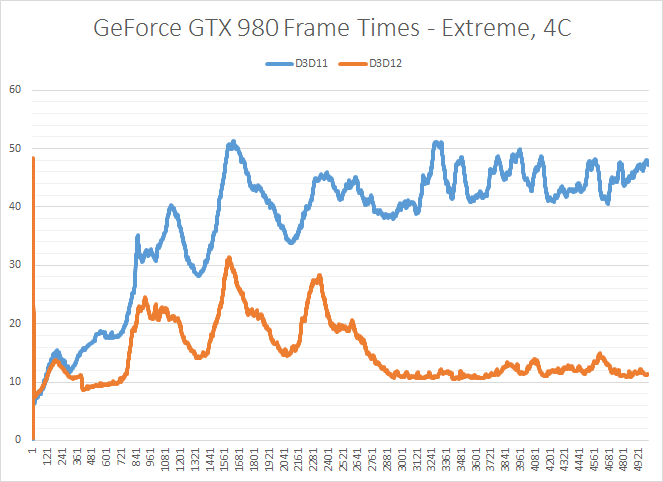

Starting with the GTX 980, we can immediately see why we can’t always write off high-level APIs. Benchmark non-determinism aside, both DirectX 11 and DirectX 12 produce consistent frame times; one is just much, much faster than the other. Both on paper and subjectively in practice, Star Swarm has little trouble maintaining consistent frame times on the GTX 980. Even if DirectX 11 is slow, it is at least consistent.

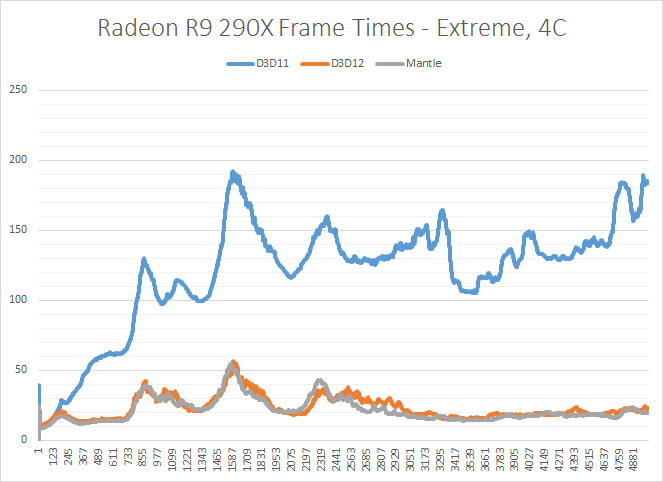

The story is much the same for the R9 290X. DirectX 11 and DirectX 12 both produce consistent results, with neither API experiencing frame time swings. Meanwhile Mantle falls into the same category as DirectX 12, producing similarly consistent performance and frame times.

Ultimately it’s clear from these results that if DirectX 12 is going to lead to any major differences in frame time consistency, Star Swarm is not the best showcase for it. With DirectX 11 already producing consistent results, DirectX 12 has little to improve on.

Finally, along with our frame time consistency graphs, we have also recorded videos of shorter run-throughs on both the GeForce GTX 980 and Radeon R9 290X. With YouTube now supporting 60fps, these videos are frame-accurate representations of what we see when we run the Star Swarm benchmark, showing first-hand the overall frame time consistency among all configurations, and of course the massive difference in performance.

245 Comments

ObscureAngel - Saturday, February 7, 2015 - link

Ryan can you do an article demonstrating the low performance of AMD GPUs in low end CPUs like i3 or anything, in more CPU Bound games comparing to nvidia GPUs in the same CPUs?

Unworthy websites have done it, like GameGPU.ru or Digital foundry.

They don't have so much expression because well, sometimes they are a bit dumb.

I confirmed that recently with my own benchmarks, AMD GPUs really have much less performance in the same CPU (low-end CPUs) than an nvidia GPU.

If you look into it and publish maybe that would put a little pressure on AMD and they start to look into it.

But not sure if you can do it, AMD gives your website AMD GPUS and CPUs to benchmark, i'm pretty sure AMD wouldn't like to read the truth..

But since Futuremark new 3dmark is close to release that new benchmark that benchmarks overhead/drawcalls.

It could be nice to give a little highlight of that problem with AMD.

Many people are starting to notice that problem, but AMD are ignoring everyone that claims the lack of performance, so we need somebody strong like Anandtech or other website to analyse these problems and publish to everyone see that something is wrong.

Keep in mind that AMD just fixed the frametime problem in crossfire, cause one website (which i dont remember) publish that, and people start to complain about it, and they start to fix it, and they really fix it.

Now, we already have the complains but we dont have the upper voice like you guys.

okp247 - Sunday, February 8, 2015 - link

Sorry, my bad. The numbers I've stated in the above posts were indeed from either the Follow or Attract scenario.

So what is up with the underutilized AMD cards? Clearly, they are not stretching their legs under DX11. In the article you touch upon the CPU batch submission times, and how these are taking a (relatively) long time on the AMD cards. Is this the case also with other draw-call heavy games or is it a fluke in Star Swarm?

ObscureAngel - Monday, February 9, 2015 - link

It happens on games too.

I did a video and everything about it.

Spread the word, we need to get AMD attention for this.. Since they dont answer me i decided to publicly start to say bad things about them :D

https://www.youtube.com/watch?v=2-nvGOK6ud8

killeak - Saturday, February 7, 2015 - link

Both API (D3D12 and Mantle) are under NDA. In the case of D3D12, in theory if you are working with D3D12 you can't speak about it unless you have explicit authorization from MS. The same with Mantle and AMD.

I hope D3D12 goes public by GDC time, I mean the public beta no the final version, after that things will change ;)

Klimax - Saturday, February 7, 2015 - link

Thanks for numbers. They show perfectly how broken and craptastick entire POS is. There are extreme number of idiocies and stupidities in it that it couldn't pass any review by any competent developer.

1) Insane number of batches. You want to have at least 100 objects in one to actually see benefit. (Civilization V default settings) To see quite better performance I would say at least 1000 objects to be in one. (Civilization V test with adjusted config) Star Swarm has between 10 to 50 times more batches then Civilization. (Precise number cannot be said as I don't have number of objects to be drawn reported from that "benchmark")

2) Absolutely insane number of superfluous calls. Things like IASetPrimitiveTopology are called (almost) each time an object is to be drawn with same parameters(constants) and with large number of batches those functions add to overhead. That's why you see such large time for DX11 draw - it has to reprocess many things repeatedly. (Some caching and shortcuts can be done as I am sure NVidia implemented them, but there are limits even for otherwise very cheap functions)

3) Simulation itself is so atrociously written that it doesn't really scale at all! This is in space, where number of intersection is very small, so you can process it at maximum possible parallelization.

360s run had 4 cores used for 5,65s with 5+ for 6,1s in total. Bad is weak word...

And I am pretty sure I haven't uncovered all. Note: I used Intel VTune for analysis 1 year ago. Since then no update came so I don't think anything changed at all... (Seeing those numbers I am sure of it)

nulian - Saturday, February 7, 2015 - link

The draw calls are misused on purpose in this demo to show how much better it has become. The advantage for normal games is they can do more light and more effects that use a lot of draw calls without breaking the performance on pc. It is one of the biggest performance different between console and PC draw calls.

BehindEnemyLines - Saturday, February 7, 2015 - link

Or maybe they are doing that on purpose to show the bottleneck of DX11 API? Just a thought. If this is a "poorly" written performance demo, then you can only imagine the DX12 improvements after it's "properly" written.

Teknobug - Saturday, February 7, 2015 - link

Wasn't there some kind of leaked info that DX12 was basically a copy of Mantle with DX API? Wouldn't surprise me that it'd come close to Mantle's performance.

dragonsqrrl - Sunday, February 8, 2015 - link

Right, cause Microsoft only started working on DX12 when Mantle was announced...

bloodypulp - Sunday, February 8, 2015 - link

You're missing the point. Mantle/DX12 are so similar you could essentially call DX12 the Windows-only version of Mantle. By releasing Mantle, AMD gave developers an opportunity to utilize the new low-level APIs nearly two years before Microsoft was ready to release their own as naturally it was tied to their OS. Those developers who had the foresight to take advantage of Mantle during those two years clearly benefited. They'll launch DX12-ready games before their competitors.