NVIDIA Publishes Statement on GeForce GTX 970 Memory Allocation

by Ryan Smith on January 24, 2015 8:00 PM EST

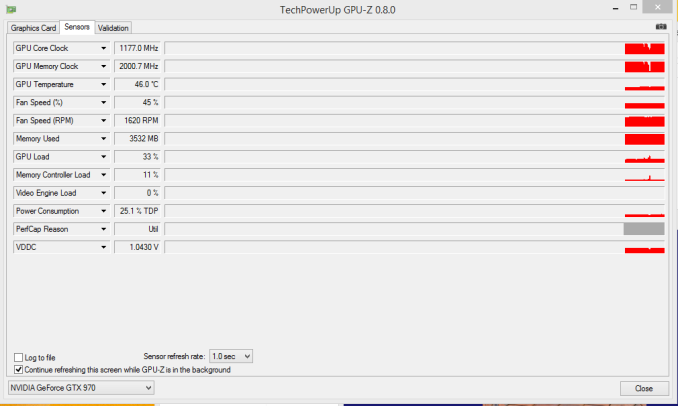

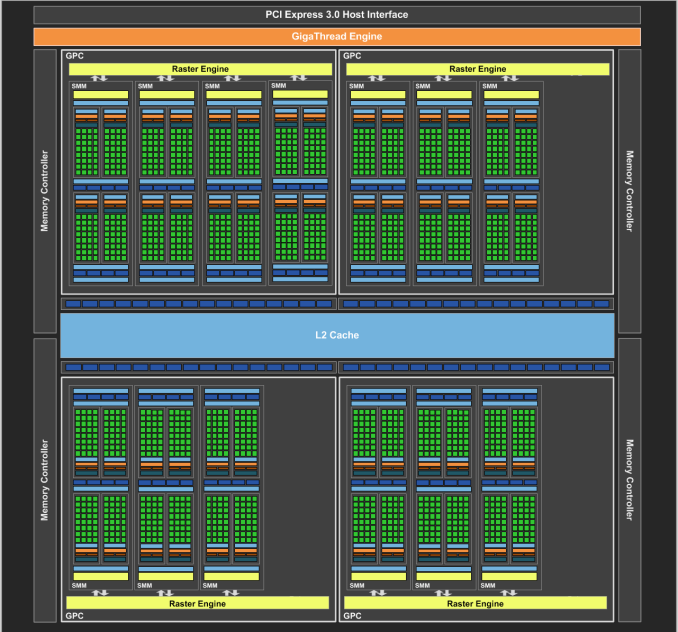

On our forums and elsewhere over the past couple of weeks there has been quite a bit of chatter on the subject of VRAM allocation on the GeForce GTX 970. To quickly summarize a more complex issue, various GTX 970 owners had observed that the GTX 970 was prone to topping out its reported VRAM allocation at 3.5GB rather than 4GB, and that meanwhile the GTX 980 was reaching 4GB allocated in similar circumstances. This unusual outcome was at odds with what we know about the cards and the underlying GM204 GPU, as NVIDIA’s specifications state that the GTX 980 and GTX 970 have identical memory configurations: 4GB of 7GHz GDDR5 on a 256-bit bus, split amongst 4 ROP/memory controller partitions. In other words, there was no known reason that the GTX 970 and GTX 980 should be behaving differently when it comes to memory allocation.

GTX 970 Memory Allocation (Image Courtesy error-id10t of Overclock.net Forums)

Since then there has been some further investigation into the matter using various tools written in CUDA in order to try to systematically confirm this phenomenon and to pinpoint what is going on. Those tests seemingly confirm the issue (the GTX 970 has something unusual going on after 3.5GB of VRAM allocation), but they have not come any closer to explaining just what is going on.

Finally, more or less the entire technical press has been pushing NVIDIA on the issue, and this morning they have released a statement on the matter, which we are republishing in full:

The GeForce GTX 970 is equipped with 4GB of dedicated graphics memory. However the 970 has a different configuration of SMs than the 980, and fewer crossbar resources to the memory system. To optimally manage memory traffic in this configuration, we segment graphics memory into a 3.5GB section and a 0.5GB section. The GPU has higher priority access to the 3.5GB section. When a game needs less than 3.5GB of video memory per draw command then it will only access the first partition, and 3rd party applications that measure memory usage will report 3.5GB of memory in use on GTX 970, but may report more for GTX 980 if there is more memory used by other commands. When a game requires more than 3.5GB of memory then we use both segments.

We understand there have been some questions about how the GTX 970 will perform when it accesses the 0.5GB memory segment. The best way to test that is to look at game performance. Compare a GTX 980 to a 970 on a game that uses less than 3.5GB. Then turn up the settings so the game needs more than 3.5GB and compare 980 and 970 performance again.

Here’s an example of some performance data:

GeForce GTX 970 Performance

| Game | Settings | GTX980 | GTX970 |
|---|---|---|---|
| Shadows of Mordor | <3.5GB setting = 2688x1512 Very High | 72fps | 60fps |
| | >3.5GB setting = 3456x1944 | 55fps (-24%) | 45fps (-25%) |
| Battlefield 4 | <3.5GB setting = 3840x2160 2xMSAA | 36fps | 30fps |
| | >3.5GB setting = 3840x2160 135% res | 19fps (-47%) | 15fps (-50%) |
| Call of Duty: Advanced Warfare | <3.5GB setting = 3840x2160 FSMAA T2x, Supersampling off | 82fps | 71fps |
| | >3.5GB setting = 3840x2160 FSMAA T2x, Supersampling on | 48fps (-41%) | 40fps (-44%) |

On GTX 980, Shadows of Mordor drops about 24% on GTX 980 and 25% on GTX 970, a 1% difference. On Battlefield 4, the drop is 47% on GTX 980 and 50% on GTX 970, a 3% difference. On CoD: AW, the drop is 41% on GTX 980 and 44% on GTX 970, a 3% difference. As you can see, there is very little change in the performance of the GTX 970 relative to GTX 980 on these games when it is using the 0.5GB segment.
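The percentages in NVIDIA's table can be recomputed from the quoted frame rates. The short Python sketch below is an illustration only and is not part of NVIDIA's statement; the names `RESULTS`, `percent_drop`, and `summarize` are hypothetical. It also reports the GTX 970's frame rate as a fraction of the GTX 980's at each setting, which is arguably the more direct way to see whether the gap between the cards widens. Note that the "1%" and "3%" differences NVIDIA cites are differences in percentage points between two drops.

```python
# Frame rates (fps) transcribed from NVIDIA's table:
# each entry is (below 3.5GB setting, above 3.5GB setting).
RESULTS = {
    "Shadows of Mordor": {"GTX 980": (72, 55), "GTX 970": (60, 45)},
    "Battlefield 4": {"GTX 980": (36, 19), "GTX 970": (30, 15)},
    "Call of Duty: Advanced Warfare": {"GTX 980": (82, 48), "GTX 970": (71, 40)},
}


def percent_drop(before, after):
    """Percentage of frame rate lost when moving from `before` to `after`."""
    return (before - after) / before * 100.0


def summarize(results):
    """Return one summary dict per game with drops and 970/980 ratios."""
    rows = []
    for game, cards in results.items():
        below_980, above_980 = cards["GTX 980"]
        below_970, above_970 = cards["GTX 970"]
        drop_980 = percent_drop(below_980, above_980)
        drop_970 = percent_drop(below_970, above_970)
        rows.append({
            "game": game,
            "drop_980": drop_980,
            "drop_970": drop_970,
            # Difference between the two drops, in percentage points.
            "gap_points": drop_970 - drop_980,
            # GTX 970 frame rate as a fraction of the GTX 980's.
            "ratio_below": below_970 / below_980,
            "ratio_above": above_970 / above_980,
        })
    return rows


if __name__ == "__main__":
    for row in summarize(RESULTS):
        print(
            f"{row['game']}: 980 drop {row['drop_980']:.1f}%, "
            f"970 drop {row['drop_970']:.1f}%, "
            f"gap {row['gap_points']:.1f} points, "
            f"970/980 ratio {row['ratio_below']:.3f} -> {row['ratio_above']:.3f}"
        )
```

Because these are whole-number frame rates from single runs, small differences between the two cards' drops should be read with the measurement's margin of error in mind.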

Before discussing the content of the statement, it’s best to explain the nature of the message itself. As is almost always the case when issuing blanket technical statements to the wider press, NVIDIA has opted for a simpler, high level message that’s light on technical details in order to make the content accessible to more users. For NVIDIA and their customer base this makes all the sense in the world (and we don’t resent them for it), but it goes without saying that “fewer crossbar resources to the memory system” does not come close to fully explaining the issue at hand, why it’s happening, and how in detail NVIDIA is handling VRAM allocation. Technical users and technical press such as ourselves would like more information, and while we can’t speak for NVIDIA, rarely is NVIDIA’s first statement their last statement in these matters, so we do not believe this is the last we will hear on the subject.

In any case, NVIDIA’s statement affirms that the GTX 970 does materially differ from the GTX 980. Despite the outward appearance of identical memory subsystems, there is an important difference here that makes a 512MB partition of VRAM less performant or otherwise decoupled from the other 3.5GB.

Being a high level statement, NVIDIA’s focus is on the performance ramifications – mainly, that there generally aren’t any – and while we’re not prepared to affirm or deny NVIDIA’s claims, it’s clear that this only scratches the surface. VRAM allocation is a multi-variable process; drivers, applications, APIs, and OSes all play a part here, and just because VRAM is allocated doesn’t necessarily mean it’s in use, or that it’s being used in a performance-critical situation. Using VRAM for an application-level resource cache and actively loading 4GB of resources per frame are two very different scenarios, for example, and would certainly be impacted differently by NVIDIA’s split memory partitions.
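To make the allocation question more concrete, here is a toy Python model of the split. It assumes the simplest possible reading of NVIDIA's statement: the 3.5GB segment is filled first and only the remainder spills into the 0.5GB segment. That fill order is an assumption for illustration, not a description of what the driver actually does, and the names `FAST_SEGMENT_MB`, `SLOW_SEGMENT_MB`, `split_allocation`, and `slow_fraction` are hypothetical.

```python
# Segment sizes taken from NVIDIA's statement (3.5GB + 0.5GB = 4GB).
FAST_SEGMENT_MB = 3584  # 3.5GB, the higher-priority segment
SLOW_SEGMENT_MB = 512   # 0.5GB, the lower-priority segment


def split_allocation(requested_mb):
    """Split a request into (fast_mb, slow_mb).

    ASSUMPTION: the fast segment is filled completely before any data
    lands in the slow segment. Real driver behavior is not documented.
    """
    if requested_mb < 0:
        raise ValueError("requested_mb must be non-negative")
    if requested_mb > FAST_SEGMENT_MB + SLOW_SEGMENT_MB:
        raise ValueError("request exceeds the card's 4GB of VRAM")
    fast_mb = min(requested_mb, FAST_SEGMENT_MB)
    slow_mb = requested_mb - fast_mb
    return fast_mb, slow_mb


def slow_fraction(requested_mb):
    """Fraction of the request that lands in the slow segment."""
    if requested_mb == 0:
        return 0.0
    _, slow_mb = split_allocation(requested_mb)
    return slow_mb / requested_mb


if __name__ == "__main__":
    for request in (3000, 3600, 4096):
        fast_mb, slow_mb = split_allocation(request)
        print(f"{request}MB -> fast {fast_mb}MB, slow {slow_mb}MB "
              f"({slow_fraction(request):.2%} in slow segment)")
```

Under this model, a workload that only barely exceeds 3.5GB places a tiny share of its data in the slow segment, while one that fills all 4GB places an eighth of it there. How much that matters would still depend on how often the data in the slow segment is actually touched, which this sketch does not model.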

For the moment with so few answers in hand we’re not going to spend too much time trying to guess what it is NVIDIA has done, but from NVIDIA’s statement it’s clear that there’s some additional investigating left to do. If nothing else, what we’ve learned today is that we know less than we thought we did, and that’s never a satisfying answer. To that end we’ll keep digging, and once we have the answers we need we’ll be back with a deeper answer on how the GTX 970’s memory subsystem works and how it influences the performance of the card.

93 Comments

haukionkannel - Sunday, January 25, 2015

Hmmm they are not faulty cards. They just have a worse memory controller than the 980 has, and when you compare the price of the cards, you can take the cheaper 970 or the much more expensive 980 with the better memory controller.

This is more like a "not so good" feature than a bug, because it has been done on purpose.

xthetenth - Monday, January 26, 2015

That's worse. They're 4GB cards sold as 4GB cards, not 4GB cards terms and conditions may apply. Between Keplers having too little RAM and this I have way less faith that an NV card will hold onto its launch performance relative to other cards than I did half a year ago.

Siana - Wednesday, January 28, 2015

Small realistic assessment: most of the laptops I have seen fail have failed on the largest chip cracking its balls due to flexing of the mainboard. And most of these haven't had an nVidia in them!

So it's really a very common issue, nothing special. Was it more pronounced on the particular nVidia in question? Yes, in the regard that the cracking also happened by pure thermal cycling within specified thermal parameters. But I doubt it seriously affected the laptop life, unless your laptop had a rigid magnesium body.

Alexvrb - Wednesday, January 28, 2015

You have no idea how bad it was. Sorry. Google Nvidia Bumpgate. It was a HUGE issue. The failure rate was staggering, MOST of the ones with Nvidia MCPs that relied on the integrated graphics died within a couple years. It wasn't due to mainboard flexing. It was due to mismatched pad-bump materials, incorrect underfill selection (not firm enough), and uneven power draw.

It wasn't quite as pronounced on dedicated graphics but there were certain models that were prone to early failure. Heat wasn't truly the cause (as it occurred within expected/rated operational temp ranges) but it did exacerbate the issue.

Ualdayan - Sunday, January 25, 2015

The worst thing about bumpgate was, as someone who had multiple videocard failures (particularly 7950GX2s because they ran so hot anyway) everybody else insisted *I* must be at fault for the failures. Everybody insisted there was no way I was seeing multiple failures within months of each other without something else being wrong like my PSU (nevermind that it was happening in multiple different computers). Especially the manufacturer ("there's nothing wrong with our cards") before the problem was admitted by nVidia.

GeorgeH - Saturday, January 24, 2015

They acknowledge that there is a performance problem, albeit slight - in the examples they chose to use. It isn't unreasonable to assume there might be edge cases where a program assumes that all 4GB is created equal and is more memory performance restricted.

Overall I'd bet this will end up a complete non-issue. That said, the fact that Nvidia "hid" this deficiency (by not clearly disclosing it from the beginning) just gives the internet drama machine way too much fuel. Stupid move on Nvidia's part.

CaedenV - Saturday, January 24, 2015

Slower processors do not scale evenly under higher amounts of stress compared to faster processors. Having a 3-5% performance difference under identical heavier loads can be accounted for by lots of things, not just a difference in vRAM speeds. These performance numbers are normal and what you would expect when comparing cards with these specs.

Gigaplex - Saturday, January 24, 2015

You're calling a 1-3% performance difference (within margin of error in measurements) when comparing 2 products that differ in more than just memory configurations a performance problem? No, they do not acknowledge there is a performance problem, and even use those figures to back up the claim that there isn't one.

(There might be a performance problem under specific workloads, but their examples don't show it)

GeorgeH - Sunday, January 25, 2015

"Slight" performance difference. And they clearly admit it: "there is very little change in the performance ... when it is using the 0.5GB segment." For Nvidia PR-speak, that's pretty clear. The single digit difference also makes it a non-issue, assuming that's as bad as it gets.

Horza - Sunday, January 25, 2015

It would be naive to assume that Nvidia chose examples that showed a worst-case scenario for the issue. How much of the slower 0.5GB segment was even in use? >3.5GB could mean 3.51GB which of course would have next to no impact on performance. I'm not implying this is some huge issue for owners, just that I'd like to know more.