The NVIDIA GeForce GTX 980 Review: Maxwell Mark 2

by Ryan Smith on September 18, 2014 10:30 PM EST

Crysis 3

Still one of our most punishing benchmarks, Crysis 3 needs no introduction. With Crysis 3, Crytek has gone back to trying to kill computers, and the game still holds the “most punishing shooter” title in our benchmark suite. Only in a handful of setups can we even run Crysis 3 at its highest (Very High) settings, and that’s still without AA. Crysis 1 was an excellent template for the kind of performance required to drive games for the next few years, and Crysis 3 looks to be much the same for 2014.

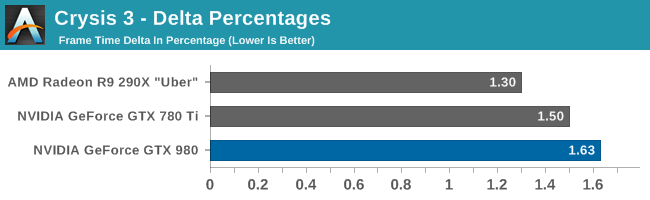

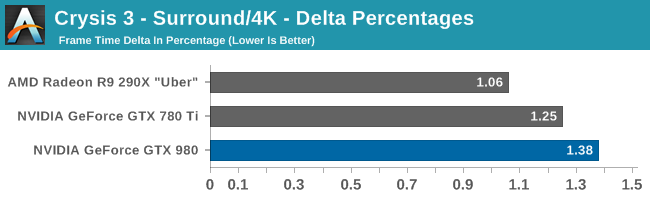

Meanwhile delta percentage performance is extremely strong here. Everyone, including the GTX 980, is well below 3%.

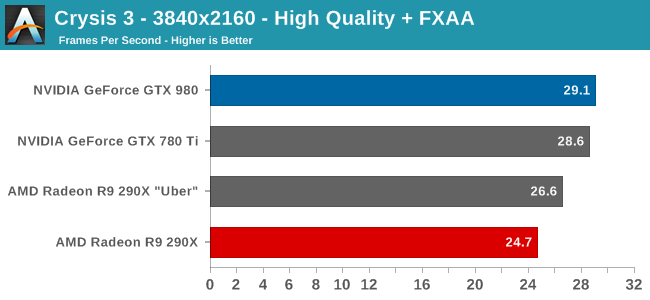

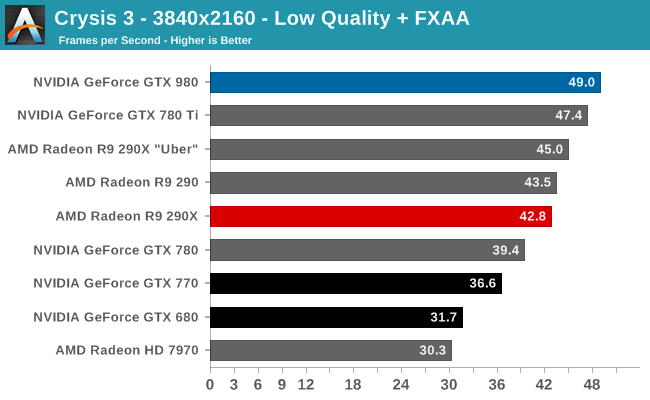

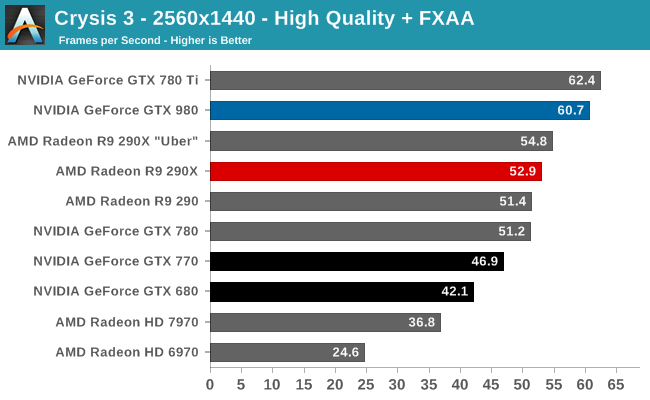

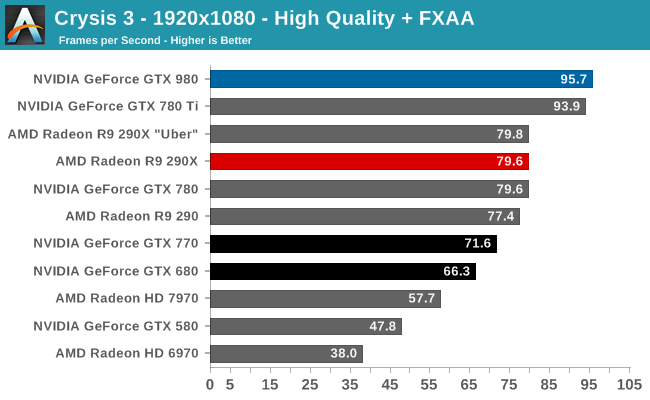

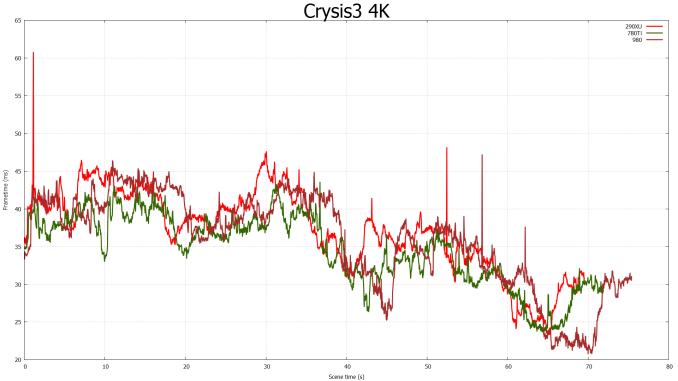

Always a punishing game, Crysis 3 ends up being one of the only games where the GTX 980 doesn’t take a meaningful lead over the GTX 780 Ti. To be clear, the GTX 980 wins in most of these benchmarks, but not in all of them, and even when it does win the GTX 780 Ti is never far behind. For this reason the GTX 980’s lead over the GTX 780 Ti and the rest of our single-GPU video cards is never more than a few percent, even at 4K. Otherwise at 1440p we’re looking at the tables being turned, with the GTX 980 taking a 3% deficit. This is the only time the GTX 980 will lose to NVIDIA’s previous generation consumer flagship.

As for the comparison versus AMD’s cards, NVIDIA has been doing well in Crysis 3 and that extends to the GTX 980 as well. The GTX 980 takes a 10-20% lead over the R9 290XU depending on the resolution, with its advantage shrinking as the resolution grows. During the launch of the R9 290 series we saw that AMD tended to do better than NVIDIA at higher resolutions, and while this pattern has narrowed some, it has not gone away. AMD is still the most likely to pull even with the GTX 980 at 4K resolutions, despite the additional ROPs available to the GTX 980.

This will also be the worst showing for the GTX 980 relative to the GTX 680. GTX 980 is still well in the lead, but below 4K that lead is just 44%. NVIDIA can’t even do 50% better than the GTX 680 in this game until we finally push the GTX 680 out of its comfort zone at 4K.

All of this points to Crysis 3 being very shader limited at these settings. NVIDIA has significantly improved their CUDA core occupancy on Maxwell, but in these extreme situations GTX 980 will still struggle with the CUDA core deficit versus GK110, or the limited 33% increase in CUDA cores versus GTX 680. Which is a feather in Kepler’s cap if anything, showing that it’s not entirely outclassed if given a workload that maps well to its more ILP-sensitive shader architecture.
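To put the core-count comparison in numbers, here is a small sketch. The CUDA core counts are the publicly listed specifications for each card rather than figures quoted on this page, so treat them as an assumption of the example.

```python
# CUDA core counts from the public spec sheets (assumption: not stated on this page).
CUDA_CORES = {
    "GTX 980": 2048,     # GM204
    "GTX 780 Ti": 2880,  # GK110
    "GTX 680": 1536,     # GK104
}

def percent_change(new, old):
    """Relative change from old to new, in percent."""
    return 100.0 * (new - old) / old

# Increase in core count going from GTX 680 to GTX 980.
increase_vs_680 = percent_change(CUDA_CORES["GTX 980"], CUDA_CORES["GTX 680"])

# Deficit in core count of GTX 980 relative to GTX 780 Ti (reported as a positive number).
deficit_vs_780ti = -percent_change(CUDA_CORES["GTX 980"], CUDA_CORES["GTX 780 Ti"])

print(f"GTX 980 vs GTX 680:    +{increase_vs_680:.0f}% CUDA cores")
print(f"GTX 980 vs GTX 780 Ti: -{deficit_vs_780ti:.0f}% CUDA cores")
```

Under these numbers the GTX 980 has about a third more cores than the GTX 680 and a bit under 30% fewer than the GTX 780 Ti, which is the gap Maxwell's improved occupancy has to make up in a shader-bound game.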

The delta percentage story continues to be unremarkable with Crysis 3. GTX 980 does technically fare a bit worse, but it’s still well under 3%. Keep in mind that delta percentages do become more sensitive at higher framerates (there is less absolute time to pace frames), so a slight increase here is not unexpected.
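The sensitivity at higher framerates can be illustrated with a rough sketch. It assumes delta percentage is the mean absolute difference between consecutive frame times divided by the mean frame time; that definition, the function names, and the synthetic frame times are all assumptions of this example, not measured data.

```python
def delta_percentage(frame_times_ms):
    """Mean absolute frame-to-frame difference as a percentage of mean frame time.

    Assumed definition for illustration only.
    """
    diffs = [abs(b - a) for a, b in zip(frame_times_ms, frame_times_ms[1:])]
    mean_diff = sum(diffs) / len(diffs)
    mean_frame = sum(frame_times_ms) / len(frame_times_ms)
    return 100.0 * mean_diff / mean_frame

def alternating_frames(fps, jitter_ms, count=100):
    """Synthetic frame times that alternate +/- jitter_ms around the ideal frame time."""
    base = 1000.0 / fps
    return [base + (jitter_ms if i % 2 == 0 else -jitter_ms) for i in range(count)]

# The same absolute pacing error (0.25 ms either side) at two framerates.
slow = delta_percentage(alternating_frames(fps=30, jitter_ms=0.25))
fast = delta_percentage(alternating_frames(fps=60, jitter_ms=0.25))

print(f"30 fps: {slow:.2f}%   60 fps: {fast:.2f}%")
```

With identical absolute jitter, doubling the framerate halves the frame time and so doubles the resulting percentage, which is why a faster card can post a slightly higher delta percentage without pacing frames any worse in absolute terms.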

274 Comments


Kutark - Sunday, September 21, 2014 - link

I'd hold on to it. Thats still a damn fine card. Honestly you could probably find a used one on ebay for a decent price and SLI it up.

IMO though id splurge for a 970 and call it a day. I've got dual 760's right now, first time i've done SLI in prob 10 years. And honestly, the headaches just arent worth it. Yeah, most games work, but some games will have weird graphical issues (BF4 near release was a big one, DOTA 2 doesnt seem to like it), others dont utilize it well, etc. I kind of wish id just have stuck with the single 760. Either way, my 2p

SkyBill40 - Wednesday, September 24, 2014 - link

@ Kutark:

Yeah, I tried to buy a nice card at that time despite wanting something higher than a 660Ti. But, as my wallet was the one doing the dictating, it's what I ended up with and I've been very happy. My only concern with a used one is just that: it's USED. Electronics are one of those "no go" zones for me when it comes to buying second hand since you have no idea about the circumstances surrounding the device and seeing as it's a video card and not a Blu Ray player or something, I'd like to know how long it's run, whether it's been OC'd or not, and the like. I'd be fine with buying another one new but not for the prices I'm seeing that are right in line with a 970. That would be dumb.

In the end, I'll probably wait it out a bit more and decide. I'm good for now and will probably buy a new 144Hz monitor instead.

Kutark - Sunday, September 21, 2014 - link

Psshhhhh.... I still have my 3dfx Voodoo SLI card. Granted its just sitting on my desk, but still!!!

In all seriousness though, my roommate, who is NOT a gamer, is still using an old 7800gt card i had laying around because the video card in his ancient computer decided to go out and he didnt feel like building a new one. Can't say i blame him, Core 2 quad's are juuust fine for browsing the web and such.

Kutark - Sunday, September 21, 2014 - link

Voodoo 2, i meant, realized i didnt type the 2.

justniz - Tuesday, December 9, 2014 - link

>> the power bills are so ridiculous for the 8800 GTX!

Sorry but this is ridiculous. Do the math.

Best info I can find is that your card is consuming 230w.

Assuming you're paying 15¢/kWh, even gaming for 12 hours a day every day for a whole month will cost you $12.59. Doing the same with a gtx980 (165w) would cost you $9.03/month.

So you'd be paying maybe $580 to save $3.56 a month.
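The arithmetic in the comment above can be checked with a short sketch. The wattages, price per kWh, and hours per day are the commenter's figures; the average month length of 365/12 days is an assumption, chosen because it reproduces the quoted dollar amounts, and the function name is hypothetical.

```python
HOURS_PER_DAY = 12           # commenter's worst-case gaming time
PRICE_PER_KWH = 0.15         # dollars, as assumed in the comment
DAYS_PER_MONTH = 365 / 12    # assumption: average month length

def monthly_cost(watts):
    """Electricity cost in dollars for one month at the given constant draw."""
    kwh = watts / 1000 * HOURS_PER_DAY * DAYS_PER_MONTH
    return kwh * PRICE_PER_KWH

old_card = monthly_cost(230)   # 8800 GTX figure from the comment
new_card = monthly_cost(165)   # GTX 980 figure from the comment
saving = old_card - new_card

# Months of savings needed to recover the commenter's rough $580 purchase price.
payback_months = 580 / saving

print(f"old: ${old_card:.2f}/month, new: ${new_card:.2f}/month")
print(f"saving: ${saving:.2f}/month, payback: {payback_months:.0f} months")
```

At these rates the payback period runs to well over a decade, which supports the commenter's point that power savings alone do not justify the upgrade.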

LaughingTarget - Friday, September 19, 2014 - link

There is a major difference between market capitalization and available capital for investment. Market Cap is just a rote multiplication of the number of shares outstanding by the current share price. None of this is available for company use and is only an indirect measurement of how well a company is performing. Nvidia has $1.5 billion in cash and $2.5 billion in available treasury stock. Attempting to match Intel's process would put a significant dent into that with little indication it would justify the investment. Nvidia already took on a considerable chunk of debt going into this year as well, which would mean that future offerings would likely go for a higher cost of debt, making such an investment even harder to justify.

While Nvidia is blowing out AMD 3:1 on R&D and capacity, Intel is blowing both of them away, combined, by a wide margin. Intel is dropping $10 billion a year on R&D, which is a full $3 billion beyond the entire asset base of Nvidia. It's just not possible to close the gap right now.

Silma - Saturday, September 20, 2014 - link

I don't think you realize how many billion dollars you need to spend to open a 14 nm factory, not even counting R&D & yearly costs.

It's humongous, there is a reason why there are so few foundries in the world.

sp33d3r - Saturday, September 20, 2014 - link

Well, if the NVIDIA/AMD CEOs is blind enough and cannot see it coming, then intel are gonna manufacture their next integrated graphics on a 10 or 8 nm chip and though immature will be a tough competition to them in terms of power and efficiency and even weight.

Remember currently pcs load integrated graphics as a must by intel and people add third party graphics only 'cause intels is not good enough literally adding weight of two graphics cards (Intels and third partys) to the product. Its all worlds apart more convenient when integrated graphics outperforms or able to challenge third party GPUs, we would just throw away NVIDIA and guess what they wont remain a monopoly anymore rather completely wiped out

Besides Intels integrated graphics are getting more mature in terms of not just die size with every launch, just compare 4000s with 5000s, it wont be long before they catch up.

wiyosaya - Friday, September 26, 2014 - link

I have to agree that it is partly not about the verification cost breaking the bank. However, what I think is the more likely reason is that since the current node works, they will try to wring every penny out of that node. Look at the prices for the Titan Z. If this is not an attempt to fleece the "gotta have it buyer," I don't know what is.

Ushio01 - Thursday, September 18, 2014 - link

Wouldn't paying to use the 22nm fabs be a better idea as they're about to become underused and all the teething troubles have been fixed?