Intel Xeon E5 Version 3: Up to 18 Haswell EP Cores

by Johan De Gelas on September 8, 2014 12:30 PM EST

Benchmark Configuration and Methodology

Due to time constraints and a failing RAID controller inside our iSCSI storage, this review concentrates mostly on the performance and performance/watt of server applications running on top of Ubuntu Server 14.04 LTS. To make things more interesting, we tested 4 different SKUs and included the previous generation Xeon E5-2697v2 (high end Ivy Bridge EP), Xeon E5-2680v2 (mid range Ivy Bridge EP) and E5-2690 (high end Sandy Bridge EP). All tests were done with the help of Dieter and Wannes of the Sizing Servers Lab.

We include the Opteron "Piledriver" 6376 server (configuration here) only for nostalgia and informational purposes. It is clear that AMD does not actively compete in the high end and midrange server CPU market in 2014.

Intel's Xeon E5 Server – "Wildcat Pass" (2U Chassis)

| CPU | Two Intel Xeon processor E5-2699 v3 (2.3GHz, 18c, 45MB L3, 145W) |

| RAM | 128GB (8x16GB) Samsung M393A2G40DB0 (RDIMM); 256GB (8x32GB) Samsung M386A4G40DM0 (LRDIMM) |

| Internal Disks | 2x Intel MLC SSD710 200GB |

| Motherboard | Intel Server Board Wildcat Pass |

| Chipset | Intel Wellsburg B0 |

| BIOS version | Beta BIOS dated August 9, 2014 |

| PSU | Delta Electronics 750W DPS-750XB A (80+ Platinum) |

The 32 GB LRDIMMs were added to the review thanks to the help of IDT and Samsung Semiconductor.

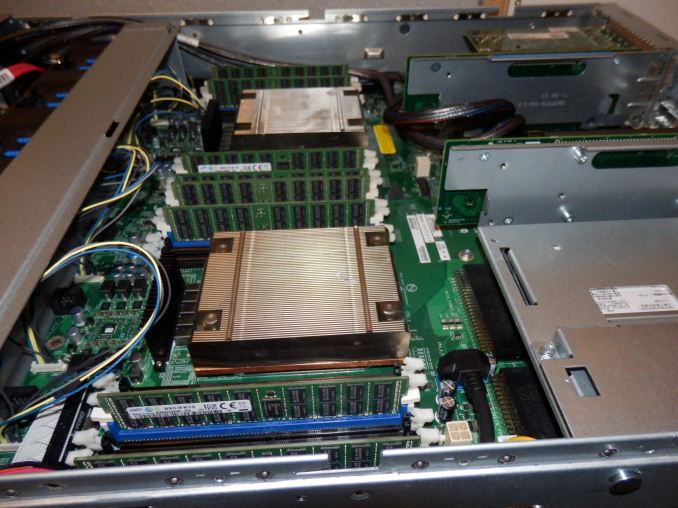

The picture above gives you a look inside the Xeon E5-2600v3 based server.

Supermicro 6027R-73DARF (2U Chassis)

| CPU | Two Intel Xeon processor E5-2697 v2 (2.7GHz, 12c, 30MB L3, 130W); Two Intel Xeon processor E5-2680 v2 (2.8GHz, 10c, 25MB L3, 115W); Two Intel Xeon processor E5-2690 (2.9GHz, 8c, 20MB L3, 135W) |

| RAM | 128GB (8x16GB) Samsung M393A2G40DB0 |

| Internal Disks | 2x Intel MLC SSD710 200GB |

| Motherboard | Supermicro X9DRD-7LN4F |

| Chipset | Intel C602J |

| BIOS version | R 3.0a (December the 6th, 2013) |

| PSU | Supermicro 740W PWS-741P-1R (80+ Platinum) |

All C-states are enabled in the BIOS of both servers.

Other Notes

Both servers are fed by a standard European 230V (16 Amps max.) power line. The room temperature is monitored and kept at 23°C by our Airwell CRACs. We use the Racktivity ES1008 Energy Switch PDU to measure power consumption. Using a PDU for accurate power measurements might seem pretty insane, but this is not your average PDU. Measurement circuits of most PDUs assume that the incoming AC is a perfect sine wave, but it never is. The Racktivity PDU, however, measures true RMS current and voltage at a very high sample rate: up to 20,000 measurements per second for the complete PDU.
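To illustrate why the sine-wave assumption matters, here is a minimal Python sketch comparing a true RMS calculation with the estimate of an average-responding meter that assumes a perfect sine. The waveform (a 50 Hz fundamental with a 30% third harmonic), the sample count, and all function names are assumptions chosen for illustration; nothing here describes the Racktivity firmware or our actual measurements.

```python
import math

SAMPLES_PER_SECOND = 20_000  # illustrative, borrowed from the sample rate quoted above
MAINS_HZ = 50                # assumed European mains frequency


def true_rms(samples):
    """Root-mean-square computed directly from instantaneous samples."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def sine_assumed_rms(samples):
    """Estimate of an average-responding meter: the rectified mean is
    scaled by pi / (2 * sqrt(2)), which is only correct for a pure sine."""
    rectified_mean = sum(abs(s) for s in samples) / len(samples)
    return rectified_mean * math.pi / (2 * math.sqrt(2))


def sine_wave(amplitude=1.0):
    """One second of an ideal sine wave."""
    return [
        amplitude * math.sin(2 * math.pi * MAINS_HZ * n / SAMPLES_PER_SECOND)
        for n in range(SAMPLES_PER_SECOND)
    ]


def distorted_wave(amplitude=1.0, third_harmonic=0.3):
    """Hypothetical distorted waveform: fundamental plus a third harmonic."""
    return [
        amplitude * (
            math.sin(2 * math.pi * MAINS_HZ * n / SAMPLES_PER_SECOND)
            + third_harmonic
            * math.sin(2 * math.pi * 3 * MAINS_HZ * n / SAMPLES_PER_SECOND)
        )
        for n in range(SAMPLES_PER_SECOND)
    ]


if __name__ == "__main__":
    for label, wave in (("pure sine", sine_wave()), ("distorted", distorted_wave())):
        actual = true_rms(wave)
        estimate = sine_assumed_rms(wave)
        error_pct = 100 * (estimate - actual) / actual
        print(f"{label}: true RMS {actual:.4f}, sine-assumed {estimate:.4f}, "
              f"error {error_pct:+.1f}%")
```

On the pure sine the two methods agree closely, while on the distorted waveform the sine-assumed estimate drifts away from the true RMS value. That is the kind of error a true RMS measurement avoids.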

85 Comments


bsd228 - Friday, September 12, 2014 - link

Now go price memory for M class Sun servers...even small upgrades are 5 figures and going 4 years back, a mid sized M4000 type server was going to cost you around 100k with moderate amounts of memory. And take up a large portion of the rack. Whereas you can stick two of these 18 core guys in a 1U server and have 10 of them (180 cores) for around the same sort of money.

Big iron still has its place, but the economics will always be lousy.

platinumjsi - Tuesday, September 9, 2014 - link

ASRock are selling boards with DDR3 support, any idea how that works? http://www.asrockrack.com/general/productdetail.as...

TiGr1982 - Tuesday, September 9, 2014 - link

Well... ASRock is generally famous for "marrying" different gen hardware. But here, since this is about DDR RAM, governed by the CPU itself (because the memory controller is inside the CPU), my only guess is that Xeon E5 v3 may have a dual-mode memory controller (supporting either DDR4 or DDR3), similar to what Phenom II had back in 2009-2011, which supported either DDR2 or DDR3, depending on where you plugged it in.

If so, then probably just the performance of E5 v3 with DDR3 may be somewhat inferior in comparison with DDR4.

alpha754293 - Tuesday, September 9, 2014 - link

No LS-DYNA runs? And yes, for HPC applications, you actually CAN have too many cores (because you can't keep the working cores pegged with work/something to do, so you end up with a lot of data migration between cores, which is bad, since moving data means that you're not doing any useful work ON the data). And how you decompose the domain (for both LS-DYNA and CFD) makes a HUGE difference on total runtime performance.

JohanAnandtech - Tuesday, September 9, 2014 - link

No, I hope to get that one done in the more Windows/ESXi oriented review.

Klimax - Tuesday, September 9, 2014 - link

Nice review. Next stop: Windows Server. (And MS-SQL..)

JohanAnandtech - Tuesday, September 9, 2014 - link

Agreed. PCIe Flash and SQL server look like a nice combination to test these new Xeons.

TiGr1982 - Tuesday, September 9, 2014 - link

Xeon 5500 series (Nehalem-EP): up to 4 cores (45 nm)

Xeon 5600 series (Westmere-EP): up to 6 cores (32 nm)

Xeon E5 v1 (Sandy Bridge-EP): up to 8 cores (32 nm)

Xeon E5 v2 (Ivy Bridge-EP): up to 12 cores (22 nm)

Xeon E5 v3 (Haswell-EP): up to 18 cores (22 nm)

So, in this progression, core count increases by 50% (1.5 times) almost each generation.

So, what's gonna be next:

Xeon E5 v4 (Broadwell-EP): up to 27 cores (14 nm) ?

Maybe four rows with 5 cores and one row with 7 cores (4 x 5 + 7 = 27) ?
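The arithmetic behind this guess can be checked in a few lines of Python. This is only a sketch of the commenter's extrapolation: the core counts are the ones listed above, and the 1.5x projection is speculation, not anything Intel has announced.

```python
# Maximum core counts per generation, copied from the list in the comment above.
max_cores = [
    ("Xeon 5500 series (Nehalem-EP)", 4),
    ("Xeon 5600 series (Westmere-EP)", 6),
    ("Xeon E5 v1 (Sandy Bridge-EP)", 8),
    ("Xeon E5 v2 (Ivy Bridge-EP)", 12),
    ("Xeon E5 v3 (Haswell-EP)", 18),
]


def growth_ratios(series):
    """Ratio of each generation's core count to that of its predecessor."""
    return [
        (later_name, later_cores / earlier_cores)
        for (_, earlier_cores), (later_name, later_cores) in zip(series, series[1:])
    ]


ratios = growth_ratios(max_cores)
for name, ratio in ratios:
    print(f"{name}: {ratio:.2f}x the previous generation")

# Hypothetical projection: apply the same 1.5x step once more.
projected = max_cores[-1][1] * 1.5
print(f"Projected next generation at 1.5x: {projected:.0f} cores")
```

The Westmere-EP to Sandy Bridge-EP step (6 to 8 cores) is the one that falls short of 1.5x, which is why the progression holds for "almost" each generation.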

wallysb01 - Wednesday, September 10, 2014 - link

My money is on 24 cores.

SuperVeloce - Tuesday, September 9, 2014 - link

What's the story with 2637v3? Only 4 cores and the same frequency and $1k price as 6core 2637v2? By far the most pointless cpu on the list.