Intel Xeon E5 Version 3: Up to 18 Haswell EP Cores

by Johan De Gelas on September 8, 2014 12:30 PM EST

Benchmark Configuration and Methodology

Due to time constraints and a failing RAID controller inside our iSCSI storage, this review concentrates mostly on the performance and performance/watt of server applications running on top of Ubuntu Server 14.04 LTS. To make things more interesting, we tested four different SKUs and included the previous-generation Xeon E5-2697 v2 (high-end Ivy Bridge EP), Xeon E5-2680 v2 (midrange Ivy Bridge EP) and E5-2690 (high-end Sandy Bridge EP). All tests were done with the help of Dieter and Wannes of the Sizing Servers Lab.

We include the Opteron "Piledriver" 6376 server (configuration here) only for nostalgic and informational purposes. It is clear that in 2014, AMD does not actively compete in the high-end and midrange server CPU market.

Intel's Xeon E5 Server – "Wildcat Pass" (2U Chassis)

| Component | Specification |
| --- | --- |
| CPU | Two Intel Xeon processor E5-2699 v3 (2.3GHz, 18c, 45MB L3, 145W) |
| RAM | 128GB (8x16GB) Samsung M393A2G40DB0 (RDIMM); 256GB (8x32GB) Samsung M386A4G40DM0 (LRDIMM) |
| Internal Disks | 2x Intel MLC SSD710 200GB |
| Motherboard | Intel Server Board Wildcat Pass |
| Chipset | Intel Wellsburg B0 |
| BIOS version | Beta BIOS dated August 9, 2014 |
| PSU | Delta Electronics 750W DPS-750XB A (80+ Platinum) |

The 32 GB LRDIMMs were added to the review thanks to the help of IDT and Samsung Semiconductor.

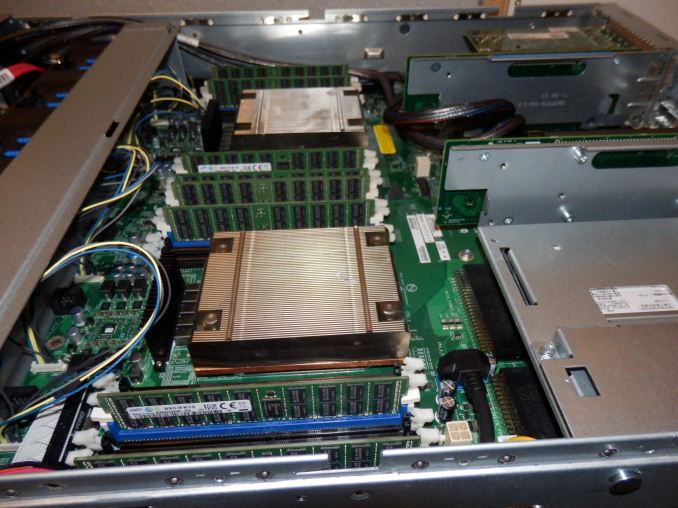

The picture above gives you a look inside the Xeon E5-2600 v3-based server.

Supermicro 6027R-73DARF (2U Chassis)

| Component | Specification |
| --- | --- |
| CPU | Two Intel Xeon processor E5-2697 v2 (2.7GHz, 12c, 30MB L3, 130W); two Intel Xeon processor E5-2680 v2 (2.8GHz, 10c, 25MB L3, 115W); two Intel Xeon processor E5-2690 (2.9GHz, 8c, 20MB L3, 135W) |
| RAM | 128GB (8x16GB) Samsung M393A2G40DB0 |
| Internal Disks | 2x Intel MLC SSD710 200GB |
| Motherboard | Supermicro X9DRD-7LN4F |
| Chipset | Intel C602J |
| BIOS version | R 3.0a (December 6, 2013) |
| PSU | Supermicro 740W PWS-741P-1R (80+ Platinum) |

All C-states are enabled in the BIOS of both servers.

Other Notes

Both servers are fed by a standard European 230V (16 A max.) power line. The room temperature is monitored and kept at 23°C by our Airwell CRACs. We use the Racktivity ES1008 Energy Switch PDU to measure power consumption. Using a PDU for accurate power measurements might seem pretty insane, but this is not your average PDU. The measurement circuits of most PDUs assume that the incoming AC is a perfect sine wave, but it never is. The Racktivity PDU, however, measures true RMS current and voltage at a very high sample rate: up to 20,000 measurements per second for the complete PDU.
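Why true RMS matters can be sketched in a few lines of Python. This is a minimal illustration, not Racktivity's firmware: the 400 samples per mains cycle, the 10 A peak current and the third-harmonic distortion are all hypothetical, and the "sine-assuming" meter is modeled as a classic average-responding circuit (rectified mean scaled by the sine form factor of about 1.11).

```python
import math

# Form factor of a pure sine: RMS / mean(|x|) = pi / (2 * sqrt(2)), about 1.1107
SINE_FORM_FACTOR = math.pi / (2 * math.sqrt(2))


def true_rms(samples):
    """True RMS: square every sample, average the squares, take the square root."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def average_responding_rms(samples):
    """Rectified average scaled by the sine form factor.

    Hypothetical model of a meter that assumes a perfect sine wave;
    it is only exact when the waveform really is a pure sine.
    """
    mean_abs = sum(abs(s) for s in samples) / len(samples)
    return mean_abs * SINE_FORM_FACTOR


def one_cycle(peak, h3=0.0, n=400):
    """Sample one mains cycle n times (assumed sample count).

    h3 is the relative amplitude of an in-phase 3rd harmonic; a negative
    value sharpens the peak, loosely imitating a capacitor-input PSU load.
    """
    return [
        peak * (math.sin(2 * math.pi * k / n) + h3 * math.sin(6 * math.pi * k / n))
        for k in range(n)
    ]


if __name__ == "__main__":
    for label, h3 in (("pure sine", 0.0), ("distorted", -0.3)):
        wave = one_cycle(peak=10.0, h3=h3)
        print(f"{label:10s} true RMS {true_rms(wave):6.3f} A, "
              f"average-responding {average_responding_rms(wave):6.3f} A")
```

With these assumed numbers, both methods agree on the pure sine, while the average-responding estimate reads roughly 14% low on the distorted wave. How far off a real PDU is depends on its actual measurement circuit and on the actual load waveform.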

85 Comments


LostAlone - Saturday, September 20, 2014

Given the difference in size between the two companies, it's not really all that surprising though. Intel are ten times AMD's size, and I have to imagine that Intel's chip R&D department budget alone is bigger than the whole of AMD. And that is sad really, because I'm sure most of us were learning our computer science when AMD were setting the world on fire, so it's tough to see our young loves go off the rails. But Intel have the money to spend, and can pursue so many more potential avenues for improvement than AMD, and that's what makes the difference.

Kevin G - Monday, September 8, 2014

I'm actually surprised they released the 18-core chip for the EP line. In the Ivy Bridge generation, it was the 15-core EX die that was harvested for the 12-core models. I was expecting the same thing here with the 14-core models, though more to do with power binning than raw yields.

I guess with the recent TSX errata, Intel is just dumping all of the existing EX dies into the EP socket. That is a good means of clearing inventory of a notably buggy chip. When Haswell-EX formally launches, it'll be of a stepping with the TSX bug resolved.

SanX - Monday, September 8, 2014

You have teased us with the claim that the added FMA instructions double floating-point performance. Wow! Is that still possible with FP operations that are already close to the limit of one per clock cycle? This was a good review of integer-related performance, but please team up with Ian to continue with the FP one.

JohanAnandtech - Monday, September 8, 2014

Ian is working on his workstation-oriented review of the latest Xeon.

Kevin G - Monday, September 8, 2014

FMA is commonplace in many RISC architectures. The reason we're only now seeing it on x86 is that, until recently, the ISA only permitted two operands per instruction.

Improvements in this area may be coming down the line, even for legacy code. Intel's micro-op fusion has the potential to take an ordinary multiply and add and fuse them into one FMA operation internally. This type of optimization is something I'd like to see in a future architecture (Skylake?).

valarauca - Monday, September 8, 2014

The Intel compiler suite, I believe, already converts `x *= y; x += z;` into an FMA operation when confronted with them.

Kevin G - Monday, September 8, 2014

That's with source that is going to be compiled. (And don't get me wrong, that's what a compiler should do!)

Micro-op fusion works on existing binaries that are years old, so no recompile is necessary. However, micro-op fusion may not work in all situations, depending on the actual instruction stream. (Hypothetically, the multiply and the add may have to be adjacent in the instruction stream for fusion to work, but an ancient compiler could have slipped other instructions in between them to hide execution latencies as an optimization, so it would never work in that binary.)

DIYEyal - Monday, September 8, 2014

Very interesting read.

And I think I found a typo: page 5 (power optimization). "It is well known that THE (not needed) Haswell HAS (is/has been) optimized for low idle power."

vLsL2VnDmWjoTByaVLxb - Monday, September 8, 2014

Colors or labeling for your HPC Power Consumption graph don't seem right.

JohanAnandtech - Monday, September 8, 2014

Fixed, thanks for pointing it out.