AMD Celebrates 30 Years of Gaming and Graphics Innovation

by Jarred Walton on August 22, 2014 5:15 PM EST

AMD sent us word that tomorrow they will be hosting a Livecast celebrating 30 years of graphics and gaming innovation. Thirty years is a long time, and certainly we have a lot of readers that weren't even around when AMD had its beginnings. Except we're not really talking about the foundation of AMD; they had their start in 1969. It appears this is more a celebration of their graphics division, formerly ATI, which was founded in… August, 1985.

AMD is apparently looking at a year-long celebration of the company formerly known as ATI, Radeon graphics, and gaming. While they're being a bit coy about the exact contents of the Livecast, we do know that there will be three game developers participating along with a live overclocking event. If we're lucky, maybe AMD even has a secret product announcement, but if so they haven't provided any details. And while we can now look forward to a year of celebrating AMD graphics and most likely a final end-of-the-year party come next August, why not start out with a brief look at where AMD/ATI started and where they are now?

Source: Wikimedia Evan-Amos

I'm old enough that I may have been an owner of one of ATI's first products, as I began my career as a technology enthusiast way back in the hoary days of the Commodore 64. While the C64 started shipping a few years earlier, Commodore was one of ATI's first customers, and they were largely responsible for an infusion of money that kept ATI going in the early days.

By 1987, ATI began moving into the world of PC graphics with their "Wonder" brand of chips and cards, starting with an 8-bit PC/XT-based board supporting monochrome or 4-color CGA. Over the next several years ATI would move to EGA (640x350 with an astounding 16 colors) and VGA (16-bit ISA and 256 colors). If you wanted a state-of-the-art video card like the ATI VGA Wonder in 1988, you were looking at $500 for the 256K model or $700 for the 512K edition. But all of this is really old stuff; where things start to become interesting is in the early 90s with the launch and growing popularity of Windows 3.0.

Source: Wikimedia Misterzeropage

The Mach 8 was ATI's first true graphics processor. It was able to offload 2D graphics functions from the CPU and render them independently, and at the time it was one of the few video cards that could do this. Sporting 512K-1MB of memory, it was still an ISA card (or it was available in MCA if you happened to own an IBM PS/2).

Two years later the Mach 32 came out, the first 32-bit capable chip with support for ISA, EISA, MCA, VLB, and PCI slots. Mach 32 shipped with either 1MB or 2MB DRAM/VRAM and added high-color (15-bit/16-bit) and later True Color (the 24-bit color that we're still mostly using today) to the mix, along with a 64-bit memory interface. And two years after came the Mach 64, which brought support for up to 8MB of DRAM, VRAM, or the new SGRAM. Later variants of the Mach 64 also started including 3D capabilities (and were rebranded as Rage, see below), and we're still not even in the "modern" era of graphics chips yet!

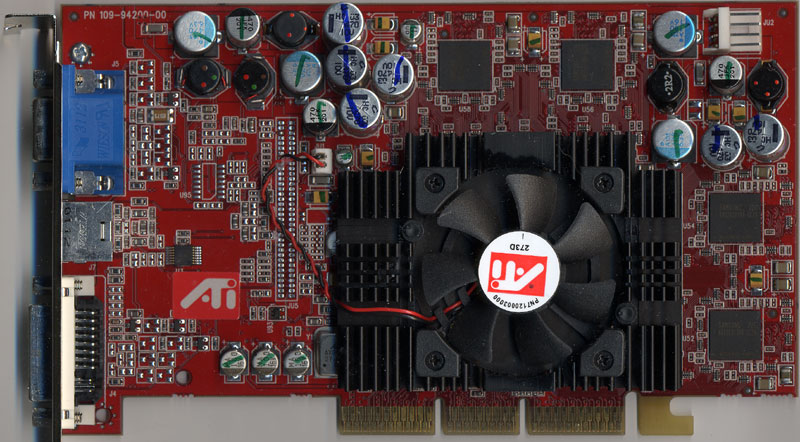

Rage Fury MAXX

Next in line was the Rage series of graphics chips, the first line built with 3D acceleration as one of the key features. We could talk about competing products from 3dfx, NVIDIA, Virge, S3, etc. here, but let's just stick with ATI. The Rage line appropriately began with the 3D Rage I in 1996, and it was mostly an enhancement of the Mach64 design with 3D support added on. The 3D Rage II was another Mach64-derived design, with up to twice the performance of the 3D Rage. The Rage II also found its way into some Macintosh systems, and while it was initially a PCI part, the Rage IIc later added AGP support.

That part was followed by the Rage Pro, which is when graphics chips first started handling geometry processing (circa 1998 with DirectX 6.0 if you're keeping track), and you could get the Pro cards with up to 16MB of memory. There were also low-cost variations of the Rage Pro in the Rage LT, LT Pro, and XL models, and the Rage XL may hold the distinction of being one of the longest-used graphics chips in history, as I know even in 2005 or thereabouts there were many servers still shipping with that chip on the motherboard providing graphics output. In 1998 ATI released the Rage 128 with AGP 2X support (the enhanced Rage 128 Pro added AGP 4X support among other things a year later), and up to 32MB RAM. The Rage 128 Ultra even supported 64MB in its top configuration, but that wasn't the crowning achievement of the Rage series. No, the biggest achievement for Rage was with the Rage Fury MAXX, ATI's first GPU to support alternate frame rendering to provide up to twice the performance.

Radeon 9700 Pro

And last but not least we finally enter the modern era of ATI/AMD video cards with the Radeon line. Things start to get pretty dense in terms of releases at this point, so we'll mostly gloss over things and just hit the highlights. The first iteration Radeon brought support for DirectX 7 features, the biggest being hardware support for transform and lighting calculations – basically a way of offloading additional geometry calculations. The second generation Radeon chips (sold under the Radeon 8000 and lower number 9000 models) added DirectX 8 support, the first appearance of programmable pixel and vertex shaders in GPUs.

Perhaps the best of the Radeon breed is the R300 line, with the Radeon 9600/9700/9800 series cards delivering DirectX 9.0 support and, more importantly, holding onto a clear performance lead over their chief competitor NVIDIA for nearly two solid years! It's a bit crazy to realize that we're now into our tenth (or eleventh, depending on how you want to count) generation of Radeon GPUs, and while the overall performance crown is often hotly debated, one thing is clear: games and graphics hardware wouldn't be where they are today without the input of AMD's graphics division!

That's a great way to finish things off, and tomorrow I suspect AMD will have much more to say on the subject of the changing landscape of computer graphics over the past 30 years. It's been a wild ride, and when I think back to the early days of computer games and then look at modern titles, it's pretty amazing. It's also interesting to note that people often complain about spending $200 or $300 on a reasonably high performance GPU, when the reality is that the top performing video cards have often cost several hundred dollars – I remember buying an early 1MB True Color card for $200 back in the day, and that was nowhere near the top of the line offering. The amount of compute performance we can now buy for under $500 is awesome, and I can only imagine what the advances of another 30 years will bring us. So, congratulations to AMD on 30 years of graphics innovation, and here's to 30 more years!

Source: AMD Livecast Announcement

32 Comments

JarredWalton - Saturday, August 23, 2014

I think they were just a subcontractor that made some of the same chips that other companies were making. I don't believe they did anything for the Amiga, so perhaps just a "compatible" VIC-II chip.

nagi603 - Friday, August 22, 2014

Well, my first foray into ATI territory was the 9700/64MB in my laptop that I used for gaming for years, even though I had to use modded drivers from the get-go to get any kind of update for it. It is still more than enough for basic office work to this day. I did start out with S3, Nvidia and noname VGAs before that, but from the 9700 onwards, I haven't looked back. Then, back on desktop, the X1600 Pro, the X1900GT, 4850, 6850 and finally the 290X now. Oh, and I modded the cooling of every one of them, except the 6850.

CuriousMike - Friday, August 22, 2014

Congrats ATI (AMD) - I was a cash-starved teen with my monochrome monitor and crappy Hercules graphics card that played exactly zero games. Then... the EGA-Wonder entered my life and the world of PC gaming in monochrome was on my desk. When I purchased my first monitor (NEC Multisync 2), the VGA-Wonder was my card of choice to drive all those 320x200, 320x240 and 640x400 images of bikini clad women in full color.

Samus - Friday, August 22, 2014

I think congratulations are in order to ATI for weathering the storm. Many competitors after all didn't make it. We literally have three relevant companies making PC graphics chips left. In the 90's, there were dozens. 3Dfx, Tseng Labs, Via, S3, SIS, Cirrus Logic, Matrox (admittedly still around) and IBM to name a few. It's amazing to think all that's left is Intel, NVidia and ATI/AMD each with ~33% market share.

Beany2013 - Sunday, August 24, 2014

Tseng Labs! I have fond memories of using an ET600 to accelerate a 3D pool game on my 486SX 25MHz, to get it up to 20fps in 800x600, rather than... substantially worse using just soft rendering. Couldn't help with Quake though - that needed a maths co-processor to do the 3D stuff. Gutting.

Sttm - Saturday, August 23, 2014

A64 3200+, A64x2 5200+, Phenom 9950... 9700 Pro, 4870, and currently 7970... Thanks for the memories. See you in 2015 (if you show up!)

siberus - Saturday, August 23, 2014

I remember my first ATI video card. It was a Powercolor Radeon 9800 SE that I had to get special ordered from a friend's uncle's computer shop. It was the first tweak-able GPU I'd ever bought. I remember soft modding it into a full fledged 9800 Pro and then overclocking it into a 9800 XT. Probably the only time in gaming when I was on the bleeding edge. Good times :)

coburn_c - Saturday, August 23, 2014

30 years of innovation my ass. They started by reverse engineering Intel chips, they didn't get into the graphics business until some idiot bet the farm on ATI, and they haven't done a damn thing in the last three.

JarredWalton - Saturday, August 23, 2014

So when AMD started in 1969, they were reverse engineering which Intel chips exactly? And as far as an "idiot betting the farm on ATI", I think most people would say that's been one of the best moves AMD has made.

coburn_c - Saturday, August 23, 2014

The 8080, it was their claim to fame. Most people are stupid.