Samsung SSD 850 Pro (128GB, 256GB & 1TB) Review: Enter the 3D Era

by Kristian Vättö on July 1, 2014 10:00 AM EST

AnandTech Storage Bench 2013

Our Storage Bench 2013 focuses on worst-case multitasking and IO consistency. Like our earlier Storage Benches, the test is still application-trace based: we record all IO requests made to a test system, play them back on the drive under test, and run statistical analysis on the drive's responses. There are 49.8 million IO operations in total, with 1583.0GB of reads and 875.6GB of writes. For readability I'm not including the full description of the test here, so make sure to read our Storage Bench 2013 introduction for the full details.
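To put those totals in perspective, here is a rough back-of-the-envelope calculation in Python. It uses only the three figures quoted above and assumes decimal gigabytes (1GB = 10^9 bytes), which the text does not state; the function name is purely illustrative.

```python
GB = 10**9  # assumption: decimal gigabytes, not stated in the article

def destroyer_totals(total_ios, read_gb, write_gb):
    """Derive simple aggregates from the trace totals quoted in the text."""
    total_bytes = (read_gb + write_gb) * GB
    avg_io_kb = total_bytes / total_ios / 1000   # average bytes per IO, in KB
    read_share = read_gb / (read_gb + write_gb)  # fraction of bytes that are reads
    return total_bytes / GB, avg_io_kb, read_share

total_gb, avg_io_kb, read_share = destroyer_totals(49.8e6, 1583.0, 875.6)
print(f"total transferred: {total_gb:.1f} GB")
print(f"average IO size:   {avg_io_kb:.1f} KB")
print(f"read share:        {read_share:.1%}")
```

Under that assumption the trace moves roughly 2.46TB in total, the average IO is on the order of 49KB, and a bit under two thirds of the bytes are reads.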

AnandTech Storage Bench 2013 - The Destroyer

| Workload | Description | Applications Used |
|---|---|---|
| Photo Sync/Editing | Import images, edit, export | Adobe Photoshop CS6, Adobe Lightroom 4, Dropbox |
| Gaming | Download/install games, play games | Steam, Deus Ex, Skyrim, Starcraft 2, BioShock Infinite |
| Virtualization | Run/manage VM, use general apps inside VM | VirtualBox |
| General Productivity | Browse the web, manage local email, copy files, encrypt/decrypt files, backup system, download content, virus/malware scan | Chrome, IE10, Outlook, Windows 8, AxCrypt, uTorrent, AdAware |
| Video Playback | Copy and watch movies | Windows 8 |
| Application Development | Compile projects, check out code, download code samples | Visual Studio 2012 |

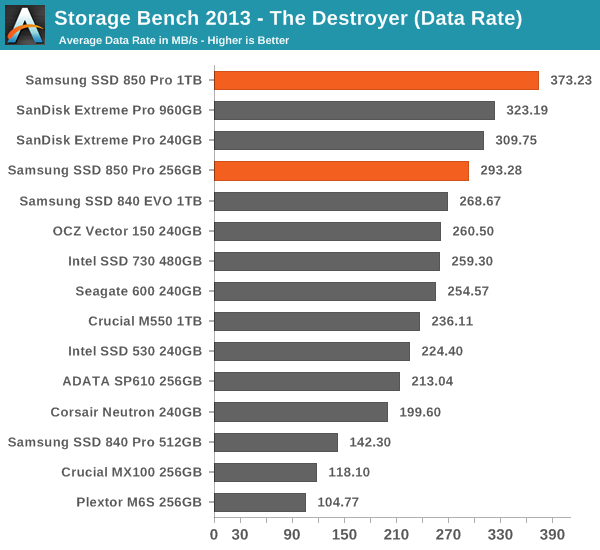

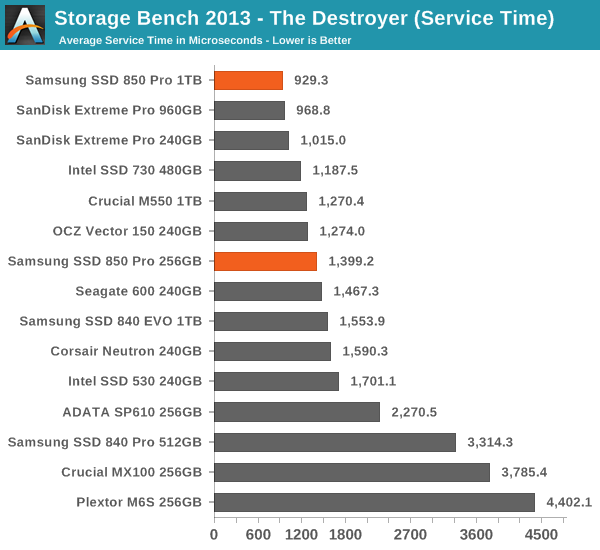

We are reporting two primary metrics with the Destroyer: average data rate in MB/s and average service time in microseconds. The former gives you an idea of the throughput of the drive during the time that it was running the test workload. This can be a very good indication of overall performance. What average data rate doesn't capture well is the response time of very bursty (read: high queue depth) IO. By reporting average service time we heavily weight latency for queued IOs. You'll note that this is a metric we have been reporting in our enterprise benchmarks for a while now. With the client tests maturing, the time was right for a little convergence.
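The difference between the two metrics can be illustrated with a toy model in Python. This is only a sketch, not the actual Storage Bench tooling: it assumes a hypothetical drive that handles one IO at a time, a fixed 100 microseconds per IO, and 4KB requests, and it defines service time as completion time minus arrival time. All names and numbers are made up for illustration.

```python
def service_times_us(arrivals_us, per_io_us):
    """Service time (completion minus arrival) for each IO on a toy drive
    that handles one request at a time, each taking per_io_us."""
    times = []
    free_at = 0.0  # moment the toy drive finishes its current IO
    for arrival in arrivals_us:
        start = max(arrival, free_at)  # wait if the drive is still busy
        free_at = start + per_io_us
        times.append(free_at - arrival)
    return times

def average_service_time_us(times_us):
    return sum(times_us) / len(times_us)

def average_data_rate_mbps(total_bytes, busy_time_us):
    # bytes per microsecond equals MB/s when 1 MB = 10**6 bytes
    return total_bytes / busy_time_us

IO_SIZE = 4096      # bytes per IO (hypothetical)
PER_IO_US = 100.0   # time the toy drive needs per IO (hypothetical)

spaced = service_times_us([0, 100, 200, 300], PER_IO_US)  # one IO at a time
burst = service_times_us([0, 0, 0, 0], PER_IO_US)         # four IOs queued at once

for name, times in (("spaced", spaced), ("burst", burst)):
    rate = average_data_rate_mbps(IO_SIZE * len(times), PER_IO_US * len(times))
    avg = average_service_time_us(times)
    print(f"{name}: {rate:.2f} MB/s, {avg:.0f} us average service time")
```

In this model both patterns keep the drive busy for the same 400 microseconds, so the average data rate is identical, but the queued burst shows a 2.5x higher average service time because later IOs wait behind earlier ones. That is the kind of behavior the second metric is meant to expose.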

Thanks to its excellent IO consistency, the 850 Pro dominates our 2013 Storage Bench. At the 1TB capacity point, the 850 Pro is over 15% faster than any other drive when looking at the average data rate. That is huge because the 850 Pro has less over-provisioning than most of today's high-end drives, and the 2013 Storage Bench tends to reward drives that have more over-provisioning because it essentially pushes drives to steady-state. The 256GB model does not do as well as the 1TB one, but it is still one of the fastest drives in its class. I wonder whether the smaller amount of over-provisioning is the reason, or whether the Extreme Pro is simply that well optimized for mixed workloads.

160 Comments

Squuiid - Saturday, March 14, 2015 - link

Plus, the MX100 reliability is horrible. Just google MX100 BSOD, disappearing drive.

I have 2x MX100 512GB SSDs and I recommend you don't buy one, no matter how cheap they are.

nightauthor - Tuesday, July 1, 2014 - link

For business purposes, I would rather pay twice as much and get a 10 year warranty vs the 3 year supplied by Crucial. Though, for my daily, I would probably go with the Crucial.

TheWrongChristian - Wednesday, July 2, 2014 - link

No current SATA drives push low queue depth random IOs to the point of saturating SATA II, let alone SATA III.

At high queue depths, perhaps. But then, that is not a typical workload for most users, desktop or server.

Plus, it's a new drive, prices will come down.

jwcalla - Monday, June 30, 2014 - link

Unless they're doing 5% OP the capacities are kinda... off.

melgross - Monday, June 30, 2014 - link

I think there's a slight misunderstanding of manufacturing cost. While the die size may be the same, or even smaller than a competing technology, the 32 level chip does cost more to make per area. There are more masks, more layers, more etching and washing cycles, and more chance of defects.

Right now, I do see why the cost is higher. I can only assume that as this technology progresses, that cost will drop per area. But it will always remain higher than an SLC, MLC or TLC chip.

So there is a balance here.

Kristian Vättö - Tuesday, July 1, 2014 - link

You are correct. I did mention yield and equipment cost in the final paragraph but I figured I won't go into detail about masks and etching since those would have required an in-depth explanation of how NAND is manufactured :)

R0H1T - Tuesday, July 1, 2014 - link

It would be great if Anand or you do a writeup on 3d NAND & deal with the specific pros & cons of it as compared to traditional 2d NAND & if possible include something related to manufacturing processes of these & how they're different OR more/less expensive, certainly as in case of V-NAND?

MrSpadge - Tuesday, July 1, 2014 - link

You wouldn't need too much detail - just saying that the number of process steps increases by probably around an order of magnitude should make this pretty clear.

frenchy_2001 - Tuesday, July 1, 2014 - link

It is probably more than that, as Samsung is currently manufacturing 32 layers of cells. Each layer requires multiple operations (deposit, etching, washing...). Their biggest advantage comes from regressing to 40nm: at that technology, each operation is *MUCH* cheaper than the equivalent one at 1X pitch (15~19nm).

So, total cost is an unknown, but should be very competitive, after recovering the initial R&D investment.

Spatty - Tuesday, July 1, 2014 - link

And not to mention 3D NAND is still basically bleeding edge. It's still in the stages of where a new DDR generation arrives, much higher costs than current gen.