Samsung SSD 850 Pro (128GB, 256GB & 1TB) Review: Enter the 3D Era

by Kristian Vättö on July 1, 2014 10:00 AM EST

AnandTech Storage Bench 2013

Our Storage Bench 2013 focuses on worst-case multitasking and IO consistency. Like our earlier Storage Benches, the test is still application-trace based: we record all IO requests made to a test system, play them back on the drive we are testing, and run statistical analysis on the drive's responses. There are 49.8 million IO operations in total, with 1583.0GB of reads and 875.6GB of writes. For readability I'm not including the full description of the test here, so make sure to read our Storage Bench 2013 introduction for the full details.

AnandTech Storage Bench 2013 - The Destroyer

| Workload | Description | Applications Used |
| --- | --- | --- |
| Photo Sync/Editing | Import images, edit, export | Adobe Photoshop CS6, Adobe Lightroom 4, Dropbox |
| Gaming | Download/install games, play games | Steam, Deus Ex, Skyrim, Starcraft 2, BioShock Infinite |
| Virtualization | Run/manage VM, use general apps inside VM | VirtualBox |
| General Productivity | Browse the web, manage local email, copy files, encrypt/decrypt files, backup system, download content, virus/malware scan | Chrome, IE10, Outlook, Windows 8, AxCrypt, uTorrent, AdAware |
| Video Playback | Copy and watch movies | Windows 8 |
| Application Development | Compile projects, check out code, download code samples | Visual Studio 2012 |

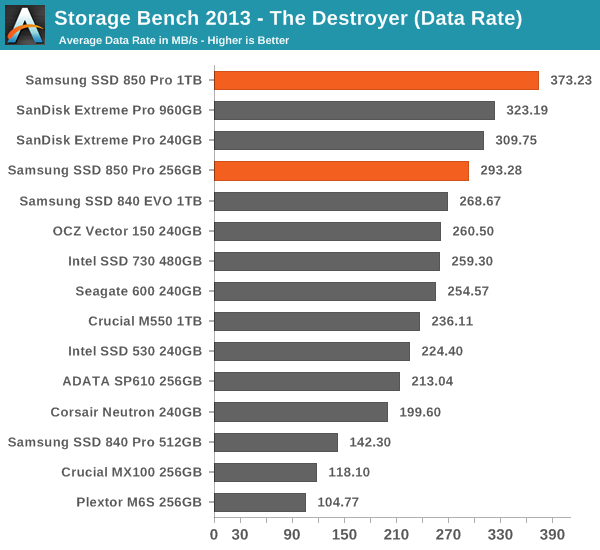

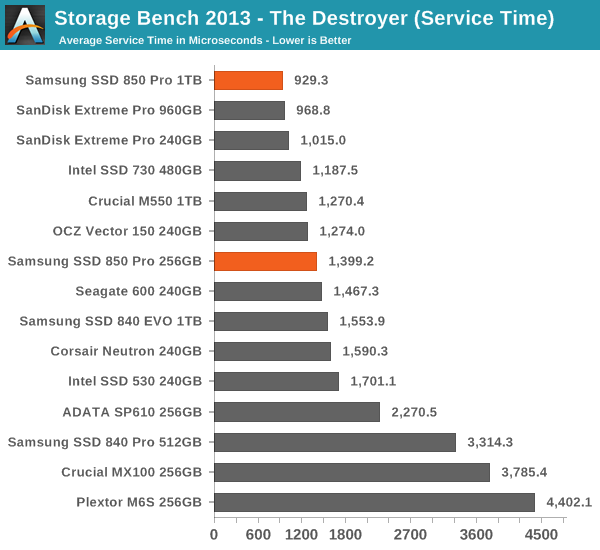

We are reporting two primary metrics with the Destroyer: average data rate in MB/s and average service time in microseconds. The former gives you an idea of the throughput of the drive during the time that it was running the test workload, which can be a very good indication of overall performance. What average data rate does not capture well is the response time of very bursty (read: high queue depth) IO. By reporting average service time we heavily weigh latency for queued IOs. You'll note that this is a metric we have been reporting in our enterprise benchmarks for a while now. With the client tests maturing, the time was right for a little convergence.
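To make the difference between the two metrics concrete, here is a minimal Python sketch. It is not the actual Storage Bench tooling, and the exact formulas used by the benchmark are not given here; the `IO` record, the `serial` and `burst` helpers, and the toy drive that serves one 4KB request at a time in 100 microseconds are all assumptions made purely for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class IO:
    size_bytes: int          # bytes transferred by this request
    service_time_us: float   # issue-to-completion time, including time spent queued


def average_data_rate_mbps(ios: List[IO], busy_time_us: float) -> float:
    """Bytes moved divided by the time the drive spent working, in MB/s."""
    total_bytes = sum(io.size_bytes for io in ios)
    return total_bytes / busy_time_us  # bytes per microsecond equals 10^6 bytes/s


def average_service_time_us(ios: List[IO]) -> float:
    """Mean issue-to-completion time across all requests."""
    return sum(io.service_time_us for io in ios) / len(ios)


def serial(n: int, size: int, device_us: float) -> List[IO]:
    # Hypothetical pattern: requests are issued one at a time, so none waits in a queue.
    return [IO(size, device_us) for _ in range(n)]


def burst(n: int, size: int, device_us: float) -> List[IO]:
    # Hypothetical pattern: all n requests arrive at once and the toy drive
    # serves them one by one, so the k-th request waits behind k - 1 others.
    return [IO(size, device_us * k) for k in range(1, n + 1)]


serial_ios = serial(4, 4096, 100.0)
burst_ios = burst(4, 4096, 100.0)
busy_time_us = 400.0  # the toy drive is busy for 4 x 100 us in both cases

for name, ios in (("serial", serial_ios), ("burst", burst_ios)):
    rate = average_data_rate_mbps(ios, busy_time_us)
    svc = average_service_time_us(ios)
    print(f"{name}: {rate:.2f} MB/s, {svc:.1f} us average service time")
```

In this toy case both patterns move the same data in the same busy time, so the average data rate is identical (40.96 MB/s), while the average service time rises from 100 to 250 microseconds once the requests queue up. That gap is what the second metric is meant to expose.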

Thanks to its excellent IO consistency, the 850 Pro dominates our 2013 Storage Bench. At the 1TB capacity point, the 850 Pro is over 15% faster than any other drive when looking at the average data rate. That is huge because the 850 Pro has less over-provisioning than most of today's high-end drives, and the 2013 Storage Bench tends to reward drives that have more over-provisioning because it essentially pushes drives to steady-state. The 256GB model does not do as well as the 1TB one, but it is still one of the fastest drives in its class. I wonder if the smaller amount of over-provisioning is the reason, or perhaps the Extreme Pro is just so well optimized for mixed workloads.

160 Comments


Krakadoom - Saturday, September 27, 2014

I bought the 850 Pro 500 GB version. The transfer speeds are around (and just under) 400 MB/s and the IOPS are always around 55-60k. Not impressed - considering returning it just due to the huge gap between the rated specs and actual performance. (Of course Samsung Magician over-reports the transfer speed by quite a large margin).

stealth_lee - Wednesday, October 1, 2014

Someone just tipped me that the V-NAND in the Samsung 850 Pro is actually TLC, not MLC.

The first reason is that the 86Gbit/die number is odd: if the 850 Pro uses a 128Gbit TLC die and emulates MLC, it would be 86Gbit/die, so the numbers fit well.

The second reason is Chipworks confirmed it in the die shots:

http://bit.ly/YTVm9Z

http://bit.ly/1uByKcm

I'm just the messenger here, I'm not an expert.

So... I was wondering: is it possible to hack the Samsung 850 Pro to get extra storage space in TLC?

wcatlan - Saturday, October 25, 2014

Why isn't the lack of power loss protection a showstopper for any of these drives? I love the speed and reliability benefits under normal operation, but how can anyone get excited about a drive that can get corrupted in an instant due to power loss or a computer freeze, where a hard shutdown is required? It seems that these drives are much more prone to massive data issues than HDDs under the same power fault conditions. I keep looking for a good answer, but it seems smart people are willing to look past this seemingly fatal Achilles heel. Not sure what I might be missing. Any thoughts?

futurefilm - Monday, December 1, 2014

Today, Cyber Monday deals on Amazon, the 850 Pro 256 is going for $150. The 128 for $100. Get it now while it's hot.

saagar - Thursday, January 22, 2015

Dear Kristian Vättö,

Fantastic review of the drives and the technology behind them. This is what readers like me expect to see on AnandTech. Thanks for breaking it down. Keep up the good work!

gsuburban - Wednesday, April 8, 2015

As of April 8, 2015, the 850 Pro 256GB SSD can be had for about $144 if you look hard enough.

rockfella79 - Saturday, June 27, 2015

I love my 850 Pro 128 GB SSD :)

KDT - Thursday, March 24, 2016

Please update the endurance to 300TBW for the 1TB model. This was my basis for buying this SSD. It is second only to the Crucial MX200 (320TBW on the 1TB model) in terms of endurance for client/consumer SSDs.

BimmerInd - Sunday, June 26, 2016

Samsung is using 40nm versus Micron's 16nm. Doing the math, for every 2.5 16nm Micron nodes in a planar section, Samsung only does one 40nm node. If we scale vertically to 32 layers, then Micron (or others, for that matter) still does only 2.5 nodes for every 32 nodes of Samsung's, which means that for every 16nm node, Samsung provides 12.8 nodes. So the density scaling factor for every 32-layer increment is a multiple of 12.8. Assuming the current die size for 32 layers to be 128Gbit, the density advantage for 256Gbit is 12.8x2 times, for 512Gbit it is 12.8x4 times, and for 1Tbit in 2017 it should be 12.8x8 times, with 256 layers of nodes stacked on top of each other. So the density advantage is approximately 102.4 times (theoretically). Samsung can theoretically produce a 1Tbit die at a cost/space advantage of nearly 100 times compared to planar manufacturers. It is almost like being able to earn 100 times the profit for the same die, provided the cost per bit scales along and the price advantage is not passed on to end users until other players enter the 3D market.

The same is the case with Intel's 3D Cross Point technology. They have a new tech in their hands that is faster than NAND and closer to DRAM, so they are also planning to price it exactly between NAND and DRAM. We are already paying high costs to shift from platters to NAND and are going to pay even more to shift from NAND to 3D XPoint. I just wish I could jump a few years into the future, grab a high-capacity NAND/XPoint drive for cheap, and come back to the present and use it. Sigh!

BimmerInd - Sunday, June 26, 2016

By the way this is just a rough calculation and is not to be taken literally.