Samsung SSD 850 Pro (128GB, 256GB & 1TB) Review: Enter the 3D Era

by Kristian Vättö on July 1, 2014 10:00 AM EST

RAPID 2.0: Support For More RAM & Updated Caching Algorithm

When the 840 EVO launched a year ago, Samsung introduced a new feature called RAPID (Real-time Accelerated Processing of I/O Data). The idea behind RAPID is very simple: it uses the excess DRAM in your system to cache IOs, thus accelerating storage performance. Modern computers tend to have quite a bit of DRAM that is not always used by the system, so RAPID turns a portion of that into a DRAM cache.

With the 850 Pro, Samsung is introducing Magician 4.4 along with an updated version of RAPID. The 1.0 version of RAPID supported up to 1GB of DRAM (or up to 25% if you had less than 4GB of RAM) but the 2.0 version increases the RAM allocation to up to 4GB if you have 16GB of RAM or more. There is still the same 25% limit, meaning that RAPID will not use 4GB of your RAM if you only have 8GB installed in your system.
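The allocation rule above can be written out as a small calculation. This is only a sketch of the rule as described in this article, assuming the 25% limit applies at every RAM size and that the per-version ceiling is the only other constraint; the function name is hypothetical and this is not Samsung's code.

```python
def rapid_cache_limit_gb(total_ram_gb: float, version: int = 2) -> float:
    """Upper bound on RAPID's DRAM cache under the rule described in the text."""
    # Absolute ceiling per RAPID version, as stated in the article:
    # 1GB for RAPID 1.0, 4GB for RAPID 2.0.
    ceiling_gb = {1: 1.0, 2: 4.0}[version]
    # Assumption: the 25% limit is applied regardless of how much RAM is installed.
    return min(0.25 * total_ram_gb, ceiling_gb)


for ram_gb in (2, 8, 16, 32):
    v1 = rapid_cache_limit_gb(ram_gb, version=1)
    v2 = rapid_cache_limit_gb(ram_gb, version=2)
    print(f"{ram_gb}GB RAM -> RAPID 1.0: {v1}GB, RAPID 2.0: {v2}GB")
```

Under this reading, 16GB is exactly the point where the 25% limit and the 4GB ceiling coincide, which matches the "16GB of RAM or more" condition.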

I highly recommend that you read the RAPID page of our 840 EVO review because Anand explained the architecture and behavior of RAPID in detail, so I will keep the fundamentals short and focus on what has changed.

In addition to increasing the RAM allocation, Samsung has also improved the caching algorithms. Unfortunately, I was not able to get any details before the launch but I am guessing that the new version includes better optimization for file types and IO sizes that get the biggest benefit from caching. Remember, while RAPID works at the block level, the software also looks at the file types to determine what files and IO blocks should be prioritized. The increased RAM allocation also needs an optimized set of caching algorithms because with a 4GB cache RAPID is able to cache more data at a time, which means it can relax the filetype and block size restrictions (i.e. it can also cache larger files/IOs).
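To make the trade-off between cache size and IO size restrictions concrete, here is a toy block cache. It is purely illustrative: Samsung has not disclosed RAPID's algorithms, so the LRU eviction policy, the size-based admission filter, and every name below (`ToyBlockCache`, `max_io_bytes`, `backend`) are assumptions, and the file type hints mentioned above are not modeled at all.

```python
from collections import OrderedDict
from typing import Callable


class ToyBlockCache:
    """LRU read cache with a size-based admission filter (illustration only)."""

    def __init__(self, capacity_bytes: int, max_io_bytes: int) -> None:
        self.capacity_bytes = capacity_bytes  # total DRAM set aside for the cache
        self.max_io_bytes = max_io_bytes      # IOs larger than this bypass the cache
        self.used_bytes = 0
        self._blocks: "OrderedDict[int, bytes]" = OrderedDict()

    def read(self, lba: int, size: int, backend: Callable[[int, int], bytes]) -> bytes:
        cached = self._blocks.get(lba)
        if cached is not None and len(cached) == size:
            self._blocks.move_to_end(lba)     # mark as most recently used
            return cached
        data = backend(lba, size)             # cache miss: go to the drive
        if size <= self.max_io_bytes:
            self._insert(lba, data)
        return data

    def _insert(self, lba: int, data: bytes) -> None:
        if lba in self._blocks:
            self.used_bytes -= len(self._blocks.pop(lba))
        self._blocks[lba] = data
        self.used_bytes += len(data)
        # Evict least recently used entries until the cache fits its budget.
        while self.used_bytes > self.capacity_bytes:
            _, evicted = self._blocks.popitem(last=False)
            self.used_bytes -= len(evicted)


# A small cache might only admit small IOs; a 4GB cache could afford a looser filter.
small = ToyBlockCache(capacity_bytes=1 * 2**30, max_io_bytes=64 * 1024)
large = ToyBlockCache(capacity_bytes=4 * 2**30, max_io_bytes=1024 * 1024)
```

With the looser filter, a repeated 128KB read would be served from memory by `large` but would go to the drive every time with `small`.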

To test how the new version of RAPID performs, I put it through our Storage Benches as well as PCMark 8’s storage test. Our testbed is equipped with 32GB of RAM, so we should be able to get the full benefit of RAPID 2.0.

Samsung SSD 850 Pro 256GB

| | ATSB - Heavy 2011 Workload (Avg Data Rate) | ATSB - Heavy 2011 Workload (Avg Service Time) | ATSB - Light 2011 Workload (Avg Data Rate) | ATSB - Light 2011 Workload (Avg Service Time) |
|---|---|---|---|---|
| RAPID Disabled | 310.8MB/s | 676.7ms | 366.6MB/s | 302.5ms |
| RAPID Enabled | 549.1MB/s | 143.4ms | 664.4MB/s | 134.6ms |

The performance increase in our Storage Benches is pretty outstanding. In both the Heavy and Light suites the increase in throughput is around 80%, making the 850 Pro even faster than the Samsung XP941 PCIe SSD.
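For reference, the relative changes can be recomputed directly from the table values. The snippet below is just that arithmetic; the helper name `percent_change` is mine, and the units are copied from the table as printed.

```python
def percent_change(before: float, after: float) -> float:
    """Relative change from `before` to `after`, in percent."""
    return (after - before) / before * 100.0


# (RAPID disabled, RAPID enabled) pairs copied from the 850 Pro 256GB table.
atsb_results = {
    "Heavy 2011 avg data rate (MB/s)": (310.8, 549.1),
    "Heavy 2011 avg service time (ms)": (676.7, 143.4),
    "Light 2011 avg data rate (MB/s)": (366.6, 664.4),
    "Light 2011 avg service time (ms)": (302.5, 134.6),
}

for name, (disabled, enabled) in atsb_results.items():
    print(f"{name}: {percent_change(disabled, enabled):+.1f}%")
```

The data rate rows come out at roughly +77% and +81%, which is where the "around 80%" figure comes from.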

Samsung SSD 850 Pro 1TB

| | PCMark 8 - Storage Score | PCMark 8 - Storage Bandwidth |
|---|---|---|
| RAPID Disabled | 4998 | 298.6MB/s |
| RAPID Enabled | 5046 | 472.8MB/s |

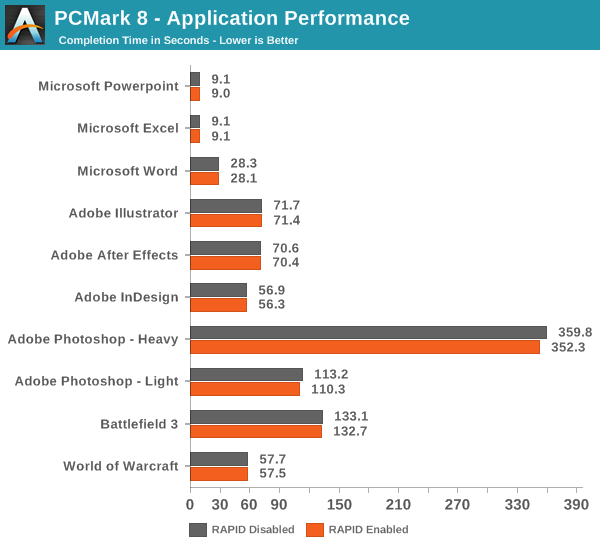

PCMark 8, on the other hand, tells a different story. As you can see, the bandwidth is again much higher, by about 60%, but the storage score is a mere 1% higher.

PCMark 8 also records the completion time of each task in the storage suite, which helps explain why the storage scores are about equal. The fundamental issue is that today’s applications are still designed with hard drives in mind, meaning that they cannot utilize the full potential of SSDs. Even though the throughput is much higher with RAPID, the application performance is not, because the software has been designed to wait several milliseconds for each IO to complete and does not know what to do when the response time is suddenly on the order of a millisecond or two. That is also why most applications load the necessary data into RAM at launch and only access storage when they must: back in the hard drive days, you wanted to avoid touching the drive as much as possible.
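One way to reason about this gap is Amdahl's law: if only a small fraction of a task's run time is spent waiting on storage, speeding up storage barely moves the total. The sketch below uses the measured bandwidth ratio from the table, but the storage-bound fractions are hypothetical values chosen for illustration, and PCMark's score is not necessarily proportional to completion time.

```python
def overall_speedup(storage_fraction: float, storage_speedup: float) -> float:
    """Amdahl's law: total speedup when only the storage-bound part gets faster."""
    return 1.0 / ((1.0 - storage_fraction) + storage_fraction / storage_speedup)


# Bandwidth ratio from the PCMark 8 table: RAPID enabled vs. disabled.
bandwidth_ratio = 472.8 / 298.6

# Hypothetical fractions of task time spent waiting on storage.
for fraction in (0.02, 0.05, 0.25, 1.0):
    gain = (overall_speedup(fraction, bandwidth_ratio) - 1.0) * 100.0
    print(f"storage-bound fraction {fraction:.0%}: total time improves by {gain:.1f}%")
```

With a storage-bound fraction of only a few percent, the overall gain stays in the low single digits even though the storage itself is far faster.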

It will be interesting to see what the industry does with the software stack over the next few years. In the enterprise, we have seen several OEMs release their own APIs (like SanDisk’s ZetaScale) so companies can optimise their server software infrastructure for SSDs and take full advantage of NAND. I do not believe that a similar approach works for the client market, as ultimately everything is in the hands of Microsoft.

I also tried running the 2013 suite, a.k.a. The Destroyer, but for some reason RAPID did not like that and the system BSODed midway through the test. I suspect this is because our Storage Benches are run without a partition, whereas RAPID also works at the file system level in the sense that it takes hints about which files should be cached. It may be as simple as this: under a high queue depth workload (like the ATSB2013), RAPID does not know which IOs to cache because there is no filesystem to guide it. I hit the same BSOD immediately when I fired up our IO consistency test (also run without a partition), but when I tested with a similar 4KB random write workload using the new Iometer (which supports filesystem testing), there was absolutely no issue. This further suggests that the issue lies in our tests rather than in the RAPID software itself, as end-users will always run the drive with a partition anyway.

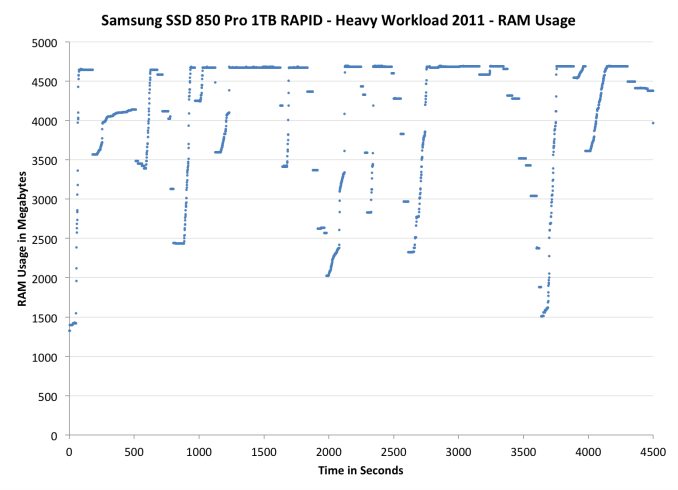

As Anand mentioned in the 840 EVO review, it is possible to monitor RAPID’s RAM usage by looking at the non-paged RAM pool. Instead of just looking at the resource monitor, I decided to take the monitoring one step further by recording the RAM usage over time with Windows’ Performance Monitor while running the 2011 Heavy workload. RAPID seems to behave fairly aggressively when it comes to RAM caching, as the RAM usage increases to ~4.7GB almost immediately after firing up the test and stays there for almost the entire run. There are some drops, although I am not sure what is causing them. The idle times are limited to a maximum of 25 seconds when running the trace, so some drops could be caused by that. I need to run some additional tests and monitor the IOs to see whether it is just the idle times or whether RAPID is excluding certain types of IOs.
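For anyone who wants to repeat this kind of logging without the Performance Monitor GUI, a rough sketch follows. It assumes the samples are collected with Windows' `typeperf` tool from the `\Memory\Pool Nonpaged Bytes` counter into a CSV file, and that the file has a timestamp in the first column and the value in the second; the function names and the output path are hypothetical, and this is not how the graph above was produced.

```python
import csv
import io
from typing import List, Tuple

COUNTER = r"\Memory\Pool Nonpaged Bytes"


def typeperf_command(samples: int, out_path: str) -> List[str]:
    """Build a typeperf invocation: one sample per second, written as CSV."""
    return ["typeperf", COUNTER, "-si", "1", "-sc", str(samples),
            "-f", "CSV", "-o", out_path]


def parse_samples(csv_text: str) -> List[Tuple[str, float]]:
    """Return (timestamp, bytes) pairs, skipping the header and non-numeric rows."""
    rows = csv.reader(io.StringIO(csv_text))
    next(rows, None)  # first row holds the counter names
    samples = []
    for row in rows:
        if len(row) < 2:
            continue
        try:
            samples.append((row[0], float(row[1])))
        except ValueError:
            continue  # value missing or not a number for this sample
    return samples


def peak_gib(samples: List[Tuple[str, float]]) -> float:
    """Highest recorded non-paged pool size in GiB (raises ValueError if empty)."""
    return max(value for _, value in samples) / 2**30


# On Windows, one could run the command first, e.g. with subprocess.run(...),
# and then feed the resulting file's contents to parse_samples().
print(" ".join(typeperf_command(samples=600, out_path="nonpaged.csv")))
```

Comparing the peak against a baseline taken with RAPID disabled would give a rough estimate of how much of the pool RAPID itself accounts for.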

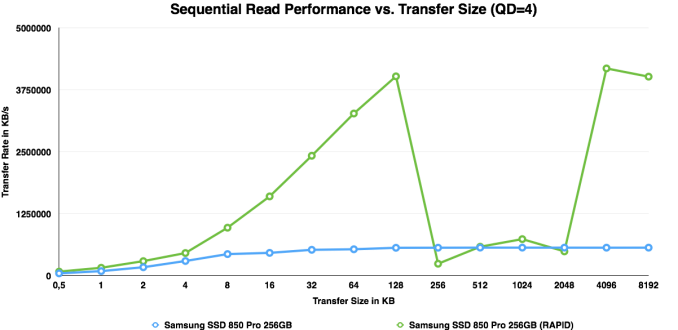

I also ran ATTO to see how the updated RAPID responds to different transfer sizes. It looks like read performance scales quite linearly until hitting the IO size of 256KB. Past that point the reported numbers are not reliable: ATTO stores its performance values in 32-bit integers, and with RAPID enabled the performance exceeds the size of the result variable, thus wrapping around back to 0.
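The wraparound is easy to reproduce. The sketch below assumes an unsigned 32-bit result variable holding a rate in bytes per second; ATTO's actual internal types and units are not documented here, so both are assumptions, and the 5GB/s input is a made-up value.

```python
UINT32_MODULUS = 2**32  # an unsigned 32-bit integer holds values 0 .. 2**32 - 1


def as_uint32(value: int) -> int:
    """Value that remains after storing `value` in an unsigned 32-bit integer."""
    return value % UINT32_MODULUS


# Hypothetical throughput of 5GB/s, expressed in bytes per second.
true_rate = 5_000_000_000
stored_rate = as_uint32(true_rate)

print(f"true rate:   {true_rate / 1e6:.0f} MB/s")
print(f"stored rate: {stored_rate / 1e6:.0f} MB/s")
```

Under these assumptions, any rate at or above 2^32 bytes per second (about 4.29GB/s) would show up as a much smaller number in the results.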

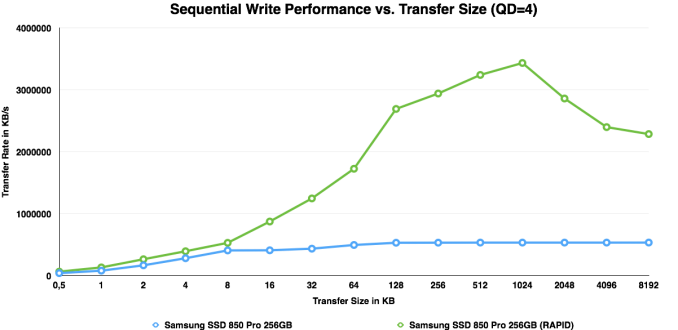

With writes, RAPID continues to cache fluently until hitting 1MB, which is when it starts to cache less aggressively.

160 Comments


YazX_ - Monday, July 7, 2014 - link

Prices are not going down, good thing we have Crucial who have best bang for the buck, ofcourse performance wise is not compared to sandisk or samsung, but its still a very fast SSD, for normal users and gamers, Mx100 is the best drive you can get for its price.

soldier4343 - Thursday, July 17, 2014 - link

My next upgrade the Pro 850 512gb version over my OCZ 4 256gb.

bj_murphy - Friday, July 18, 2014 - link

Thanks Kristian for such an amazing, in depth review. I especially loved the detailed explanation of current 2D NAND vs 3D NAND, how it all works, and why it's all so important. Possibly one of my favourite Anandtech articles to date!

DPOverLord - Wednesday, July 23, 2014 - link

Looking at this it does not seem to be a HUGE difference than raid 0 of 2 Samsung Pro 840 512GB (1tb in raid 0). To upgrade at this point does not make the most sense.

Nickolai - Wednesday, July 23, 2014 - link

How are you implementing over-provisioning?

joochung - Tuesday, July 29, 2014 - link

I don't see this mentioned anywhere, but were the tests performed with RAPID enabled or disabled? I understand that some of the tests could not run with RAPID enabled, but for those other tests which do run on a formatted partition (i.e. not run on the raw disk), its not clear if RAPID is enabled or disabled. Therefore its not clear how RAPID will affect the results in each test.

Rekonn - Wednesday, July 30, 2014 - link

Anyone know if you can use the 850 Pro ssds on a Dell PERC H700 raid controller? Per documentation, controller only supports 3 Gb/s SATA.

janos666 - Thursday, August 14, 2014 - link

I always wondered if there is any practical and notable difference between dynamic and static over-provisioning. I mean... since TRIM should blank out the empty LBAs anyway, I don't see the point in leaving unpartitioned space for static over-provisioning for home users. From a general user standpoint, having as much usable space available as possible (even if we try to restrict ourself from ever utilizing it all) seems to be a lot more practical (until it's actually usable with an acceptable speed, so even if notably slower but still fast enough...) than keeping a (significantly more, but still not perfectly) constant random write performance.

So, I always create a system partition as big as possibly (I do the partitioning manually: a minimal size EFI boot partition + everything else at one piece) without leaving unpartitioned space for over-provisioning and I try to leave as much space empty as possible.

However, one time, after I filled my 840 Pro up to ~95% and I kept it like that for 1-2 days, it never "recovered". Even after I manually ran "defrag c: /O" to make sure the freed up space is TRIMed, sequential write speeds were really slow and random write speeds were awful. I had to create a backup image with DD, fill the drive with zeros a few times and finally run an ATA Secure Erase before restoring the backup image.

Even though I was never gentle with the drive (I don't do stupid things like disabling swapping and caching just to reduce it's wear, I bought it to use it...) and I did something which is not recommended (filled almost all the user-accessible space with data and kept using it like that for a few days as a system disk), this wasn't something I expected from this SSD. (Even though this is what I usually get from Samsung. It always looks really nice but later on something turns out which reduces it's value/price from good or best to average or worse.) This was supposed to be a "Pro" version.

stevesy - Friday, September 12, 2014 - link

I don't normally go out of my way to comment on a product but I felt this product deserved the effort. I've been using personal computer since personal computers first came out. I fully expected my upgrade from an old 50gig SSD to be a nightmare. I installed the new 500gig Evo 850 as a secondary, cloned, switch it to primary and had it booting in about 15 minutes. No problems, no issues, super fast, WOW. Glad Samsung got it figured out. I'll be a lot less concerned my next upgrade and won't be waiting until I'm at my last few megabytes before upgrading again.

basil.bourque - Friday, September 26, 2014 - link

I must disagree with the conclusion, "there is not a single thing missing in the 850 Pro". Power-loss protection is a *huge* omission, especially for a "Pro" product.