Manual Camera Controls in iOS 8

by Joshua Ho on June 18, 2014 11:54 AM EST - Posted in

- Smartphones

- Apple

- Mobile

- Tablets

- iOS 8

For the longest time, iOS had almost no camera controls at all. There was a toggle for HDR, a toggle to switch to the front-facing camera, and a toggle to switch to video recording mode. The only other accessible tool was the AE/AF lock. This meant that you had to hope the exposure and focus would be correct, because there was no direct way to adjust either. Anyone who paid attention to the WWDC 2014 keynote would've heard only a few sentences about manual camera controls. Despite the brief mention in the keynote, this is a massive departure from the previously all-auto experience.

To be clear, iOS 8 will expose just about every manual camera control possible. This means that ISO, shutter speed, focus, white balance, and exposure bias can be manually set within a custom camera application. Beyond these manual controls, Apple has also added gray card functionality to bypass the auto white balance algorithm, as well as both EV bracketing and shutter speed/ISO bracketing.
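To make the two bracketing styles concrete, here is a minimal Python sketch. It is not Apple's API: the `Exposure` type, the `manual_bracket` helper, and all of the limits are hypothetical. EV bracketing only needs a list of exposure biases handed to the auto-exposure algorithm, while shutter speed/ISO bracketing needs an explicit shutter time and ISO for every frame. The sketch assumes exposure scales with shutter time × ISO (fixed aperture), shifts the shutter time first, and falls back to ISO only when the shutter time hits the device limits.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Exposure:
    duration_s: float  # shutter speed in seconds
    iso: float         # sensor gain expressed as ISO


def manual_bracket(base, stops, min_duration, max_duration, min_iso, max_iso):
    """Build a shutter speed/ISO bracket around `base`.

    Each entry in `stops` shifts exposure by that many stops (+1 = twice the
    light). The shutter time changes first; once it is clamped to the
    (hypothetical) device limits, ISO makes up the difference.
    """
    frames = []
    for s in stops:
        factor = 2.0 ** s
        target = base.duration_s * base.iso * factor  # desired time x gain
        duration = min(max(base.duration_s * factor, min_duration), max_duration)
        iso = min(max(target / duration, min_iso), max_iso)
        frames.append(Exposure(duration, iso))
    return frames


if __name__ == "__main__":
    base = Exposure(1 / 60, 100)
    for frame in manual_bracket(base, [-2, 0, 2], 1 / 8000, 1 / 30, 32, 1600):
        print(f"{frame.duration_s:.5f} s at ISO {frame.iso:.0f}")
```

In this example the +2 stop frame would need 1/15 s, which exceeds the assumed 1/30 s limit, so the shutter is clamped and ISO doubles from 100 to 200 instead.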

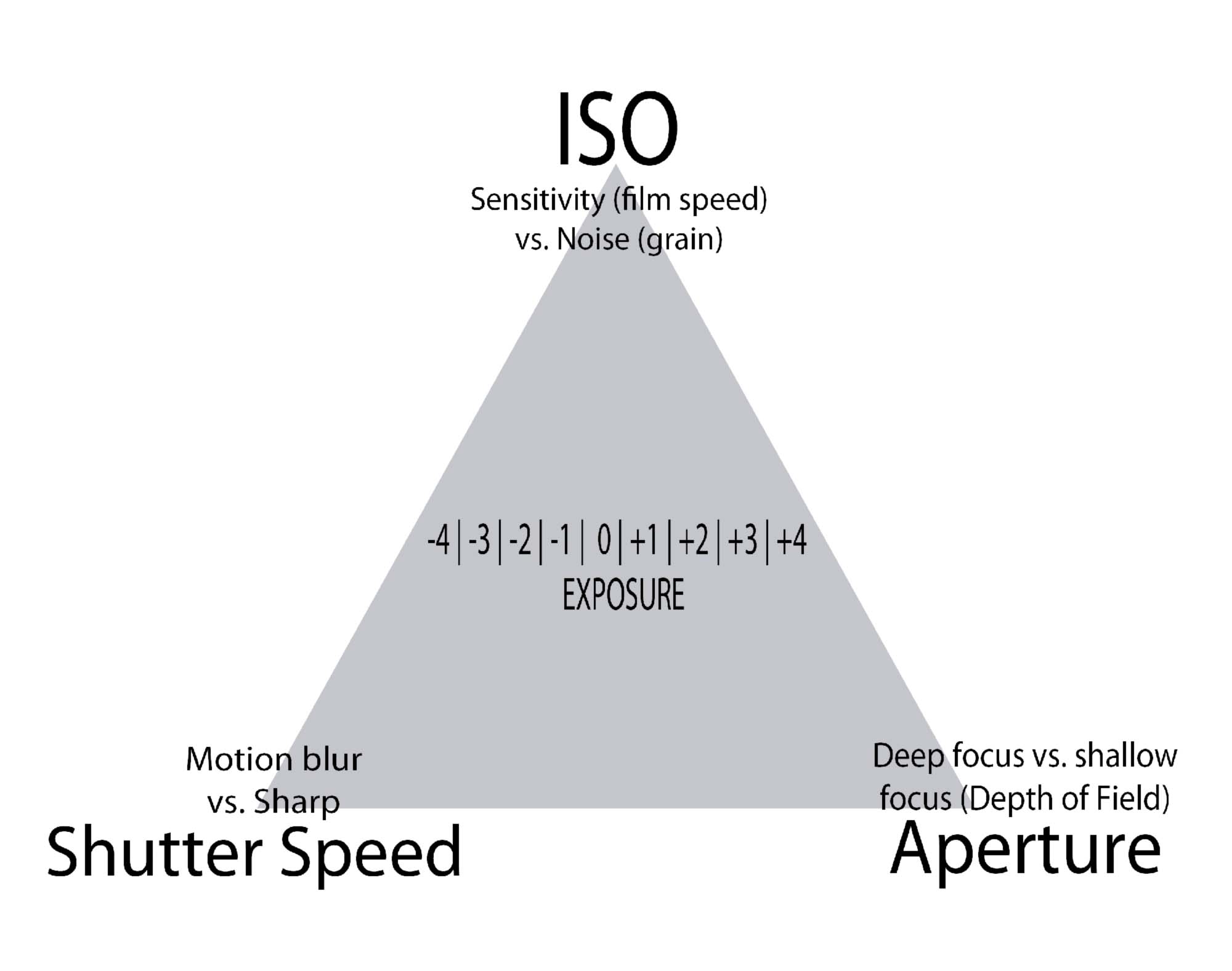

For those unfamiliar with these terms, it's worth explaining what each of these controls does. First, ISO and shutter speed are two of the three factors that determine the exposure of a scene. The third is the lens aperture, but on mobile devices the aperture is almost always fixed. ISO is best described as the sensor gain, and shutter speed is the length of time the sensor collects light. While increasing ISO brightens a scene, it also increases the noise in the image. It's also possible to select different capture formats within a custom camera application, such as the low light mode. This means that a third party camera application wouldn't be denied access to features that can be found in the stock camera application. A possible UI for such a third-party camera can be seen below in the Lumia 1020's Nokia Pro Camera application.
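Because the aperture is fixed on a phone, exposure comes down to shutter time multiplied by ISO gain, and each doubling of either is one stop. The short Python sketch below (hypothetical helper names, ignoring noise and clipping) shows that tradeoff: trading shutter time for ISO leaves the overall exposure unchanged, which is why a higher ISO permits a faster shutter at the cost of more noise.

```python
import math


def exposure_stops(duration_a, iso_a, duration_b, iso_b):
    """Stops by which setting B is brighter than setting A.

    Assumes a fixed aperture, so exposure is proportional to
    shutter time x ISO; +1 means twice as much exposure.
    """
    return math.log2((duration_b * iso_b) / (duration_a * iso_a))


def equivalent_duration(duration, iso, new_iso):
    """Shutter time that keeps the same exposure after switching ISO."""
    return duration * iso / new_iso


if __name__ == "__main__":
    # 1/30 s at ISO 400 and 1/120 s at ISO 1600 give the same exposure:
    # the faster shutter freezes more motion, the higher ISO adds noise.
    print(exposure_stops(1 / 30, 400, 1 / 120, 1600))
    # Shutter time needed to keep that exposure at ISO 100.
    print(equivalent_duration(1 / 30, 400, 100))
```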

Shutter speed is the flip side of this tradeoff. A longer shutter speed allows a lower ISO for the same exposure, but it also makes the image more susceptible to hand shake and motion blur. On the other hand, it makes long exposure photography possible. It's also possible to force a lower or higher ISO/shutter speed than the auto-exposure algorithm would pick for the scene. Note that the preview frame rate follows the set shutter speed, since a preview frame can't be shorter than its exposure, so in certain formats the preview can drop as low as 1 FPS. With the controls that Apple has exposed, developers can even write their own custom auto-exposure algorithms. In addition to fully manual exposure, it's also possible to add a bias to the auto-exposure algorithm. This should appear in the stock iOS 8 camera application in the near future.
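Since developers can now set shutter time and ISO directly, a custom auto-exposure algorithm can be as simple as a feedback loop. The Python sketch below is a toy illustration, not Apple's algorithm: it assumes a per-frame mean luminance measured in linear units (0 to 1), an 18% middle-gray target, a 1/30 s handheld limit, and device limits that are all hypothetical. It measures how many stops the frame is off target (with the exposure bias simply shifting that target), then spends shutter time first, ISO second, and only goes to very long shutter times once ISO is maxed out. It also shows why the preview frame rate falls with long exposures.

```python
import math

MIDDLE_GRAY = 0.18          # assumed target mean luminance (linear, 0..1)
HANDHELD_LIMIT_S = 1 / 30   # assumed longest shutter time before shake shows


def next_exposure(duration, iso, mean_luma, bias_stops=0.0,
                  min_duration=1 / 8000, max_duration=1.0,
                  min_iso=32, max_iso=1600):
    """One step of a toy auto-exposure loop.

    `mean_luma` is the measured mean luminance of the last frame. Returns a
    new (duration, iso) pair that should move it toward middle gray, shifted
    by `bias_stops` of exposure bias. All limits are hypothetical.
    """
    target = MIDDLE_GRAY * 2.0 ** bias_stops
    error_stops = math.log2(target / max(mean_luma, 1e-6))
    total = duration * iso * 2.0 ** error_stops  # desired shutter time x ISO

    # 1. Spend shutter time first, up to the handheld limit, at minimum ISO.
    new_duration = min(max(total / min_iso, min_duration), HANDHELD_LIMIT_S)
    # 2. Raise ISO to cover whatever exposure is still missing.
    new_iso = min(max(total / new_duration, min_iso), max_iso)
    # 3. With ISO maxed out, accept longer (shake-prone) shutter times.
    if new_iso >= max_iso:
        new_duration = min(total / max_iso, max_duration)
    return new_duration, new_iso


def preview_fps(duration, max_fps=30.0):
    """A preview frame can't be shorter than its exposure, so long shutter
    times pull the preview frame rate down (a 1 s exposure gives 1 FPS)."""
    return min(max_fps, 1.0 / duration)


if __name__ == "__main__":
    duration, iso = 1 / 60, 100
    for luma in (0.05, 0.18, 0.6):
        d, i = next_exposure(duration, iso, luma, bias_stops=-0.5)
        print(f"mean {luma}: {d:.4f} s, ISO {i:.0f}, preview {preview_fps(d):.1f} FPS")
```

Working in stops (log2) keeps the correction symmetric: a frame that is one stop too dark and one that is one stop too bright are adjusted by the same amount in opposite directions.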

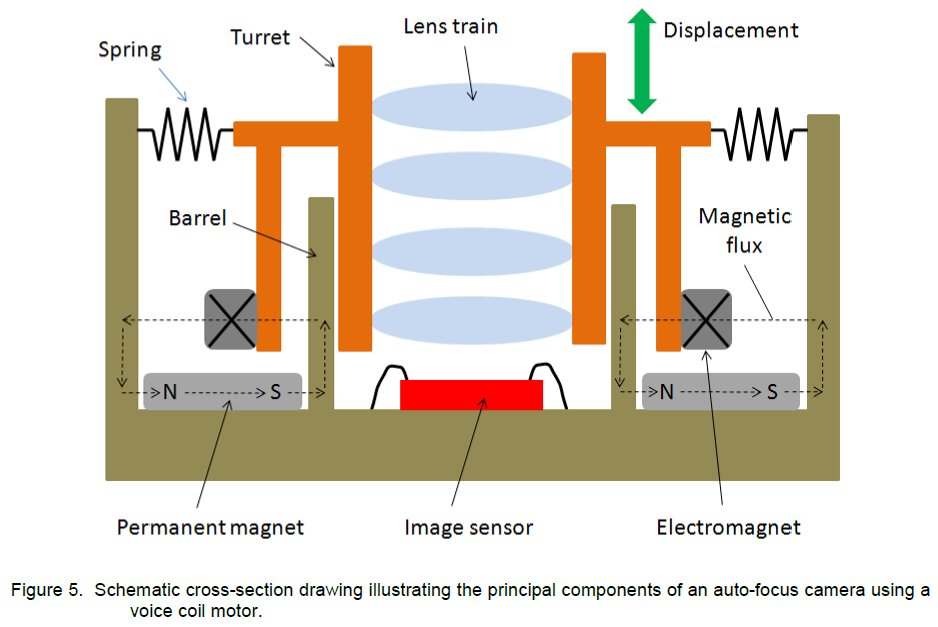

Focus is another key control. It moves the lens anywhere from macro focus to infinity focus, which makes it possible to focus in situations where contrast-detection autofocus struggles. This opens up new ways to compose an image, as well as new kinds of video shots. A great example is smoothly pulling focus onto an object for dramatic effect, something that was impossible on iOS until now. Apple strongly emphasized that the focus setting can't be mapped to a distance, as the focal length varies from device to device and the behavior of the voice coil motor (VCM) that moves the lens is also affected by gravity, age, and variance in the production process. A diagram of a VCM AF system can be seen below.
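Because the lens position is only a unitless value rather than a distance, a smooth focus pull is just an interpolation between two positions over a number of video frames. The Python sketch below assumes positions normalized from 0.0 (closest focus) to 1.0 (furthest), which is how we understand iOS 8 expresses lens position; the `rack_focus` helper and the smoothstep easing curve are our own illustrative choices. On a device, each value would be applied once per frame, for example through AVCaptureDevice's `setFocusModeLockedWithLensPosition:completionHandler:` (not shown here).

```python
def rack_focus(start, end, frames):
    """Lens positions for a smooth focus pull over `frames` video frames.

    Positions are unitless values in [0.0, 1.0] (an assumption, not a
    distance). A smoothstep curve eases the pull in and out so the focus
    change starts and stops gently instead of jumping.
    """
    if frames < 2:
        return [min(max(end, 0.0), 1.0)]
    positions = []
    for i in range(frames):
        t = i / (frames - 1)            # 0.0 on the first frame, 1.0 on the last
        eased = t * t * (3 - 2 * t)     # smoothstep easing
        pos = start + (end - start) * eased
        positions.append(min(max(pos, 0.0), 1.0))  # keep inside valid range
    return positions


if __name__ == "__main__":
    # A one-second pull at 30 FPS from near focus toward the far end.
    for p in rack_focus(0.2, 0.8, 30):
        print(f"{p:.3f}")
```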

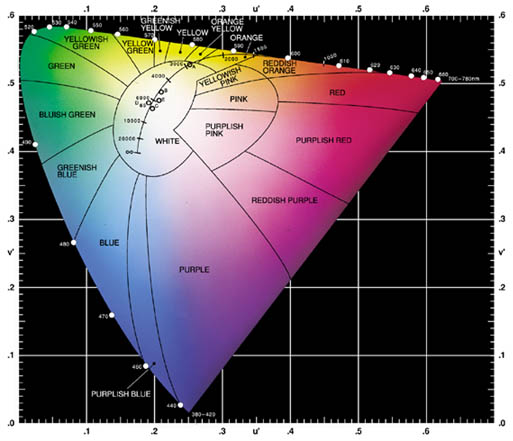

White balance is now also fully manual, something previously limited to Windows Phone and HTC's custom camera application. Apple went into considerable detail on how this manual color balance is implemented: at a low level it works by adjusting per-channel RGB gains, but the API can convert these gains to and from a color temperature in Kelvin (plus a tint value). This, along with the "gray world" white balance, allows further control over how a shot will come out.
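The gain-based model is easy to illustrate. The Python sketch below estimates white balance gains with the classic gray-world assumption (the average color of the scene, or of a gray card, should be neutral) and applies them. The helper names and the default maximum gain of 4.0 are hypothetical; normalizing so that the smallest gain is 1.0 reflects our understanding that iOS 8 only accepts gains of at least 1.0, up to a device-specific maximum. Converting gains to a Kelvin temperature, as the API does, requires device calibration data and is not attempted here.

```python
def channel_means(pixels):
    """Average R, G, B over an iterable of (r, g, b) tuples in linear units."""
    pixels = list(pixels)
    n = len(pixels)
    return tuple(sum(p[c] for p in pixels) / n for c in range(3))


def gray_world_gains(pixels, max_gain=4.0):
    """Per-channel gains that make the average color of `pixels` neutral.

    Every channel is scaled up to match the brightest channel's mean, so the
    smallest gain is exactly 1.0; gains are capped at `max_gain`.
    """
    means = channel_means(pixels)
    brightest = max(means)
    return tuple(min(brightest / m, max_gain) if m > 0 else max_gain
                 for m in means)


def apply_gains(pixel, gains):
    """Multiply each channel of one (r, g, b) pixel by its gain."""
    return tuple(v * g for v, g in zip(pixel, gains))


if __name__ == "__main__":
    # Two samples from a scene with a warm (reddish) cast.
    samples = [(0.6, 0.45, 0.3), (0.4, 0.35, 0.2)]
    gains = gray_world_gains(samples)
    print(gains)
    print(apply_gains(channel_means(samples), gains))
```

For the warm-tinted samples above, red keeps a gain of 1.0 while green and blue are boosted (roughly 1.25 and 2.0), so the average color comes out neutral.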

While only the exposure bias control will make it into the stock camera application, all of these new controls exposed through the AVCaptureDevice APIs will enable camera applications similar to Nokia's Pro Camera or HTC's Sense 6 camera application. It's been said that Apple is one of the few OEMs that take the camera seriously, and these new controls can only cement that position.

42 Comments


JoshHo - Wednesday, June 18, 2014

For now it seems that the API doesn't expose RAW functionality, although I believe developers can now directly access encode/decode blocks on the SoC for video.

Dino Ferrucci - Thursday, June 19, 2014

Hey Joshua, if you want to be shooting RAW you're going to have memory and storage challenges with an iPhone - RAW files are big and take up more space than JPEGs and other common formats. That said, if the above iOS 8 spec is true, then the increased flexibility of smartphone cameras is making them more like DSLRs. That's a good thing for budding photographers, but it would be great if Apple did something to allow easily expandable memory. I've found a SanDisk device called the Wireless Media Drive that's pretty cool in that it allows you to free up memory on your iPhone wirelessly: you upload content from your gallery to it and then delete it from the phone to free up space :)

itpromike - Thursday, June 19, 2014

It does shoot RAW according to developer information in iOS 8 developer docs.

JoshHo - Thursday, June 19, 2014

Interesting, do you have a link for this? I don't remember hearing anything about that.

RandomUsername3245 - Wednesday, June 18, 2014

I find it frustrating that digital camera companies lock us into this ancient film camera terminology when changing settings for a digital camera. Under the hood, most common focal planes have three settings: integration time, gain, and offset. The integration time is the same as shutter speed. Gain is basically an amplification factor in the readout electronics. The offset parameter sets what light level on the detector corresponds to a pixel value of 0. It would be interesting to know how ISO and EV are converted into these three parameters.
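As a rough illustration of the three-parameter model described in this comment, here is a toy Python sketch of a linear pixel readout. Everything in it is an assumption for illustration: the 10-bit full scale, the linear ISO-to-gain mapping, and the base gain value are hypothetical, and real sensors add noise, nonlinearity, and per-pixel calibration. It does show why, ignoring noise, doubling the integration time and doubling the gain produce the same pixel value.

```python
def pixel_value(photon_rate, integration_s, gain, offset, full_scale=1023):
    """Toy linear readout: the digital number (DN) reported for one pixel.

    photon_rate:   photo-electrons per second reaching the pixel
    integration_s: integration time (the digital "shutter speed")
    gain:          readout amplification, in DN per electron
    offset:        constant added to every reading (a black-level shift)
    full_scale:    largest DN the assumed 10-bit converter can report
    """
    signal = photon_rate * integration_s * gain + offset
    return int(min(max(round(signal), 0), full_scale))


def iso_to_gain(iso, base_iso=100, base_gain=0.25):
    """Hypothetical mapping: ISO scales readout gain linearly from a base."""
    return base_gain * iso / base_iso


if __name__ == "__main__":
    # Same pixel value from "longer shutter" and from "more gain".
    print(pixel_value(1000, 0.1, 1.0, 64))
    print(pixel_value(1000, 0.2, 0.5, 64))
    # A very bright pixel saturates at full scale.
    print(pixel_value(1e6, 1.0, iso_to_gain(400), 64))
```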

skiboysteve - Thursday, June 19, 2014

This is a great post. Couldn't agree more.

Narg - Thursday, June 19, 2014

Ditto!

Tigran - Thursday, June 19, 2014

***This means that ISO, shutter speed, focus, white balance, and exposure bias can be manually set within a custom camera application***

Couldn't we already set the exposure bias manually by touching a selected area?

***This means that a third party camera application wouldn't be denied access to features that can be found in the stock camera application***

Is it only about ISO (and maybe smooth focusing in video recording)? As far as I know, there are third-party camera applications that already allow manually changing the shutter speed, depth of field, and white balance (Slow Shutter Cam, Professional Camera, etc.).

JoshHo - Thursday, June 19, 2014

The new part is that you can set the exposure bias directly, so the old method of trying to expose for a bright surface is no longer necessary.

The line is in reference to special modes such as the iPhone 5's 2x2 binning mode. Such features will be accessible through the AVCaptureDevice API.

jlabelle - Friday, June 20, 2014

The iPhone sensor is not better than the Lumia 1020's in absolute terms. It is only better per unit of surface area. As the efficiency of sensors of this size is already well above 70%, no technological improvement could ever match the 4x gain that a 4 times bigger sensor brings. This is just physics.

Aperture (in the sense of the f-number) is a ratio: the lens's focal length divided by the diameter of its physical aperture. A bigger sensor needs a longer focal length to maintain the same angle of view. This is why an f/2 lens with a 10mm focal length on a tiny sensor is very different from an f/2 lens with a 100mm focal length on a full frame DSLR giving the same angle of view (and the size of the lens is also very different).

Therefore, the f/2 lens of the Lumia 1020 is greatly superior to the f/2 lens of the iPhone 5S or Galaxy S5: the same f-number lets the same amount of light fall on each unit of sensor area, so a sensor 4 times bigger collects 4 times more light in total.

Yes, the iPhone sensor arguably has slightly better efficiency (as DxO's measurements show), but it will NEVER offset a 4 times bigger area.

Last but not least, whatever the sensor efficiency and image quality, a longer focal length paired with a bigger sensor (to keep the same angle of view) gives you a shallower depth of field and therefore better subject isolation. You can always stop down a lens to mimic a smaller sensor, but the opposite is not possible!

So a bigger sensor has inherent advantages that a smaller sensor will NEVER be able to match.

I emphasize the NEVER above because some people seem to believe that technology will one day deliver full frame performance from a mobile phone-sized sensor. But that is physically impossible and will NEVER happen. A bigger sensor will always be better, always. This is why manufacturers rely on other ways around what physics prevents, like the dual camera on the HTC One M8, or what Nokia could bring to the table with the Pelican technology and the combination of several pictures.

But make no mistake: if no one comes up with a sensor remotely close to the Lumia 1020's sensor size in the future, its image quality will not be equaled.

And this is why the best phone camera of all time remains the Nokia 808, despite its age, simply because of the sheer size of its sensor.
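The aperture argument in this comment can be checked with a little arithmetic. The Python sketch below uses the standard relations that the f-number is the focal length divided by the entrance pupil diameter and that illuminance on the sensor scales as 1/N²; the helper names are hypothetical, sensor sizes are given only as relative areas, and differences in sensor efficiency are ignored. With the comment's own example, an f/2 lens at 10mm has a 5mm pupil while an f/2 lens at 100mm has a 50mm pupil.

```python
def entrance_pupil_mm(focal_length_mm, f_number):
    """The f-number N is focal length / pupil diameter, so D = f / N."""
    return focal_length_mm / f_number


def relative_total_light(area_a, f_number_a, area_b, f_number_b):
    """Total light on sensor B relative to sensor A for the same scene,
    angle of view and shutter time: illuminance goes as 1/N^2, and total
    light is that illuminance times the sensor area."""
    return (area_b / f_number_b ** 2) / (area_a / f_number_a ** 2)


def equivalent_f_number(f_number, linear_scale):
    """f-number a sensor `linear_scale` times larger (in width) would need
    to collect the same total light, with roughly the same depth of field."""
    return f_number * linear_scale


if __name__ == "__main__":
    print(entrance_pupil_mm(10, 2), entrance_pupil_mm(100, 2))
    # Same f/2 on a sensor with 4x the area: 4x the total light.
    print(relative_total_light(1, 2, 4, 2))
    # 4x area means 2x linear size, so f/4 on the big sensor matches f/2.
    print(relative_total_light(1, 2, 4, equivalent_f_number(2, 2)))
```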