VESA and MIPI Announce Display Stream Compression Standard

by Ryan Smith on April 22, 2014 8:45 PM EST

Posted in: Displays, DisplayPort, GPUs, VESA, MIPI

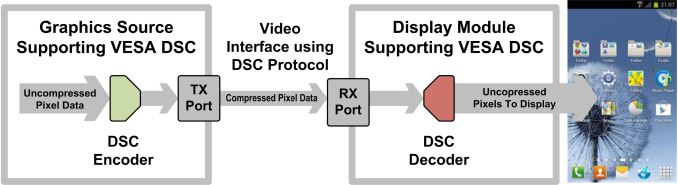

For some time now the consumer electronics industry has been grappling with how to improve the performance and efficiency of display interfaces, especially in light of more recent increases in display resolution. Through the eras of DVI, LVDS/LDI, HDMI, and DisplayPort, video has been transmitted from source to sink as raw, uncompressed data, a conceptually simple setup that ensures high quality and low latency but requires an enormous amount of bandwidth. The introduction of newer interface standards such as HDMI and DisplayPort has in turn allowed manufacturers to meet those bandwidth requirements so far. But display development is reaching a point where both PC and mobile device manufacturers are concerned about their ability to keep up with the bandwidth requirements of these displays, and to do so at reasonable cost and resource requirements.

In order to address these concerns the PC and mobile device industries – through their respective VESA and MIPI associations – have been working together to create new technologies and standards to handle the expected bandwidth requirements. The focus of that work has been on the VESA's Display Stream Compression (DSC) standard, a descriptively named standard for image compression that has been in development at the VESA since late 2012. With that in mind, the VESA and MIPI have announced today that DSC development has been completed and version 1.0 of the DSC standard has been ratified, with both organizations adopting it for future display interface standards.

As alluded to by the name, DSC is an image compression standard designed to reduce the amount of data that needs to be transmitted. With DisplayPort 1.2 already pushing 20Gbps and 1.3 set to increase that to over 30Gbps, display interfaces are already the highest bandwidth interfaces in a modern computer, creating practical limits on how much further they can be improved. With limited headroom for increasing interface bandwidth, DSC tackles the issue from the other end of the problem by reducing the amount of bandwidth required in the first place through compression.

Since DSC is meant to be used at the final transmission stage, DSC itself is designed to be “visually lossless”. That is to say that it’s intended to be very high quality and should be unnoticeable to users across a wide variety of content, including photos/video, subpixel text, and potentially problematic patterns. But with that said, visually lossless is not the same as mathematically lossless, so while DSC is a high quality codec it’s still mathematically a lossy codec.

In terms of design and implementation DSC is a fixed rate codec, an obvious choice to ensure that the bandwidth requirements for a display stream are equally fixed and a link is never faced with the possibility of running out of bandwidth. Hand-in-hand with the fixed rate requirement, the VESA’s standard calls for visually lossless compression with as little as 8 bits/pixel, which would represent a roughly 67% (3:1) bandwidth savings over today’s uncompressed 24 bits/pixel display streams. And while 24bit color is the most common format for consumer devices, DSC is also intended to work with higher color depths, including 30bit and 36bit (presumably at higher DSC bitrates), allowing it to be used even with deep color displays.

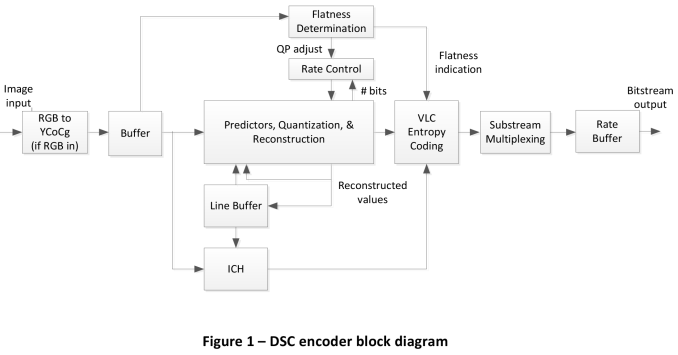

We won’t get too much into the workings of the DSC algorithm itself – the VESA has a brief but insightful whitepaper on the subject – but it’s interesting to point out the unusual requirements the VESA has needed to meet with DSC. Image and video compression is a well-researched field, but most codecs (like JPEG and H.264) are designed around offline encoding for distribution, rather than real-time encoding as part of a display standard. DSC on the other hand needed to be computationally cheap (to make implementation cheap) and low latency, all the while still offering significant compression ratios and doing so with minimal image quality losses. The end result is an interesting algorithm that uses a combination of delta pulse code modulation and indexed color history to achieve the fast compression and decompression required.
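To give a feel for the prediction half of that description, here is a toy sketch of pulse code modulation on differences for a single scanline of 8-bit samples. It is emphatically not the DSC algorithm: the previous-sample predictor, the `step` quantizer, and the function names are all our own assumptions for illustration, and the indexed color history and the rate control a fixed rate codec needs are not modeled at all.

```python
def dpcm_encode(samples, step=1):
    """Encode 8-bit samples as quantized differences from the previous
    reconstructed sample. step=1 is lossless; larger steps are lossy."""
    residuals = []
    prediction = 0  # encoder and decoder start from the same state
    for s in samples:
        q = round((s - prediction) / step)  # quantized prediction error
        residuals.append(q)
        # Track what the decoder will reconstruct, not the true sample,
        # so quantization error cannot accumulate along the line.
        prediction = max(0, min(255, prediction + q * step))
    return residuals


def dpcm_decode(residuals, step=1):
    """Rebuild samples by accumulating the quantized differences."""
    out = []
    prediction = 0
    for q in residuals:
        prediction = max(0, min(255, prediction + q * step))
        out.append(prediction)
    return out


if __name__ == "__main__":
    line = [100, 102, 101, 105, 180, 181, 181, 60]
    print(dpcm_encode(line))  # [100, 2, -1, 4, 75, 1, 0, -121]
    print(dpcm_decode(dpcm_encode(line)) == line)  # True
```

Smooth regions produce small residuals that are cheap to code, and both directions need only one pass with a single sample of state, which hints at why this family of techniques suits a cheap, low latency display codec.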

Moving on, with the ratification of the DSC 1.0 standard, both the VESA and MIPI will be adopting it for some of their respective standards. On the VESA side, eDP 1.4 will be the first VESA standard to include it, while we also expect DSC’s inclusion in the forthcoming DisplayPort 1.3. MIPI in turn will be including DSC in their Display Serial Interface (DSI) 1.2 specification for mobile devices.

With the above in mind, it’s interesting how both groups ended up at the same standard despite their significant differences in goals. The VESA is primarily concerned with driving ultra high resolutions such as 8K@60Hz, which would require over 50Gbps of bandwidth for uncompressed video, something not even DisplayPort 1.3 would be able to deliver. MIPI on the other hand is not concerned about resolutions as much as they are concerned about power and cost requirements; a DisplayPort-like interface could supply mobile devices with plenty of bandwidth, but high bitrate interfaces are expensive to implement and are typically very power hungry, both on an absolute basis and a per-bit basis.

Display Bandwidth Requirements, 24bpp (Uncompressed)

| Resolution | Bandwidth | Minimum DisplayPort Version |
| --- | --- | --- |
| 1920x1080@60Hz | 3.5Gbps | 1.1 |
| 2560x1440@60Hz | 6.3Gbps | 1.1 |
| 3840x2160@60Hz (4K) | 14Gbps | 1.2 |
| 7680x4320@60Hz (8K) | >50Gbps | 1.3 + DSC |

DSC in turn solves both of their problems, allowing the VESA to drive ultra high resolutions over DisplayPort while allowing MIPI to drive high resolution mobile displays over low cost, low power interfaces. In fact it’s surprising (and almost paradoxical) that even with the additional manufacturing costs and encode/decode overhead of DSC, in the end DSC is both cheaper to implement and lower power than a higher bandwidth interface.
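To put rough numbers on the bandwidth table, the sketch below computes the bandwidth of the visible pixels only, at 24 bits/pixel uncompressed and at the 8 bits/pixel DSC target. The function and variable names are ours, and the results come out somewhat lower than the table's figures; we assume the difference is blanking and link overhead, which this back-of-the-envelope calculation ignores.

```python
def active_bandwidth_gbps(width, height, refresh_hz, bits_per_pixel):
    """Bandwidth of the visible pixels only, in Gbps.
    Blanking intervals and link encoding overhead are ignored."""
    return width * height * refresh_hz * bits_per_pixel / 1e9


# Display modes from the table: (width, height, refresh rate in Hz)
MODES = {
    "1920x1080@60Hz": (1920, 1080, 60),
    "2560x1440@60Hz": (2560, 1440, 60),
    "3840x2160@60Hz (4K)": (3840, 2160, 60),
    "7680x4320@60Hz (8K)": (7680, 4320, 60),
}


def bandwidth_table(uncompressed_bpp=24, compressed_bpp=8):
    """Map each mode to (uncompressed Gbps, compressed Gbps)."""
    return {
        name: (
            active_bandwidth_gbps(w, h, hz, uncompressed_bpp),
            active_bandwidth_gbps(w, h, hz, compressed_bpp),
        )
        for name, (w, h, hz) in MODES.items()
    }


if __name__ == "__main__":
    for name, (raw, dsc) in bandwidth_table().items():
        print(f"{name:22s} {raw:6.2f} Gbps raw  {dsc:6.2f} Gbps at 8bpp")
```

Under these assumptions 8K@60Hz needs about 47.8Gbps of active pixel data uncompressed but only about 15.9Gbps at 8 bits/pixel, which would sit comfortably within the 30Gbps-plus expected of DisplayPort 1.3.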

Wrapping things up, while DSC enabled devices are still some time off – the fact that the standard was just ratified means new display controllers still need to be designed and built – DSC is something we’re going to have to watch closely. Display compression is not something to be taken lightly due to the potential compromises to both image quality and latency, and while it’s unlikely the average consumer will notice, it’s definitely going to catch the eyes of enthusiasts. The VESA and MIPI are going in the right direction by targeting visually lossless compression rather than accepting a significant image quality tradeoff for better bandwidth savings, but it remains to be seen just how lossless/lossy DSC really is. At a fundamental level DSC can never beat the quality of uncompressed display streams, but that doesn’t rule out other tradeoffs that will make compression worth the cost.

Source: VESA

85 Comments


sunbear - Tuesday, April 22, 2014 - link

Given that much of the video content being sent from a computer or set top box to a display is anyway probably coming from a compressed source (e.g. Mpeg 2, H.264, etc) why even bother with adding the latency of decoding the H.264 on the computer, then re-encoding with DSC, only to have to decode again on the display? It would seem smarter to me if they came up with a standard whereby the computer could auto-negotiate with the display and if the display responds that it is capable of decoding the existing stream, then skip the decoding on the computer entirely (no need for DSC) and pass the compressed stream directly through to the monitor.

p1esk - Wednesday, April 23, 2014 - link

My guess is, it's because decoding H.264 or H.265 is computationally intensive, and they don't want to ask display manufacturers to use expensive decoder chips in displays.

Guspaz - Wednesday, April 23, 2014 - link

h.264 decoders are commodity hardware, they cost next to nothing. That's not true of h.265, obviously.

The problem with that approach is that not all source content is h.264 encoded. Television might be, but it might also be MPEG-2 (OTA, many digital cable/satellite services) or VC-1 (many IPTV services). Videogames and PC output isn't encoded at all.

p1esk - Wednesday, April 23, 2014 - link

We are talking about decoders that can handle 50Gbps streams in real time. Regardless of the codec used, the chips that can do that will not be cheap.

sheh - Wednesday, April 23, 2014 - link

Another thing that might be relevant is that there are many ways to decode MPEG, with different resultant pixels, and all these outputs may be technically valid. For a monitor-oriented format you'd probably want the output to be the same regardless of decoder, or at least be more stringent in what's considered valid decoded output.

willis936 - Wednesday, April 23, 2014 - link

So what happens when you play games or just sit and stare at the desktop? All of a sudden you're wasting a ton of power for real time compression when it's unnecessary and adding a lot of latency (in the order of ms, even into the 10s on a bad design).

What would be better is a fresh standard that let the display driver tell the monitor (on next frame I'll send you updates for these pixels) and then only update the pixels that change. Ooo ahhh we save power but peak bandwidth requirements are still the same. If you want a big resolution you need a big bandwidth. This is just a money band aid.

danbob999 - Wednesday, April 23, 2014 - link

Not again. Let's not repeat the hell of compressed audio and its ever changing codecs (DOLBY, DTS, DTS TRUE FULL PRO HD 2 ULTRA SUPER MEGA III).

I don't want to replace my TV just because it doesn't support the new compression codec.

Guspaz - Wednesday, April 23, 2014 - link

There are only two relevant sets of audio compression standards, and the only top-end one with any significant use (DTS-HD) is backwards compatible. That is to say that if you take a DTS-HD stream and try to play it back on a regular DTS decoder that has no idea what DTS-HD is, it will work perfectly fine, because DTS-HD contains a regular DTS stream inside it.

piroroadkill - Wednesday, April 23, 2014 - link

There are no real ever changing codecs. In fact, that's one of the fantastic things about DTS-HD Master Audio.

The core 1.5Mbps stream is decodable by any old gear that supports DTS Coherent Acoustics. Backwards compatible.

Newer gear also decodes the lossless difference to produce a lossless stream.

DTS Coherent Acoustics is already a great sounding codec (not because of complexity, but mainly because of the high bitrate), so if you have to fall back to it, it's no great shakes. With the lossless stream on top, you're getting mathematically lossless output.

It's also the clear winner in terms of blu-ray soundtracks right now, so it's the one you need to worry about.

BMNify - Wednesday, April 23, 2014 - link

it should be noted that the real rec2020 3840x2160@60Hz UHD-1(4K) and 7680x4320@60Hz UHD-2(8K) requirement is in fact currently up to 120Hz for both, and if NHK/BBC r&d are right then it may go up to 240Hz or even 300Hz by the expected official 7680x4320 UHD-2 release for the Tokyo 2020 Olympic games.

so we have a problem as that's only 6 years away so even this vesa/mipi spec is already under powered before its available.