Crucial M550 Review: 128GB, 256GB, 512GB and 1TB Models Tested

by Kristian Vättö on March 18, 2014 8:00 AM EST

NAND Lesson: Why Die Capacity Matters

SSDs are basically just huge RAID arrays of NAND. A single NAND die isn't very fast, but when you put a dozen or more of them in parallel, the performance adds up. Modern SSDs usually have between 8 and 64 NAND dies depending on the capacity, and the rule of "the more, the better" applies here, at least to a certain degree. (Controllers are usually designed for a certain number of NAND dies, so too many dies can negatively impact performance because the controller has more pages/blocks to track and process.) But die parallelism is just one part of the big picture. It all starts inside the die.
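To make the "huge RAID array" idea concrete, here is a toy model of a controller striping page writes across dies round-robin, like RAID 0. It is a sketch under loudly simplified assumptions: every die programs one page in a fixed time, dies run fully in parallel, and the controller-overhead caveat above is ignored. `stripe` and `write_time_us` are hypothetical helper names, and the 1600µs program time is only a placeholder.

```python
def stripe(num_pages: int, num_dies: int) -> list[int]:
    """Return how many pages land on each die under round-robin striping."""
    counts = [0] * num_dies
    for page in range(num_pages):
        counts[page % num_dies] += 1
    return counts


def write_time_us(num_pages: int, num_dies: int, t_prog_us: float) -> float:
    """Time to write num_pages when all dies program in parallel.

    Assumes a fixed per-page program time and zero controller overhead;
    the busiest die determines the total time.
    """
    return max(stripe(num_pages, num_dies)) * t_prog_us


if __name__ == "__main__":
    # Same 64-page write on 1, 8 and 16 dies (placeholder 1600us program time).
    for dies in (1, 8, 16):
        print(dies, write_time_us(64, dies, 1600.0))
```

Doubling the die count halves the time for the same write in this model, which is the whole point of parallelism; real controllers add channel and die-switching costs on top.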

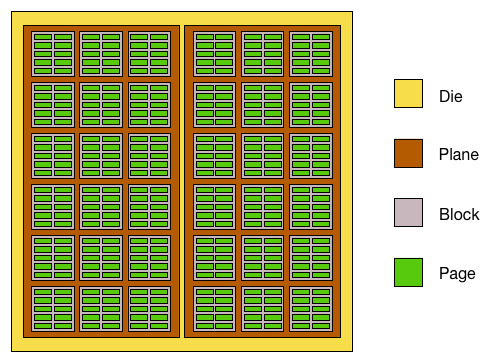

Meet the inside of our Mr. NAND die. Each die is usually divided into two planes, which are further divided into blocks that are in turn divided into pages. In the early NAND days there were no planes, just blocks and pages, but as die capacities increased, manufacturers had to find a way to get more performance out of a single die. The solution was to divide the die into two planes, which can be read from or written to (nearly) simultaneously. Without planes you could only read or program one page per die at a time, but two-plane reading/programming allows two pages to be read or programmed at the same time.
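The die, plane, block and page hierarchy can be written down as a small data structure. This is a sketch only: `DieGeometry` and `locate` are illustrative names, the `blocks_per_plane` and `pages_per_block` values are hypothetical placeholders chosen so the die works out to 64Gbit (8GB) with 16KB pages, and the plane-major numbering is an assumption (real parts may interleave blocks between planes). Spare bytes per page are ignored.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DieGeometry:
    """Hypothetical NAND die layout: die -> planes -> blocks -> pages."""
    planes: int = 2
    blocks_per_plane: int = 1024  # placeholder, not a Micron spec
    pages_per_block: int = 256    # placeholder, not a Micron spec
    page_size_kib: int = 16

    def pages_per_die(self) -> int:
        return self.planes * self.blocks_per_plane * self.pages_per_block

    def capacity_gib(self) -> float:
        """User capacity of the die in GiB (spare area ignored)."""
        return self.pages_per_die() * self.page_size_kib / (1024 * 1024)

    def locate(self, page_index: int) -> tuple[int, int, int]:
        """Split a flat page index into (plane, block, page), plane-major."""
        if not 0 <= page_index < self.pages_per_die():
            raise ValueError("page index out of range")
        pages_per_plane = self.blocks_per_plane * self.pages_per_block
        plane, rest = divmod(page_index, pages_per_plane)
        block, page = divmod(rest, self.pages_per_block)
        return plane, block, page


if __name__ == "__main__":
    g = DieGeometry()
    print(g.pages_per_die(), g.capacity_gib(), g.locate(262144))
```

With these placeholder values the die holds 524,288 pages, i.e. 8GiB, and index 262144 is the first page of the second plane.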

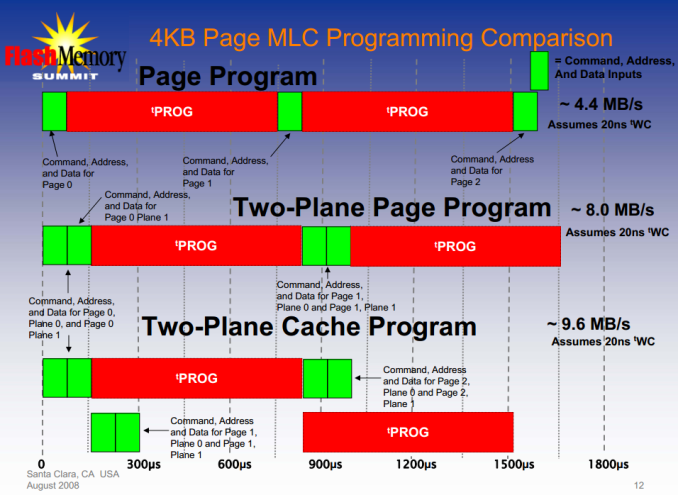

The reason I said "nearly" is that programming the NAND involves more than just the program time. There is also latency from the command, address and data inputs. It is marginal compared to the program time, but with two-plane programming these inputs take twice as long (you still have to send all the necessary commands and addresses separately for both soon-to-be-programmed pages).

I did some rough calculations based on the data I have (though to be honest, it's probably not enough to make my calculations bulletproof), and it seems that two-plane programming adds about 2% of latency compared to two individual dies (i.e. it takes 2% longer to program two pages with two-plane programming than with two individual dies). In other words, two-plane programming gives us roughly twice the throughput of one-plane programming.
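Taking the rough ~2% figure at face value, the throughput gain of two-plane over one-plane programming follows directly. The function name `two_plane_speedup` is hypothetical; it simply treats a two-plane program as taking (1 + overhead) times as long as a single-page program while committing two pages.

```python
def two_plane_speedup(overhead: float) -> float:
    """Throughput of two-plane programming relative to one-plane programming.

    overhead: fractional extra time a two-plane program takes compared with
    a single-page program (0.02 for the rough ~2% estimate).
    """
    if overhead < 0:
        raise ValueError("overhead must be non-negative")
    pages_per_op = 2
    time_per_op = 1.0 + overhead  # in units of one single-page program time
    return pages_per_op / time_per_op


if __name__ == "__main__":
    print(round(two_plane_speedup(0.02), 3))
```

A 2% penalty gives about 1.96x, which is why "roughly twice the throughput" is a fair summary.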

"Okay," you're thinking, "that's fine and all, but what's the point of this? This isn't a new technology and has nothing to do with the M550!" Hold on, it'll make sense as you read further.

Case: M500

| | M550 128GB | M500 120GB |
|---|---|---|
| NAND Die Capacity | 64Gbit (8GB) | 128Gbit (16GB) |
| NAND Page Size | 16KB | 16KB |
| Sequential Write | 350MB/s | 130MB/s |
| 4KB Random Write | 75K IOPS | 35K IOPS |

The Crucial M500 was the first client SSD to utilize 128Gbit per die NAND. That allowed Crucial to go higher than 512GB without sacrificing performance but also meant a hit in performance at the smaller capacities. As mentioned many times before, the key to SSD performance is parallelism and when the die capacity doubles, the parallelism is cut in half. For the 120/128GB model this meant that instead of having sixteen dies like in the case of 64Gbit NAND, it only had eight 128Gbit dies.
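The die counts follow from simple division. A minimal sketch, assuming the 120GB M500 carries 128GB of raw NAND (which is what eight 16GB dies add up to); `die_count` is a hypothetical helper.

```python
def die_count(raw_capacity_gb: int, die_capacity_gbit: int) -> int:
    """Number of NAND dies needed to reach a raw capacity.

    raw_capacity_gb: raw NAND on the drive in GB.
    die_capacity_gbit: capacity of a single die in gigabits.
    """
    if die_capacity_gbit % 8:
        raise ValueError("die capacity must be a whole number of gigabytes")
    die_capacity_gb = die_capacity_gbit // 8  # 8 bits per byte
    if raw_capacity_gb % die_capacity_gb:
        raise ValueError("capacity is not a whole number of dies")
    return raw_capacity_gb // die_capacity_gb


if __name__ == "__main__":
    print("M500 120GB (128Gbit dies):", die_count(128, 128))
    print("M550 128GB (64Gbit dies):", die_count(128, 64))
```

Halving the die capacity at the same raw capacity doubles the die count, from 8 to 16 here.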

It takes 1600µs to write 16KB (one page) to Micron's 128Gbit NAND. Convert that to throughput and you get 10MB/s. Well, that's the simple version and not exactly accurate. With eight dies, the total write throughput would be only 80MB/s, but the 120GB M500 is rated at 130MB/s. The big picture involves more than just the program time: in reality you have to take into account the interface latency as well as the gains from two-plane programming and cache mode (the command, address and data latches are cached, so there is no need to wait for them between program operations).
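The naive per-die figure and the effect of cache mode can be sketched as below. Assumptions: 16KB is counted as 16,000 bytes so the result matches the round 10MB/s above, and the 40µs command/address/data input time (`T_IO_US`) is a made-up placeholder, not a Micron spec. The cache-mode branch models programming as a two-stage pipeline in which the input for the next page overlaps the current program; the function names are hypothetical.

```python
PAGE_BYTES = 16_000   # 16KB page, decimal units to match the round figures
T_PROG_US = 1600.0    # program time quoted for Micron's 128Gbit NAND
T_IO_US = 40.0        # hypothetical command/address/data input time per page


def die_throughput_mb_s(page_bytes: int, t_prog_us: float) -> float:
    """Naive single-die write throughput: one page per program time.

    Bytes per microsecond is numerically equal to MB/s (10**6 bytes/s).
    """
    return page_bytes / t_prog_us


def program_time_us(pages: int, t_prog_us: float, t_io_us: float,
                    cache_mode: bool) -> float:
    """Time for one die to program `pages` pages back to back.

    Without cache mode, each page's input must finish before its program
    starts, and the next input waits for that program to complete. With
    cache mode, the input for page n+1 overlaps the program of page n.
    """
    if pages < 1:
        raise ValueError("need at least one page")
    if not cache_mode:
        return pages * (t_io_us + t_prog_us)
    return t_io_us + t_prog_us + (pages - 1) * max(t_io_us, t_prog_us)


if __name__ == "__main__":
    per_die = die_throughput_mb_s(PAGE_BYTES, T_PROG_US)
    print(per_die, 8 * per_die)  # one die vs. eight dies
    for cached in (False, True):
        print(cached, program_time_us(64, T_PROG_US, T_IO_US, cached))
```

This prints 10.0 and 80.0 for the naive case; with cache mode only the first page's input time stays exposed, so the saving grows with the length of the write.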

Example of cache programming

Like I described above, two-plane programming gives us roughly twice the throughput of one-plane programming. As a result, instead of writing one 16KB page in 1600µs, we are able to write two pages with 32KB of data in total. That doubles our throughput from 80MB/s to 160MB/s. There is some overhead from the commands, as the picture above shows, but thankfully today's interfaces are so fast that it's only on the order of a few percent, and in the real world the usable throughput should be around 155MB/s. The 120GB M500 manages around 140MB/s in sequential write, so 155MB/s of NAND write throughput sounds reasonable since there is always some additional latency from channel and die switching. Program times are also averages and vary slightly from die to die, and it's possible that the set program times are actually slightly over 1600µs to make sure all dies meet the criteria.
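The same arithmetic for the whole 120GB M500 array, as a rough sketch. `array_write_mb_s` is a hypothetical helper; the 3% overhead is an assumed stand-in for the "few percent" of command overhead, and 16KB is again counted as 16,000 bytes to match the round numbers in the text.

```python
def array_write_mb_s(dies: int, planes: int, page_bytes: int,
                     t_prog_us: float, overhead: float) -> float:
    """Aggregate NAND write throughput in MB/s for a simple parallel model.

    Every die programs `planes` pages at once per program time; `overhead`
    is the assumed fraction of time lost to command/address/data transfers.
    """
    if not 0.0 <= overhead < 1.0:
        raise ValueError("overhead must be in [0, 1)")
    raw = dies * planes * page_bytes / t_prog_us  # bytes/us == MB/s
    return raw * (1.0 - overhead)


if __name__ == "__main__":
    print(array_write_mb_s(8, 1, 16_000, 1600.0, 0.0))   # one-plane
    print(array_write_mb_s(8, 2, 16_000, 1600.0, 0.0))   # two-plane
    print(array_write_mb_s(8, 2, 16_000, 1600.0, 0.03))  # assumed ~3% overhead
```

The three lines come out at roughly 80, 160 and 155MB/s, reproducing the chain of estimates above.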

Case: M550

While the M500 used solely 128Gbit NAND, Crucial is bringing back the 64Gbit die for the 128GB and 256GB M550s. The switch means twice as many dies and, as we've now learned, that means twice the performance. This is actually Micron's second-generation 64Gbit 20nm NAND with a 16KB page size, similar to their 128Gbit NAND. The increase in page size is required for write throughput (about a 60% increase over an 8KB page), but it adds complexity to garbage collection and can increase write amplification (and hence lower endurance) if not implemented efficiently.
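Why a larger page can raise write amplification is easiest to see with small random writes. The sketch below is a deliberately crude, hypothetical model (not how the M550's firmware works): `page_fill_wa` only counts how much of each programmed page is actually filled with host data, and it ignores garbage collection entirely.

```python
import math


def page_fill_wa(num_writes: int, write_kb: int, page_kb: int,
                 coalesce: bool) -> float:
    """Write amplification from page fill alone (garbage collection ignored).

    coalesce=False: every host write is programmed into its own page(s) and
    the unused part of each page is wasted.
    coalesce=True: host writes are buffered and packed into full pages first.
    """
    host_kb = num_writes * write_kb
    if coalesce:
        pages = math.ceil(host_kb / page_kb)
    else:
        pages = num_writes * math.ceil(write_kb / page_kb)
    return pages * page_kb / host_kb


if __name__ == "__main__":
    for page_kb in (8, 16):
        print(page_kb,
              page_fill_wa(1000, 4, page_kb, coalesce=False),
              page_fill_wa(1000, 4, page_kb, coalesce=True))
```

In this model, unbuffered 4KB writes waste half of an 8KB page but three quarters of a 16KB page, while packing writes into full pages brings both back to 1.0.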

Micron wouldn't disclose the program time for this part, but I suspect there is some improvement over the original 128Gbit part. As process nodes mature, you're usually able to squeeze a little more performance (and endurance) out of the same chip, and I believe that is what's happening here. To get ~370MB/s out of the 128GB M550, the program time would have to be 1300-1400µs to be in line with the performance. It's certainly possible that there's something else going on (better channel switching management, for instance), but it's clear that Crucial/Micron has been able to better optimize the NAND in the M550.
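Working backwards from ~370MB/s gives the program time estimate. This is a sketch under the same simplifying assumptions as the M500 arithmetic: 16 dies (128GB of 64Gbit dies), two-plane programming, 16KB counted as 16,000 bytes, and an optional assumed overhead fraction. `required_t_prog_us` is a hypothetical helper.

```python
def required_t_prog_us(target_mb_s: float, dies: int, planes: int,
                       page_bytes: int, overhead: float) -> float:
    """Program time at which the NAND array just reaches target_mb_s.

    Inverts throughput = dies * planes * page_bytes / t_prog * (1 - overhead),
    with page_bytes / t_prog_us in bytes per microsecond (== MB/s).
    """
    if target_mb_s <= 0 or not 0.0 <= overhead < 1.0:
        raise ValueError("invalid target or overhead")
    return dies * planes * page_bytes * (1.0 - overhead) / target_mb_s


if __name__ == "__main__":
    for overhead in (0.0, 0.03):
        print(overhead, round(required_t_prog_us(370.0, 16, 2, 16_000, overhead)))
```

Both cases land at roughly 1340-1380µs, inside the 1300-1400µs range above. Running the formula the other way, the M500's 1600µs would cap the same 16-die, two-plane array at 320MB/s before any overhead.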

The point here was to give an idea of where NAND performance comes from and why there is such a dramatic difference between the M550 and M500. Ultimately, the NAND performance characteristics are something manufacturers won't disclose, so the figures here may not be accurate, but they should at least give a rough idea of what is happening at the low level.

100 Comments


hojnikb - Thursday, March 20, 2014

You're not alone. I myself am skeptical about TLC as well, seeing how badly it performed in pretty much every single non-SSD device I've had. While Samsung has really gone all out on TLC and used lots of tricks to squeeze every bit of performance they can out of it, I still don't believe in it. While endurance seems to be okay for most users, one thing does come to mind that no one seems to be testing: data retention.

Cerb - Sunday, March 23, 2014

Any SMART reading program will work. There are tons of them, even included in some OSes.

Any secure erase program will work. There aren't tons of free ones, but they exist... or you can fart around with hdparm (frustrating, to say the least, but I was able to unbrick a SF drive that way, once).

Software just for their SSDs is an extra cost that brings very little value, but has to be made up by the gross profits of the units sold. Since there aren't special diags to run, beyond checking SMART stats, and seeing if it's bricked, for starting an RMA, why bother with software, beyond the minimum needed for performing firmware updates?

CiccioB - Wednesday, March 19, 2014

You're right. Synthetic tests are interesting up to a certain degree.

More real-life tests would be much appreciated. For example, many SSDs are used as boot drives. How much difference does it really make to use one cheap SSD instead of a much more expensive one?

How does it change copying a folder of images of a few MB each (think of an archive of RAW pictures)? How much faster is loading a level in a game that may take several seconds on a mechanical HDD?

Having bars and graphs is nice. Having them applied to real-life usage, where other overheads and bottlenecks apply, would be better, though.

Lucian2244 - Wednesday, March 19, 2014

I second this, would be interesting to know.

hojnikb - Wednesday, March 19, 2014

+1 for that. Fancy numbers are fine and all, but they mean nothing to lots of people.

HammerStrike - Wednesday, March 19, 2014

IMO, the biggest advancement in SSDs over the last year is not the performance increases but the price drops, which have been spearheaded by Crucial and the M500. It seems odd to me that synthetic performance is given so much weight in the reviews while the advent of "affordable" 240 & 500 GB drives gets a somewhat subdued treatment. The vast majority of consumer applications are not going to show any real-life difference between an M5xx and a faster drive, but the M5xx is either going to get you more storage at the same price or let you save a chunk of change that is better deployed to a different part of your system where you will notice the impact, such as your GPU. That, along with MLC NAND, power loss protection and the security features, makes them no-brainers for gaming or general purpose rigs.

CiccioB - Wednesday, March 19, 2014

I agree. I've recently bought a Crucial M500 240GB for a bit more than 100€, which is going to replace an older Vertex3 60GB (75€ at the time) that works perfectly but has become a little small.

It's a device that is going to be used mainly for booting and launching applications (data is on a separate mechanical disk), so write performance is not important.

Considering that when you do real work (and not simply run benchmarks) you copy from something to your SSD (or vice versa), you know that the bottleneck is not your SSD but the other source/destination (which in my case can also be somewhere on 1Gb/s Ethernet).

With such a "low tier" SSD, boot times are about 10 seconds and application launching is immediate (LibreOffice as well, even without the pre-caching daemon running). I invested the extra money a faster SSD would have cost in 8GB more RAM (16GB in total) so that I can use a RAM disk for fast work when access speed is really critical.

Kristian Vättö - Wednesday, March 19, 2014

I've been playing around with real world tests quite a bit lately and it's definitely something that we'll be implementing in the (near) future. However, real world testing is far more complicated than most think. Creating a test suite that's realistic is close to impossible because in reality you are doing more than just one thing at a time. In tests you can't do that because one slight change or issue with a background task can ruin all your results. The number of variables in real world testing is just insane and it's never possible to guarantee that the results are fully reproducible.

After all, the most important thing is that our tests are reproducible and the results accurate. And I'm not saying this to disregard real world tests but because I've faced this when running these tests. It's not very pleasing when some random background task ruins your hour of work and you have to start over hoping that it won't happen again. And then repeat this with 50 or so drives.

That said, I think I've been able to set up a pretty good suite of real world benchmarks. I don't want to just clone a Windows install, install a few apps and then load some games, because that's not realistic. In the real world you don't have a clean install of Windows and plenty of free space to speed everything up. I don't want to share the details of the test yet because there are still so many tests to run. When I've got everything ready, you'll see the results.

What I can say already is that IO consistency isn't just FUD; it can make a difference in basic everyday tasks. How big the difference is in the real world is another question, and it's certainly not as big as what benchmarks show, but that doesn't mean it's meaningless.

CiccioB - Thursday, March 20, 2014

Well, don't misunderstand what I wrote. I didn't say those tests on IO consistency are FUD. It's just that they do not tell the entire truth.

It's like benchmarking a GPU only for, let's say, pixel filling capacity ignoring all the rest.

High IOPS don't really tell you how well an SSD is going to perform.

I already appreciate that the tests are done on SSDs that are not secure erased every time the tests are performed, as is done in other reviews that show only the best performance at the beginning of the device's life. In fact, many ignore that SSDs can become as much as 1/3 slower (in real-life usage, not only in tests) once they have to reuse cells, something that freshly secure-erased SSDs never show as a problem.

Real usage tests are what matter in the end, even if the tests may be compromised by background tasks. Users do not use their SSD in an ideal world where the OS does nothing and everything is optimized to run a benchmark. Synthetic tests are good for showing the potential of a device, but real-life usage is a completely different thing. Even loading a game level is subject to many variables, but it's meaningless to say that the SSD could load the level in 5 seconds by just looking at its performance on paper when in reality, with all the other variables that affect performance in real life, it takes 20 seconds. And it would be quite useful to know that, for example, the fastest SSD on the market, which may cost twice as much as these "mainstream SSDs with mediocre results in synthetic tests", is in reality able to load the same game level in 18 seconds instead of 20, even if on paper it has twice the IO performance and can do burst transfers at twice the speed.

It's not necessary to create a test where disk-intensive applications are used. Even the low-end user starts OpenOffice/LibreOffice (or even MS Office, if he is lucky and rich enough) once in a while, and the loading times of those elephants may be more interesting than knowing that the SSD can do 550MB/s in sequential reads at QD32 with 128K blocks (which in reality never happens in a consumer environment). Concurrent accesses may also make an interesting test, as in real-life usage it is possible to do many things at the same time (copying to/from the SSD while saving a big document in InDesign or loading a big TIFF/RAW in Photoshop).

Some real-life tests could be created to show where a particular high-performance SSD makes a difference, and to measure that difference, so that the price gap can be evaluated with better, more useful numbers in hand.

But disregarding real-life tests of everyday usage just because they are subject to many variables is, in the end, not telling the entire truth about how a cheap SSD compares to a more expensive one.

You could even test extreme cases where, like me, a RAM disk is used instead of a mechanical disk or an SSD for heavy loads. That would show how using these different storage devices really impacts productivity, and whether in the end it is more useful to invest in a faster (and more expensive) SSD or in a cheap one plus more RAM.

If the aim is to guide users toward the purchase that lets them work faster, I think it could be quite interesting to do these kinds of comparisons.

hojnikb - Wednesday, March 19, 2014

Performance consistency is actually quite important. While most modern controllers are pretty much OK for an average consumer, there were times when consistency was utter garbage and was noticeable to your average user as well (Phison and JMicron are fine examples of that; Crucial's v4, for example, frequently locks up if you write a lot, even with the latest firmware, and its write speed consistently drops to near zero).