Crucial M550 Review: 128GB, 256GB, 512GB and 1TB Models Tested

by Kristian Vättö on March 18, 2014 8:00 AM EST

NAND Lesson: Why Die Capacity Matters

SSDs are basically just huge RAID arrays of NAND. A single NAND die isn't very fast, but when you put a dozen or more of them in parallel, the performance adds up. Modern SSDs usually have between 8 and 64 NAND dies depending on the capacity, and the rule of "the more, the better" applies here, at least to a certain degree. (Controllers are usually designed for a certain number of NAND dies, so too many dies can negatively impact performance because the controller has more pages/blocks to track and process.) But die parallelism is just one part of the big picture: it all starts inside the die.

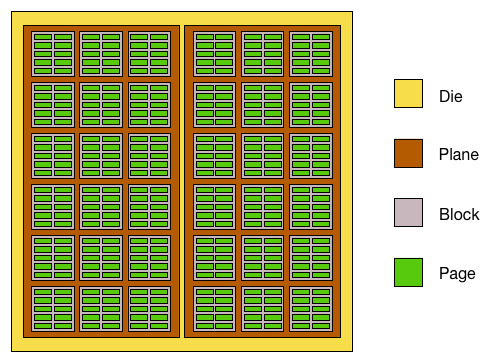

Meet the inside version of our Mr. NAND die. Each die is usually divided into two planes, which are further divided into blocks that are then divided into pages. In the early NAND days there were no planes, just blocks and pages, but as the die capacities increased the manufacturers had to find a way to get more performance out of a single die. The solution was to divide the die into two planes, which can be read from or written to (nearly) simultaneously. Without planes you could only read or program one page per die at a time but two-plane reading/programming allows two pages to be read or programmed at the same time.

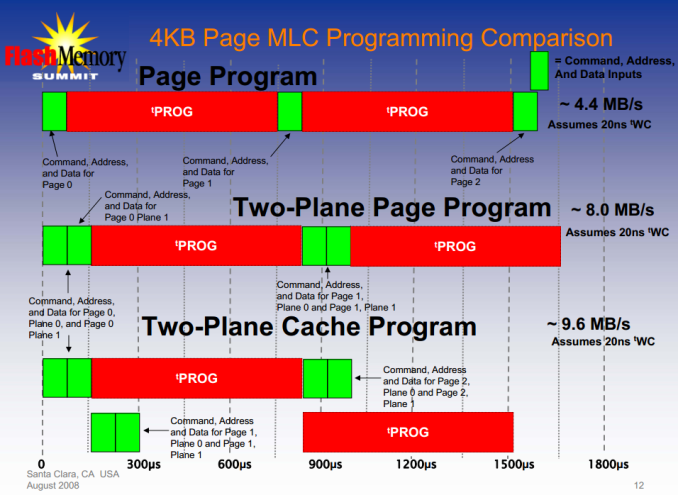

The reason I said "nearly" is that programming the NAND involves more than just the programming time. There is latency from all the command, address and data inputs. That latency is marginal compared to the program time, but with two-plane programming it takes twice as long (you still have to send all the necessary commands and addresses separately for both soon-to-be-programmed pages).

I did some rough calculations based on the data I have (though to be honest, it's probably not enough to make my calculations bulletproof) and it seems that the two-plane programming overhead is about 2% compared to two individual dies (i.e. it takes 2% longer to program two pages with two-plane programming than with two individual dies). In other words, we can conclude that two-plane programming gives us roughly twice the throughput compared to one-plane programming.

"Okay," you're thinking, "that's fine and all, but what's the point of this? This isn't a new technology and has nothing to do with the M550!" Hold on, it'll make sense as you read further.

Case: M500

| | M550 128GB | M500 120GB |
|---|---|---|
| NAND Die Capacity | 64Gbit (8GB) | 128Gbit (16GB) |
| NAND Page Size | 16KB | 16KB |
| Sequential Write | 350MB/s | 130MB/s |
| 4KB Random Write | 75K IOPS | 35K IOPS |

The Crucial M500 was the first client SSD to utilize 128Gbit per die NAND. That allowed Crucial to go higher than 512GB without sacrificing performance but also meant a hit in performance at the smaller capacities. As mentioned many times before, the key to SSD performance is parallelism and when the die capacity doubles, the parallelism is cut in half. For the 120/128GB model this meant that instead of having sixteen dies like in the case of 64Gbit NAND, it only had eight 128Gbit dies.
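The die-count arithmetic above can be sketched in a few lines. This is only an illustration: the function name `die_count` is made up for this example, and the 128GB raw capacity is taken from the eight-die and sixteen-die figures quoted in the text.

```python
def die_count(raw_capacity_gb: int, die_capacity_gbit: int) -> int:
    """Number of NAND dies needed to reach a given raw capacity."""
    die_capacity_gb = die_capacity_gbit // 8  # 8 bits per byte
    return raw_capacity_gb // die_capacity_gb


# Assumption: both the 120GB and 128GB class drives carry 128GB of raw NAND.
RAW_CAPACITY_GB = 128

dies_with_64gbit = die_count(RAW_CAPACITY_GB, 64)
dies_with_128gbit = die_count(RAW_CAPACITY_GB, 128)

print(f"64Gbit dies needed:  {dies_with_64gbit}")
print(f"128Gbit dies needed: {dies_with_128gbit}")
```

Doubling the die capacity halves the number of dies the controller can write to in parallel, which is the whole story of the small-capacity M500.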

It takes 1600µs to write 16KB (one page) to Micron's 128Gbit NAND. Convert that to throughput and you get 10MB/s per die. Well, that's the simple version and not exactly accurate. With eight dies, the total write throughput would be only 80MB/s, but the 120GB M500 is rated at 130MB/s. The big picture is more than just the program time: in reality you have to take into account the interface latency as well as the gains from two-plane programming and cache mode (the command, address and data latches are cached so there is no need to wait for them between programmings).
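For reference, here is the simple version of that calculation as a rough sketch. The helper name `one_plane_throughput_mb_s` is hypothetical, and the model deliberately ignores interface latency, two-plane programming and cache mode.

```python
def one_plane_throughput_mb_s(page_size_kb: float, program_time_us: float) -> float:
    """Naive per-die write throughput: one page per program time.

    KB per microsecond times 1000 gives MB/s (using 1MB = 1000KB).
    """
    return page_size_kb * 1000 / program_time_us


PAGE_SIZE_KB = 16        # page size from the table above
PROGRAM_TIME_US = 1600   # program time quoted for the 128Gbit die
DIES = 8                 # 120GB M500

per_die = one_plane_throughput_mb_s(PAGE_SIZE_KB, PROGRAM_TIME_US)
total = per_die * DIES

print(f"Per die: {per_die:.0f}MB/s, eight dies: {total:.0f}MB/s")
```

The naive total lands well short of the drive's rated sequential write speed, which is why the program time alone cannot be the whole picture.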

Example of cache programming

As I described above, two-plane programming gives us roughly twice the throughput compared to one-plane programming. As a result, instead of writing one 16KB page in 1600µs, we are able to write two pages with 32KB of data in total. That doubles our throughput from 80MB/s to 160MB/s. There is some overhead from the commands, as the picture above shows, but thankfully today's interfaces are so fast that it's only on the order of a few percent, and in the real world the usable throughput should be around 155MB/s. The 120GB M500 manages around 140MB/s in sequential write, so 155MB/s of NAND write throughput sounds reasonable since there is always some additional latency from channel and die switching. Program times are also averages and vary slightly from die to die, and it's possible that the set program times may actually be slightly over 1600µs to make sure all dies meet the criteria.
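The two-plane estimate can be written out the same way. Treat this as a back-of-the-envelope sketch: the function name is hypothetical and the 3% command overhead is an assumed value standing in for "a few percent", not a figure from Micron.

```python
def array_throughput_mb_s(dies: int, planes: int, page_size_kb: float,
                          program_time_us: float, overhead: float = 0.0) -> float:
    """Estimated NAND write throughput for a set of dies programmed in parallel.

    `overhead` is the fraction of throughput lost to command/address/data
    transfers (an assumption, not a published number).
    """
    raw = dies * planes * page_size_kb * 1000 / program_time_us
    return raw * (1 - overhead)


# 120GB M500: eight 128Gbit dies, 16KB pages, 1600us program time.
one_plane = array_throughput_mb_s(8, 1, 16, 1600)
two_plane = array_throughput_mb_s(8, 2, 16, 1600)
two_plane_real = array_throughput_mb_s(8, 2, 16, 1600, overhead=0.03)  # assumed 3%

print(f"One-plane:               {one_plane:.0f}MB/s")
print(f"Two-plane:               {two_plane:.0f}MB/s")
print(f"Two-plane with overhead: {two_plane_real:.1f}MB/s")
```

With an assumed 3% overhead the estimate comes out at roughly 155MB/s, in line with the figure in the text.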

Case: M550

While the M500 used solely 128Gbit NAND, Crucial is bringing back the 64Gbit die for the 128GB and 256GB M550s. The switch means twice the number of dies and, as we've now learned, that means roughly twice the performance. This is actually Micron's second-generation 64Gbit 20nm NAND with a 16KB page size, similar to their 128Gbit NAND. The increase in page size is required for write throughput (about a 60% increase over an 8KB page) but it adds complexity to garbage collection and, if not implemented efficiently, can increase write amplification (and hence lower endurance).

Micron wouldn't disclose the program time for this part but I'm thinking there is some improvement over the original 128Gbit part. As process nodes mature, you're usually able to squeeze a little more performance (and endurance) out of the same chip and I'm thinking that is what's happening here. To get ~370MB/s out of the 128GB M550, the program time would have to be 1300-1400µs to be in line with the performance. It's certainly possible that there's something else going on (better channel switching management for instance) but it's clear that Crucial/Micron has been able to better optimize the NAND in the M550.
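To show where a 1300-1400µs range can come from, the throughput formula can be solved for the program time. This is a sketch under stated assumptions: sixteen 64Gbit dies in the 128GB M550, two-plane programming, 16KB pages, and an assumed 3% command overhead; the function name is hypothetical and none of these inputs were confirmed by Micron.

```python
def required_program_time_us(target_mb_s: float, dies: int, planes: int,
                             page_size_kb: float, overhead: float = 0.0) -> float:
    """Program time needed to hit a target write throughput.

    Inverts: throughput = dies * planes * page_kb * 1000 / t_us * (1 - overhead).
    """
    return dies * planes * page_size_kb * 1000 * (1 - overhead) / target_mb_s


TARGET_MB_S = 370  # approximate sequential write of the 128GB M550
DIES = 16          # 128GB of raw NAND built from 64Gbit (8GB) dies

no_overhead = required_program_time_us(TARGET_MB_S, DIES, 2, 16)
with_overhead = required_program_time_us(TARGET_MB_S, DIES, 2, 16, overhead=0.03)

print(f"No overhead:      {no_overhead:.0f}us")
print(f"With 3% overhead: {with_overhead:.0f}us")
```

Both results fall inside the 1300-1400µs window, noticeably below the 1600µs quoted for the 128Gbit die.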

The point here was to give an idea of where the NAND performance comes from and why there is such a dramatic difference between the M550 and M500. Ultimately, the NAND performance characteristics are something the manufacturers won't disclose, and hence the figures here may not be accurate but should at least give a rough idea of what is happening at the low level.

100 Comments


catavalon21 - Tuesday, March 18, 2014 - link

On the Amazon site if you look closely, the model # listed under the 512GB version is CT256M550SSD1; that's the 256GB drive. Maybe one more reason many of us use the Egg over Amazon...IMHO only...

xrror - Wednesday, March 19, 2014 - link

regardless, the 512GB is now listed "Sign up to be notified when this item becomes available."

and sorry, but Amazon is a very valid alternative to "the Egg" these days. Maybe if you're talking about the oldschool dot.com newegg of yore, but these days it's a good idea to shop around before defaulting to NewEgg.

Anyone remember the ABS computer advertisements in Computer Shopper? Yea... old school newegg was unqualified awesome. Then sadly dot.com burst and well. At least the newegg that remains is a good company =)

catavalon21 - Wednesday, March 19, 2014 - link

Fair enough...and regarding Computer Shopper, wow, there was more than a little grief in those pages! One of my coworkers called one of the companies with good prices, snazzy ad, and swore he heard the baby crying and the significant other yelling at the guy who answered the phone. Guess I deserved the response, but still want folks hoping to get the great deal at Amazon to read carefully.

hrbngr - Wednesday, March 19, 2014 - link

Kristian,

I'm very happy w/the power loss data protection that this drive offers, as opposed to the Samsung 840 Evo, for example. Is there a more consistent, fast performer that offers similar data protection features that is also a decent value, in your opinion?

wiz329 - Wednesday, March 19, 2014 - link

Were the graphs made using Stata?

beginner99 - Wednesday, March 19, 2014 - link

Isn't this review a bit harsh? I mean the old M500 is at least where I live by far the cheapest ssd. Yes both the M500 and M550 don't look great in benchmarks but are there any real-life consumer scenarios where this will actually matter much? Especially the performance consistency seems pretty irrelevant for consumers. I'm not running a database server that is accessed like crazy. Actually my postgresql DB for development purposes runs on a WD green drive and that's just fine for that.

btb - Wednesday, March 19, 2014 - link

Agreed. The M500 have been the (seemingly unrecognized?) brandname price/performance leader for a while now, especially for those of us that like ~1TB class SSDs. Unless one wanted a crappy TLC ssd there really was no alternative, and Anandtech gave it a very lukewarm review for some reason. From all accounts the M550 seems like a roughly ~10% improvement over the original M500, so as soon as the price drops down to M500 level, it's a no-brainer buy compared to the current alternatives. I also see no reason to believe Anandtech's assessment that the M550 won't take over the M500's place as soon as Crucial have phased out their stock of M500's. It's just common sense.

Kristian Vättö - Wednesday, March 19, 2014 - link

It is not our assessment that the M550 won't replace the M500, it's a statement we got straight from Micron. I even double checked after the review went live to confirm that that is really the case.

The reason we gave the EVO a good review is because it did well in our tests and was the cheapest drive at the time of its release. Why wouldn't we like it in that case? TLC NAND doesn't make it "crappy" - we've shown that the endurance of TLC NAND is more than enough for client usage several times and the EVO is in fact faster than the M500 even though it uses TLC NAND.

hojnikb - Wednesday, March 19, 2014 - link

Yeah samsungs "handpicked" TLC and MEX are really doing wonder indeed.

btb - Thursday, March 20, 2014 - link

Fair enough, at the time of my purchase of 2 M500's last year, they were the only ~1TB SSDs that supported eDrive, so I did not look too closely at the EVO review. I have just glanced it over now, and I agree that it does appear to have nice specs. Although I am still skeptical about TLC, or at least you would think that if they can get that good performance from TLC, there should be plenty of room for improvement on the MLC side :)

Anyway I wont be purchasing any more SSDs until they reach 2TB capacity, so seeing that Crucial just released a new generation and have yet to go past 1TB, I guess that could take a while. Perhaps Samsung with their TLC have a better chance of reaching the 2TB mark.

One area I do find Crucial slightly lagging in is on the software side. When I was running intel SSDs they had some decent software for reading the SMART attributes. And from the EVO review Samsung have some nice software as well. AFAIK Crucial dont have that, which seems like something they should correct.