Imagination's PowerVR Rogue Architecture Explored

by Ryan Smith on February 24, 2014 3:00 AM EST

Posted in: GPUs, Imagination Technologies, PowerVR, PowerVR Series6, SoCs

Technical Comparisons

Finally, to close out this look at the Rogue architecture, we wanted to spend a bit of time looking at how it compares to other architectures. Unfortunately, the lack of details we have on other SoC GPU architectures means we can’t make any meaningful comparisons there beyond the GFLOPS comparisons we do today (and those say nothing of real world efficiency). But we can compare it to the next best thing, which is mobile parts based on desktop GPU architectures from AMD and NVIDIA. The latter case is especially interesting, as we know Kepler will be coming to SoCs with the K1.

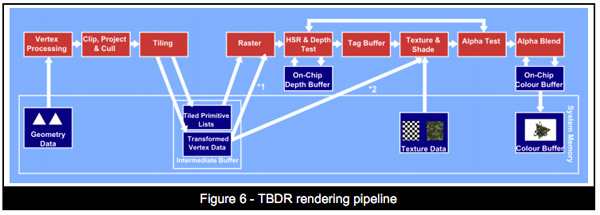

With that said, and we can’t reiterate this enough, this is just a look at theoretical performance. It is not possible to take into account efficiency measures such as memory bandwidth, ROPs, or especially early rejection optimizations such as Tile Based Deferred Rendering. TBDR is Imagination’s ace, and while other GPU firms have their own early rejection technologies, from what little we know about each of them, none of them quite matches TBDR. So Rogue’s theoretical performance aside, if Imagination is rejecting significantly more work before it hits their shaders, then they would have greater performance when all other factors were held equal. The only way to compare the real world performance of these architectures is to benchmark their real world performance, so please do not consider this the final word on performance.

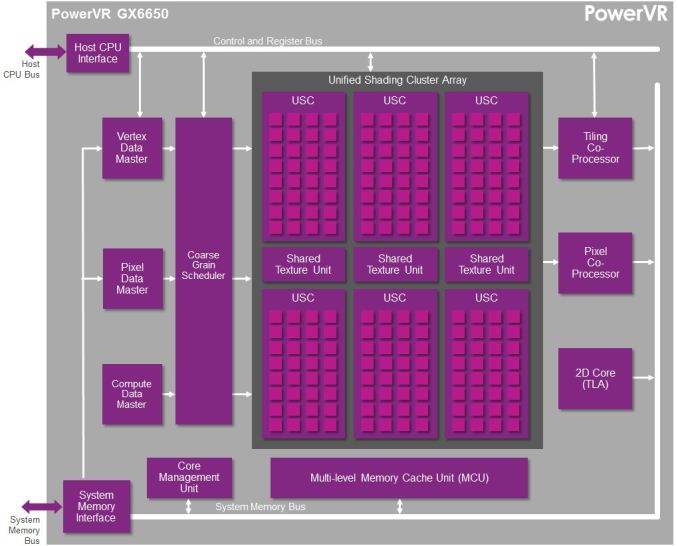

For this comparison we’ll be looking at NVIDIA’s Kepler based K1, AMD’s GCN based A4-1350, and Imagination’s Rogue based GX6650 and G6230. Because Rogue is offered in multiple configurations, it’s difficult to determine just how large a Rogue configuration would need to be to equal K1 or A4-1350 from a performance and size perspective, but given the anticipated integration time for Series 6XT, a 6 cluster configuration seems the most likely.

| GPU Specification Comparison | NVIDIA K1 | Imagination PVR GX6650 | Imagination PVR G6230 | AMD A4-1350 | NVIDIA GTX 650 |
|---|---|---|---|---|---|
| FP32 ALUs | 192 | 192 | 64 | 128 | 384 |
| FP32 FLOPs/Clock | 384 | 384 | 128 | 256 | 768 |
| Pixels/Clock (ROPs) | 4 | 12 | 4 | 4 | 16 |
| Texels/Clock | 8 | 12 | 4 | 8 | 32 |
| GFLOPS @ 300MHz | 115.2 GFLOPS | 115.2 GFLOPS | 38.4 GFLOPS | 76.8 GFLOPS | 230.4 GFLOPS |
| Architecture | Kepler | Rogue (6XT) | Rogue (6) | GCN 1.0 | Kepler |

Briefly, we can see that as far as theoretical shading performance is concerned, the GX6650 and K1 are neck-and-neck when clockspeeds are held equal. Both of them have the same ILP dependency, so both need to be able to pull off some FP32 co-issued instructions if they are to achieve their full 384 FLOP/cycle throughput. The A4-1350 on the other hand has no such limitation, which makes it easier to hit its 256 FLOP/cycle throughput, though it never gets the chance to go past it.
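The shading rows of the table follow from simple arithmetic, which can be sketched as below. This is a minimal illustration rather than anything from a vendor: the ALU counts come from the table, the factor of two assumes each FP32 ALU retires one multiply-add (2 FLOPs) per clock, which is consistent with the table's ALU and FLOP rows, and the function names are made up for this example.

```python
# ALU counts are copied from the comparison table.
FP32_ALUS = {
    "NVIDIA K1": 192,
    "Imagination PVR GX6650": 192,
    "Imagination PVR G6230": 64,
    "AMD A4-1350": 128,
    "NVIDIA GTX 650": 384,
}

# Assumption: one multiply-add (2 FLOPs) per ALU per clock.
FLOPS_PER_ALU_PER_CLOCK = 2


def flops_per_clock(alus: int) -> int:
    """Peak FP32 FLOPs per clock for a given ALU count."""
    return alus * FLOPS_PER_ALU_PER_CLOCK


def peak_gflops(alus: int, clock_mhz: float) -> float:
    """Peak FP32 GFLOPS at a given clock; theoretical only."""
    return flops_per_clock(alus) * clock_mhz / 1000.0


if __name__ == "__main__":
    for name, alus in FP32_ALUS.items():
        print(f"{name}: {flops_per_clock(alus)} FLOPs/clock, "
              f"{peak_gflops(alus, 300):.1f} GFLOPS @ 300MHz")
```

These are peak figures only. For the K1 and GX6650 they assume the co-issue opportunities described above are always available, which real shaders will not always provide.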

Meanwhile it was surprising to see that GX6650’s theoretical pixel throughput was so high. 12 pixels/clock (12 ROPs) is much higher than either K1 or A4-1350, and in fact is quite high for an SoC class product. Most designs use relatively few ROPs here for size and power reasons, and not all designs replicate the ROPs with the shader blocks. So having 12 ROPs here was unexpected. At the same time it remains to be seen how well real world efficiency tracks this, as ROPs are frequently memory bandwidth constrained, which makes such a large number of ROPs harder to feed.

Moving on to quickly compare texture throughput, again it’s surprising to see just how many texels GX6650 can push. TMUs regularly scale with shader core counts, so the fact that it’s three-fold what a single TMU design can do is not unexpected, but until now we had never realized just what that meant for overall texture throughput. 12 texels/clock is (relatively) a lot of texels for an SoC GPU. That said, this is also a memory bandwidth heavy operation, so it’s difficult to say how real world performance will track it.
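To put the per-clock pixel and texel figures in more familiar terms, the sketch below converts them to rates per second and to a deliberately naive bandwidth figure. The per-clock numbers are from the table and the 300MHz clock matches the table's GFLOPS row. The 4 bytes per sample and the "every sample touches external memory exactly once" model are assumptions made purely for illustration; caches, compression, and on-chip tile memory all change real traffic.

```python
CLOCK_MHZ = 300          # same clock the table uses for its GFLOPS row
BYTES_PER_SAMPLE = 4     # assumption: 32-bit color or texel data

# Per-clock rates copied from the comparison table.
PER_CLOCK = {
    "NVIDIA K1": {"pixels": 4, "texels": 8},
    "Imagination PVR GX6650": {"pixels": 12, "texels": 12},
    "Imagination PVR G6230": {"pixels": 4, "texels": 4},
    "AMD A4-1350": {"pixels": 4, "texels": 8},
    "NVIDIA GTX 650": {"pixels": 16, "texels": 32},
}


def rate_per_second(per_clock: int, clock_mhz: int = CLOCK_MHZ) -> int:
    """Peak samples (pixels or texels) per second at the given clock."""
    return per_clock * clock_mhz * 1_000_000


def naive_bandwidth_gb_s(per_clock: int, clock_mhz: int = CLOCK_MHZ,
                         bytes_per_sample: int = BYTES_PER_SAMPLE) -> float:
    """GB/s if every sample touched external memory exactly once (naive model)."""
    return rate_per_second(per_clock, clock_mhz) * bytes_per_sample / 1e9


if __name__ == "__main__":
    for name, rates in PER_CLOCK.items():
        for kind, per_clock in rates.items():
            print(f"{name}: {rate_per_second(per_clock) / 1e9:.1f} G{kind}/s, "
                  f"naive {naive_bandwidth_gb_s(per_clock):.1f} GB/s")
```

Under these assumptions, 12 pixels/clock at 300MHz works out to 3.6 Gpixels/s, which is the kind of rate that is hard to feed from a shared SoC memory interface.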

Finally, to throw in a true desktop comparison for the fun of it, we also put NVIDIA’s Kepler based GTX 650 in the chart. Clockspeeds aside, the best case scenario for even GX6650 is that it achieves half the shading throughput of GTX 650. The ROP throughput gap on the other hand is narrower at 12 versus 16 pixels/clock (but GTX 650 will easily have 2x the memory bandwidth), while the texture throughput gap is wider, at nearly 3x (12 versus 32 texels/clock). In practice it would be difficult to imagine the GX6650 being any closer than about 40% of the GTX 650’s performance, once again owing to the massive memory bandwidth difference between an SoC and a discrete GPU.
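The per-clock gaps between GX6650 and GTX 650 can be read straight off the table. A small sketch, using only the table's numbers and a helper name invented for this example:

```python
# Per-clock figures copied from the comparison table.
GX6650 = {"flops": 384, "pixels": 12, "texels": 12}
GTX650 = {"flops": 768, "pixels": 16, "texels": 32}


def relative_throughput(part: dict, reference: dict) -> dict:
    """Per-clock throughput of `part` as a fraction of `reference`."""
    return {key: part[key] / reference[key] for key in part}


ratios = relative_throughput(GX6650, GTX650)

if __name__ == "__main__":
    for key, ratio in ratios.items():
        print(f"{key}: {ratio:.3f} of GTX 650 per clock "
              f"(gap of {1 / ratio:.2f}x)")
```

These ratios hold clockspeed equal and ignore memory bandwidth entirely, so they describe only the theoretical per-clock picture.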

Final Words

Wrapping up this architectural overview of Imagination’s Rogue architecture, it’s exciting to finally see much of the underpinnings of an SoC GPU design. While we haven’t seen every facet of Rogue yet – and admittedly it’s unlikely we ever will – the information that we’ve received on Rogue so far has given us a much better perspective on how Imagination’s latest graphics architecture works, and for that matter how Series 6 and Series 6XT differ from one another.

Ultimately we still can’t do true apples-to-apples comparisons with these integrated GPUs (we can’t separate the CPU and memory controller from the GPU), but this information should be helpful for better understanding why certain products perform the way they do, and for determining what the stronger products might be in the long run. So it’s with some hope and a bit of luck that this might get the ball rolling with the other SoC GPU vendors, getting them to open up their doors a bit more so that we can see what’s inside their designs.

Coming back full circle to Imagination, we’re left with one of the big reasons why they’re opening up in the first place: core wars. Imagination is keen on not being seen as being left behind on core counts, and while we don’t expect the “core” terminology to go away any time soon, now that we have these low level Rogue architecture details, we can agree that Imagination does have a salient point as far as counting cores and ALUs is concerned.

For the purposes of FP32 operations, a Rogue USC is essentially equivalent to a 32 core design, with an ILP reliance similar to what we’re seeing out of NVIDIA right now, though perhaps greater than some other designs. Or, to use the comparison Imagination likes to make, a 6 USC design would be equivalent to a 192 core design. This says nothing of real world performance – without real world hardware it can’t, there are too many external variables – but it does give us an idea of how many clusters Imagination’s customers would need to achieve various degrees of theoretical performance, including what it would take to beat the competition.
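The USC-to-core equivalence lends itself to a quick back-of-the-envelope calculation. The sketch below takes the 32 cores per USC from the text; the 2 FLOPs per core per clock is an assumption (one multiply-add), consistent with the comparison table, and the function names are hypothetical.

```python
CORES_PER_USC = 32             # equivalence stated in the text
FLOPS_PER_CORE_PER_CLOCK = 2   # assumption: one multiply-add per clock


def equivalent_cores(usc_count: int) -> int:
    """FP32 'core' count a Rogue configuration is equivalent to."""
    return usc_count * CORES_PER_USC


def uscs_needed(target_flops_per_clock: int) -> int:
    """Smallest USC count whose peak FLOPs/clock meets the target."""
    per_usc = CORES_PER_USC * FLOPS_PER_CORE_PER_CLOCK
    return -(-target_flops_per_clock // per_usc)  # integer ceiling division


if __name__ == "__main__":
    print(equivalent_cores(6))   # 6 USC design
    print(uscs_needed(384))      # clusters to match 384 FLOPs/clock
    print(uscs_needed(768))      # clusters to match 768 FLOPs/clock
```

Working in FLOPs per clock rather than GFLOPS keeps the arithmetic in integers and leaves clockspeed, which varies by implementation, out of the comparison.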

95 Comments

Scali - Monday, February 24, 2014

"the alternative to DirectX is OpenGL. Is it more suited for TBDR architecture than "brute force" ones?"

In theory, D3D used to be more suited to TBDR than OpenGL, because it had explicit BeginScene() and EndScene() markers. But those were dropped after D3D9.

I can't really think of anything off the top of my head that would make one API more suited than the other these days. They're both very similar: just bind your textures/buffers/shaders, update the constants, and fire off your geometry.

"How is PowerVR going to fight against an architecture as flexible as nvidia ones that can also be used for CUDA computing and thus being adopted into markets (and for other tasks) PowerVR cannot with their current architecture?"

PowerVR supports OpenCL, so they too can do the GPGPU-game. It all depends on who delivers the best package in terms of features, performance, power usage, etc.

Jhwzz - Tuesday, February 25, 2014

>Again, as someone already asked, tile based rendering was used on the desktop but was soon abandoned as it could not give any real advantages over the raw power of other architectures that grew much faster than PowerVR could optimize their algorithms, making tile based rendering less and less profitable. What makes that scenario different from what we are witnessing in this period, where mobile resolutions are growing to be even bigger than desktop monitors and game complexity is going to increase with the arrival of these really powerful GPUs (K1 in primis)?

It is a common misconception that PowerVR's desktop parts were not competitive or had compatibility problems. The last card they produced, the Kyro II, was actually very competitive with the offerings from both NVidia and ATi. The claims of incompatibility were largely unfounded marketing FUD from competitors, with later drivers running the majority of content without problems. Further, they did not leave the market because they could not compete on performance; instead their partner at the time, STM, decided to pull out of the market for unstated reasons, although this was most likely due to them not being able to invest the amount of money they needed to in order to take the high ground.

Mobile is VERY different to desktop space. NV and ATi were able to brute force their way to the top as both power consumption and memory bandwidth had extremely wide envelopes; this is not the case in mobile space. In Kyro II PowerVR demonstrated that they were able to compete with considerably higher specification parts (for clock & memory BW) from both NV and ATi, with considerably lower memory BW and power requirements. Although NV and AMD have evolved, so have PowerVR, so there is no reason to assume that they don't still have advantages.

>It seems PowerVR is behaving a bit like 3DFx did at the time, till it died. They were using their advanced but old technology to the extreme, so they rendered at 16bit instead of 32, used 16bit Zbuffer instead of 24 and many more "tricks" that were forced to try to hide what was quite clear: 3DFX didn't have the right architecture to compete with new companies like nvidia and ATI that started their story with the right step and much more powerful architectures (TNT2 simply destroyed Voodoo3 under all points, and beware, I was an unfortunate Voodoo3 owner).

This simply makes no sense in the context of the market these cores are being targeted at. At the fundamental level, the primary API currently used in mobile is OGLES2.0, which does not mandate anything higher than FP16 within fragment shaders. This means that the vast majority of current mobile content only uses FP16 in fragment shaders. In these circumstances, do you think it makes sense to throw area at higher precision paths? Of course it doesn’t! Further, it’s not like the PowerVR architecture looks like a slouch at FP32.

At the end of the day the truth will only be seen in benchmarks on actual devices, not in marketing claims and FUD from various companies.

Jhwzz - Tuesday, February 25, 2014

BTW you do realise that NV run many of these benchmarks at 16 bit Z and 16 bit frame buffer, don't you? They do this because they become even less competitive when forced to use 32 bits. So who is actually using old "technology" to hide real deficiencies in their architectures?

MrSpadge - Saturday, March 1, 2014

If you can't see a difference due to using 16 bit somewhere along the rendering path, then it's actually the smart thing to do. It saves power, which can be better used elsewhere (higher clocks). Well, that was actually 3DFX's argument for why Glide with 16 bit + dithering was the smart choice back then. But "they've got the bigger bits" won (along with other advantages of the early nVidias).

MrPoletski - Sunday, March 9, 2014

It might be the smart thing to do, but when it's in a benchmark that's supposed to be at 32 bits then I call that cheating!

allanmac - Monday, February 24, 2014

If a new GPU architecture "deep dive" doesn't include the number of registers per multiprocessor then it's bordering on worthless.

Intel, AMD and NVIDIA all publish these numbers, so the other mobile GPU vendors should as well.

Please dig up these numbers since then we can begin to compare these next gen mobile GPUs.

I suspect ARM, ImgTech and QCOM simply won't tell you... but you might be able to find the answer through a series of OpenCL tests.

boostern - Monday, February 24, 2014

OpenCL tests on a GPU that isn't even in production? Before you say "test it on the iPhone 5S", there are no public OpenCL libraries available on iOS, as far as I know.

allanmac - Monday, February 24, 2014

The first option is to ask the vendor.

boostern - Monday, February 24, 2014

Yes, maybe :D

MrSpadge - Saturday, March 1, 2014

While this is surely important, the raw number of registers doesn't tell you that much either. In fact, it could be very misleading. How many registers you need depends first and foremost on the out of order window (in CPUs) or, here, the number of threads in flight, which is something they didn't tell us either. Different cache sizes and latencies would also determine how bad it is to run out of register space.