The Pixel Density Race and its Technical Merits

by Joshua Ho on February 8, 2014 4:25 AM EST - Posted in

- Smartphones

- Displays

- Mobile

- Retina

This issue has been simmering in the background ever since Android OEMs started releasing devices with display densities above the 300-400 pixels per inch (PPI) “retina” range, but recent events have sparked a broader discussion about the value of the PPI race among Android OEMs. The key points of contention center on the tradeoffs of increasing resolution, and on whether a higher PPI actually produces a perceivable improvement.

If there is any single number that people point to for resolution, it is the 1 arcminute value that Apple uses to define a “Retina Display”. This corresponds to around 300 PPI for a display held 10-12 inches from the eye, or about 60 pixels per degree (PPD). Pixels per degree accounts for both the viewing distance and the resolution of the display, so everything discussed here applies to any type of display, not just smartphones. While 60 PPD is a generally reasonable value to work with, the complexity of the human eye and of the brain’s image processing makes any such number rather nebulous. For example, human vision can judge whether two lines are aligned extremely well, with a resolution of around two arcseconds. This translates into an effective 1800 PPD. For reference, a 5” display with a 2560x1440 resolution viewed from 12 inches would only have 123 PPD. Further muddying the waters, the theoretical ideal resolution of the eye is somewhere around 0.4 arcminutes, or 150 PPD. Finally, the minimum separable acuity, the smallest separation at which two lines can be perceived as two distinct lines, is around 0.5 arcminutes under ideal laboratory conditions, or 120 PPD.

While these values seem to contradict each other in one way or another, the explanation is that the brain is responsible for interpreting the received image. Even when a difference in angular size is far below what the eye can unambiguously resolve, the brain can interpolate to accurately determine the position of the object in question. The brain’s constant processing of vision is illustrated most strikingly by using a flashlight to alter the shadows cast by the blood vessels lying on top of the retina. Such occlusions, along with various optical aberrations and other defects in the image formed on the retina, are processed away by the brain to present a clean image as the end result.
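The acuity figures above all reduce to one conversion: if the smallest resolvable detail subtends an angle θ, a display needs one pixel per θ, so PPD is just the number of arcminutes (or arcseconds) in a degree divided by θ. A minimal Python sketch of that arithmetic follows; the function names and labels are illustrative, not taken from any library or from the source.

```python
ARCMIN_PER_DEGREE = 60
ARCSEC_PER_DEGREE = 60 * 60


def ppd_from_arcmin(arcmin: float) -> float:
    """Pixels per degree needed so that one pixel subtends `arcmin` arcminutes."""
    return ARCMIN_PER_DEGREE / arcmin


def ppd_from_arcsec(arcsec: float) -> float:
    """Pixels per degree needed so that one pixel subtends `arcsec` arcseconds."""
    return ARCSEC_PER_DEGREE / arcsec


# The acuity figures quoted in the text, converted to PPD.
ACUITY_PPD = {
    "vernier (line alignment), 2 arcsec": ppd_from_arcsec(2),
    "theoretical ideal, 0.4 arcmin": ppd_from_arcmin(0.4),
    "minimum separable, 0.5 arcmin": ppd_from_arcmin(0.5),
    "Retina threshold, 1 arcmin": ppd_from_arcmin(1.0),
}

if __name__ == "__main__":
    for label, ppd in ACUITY_PPD.items():
        print(f"{label}: {ppd:.0f} PPD")
```

The spread between these numbers (60 to 1800 PPD) is exactly why a single "retina" threshold is hard to pin down.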

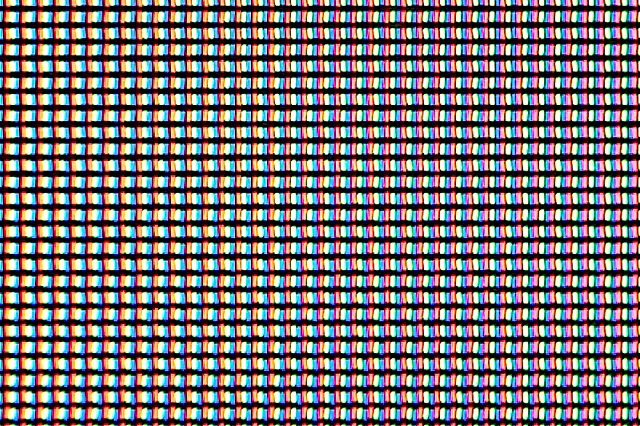

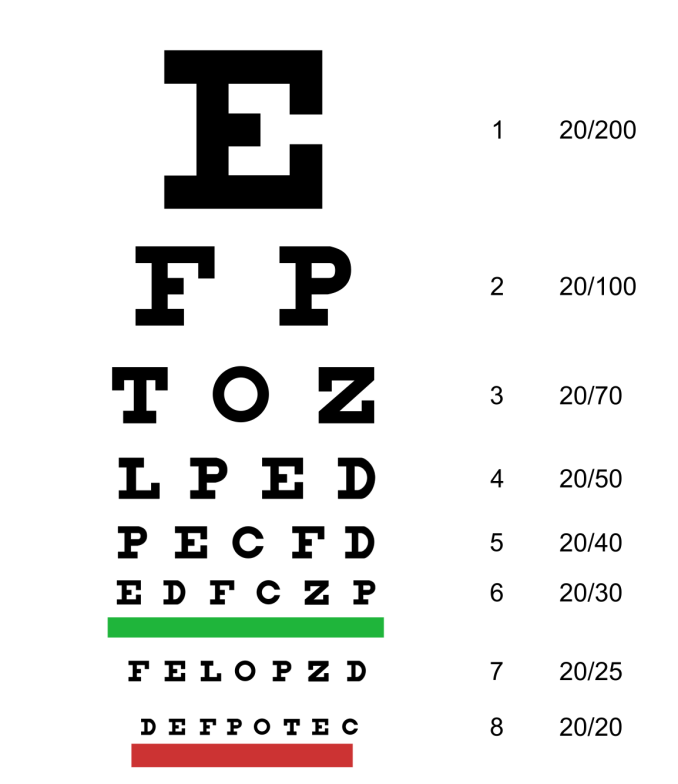

While all of these resolution values are achievable by human vision, in practice they are rarely reached. The Snellen eye test seen above, the well-known chart of high-contrast letters in progressively smaller sizes, gives a more realistic value of around 1 arcminute, or 60 PPD, for adults, and around 0.8 arcminutes, or 75 PPD, for children. It is also worth noting that these tests are conducted under ideal conditions: high-contrast targets in well-lit rooms.
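A Snellen score can be converted into the same units. Under the standard convention that the strokes of a 20/20 letter subtend 1 arcminute, the minimum angle of resolution (MAR) in arcminutes is the score's denominator divided by its numerator. The sketch below assumes that convention; the 20/16 score is used only to illustrate the ~0.8 arcminute figure and does not come from the source.

```python
def snellen_mar_arcmin(test_distance: float, letter_distance: float) -> float:
    """Minimum angle of resolution in arcminutes for a Snellen score such as 20/20.

    A 20/40 score means the smallest letters read were twice as large as the
    20/20 letters, so their detail subtends 2 arcminutes.
    """
    return letter_distance / test_distance


def snellen_to_ppd(test_distance: float, letter_distance: float) -> float:
    """Pixels per degree at which one pixel matches the viewer's MAR."""
    return 60 / snellen_mar_arcmin(test_distance, letter_distance)


if __name__ == "__main__":
    for score in [(20, 20), (20, 16)]:
        print(f"{score[0]}/{score[1]}: MAR {snellen_mar_arcmin(*score):.1f} arcmin, "
              f"{snellen_to_ppd(*score):.0f} PPD")
```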

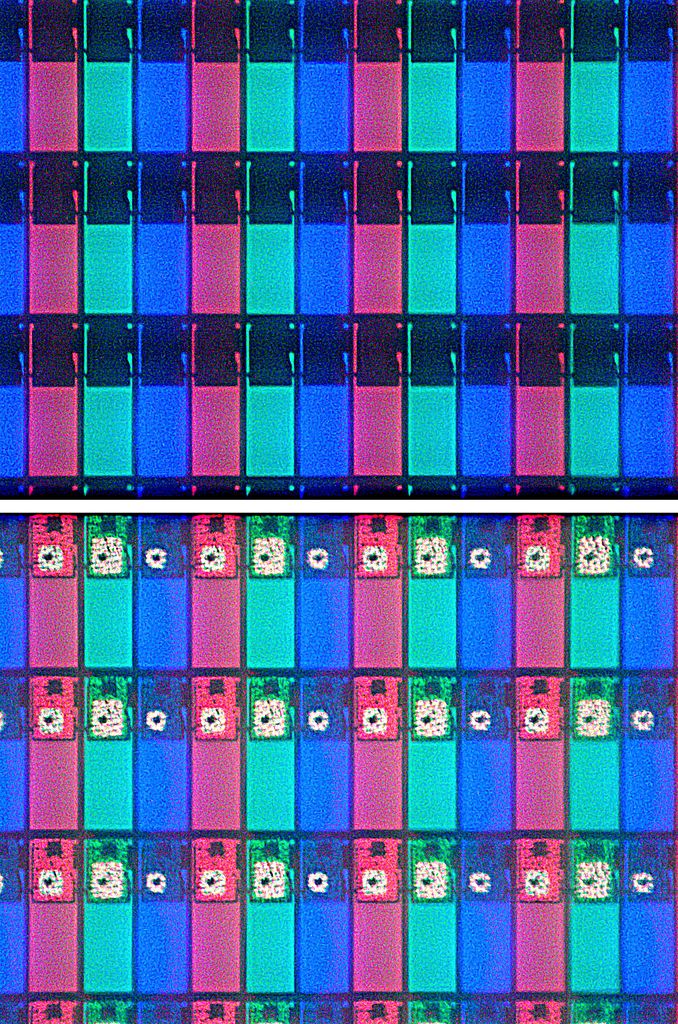

After going through these possible resolutions, the most reasonable upper bound for human vision is the 0.5 arcminute value. While there is a clear increase in detail going from ~300 PPI to ~400 PPI in mobile displays, it is highly unlikely that any display manufacturer could mass-produce a relatively large display with a resolution corresponding to 1800 PPD at 12 inches. However, the 0.5 arcminute value, at a distance of 12 inches from the eye, corresponds to a pixel density of around 600 PPI. Of course, there would be no debate if reaching an answer were that easy. Realistically, human vision seems to have a practical resolution of only around 0.8 to 1 arcminute. So while reaching 600 PPI would mean near-zero noticeable pixelation even in most edge cases, the returns diminish past the 1 arcminute point. For smartphones with displays around 4.7 to 5 inches diagonally, this effectively frames the argument as a choice among a few reasonable resolutions with densities between 300 and 600 PPI.

For both OLED and LCD displays, pushing to higher pixel densities costs more power for a given luminance. Going from around 330 PPI to 470 PPI on an IPS LCD increases display power draw by around 20%, which can be offset by more efficient SoCs, larger batteries, or lower RF subsystem power draw. Such increases can also be offset by improvements in the panel technology itself, as has consistently been the case with Samsung’s OLED development, but regardless of these improvements, power draw is still higher than that of an equivalent-technology display with a lower pixel density. In the case of LCDs, a stronger backlight must be used because at higher pixel densities the transistors around the liquid crystal take up a larger proportion of the display area. The same is true of OLED panels, except that the issue becomes that smaller areas of organic phosphor have to be driven at higher voltages to maintain the same luminance. An example of this can be seen below in the photo of an LCD display with its transistors, with the second showing a front-lit shot to illuminate the TFTs.
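Going the other way, the pixel density that matches a given acuity at a given distance follows from simple trigonometry: one pixel must span 2·d·tan(θ/2) inches at distance d. The Python sketch below (hypothetical helper names, square pixels assumed) reproduces the ~300 PPI figure for 1 arcminute at 10-12 inches and the ~600 PPI figure for 0.5 arcminutes at 12 inches, alongside the densities of common 5-inch panel resolutions.

```python
import math


def required_ppi(arcmin: float, distance_in: float) -> float:
    """PPI at which one pixel subtends `arcmin` arcminutes at `distance_in` inches."""
    theta_rad = math.radians(arcmin / 60)
    pixel_pitch_in = 2 * distance_in * math.tan(theta_rad / 2)
    return 1 / pixel_pitch_in


def panel_ppi(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Pixel density from resolution and diagonal size (square pixels assumed)."""
    return math.hypot(width_px, height_px) / diagonal_in


if __name__ == "__main__":
    for arcmin, distance in [(1.0, 10), (1.0, 12), (0.5, 12)]:
        print(f"{arcmin} arcmin at {distance} in: {required_ppi(arcmin, distance):.0f} PPI")
    for w, h in [(1280, 720), (1920, 1080), (2560, 1440)]:
        print(f"{w}x{h} at 5 in: {panel_ppi(w, h, 5.0):.0f} PPI")
```

Strictly, 0.5 arcminutes at 12 inches works out to roughly 573 PPI, which the text rounds to 600.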

Thus, increased resolution comes with multiple sets of tradeoffs. While getting closer to the 0.5 arcminute value means getting closer to nearly imperceptible pixels, there is a loss in power efficiency and, by the same token, a loss in peak luminance for a given level of power consumption, which implies reduced outdoor visibility if an OEM artificially clamps the maximum display brightness. The focus on resolution also means that the increased cost of producing higher resolution displays may be offset by compromises elsewhere, as it’s much harder to market lower reflectance, higher color accuracy, and other aspects of display performance that require a more nuanced understanding of the underlying technology. Higher resolution also means a greater processing load on the SoC; as a result, an insufficient GPU can greatly hurt UI fluidity, and a greater need to use the GPU for drawing operations can also reduce battery life.
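As a rough proxy for the extra processing load, the number of pixels the GPU must fill each second scales directly with resolution. This sketch assumes per-frame work is proportional to pixel count and ignores overdraw, compositing, and caching, so it is an approximation rather than a performance model.

```python
RESOLUTIONS = {
    "720p": (1280, 720),
    "1080p": (1920, 1080),
    "1440p": (2560, 1440),
}


def pixels_per_second(width: int, height: int, fps: int = 60) -> int:
    """Pixels that must be drawn per second to refresh the full screen at `fps`."""
    return width * height * fps


if __name__ == "__main__":
    base = pixels_per_second(*RESOLUTIONS["1080p"])
    for name, (w, h) in RESOLUTIONS.items():
        rate = pixels_per_second(w, h)
        print(f"{name}: {rate / 1e6:.1f} Mpixel/s at 60 fps ({rate / base:.2f}x 1080p)")
```

Under this simplification, 1440p asks the GPU for 16/9, or about 1.78x, the fill work of 1080p at the same frame rate.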

Of course, reaching 120 PPD may be entirely doable with little sacrifice in any other aspect of a device, but the closer OEMs get to that value, the less likely it is that anyone will be able to tell a higher pixel density display from a lower one, and diminishing returns definitely set in past the 60 PPD point. The real question is where between 60 and 120 PPD is the right place to stop. Current 1080p smartphones viewed from 12 inches are at the 90-100 PPD mark, and staying there could well be the right compromise.
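The 90-100 PPD figure for 1080p phones can be checked by combining panel density with viewing distance. The sketch below assumes a 12-inch viewing distance, square pixels, and one degree of visual angle centred on the line of sight; `panel_ppd` is a hypothetical helper, not from the source.

```python
import math


def panel_ppd(width_px: int, height_px: int, diagonal_in: float,
              distance_in: float = 12.0) -> float:
    """Pixels per degree for a panel viewed head-on from `distance_in` inches."""
    ppi = math.hypot(width_px, height_px) / diagonal_in
    # Width in inches covered by one degree of visual angle at this distance.
    inches_per_degree = 2 * distance_in * math.tan(math.radians(0.5))
    return ppi * inches_per_degree


if __name__ == "__main__":
    for w, h, diag in [(1920, 1080, 4.7), (1920, 1080, 5.0), (2560, 1440, 5.0)]:
        print(f"{w}x{h}, {diag} in: {panel_ppd(w, h, diag):.0f} PPD")
```

The same function also reproduces the 123 PPD figure quoted earlier for a 5-inch 2560x1440 panel.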

But all of this assumes that the display uses an RGB stripe, as seen above. Samsung instead uses various subpixel layouts to deal with the idiosyncrasies of organic phosphors, such as the uneven aging of the red, green and blue subpixels: blue ages the fastest, followed by green and then red. This is most obvious on well-used demo units, whose extensive runtime shows how dramatically the white point can shift when a display is used for the equivalent of a smartphone’s service lifetime. For an RGBG pixel layout, as seen below, a theoretical 5-inch display with a 2560x1440 resolution would give only 415.4 subpixels per inch (SPPI) for the red and blue subpixels; only the green subpixels would actually reach 587 SPPI. The higher number of green subpixels helps hide the lower resolution, thanks to the human eye’s greater sensitivity to green wavelengths, but the different subpixel pattern is still noticeable, and high-contrast edges are often where such issues are most visible. Therefore, for the red and blue subpixels to reach the 587 SPPI mark, a resolution of around 3616x2034 would be needed, which corresponds to a pixel density of roughly 830 PPI. Clearly, achieving the necessary SPPI with RGBG layouts at such resolutions would be untenable for any SoC expected to launch in 2014, and possibly even 2015.

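The RGBG figures follow from a simple model: every pixel has its own green subpixel, but red and blue each appear in only half of the pixels, so their linear density is the panel PPI divided by √2. The Python sketch below assumes that model (it is the one the 415.4 SPPI figure implies) and scales both axes by √2 to find the resolution red and blue would need; the helper names are hypothetical.

```python
import math


def panel_ppi(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Pixel density from resolution and diagonal size."""
    return math.hypot(width_px, height_px) / diagonal_in


def rgbg_red_blue_sppi(ppi: float) -> float:
    """Linear density of red (or blue) subpixels in an RGBG layout.

    Red and blue each occur in only half of the pixels, so their areal density
    is halved and their linear density drops by a factor of sqrt(2).
    """
    return ppi / math.sqrt(2)


if __name__ == "__main__":
    ppi = panel_ppi(2560, 1440, 5.0)
    print(f"2560x1440 at 5 in: {ppi:.1f} PPI, green SPPI {ppi:.1f}, "
          f"red/blue SPPI {rgbg_red_blue_sppi(ppi):.1f}")

    # Scale both axes by sqrt(2) so red/blue reach the original green density.
    scale = math.sqrt(2)
    w, h = round(2560 * scale), round(1440 * scale)
    print(f"needed: about {w}x{h}, {panel_ppi(w, h, 5.0):.0f} PPI")
```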

While pushing PPD as far as possible makes sense for applications where power is no object, the mobile space is driven by the need to balance performance and power efficiency, and since the display is the single largest consumer of battery in any smartphone, it is the most obvious place to look for battery life gains. 1440p will undoubtedly make sense in certain cases, but it is hard to justify such a high resolution within the confines of a phone, and that’s before 4K displays come into the equation. No one can really say that reaching 600 PPI is purely for the sake of marketing, but going any further is almost guaranteed to be for marketing purposes.

Source: Capability of the Human Visual System

99 Comments

bsim500 - Sunday, February 9, 2014

Great article. Above a certain practical level, it really is more of a marketing p*ssing contest than anything else. Most people want longer battery life & lower cost. The Apple vs Android "extreme PPI" rat race is a bit like arguing over whose HD audio is better: 96kHz vs 192kHz, in a world where almost everyone continues to fail 96kHz vs 44kHz CD tests (once you strip out all the placebo and emotional "superman" wishful thinking rife in the audiophile world, under controlled double-blind ABX testing conditions...).

If you require a perfectly dark room in order for people with better than 20/20 vision to see it, then it's mostly wasted. The human eye's pupil diameter varies from 2-7mm. At 2mm, you're diffraction limited to only about 1 arcminute (roughly 300ppi from 12" away), so I'm pretty sure that even if there were a marginal difference past 300ppi, most people still don't want to sacrifice 20% battery life so that 1% of people can benefit from it, and only while sitting in a photographic darkroom. :-)

r3loaded - Sunday, February 9, 2014

With HD audio, at least, the physics can categorically state that any sampling rate higher than 44.1/48kHz is completely useless to humans. Nyquist's theorem states you only need to sample at just over double the maximum frequency you need to encode (20kHz for human hearing), so sampling at 96kHz wastes bandwidth and storage space. Unless you happen to be a dog and can hear sounds up to 48kHz.

ZeDestructor - Sunday, February 9, 2014

"On the internet, nobody knows you're a dog..."

nathanddrews - Sunday, February 9, 2014

The 20-20K range has one flaw - two actually. Infrasonic and ultrasonic. Just because the brain can't identify the frequencies doesn't mean they don't affect your ear/brain/body or other frequencies. Studies have shown that some people - a small percentage - can tell the difference, so why limit ourselves to the average when we have the technology to record live audio with its entire range of frequencies, why not collect them all?

As to the PPI race, I care less about PPI from the perspective of what the human eye can see and more about how it will force GPU makers to step up their game.

FunBunny2 - Sunday, February 9, 2014

-- so why limit ourselves to the average when we have the technology to record live audio with its entire range of frequencies, why not collect them all?

Sounds like another 1%-er demanding that the other 99% pay for its toys. Bah.

nathanddrews - Monday, February 10, 2014

Technically, the 16/44 covers approximately 97% of average human hearing, so let's call it what it is. 3%-er. ;-)

buttgx - Sunday, February 9, 2014

No one can hear these ultrasonics, but their presence can certainly degrade the audio experience, in more ways than one. Zero benefit. Anyone arguing against this is simply wrong.

I recommend this article by the founder of Xiph.Org, the foundation behind FLAC.

http://xiph.org/~xiphmont/demo/neil-young.html

nathanddrews - Monday, February 10, 2014

Like most things in life, you have to be careful with absolutes. Most of the "HD music" hawked by websites is in fact not HD at all, but rather upsampled from dated masters. The fact is that in a recording workflow that starts and ends with 24/96 (or higher), there is a measurable difference compared to 16/44. To what extent this can be heard depends on the playback setup, the playback environment, and the human doing the hearing. Ultrasonics can be negative, but only in improper setups.

One problem with the testing of music tracks in the Meyer/Moran study is that the content used was sourced from older formats that lack the dynamic range of a modern high-resolution master. You can't take an old tape master from the 70s and get more out of it than what's there.

For your consideration, live orchestras can exceed 150dB. Many instruments (and noises) operate outside the average human hearing window: pipe organs can get down below 10Hz, and trumpets can get above 40kHz. These are things that can be recorded and played back if done appropriately. And no, 192kbps MP3s and Beats™ earbuds won't cut it.

While I know for a fact that I can't hear them, I sure as hell can FEEL the bass under 20Hz in movies. War of the Worlds, with freqs down to 5Hz (my theater room in its current setup is only good down to 12Hz), always serves as a good method for loosening one's bowels. LOL

fokka - Sunday, February 9, 2014

"As to the PPI race, I care less about PPI from the perspective of what the human eye can see and more about how it will force GPU makers to step up their game."

This logic is somewhat backwards. So we have high-res displays and framerates aren't as good as they could be. So GPU makers "step up their game" and implement more powerful, but at the same time more power-hungry, graphics solutions. So now we get the same framerates we would have gotten if we had just stuck with slightly lower-res displays, with the added benefit of a hand warmer built into our smartphones. Mhm.

nathanddrews - Monday, February 10, 2014

You know as well as I do that demand drives innovation. Consumers want devices that last all day (low power), look great (high DPI), and operate smoothly (high FPS). They aren't going to get it until SoC/GPU makers release better GPUs. The display tech has been here for a while; it's time to play catch-up.