AMD Kaveri Review: A8-7600 and A10-7850K Tested

by Ian Cutress & Rahul Garg on January 14, 2014 8:00 AM EST

The GPU

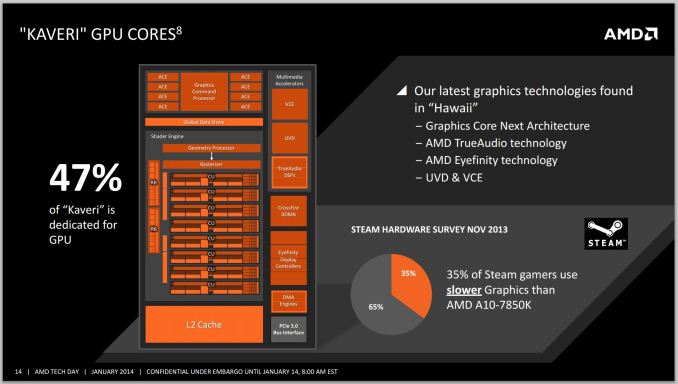

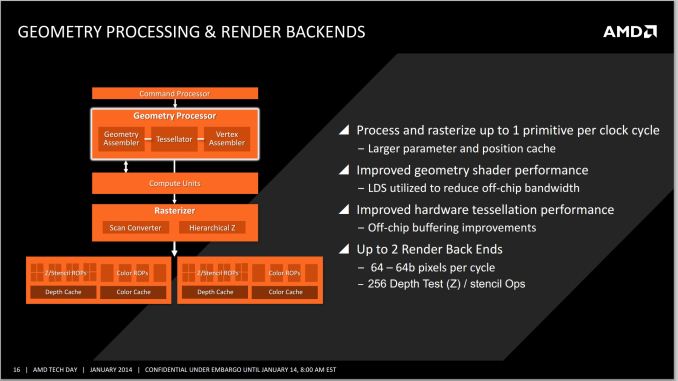

AMD's move from VLIW4 to the newer GCN architecture makes a lot of sense. Rather than being behind the curve, Kaveri now shares the same GPU architecture as the Hawaii based GCN parts, specifically the GCN 1.1 based R9 290X and 260X from AMD's discrete GPU lineup. By synchronizing the architecture of their APUs and discrete GPUs, AMD is finally in a position where any performance gains or optimizations made for their discrete GPUs will feed back into their APUs, meaning Kaveri will get the same boost. We have already discussed TrueAudio and the UVD/VCE enhancements, and the other major feature to come to the front is Mantle.

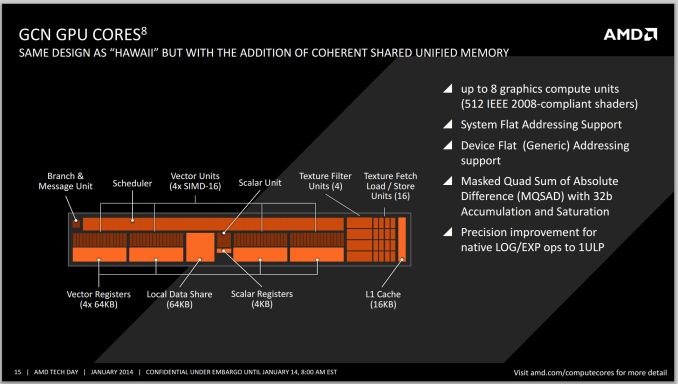

The difference between the Kaveri implementation of GCN and Hawaii, aside from the association with the CPU in silicon, is the addition of coherent shared unified memory, as Rahul discussed on the previous page.

AMD makes some rather interesting claims when it comes to the gaming market GPU performance – as shown in the slide above, ‘approximately 1/3 of all Steam gamers use slower graphics than the A10-7850K’. Given that this SKU is 512 SPs, it makes me wonder just how many gamers are actually using laptops or netbook/notebook graphics. A quick look at the Steam survey shows the top choices for graphics are mainly integrated solutions from Intel, followed by midrange discrete cards from NVIDIA. There are a fair number of integrated graphics solutions, coming from either CPUs with integrated graphics or laptop gaming, e.g. ‘Mobility Radeon HD4200’. With the Kaveri APU, AMD are clearly trying to jump over all of those, and with the unification of architectures, the updates from here on out will benefit both sides of the equation.

A small bit more about the GPU architecture:

Ryan covered the GCN Hawaii segment of the architecture in his R9 290X review, such as the IEEE 754-2008 compliance, texture fetch units, registers and precision improvements, so I will not dwell on them here. The GCN 1.1 implementations on discrete graphics cards will still rule the roost in terms of sheer compute power: the TDP scaling of APUs will never reach the lofty heights of full blown discrete graphics unless there is a significant shift in the way these APUs are developed, meaning that features such as HSA, hUMA and hQ still have a way to go to be the dominant force. The effect of low copying overhead on the APU should be a big break for graphics computing, especially gaming and texture manipulation that requires CPU callbacks.

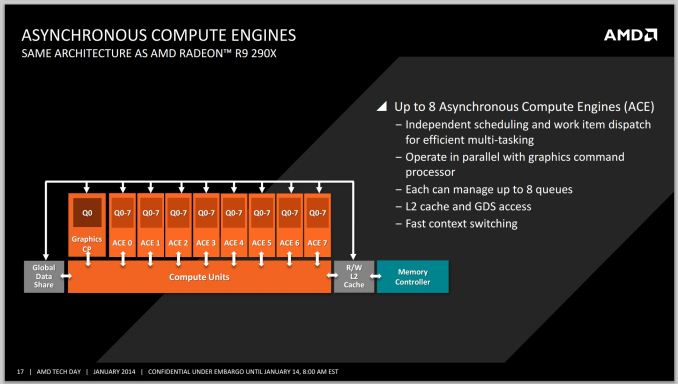

The added benefit for gamers as well is that each of the GCN 1.1 compute units is asynchronous and can implement independent scheduling of different work. Essentially the high end A10-7850K SKU, with its eight compute units, acts as eight mini-GPU blocks for work to be carried out on.
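To put the compute unit count in perspective, here is a quick back-of-the-envelope sketch. The 512 SPs and eight compute units come from the figures quoted in this article; the 720 MHz GPU clock and the two FLOPs per SP per clock (one fused multiply-add) are assumptions brought in for illustration, so treat the result as a theoretical peak and not a measured number.

```python
# Figures quoted in the article for the A10-7850K.
COMPUTE_UNITS = 8
STREAM_PROCESSORS = 512

# Assumptions for illustration only (not stated in this section).
GPU_CLOCK_MHZ = 720          # assumed GPU clock
FLOPS_PER_SP_PER_CLOCK = 2   # one fused multiply-add counted as two FLOPs


def sps_per_cu(total_sps: int, compute_units: int) -> int:
    """Stream processors in each compute unit."""
    return total_sps // compute_units


def peak_gflops(total_sps: int, clock_mhz: float,
                flops_per_sp_per_clock: int = FLOPS_PER_SP_PER_CLOCK) -> float:
    """Theoretical single precision peak in GFLOPS."""
    return total_sps * flops_per_sp_per_clock * clock_mhz / 1000.0


if __name__ == "__main__":
    print("SPs per compute unit:", sps_per_cu(STREAM_PROCESSORS, COMPUTE_UNITS))
    print(f"Peak throughput: {peak_gflops(STREAM_PROCESSORS, GPU_CLOCK_MHZ):.2f} GFLOPS")
```

Under those assumptions each of the eight "mini-GPU" blocks holds 64 SPs, and the whole array tops out at roughly 737 GFLOPS on paper.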

Despite AMD's improvements to their GPU compute frontend, they are still ultimately bound by the limited amount of memory bandwidth offered by dual-channel DDR3. Consequently there is still scope to increase performance by increasing memory bandwidth – I would not be surprised if AMD started looking at some sort of intermediary L3 or eDRAM to increase the capabilities here.
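A rough calculation shows how tight that bandwidth budget is. The sketch below assumes two 64-bit channels and uses DDR3-1600 and DDR3-2133 purely as example speeds; these are illustrative choices, and real-world throughput will land below the theoretical peaks it prints.

```python
def peak_bandwidth_gbs(channels: int, bus_width_bits: int,
                       transfers_mt_s: float) -> float:
    """Theoretical peak memory bandwidth in GB/s (1 GB = 10**9 bytes).

    channels        -- number of independent memory channels
    bus_width_bits  -- width of each channel in bits (64 for a DDR3 DIMM)
    transfers_mt_s  -- transfer rate in mega-transfers per second
    """
    bytes_per_transfer = bus_width_bits / 8
    return channels * bytes_per_transfer * transfers_mt_s / 1000.0


if __name__ == "__main__":
    # Example memory speeds, chosen for illustration only.
    for speed in (1600, 2133):
        bw = peak_bandwidth_gbs(channels=2, bus_width_bits=64,
                                transfers_mt_s=speed)
        print(f"Dual-channel DDR3-{speed}: {bw:.1f} GB/s peak")
```

That works out to about 25.6 GB/s and 34.1 GB/s respectively, and that pool is shared between the CPU cores and all eight compute units.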

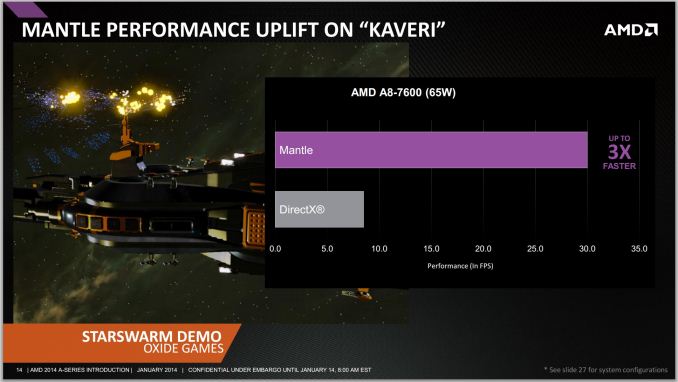

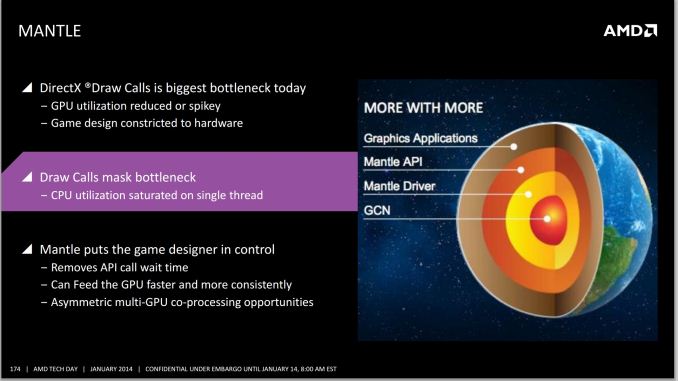

Details on Mantle are Few and Far Between

AMD’s big thing with GCN is meant to be Mantle, its low level API for game engine designers, intended to improve GPU performance and reduce the at-times heavy CPU overhead in submitting GPU draw calls. We're effectively talking about scenarios bound by single threaded performance, an area where AMD can definitely use the help. Although I fully expect AMD to eventually address its single threaded performance deficit vs. Intel, Mantle adoption could help Kaveri tremendously. The downside, obviously, is that Mantle's adoption at this point is limited at best.

Despite the release of Mantle being held back by the delay in the release of the Mantle patch for Battlefield 4 (Frostbite 3 engine), AMD was happy to claim a 2x boost in an API call limited scenario benchmark and 45% better frame rates with pre-release versions of Battlefield 4. We were told this number may rise by the time it reaches a public release.
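To see why an "API call limited" benchmark can show a 2x gain while a typical game shows far less, consider a toy model in which each frame takes as long as the slower of the CPU submission work and the GPU rendering work. This is a deliberately simplified sketch and not how Mantle or any driver actually schedules work; the draw call counts, per-call costs and GPU times below are all hypothetical numbers.

```python
def frame_time_ms(draw_calls: int, cpu_us_per_call: float, gpu_ms: float) -> float:
    """Frame time when CPU submission and GPU rendering overlap perfectly.

    The frame is limited by whichever side takes longer.
    """
    cpu_ms = draw_calls * cpu_us_per_call / 1000.0
    return max(cpu_ms, gpu_ms)


def fps(draw_calls: int, cpu_us_per_call: float, gpu_ms: float) -> float:
    """Frames per second for the toy model above."""
    return 1000.0 / frame_time_ms(draw_calls, cpu_us_per_call, gpu_ms)


if __name__ == "__main__":
    # Hypothetical scenarios: (label, draw calls per frame, GPU time in ms).
    scenarios = [("draw call bound", 10_000, 15.0),
                 ("GPU bound", 2_000, 15.0)]
    for label, calls, gpu_ms in scenarios:
        before = fps(calls, cpu_us_per_call=4.0, gpu_ms=gpu_ms)
        after = fps(calls, cpu_us_per_call=2.0, gpu_ms=gpu_ms)  # halved overhead
        print(f"{label}: {before:.1f} fps -> {after:.1f} fps")
```

In this model, halving the per-call CPU cost doubles the frame rate only when the CPU side is the bottleneck; once the GPU is the limit, the same saving changes nothing, which is why the real-world benefit depends heavily on the game and the CPU it is paired with.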

Unfortunately we still don't have any further details on when Mantle will be deployed for end users, or what effect it will have. Since Battlefield 4 is intended to be the launch vehicle for Mantle - being by far the highest profile game of the initial titles that will support it - AMD is essentially in a holding pattern waiting on EA/DICE to hammer out Battlefield 4's issues and then get the Mantle patch out. AMD's best estimate is currently this month, but that's something that clearly can't be set in stone. Hopefully we'll be taking an in-depth look at real-world Mantle performance on Kaveri and other GCN based products in the near future.

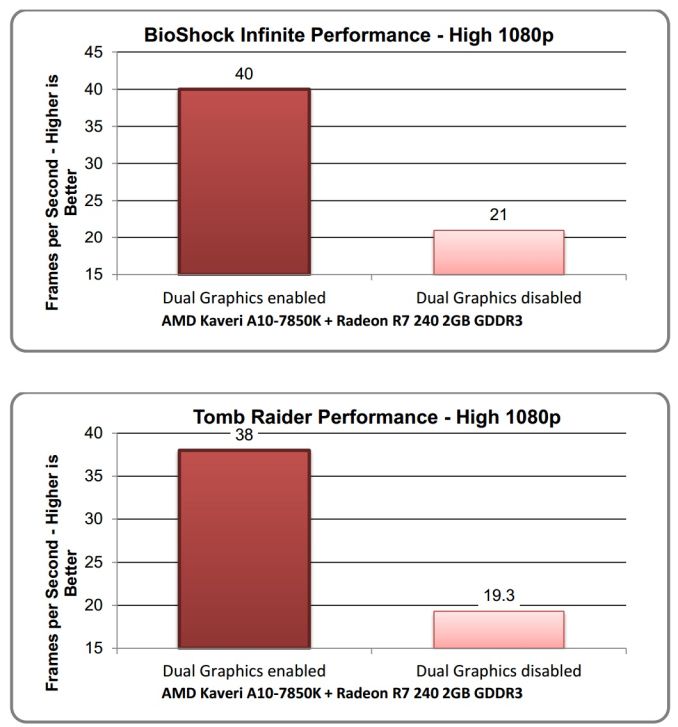

Dual Graphics

AMD has been coy regarding Dual Graphics, especially when frame pacing gets plunged into the mix. I am struggling to recall whether dual graphics, the pairing of the APU with a small discrete GPU for better performance, made an appearance at any point during their media presentations. During the UK presentations, I specifically asked about this and got little response except for ‘AMD is working to provide these solutions’. I pointed out that it would be beneficial if AMD gave an explicit list of paired graphics solutions to help users when building systems, which is what I would like to see anyway.

AMD did address the concept of Dual Graphics in their press deck. In their limited testing scenario, they paired the A10-7850K (which has R7 graphics) with the R7 240 2GB GDDR3. In fact their suggestion is that any R7 based APU can be paired with any G/DDR3 based R7 GPU. Another disclaimer is that AMD recommends testing dual graphics solutions with their 13.350 driver build, which is due out in February, whereas for today's review we were sent their 13.300 beta 14 and RC2 builds (which at this time have yet to be assigned an official Catalyst version number).

The following image shows the results as presented in AMD’s slide deck. We have not verified these results in any way; they are included here only as a reference from AMD.

It's worth noting that while AMD's performance with dual graphics thus far has been inconsistent, we do have some hope that it will improve with Kaveri if AMD is serious about continuing to support it. With Trinity/Richland AMD's iGPU was in an odd place, being based on an architecture (VLIW4) that wasn't used in the cards it was paired with (VLIW5). Never mind the fact that both were a generation behind GCN, where the bulk of AMD's focus was. But with Kaveri and AMD's discrete GPUs now both based on GCN, and with AMD having significantly improved their frame pacing situation in the last year, dual graphics is in a better place as an entry level solution to improving gaming performance. Though like Crossfire on the high-end, there are inevitably going to be limits to what AMD can do in a multi-GPU setup versus a single, more powerful GPU.

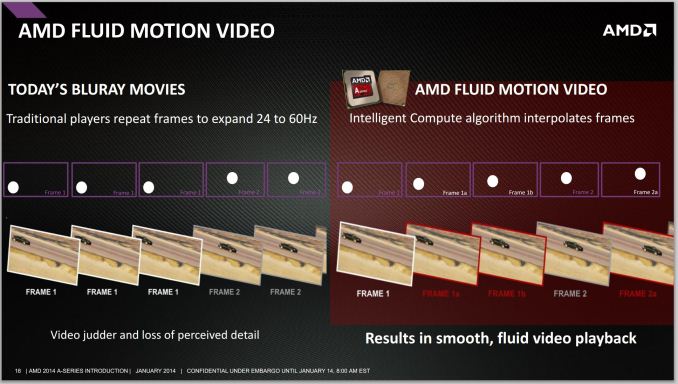

AMD Fluid Motion Video

Another aspect that AMD did not expand on much is their Fluid Motion Video technology on the A10-7850K. This is essentially using frame interpolation (from 24 Hz to 50 Hz / 60 Hz) to ensure a smoother experience when watching video. AMD’s explanation of the feature, especially to present the concept to our reader base, is minimal at best: a single page offering the following:

380 Comments


retrospooty - Tuesday, January 14, 2014 - link

"a low end cpu like the athlon X4 with a HD7750 will be considerably faster than any APU. So in this regard, I disagree with the conclusions that for low end gaming kaveri is the best solution."

I get your point, but it's not really a review issue, it's a product issue. AMD certainly can't compete in the CPU arena. They are good enough, but nowhere near Intel 2 generations ago (Sandy Bridge from 2011). They have a better integrated GPU, so in that sense it's the best integrated GPU, but as you mentioned, if you are into gaming, you can still get better performance on a budget by getting a budget add in card, so why bother with Kaveri?

Homeles - Tuesday, January 14, 2014 - link

"I get your point, but its not really a review issue , its a product issue."

Well, the point of a review is to highlight whether or not a product is worth purchasing.

mikato - Wednesday, January 15, 2014 - link

I agree. He should have made analysis from the viewpoint of different computer purchasers. Just one paragraph would have worked, to fill in the blanks.. something like these -

1. the gamer who will buy a pricier discrete GPU

2. the HTPC builder

3. the light gamer + office productivity home user

4. the purely office productivity type work person

just4U - Tuesday, January 14, 2014 - link

I can understand why he didn't use a 7750/70 with GDDR5 ... all sub $70 video cards I've seen come with ddr3. You're bucking up by spending that additional 30-60 bucks (sales not considered)

Computer Bottleneck - Tuesday, January 14, 2014 - link

The R7 240 GDDR5 comes in at $49.99 AR---> http://www.newegg.com/Product/Product.aspx?Item=N8...

So cheap Video cards can have GDDR5 at a low price point.

just4U - Tuesday, January 14, 2014 - link

That's a sale though.. it's a $90 card.. I mean sure if it becomes the new norm.. but that hasn't been the case for the past couple of years.

ImSpartacus - Thursday, January 16, 2014 - link

Yeah, if you get aggressive with sales, you can get $70 7790s. That's a lot of GPU for not a lot of money.

yankeeDDL - Tuesday, January 14, 2014 - link

Do you think that once HSA is supported in SW we can see some of the CPU gap reduced?

I'd imagine that *if* some of the GPU power can be used to help on FP type of calculation, the boost could be noticeable. Thoughts?

thomascheng - Tuesday, January 14, 2014 - link

Yes, that is probably why the CPU floating point calculation isn't as strong, but we won't see that until developers use OpenCL and HSA. Most likely the big selling point in the immediate future (3 to 6 months) will be Mantle since it is already being implemented in games. HSA and OpenCL 2.0 are just starting to come out, so we will probably see more news on that 6 months from now with partial support in some applications and full support after a year. If the APUs in the Playstation 4 and Xbox One are also HSA supported, we will see more games make use of it before general desktop applications.

yankeeDDL - Tuesday, January 14, 2014 - link

Agreed. I do hope that the gaming consoles pave the way for more broad adoption of these new techniques. After all, gaming has been pushing most of the innovation for quite some time now.

CPU improvement has been rather uneventful: I still use a PC with an Athlon II X2 @ 2.8GHz and with a decent graphic card it is actually plenty good for most of the work. That's nearly a 5 year old CPU and I don't think there's a 2X improvement even going to a core i3. In any case, there have to be solutions to improve IPC that go beyond some circuit optimization, and HSA seems promising. We'll all have to gain if it happens: it would be nice to have again some competition on the CPU side.