NVIDIA Tegra K1 Preview & Architecture Analysis

by Brian Klug & Anand Lal Shimpi on January 6, 2014 6:31 AM EST

Tegra K1 ISP & Video

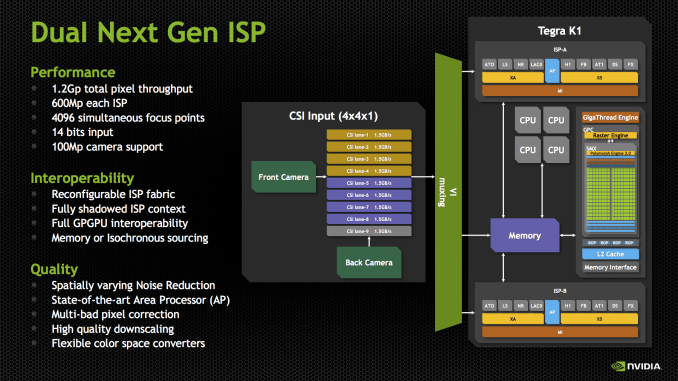

NVIDIA’s Tegra K1 SoC also makes some dramatic improvements on the ISP side. We saw SoCs start arriving with two ISPs sometime in 2013, which allowed OEMs to deliver a host of new imaging experiences, like shot in shot video and simultaneous use of both front and rear cameras. With Tegra K1, NVIDIA is not only moving to two ISPs, but it’s also making ISP more of a first class citizen.

For those not familiar, the ISP (Image Signal Processor) handles the imaging pipeline for still photos and video, performing tasks like Bayer to RGB conversion (demosaicing), 3A (Autofocus, Auto Exposure, Auto White Balance), noise reduction, lens correction, and so on. Although NVIDIA has always included an ISP onboard, I couldn’t shake the feeling that still imaging performance could’ve been better, especially in the few cases that allowed direct comparison (HTC One X). With Tegra K1, there’s more die area dedicated to ISP than in the past, and there are two of them to support the kind of dual camera applications that have quickly become popular.

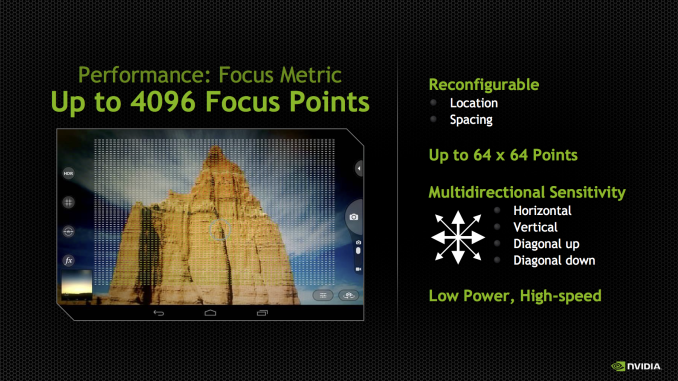

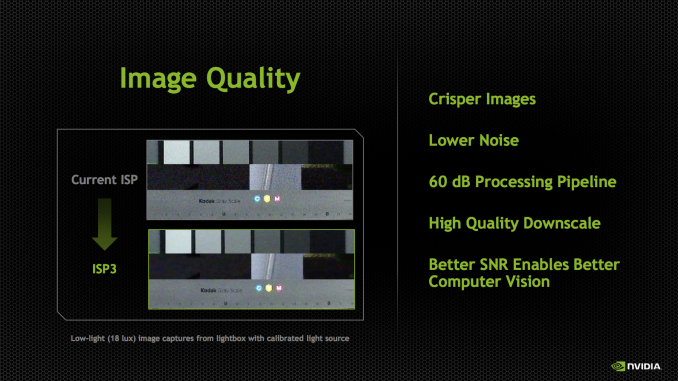

Tegra K1 includes the third generation of NVIDIA’s ISP, capable of processing 600 MP/s on each ISP with 14-bit input, and support for up to 100 MP cameras. There are two of them, so NVIDIA quotes the total pixel throughput as up to 1.2 GP/s. This is dramatically increased from Tegra 4, which supported up to 400 MP/s at 10 bits per pixel. In addition, the K1’s ISP now supports up to 4096 focus points, a 64x64 array, for its autofocus routine. The ISP also has better noise reduction and local tone mapping, a feature we’ve also seen become popular for combining parts of images and recovering some of the dynamic range lost with ever shrinking pixel sizes.
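As a quick sanity check on those figures, the arithmetic can be sketched in a few lines of Python. The numbers come straight from the paragraph above. The variable names are ours, and the comparison assumes Tegra 4's 400 MP/s is its total throughput rather than a per-ISP figure.

```python
# Figures quoted in the text; the names are illustrative, not NVIDIA's.
ISP_COUNT = 2
PER_ISP_MP_PER_S = 600      # megapixels per second, per ISP
TEGRA4_MP_PER_S = 400       # assumed to be Tegra 4's total throughput
FOCUS_GRID = (64, 64)       # autofocus point array

total_mp_per_s = ISP_COUNT * PER_ISP_MP_PER_S
total_gp_per_s = total_mp_per_s / 1000
speedup_vs_tegra4 = total_mp_per_s / TEGRA4_MP_PER_S
focus_points = FOCUS_GRID[0] * FOCUS_GRID[1]

print(f"Total throughput: {total_mp_per_s} MP/s ({total_gp_per_s} GP/s)")
print(f"Versus Tegra 4: {speedup_vs_tegra4:.1f}x")
print(f"Focus points: {focus_points}")
```

Under that assumption, the combined pixel rate works out to three times Tegra 4's, before accounting for the wider 14-bit input.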

Tegra K1 retains compatibility with the Chimera 1.0 features that we just saw in the Tegra Note 7, like object tracking, always-on HDR, slow motion capture, and full resolution burst, and adds more. NVIDIA has kept the Chimera brand for the K1 SoC, calling it Chimera 2.0, and envisions this architecture enabling things like better temporal pixel binning (combining 8 exposures from the CMOS to drive noise down further), faster panorama, video stabilization, and even better live preview with effects applied. At a high level, Chimera seems to be the same: kernels that run either on the CPU or on the GPU (ostensibly in CUDA this time), before or after the ISP, and in a variety of image spaces (Bayer or RGB depending).
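To illustrate why combining several exposures drives noise down, here is a minimal Python sketch that averages 8 simulated frames. It is not NVIDIA's algorithm: plain per-pixel averaging stands in for temporal binning, the noise is assumed to be independent and Gaussian, and the function names, signal level and noise level are all hypothetical.

```python
import random
import statistics

def capture_frame(true_signal, noise_sigma, rng):
    """Simulate one exposure: each pixel is the true value plus Gaussian noise."""
    return [p + rng.gauss(0.0, noise_sigma) for p in true_signal]

def temporal_average(frames):
    """Average corresponding pixels across frames (a stand-in for temporal binning)."""
    n = len(frames)
    return [sum(pixels) / n for pixels in zip(*frames)]

def noise_std(frame, true_signal):
    """Standard deviation of the per-pixel error against the known true signal."""
    return statistics.pstdev([f - t for f, t in zip(frame, true_signal)])

rng = random.Random(0)                  # fixed seed so runs are repeatable
true_signal = [100.0] * 10_000          # flat grey patch (hypothetical scene)
frames = [capture_frame(true_signal, 10.0, rng) for _ in range(8)]

single = noise_std(frames[0], true_signal)
combined = noise_std(temporal_average(frames), true_signal)
print(f"single exposure noise: {single:.2f}")
print(f"8-exposure average noise: {combined:.2f}")
```

With independent noise, averaging N frames should reduce the noise standard deviation by roughly the square root of N, so about 2.8x for 8 exposures. A real pipeline would also have to align frames and handle motion, which this sketch ignores.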

On the video side, Tegra K1 continues to support 2160p30 (4K or UHD video at 30FPS) encode and decode. Broken down another way, that means H.264 High Profile Level 5.1 decode and 4K H.264 High Profile Level 4.2 encode. The fact that there’s a Kepler next door made me suspect that NVENC was used for most of these tasks, but it turns out that NVIDIA still has discrete blocks for video encode of H.264, VP8, VC1, and others. These are the same video encode and decode blocks that were used in Tegra 4, but with some further optimizations for power and efficiency. The Tegra K1 platform includes support for H.265 video decode as well, but this isn’t accelerated fully in hardware; rather, the decode is split across NVENC and CPU.

NVIDIA showed off a K1 reference board doing 4Kp30 H.264 decode on an attached display, and I didn’t notice any dropped frames. Of course that’s a given considering we saw the same thing on Tegra 4, but it’s still worth noting that the SoC is capable of driving 4K/UHD displays over eDP 1.4, LVDS and HDMI 1.4b.

The full GPIO breakdown for Tegra K1 includes essentially all the requisite connectivity you’d expect for a mobile SoC. For USB there are three USB 2.0 ports and two USB 3.0 ports. For storage, Tegra K1 supports eMMC up to version 4.5.1, and there’s PCIe x4 which can be configured

88 Comments


eddman - Monday, January 6, 2014 - link

I'm wondering the same thing.

Xbox and PS are gaming machines, running specialized OSes, and programmers can utilize low-level APIs and extract as much performance as possible.

Tegra K1 might be powerful, but it'll still be running general purpose OSes like android and windows RT.

Is there any way to know the performance gain by going low-level vs. high-level APIs for a video game? How much it really is? 5%? 15%? 40%?!!

Krysto - Monday, January 6, 2014 - link

Xbox360 and PS3 support DirectX9 and OpenGL ES 2.0+extensions. Many developers can and have made games with those APIs. Not all games on the consoles are "bare metal". So the "overall" difference in gaming, is probably not going to be very different.

The real "problem" is that, those games will need to come to devices that have 1080p or even 2.5k resolutions, which will cut the graphics performance of the games by 2-4x, compared to Xbox/PS3. This is why I hate the OEMs for being so dumb and pushing resolutions even further on mobile.

It's a waste of component money (could be used for different stuff instead), of battery life, and also of GPU resources.

nicolapeluchetti - Monday, January 6, 2014 - link

I guess really good games use low levels API, i mean GTA V looks amazing and the specs of the X-Box 360 are what they are.

I agree with you that resolution will be a problem, but actually i really like the added resolution in everyday use. i recently switched from a note 2 to a nexus 5 and the extra resolution is fantastic.

They will probably have to upscale things, render at 720p and render at 1080p

Krysto - Tuesday, January 7, 2014 - link

AMD said Mantle is pretty much bare-metal console API. And they said at their conference at CES that Battlefield 4 with that is 50 percent faster. So the difference is not huge, but significant.

By far the biggest impact will be made by the resolution of the device. While games on Xbox 360 run at 720p, most devices with Tegra K1 will probably have at least a 1080p resolution, which is 2.25 times as many pixels, so it cuts the performance by more than half (or the graphics quality).

TheJian - Sunday, January 12, 2014 - link

Link please, and at what point in the vid do they say it (because some of those vids are 1hr+ for conferences)? I have seen only ONE claim and by a single dev who said you might get 20% if lucky. It is telling that we have NO benchmarks yet.

But I'm more than happy to read about someone using Mantle actually saying they expect 45-50% IN GAME over a whole benchmark (not some specific operation that might only be used once). But I don't expect it to go over 20%.

Which makes sense given AMD shot so low with their comment of "we wouldn't do it for 5%". If it was easy to get even 40% wouldn't you say "we wouldn't do it for 25%"? Reality is they have to spend to get a dev to do this at all, because they gain NOTHING financially for using Mantle unless AMD is paying them.

I'll be shocked to see BF4 over 25% faster than with it off (I only say 25% for THIS case because this is their best case I'm assuming, due to AMD funding it big time as a launch vehicle). Really I might be shocked at 20% but you gave me such a wide margin to be right saying 50%. They may not even get 20%.

Why would ANY dev do FREE work to help AMD, and when done be able to charge ZERO over the cost of the game for everyone else that doesn't have Mantle? It would be easier to justify its use if devs could charge Mantle users say $15 extra per game. But that just won't work here. So you're stuck with AMD saying "please dev, I know its more work and you won't ever make a dime from it, but it would be REALLY nice for us if you did this work free"...Or "Hi, my name is AMD, here's $8 million, please use Mantle". Only the 2nd option works at all, and even then you get Mantle being back burner the second the game needs to be fixed for the rest of us (BF4 for instance, all stuff on back burner until BF4 is fixed for regular users). This story is no different than PhysX etc.

nicolapeluchetti - Tuesday, January 7, 2014 - link

Mantle is said to have a 45% performance bonus compared to DirectX on Battlefield. Those are the rumours.

OreoCookie - Monday, January 6, 2014 - link

It's great to see that finally the SoC makers are being serious about GPU compute, now it's up to software developers to take advantage of all that compute horsepower. Given Apple's focus on GPU performance in the past, I'm curious to see what their A8 looks like and how it stacks up against Tegra K1 (in particular the Denver version).

timchen - Monday, January 6, 2014 - link

The Denver speculation really needs some justification.

Doesn't common sense say that the same task is always more power efficient done with hardware rather than software? It would at least need a paragraph or two to explain how OoO or speculative execution or ILC can be more power efficient in software.

Now if it is just that you need to build different binaries specifically for these cores, it then sounds a lot more like a compute GPU actually-- but as far as I understand so far general tasks are not suitable to run on those configurations, and parallelization for general problems is pretty much a dead horse (similar to P=NP?) now.

KAlmquist - Monday, January 6, 2014 - link

That speculation didn't make a lot of sense to me, either.

One of the reasons that out of order execution improves performance is that cache misses are expensive. In an out of order processor, when a cache miss occurs the processor can defer the instructions that need that particular piece of data, and execute other instructions while waiting for the read to complete. To create "nice bundles of instructions that are already optimized for peak parallelism," you have to know how long each memory read is going to take.

The writers mention the Transmeta Efficeon processor, which translated x86 instructions to native instructions and then executed them on an in-order processor. That was a fairly effective approach, but doesn't demonstrate that an in-order processor can compete with a modern out of order processor. After all, ARM started out producing in-order processors, which were very energy efficient, but eventually they had to produce an out of order design in order to increase performance without increasing the clock rate.

Loki726 - Monday, January 6, 2014 - link

Transmeta didn't have an in-order design in the same way that a normal CPU is in order. See their CGO paper: http://people.ac.upc.edu/vmoya/docs/transmeta-cgo....

Here's the relevant text:

"Compilers typically deal with recovery from speculation by generating compensation code, which re-executes incorrectly sequenced operations, performs operations omitted from the speculative code path, and corrects mismatches in register assignments (Freudenberger et al. [13]). With this approach, hardware support is required to defer faults of potentially faulting instructions moved above branches (e.g., boosting, Smith et al. [23]), to detect overlapping memory operations scheduled out of sequence, and to branch to the compensation code (e.g., memory conflict buffers, Gallagher et al. [14], or the Intel IA-64 ALAT[18]).

In contrast, Crusoe native VLIW processors provide an elegant hardware solution that supports arbitrary kinds of speculation and subsequent recovery and works hand-in-hand with the Code Morphing Software [8]. All registers holding x86 state are shadowed; that is, there exist two copies of each register, a working copy and a shadow copy. Normal atoms only update the working copy of the register. If execution reaches the end of a translation, a special commit operation copies all working registers into their corresponding shadow registers, committing the work done in the translation. On the other hand, if any exceptional condition, such as the failure of one of CMS’s translation assumptions, occurs inside the translation, the runtime system undoes the effects of all molecules executed since the last commit via a rollback operation that copies the shadow register values (committed at the end of the previous translation) back into the working registers.

Following a rollback, CMS usually interprets the x86 instructions corresponding to the faulting translation, executing them in the original program order, handling any special cases that are encountered, and invoking the x86 exception-handling procedure if necessary.

Commit and rollback also apply to memory operations. Store data are held in a gated store buffer, from which they are only released to the memory system at the time of a commit. On a rollback, stores not yet committed can simply be dropped from the store buffer. To speed the common case of no rollback, the mechanism was designed so that commit operations are effectively “free”[27], while rollback atoms cost less than a couple of branch mispredictions."
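The commit and rollback scheme in the quoted passage can be sketched as a toy software model. This is only an illustration in Python, not Transmeta's hardware or the Code Morphing Software: the class, method, exception and register names are hypothetical, and a Python exception stands in for "any exceptional condition" inside a translation.

```python
class SpeculationFault(Exception):
    """Hypothetical stand-in for a failed translation assumption."""


class ShadowedMachine:
    """Toy model of shadowed registers plus a gated store buffer."""

    def __init__(self, registers):
        self.working = dict(registers)   # updated by normal operations
        self.shadow = dict(registers)    # last committed register state
        self.memory = {}                 # committed memory contents
        self.store_buffer = []           # stores held back until commit

    def write_reg(self, name, value):
        self.working[name] = value       # only the working copy changes

    def store(self, address, value):
        self.store_buffer.append((address, value))  # gated, not yet visible

    def commit(self):
        """Copy working registers to shadow and release buffered stores."""
        self.shadow = dict(self.working)
        for address, value in self.store_buffer:
            self.memory[address] = value
        self.store_buffer.clear()

    def rollback(self):
        """Restore working registers from shadow and drop buffered stores."""
        self.working = dict(self.shadow)
        self.store_buffer.clear()


def run_translation(machine, operations):
    """Run operations speculatively; commit at the end, roll back on a fault."""
    try:
        for op in operations:
            op(machine)
    except SpeculationFault:
        machine.rollback()
        return False
    machine.commit()
    return True


def faulting_op(machine):
    raise SpeculationFault("translation assumption failed")


m = ShadowedMachine({"eax": 0})
ok = run_translation(m, [lambda mc: mc.write_reg("eax", 1),
                         lambda mc: mc.store(0x10, 42)])
failed = run_translation(m, [lambda mc: mc.write_reg("eax", 99),
                             lambda mc: mc.store(0x10, 7),
                             faulting_op])
print(ok, failed, m.working, m.memory)
```

In this model the second translation leaves no trace: the register write and the store are both discarded, which is the property that lets the translator reorder and speculate aggressively. After a rollback, the real system would fall back to interpreting the original x86 instructions in order, a step this sketch leaves out.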