NVIDIA Tegra K1 Preview & Architecture Analysis

by Brian Klug & Anand Lal Shimpi on January 6, 2014 6:31 AM EST

CPU Option 2: Dual-Core 64-bit NVIDIA Denver

Three years ago, also at CES, NVIDIA announced that it was working on its own custom ARM based microprocessor, codenamed Denver. Denver was teased back in 2011 as a solution for everything from PCs to servers, with no direct mention of going into phones or tablets. In the second half of 2014, NVIDIA expects to offer a second version of Tegra K1 based on two Denver cores instead of 4+1 ARM Cortex A15s. Details are light but here’s what I’m expecting/have been able to piece together.

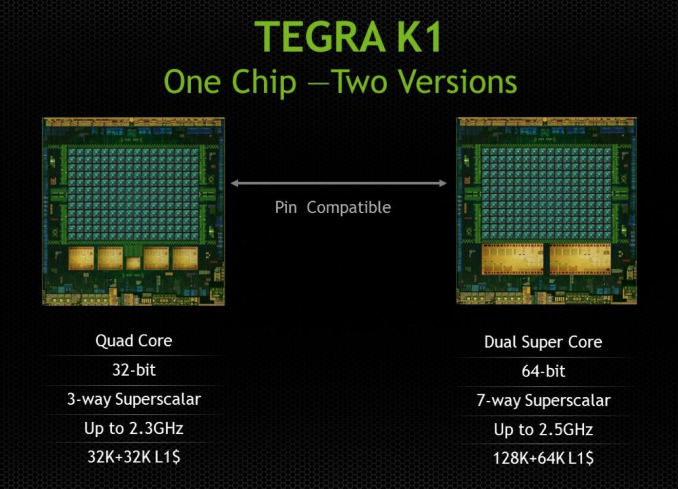

Given the 28nm HPM process for Tegra K1, I’d expect that the Denver version is also a 28nm HPM design. NVIDIA claims the two SoCs are pin-compatible, which tells me that both feature the same 64-bit wide LPDDR3 memory interface.

The companion core is gone in the Denver version of K1, as is the quad-core silliness. Instead you get two, presumably larger, cores with much higher IPC; in other words, the right way to design a CPU for mobile. Ironically it’s NVIDIA, the company that drove the rest of the ARM market into the core race, that is the first (excluding Apple/Intel) to realize that four cores may not be the best use of die area in pursuit of good performance per watt in a phone/tablet design.

It’s long been rumored that Denver was a reincarnation of NVIDIA’s original design for an x86 CPU. The rumor was that NVIDIA used binary translation to convert x86 assembly to some internal format (optimizing the assembly in the process for proper scheduling/dispatch/execution) before it hit the CPU core itself. The obvious change is that, instead of being x86 compatible, NVIDIA built something that was compatible with ARMv8.

I believe Denver still works the same way though. My guess is there’s some form of a software abstraction layer that intercepts ARMv8 machine code, translates and optimizes/morphs it into a friendlier format and then dispatches it to the underlying hardware. We’ve seen code morphing + binary translation done in the past, including famously in Transmeta’s offerings in the early 2000s, but it’s never been done all that well at the consumer client level.
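To make the guess above concrete, here is a minimal sketch of the translate-once, reuse-many pattern that a code morphing layer generally relies on. Nothing here reflects NVIDIA's actual design: the tuple-based guest instruction format, `translate_block`, and `TranslationCache` are all hypothetical names invented for illustration, and the "native" code is just a Python closure.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical guest instruction: (opcode, dest, src_a, src_b).
# This toy format is an assumption, not ARMv8 and not Denver's internal ISA.
GuestOp = Tuple[str, str, str, str]
Regs = Dict[str, int]
NativeBlock = Callable[[Regs], None]

_OPS = {"add": lambda a, b: a + b, "mul": lambda a, b: a * b}


def translate_block(block: List[GuestOp]) -> NativeBlock:
    """Stand-in for the translator: decode once, return a reusable routine."""
    steps = [(_OPS[op], dst, a, b) for op, dst, a, b in block]

    def native(regs: Regs) -> None:
        for fn, dst, a, b in steps:
            regs[dst] = fn(regs[a], regs[b])

    return native


class TranslationCache:
    """Translate a guest block the first time it is seen, then reuse it."""

    def __init__(self) -> None:
        self._cache: Dict[int, NativeBlock] = {}
        self.translations = 0  # counts how often the slow path ran

    def run(self, address: int, block: List[GuestOp], regs: Regs) -> None:
        native = self._cache.get(address)
        if native is None:  # slow path: translate and remember the result
            native = translate_block(block)
            self._cache[address] = native
            self.translations += 1
        native(regs)  # fast path: execute the cached translation
```

The point of the sketch is only that the expensive step runs once per block of code, so hot loops pay for translation a single time and then execute the cached result.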

Mobile SoC vendors are caught in a tough position. Each generation they are presented with opportunities to increase performance; however, at some point you need to move to a larger out-of-order design in order to efficiently scale performance. Once you make that jump, there’s a corresponding increase in power consumption that you simply can’t get over. Furthermore, subsequent performance increases usually depend on leveraging more speculative execution, which also comes with substantial power costs.

ARM’s solution to this problem is to have your cake and eat it too. Ship a design with some big, speculative, out-of-order cores but also include some in-order cores for when you don’t absolutely need the added performance. Include some logic to switch between the cores and you’re golden.

If Denver indeed follows this path of binary translation + code optimization/morphing, it offers another option for saving power while increasing performance in mobile. You can build a relatively wide machine (NVIDIA claims Denver is a 7-issue design, though it’s important to note that we’re talking about the CPU’s internal instruction format and it’s not clear what type of instructions can be co-issued) but move a lot of the scheduling/ILP complexities into software. With a good code morphing engine the CPU could regularly receive nice bundles of instructions that are already optimized for peak parallelism. Removing the scheduling/OoO complexities from the CPU could save power.
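As a rough illustration of what "moving scheduling into software" could look like, the sketch below greedily packs instructions into issue bundles. It is a toy under loud assumptions: every instruction has unit latency, each register is written only once (so only read-after-write dependencies exist), and any instruction can share a bundle with any other. The `schedule` function and the `(dest, sources)` instruction format are hypothetical; the default width of 7 simply mirrors the issue width NVIDIA quoted and says nothing about Denver's real co-issue rules.

```python
from typing import Dict, List, Sequence, Tuple

# Hypothetical instruction: (destination register, source registers).
Instr = Tuple[str, Sequence[str]]


def schedule(instrs: List[Instr], width: int = 7) -> List[List[str]]:
    """Greedily pack instructions into bundles of at most `width` entries.

    Assumes unit latency and single assignment per register, so an
    instruction only has to wait for the bundles that produce its sources.
    """
    produced_in: Dict[str, int] = {}  # register -> index of producing bundle
    bundles: List[List[str]] = []
    for dst, srcs in instrs:
        # Earliest legal bundle: one past the latest producer of any source.
        slot = max((produced_in[s] + 1 for s in srcs if s in produced_in),
                   default=0)
        # Skip bundles that are already full.
        while slot < len(bundles) and len(bundles[slot]) >= width:
            slot += 1
        # Grow the schedule if the chosen slot does not exist yet.
        while len(bundles) <= slot:
            bundles.append([])
        bundles[slot].append(dst)
        produced_in[dst] = slot
    return bundles
```

Hardware fed with bundles like these does not need to discover the parallelism itself at run time, which is where the potential power saving comes from. A real scheduler would also have to handle register reuse, variable latencies, memory ordering and per-slot restrictions.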

Granted, all of this funky code translation and optimization is done in software, which ultimately has to run on the same underlying CPU hardware, so some power is expended doing that. The point is that if you do it efficiently, any power/time you spend here will still cost less than if you had built a conventional OoO machine.
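The trade-off in that argument can be written as simple break-even arithmetic: translation is a one-time cost, and each later run of the translated code saves a little relative to a conventional OoO core. The sketch below assumes exactly that simplified model. The function names and every number fed into it are hypothetical; no real energy figures for Denver are available.

```python
import math


def break_even_runs(translate_cost: float, saving_per_run: float) -> int:
    """Runs of a code block needed before translation pays for itself.

    Both arguments are in the same arbitrary energy unit (an assumption;
    the article gives no measured values).
    """
    if saving_per_run <= 0:
        raise ValueError("translation never pays off without a per-run saving")
    return math.ceil(translate_cost / saving_per_run)


def net_saving(translate_cost: float, saving_per_run: float, runs: int) -> float:
    """Total energy saved after `runs` executions, minus the one-time cost."""
    return saving_per_run * runs - translate_cost
```

Under this model, code that runs only a handful of times is a net loss, while hot loops amortize the translation cost quickly, which is why efficiency of the software layer matters so much.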

I have to say that if this does end up being the case, I’ve got to give Charlie credit. He called it all back in late 2011, a few months after NVIDIA announced Denver.

NVIDIA announced that Denver would have a 128KB L1 instruction cache and a 64KB L1 data cache. It’s fairly unusual to see imbalanced L1 I/D caches like that in a client machine, which I can only assume has something to do with Denver’s unusual architecture. Curiously enough, Transmeta’s Efficeon processor (2nd generation code morphing CPU) had exactly the same L1 cache sizes (it also worked on 8-wide VLIW instructions for what it’s worth). NVIDIA also gave us a clock target of 2.5GHz. For an insanely wide machine 2.5GHz sounds pretty high, especially if we’re talking about 28nm HPM, so I’m betting Charlie is right in that we need to put machine width in perspective.

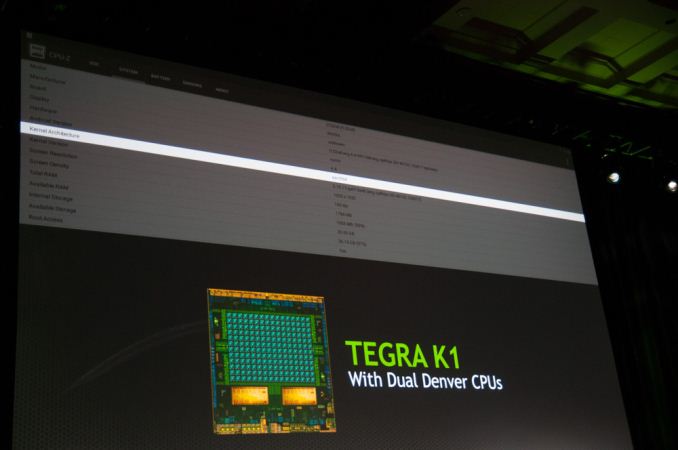

NVIDIA showed a Denver Tegra K1 running Android 4.4 at CES. The design came back from the fab sometime in the past couple of weeks and is already up and running Android. NVIDIA hopes to ship the Denver version of Tegra K1 in the second half of the year.

The Denver option is the more interesting of the two as it not only gives us another (very unique) solution to the power problem in mobile, but it also embraces a much more sane idea of the right balance of core size vs. core count in mobile.

88 Comments


Nenad - Monday, January 13, 2014 - link

That is not real picture of GPUs/CPUs, it is photoshopped, so we do not know relative size of A15 and Denver cores.

chizow - Monday, January 6, 2014 - link

Of course they would, designating it as simply dual-core would intimate it's a downgrade when it clearly is not.

MrdnknnN - Monday, January 6, 2014 - link

"As if that wasn’t enough, starting now, all future NVIDIA GeForce designs will begin first and foremost as mobile designs."

I guess I am a dinosaur because this makes me want to cry.

nathanddrews - Monday, January 6, 2014 - link

Why? It was the best thing that ever happened to Intel (Core). Desktop graphics are in a rut. Too expensive, not powerful enough for the coming storm of high frame rate 4K and 8K software and hardware.

HammerStrike - Monday, January 6, 2014 - link

From a gaming perspective Intel's focus on mobile has led to 10%-15% performance increases in their desktop line whenever they release a new chip series. That's pretty disappointing, from a gaming performance perspective, even though I understand why they are focusing there.

Also, I disagree with you on desktop graphics - this is a golden time for them. The competition in the $200-$300 card range is fierce, and there is a ton of great value there. Not sure why you think there is a "storm" of 4k and 8k content coming any time soon, as there isn't, but even 2x R9 290, $800 at MSRP (I know the mining craze has distorted that, but it will correct) can drive 4K today. Seeing as most decent 4K monitors are still $3000+, I'd argue it is the cost of the displays, and not the GPUs, that is holding back wider adoption.

As long as nVidia keeps releasing competitive parts I really don't care what their design methodology is. That being said, power efficiency is the #1 priority in mobile, so if they are going to be devoting mindshare to that my concern is top line performance will suffer in desktop apps, where power is much less of an issue.

OreoCookie - Monday, January 6, 2014 - link

Since Intel includes relatively powerful GPUs in their CPUs, discrete GPUs are needed only for special purposes (gaming, GPU compute and various special applications). And the desktop market has been contracting for years in favor of mobile computers and devices. In the notebook space, thanks to Intel finally including decent GPUs in their CPUs, only high-end notebooks come with discrete GPUs. Hence, the market for discrete GPUs is shrinking (which is one of the reasons why nVidia and AMD are both in the CPU game as well as the GPU game).

MrSpadge - Monday, January 6, 2014 - link

> From a gaming perspective Intel's focus on mobile has led to 10%-15% performance increases in their desktop line whenever they release a new chip series. That's pretty disappointing

That's not because of their power efficiency oriented design, it's because their CPU designs are already pretty good (difficult to improve upon) and there's no market pressure to push harder. And as socket 2011 shows us: pushing 6 of these fat cores flat out still requires 130+ W, making these PCs the dinosaurs of old again (-> not mass compatible).

Sabresiberian - Monday, January 6, 2014 - link

I think you are misunderstanding the situation here. What will go in a mobile chip will be the equivalent of one SMX core, while what will go in the desktop version will be as many as they can cool properly. With K1 and Kepler we have the same architecture, but there is one SMX in the coming mobile solution, and 15 SMXs in a GeForce 780Ti. So, 15x the performance in the 780Ti (roughly) using the same design.

Maxwell could end up being made up of something like 20 SMXs designed with mobile efficiency in mind; that's a good thing for those of us playing at the high end of video quality. :)

MrSpadge - Monday, January 6, 2014 - link

This just means they'll be optimized for power efficiency first. Which makes a lot of sense - look at Hawaii, it cannot even reach "normal" clock speeds with the stock cooler because it eats so much power. Improving power efficiency automatically results in higher performance becoming achievable via bigger dies. What they decide to offer us is a different story altogether.

kpb321 - Monday, January 6, 2014 - link

My initial reaction was a little like MrdnknnN but when I thought about it I realized that may not be a bad thing. Video cards at this point are primarily constrained on the high end by power and cooling limitations more than anything else. The R9 is a great example of this. Optimizing for mobile should result in a more efficient design which can scale up to good desktop and high end performance by adding on the appropriate memory interfaces and putting down enough "blocks" (SMXs in nvidia's case). They already do this to give the range of barely better than integrated video cards to top end 500+ dollar cards. I don't think the mobile focus is too far below the low end cards of today to cause major problems here.