NVIDIA Tegra K1 Preview & Architecture Analysis

by Brian Klug & Anand Lal Shimpi on January 6, 2014 6:31 AM EST

CPU Option 2: Dual-Core 64-bit NVIDIA Denver

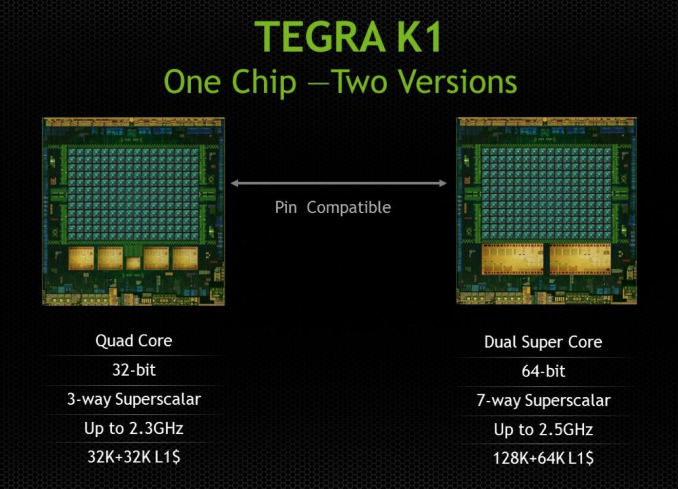

Three years ago, also at CES, NVIDIA announced that it was working on its own custom ARM-based microprocessor, codenamed Denver. Denver was teased back in 2011 as a solution for everything from PCs to servers, with no direct mention of going into phones or tablets. In the second half of 2014, NVIDIA expects to offer a second version of Tegra K1 based on two Denver cores instead of 4+1 ARM Cortex A15s. Details are light, but here’s what I’m expecting/have been able to piece together.

Given the 28nm HPM process for Tegra K1, I’d expect that the Denver version is also a 28nm HPM design. NVIDIA claims the two SoCs are pin-compatible, which tells me that both feature the same 64-bit wide LPDDR3 memory interface.

The companion core is gone in the Denver version of K1, as is the quad-core silliness. Instead you get two, presumably larger cores with much higher IPC; in other words, the right way to design a CPU for mobile. Ironically it’s NVIDIA, the company that drove the rest of the ARM market into the core race, that is the first (excluding Apple/Intel) to come to the realization that four cores may not be the best use of die area in pursuit of good performance per watt in a phone/tablet design.

It’s long been rumored that Denver was a reincarnation of NVIDIA’s original design for an x86 CPU. The rumor was that NVIDIA used binary translation to convert x86 assembly to some internal format (optimizing the assembly in the process for proper scheduling/dispatch/execution) before it hit the CPU core itself. The obvious change is that instead of being x86 compatible, NVIDIA built something that is compatible with ARMv8.

I believe Denver still works the same way though. My guess is there’s some form of a software abstraction layer that intercepts ARMv8 machine code, translates and optimizes/morphs it into a friendlier format and then dispatches it to the underlying hardware. We’ve seen code morphing + binary translation done in the past, including famously in Transmeta’s offerings in the early 2000s, but it’s never been done all that well at the consumer client level.
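To make the idea concrete, here is a minimal Python sketch of a code morphing layer in the Transmeta style: cold code is interpreted, and a block is translated once it proves hot, with the result cached for reuse. This is an illustration of the general technique only, not NVIDIA's design. The `MorphingLayer` class, the `HOT_THRESHOLD` value and the `translate` callback are all hypothetical names invented for this example.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical tuning knob: translate a block once it has run this many times.
HOT_THRESHOLD = 3


class MorphingLayer:
    """Toy software layer that sits between guest code and the hardware."""

    def __init__(self, translate: Callable[[List[str]], List[str]]) -> None:
        self.translate = translate                 # guest block -> native block
        self.cache: Dict[int, List[str]] = {}      # translation cache, keyed by address
        self.run_counts: Dict[int, int] = {}       # how often each cold block has run
        self.translations = 0                      # number of times we paid to translate

    def fetch(self, addr: int, guest_block: List[str]) -> Tuple[str, List[str]]:
        """Return the execution mode and the code to run for this block."""
        if addr in self.cache:
            # Already translated: reuse the optimized native code for free.
            return "native", self.cache[addr]

        count = self.run_counts.get(addr, 0) + 1
        self.run_counts[addr] = count

        if count >= HOT_THRESHOLD:
            # The block is hot: pay the translation cost once and cache it.
            self.cache[addr] = self.translate(guest_block)
            self.translations += 1
            return "native", self.cache[addr]

        # Still cold: run it the slow way without translating.
        return "interpreted", guest_block


if __name__ == "__main__":
    # Stand-in "translator" that just upper-cases the mnemonics.
    layer = MorphingLayer(lambda block: [op.upper() for op in block])
    for _ in range(5):
        mode, code = layer.fetch(0x1000, ["add", "ldr"])
        print(mode, code)
```

The point of the cache is that the translation cost is paid once per hot block, while the optimized result is reused on every later execution.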

Mobile SoC vendors are caught in a tough position. Each generation they are presented with opportunities to increase performance, but at some point you need to move to a larger out-of-order design in order to scale performance efficiently. Once you make that jump, there’s a corresponding increase in power consumption that you simply can’t get around. Furthermore, subsequent performance increases usually depend on leveraging more speculative execution, which also comes with substantial power costs.

ARM’s solution to this problem is to have your cake and eat it too. Ship a design with some big, speculative, out-of-order cores but also include some in-order cores for when you don’t absolutely need the added performance. Include some logic to switch between the cores and you’re golden.

If Denver indeed follows this path of binary translation + code optimization/morphing, it offers another option for saving power while increasing performance in mobile. You can build a relatively wide machine (NVIDIA claims Denver is a 7-issue design, though it’s important to note that we’re talking about the CPU’s internal instruction format and it’s not clear what type of instructions can be co-issued) but move a lot of the scheduling/ILP complexities into software. With a good code morphing engine the CPU could regularly receive nice bundles of instructions that are already optimized for peak parallelism. Removing the scheduling/OoO complexities from the CPU could save power.
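As a rough illustration of what moving scheduling into software could look like, the Python sketch below greedily packs a straight-line instruction sequence into bundles of up to seven operations, placing each operation in the earliest bundle after its inputs are produced. It is a toy under heavy assumptions: every register is written only once (so only true dependencies matter), memory ordering is ignored, any operation can fill any slot, and every result is available one bundle later. The `Op` type and the `bundle` function are hypothetical names, and nothing here reflects Denver's actual internal format.

```python
from typing import Dict, List, NamedTuple, Tuple

ISSUE_WIDTH = 7  # the issue width NVIDIA quotes; slot restrictions are unknown


class Op(NamedTuple):
    text: str               # human-readable form, for printing only
    dest: str               # register written by this operation
    srcs: Tuple[str, ...]   # registers read by this operation


def bundle(ops: List[Op], width: int = ISSUE_WIDTH) -> List[List[Op]]:
    """Pack ops into bundles; ops in the same bundle would issue together."""
    bundles: List[List[Op]] = []
    ready_at: Dict[str, int] = {}  # register -> first bundle index that may read it

    for op in ops:
        # An op cannot issue before the bundle after its last producer.
        slot = max((ready_at.get(src, 0) for src in op.srcs), default=0)
        # Skip bundles that are already full.
        while slot < len(bundles) and len(bundles[slot]) >= width:
            slot += 1
        if slot == len(bundles):
            bundles.append([])
        bundles[slot].append(op)
        ready_at[op.dest] = slot + 1  # assumed one-bundle latency for every op

    return bundles


if __name__ == "__main__":
    program = [
        Op("ldr r1, [a]", "r1", ()),
        Op("ldr r2, [b]", "r2", ()),
        Op("add r3, r1, r2", "r3", ("r1", "r2")),
        Op("ldr r4, [c]", "r4", ()),
        Op("mul r5, r3, r4", "r5", ("r3", "r4")),
    ]
    for i, group in enumerate(bundle(program)):
        print(i, [op.text for op in group])
```

In this example the third load is hoisted into the first bundle alongside the other two, which is the kind of reordering an out-of-order core would otherwise discover in hardware at run time.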

Granted all of this funky code translation and optimization is done in software, which ultimately has to run on the same underlying CPU hardware, so some power is expended doing that. The point being that if you do it efficiently, any power/time you spend here will still cost less than if you had built a conventional OoO machine.
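The trade-off can be written as a simple break-even calculation: translating a block costs energy once, and each later run of the translated block saves a little compared with a conventional out-of-order core. The Python sketch below computes how many runs it takes for the savings to cover the one-time cost. The function name, its parameters and the numbers in the usage example are all hypothetical and in arbitrary units; they are not measurements of Denver or any other CPU.

```python
import math
from typing import Optional


def break_even_runs(translate_cost: float,
                    ooo_cost_per_run: float,
                    morphed_cost_per_run: float) -> Optional[int]:
    """Smallest n with translate_cost + n * morphed <= n * ooo, or None."""
    saving_per_run = ooo_cost_per_run - morphed_cost_per_run
    if saving_per_run <= 0:
        # Translated code is no cheaper per run, so translation never pays off.
        return None
    return math.ceil(translate_cost / saving_per_run)


if __name__ == "__main__":
    # Made-up illustrative values in arbitrary energy units.
    print(break_even_runs(translate_cost=500, ooo_cost_per_run=10, morphed_cost_per_run=8))
```

Under this model the approach only wins on code that runs often enough, which is why hot loops are the natural target for translation.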

I have to say that if this does end up being the case, I’ve got to give Charlie credit. He called it all back in late 2011, a few months after NVIDIA announced Denver.

NVIDIA announced that Denver would have a 128KB L1 instruction cache and a 64KB L1 data cache. It’s fairly unusual to see imbalanced L1 I/D caches like that in a client machine, which I can only assume has something to do with Denver’s more unique architecture. Curiously enough, Transmeta’s Efficeon processor (2nd generation code morphing CPU) had the exact same L1 cache sizes (it also worked on 8-wide VLIW instructions for what it’s worth). NVIDIA also gave us a clock target of 2.5GHz. For an insanely wide machine 2.5GHz sounds pretty high, especially if we’re talking about 28nm HPM, so I’m betting Charlie is right in that we need to put machine width in perspective.

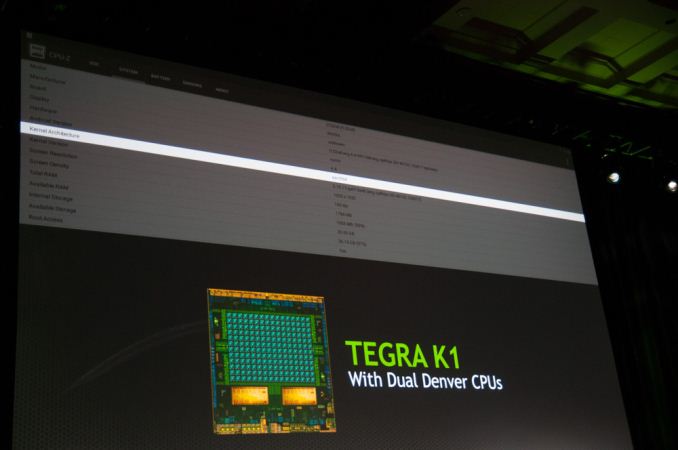

NVIDIA showed a Denver Tegra K1 running Android 4.4 at CES. The design came back from the fab sometime in the past couple of weeks and is already up and running Android. NVIDIA hopes to ship the Denver version of Tegra K1 in the second half of the year.

The Denver option is the more interesting of the two as it not only gives us another (very unique) solution to the power problem in mobile, but it also embraces a much more sane idea of the right balance of core size vs. core count in mobile.

88 Comments

davidjin - Thursday, January 23, 2014 - link

Not true. v8 introduces new MMU design and page table format and other enhanced features, which are not readily compatible to a straightforward "re-compiled" kernel.

By App-wise, you are right. Re-compilation will almost do the trick. However, without a nice kernel, how do you run the Apps?

deltatux - Saturday, January 25, 2014 - link

This is no different than the AMD64 implementation, the Linux kernel will gain a few improvements to make it work perfectly on that specific architecture extension, the same is likely going to happen to aarch64. That means, there's no reason to make a 32-bit version of ARMv8. ARMv8 will be able to encompass and execute both 64-bit and 32-bit software.

As shown by NVIDIA's own tech demo, Android is already 64-bit capable, thus, no need to continually run in 32-bit mode.

Laxaa - Monday, January 6, 2014 - link

Kepler K1 with Denver in Surface 3?

nafhan - Monday, January 6, 2014 - link

I'd be more interested in a Kepler Steam Box.

B3an - Monday, January 6, 2014 - link

... You can already get Kepler Steam boxes. They use desktop Kepler GPU's.

Surface 3 with K1 would be much more interesting, and unlike Steam OS, actually useful and worthwhile.

Alexvrb - Monday, January 6, 2014 - link

Agreed. The Surface 2 is a well done piece of hardware. It just needs some real muscle now. As the article says, it would be a good opportunity to showcase X360-level games like GTA V.

name99 - Monday, January 6, 2014 - link

Right. That's the reason Surface 2 isn't selling, it doesn't have "real muscle". Hell, put a POWER8 CPU in there with 144GB and some 10GbE connectors and you'll have a product that takes over the world...

stingerman - Tuesday, January 7, 2014 - link

I doubt Microsoft will sell a device that competes against the 360... It would signal to investors what they already suspect: Gaming Consoles are end of line. Problem is that Apple is now in a better position...

klmx - Monday, January 6, 2014 - link

I love how Nvidia's marketing department is selling the dual Denver cores as "supercores"

MonkeyPaw - Monday, January 6, 2014 - link

They are at least extra big compared to an A15, so it's at least accurate by a size comparison. ;)