The Mac Pro Review (Late 2013)

by Anand Lal Shimpi on December 31, 2013 3:18 PM EST

The PCIe Layout

Ask anyone at Apple why they need Ivy Bridge EP vs. a conventional desktop Haswell for the Mac Pro and you’ll get two responses: core count and PCIe lanes. The first one is obvious. Haswell tops out at 4 cores today. Even though each of those cores is faster than what you get with an Ivy Bridge EP, for applications that can spawn more than 4 CPU-intensive threads you’re better off taking the IPC/single-threaded hit and going with an older architecture that supports more cores. The second point is a connectivity argument.

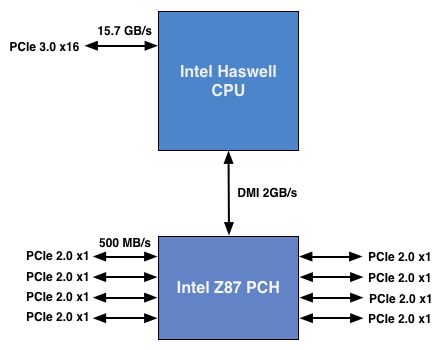

Here’s what a conventional desktop Haswell platform looks like in terms of PCIe lanes:

You’ve got a total of 16 PCIe 3.0 lanes that branch off the CPU, and then (at most) another 8 PCIe 2.0 lanes hanging off of the Platform Controller Hub (PCH). In a dual-GPU configuration those 16 PCIe 3.0 lanes are typically divided into an 8 + 8 configuration. The 8 remaining lanes are typically more than enough for networking and extra storage controllers.

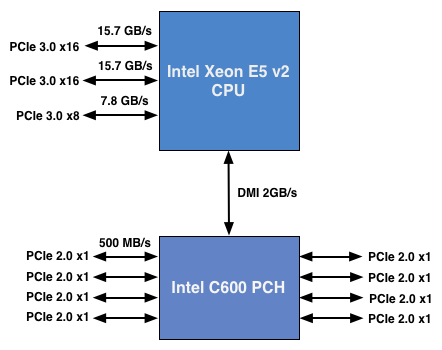

Ivy Bridge E/EP on the other hand doubles the total number of PCIe lanes compared to Intel’s standard desktop platform:

Here the CPU has a total of 40 PCIe 3.0 lanes. That’s enough for each GPU in a dual-GPU setup to get a full 16 lanes, and to have another 8 left over for high-bandwidth use. The PCH also has another 8 PCIe 2.0 lanes, just like in the conventional desktop case.
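To make the lane budgets concrete, here is a minimal Python sketch of the comparison. The lane counts are the ones quoted above; the dictionary layout and the helper name `lanes_left_after_dual_gpu` are made up for illustration.

```python
# Lane counts as quoted in the text; the structure itself is illustrative.
PLATFORMS = {
    "Haswell desktop": {"cpu_pcie3": 16, "pch_pcie2": 8},
    "Ivy Bridge E/EP": {"cpu_pcie3": 40, "pch_pcie2": 8},
}


def lanes_left_after_dual_gpu(cpu_lanes, lanes_per_gpu):
    """Return the CPU lanes remaining after two GPUs each take lanes_per_gpu."""
    remaining = cpu_lanes - 2 * lanes_per_gpu
    if remaining < 0:
        raise ValueError("not enough CPU lanes for this GPU configuration")
    return remaining


# Total lanes per platform (CPU + PCH).
totals = {name: p["cpu_pcie3"] + p["pch_pcie2"] for name, p in PLATFORMS.items()}

# Haswell splits its 16 lanes 8 + 8; Ivy Bridge E/EP can give each GPU a full x16.
haswell_left = lanes_left_after_dual_gpu(PLATFORMS["Haswell desktop"]["cpu_pcie3"], 8)
ivb_left = lanes_left_after_dual_gpu(PLATFORMS["Ivy Bridge E/EP"]["cpu_pcie3"], 16)

print(totals)
print("CPU lanes left after two GPUs:", haswell_left, "vs", ivb_left)
```

The totals come out to 24 and 48 lanes, which is where the "doubles" claim comes from, and only the Ivy Bridge E/EP platform has CPU lanes to spare once both GPUs are attached.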

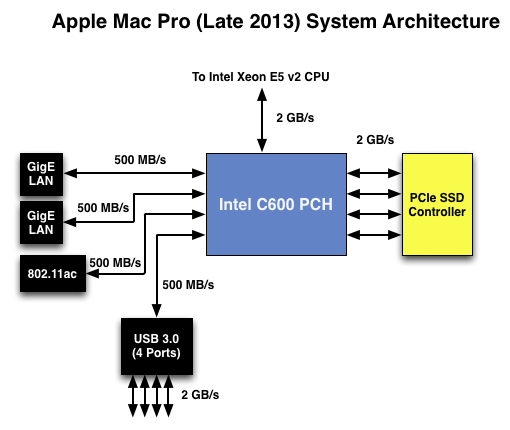

I wanted to figure out how these PCIe lanes were used by the Mac Pro, so I set out to map everything out as best as I could without taking apart the system (alas, Apple tends to frown upon that sort of behavior when it comes to review samples). Here’s what I was able to come up with. Let’s start with what hangs off of the PCH:

Here each Gigabit Ethernet port gets a dedicated PCIe 2.0 x1 lane; the same goes for the 802.11ac controller. All Mac Pros ship with a PCIe x4 SSD, and those four lanes also come off the PCH. That leaves a single PCIe lane unaccounted for in the Mac Pro. Here we really get to see how much of a mess Intel’s workstation chipset lineup is: the C600/X79 PCH doesn’t natively support USB 3.0. That’s right, it’s nearly 2014 and Intel is shipping a flagship platform without USB 3.0 support. The 8th PCIe lane off of the PCH is used by a Fresco Logic USB 3.0 controller. I believe it’s the FL1100, which is a PCIe 2.0 to 4-port USB 3.0 controller. A single PCIe 2.0 lane offers a maximum of 500MB/s of bandwidth in either direction (1GB/s aggregate), which is enough for the real world max transfer rates over a single USB 3.0 port. Do keep this limitation in mind if you’re thinking about populating all four USB 3.0 ports with high-speed storage with the intent of building a low-cost Thunderbolt alternative. All four ports together will be bound by the performance of a single PCIe 2.0 lane.
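The PCH accounting above can be sketched in a few lines of Python. The two Gigabit Ethernet ports are inferred from the lane arithmetic in the text, and the even split of USB bandwidth across four busy ports is a simplifying assumption, not a measured result.

```python
PCH_LANES = 8            # PCIe 2.0 lanes available off the PCH
PCIE2_LANE_MB_S = 500    # per lane, per direction, as quoted in the text

# Lane allocation as described in the text (two GbE ports assumed).
pch_allocation = {
    "Gigabit Ethernet port 1": 1,
    "Gigabit Ethernet port 2": 1,
    "802.11ac controller": 1,
    "PCIe SSD": 4,
    "USB 3.0 controller": 1,
}

lanes_used = sum(pch_allocation.values())
lanes_free = PCH_LANES - lanes_used

# Assumption: four USB 3.0 ports all transferring at once share the
# controller's single lane evenly.
usb_ports = 4
usb_total_mb_s = pch_allocation["USB 3.0 controller"] * PCIE2_LANE_MB_S
usb_per_port_mb_s = usb_total_mb_s / usb_ports

print("PCH lanes used:", lanes_used, "free:", lanes_free)
print("USB 3.0 ceiling:", usb_total_mb_s, "MB/s total,",
      usb_per_port_mb_s, "MB/s per port with all four busy")
```

Under those assumptions every PCH lane is spoken for, and four simultaneously busy USB 3.0 drives would each see roughly a quarter of one lane.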

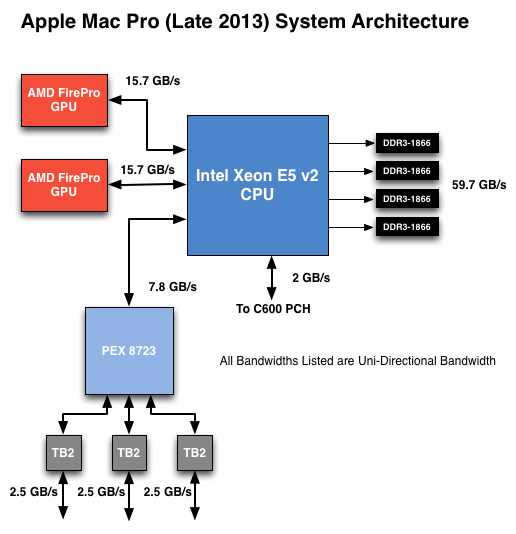

That takes care of the PCH, now let’s see what happens off of the CPU:

Of the 40 PCIe 3.0 lanes, 32 are already occupied by the two AMD FirePro GPUs. Having a full x16 interface to the GPUs isn’t really necessary for gaming performance, but if you want to treat each GPU as a first-class citizen then this is the way to go. That leaves us with 8 PCIe 3.0 lanes.

The Mac Pro has a total of six Thunderbolt 2 ports; each pair is driven by a single Thunderbolt 2 controller. Each Thunderbolt 2 controller accepts four PCIe 2.0 lanes as an input and delivers that bandwidth to any Thunderbolt devices downstream. If you do the math you’ll see we have a bit of a problem: 3 TB2 controllers x 4 PCIe 2.0 lanes per controller = 12 PCIe 2.0 lanes, but we only have 8 lanes left to allocate in the system.
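Spelled out as a quick Python sketch, using only the numbers from the paragraph above (the constant names are illustrative):

```python
TB2_PORTS = 6
PORTS_PER_CONTROLLER = 2
LANES_PER_CONTROLLER = 4   # PCIe 2.0 lanes each Thunderbolt 2 controller accepts
CPU_LANES_REMAINING = 8    # 40 CPU lanes minus 2 x 16 for the GPUs

controllers = TB2_PORTS // PORTS_PER_CONTROLLER
lanes_wanted = controllers * LANES_PER_CONTROLLER
shortfall = lanes_wanted - CPU_LANES_REMAINING

print(controllers, "controllers want", lanes_wanted, "lanes;",
      CPU_LANES_REMAINING, "available; short by", shortfall)
```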

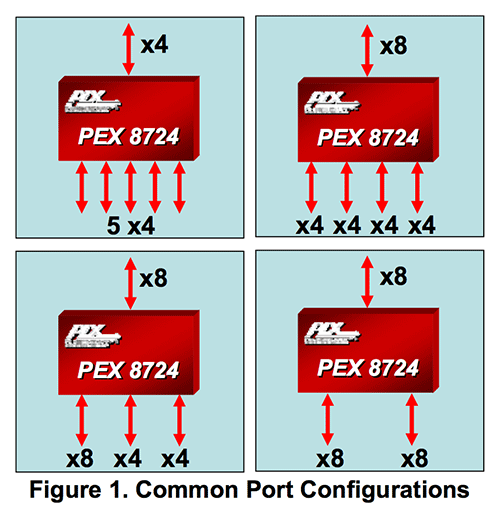

I assumed there had to be a PCIe switch sharing the 8 PCIe input lanes among the Thunderbolt 2 controllers, but I needed proof. Our Senior GPU Editor, Ryan Smith, did some digging into the Mac Pro’s enumerated PCIe devices and discovered a very familiar vendor ID: 10B5, the ID used by PLX Technology. PLX is a well-known PCIe bridge/switch manufacturer. The part used in the Mac Pro (PEX 8723) is of course not listed on PLX’s website, but it’s pretty close to another one that PLX is presently shipping: the PEX 8724. The 8724 is a 24-lane PCIe 3.0 switch. It can take 4 or 8 PCIe 3.0 lanes as an input and share that bandwidth among up to 16 (20 in the case of a x4 input) downstream PCIe lanes. Normally that would create a bandwidth bottleneck but remember that Thunderbolt 2 is still based on PCIe 2.0. The switch provides roughly 15GB/s of bandwidth to the CPU and 3 x 5GB/s of bandwidth to the Thunderbolt 2 controllers.
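For anyone who wants to check the switch math, here is a rough Python sketch. It uses the standard PCIe signaling rates and line encodings (5 GT/s with 8b/10b for PCIe 2.0, 8 GT/s with 128b/130b for PCIe 3.0) and ignores protocol overhead beyond encoding, so treat the results as upper bounds. The function name `lane_gb_s` is made up for this example.

```python
# (GT/s per lane, payload bits, total bits on the wire) for each PCIe generation.
PCIE_GEN = {
    2: (5.0, 8, 10),      # 8b/10b encoding
    3: (8.0, 128, 130),   # 128b/130b encoding
}


def lane_gb_s(gen):
    """Usable GB/s per lane, per direction, after line encoding."""
    gt_per_s, payload_bits, total_bits = PCIE_GEN[gen]
    return gt_per_s * payload_bits / total_bits / 8  # bits -> bytes


# Upstream: 8 PCIe 3.0 lanes from the switch to the CPU.
upstream = 8 * lane_gb_s(3)

# Downstream: 3 Thunderbolt 2 controllers, each fed by 4 PCIe 2.0 lanes.
downstream = 3 * 4 * lane_gb_s(2)

print(f"upstream:   {upstream:.2f} GB/s per direction, {2 * upstream:.2f} GB/s aggregate")
print(f"downstream: {downstream:.2f} GB/s per direction, {2 * downstream:.2f} GB/s aggregate")
print("upstream covers downstream:", upstream >= downstream)
```

Counting both directions and skipping the 8b/10b overhead, a PCIe 2.0 x4 link works out to the 5GB/s per controller quoted above; after encoding it is closer to 4GB/s. Either way, the 8 upstream PCIe 3.0 lanes have more bandwidth than all three controllers can pull at once.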

Any of the 6 Thunderbolt 2 ports on the back of the Mac Pro will give you access to the 8 remaining PCIe 3.0 lanes living off of the CPU. It’s pretty impressive when you think about it: external access to a high-speed interface located on the CPU die itself.

The part I haven’t quite figured out yet is how Apple handles DisplayPort functionality. All six Thunderbolt 2 ports are capable of outputting to a display, which means that there’s either a path from the FirePro to each Thunderbolt 2 controller or the PEX 8723 switch also handles DisplayPort switching. It doesn’t really matter from an end user perspective, as you can plug a monitor into any port and have it work; it’s more that I want to know how it all works.

267 Comments

Ppietra - Friday, January 3, 2014 - link

An object with black color only implies that it absorbs visible light. Thermal radiation is mostly infrared, not visible light, so being black has no consequence since there is nothing emitting visible radiation internally. Externally the surface is very reflective so no problem there either - not that there would be one if it wasn’t reflective.

cosmotic - Tuesday, December 31, 2013 - link

It would be nice to see storage performance of the Mac Pro SSD against RAID on mechanical disks and SSD disks from a previous Mac Pro model.

cosmotic - Tuesday, December 31, 2013 - link

Including IOPS

acrown - Tuesday, December 31, 2013 - link

The early 2008 Mac Pro does not support hyperthreadimg as your charts indicate. Of course I could just be doing something wrong with mine...

acrown - Tuesday, December 31, 2013 - link

Stupid onscreen keyboard. I meant hyperthreading of course.

Anand Lal Shimpi - Tuesday, December 31, 2013 - link

Whoops, you're right! Fixed :)

acrown - Tuesday, December 31, 2013 - link

Great article by the way. I'm so on the fence about whether to get one to replace my current Mac Pro. The read is tempting me more and more though...

ananduser - Tuesday, December 31, 2013 - link

It's actually simple, it's the best OSX workstation for seemingly only Apple software that actually makes full use of the GPU setup. If your workflow revolves exclusively around FCX, it is the only workstation you'll need. If you're an average consumer wanting a powerful OSX machine you'd better get a consumer oriented imac.

PS: No need for me to mention that if you'll need CUDA and Windows then it's a bad buy.

akdj - Wednesday, January 1, 2014 - link

I'd expect OpenCL to become more and more and MORE ubiquitous as time marches on and Moore's law in relation to CPU slows...and more computing can be taken care of via screaming fast GPUs. Again. Early adopters. But CUDA/Windows options are aplenty. Just more expensive and without twin GPUs. Without PCIe storage. And.....oh yeah, their Windows boxes. At least with the MP you can run Windows...and perhaps, as we saw Adobe so quickly do with HiDPI support post rMBP release (along with hundreds of other apps and software companies)---hopefully Windows 8.2/9.x realizes the more significant 'all around' gains utilizing OpenCL (nVidia too?) than the very, VERY select software titles that take advantage of CUDA....and when they do, it's primal in comparison to what OpenCL opens the doors for. Literally. Everything

moppop - Wednesday, January 1, 2014 - link

Considering CUDA is a GPGPU API there's no door that OpenCL opens that CUDA can't do...in fact, you could say that CUDA opens more doors on an Nvidia GPU. Nvidia also supports OpenCL since it was among the parties to help expand the API spec, but make no mistake, their flagship is CUDA.

Aside from shunning Nvidia to market-segments whose software will be at least CUDA-accelerated (if there's GPU acceleration at all), my main beef with the Mac Pro, however, is that there are artificial limits placed by the design. Namely 1 CPU socket and only 4 DIMM slots.

For VFX/3DCG pros, the reality is that GPU rendering simply isn't there yet. Your PRMan, Mental Ray, V-Ray (not real-time V-Ray), Arnold, and Mantra renderers are still very much in the CPU world. When professionals buy a machine they need it to work now, and not 5 years from now. While the Mac Pro certainly appeals to portions of the pro market-segment, it was a simply foolish reason to castrate the Mac Pro.