The Mac Pro Review (Late 2013)

by Anand Lal Shimpi on December 31, 2013 3:18 PM EST

GPU Choices

The modern Apple is a big fan of GPU power. This is true regardless of whether we’re talking about phones, tablets, notebooks or, more recently, desktops. The new Mac Pro is no exception as it is the first Mac in Apple history to ship with two GPUs by default.

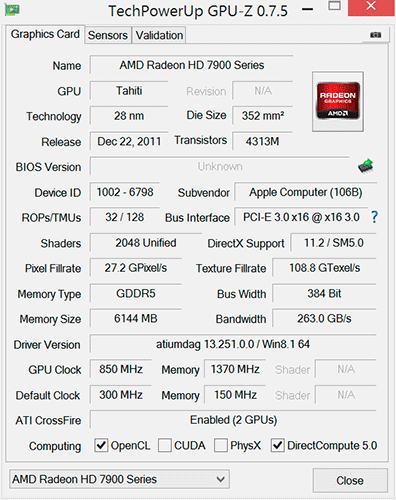

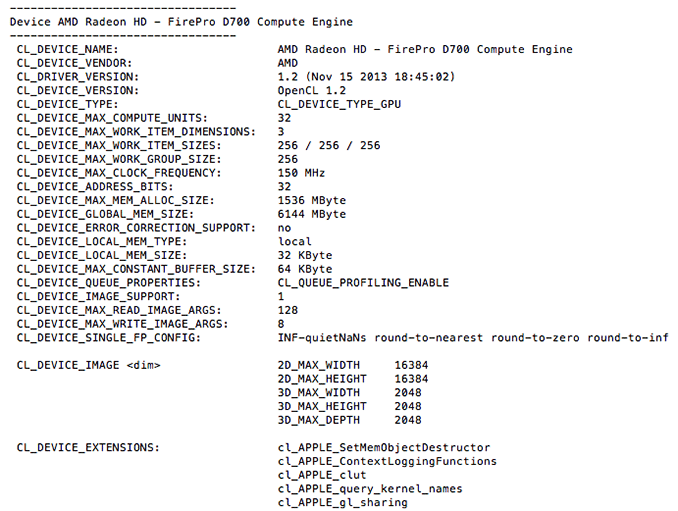

AMD won the contract this time around. The new Mac Pro comes outfitted with a pair of identical Pitcairn, Tahiti LE or Tahiti XT derived FirePro branded GPUs. These are 28nm Graphics Core Next 1.0 based GPUs, so not the absolute latest tech from AMD but the latest of what you’d find carrying a FirePro name.

The model numbers are unique to Apple. FirePro D300, D500 and D700 are the only three options available on the new Mac Pro. The D300 is Pitcairn based, D500 appears to use a Tahiti LE with a wider 384-bit memory bus while D700 is a full blown Tahiti XT. I’ve tossed the specs into the table below:

Mac Pro (Late 2013) GPU Options

| | AMD FirePro D300 | AMD FirePro D500 | AMD FirePro D700 |
| --- | --- | --- | --- |
| SPs | 1280 | 1536 | 2048 |
| GPU Clock (base) | 800MHz | 650MHz | 650MHz |
| GPU Clock (boost) | 850MHz | 725MHz | 850MHz |
| Single Precision GFLOPS | 2176 GFLOPS | 2227 GFLOPS | 3481 GFLOPS |
| Double Precision GFLOPS | 136 GFLOPS | 556.8 GFLOPS | 870.4 GFLOPS |
| Texture Units | 80 | 96 | 128 |
| ROPs | 32 | 32 | 32 |
| Transistor Count | 2.8 Billion | 4.3 Billion | 4.3 Billion |
| Memory Interface | 256-bit GDDR5 | 384-bit GDDR5 | 384-bit GDDR5 |
| Memory Datarate | 5080MHz | 5080MHz | 5480MHz |
| Peak GPU Memory Bandwidth | 160 GB/s | 240 GB/s | 264 GB/s |
| GPU Memory | 2GB | 3GB | 6GB |
| Apple Upgrade Cost (Base Config) | - | +$400 | +$1000 |
| Apple Upgrade Cost (High End Config) | - | - | +$600 |
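For readers who want to see where the throughput rows come from, here is a minimal Python sketch that derives them from the SP counts, boost clocks, bus widths and data rates listed above. It assumes 2 FLOPs per stream processor per clock (one fused multiply-add), and the double precision ratios (1/16 for the D300, 1/4 for the D500 and D700) are inferred from the table's own figures rather than stated anywhere in the text. The class and function names are made up for illustration. The bandwidth results land within a few GB/s of the table's values, which look rounded.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuSpec:
    name: str
    stream_processors: int
    boost_mhz: float
    bus_width_bits: int
    data_rate_mhz: float  # effective GDDR5 data rate, as listed in the table
    dp_ratio: float       # assumption: DP rate relative to SP, inferred from the table


def single_precision_gflops(gpu: GpuSpec) -> float:
    # Assumption: each stream processor retires one FMA (2 FLOPs) per clock.
    return 2 * gpu.stream_processors * gpu.boost_mhz / 1000.0


def double_precision_gflops(gpu: GpuSpec) -> float:
    return single_precision_gflops(gpu) * gpu.dp_ratio


def memory_bandwidth_gbs(gpu: GpuSpec) -> float:
    # Bus width in bytes multiplied by millions of transfers per second.
    return gpu.bus_width_bits / 8 * gpu.data_rate_mhz / 1000.0


GPUS = [
    GpuSpec("D300", 1280, 850, 256, 5080, 1 / 16),
    GpuSpec("D500", 1536, 725, 384, 5080, 1 / 4),
    GpuSpec("D700", 2048, 850, 384, 5480, 1 / 4),
]

for gpu in GPUS:
    print(
        f"{gpu.name}: "
        f"SP {single_precision_gflops(gpu):.1f} GFLOPS, "
        f"DP {double_precision_gflops(gpu):.1f} GFLOPS, "
        f"bandwidth {memory_bandwidth_gbs(gpu):.2f} GB/s"
    )
```

These are theoretical peaks at the boost clock; sustained throughput in a real application will be lower.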

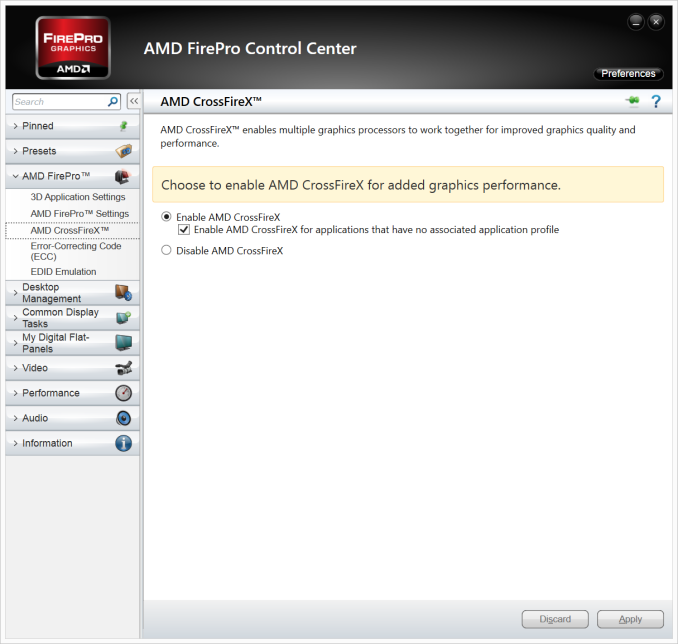

Despite the FirePro brand, these GPUs have at least some features in common with their desktop Radeon counterparts. FirePro GPUs ship with ECC memory, however in the case of the FirePro D300/D500/D700, ECC isn’t enabled on the GPU memories. Similarly, CrossFire X isn’t supported by FirePro (instead you get CrossFire Pro) but in the case of the Dx00 cards you do get CrossFire X support under Windows.

Each GPU gets a full PCIe 3.0 x16 interface to the Xeon CPU via a custom high density connector and flex cable on the bottom of each GPU card in the Mac Pro. I believe Apple also integrated CrossFire X bridge support over this cable.

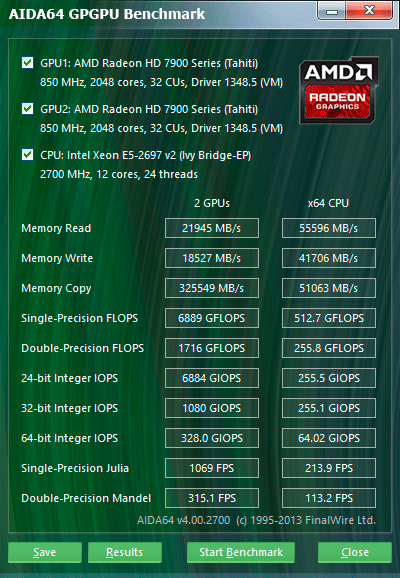

With two GPUs standard in every Mac Pro configuration, there’s obviously OS support for the configuration. Under Windows, that amounts to basic CrossFire X support. Apple’s Boot Camp drivers ship with CFX support, and you can download the latest Catalyst drivers directly from AMD and enable CFX under Windows as well. I did the latter and found that despite the option being there I couldn’t actually disable CrossFire X under Windows. Toggling CFX off in the driver would drop power consumption, but I didn't always see a corresponding decrease in performance.

Under OS X the situation is a bit more complicated. There is no system-wide CrossFire X equivalent that will automatically split up rendering tasks across both GPUs. By default, one GPU is set up for display duties while the other is used exclusively for GPU compute workloads. GPUs are notoriously bad at context switching, which can severely limit compute performance if the GPU also has to deal with the rendering workloads associated with display in a modern OS. NVIDIA sought to address a similar problem with their Maximus technology, combining Quadro and Tesla cards into a single system for display and compute.

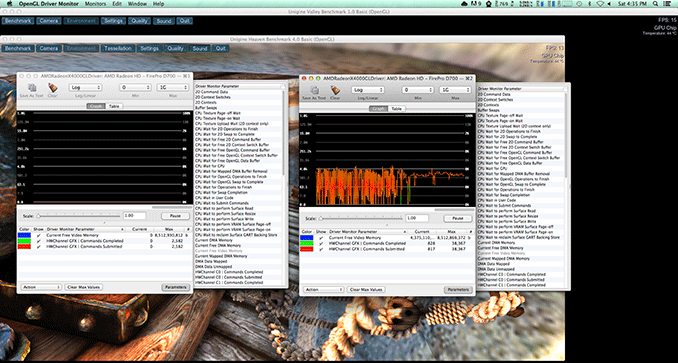

Due to the nature of the default GPU division under OS X, all games by default will only use a single GPU. It is up to the game developer to recognize and split rendering across both GPUs, which no one is doing at present. Unfortunately, firing up two instances of a 3D workload won’t load balance across the two GPUs by default. I ran the Unigine Heaven and Valley benchmarks in parallel, and both were scheduled on the display GPU, leaving the compute GPU completely idle.

The same is true for professional applications. By default you will see only one GPU used for compute workloads. Just like the gaming example however, applications may be written to spread compute workloads out across both GPUs if they need the horsepower. The latest update to Final Cut Pro (10.1) is one example of an app that has been specifically written to take advantage of both GPUs in compute tasks.
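To make "written to spread compute workloads out across both GPUs" a bit more concrete, here is a minimal Python sketch of the simplest possible approach: a static split of work items across devices in proportion to a weight. This is purely illustrative and is not how Final Cut Pro or OS X divides work; the `split_work` function and the weights are hypothetical. Giving the display GPU a smaller weight reflects the assumption that it is also busy drawing the desktop.

```python
def split_work(n_items, weights):
    """Split n_items across devices in proportion to weights.

    Hypothetical helper: uses the largest-remainder method so the
    shares are whole numbers that always add up to n_items.
    """
    total = sum(weights)
    if n_items < 0 or total <= 0 or any(w < 0 for w in weights):
        raise ValueError("need n_items >= 0 and non-negative weights with a positive sum")

    exact = [n_items * w / total for w in weights]
    shares = [int(x) for x in exact]  # round every share down first
    leftover = n_items - sum(shares)

    # Hand the remaining items to the devices with the largest fractional parts.
    by_remainder = sorted(
        range(len(weights)), key=lambda i: exact[i] - shares[i], reverse=True
    )
    for i in by_remainder[:leftover]:
        shares[i] += 1
    return shares


# Assumed weights: the display GPU (index 0) gets a third of the compute GPU's share.
display_share, compute_share = split_work(100, [1, 3])
print(f"display GPU: {display_share} items, compute GPU: {compute_share} items")
```

A real application would more likely balance dynamically, handing out work as each GPU finishes its previous batch, but the static version shows the basic idea.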

The question of which GPU to choose is a difficult one. There are substantial differences in performance between all of the options. Going by the peak figures in the table above, the D700 for example offers 60% more single precision compute than the D300 and 56% more than the D500. All of the GPUs have the same number of render backends, however, so all of them should be equally capable of driving a 4K display. In many professional apps, the bigger driver for the higher end GPU options will likely be the larger VRAM configurations.


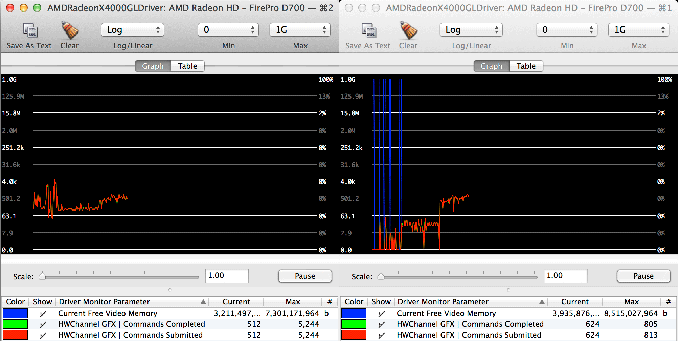

I was particularly surprised by how much video memory Final Cut Pro appeared to take up on the primary (non-compute) GPU. I measured over 3GB of video memory usage while on a 1080p display, editing 4K content. The D700 is the only configuration Apple offers with more than 3GB of video memory. I’m not exactly sure how the experience would degrade if you had less, but throwing more VRAM at the problem doesn’t seem to be a bad idea. The compute GPU’s memory usage is very limited (obviously) until the GPU is actually in use. OS X reported ~8GB of usage when idle, which I can only assume is a bug and a backwards way of saying that none of the memory was in use. Under a GPU compute load (effects rendering in FCP), I saw around 2GB of memory usage on the compute GPU.
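As a rough sense of scale for those VRAM numbers, the following Python sketch works out how much memory a single uncompressed 4K frame occupies and how many such frames fit in each card's memory. Everything here is an assumption for illustration: 4K is taken as 3840 x 2160, the two pixel formats (4 and 16 bytes per pixel) are just plausible examples, and the 2GB/3GB/6GB capacities are treated as binary gigabytes. It says nothing about how Final Cut Pro actually allocates video memory.

```python
GIB = 1024 ** 3  # assumption: treat the advertised capacities as binary gigabytes

UHD_4K = (3840, 2160)  # assumption: "4K" meaning UHD resolution

# Assumed example pixel formats, in bytes per pixel.
PIXEL_FORMATS = {
    "8-bit RGBA": 4,
    "32-bit float RGBA": 16,
}


def frame_bytes(width, height, bytes_per_pixel):
    """Size of one uncompressed frame in bytes."""
    return width * height * bytes_per_pixel


def frames_that_fit(vram_bytes, width, height, bytes_per_pixel):
    """How many whole uncompressed frames fit in the given amount of memory."""
    return vram_bytes // frame_bytes(width, height, bytes_per_pixel)


for vram_gib in (2, 3, 6):  # D300, D500, D700
    for label, bpp in PIXEL_FORMATS.items():
        count = frames_that_fit(vram_gib * GIB, *UHD_4K, bpp)
        size_mib = frame_bytes(*UHD_4K, bpp) / 1024 ** 2
        print(f"{vram_gib}GB, {label}: {size_mib:.1f} MiB per frame, {count} frames")
```

With several layers of effects each needing their own intermediate buffers, it is easy to see how a few gigabytes could be consumed quickly.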

Since Final Cut Pro 10.1 appears to be a flagship app for the Mac Pro’s CPU + GPU configuration, I did some poking around to see how the three separate processors are used in the application. Basic rendering still happens on the CPU. With 4K content and the right effects I see 20 - 21 threads in use, maxing out nearly all available cores and threads. I still believe the 8-core version may be a slightly better choice if you're concerned about cost, but that's a guess on my part since I don't have a ton of 4K FCP 10.1 projects to profile. The obvious benefit to the 12-core version is you get more performance when the workload allows it, and when it doesn't you get a more responsive system.

Live preview of content that has yet to be rendered is also CPU bound. I don’t see substantial GPU compute use here, and the same is actually true for the CPU. Scrubbing through and playing back non-rendered content seems to use between 1 - 3 CPU cores. Even if you apply video effects to the project, prior to rendering this ends up being a predominantly CPU workload with the non-compute (display) GPU spending some cycles.

It’s when you actually go to render visual effects that the compute GPU kicks in. Video rendering/transcoding, as I mentioned earlier, is still a CPU bound affair but all effects rendering takes place on the GPUs. The GPU workload increases depending on the number of effects layered upon one another. Effects rendering appears to be spread over both GPUs, with the compute GPU taking the brunt of the workload in some cases and in others the two appear more balanced.

GPU load while running my 4K CPU+GPU FCP 10.1 workload

Final Cut Pro’s division of labor between CPU and GPUs exemplifies what you’ll need to see happen across the board if you want big performance gains going forward. If you’re not bound by storage performance and want more than double digit increases in performance, your applications will have to take advantage of GPU computing to get significant speedups. There are some exceptions (e.g. leveraging AVX hardware in the CPU cores), but for the most part this heterogeneous approach is what needs to happen. What we’ve seen from FCP shows us that the solution won’t come in the form of CPU performance no longer mattering and GPU performance being all we care about. A huge portion of my workflow in Final Cut Pro is still CPU bound; the GPU is used to accelerate certain components within the application. You need the best of both to build good, high performance systems going forward.

267 Comments


zepi - Wednesday, January 1, 2014

How about virtualization and, for example, VT-d support with multiple GPUs, Thunderbolt, etc.? I.e., running Windows in a virtual machine with half a dozen cores plus another GPU while using the rest for OS X simultaneously?

I'd assume some people would benefit from having both OS X and Windows content creation applications and development environments available to them at the same time. Not to mention gaming in a virtual machine with a dedicated GPU instead of virtual machine overhead / incompatibility etc.

japtor - Wednesday, January 1, 2014

This is something I've wondered about too, for a while now really. I'm kinda iffy on this stuff, but last I checked (admittedly quite a while back) OS X wouldn't work as the hypervisor and/or didn't have whatever necessary VT-d support. I've heard of people using some other OS as the hypervisor with OS X and Windows VMs, but then I think you'd be stuck with hard resource allocation in that case (without restarting at least). Fine if you're using both all the time but a waste of resources if you predominantly use one vs the other.

horuss - Thursday, January 2, 2014

Anyway, I still would like to see some virtualization benchmarks. In my case, I could pretty much make it an ideal home server with external storage while taking advantage of the incredible horsepower to run multiple VMs for my tests, for development, gaming and everything else!

iwod - Wednesday, January 1, 2014

I have been wondering how likely it is that we get a Mac (non-Pro) spec.

Nvidia has realized that the extra die space spent on GPGPU wasn't worth it. After all, their main targets are gamers and gaming benchmarks. So they decided that for Kepler they would have two lines, one for GPGPU and one for the mainstream. Unless they change course again, I think Maxwell will very likely follow the same route. AMD is a little different, since they are betting on OpenCL and Fusion with their APUs, so GPGPU is critical for them.

That could mean Apple diverges its product line, using Nvidia on the non-professional Macs like the iMac and MacBook Pro (urgh..) while continuing to use AMD FirePro on the Mac Pro line.

Last time it was rumoured that Intel wasn't so interested in getting a Broadwell, the 14nm die shrink of Haswell, out for desktop. Mostly because mobile / notebook CPUs have overtaken desktop and will continue to do so. It is much more important to cater to the biggest market. Not to mention die shrinks nowadays are much more about power savings than performance improvements. So Intel could milk the desktop and server markets while continuing to lead in mobile and trying to catch up with a 14nm Atom SoC.

If that is true, the rumor of a Haswell-Refresh on desktop could mean Intel is no longer delaying just server products by a single cycle. They will be doing the same for desktop as well.

That means there could be a Mac Pro with Haswell-EP along with a Mac with a Haswell-Refresh.

And by using Nvidia Gfx instead of AMD, Apple doesn't need to worry about the Mac eating into the Mac Pro market. And there could be less cost involved from not using a Pro Gfx card, only having 3 TB displays, etc.

words of peace - Wednesday, January 1, 2014

I keep thinking that if the MP is a good seller, maybe Apple could enlarge the unit so it contains a four sided heatsink; this could allow for dual CPUs.

Olivier_G - Wednesday, January 1, 2014

Hi,

I don't understand the comment about the lack of HiDPI mode here?

I would think it's simply the last one down the list, listed as 1920x1080 HiDPI. It does make the screen be perceived as such for apps, yet photos and text render at 4x resolution, which is what we're looking for, I believe?

I tried such a mode on my iMac out of curiosity, and while 1280x720 is a bit ridiculously small, it allowed me to confirm it does work since OS X Mavericks. So I do expect the same behaviour to use my 4K monitor correctly with the Mac Pro?

Am I wrong?

Gigaplex - Wednesday, January 1, 2014

The article clearly states that it worked at 1920 HiDPI but the lack of higher resolutions in HiDPI mode is the problem.

Olivier_G - Wednesday, January 1, 2014

Well no, it does not state that at all. I read it again and he did not mention trying the last option in the selector.

LumaForge - Wednesday, January 1, 2014

Anand,

Firstly, thank you very much for such a well researched and well thought out piece of analysis - extremely insightful. I've been testing a 6 core and 12 core nMP all week using real-life post-production workflows and your scientific analysis helps explain why I've gotten good and OK results in some situations and not always seen the kinds of real-life improvements I was expecting in others.

Three follow up questions if I may:

1) DaVinci Resolve 10.1 ... have you done any benchmarking on Resolve with 4K files? ... like FCP X 10.1, BMD have optimized Resolve 10.1 to take full advantage of the split CPU and GPU architecture but I'm not seeing the same performance gains as with FCP X 10.1 .... wondering if you have any ideas on system optimization or the sweet spot? I'm still waiting for my 8 core to arrive and that may be the machine that really takes advantage of the processor speed versus cores trade-off you identify.

2) Thunderbolt 2 storage options? ... external storage I/O also plays a significant role in overall sustained processing performance especially with 4K workflows ... I posted a short article on the Creative Cow SAN section detailing some of my findings (nowhere near as detailed or scientific as your approach I'm afraid) ... I'd be interested to know your recommendations on Tbolt2 storage.

http://forums.creativecow.net/readpost/197/859961

3) IP over Tbolt2 as peer-to-peer networking topology? ... as well as running the nMPs in DAS, NAS and SAN modes I've also been testing IP over Tbolt2 .... only been getting around 500 MB/s sustained throughput between two nMPs ... if you look at the AJA diskwhack tests I posted on Creative Cow you'll see that the READ speeds are very choppy ... looks like a read-ahead caching issue somewhere in the pipeline or lack of 'Jumbo Frames' across the network ... have you played with TCP/IP over Thunderbolt2 yet and come to any conclusions on how to optimize throughput?

Keep up the good work and all the best for 2014.

Cheers,

Neil

modeleste - Wednesday, January 1, 2014

I noticed that the Toshiba 65" 4K TV is about the same price as the Sharp 32". The reviews seem nice.

Does anyone have any idea what the issues would be with using this display?