OCZ Vector 150 (120GB & 240GB) Review

by Kristian Vättö on November 7, 2013 9:00 AM EST - Posted in Storage, SSDs, OCZ, Indilinx, Vector 150

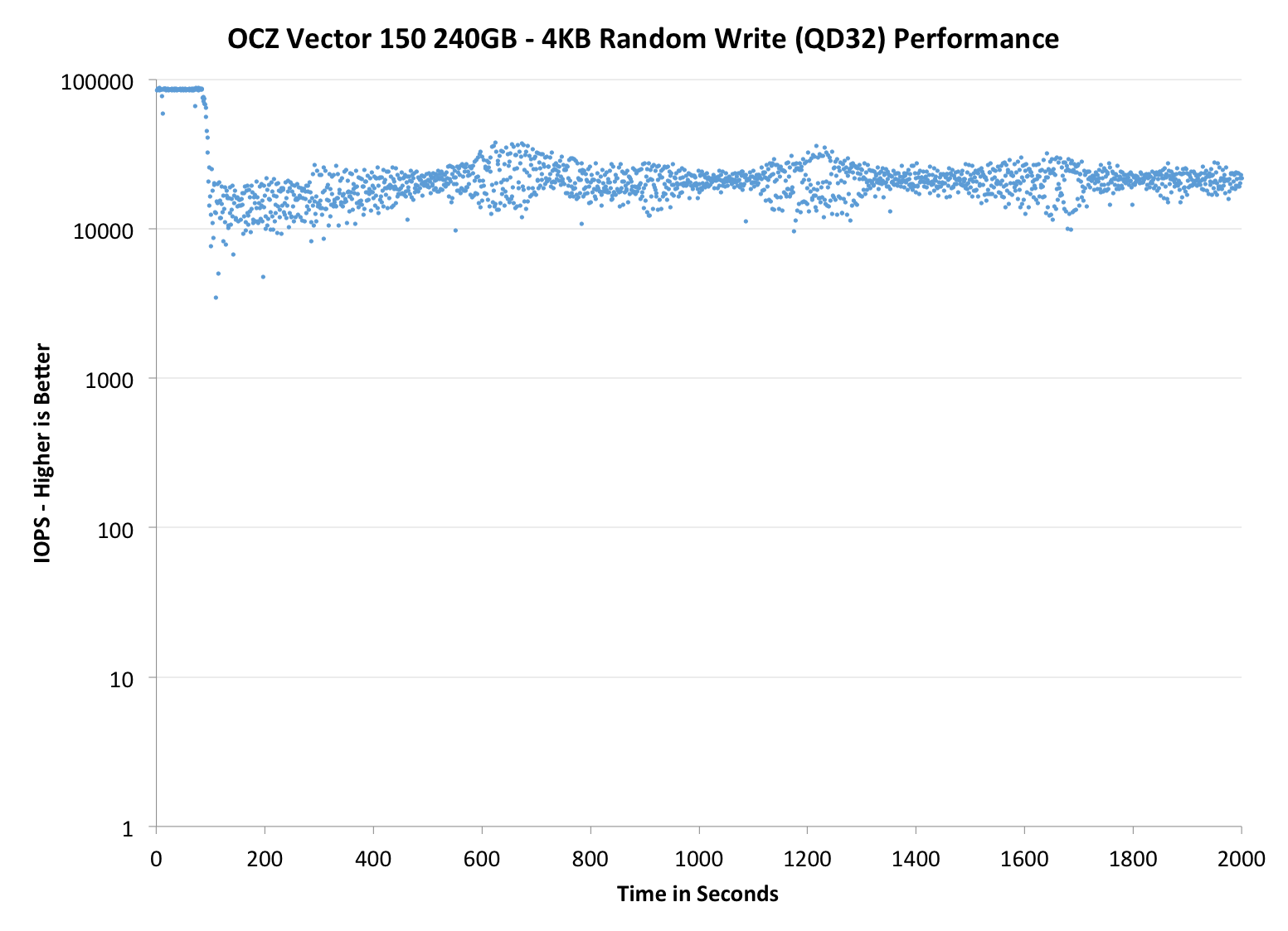

Performance Consistency

In our Intel SSD DC S3700 review, Anand introduced a new method of characterizing performance: looking at the latency of individual operations over time. The S3700 promised a level of performance consistency that was unmatched in the industry, and as a result needed some additional testing to show that. SSDs don't deliver consistent IO latency because every controller inevitably has to do some amount of defragmentation or garbage collection in order to keep operating at high speeds. When and how an SSD decides to run its defrag and cleanup routines directly impacts the user experience. Frequent (borderline aggressive) cleanup generally results in more stable performance, while delaying it can result in higher peak performance at the expense of much lower worst-case performance. The graphs below tell us a lot about the architecture of these SSDs and how they handle internal defragmentation.

To generate the data below we take a freshly secure erased SSD and fill it with sequential data. This ensures that all user-accessible LBAs have data associated with them. Next we kick off a 4KB random write workload across all LBAs at a queue depth of 32 using incompressible data. We run the test for just over half an hour (2000 seconds); that is nowhere near as long as our steady state tests, but it is enough to give a good look at drive behavior once all spare area fills up.
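The review does not name the tool used to drive this workload. As a rough sketch, assuming Linux, the fio benchmark with its libaio engine, and a placeholder device path `/dev/sdX` (none of which come from the review), the fill and the random-write run could be expressed as two fio invocations built in Python. The secure erase step is drive- and tool-specific and is not shown, and the 128KB fill block size is our own choice.

```python
import shlex
from typing import List


def fio_commands(device: str, runtime_s: int = 2000) -> List[List[str]]:
    """Return fio argv lists for (1) a sequential fill and (2) the 4KB QD32
    random-write run. Assumes Linux, libaio and a raw block device path."""
    # Shared options: write straight to the device, bypassing the page cache.
    common = ["fio", f"--filename={device}", "--ioengine=libaio", "--direct=1"]
    # Step 1: write every LBA once with sequential data (128k is our assumption).
    fill = common + ["--name=seqfill", "--rw=write", "--bs=128k", "--iodepth=32"]
    # Step 2: 4KB random writes over the whole device at queue depth 32.
    torture = common + [
        "--name=randwrite", "--rw=randwrite", "--bs=4k", "--iodepth=32",
        "--norandommap", "--randrepeat=0",
        "--refill_buffers",             # new random (incompressible) data per submit
        f"--runtime={runtime_s}", "--time_based",
        "--write_iops_log=randwrite",   # write an IOPS log during the run
        "--log_avg_msec=1000",          # average samples over one-second windows
    ]
    return [fill, torture]


if __name__ == "__main__":
    # Print only: running these against a real device destroys its contents.
    for cmd in fio_commands("/dev/sdX"):
        print(shlex.join(cmd))
```

The IOPS log gives one averaged sample per second, which is the kind of series plotted in the graphs. Running the printed commands overwrites the entire device.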

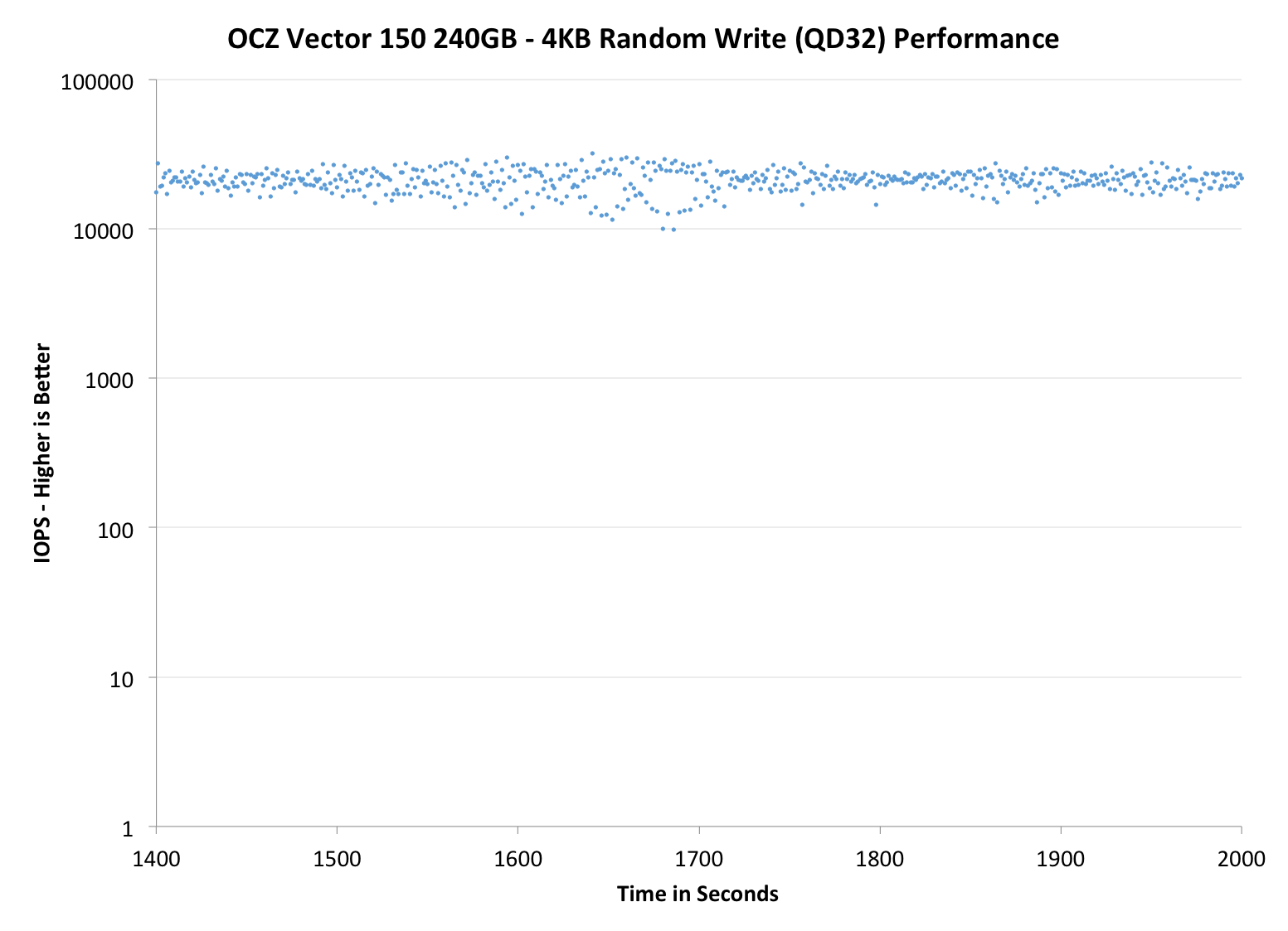

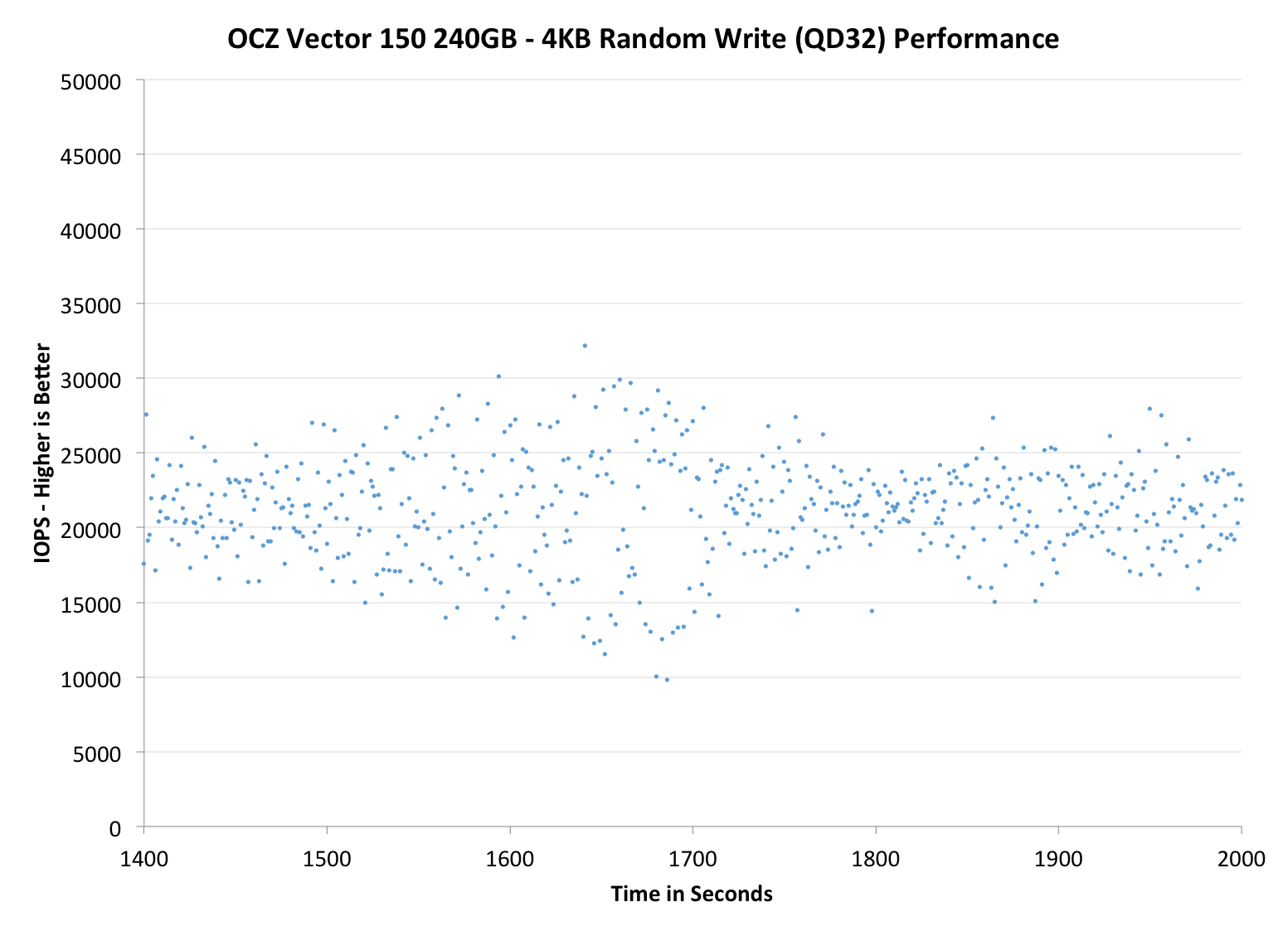

We record instantaneous IOPS every second for the duration of the test and then plot IOPS vs. time and generate the scatter plots below. Each set of graphs features the same scale. The first two sets use a log scale for easy comparison, while the last set of graphs uses a linear scale that tops out at 40K IOPS for better visualization of differences between drives.
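Turning raw completions into a per-second IOPS series is a simple binning step. The sketch below assumes (our assumption, not the review's) a list of completion timestamps in seconds since the start of the run, one per 4KB write; `iops_per_second` is a hypothetical helper name.

```python
import math
from typing import List, Sequence


def iops_per_second(completion_times_s: Sequence[float], duration_s: int) -> List[int]:
    """Count IO completions falling in each one-second bin [t, t + 1)."""
    bins = [0] * duration_s
    for t in completion_times_s:
        second = math.floor(t)
        if 0 <= second < duration_s:  # ignore completions outside the run
            bins[second] += 1
    return bins


# Each bin is one dot of an IOPS-vs-time scatter plot: (second, bins[second]).
samples = iops_per_second([0.10, 0.20, 1.50, 2.90, 2.95], duration_s=3)
print(samples)  # [2, 1, 2]
```

Plotting is left out to keep the example dependency-free; each (second, count) pair would be drawn on either a log axis or a linear axis capped at 40K IOPS.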

The high-level testing methodology remains unchanged from our S3700 review. Unlike in previous reviews, however, we vary the percentage of the drive that gets filled/tested depending on the amount of spare area we're trying to simulate. The buttons are labeled with the advertised user capacity had the SSD vendor decided to use that specific amount of spare area. If you want to replicate this on your own, all you need to do is create a partition smaller than the total capacity of the drive and leave the remaining space unused to simulate a larger amount of spare area. The partitioning step isn't absolutely necessary in every case, but it's an easy way to make sure you never exceed your allocated spare area. It's a good idea to do this from the start (e.g. secure erase, partition, then install Windows), but if you are working backwards you can always create the spare area partition, format it to TRIM it, then delete the partition. Finally, this method of creating spare area works on the drives we've tested here, but not all controllers are guaranteed to behave the same way.
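The spare-area arithmetic is easy to get wrong because raw NAND is counted in binary units while advertised capacity is decimal. The sketch below assumes spare area is measured as a fraction of raw NAND and that a 240GB-class drive carries 256GiB of raw NAND; both are our assumptions, and the helper names are hypothetical. Under them, a 240GB drive works out to roughly 12.7% spare area, in line with the ~12% over-provisioning figure this review uses for the Vector 150 and Neutron.

```python
GIB = 2**30  # binary gigabyte (raw NAND is typically sized in powers of two)
GB = 10**9   # decimal gigabyte (advertised user capacity)


def spare_fraction(raw_nand_bytes: int, user_bytes: int) -> float:
    """Share of raw NAND the host can never write to (assumed definition)."""
    return (raw_nand_bytes - user_bytes) / raw_nand_bytes


def partition_bytes_for_spare(raw_nand_bytes: int, user_bytes: int,
                              target_spare: float) -> int:
    """Partition size that leaves `target_spare` of the raw NAND unwritten."""
    size = int(raw_nand_bytes * (1.0 - target_spare))
    if size > user_bytes:
        # The factory spare area already exceeds the requested target.
        raise ValueError("target is below the factory spare area")
    return size


raw = 256 * GIB  # assumed raw NAND behind a 240GB drive
print(round(spare_fraction(raw, 240 * GB), 3))            # 0.127
print(partition_bytes_for_spare(raw, 240 * GB, 0.25))     # 206158430208
```

Partitioning roughly 206GB of the 240GB drive and leaving the rest untouched would, under these assumptions, correspond to a 25% spare-area configuration.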

The first set of graphs shows the performance data over the entire 2000 second test period. In these charts you'll notice an early period of very high performance followed by a sharp dropoff. What you're seeing in that case is the drive allocating new blocks from its spare area, then eventually using up all free blocks and having to perform a read-modify-write for all subsequent writes (write amplification goes up, performance goes down).
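The drop-off can be reproduced qualitatively with a toy model. The sketch below is a page-mapped flash translation layer with greedy garbage collection, our own simplification rather than OCZ's or any vendor's algorithm; the block and page counts and the `user_fraction` parameter are made up for illustration. It fills every user LBA sequentially, issues random single-page overwrites, and reports write amplification (flash writes per host write). Once free blocks run out, each new write forces the collector to copy still-valid pages out of a victim block before erasing it.

```python
import random


def simulate_write_amplification(num_blocks: int, pages_per_block: int,
                                 user_fraction: float, host_writes: int,
                                 seed: int = 0) -> float:
    """Toy page-mapped FTL with greedy GC (illustrative, not a real firmware).

    Fills every user LBA sequentially, then issues uniformly random
    single-page overwrites and returns flash writes / host writes for
    the random-write phase only.
    """
    num_lbas = int(num_blocks * pages_per_block * user_fraction)
    if num_lbas > (num_blocks - 3) * pages_per_block:
        raise ValueError("need at least three blocks of spare area")
    rng = random.Random(seed)
    valid = [set() for _ in range(num_blocks)]  # live LBAs stored in each block
    written = [0] * num_blocks                  # pages programmed since last erase
    location = {}                               # LBA -> block holding its live copy
    free = list(range(num_blocks))
    active = free.pop()
    flash_writes = 0

    def program(lba: int) -> None:
        nonlocal active, flash_writes
        if written[active] == pages_per_block:  # open a fresh block when full
            active = free.pop()
        old = location.get(lba)
        if old is not None:
            valid[old].discard(lba)             # previous copy becomes stale
        valid[active].add(lba)
        location[lba] = active
        written[active] += 1
        flash_writes += 1

    def collect_garbage() -> None:
        # Greedy: pick the full block with the fewest live pages, copy those
        # pages elsewhere (the read-modify-write cost), then erase it.
        candidates = [b for b in range(num_blocks)
                      if b != active and written[b] == pages_per_block]
        victim = min(candidates, key=lambda b: len(valid[b]))
        for lba in list(valid[victim]):
            program(lba)
        written[victim] = 0
        free.append(victim)

    for lba in range(num_lbas):  # sequential fill of all user LBAs
        program(lba)
    flash_writes = 0             # count only the random-write phase
    for _ in range(host_writes):
        while len(free) < 2:     # keep one block in reserve for GC copies
            collect_garbage()
        program(rng.randrange(num_lbas))
    return flash_writes / host_writes


for fraction in (0.75, 0.90):
    print(fraction, round(simulate_write_amplification(64, 32, fraction, 20000), 2))
```

With more of the flash held back as spare area (a lower `user_fraction`), victim blocks hold fewer live pages on average, so each reclaimed block costs fewer copies. In this toy model, that is why extra over-provisioning lowers write amplification.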

The second set of graphs zooms in to the beginning of steady state operation for the drive (t=1400s). The third set also looks at the beginning of steady state operation but on a linear performance scale.

[Graph set 1: 4KB random write IOPS vs. time over the full 2000-second test, log scale. Drives: OCZ Vector 150 240GB, OCZ Vector 256GB, Corsair Neutron 240GB, SanDisk Extreme II 480GB, Samsung SSD 840 Pro 256GB; configurations: Default, 25% OP]

Performance consistency is simply outstanding. OCZ told us that they focused heavily on IO consistency in the Vector 150, and the results speak for themselves. Obviously the added over-provisioning helps, but if you compare it to the Corsair Neutron with the same ~12% over-provisioning, the Vector 150 wins. The Neutron and other LAMD-based SSDs have been among the most consistent SSDs to date, so the Vector 150 beating the Neutron is certainly a notable milestone for OCZ. However, if you increase the over-provisioning to 25%, the Vector 150's advantage doesn't scale. In fact, with 25% over-provisioning the original Vector is slightly more consistent than the Vector 150, but both are definitely among the most consistent drives.
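Consistency can also be summarised numerically rather than read off a scatter plot. A minimal sketch, assuming a per-second IOPS list that starts at t=0 and using the t=1400s steady-state start from the graphs; `consistency_summary`, the `floor` threshold and the synthetic trace are hypothetical, not measured data. Reporting the minimum alongside the mean matters because a single slow second can change how two drives rank even when their averages are close.

```python
import statistics
from typing import Dict, Sequence


def consistency_summary(iops: Sequence[float], start_s: int = 1400,
                        floor: float = 5000.0) -> Dict[str, float]:
    """Summarise per-second IOPS from `start_s` onward (steady state)."""
    window = list(iops[start_s:])
    if not window:
        raise ValueError("no samples at or after start_s")
    mean = statistics.fmean(window)
    return {
        "min": min(window),                                    # worst single second
        "mean": mean,
        "cv": statistics.pstdev(window) / mean if mean else float("inf"),  # relative spread
        "below_floor": sum(1 for x in window if x < floor),   # seconds under the floor
    }


# Synthetic trace: a fast fresh-drive phase, then a short steady-state window.
trace = [40000.0] * 1400 + [6000.0, 7000.0, 3500.0, 6500.0]
summary = consistency_summary(trace)
print(summary["min"], summary["mean"], summary["below_floor"])  # 3500.0 5750.0 1
```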

[Graph set 2: 4KB random write IOPS vs. time from the start of steady state (t=1400s), log scale; same drives and configurations as the first set]

[Graph set 3: 4KB random write IOPS vs. time from t=1400s, linear scale topping out at 40K IOPS; same drives and configurations as the first set]

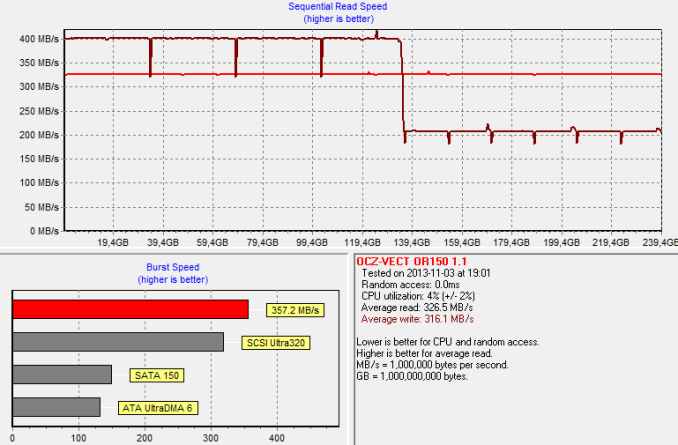

TRIM Validation

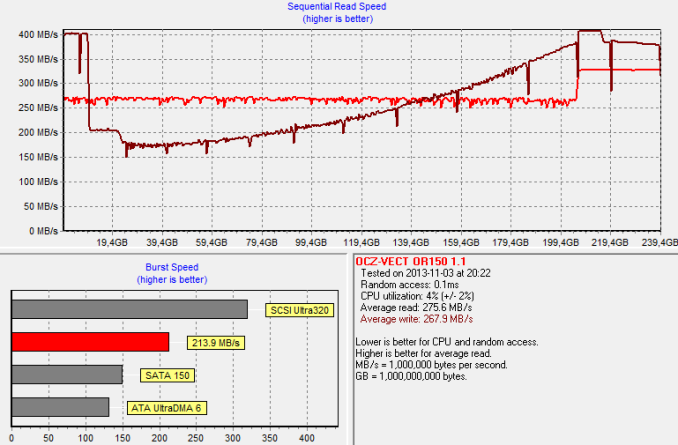

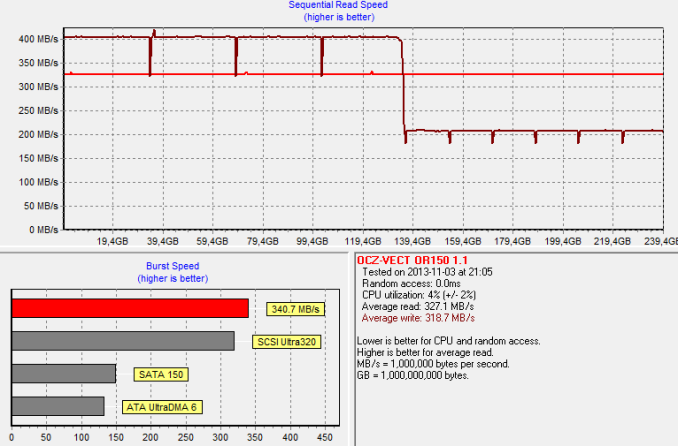

Above is an HD Tach graph I ran on a secure erased drive to get the baseline performance. The graph below is from a run that I ran after our performance consistency test (first filled with sequential data and then hammered with 4KB random writes at queue depth of 32 for 2000 seconds):

As always, performance degrades, although the Vector 150 does pretty well at recovering performance when you write sequential data to the drive. Finally, I TRIM'ed the entire volume and reran HD Tach to make sure TRIM is functional.

It is. You can also see the impact of OCZ's "performance mode" in the graphs. Once 50% of the LBAs have been filled, the drive reorganizes the data, which causes the performance degradation. If you leave the drive idle after filling more than half of it, performance returns close to its brand-new state within minutes. Our internal tests with the original Vector have shown that the data reorganization takes less than 10 minutes, so it's nothing to be concerned about. The HD Tach graphs paint a much worse picture of the situation than the reality.
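Why TRIM restores performance can be illustrated with a toy model. Assuming a page-mapped FTL (our assumption; the review does not describe the Vector 150's internals), garbage collection must copy every page it believes is live before erasing a block. TRIM tells the controller which LBAs no longer hold data, so those pages stop counting as live. `gc_copy_cost` is a hypothetical helper, and OCZ's performance mode is not modeled.

```python
from typing import Dict, Set


def gc_copy_cost(block_contents: Dict[int, Set[int]],
                 trimmed: Set[int]) -> Dict[int, int]:
    """Live pages GC must relocate from each block once `trimmed` LBAs are
    known to hold no data. Without TRIM, `trimmed` is empty, so pages the
    filesystem has already freed still look live to the controller."""
    return {block: len(lbas - trimmed) for block, lbas in block_contents.items()}


blocks = {0: {0, 1, 2, 3}, 1: {4, 5, 6, 7}}   # toy layout: 4 LBAs per block
print(gc_copy_cost(blocks, set()))            # {0: 4, 1: 4}
print(gc_copy_cost(blocks, set(range(8))))    # {0: 0, 1: 0}
```

Trimming the whole volume corresponds to the second call: every block can be erased without copying anything, so subsequent writes behave much like they do on a freshly erased drive.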

59 Comments


Kristian Vättö - Friday, November 8, 2013

With very light usage, I don't think there is any reason to pay extra for an enthusiast class SSD, let alone enterprise-grade. Even the basic consumer SSDs (like Samsung SSD 840 EVO for instance) should outlive the other components in your system.

LB-ID - Friday, November 8, 2013

OCZ as a company has, time and again, proven their complete contempt for their customer base. Release something in a late alpha, buggy state, berate their customers for six months while they dutifully jump through all the hoops trying to fix it, then many months down the road release a bios that makes it marginally useful.

Not going to be an abused, unpaid beta tester for this company. Never again. Will lift a glass to toast when their poor products and crappy support ultimately send them the way of the dodo.

profquatermass - Friday, November 8, 2013

Then again I remember Intel doing the exact same thing with their new SSDs.

Life Tip: Never buy any device on day #1. Wait a few weeks/months until real-life bugs are ironed out.

Kurosaki - Saturday, November 9, 2013

Where is the review of Intel's 3500 series? And why isn't Bench getting any love? :-(

'nar - Sunday, November 10, 2013

I am surprised by all of the negative feedback here; they must all be in their own worlds. Everyone can only base their opinion on their own experiences, but individuals lack the statistical quantity to make an educated determination. I have been using OCZ drives for three years now. The cause of failure in the only ones that have failed has been isolated and corrected: a "corner issue" where a power event corrupts the firmware and renders the drive inert, which is fixed in the latest firmware.

1. REVO is "bleeding edge" hardware, expect to bleed

2. Agility is cheap crap, throw it away

3. Vertex and Vector lines have been stable

It is interesting how many complain, yet do not provide specifics. Which model drive? What motherboard? What firmware version? I do not care for the opinions of strangers, you need to back it up with details. People get frustrated by inconvenience, and often prefer to complain and replace rather than correct the problem. As I said, I use OCZ on most of my computers, but I install Intel and Plextor for builds I sell. Reliability and toolbox are more practical for most users, and Intel is among, if not the top, of the most reliable drives.

hero4hire - Monday, November 11, 2013

Much of the hate has to do with how consumers were treated during the support process. I can only imagine that if a painless, fast exchange for failed drives had been the status quo, we wouldn't have the vitriol posted here. OCZ failed at least twice, with hardware and then with poor support.

lovemyssd - Sunday, November 24, 2013

Right. No doubt some of these comments are from those playing with their stock price. That's why genuine users and those who know can't make sense of the bashing.

KAlmquist - Sunday, November 10, 2013

The review states that the Vector 150 is better than the Corsair Neutron in terms of consistency, but the graphs indicate otherwise. At around the 100 second mark, the Vector 150 drops to around 3500 IOPS for one second, whereas the Neutron is always above 6000 IOPS. So it seems there is a mistake somewhere.

CBlade - Wednesday, June 4, 2014

How about that Toshiba second generation 19nm NAND that Kristian mentioned? How can we identify the part number on this new SSD? I want to know if the NAND is better or not.