The AMD Radeon R9 290X Review

by Ryan Smith on October 24, 2013 12:01 AM EST

Bioshock Infinite

Bioshock Infinite is Irrational Games’ latest entry in the Bioshock franchise. Though it’s based on Unreal Engine 3 – making it our obligatory UE3 game – Irrational has added a number of effects that make the game rather GPU-intensive on its highest settings. As an added bonus it includes a built-in benchmark composed of several scenes, a rarity for UE3 games, so we can easily get a good representation of what Bioshock’s performance is like.

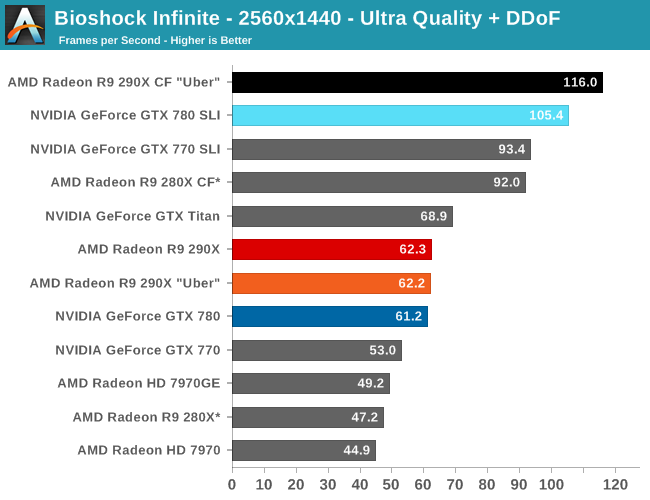

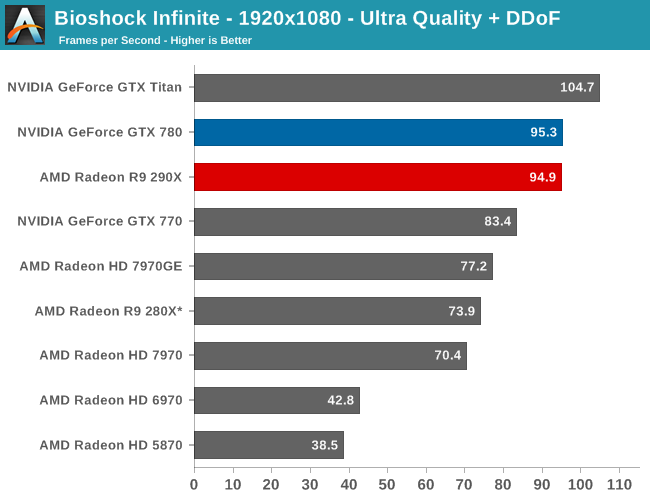

The first of the games AMD allowed us to publish results for, Bioshock is actually a straight-up brawl between the 290X and the GTX 780 at 2560. The 290X’s performance advantage here is just 2%, much smaller than the earlier leads it enjoyed, essentially leaving the two cards tied. That also makes this one of the few games where the 290X can’t match GTX Titan. At 2560 everything in the 290X/GTX 780 class or better can beat 60fps despite the heavy computational load of the depth of field effect, which makes the 290X the first single-GPU card from AMD that can pull this off.

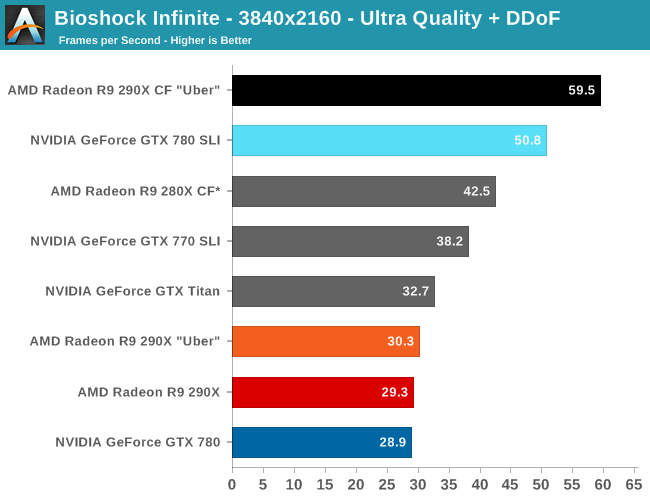

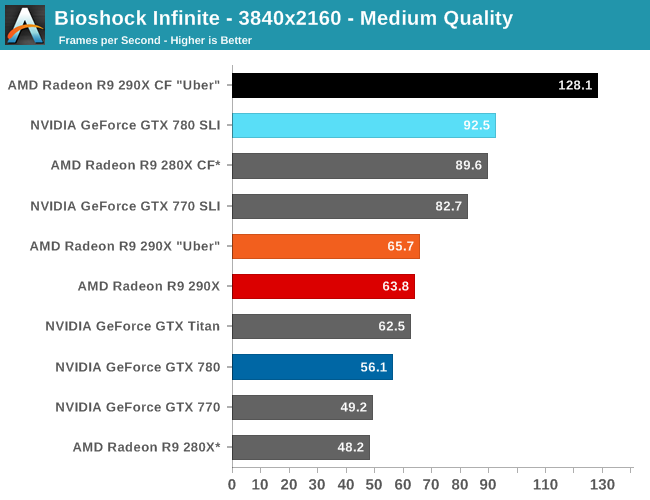

Meanwhile at 4K things end up being rather split depending on the quality setting we’re looking at. At Ultra quality the 290X and GTX 780 are again tied, but neither is above 30fps. Drop down to Medium quality, however, and we get framerates above 60fps again, while at the same time the 290X finally pulls away from the GTX 780, beating it by 14% and even edging out GTX Titan. As with so many games we’re looking at today, the loss in quality cannot justify the higher resolution, in our opinion, but it presents another scenario where the 290X demonstrates superior 4K performance.

For no-compromises 4K gaming we once again turn our gaze towards the 290X CF and GTX 780 SLI, where AMD does very well for itself. While AMD and NVIDIA are nearly tied at the single GPU level – keep in mind we’re in uber mode for CF, so the uber 290X has a slight performance edge in single GPU mode – with multiple GPUs in play AMD sees better scaling from AFR and consequently better overall performance. At 95% the 290X CF achieves a nearly perfect scaling factor here, while the GTX 780 SLI achieves only 65%. Curiously this is better for AMD and worse for NVIDIA than the scaling factors we see at 2560, which are 86% and 72% respectively.
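For readers who want to reproduce these scaling figures from their own runs, here is a minimal Python sketch. It assumes the scaling factor means the percentage of extra performance gained by adding the second GPU, which is how the numbers above read. The function name `afr_scaling_percent` is hypothetical, and the frame rates in the example are placeholders rather than results from this review.

```python
def afr_scaling_percent(single_gpu_fps: float, dual_gpu_fps: float) -> float:
    """Return the performance gained from a second GPU, as a percentage.

    100.0 would mean perfect scaling (exactly double the single-GPU frame rate).
    """
    if single_gpu_fps <= 0:
        raise ValueError("single_gpu_fps must be positive")
    return (dual_gpu_fps / single_gpu_fps - 1.0) * 100.0


if __name__ == "__main__":
    # Placeholder frame rates for illustration only, not measured data.
    single_fps = 40.0
    dual_fps = 78.0
    print(f"{afr_scaling_percent(single_fps, dual_fps):.1f}% scaling")
```

Under this definition a card pair that goes from 40fps to 78fps scales at 95%, while a pair that shows no gain at all scales at 0%.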

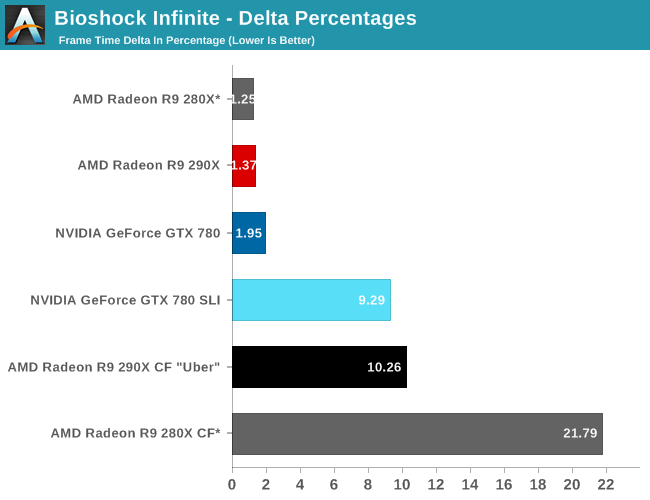

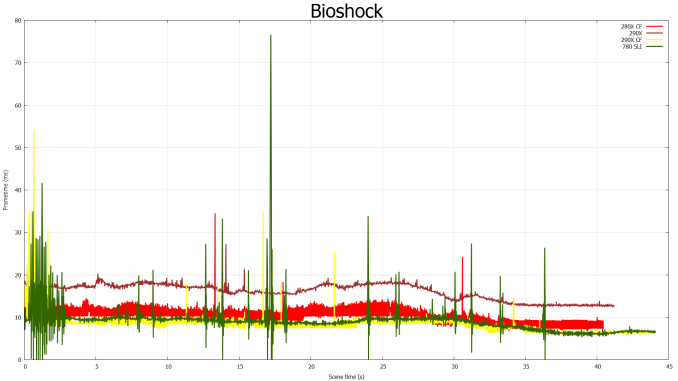

Moving on to our FCAT measurements, it’s interesting to see just how greatly improved the frame pacing is for the 290X versus the 280X, even with the frame pacing fixes in place for the 280X. Whereas the 280X has deltas in excess of 21%, the 290X brings those deltas down to 10%, better than halving the variance in this game. Consequently the frame time consistency we’re seeing goes from being acceptable but measurably worse than NVIDIA’s consistency to essentially equal. In fact 10% is outright stunning for a multi-GPU setup, as we rarely achieve frame times this consistent on those setups.
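As a rough illustration of what a delta percentage captures, the sketch below assumes it is the average absolute difference between consecutive frame times, divided by the average frame time. That definition is an assumption here, since this page does not spell it out, and the function name `delta_percentage` and the sample frame times are hypothetical placeholders rather than FCAT output.

```python
from statistics import mean
from typing import Sequence


def delta_percentage(frame_times_ms: Sequence[float]) -> float:
    """Average frame-to-frame time change as a percentage of the average frame time.

    Assumed definition: mean(|t[i+1] - t[i]|) / mean(t) * 100.
    """
    if len(frame_times_ms) < 2:
        raise ValueError("need at least two frame times")
    # Absolute change between each frame and the one that follows it.
    deltas = [abs(b - a) for a, b in zip(frame_times_ms, frame_times_ms[1:])]
    return mean(deltas) / mean(frame_times_ms) * 100.0


if __name__ == "__main__":
    # Placeholder frame times in milliseconds, for illustration only.
    sample = [20.0, 22.0, 20.0, 18.0, 20.0]
    print(f"{delta_percentage(sample):.1f}% delta")
```

A perfectly paced run, where every frame takes the same time, would score 0% under this measure, and larger swings between adjacent frames push the figure up.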

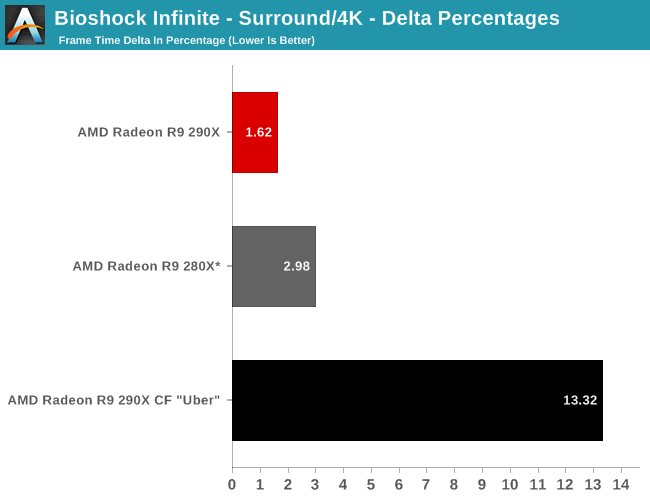

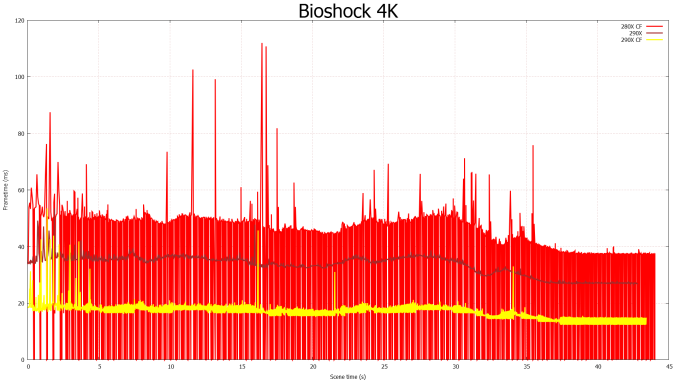

Finally for 4K gaming our variance increases a bit, but not immensely so. Despite the heavier rendering workload and greater demands on moving these large frames around, the delta percentages keep to 13%.

396 Comments


Pontius - Tuesday, October 29, 2013 - link

Some good points Jian, I would like to see side by side comparisons as well. However, I've seen some studies that implement the same algorithm in both OpenCL and CUDA and the results are mostly the same if properly implemented. I've been doing GPU computing development in my spare time over the last year and OpenCL does have one advantage over CUDA that is the reason I use it: run-time compilation. If at run-time you are working with various data sets that involve checking many conditionals, you can compile a kernel with the conditionals stripped out and get a good performance increase since GPUs aren't good at conditionals like CPUs are. But in the end, I agree, more apples to apples comparisons are needed.

azixtgo - Thursday, October 24, 2013 - link

the titan is irrelevant. I can't figure why the hell people think a $1000 GPU is even worth mentioning. It's not for sane people to buy and definitely not a genuine effort by nvidia. They saw an opportunity and went for it

Bloodcalibur - Thursday, October 24, 2013 - link

It's $350 more because of its compute performance ugh. It benchmarks 5-6x more than the 780 on DGEMM. This is why the card is priced a whopping $350 more than their own 780 which is only a few FPS lower on most games and setups. The only people that should've bought a Titan were people who both GAME and do a little bit of computing. To compare it to the 290x is what retarded ignorant people are doing. Compare it to the 780 which it does beat out. Now we have to wait for nvidia's response.

Cellar Door - Thursday, October 24, 2013 - link

Read the review before trolling. It's $549

azixtgo - Thursday, October 24, 2013 - link

technically it's a good value. I think. I despise the higher prices as well but who really knows the value of the product. Comparing a GPU to a lot of things (like a ps4 that has a million other components or a complete PC ), maybe not. but comparing this to nvidia... well...

Pounds - Thursday, October 24, 2013 - link

Did the nvidia fanboy get his feelings hurt?

superflex - Thursday, October 24, 2013 - link

Yes, and his wallet got shredded. Validation is a bitch.

piroroadkill - Thursday, October 24, 2013 - link

Huh? You can go over to Newegg right now and buy one for $580.

Wreckage - Thursday, October 24, 2013 - link

He did not say "Mantle" 7 times so he might not be from their PR department. Either way the 290 is hot, loud, power hungry and nothing new in the performance department. It's cheap but that won't last. Looks like we will have to wait for Maxwell for something truly new.

chrnochime - Thursday, October 24, 2013 - link

You OTOH look like you can't RTFA.