The AMD Radeon R9 290X Review

by Ryan Smith on October 24, 2013 12:01 AM EST - Posted in GPUs, AMD, Radeon, Hawaii, Radeon 200

Meet The Radeon R9 290X

Now that we’ve had a chance to discuss the features and the architecture of GCN 1.1 and Hawaii, we can finally get to the hardware itself: AMD’s reference Radeon R9 290X.

Other than the underlying GPU and the livery, the reference 290X is actually not a significant deviation from the reference design for the 7970. There are some changes that we’ll go over, but for better or for worse AMD’s reference design is not much different from the $550 card we saw almost 2 years ago. For cooling in particular this means AMD is delivering a workable cooler, but it’s not one that’s going to compete with the efficient-yet-extravagant coolers found on NVIDIA’s GTX 700 series.

Starting as always from the top, the 290X measures in at 10.95”. The PCB itself is a bit shorter at 10.5”, but like the 7970 the metal frame/baseplate that is affixed to the board adds a bit of length to the complete card. Meanwhile AMD’s shroud sports a new design, one which is shared across the 200 series. Functionally it’s identical to the 7970, being made of similar material and ventilating in the same manner.

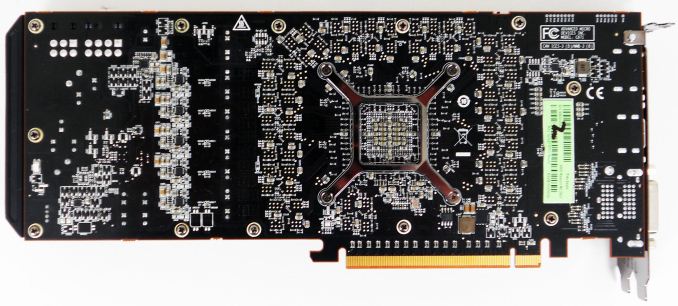

Flipping over to the back of the card quickly, you won’t find much here. AMD has placed all 16 RAM modules on the front of the PCB, so the back of the PCB is composed of resistors, pins, mounting brackets, and little else. AMD continues to go without a backplate here as the backplate is physically unnecessary and takes up valuable breathing room in Crossfire configurations.

Pulling off the top of the shroud, we can see in full detail AMD’s cooling assembly, including the heatsink, radial fan, and the metal baseplate. Other than angling the far side of the heatsink, this heatsink is essentially unchanged from the one on the 7970. AMD is still using a covered aluminum block heatsink designed specifically for use in blower designs, which runs most of the length of the card between the fan and PCIe bracket. Connecting the heatsink to the GPU is an equally large vapor chamber cooler, which is in turn mounted to the GPU using AMD’s screen printed, high performance phase change TIM. Meanwhile the radial fan providing airflow is the same 75mm diameter fan we first saw in the 7970. Consequently the total heat capacity of this cooler will be similar, but not identical, to the one on the 7970; with AMD running the 290X at a hotter 95C versus the 80C average of the 7970, this same cooler is actually able to move more heat despite being otherwise no more advanced.
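Why a hotter target lets the same cooler move more heat can be sketched with a first-order thermal model, where heat moved is proportional to the temperature difference between the GPU and the intake air divided by the cooler's thermal resistance. This is a simplified illustration, not AMD's thermal model: the 25C ambient temperature and the constant thermal resistance are assumptions, and in practice thermal resistance also changes with fan speed.

```python
# Simplified steady-state model: heat moved Q = (T_gpu - T_ambient) / R_th.
# ASSUMPTIONS (not from the review): a constant cooler thermal resistance R_th
# and a 25C ambient temperature; real coolers vary with fan speed and airflow.

AMBIENT_C = 25.0  # assumed ambient/intake air temperature


def relative_heat_capacity(t_new_c: float, t_old_c: float,
                           ambient_c: float = AMBIENT_C) -> float:
    """Ratio of heat moved at t_new_c vs t_old_c by the same cooler (same R_th)."""
    return (t_new_c - ambient_c) / (t_old_c - ambient_c)


ratio = relative_heat_capacity(95.0, 80.0)
print(f"290X @ 95C vs 7970 @ 80C: {ratio:.2f}x the heat for the same cooler")
```

Under these assumptions the R_th term cancels out of the ratio, so only the temperature targets and the assumed ambient matter: a 70C delta versus a 55C delta works out to roughly 27% more heat moved.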

Moving on, though we aren’t able to take apart the card for pictures (we need it intact for future articles), we wanted to quickly go over the power and RAM specs for the 290X. For power delivery AMD is using a traditional 5+1 power phase setup, with power delivery being driven by their newly acquired IR 3567B controller. This will be plenty to drive the card at stock, but hardcore overclockers looking to attach the card to water or other exotic cooling will likely want to wait for something with a more robust power delivery system. Meanwhile despite the 5GHz memory clockspeed for the 290X, AMD has actually equipped the card with everyone’s favorite 6GHz Hynix R0C modules, so memory controller willing there should be quite a bit of memory overclocking headroom to play with. 16 of these modules are located around the GPU on the front side of the PCB, with thermal pads connecting them to the metal baseplate for cooling.
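To put that headroom in numbers, the sketch below compares the 5GHz stock data rate against the 6GHz rating of the modules and converts both into peak bandwidth. The 512-bit memory bus width is the R9 290X's published specification and is not stated in this section; whether a given card's memory controller can actually reach the module rating is not guaranteed.

```python
# Memory headroom and bandwidth sketch.
# Stock and rated data rates come from the review; the 512-bit bus width is
# the R9 290X's published spec and is not stated in this section.
BUS_WIDTH_BITS = 512
STOCK_GBPS = 5.0   # effective per-pin data rate at stock ("5GHz")
RATED_GBPS = 6.0   # rating of the Hynix R0C modules ("6GHz")


def bandwidth_gb_per_s(data_rate_gbps: float,
                       bus_width_bits: int = BUS_WIDTH_BITS) -> float:
    """Peak bandwidth in GB/s: per-pin rate (Gb/s) x bus width (bits) / 8 bits per byte."""
    return data_rate_gbps * bus_width_bits / 8


headroom = RATED_GBPS / STOCK_GBPS - 1
print(f"Rated headroom over stock: {headroom:.0%}")
print(f"Stock: {bandwidth_gb_per_s(STOCK_GBPS):.0f} GB/s, "
      f"at module rating: {bandwidth_gb_per_s(RATED_GBPS):.0f} GB/s")
```

That is 20% of rated headroom, or roughly 320GB/s at stock versus 384GB/s if the memory could be run at the modules' full rating.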

Perhaps the biggest change for the 290X as opposed to the 7970 is AMD’s choice for balancing display connectivity versus ventilation. With the 6970 AMD used a half-slot vent to fit a full range of DVI, HDMI, and DisplayPorts, only to drop the second DVI port on the 7970 and thereby utilize a full slot vent. With the 290X AMD has gone back once more to a stacked DVI configuration, which means the vent is once more back down to a bit over half a slot in size. At this point both AMD and NVIDIA have successfully shipped half-slot vent cards at very high TDPs, so we’re not the least bit surprised that AMD has picked display connectivity over ventilation, as a half-slot vent is proving to be plenty capable in these blower designs. Furthermore based on NVIDIA and AMD’s latest designs we wouldn’t expect to see full size vents return for these single-GPU blowers in the future, at least not until someone finally gets rid of space-hogging DVI ports entirely.

Top: R9 290X. Bottom: 7970

With that in mind, the display connectivity for the 290X utilizes AMD’s new reference design of 2x DL-DVI-D, 1x HDMI, and 1x DisplayPort. Compared to the 7970 AMD has dropped the two Mini DisplayPorts for a single full-size DisplayPort, and brought back the second DVI port. Note that unlike some of AMD’s more recent cards these are both physically and electrically DL-DVI ports, so the card can drive 2 DL-DVI monitors out of the box; the second DVI port isn’t just for show. The single DVI port on the 7970, coupled with the high cost of DisplayPort to DL-DVI adapters, made the 7970 an unpopular choice in some corners of the world, so this change should make DVI users happy, particularly those splurging on the popular and cheap 2560x1440 Korean IPS monitors (the cheapest of which offer nothing but DVI).

But as a compromise of this design – specifically, making the second DVI port full DL-DVI – AMD had to give up the second DisplayPort, which is why the full sized DisplayPort is back. This does mean that compared to the 7970 the 290X has lost some degree of display flexibility, however, as DisplayPorts allow for both multi-monitor setups via MST and for easy conversion to other port types via DVI/HDMI/VGA adapters. With this configuration it’s not possible to drive 6 fully independent monitors on the 290X; the DisplayPort will get you 3, and the DVI/HDMI ports the other 3, but due to the clock generator limits on the 200 series the 3 monitors on the DVI/HDMI ports must be timing-identical, precluding them from being fully independent. On the other hand this means that the PC graphics card industry has effectively settled the matter of DisplayPort versus Mini DisplayPort, with DisplayPort winning by now being the port style of choice for both AMD and NVIDIA. It’s not how we wanted this to end up – we still prefer Mini DisplayPort as it’s equally capable but smaller – but at least we’ll now have consistency between AMD and NVIDIA.
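The six-monitor restriction can be expressed as a simple validity check. This is a hypothetical model of the rule as described here, not an AMD driver API: the class names, port labels, and the choice to enforce matching timings only when all three DVI/HDMI ports are in use are illustrative assumptions.

```python
from dataclasses import dataclass

# Hypothetical model of the 290X output rule described above; names and port
# labels are illustrative, not an AMD API.


@dataclass(frozen=True)
class Timing:
    width: int
    height: int
    refresh_hz: int


@dataclass(frozen=True)
class Display:
    port: str  # "DP", "DVI1", "DVI2", or "HDMI"
    timing: Timing


LEGACY_PORTS = {"DVI1", "DVI2", "HDMI"}
MAX_DP_MST = 3  # the single DisplayPort drives up to 3 monitors via MST


def layout_is_valid(displays: list[Display]) -> bool:
    dp = [d for d in displays if d.port == "DP"]
    legacy = [d for d in displays if d.port in LEGACY_PORTS]
    if len(dp) + len(legacy) != len(displays):
        return False  # unknown port name
    if len(dp) > MAX_DP_MST:
        return False  # more monitors than MST can drive
    if len({d.port for d in legacy}) != len(legacy):
        return False  # each DVI/HDMI port drives exactly one monitor
    # Assumed rule: with all three DVI/HDMI ports in use, timings must match.
    if len(legacy) == 3 and len({d.timing for d in legacy}) != 1:
        return False
    return True


t1440 = Timing(2560, 1440, 60)
t1080 = Timing(1920, 1080, 60)
six = [Display("DP", t1080)] * 3 + [
    Display("DVI1", t1440), Display("DVI2", t1440), Display("HDMI", t1440)]
print(layout_is_valid(six))  # True: the DVI/HDMI trio shares one timing
print(layout_is_valid(six[:5] + [Display("HDMI", t1080)]))  # False: mismatched trio
```

In this model, six monitors are possible, but only three of them (the MST group) can each run an arbitrary timing. That is the sense in which the configuration is not fully independent.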

Moving on, AMD’s dual BIOS functionality is back once again for the 290X, and this time it has a very explicit purpose. The 290X will ship with two BIOSes, a “quiet” BIOS and an “uber” BIOS, selectable with the card’s BIOS switch. The difference between the two BIOSes is that the quiet BIOS ships with a maximum fan speed of 40%, while the uber BIOS ships with a maximum fan speed of 55%. The quiet BIOS is the default BIOS for the 290X, and based on our testing will hold the noise levels of the card equal to or less than those of the reference 7970.

AMD Radeon Family Cooler Comparison: Noise & Power

| Card | Load Noise - Gaming | Estimated TDP |
|---|---|---|
| Radeon HD 7970 | 53.5dB | 250W |
| Radeon R9 290X Quiet | 53.3dB | 300W |
| Radeon R9 290X Uber | 58.9dB | 300W |

However because of the high power consumption and heat generation of the underlying Hawaii GPU, in quiet mode the card is unable to sustain its full 1000MHz boost clock for more than a few minutes; there simply isn’t enough cooling occurring at 40% to move 300W of heat. We’ll look at power, temp, and noise in full a bit later in our benchmark section, but average sustained clockspeeds are closer to 900MHz in quiet mode. Uber mode and its 55% fan speed on the other hand is fast enough (but only just) to move enough air to keep the card at 1000MHz in all non-TDP limited workloads. The tradeoff there is that the last 100MHz of clockspeed is going to be incredibly costly from a noise perspective, as we’ll see. The reference 290X would not have been a viable product if it didn’t ship with quiet mode as the default BIOS.
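To make "incredibly costly" concrete, the sketch below compares the roughly 11% clockspeed gain of uber mode with its noise penalty, using the table's load noise figures and the approximate 900MHz quiet-mode average. The conversions are standard rules of thumb rather than measurements. A decibel difference maps to a sound power ratio of 10^(dB/10). Perceived loudness is commonly approximated as doubling for every +10dB.

```python
# Noise figures are from the table above; the ~900MHz quiet-mode average is the
# review's approximation. The loudness conversion (about 2x per +10 dB) is a
# general psychoacoustic rule of thumb, not a measurement.
QUIET_DB, UBER_DB = 53.3, 58.9
QUIET_MHZ, UBER_MHZ = 900, 1000


def db_to_power_ratio(delta_db: float) -> float:
    """Convert a level difference in dB to a ratio of sound power."""
    return 10 ** (delta_db / 10)


def db_to_loudness_ratio(delta_db: float) -> float:
    """Rule-of-thumb perceived loudness ratio (doubling per +10 dB)."""
    return 2 ** (delta_db / 10)


delta = UBER_DB - QUIET_DB
clock_gain = UBER_MHZ / QUIET_MHZ - 1
print(f"Clock gain: {clock_gain:.0%}, noise: +{delta:.1f} dB")
print(f"~{db_to_power_ratio(delta):.1f}x sound power, "
      f"~{db_to_loudness_ratio(delta):.2f}x perceived loudness")
```

By this estimate, an 11% clockspeed gain costs about 3.6x the sound power, or roughly half again as loud to the ear.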

Finally, let’s wrap things up by talking about miscellaneous power and data connectors. With AMD having gone with bridgeless (XDMA) Crossfire for the 290X, the Crossfire connectors that have adorned high-end AMD cards for years are now gone. Other than the BIOS switch, the only things you will find at the top of the card are the traditional PCIe power sockets. AMD is using the traditional 6pin + 8pin setup here, which combined with the PCIe slot power is good for delivering 300W to the card, which is what we estimate to be the card’s TDP limit. Consequently overclocking boards are all but sure to go the 8pin + 8pin route once those eventually arrive.
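The 300W figure follows from the nominal PCIe power ratings: 75W from the x16 slot, 75W for a 6pin connector, and 150W for an 8pin connector. The sketch below simply sums those ratings for the reference layout and for the 8pin + 8pin layout expected on overclocking boards. These are specification ratings, not measured draw, and the 300W TDP itself remains our estimate.

```python
# Nominal PCIe power ratings (PCI Express CEM specification):
# 75W from the x16 slot, 75W per 6-pin and 150W per 8-pin connector.
SLOT_W = 75
CONNECTOR_W = {"6pin": 75, "8pin": 150}


def board_power_budget(connectors: list[str]) -> int:
    """Spec-rated power available to a card: slot power plus each connector."""
    return SLOT_W + sum(CONNECTOR_W[c] for c in connectors)


print("6pin + 8pin:", board_power_budget(["6pin", "8pin"]), "W")  # reference 290X
print("8pin + 8pin:", board_power_budget(["8pin", "8pin"]), "W")  # likely overclocking boards
```

Moving to 8pin + 8pin raises the in-spec budget from 300W to 375W, which is the extra margin an overclocking board would be after.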

396 Comments


Sandcat - Thursday, October 24, 2013

Perhaps they knew it was unsustainable from the beginning, but short-term gains are generally what motivate managers when they develop pricing strategies, because bonus. Make hay whilst the sun shines, or when AMD is 8 months late.

chizow - Saturday, October 26, 2013

Possibly, but now they have to deal with the damaged goodwill of some of their most enthusiastic, spendy customers. I can't count how many times I've seen it, someone saying they swore off company X or company Y because they felt they got burned/screwed/fleeced by a single transaction. That is what Nvidia will be dealing with going forward with Titan early adopters.

Sancus - Thursday, October 24, 2013

AMD really needs to do better than a response 8 months later to crash anyone's parade. And honestly, I would love to see them put up a fight with Maxwell at a reasonable time period so they have incentive to keep prices lower. Otherwise, expect Nvidia to "overprice" things next generation as well.

When they have no competition for 8 months it's not unsustainable to price as high as the market will bear, and there's no real evidence that Titan was economically overpriced because it's not like there was a supply glut of Titans sitting around anywhere, in fact they were often out of stock. So really, Nvidia is just pricing according to the market -- no competition from AMD for 8 months, fastest card with limited supply, why WOULD they price it at anything below $1000?

chizow - Saturday, October 26, 2013

My reply would be that they've never had to price it at $1000 before, and we have certainly seen this level of advancement from one generation to the next in the past (7900GTX to 8800GTX, 8800GTX to GTX 280, 280 GTX to 480 GTX, etc), so it's not completely ground-breaking performance increases even though Kepler overall outperformed historical improvements by ~20%, imo.

Also, the concern with Titan isn't just the fact it was priced at ungodly premiums this time around, it's the fact it held its crown for such a relatively short period of time. Sure Nvidia had no competition at the $500+ range for 8 months, but that was also the brevity of Titan's reign at the top. In the past, a flagship in that $500 or $600+ range would generally reign for the entire generation, especially one that was launched half way through that generation's life cycle. Now Nvidia has already announced a reply with the 780 Ti which will mean not one, but TWO cards will surpass Titan at a fraction of its price before the generation goes EOL.

Nvidia was clearly blind-sided by Hawaii and ultimately it will cost them customer loyalty, imo.

ZeDestructor - Thursday, October 24, 2013

$1000 cards are fine, since the Titan is a cheap compute unit compared to the Quadro K6000 and the 690 is a dual-GPU card (Dual-GPU has always been in the $800+ range).

What we should see is the 780 (Ti?) go down in price and match the R9-290x, much to the rejoicing of all!

Nvidia got away with $650-750 on the 780 because they could, and THAT is why competition is important, and why I pay attention to AMD even if I have no reason to buy from them over Nvidia (driver support on Linux is a joke). Now they have to match. Much of the same happens in the CPU segment.

chizow - Saturday, October 26, 2013

For those that actually bought the Titan as a cheap compute card, sure Titan may have been a good buy, but I doubt most Titan buyers were buying it for compute. It was marketed as a gaming card with supercomputer guts and at the time, there was still much uncertainty whether or not Nvidia would release a GTX gaming card based on GK110.

I think Nvidia preyed on these fears and took the opportunity to launch a $1K part, but I knew it was an unsustainable business model for them because it was predicated on the fact Nvidia would be an entire ASIC ahead of AMD and able to match AMD's fastest ASIC (Tahiti) with their 2nd fastest (GK104). Clearly Hawaii has turned that idea on its head and Nvidia's premium product stack is crashing down in flames.

Now, we will see at least 4 cards (290/290X, 780/780Ti) that all come close to or exceed Titan performance at a fraction of the price, only 8 months after its launch. Short reign indeed.

TheJian - Friday, October 25, 2013

The market dictates pricing. As they said, they sell every Titan immediately, so they could probably charge more. But that's because it has more value than you seem to understand. It is a PRO CARD at its core. Are you unaware of what a TESLA is for $2500? It's the same freaking card with 1 more SMX and driver support. $1000 is GENEROUS whether you like it or not. Gamers with PRO intentions laughed when they saw the $1000 price and have been buying them like mad ever since. No parade has been crashed. They will continue to do this pricing model for the foreseeable future as they have proven there is a market for high-end gamers with a PRO APP desire on top. The first run was 100,000 and sold in days. By contrast Asus Rog Ares 2 had 1000 unit first run and didn't sell out like that. At $1500 it really was a ripoff with no PRO side.

I think they'll merely need another SMX turned on and 50-100mhz for the next $1000 version which likely comes before xmas :) The PRO perf is what is valued here over a regular card. Your short-lived statement makes no sense. It's been 8 months, a rather long life in gpus when you haven't beaten the 8 month old card in much (I debunked 4k crap already, and pointed to a dozen other games where titan wins at every res). You won't fire up Blender, Premiere, PS CS etc and smoke a titan with 290x either...LOL. You'll find out what the other $450 is for at that point.

chizow - Saturday, October 26, 2013

Yes and as soon as they released the 780, the market corrected itself and Titans were no longer sold out anywhere, clearly a shift indicating the price of the 780 was really what the market was willing to bear.

Also, there are more differences with their Tesla counterparts than just 1 SMX, Titan lacks ECC support which makes it an unlikely candidate for serious compute projects. Titan is good for hobby compute, anything serious business or research related is going to spend the extra for Tesla and ECC.

And no, 8-months is not a long time at the top, look at the reigns of previous high-end parts and you will see it is generally longer than this. Even the 580 that preceded it held sway for 14-months before Tahiti took over its spot. Time at the top is just one part though, the amount which Titan devalued is the bigger concern. When 780 launched 3 months after Titan, you could maybe sell Titan for $800. Now that Hawaii has launched, you could maybe sell it for $700? It's only going to keep going down, what do you think it will sell for once 780Ti beats it outright for $650 or less?

Sandcat - Thursday, October 24, 2013

I noticed your comments on the Tahiti pricing fiasco 2 years ago and generally skip through the comment section to find yours because they're top notch. Exactly what I was thinking with the $550 price point, finally a top-tier card at the right price for 28nm. Long live sanity.

chizow - Saturday, October 26, 2013

Thanks! Glad you appreciated the comments, I figured this business model and pricing for Nvidia would be unsustainable, but I thought it wouldn't fall apart until we saw 20nm Maxwell/Pirate Islands parts in 2014. Hawaii definitely accelerated the downfall of Titan and Nvidia's $1K eagle's nest.