Samsung SSD 840 EVO Review: 120GB, 250GB, 500GB, 750GB & 1TB Models Tested

by Anand Lal Shimpi on July 25, 2013 1:53 PM EST

Posted in: Storage, SSDs, Samsung, TLC, Samsung SSD 840

Performance Consistency

In our Intel SSD DC S3700 review I introduced a new method of characterizing performance: looking at the latency of individual operations over time. The S3700 promised a level of performance consistency that was unmatched in the industry, and as a result needed some additional testing to show that. The reason we don't have consistent IO latency with SSDs is that all controllers inevitably have to do some amount of defragmentation or garbage collection in order to continue operating at high speeds. When and how an SSD decides to run its defrag and cleanup routines directly impacts the user experience. Frequent (borderline aggressive) cleanup generally results in more stable performance, while delaying cleanup can result in higher peak performance at the expense of much lower worst case performance. The graphs below tell us a lot about the architecture of these SSDs and how they handle internal defragmentation.

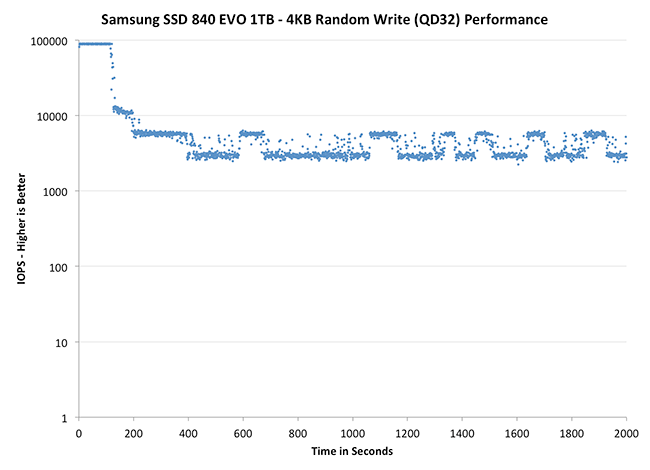

To generate the data below I took a freshly secure erased SSD and filled it with sequential data. This ensures that all user accessible LBAs have data associated with them. Next I kicked off a 4KB random write workload across all LBAs at a queue depth of 32 using incompressible data. I ran the test for just over half an hour, nowhere near as long as we run our steady state tests, but long enough to give me a good look at drive behavior once all spare area filled up.
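
For readers who want a feel for what that workload looks like, here is a minimal Python sketch of its two ingredients: 4KB-aligned random offsets spread across the full LBA range, and payloads that can't be compressed. The function names and the use of `os.urandom` are my own illustrative assumptions, not the tool used for this review, and the sketch does not model queue depth at all; a real test needs a benchmark tool that keeps 32 IOs in flight.

```python
import os
import random

BLOCK_SIZE = 4096  # 4KB transfers, as in the test described above


def random_write_offsets(capacity_bytes, count, seed=None):
    """Return `count` random 4KB-aligned byte offsets within the device.

    capacity_bytes is the size of the LBA range under test (hypothetical
    parameter; the review spans all user accessible LBAs).
    """
    total_blocks = capacity_bytes // BLOCK_SIZE
    if total_blocks <= 0:
        raise ValueError("capacity must hold at least one 4KB block")
    rng = random.Random(seed)
    return [rng.randrange(total_blocks) * BLOCK_SIZE for _ in range(count)]


def incompressible_payload():
    """Return 4KB of random bytes, which a compressing controller cannot shrink."""
    return os.urandom(BLOCK_SIZE)
```

Random payloads matter because some controllers compress data on the fly; feeding them compressible data would understate how much NAND actually gets written.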

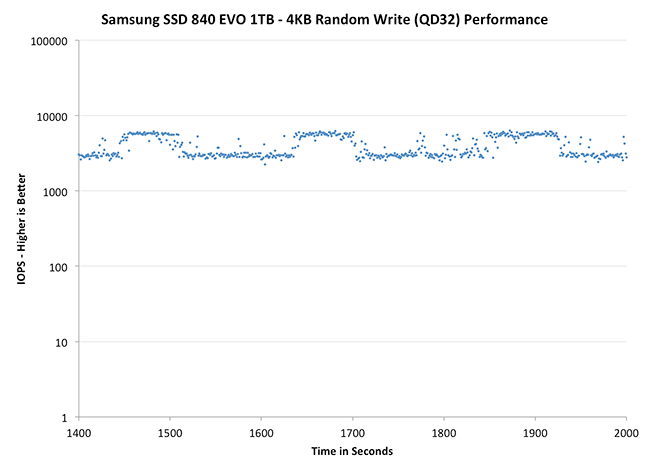

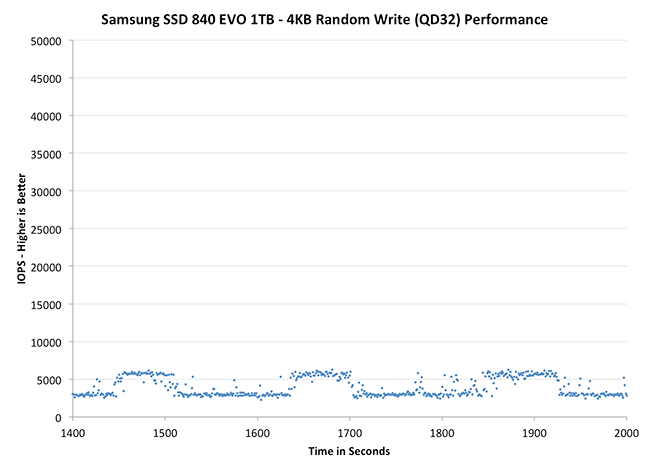

I recorded instantaneous IOPS every second for the duration of the test. I then plotted IOPS vs. time and generated the scatter plots below. Each set of graphs features the same scale. The first two sets use a log scale for easy comparison, while the last set of graphs uses a linear scale that tops out at 40K IOPS for better visualization of differences between drives.
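
As a rough illustration of the bookkeeping, the sketch below turns a list of IO completion timestamps into one IOPS sample per second, which is the series plotted in the scatter plots. The input format (seconds since test start) and the function name are assumptions for the example, not the logging format actually used here.

```python
from collections import Counter


def iops_per_second(completion_times, duration_s):
    """Count completed IOs in each one-second bucket.

    completion_times: iterable of completion timestamps, in seconds since
        the start of the test (hypothetical input format).
    duration_s: length of the test in whole seconds.
    Returns a list where entry t is the number of IOs completed in [t, t+1).
    """
    buckets = Counter(
        int(t) for t in completion_times if 0 <= t < duration_s
    )
    return [buckets.get(second, 0) for second in range(duration_s)]
```

Slicing the result, for example `series[1400:]`, gives the zoomed-in view of the start of steady state, and `min(series[1400:])` gives the worst single second in that window.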

The high level testing methodology remains unchanged from our S3700 review. Unlike in previous reviews however, I did vary the percentage of the drive that I filled/tested depending on the amount of spare area I was trying to simulate. The buttons are labeled with the advertised user capacity had the SSD vendor decided to use that specific amount of spare area. If you want to replicate this on your own all you need to do is create a partition smaller than the total capacity of the drive and leave the remaining space unused to simulate a larger amount of spare area. The partitioning step isn't absolutely necessary in every case but it's an easy way to make sure you never exceed your allocated spare area. It's a good idea to do this from the start (e.g. secure erase, partition, then install Windows), but if you are working backwards you can always create the spare area partition, format it to TRIM it, then delete the partition. Finally, this method of creating spare area works on the drives we've tested here but not all controllers may behave the same way.
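
The arithmetic behind the partitioning trick is simple enough to sketch. The helper names below are hypothetical, and the raw NAND figure you pass in is an assumption you would have to supply yourself, since vendors don't always publish it.

```python
def partition_size_gb(user_capacity_gb, extra_spare_fraction):
    """Partition size that leaves `extra_spare_fraction` of the advertised
    user capacity unpartitioned (and therefore never written)."""
    if not 0 <= extra_spare_fraction < 1:
        raise ValueError("extra_spare_fraction must be in [0, 1)")
    return user_capacity_gb * (1 - extra_spare_fraction)


def effective_spare_fraction(raw_nand_gb, partition_gb):
    """Fraction of raw NAND that the host never writes to:
    (raw - partition) / raw. raw_nand_gb is an assumed input."""
    if raw_nand_gb <= 0 or partition_gb > raw_nand_gb:
        raise ValueError("partition must fit within the raw NAND capacity")
    return (raw_nand_gb - partition_gb) / raw_nand_gb
```

For example, leaving 20% of a drive with 250GB of user capacity unpartitioned means creating a partition of roughly 200GB. Keep in mind that this only works as spare area if the unused LBAs have been TRIMmed or were never written.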

The first set of graphs shows the performance data over the entire 2000 second test period. In these charts you'll notice an early period of very high performance followed by a sharp dropoff. What you're seeing in that case is the drive allocating new blocks from its spare area, then eventually using up all free blocks and having to perform a read-modify-write for all subsequent writes (write amplification goes up, performance goes down).
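
Write amplification is just the ratio of data written to NAND to data written by the host. The sketch below computes that ratio, plus a deliberately simplified worst case in which updating a few pages forces the controller to rewrite an entire block. The pages-per-block value is a hypothetical parameter, and real controllers do considerably better than this toy model by collecting valid pages from several blocks at once.

```python
def write_amplification(nand_bytes_written, host_bytes_written):
    """Ratio of bytes physically written to NAND to bytes the host wrote."""
    if host_bytes_written <= 0:
        raise ValueError("host_bytes_written must be positive")
    return nand_bytes_written / host_bytes_written


def rmw_write_amplification(pages_per_block, pages_updated):
    """Toy worst case: a read-modify-write rewrites the whole block even
    though the host only changed `pages_updated` pages in it."""
    if not 0 < pages_updated <= pages_per_block:
        raise ValueError("pages_updated must be between 1 and pages_per_block")
    return write_amplification(pages_per_block, pages_updated)
```

While the drive still has free blocks in its spare area the ratio stays close to 1; once they run out, every host write drags along extra NAND writes and the IOPS numbers fall accordingly.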

The second set of graphs zooms in to the beginning of steady state operation for the drive (t=1400s). The third set also looks at the beginning of steady state operation but on a linear performance scale. Click the buttons below each graph to switch source data.

[Interactive graphs: IOPS vs. time over the entire test period, log scale. Drives: Crucial M500 960GB, Samsung SSD 840 EVO 1TB, Samsung SSD 840 EVO 250GB, SanDisk Extreme II 480GB, Samsung SSD 840 Pro 256GB. Spare area: Default.]

Thanks to the EVO's higher default over-provisioning, you actually get better consistency out of the EVO than the 840 Pro out of the box. Granted, you can get similar behavior out of the Pro if you simply don't use all of the drive. The big comparison is against Crucial's M500, where the EVO does a bit better. SanDisk's Extreme II, however, remains the better performer from an IO consistency perspective.

[Interactive graphs: IOPS vs. time from the beginning of steady state operation (t=1400s), log scale. Drives: Crucial M500 960GB, Samsung SSD 840 EVO 1TB, Samsung SSD 840 EVO 250GB, SanDisk Extreme II 480GB, Samsung SSD 840 Pro 256GB. Spare area: Default.]

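[Interactive graphs: IOPS vs. time from the beginning of steady state operation, linear scale topping out at 40K IOPS. Drives: Crucial M500 960GB, Samsung SSD 840 EVO 1TB, Samsung SSD 840 EVO 250GB, SanDisk Extreme II 480GB, Samsung SSD 840 Pro 256GB. Spare area: Default.]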

Zooming in we see very controlled and frequent GC patterns on the 1TB drive, something we don't see in the 840 Pro. The 250GB drive looks a bit more like a clustered random distribution of IOs, but minimum performance is still much better than on the standard OP 840 Pro.

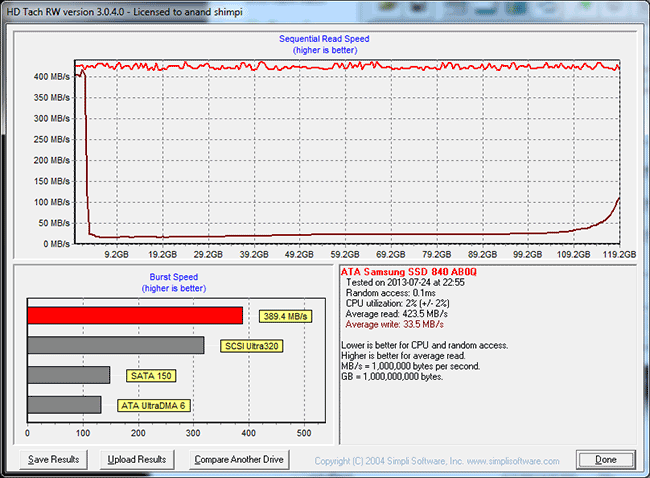

TRIM Validation

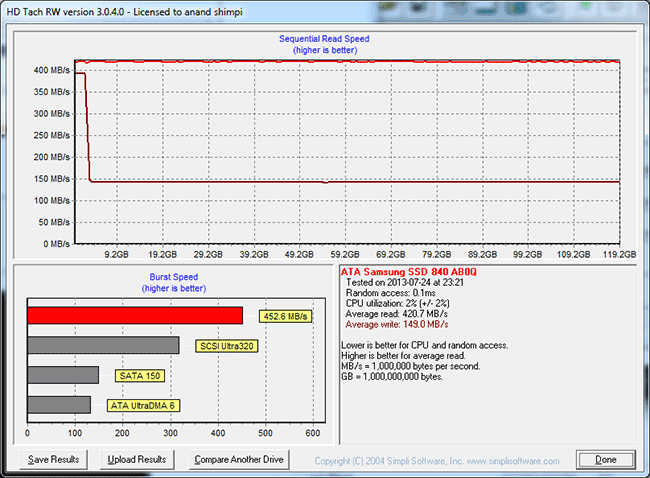

Our performance consistency test actually replaces our traditional TRIM test in terms of looking at worst case scenario performance, but I wanted to confirm that TRIM was functioning properly on the EVO so I dusted off our old test for another go. The test procedure remains unchanged: fill the drive with sequential data, run a 4KB random write test (QD32, 100% LBA range) for a period of time (30 minutes in this case) and use HDTach to visualize the impact on write performance:

Minimum performance drops down to around 30MB/s, eugh. Although the EVO can be reasonably consistent, you'll still want to leave some free space on the drive to ensure that performance always stays high (I recommend 15 - 25% if possible).

A single TRIM pass (quick format under Windows 7) fully restores performance as expected:

The short period of time at 400MB/s is just TurboWrite doing its thing.

137 Comments


halbhh2 - Saturday, July 27, 2013

Exactly. I found that even moving up from 8GB to 16GB had a great effect for me with an old Samsung F3 hard drive. The difference: after just 30 minutes from boot, loading an often used program like iTunes (for podcasts) was very similar to the speed on my laptop which has an 830 SSD and only 4GB. Both load in about 4 seconds, and the 16GB desktop loads so fast because it has had time to cache a lot of iTunes. Before the RAM upgrade, that load time for iTunes on the desktop computer was about 14 seconds. Quite a difference due to Windows 7 caching. The extra improvement I'd get from installing an SSD onto the desktop computer now would be modest, since I usually only need to reboot once or twice in a week. Still, the sweet spot of price/performance for me is approaching, probably around $60-$70, and that won't be long.

Klimax - Sunday, July 28, 2013

It's in wrong place. Unlike OS level caching (at least in Windows), which is in cooperation between memory manager, cache manager and file system driver, this is too low in the chain and sees only requests but nothing else and also takes memory from OS and takes too few.

Coup27 - Thursday, July 25, 2013

Typo: "although I wouldn't recommend deploying the EVO in a write heavy serve Microsoft's eDrive standard isn't supported at launch"

Excellent article. Samsung continue to push SSDs and I'm really excited about RAPID. Is the 840 Pro due for a successor any time soon? I am selling my current ATX Sandy Bridge + 830 and getting a mITX Haswell + (840 Pro?) and want the fastest Samsung consumer SSD available and I'd be gutted to buy an 840 Pro to see its successor released a few weeks later.

vLsL2VnDmWjoTByaVLxb - Thursday, July 25, 2013

Another typo last page:

"Even though its performnace wasn't class leading, it was honestly good enough to make the recommendation a no-brainer. "

JDG1980 - Thursday, July 25, 2013

Will there be an 840 EVO Pro coming out later? To me, TLC is still a deal-breaker.

By the way, what happens if power goes out during a TurboWrite (before the data has been written to the normal storage space)? Does this result in data loss, or, worse, bricking? I'd suspect Samsung at least avoided the latter, but I'd like to see some confirmation on this.

sherlockwing - Thursday, July 25, 2013

I guess you didn't read the Endurance part of the review? Even if you write 100GiB a day all of those drives last longer than their warranty (3 years), that's more than enough endurance.

Coup27 - Thursday, July 25, 2013

Some people just don't want to accept the facts. TLC could get to 99.9% of MLC endurance and people would still want MLC. I've been deploying 840's in a light duty enterprise environment and they've been fine. The only reason I use MLC at home is because I want the absolute fastest performance and I can afford it, not that I actually need it.

Oxford Guy - Thursday, July 25, 2013

The SanDisk Extreme 240 was just on sale for $150. TLC NAND still seems like a solution in need of a problem.

Spunjji - Friday, July 26, 2013

You can approach TLC pricing with an MLC drive in a sale, but the fact remains that when it comes to actual sustainable production pricing TLC NAND has a 50% density (and thus manufacturing cost) advantage over MLC. Given that NAND price determines drive cost and drive costs are the primary barrier of entry to SSDs, I'm fairly sure it has a problem to solve.

FWIW I have not seen any drive touch the 120GB 840's price here in the UK, on sale or otherwise.

Oxford Guy - Friday, July 26, 2013

However, there is also the problem of increasing latency and lifespan from node shrinkage.