NVIDIA GeForce GTX 770 Review: The $400 Fight

by Ryan Smith on May 30, 2013 9:00 AM EST

Crysis 3

The final benchmark in our suite needs no introduction. With Crysis 3, Crytek has gone back to trying to kill computers, taking back the “most punishing game” title in our benchmark suite. Only in a handful of setups can we even run Crysis 3 at its highest (Very High) settings, and that’s still without AA. Crysis 1 was an excellent template for the kind of performance required to drive games for the next few years, and Crysis 3 looks to be much the same for 2013.

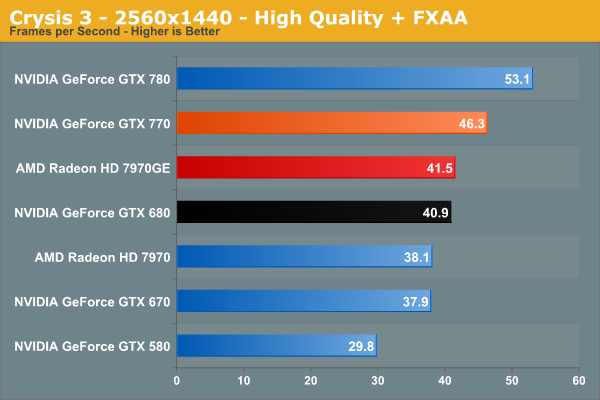

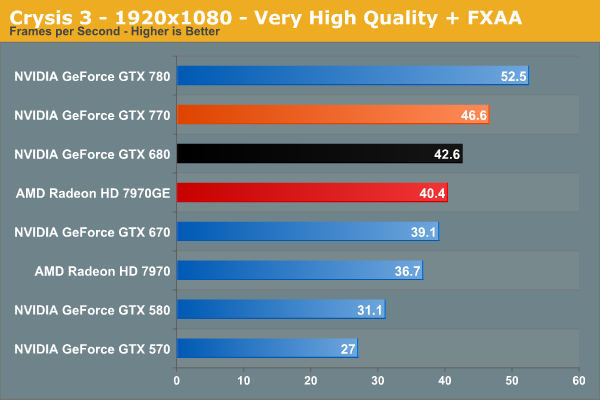

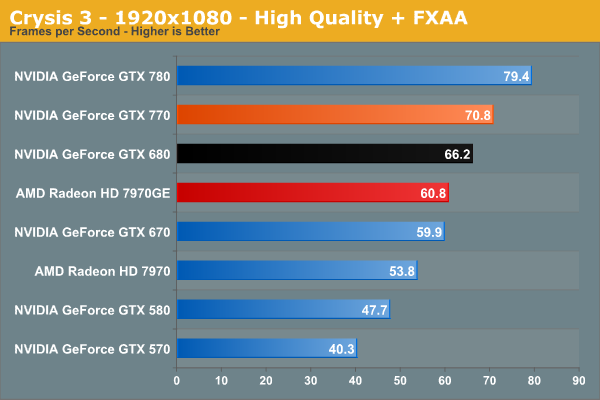

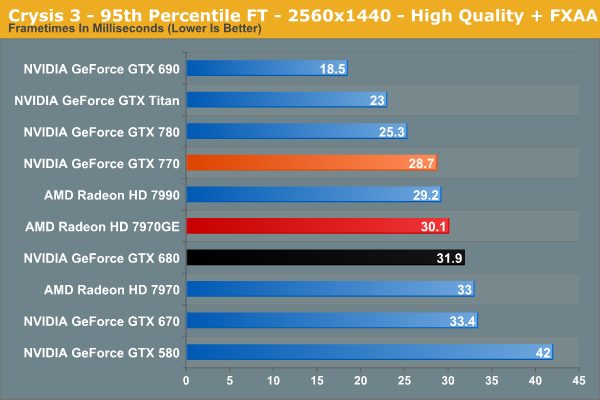

Unsurprisingly, Crysis 3 is another game where 2560 isn’t really on the table. In fact we have to go all the way down to 1920 at High settings to get a framerate above 60fps. At that point the GTX 770 leads the 7970GE by 16%, the GTX 680 by a smaller 7%, and the GTX 570 by 76%.
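
The leads quoted above are each measured against a different card, so they combine as ratios rather than by subtraction. A minimal Python sketch, using only the rounded percentages from this page; the function name `implied_lead` is ours, and the results inherit the rounding error of the inputs, so treat them as approximate:

```python
def implied_lead(ref_lead_over_b_pct, ref_lead_over_a_pct):
    """Percentage lead of card A over card B.

    Both inputs are the leads of one common reference card: x% over B
    and y% over A. Then A / B = (1 + x/100) / (1 + y/100).
    """
    ratio = (1 + ref_lead_over_b_pct / 100) / (1 + ref_lead_over_a_pct / 100)
    return (ratio - 1) * 100


# GTX 770 leads at 1920 with High settings, as quoted in the text (rounded).
GTX770_LEAD_PCT = {"7970GE": 16, "GTX 680": 7, "GTX 570": 76}

pairs = [("GTX 680", "GTX 570"), ("GTX 680", "7970GE"), ("7970GE", "GTX 570")]
for faster, slower in pairs:
    lead = implied_lead(GTX770_LEAD_PCT[slower], GTX770_LEAD_PCT[faster])
    print(f"{faster} over {slower}: about {lead:.1f}%")
```

Note that the GTX 680's implied lead over the GTX 570 is not 76 - 7 = 69 points; dividing the ratios gives a somewhat smaller figure.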

117 Comments

JDG1980 - Thursday, May 30, 2013 - link

TechPowerUp ran tests of three GTX 770s with third-party coolers (Asus DirectCU, Gigabyte WindForce, and Palit JetStream). All three beat the GTX 770 reference on thermals for both idle and load. Noise levels varied, but the DirectCU seemed to be the winner since it was quieter than the reference cooler on both idle and load. That card also was a bit faster in benchmarks than the reference.

That said, I agree the build quality of the reference cooler is better than the aftermarket substitutes - but Asus is probably a close second. Their DirectCU series has always been very good.

ArmedandDangerous - Thursday, May 30, 2013 - link

This article is in desperate need of some editing work. Spelling and comprehension errors throughout.

Nighyal - Thursday, May 30, 2013 - link

I asked this on the 780 review, and it seems like it might be even more interesting for the 770 considering Nvidia basically threw more power at a 680, but a performance per watt comparison would be great. If there was something that clearly showed the efficiency of each card in some way (maybe using a fixed workload), it would be interesting to see. Especially when compared to similar architectures or when comparing AMD's efforts with the GHz editions.

ThIrD-EyE - Thursday, May 30, 2013 - link

Since when did 70-80C temperatures become acceptable? I had been looking to upgrade my MSI Cyclone GTX 460, which would never hit higher than 62C, and I got a great deal on two 560 Tis for less than half the cost of them new. I have run them in single card and SLI; I see 80C+ when I run an overclock program like MSI Afterburner. I use a custom fan profile to bring the temps down to 75C or less at higher fan speed, but still at reasonable noise levels. It's still not quite enough.

All these video cards may be fine at these temperatures, but when you are sitting next to the case and there is 80C being pumped out, you really feel it. Especially now with summer heat finally hitting where I live. My $25 Hyper212+ keeps my OC'ed i7 2600k at a good 45-50C when playing games. I would buy aftermarket coolers if they were not going to take up 3 slots each (I have a card that I need, which would have to be removed) and didn't cost nearly as much as I paid for the cards.

AMD, NVIDIA and card partners need to work on bringing temperatures down.

quorm - Thursday, May 30, 2013 - link

lower temperature readings do not mean less heat produced. better cooling just moves the heat from the GPU to your room more efficiently.

ThIrD-EyE - Thursday, May 30, 2013 - link

The architecture of these video cards was obviously made for performance first. That does not mean they can't also work on lowering power consumption to lower the heat produced. One thing that I've found to help my situation is to set all games to run at 60fps without vsync if possible, which thankfully is most of the games I play. Some games become unplayable or wonky with vsync and other ways of limiting fps without vsync, so I just deal with the heat from no fps limits.

I hope that the developers of console ports from PS4 and Xbox One put in an fps limit option like Borderlands 2 if they don't allow dev console access.
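
For anyone wondering what an fps limit without vsync boils down to, here is a minimal Python sketch: after each frame, sleep off whatever is left of the frame budget so the GPU sits idle instead of rendering frames nobody sees. This is an illustration only, not how Borderlands 2 or any driver implements its limiter; `render_frame`, `sleep_needed`, and `run_capped` are hypothetical names.

```python
import time


def sleep_needed(frame_start, now, target_fps):
    """Seconds left in the frame budget (zero if the frame ran long)."""
    frame_budget = 1.0 / target_fps
    elapsed = now - frame_start
    return max(0.0, frame_budget - elapsed)


def run_capped(render_frame, target_fps, frames):
    """Call render_frame `frames` times, at no more than target_fps."""
    for _ in range(frames):
        start = time.perf_counter()
        render_frame()
        # time.sleep is only approximately accurate, so real limiters
        # often combine a sleep with a short busy-wait near the deadline.
        time.sleep(sleep_needed(start, time.perf_counter(), target_fps))


if __name__ == "__main__":
    # Stand-in "renderer" that takes about 2 ms per frame.
    run_capped(lambda: time.sleep(0.002), target_fps=60, frames=10)
```

Unlike vsync, nothing here waits on the display, which is why a cap like this avoids the input lag some games show with vsync but does not prevent tearing.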

MattM_Super - Friday, May 31, 2013 - link

Although it's not currently accessible from the driver control panel, Nvidia drivers have a built-in fps limiter that I use in every game I play (never had any issues with it). You can access it with NvidiaInspector.

DanNeely - Thursday, May 30, 2013 - link

Since 70-80C has always been the best a blower style cooler can do on a high power GPU without getting obscenely loud, and blowers have proven to be the best option to avoid frying the GPU in a case with horrible ventilation. IOW about when both nVidia and ATI adopted blowers for their reference designs.

JPForums - Thursday, May 30, 2013 - link

70C-80C temperatures became acceptable after nVidia decided to release Fermi based cards that regularly hit the mid 90Cs. Since then, the temperatures have in fact come down. Of course, they are still high for my liking and I pay extra for cards with better coolers (e.g. MSI TwinFrozer, Asus DirectCU). That said, there is only so much you can do when pushing 3 times the TDP of an Intel Core i7-3770K while cooling it with a cooler that is both lighter and less ideally formed for the task (comparing some of the best GPU coolers to any number of heatsinks from Noctua, Thermalright, etc.). Water cooling loops work wonders, but not everyone wants the expense or hassle.

Rick83 - Friday, May 31, 2013 - link

The higher the temperatures, the less fan speed you need, because you have higher delta-theta between the air entering the cooler and the cooling fins, which results in more energy transfer at less volume throughput.

Obviously the temperature is a pure function of the fan curve under load, and has very little to do with the actual chip (unless you go so far down in energy output that you can rely on passive convection).
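
The trade-off between fin temperature and airflow can be put in rough numbers with the steady-state relation P = density x volume flow x specific heat x temperature rise for the air passing through a cooler. A minimal Python sketch, assuming every watt ends up in the air stream and using approximate textbook values for air; the 200 W load is a placeholder, not a measurement of any card, and `airflow_needed` is a hypothetical helper name:

```python
AIR_DENSITY = 1.2            # kg/m^3, approximate for room-temperature air
AIR_SPECIFIC_HEAT = 1005.0   # J/(kg*K), approximate
M3_PER_S_TO_CFM = 2118.88    # cubic metres per second -> cubic feet per minute


def airflow_needed(power_w, air_temp_rise_k):
    """Volume flow (m^3/s) that carries power_w away when the air
    warms by air_temp_rise_k on its way through the heatsink."""
    return power_w / (AIR_DENSITY * AIR_SPECIFIC_HEAT * air_temp_rise_k)


if __name__ == "__main__":
    power_w = 200.0  # placeholder load, for illustration only
    for rise_k in (10.0, 20.0, 30.0):
        cfm = airflow_needed(power_w, rise_k) * M3_PER_S_TO_CFM
        print(f"{rise_k:.0f} K air temperature rise: {cfm:.1f} CFM")
```

The air can only warm by as much as the gap between the intake air and the fins allows, so hotter fins permit a larger rise, and doubling the rise halves the airflow needed for the same power. The heat delivered to the room is the same either way.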