Choosing a Gaming CPU at 1440p: Adding in Haswell

by Ian Cutress on June 4, 2013 10:00 AM EST

Metro 2033

Our first analysis is with the perennial reviewers’ favorite, Metro 2033. It appears in a lot of reviews for a couple of reasons: it has a benchmark GUI that is very easy to use, and it is often very GPU limited, at least in single GPU mode. Metro 2033 is a strenuous DX11 benchmark that can challenge most systems that try to run it at any high-end settings. The game was developed by 4A Games and released in March 2010; we use the built-in DirectX 11 Frontline benchmark to test the hardware at 1440p with full graphical settings. Results are given as the average frame rate from a second batch of 4 runs, as Metro has a tendency to inflate the scores for the first batch by up to 5%.
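As a rough illustration of that reporting rule, the sketch below averages only the second batch of runs and estimates how far the first batch was inflated. The function names and the FPS values are hypothetical placeholders for illustration, not the actual test harness or measured results.

```python
from statistics import mean


def reported_fps(second_batch):
    """Score that gets reported: the mean FPS of the second batch only."""
    if not second_batch:
        raise ValueError("second batch must contain at least one run")
    return mean(second_batch)


def first_batch_inflation_pct(first_batch, second_batch):
    """Percentage by which the first batch's mean exceeds the second's."""
    return (mean(first_batch) / mean(second_batch) - 1.0) * 100.0


# Hypothetical FPS values for two batches of 4 runs (not measured data).
first_batch = [31.5, 31.5, 31.5, 31.5]
second_batch = [30.0, 30.0, 30.0, 30.0]

score = reported_fps(second_batch)
inflation = first_batch_inflation_pct(first_batch, second_batch)
print(f"reported: {score:.1f} FPS, first batch inflated by {inflation:.1f}%")
```

Discarding the first batch rather than averaging all eight runs keeps the inflated warm-up numbers out of the reported figure entirely.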

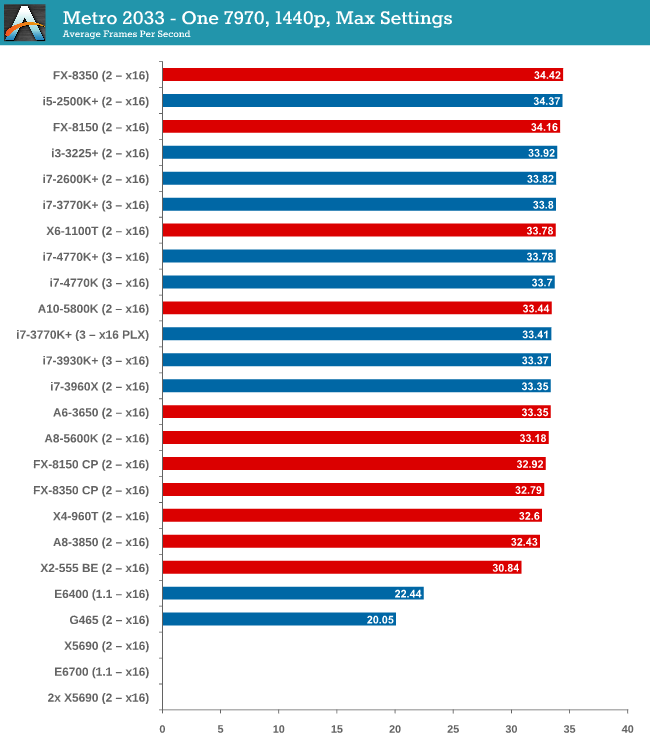

One 7970

With one 7970 at 1440p, every processor is in full x16 allocation and there seems to be no split between any of the processors with 4 threads or above. Processors with two threads fall behind, but not by much, as the X2-555 BE still gets 30 FPS. There seems to be no split between PCIe 3.0 and PCIe 2.0, or with respect to memory.

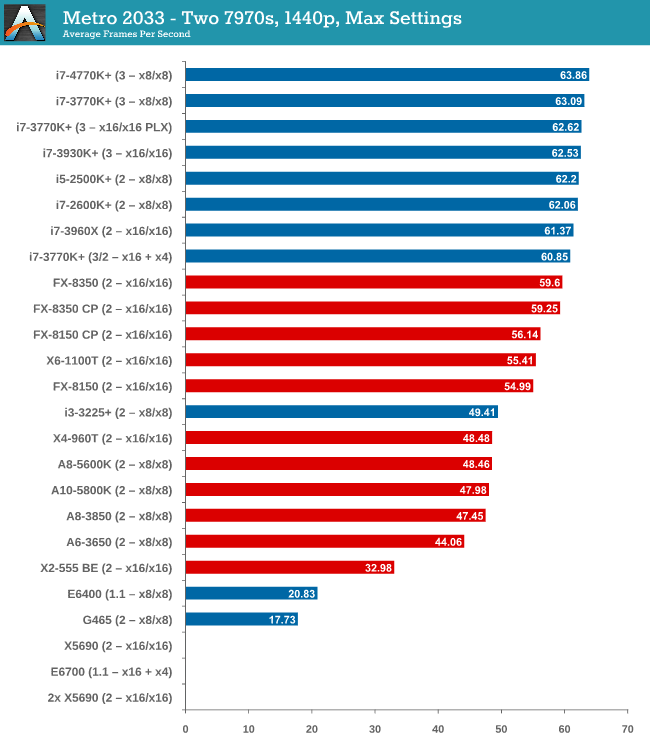

Two 7970s

When we start using two GPUs in the setup, the Intel processors have an advantage, with those running PCIe 2.0 a few FPS ahead of the FX-8350. Both core count and single-thread speed seem to have some effect (i3-3225 is quite low, FX-8350 > X6-1100T).

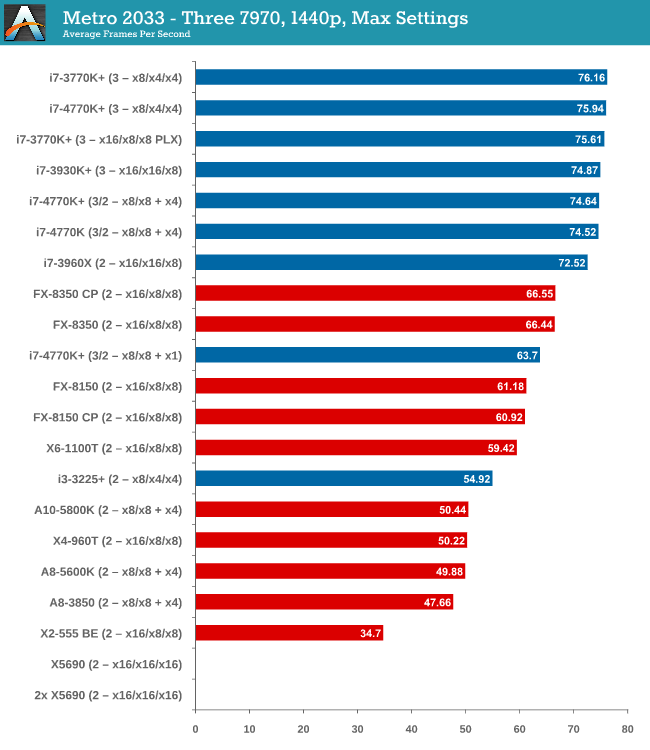

Three 7970s

More results come out in favour of Intel processors and PCIe 3.0, with the i7-3770K in an x8/x4/x4 configuration surpassing the FX-8350 in an x16/x16/x8 configuration by almost 10 frames per second. There seems to be no advantage to having a Sandy Bridge-E setup over an Ivy Bridge one so far.

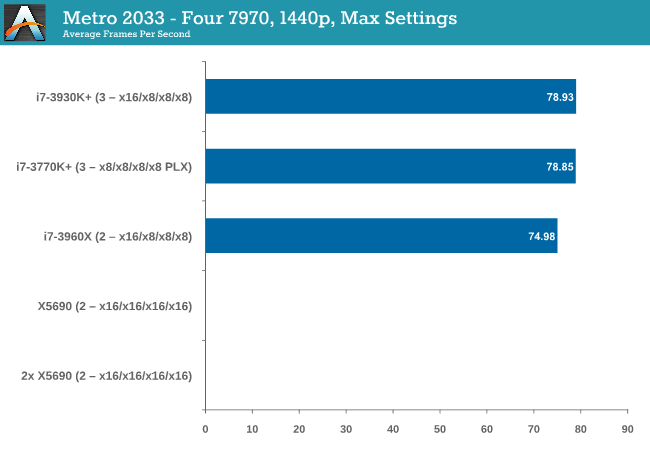

Four 7970s

While we have limited results, PCIe 3.0 wins against PCIe 2.0 by 5%.

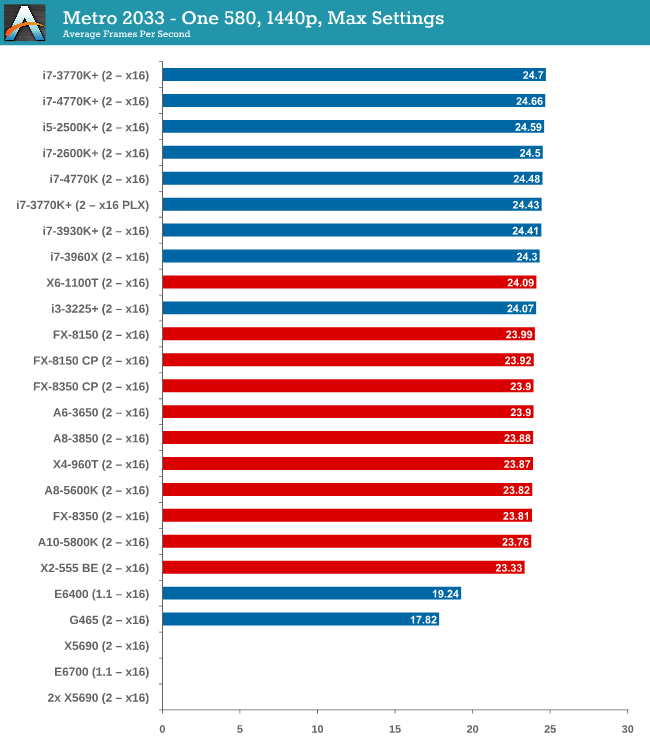

One 580

From dual core AMD all the way up to the latest Ivy Bridge, results for a single GTX 580 are all roughly the same, indicating a GPU throughput limited scenario.

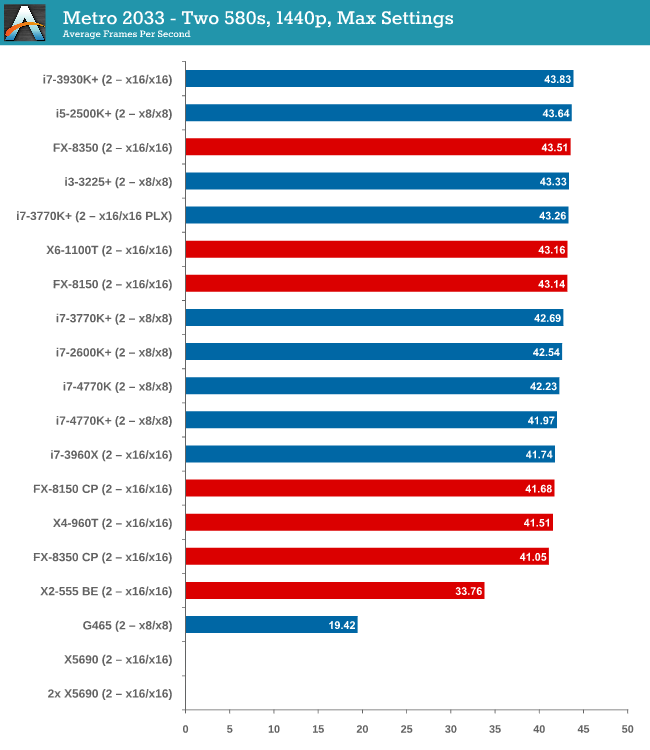

Two 580s

As with one GTX 580, we are still GPU limited here.

Metro 2033 conclusion

A few points are readily apparent from the Metro 2033 tests: the more powerful the GPU setup, the more important the CPU choice is, and CPU choice does not matter until you get to at least three 7970s. In that case, you want a PCIe 3.0 setup more than anything else.

116 Comments


IanCutress - Tuesday, June 4, 2013 - link

Hi Ternie,

To answer your questions:

1) Unfortunately for a lot of users, even DIY not just system integrators, they leave the motherboard untouched (even at default memory, not XMP). So choosing that motherboard with MCT might make a difference in performance. Motherboards without MCT are also different between themselves, depending on how quickly they respond to CPU loading and ramp up the speed, and then if they push it back down to idle immediately in a low period or keep the high turbo for a few seconds in case the CPU loading kicks back in.

2) This is a typo - I was adding too many + CPU results at the same time and got carried away.

3) While people have requested more 'modern' games, there are a couple of issues. If I release something that has just come out, the older drivers I have to use for consistency will either perform poorly or not scale (case in point, Sleeping Dogs on Catalyst 12.3). If I am then locked into those drivers for a year, users will complain that this review uses old drivers that don't have the latest performance increases (such as 8% a month for new titles not optimized) and that my FPS numbers are unbalanced. That being said, I am looking at what to do for 2014 and games - it has been suggested that I put in Bioshock Infinite and Tomb Raider, perhaps cut one or two. If there are any suggestions, please email me with thoughts. I still have to keep the benchmarks regular and have to run without attention (timedemos with AI are great), otherwise other reviews will end up being neglected. Doing this sort of testing could easily be a full time job, which in my case should be on motherboards and this was something extra I thought would be a good exercise.

Michaelangel007 - Tuesday, June 4, 2013 - link

It is sad to poor journalism in the form of excuses in an otherwise excellent article. :-/

1. Any review sites that make excuses for why they ignore FCAT just highlights that they don't _really_ understand the importance of _accurate_ frame stats.

2. Us hardcore games can _easily_ tell the difference betwen 60 Hz and 30 Hz. I bought a Titan to play games at 1080p @ 100+ Hz on the Asus VG248QE using nVidia's LightBoost to eliminate ghosting. You do your readers a dis-service by again not understand the issue.

3. Focusing on 1440 is largely useless as it means people can't directly compare how their Real-World (tm) system compares to the benchmarks.

4. If your benchmarks are not _exactly_ reproducible across multiple systems you are doing it wrong. Name & Shame games that don't allow gamers to run benchmarks. Use "standard" cut-scenes for _consistency_.

It is sad to see the quality of a "tech" article gloss and trivial important details.

AssBall - Tuesday, June 4, 2013 - link

Judging by your excellent command of English, I don't think you could identify a decent technical article if it slapped you upside the head and banged your sister.

Razorbak86 - Tuesday, June 4, 2013 - link

LOL. I have to agree. :)

Michaelangel007 - Wednesday, June 5, 2013 - link

There is a reason Tom's Hardware, Hard OCP, guru3d, etc. uses FCAT.

I feel sad that you and AnandTech tech writers are to stupid to understand the importance of high frame rates (100 Hz vs 60 Hz vs 30 Hz), frame time variance, 99 percentile, proper CPU-GPU load balancing, and micro stuttering. One of these days when you learn how to spell 'ad hominem' you might actually have something _constructive_ to add to the discussion. Shooting the messenger instead of focusing on the message shows you are still a immature little shit that doesn't know anything about GPUs.

Ignoring the issue (no matter how badly communicated) doesn't make it go away.

What are _you_ doing to help raise awareness about sloppy journalism?

DaveninCali - Tuesday, June 4, 2013 - link

Why doesn't this long article include AMD's latest APU, the Richland 6800K? Heck you can even buy it now on Newegg.

ninjaquick - Tuesday, June 4, 2013 - link

The data collected in this article is likely a week or two old. Richland was not available at that time. It takes an extremely long time to do this kind of testing.

DaveninCali - Tuesday, June 4, 2013 - link

Richland was launched today. Haswell was launched two days ago. Neither CPU was available two weeks ago. It all depends on review units being released to review websites. Either Richland was left out because it wasn't different enough from Trinity to matter or AMD did not hand out review units.

majorleague - Wednesday, June 5, 2013 - link

Here is a youtube link showing 3dmark11 and windows index rating for the 4770k 3.5ghz Haswell. Not overclocked.

Youtube link:

http://www.youtube.com/watch?v=k7Yo2A__1Xw

Chicken76 - Tuesday, June 4, 2013 - link

Ian, in the table on page 2 there's a mistake: the Phenom II X4 960T has a stock speed of 3 GHz (you listed 3.2 GHz) and it does turbo up to 3.4 GHz.