Choosing a Gaming CPU at 1440p: Adding in Haswell

by Ian Cutress on June 4, 2013 10:00 AM EST

Civilization V

A game that has plagued my testing over the past twelve months is Civilization V. Being on the older 12.3 Catalyst drivers was something of a nightmare, giving no scaling, and as a result I dropped the game from my test suite after only a couple of reviews. With the later drivers used for this review, the situation has improved, but only slightly, as you will see below. Civilization V seems to run into a scaling bottleneck very early on, and adding further GPUs only makes performance worse.

Our Civilization V testing uses Ryan’s GPU benchmark test, all wrapped up in a neat batch file. We test at 1440p and report the average frame rate of a five-minute test.
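As a rough illustration of the metric, the average frame rate of a timed run is the number of frames rendered divided by the elapsed time. The sketch below assumes a hypothetical list of per-frame render times in milliseconds; the function name and the sample values are invented for illustration and are not output from the benchmark used here.

```python
def average_fps(frame_times_ms):
    """Average frame rate = frames rendered / total elapsed seconds."""
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    total_seconds = sum(frame_times_ms) / 1000.0
    return len(frame_times_ms) / total_seconds


# Hypothetical frame times in milliseconds, not measured data.
sample = [20.0, 25.0, 20.0, 15.0]
print(f"{average_fps(sample):.1f} fps")
```

Dividing total frames by total time is not the same as averaging the instantaneous frame rate of each frame; the latter over-weights short frames and would read higher on the same run.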

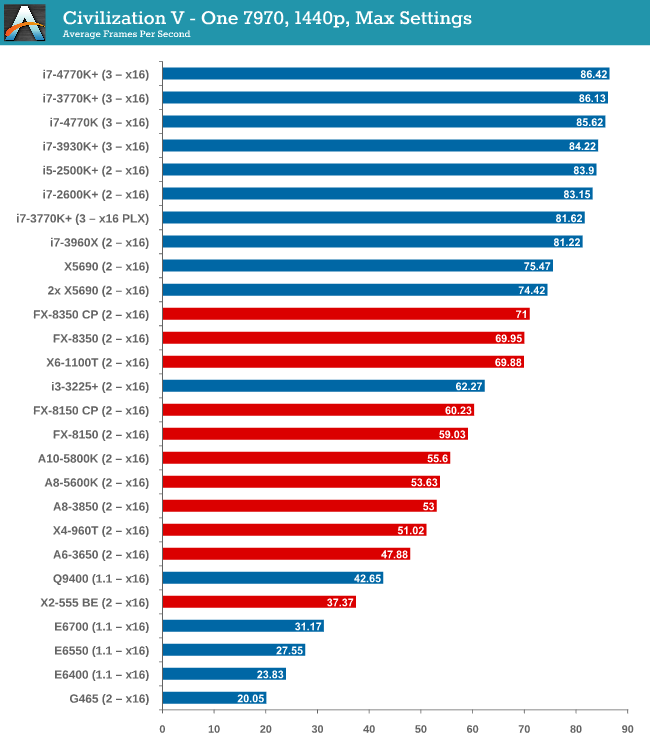

One 7970

Civilization V is the first game where we see a gap when comparing processor families. The biggest factor in Civ5 performance seems to be PCIe 3.0, followed by CPU performance: our PCIe 2.0 Intel processors are a little behind the PCIe 3.0 models. Because we did not have a PCIe 3.0 AMD motherboard in for testing, the bad rap falls on AMD until PCIe 3.0 becomes part of their main game.

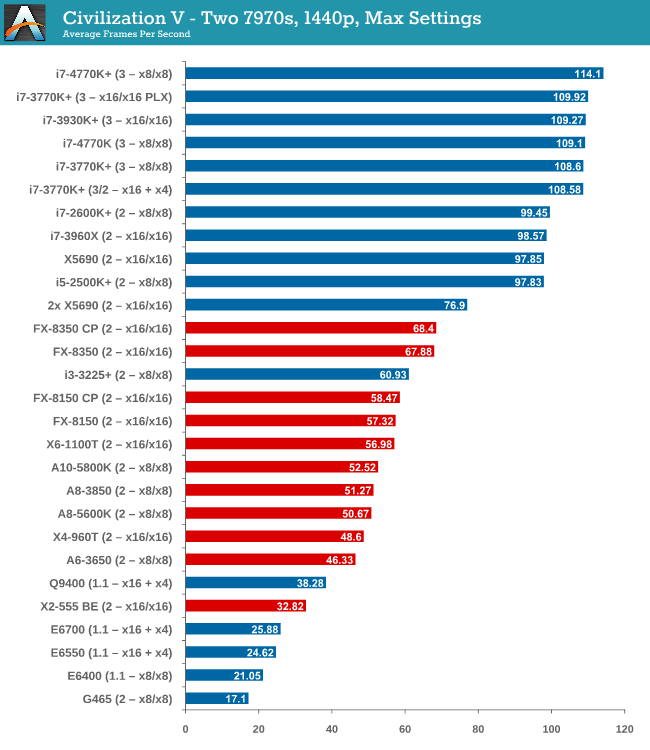

Two 7970s

The power of PCIe 3.0 is more apparent with two 7970 GPUs. However, it is worth noting that only processors such as the i5-2500K and above actually improved their performance with the second GPU; everything else stays roughly the same.

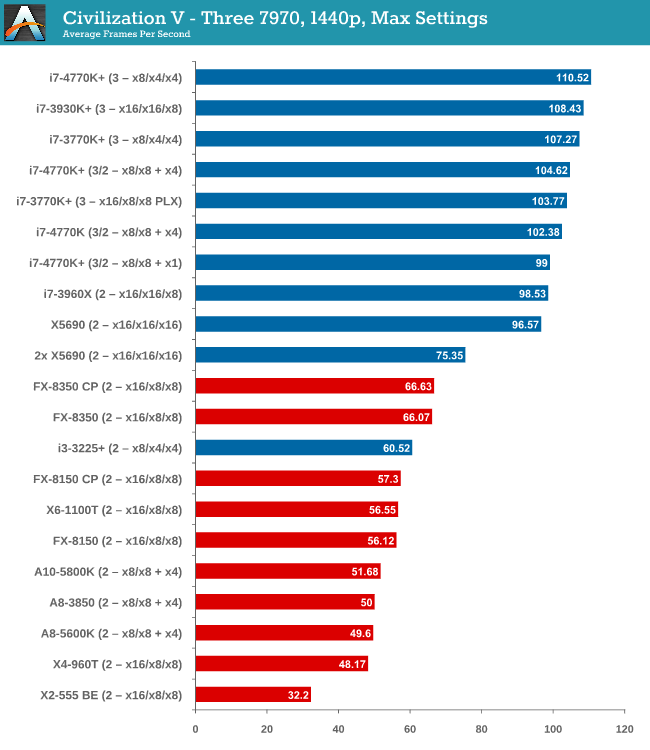

Three 7970s

More cores and PCIe 3.0 are winners here, but no GPU configuration has scaled above two GPUs.

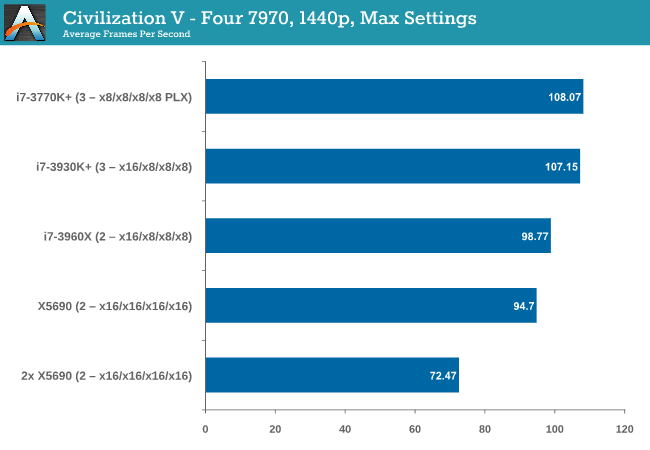

Four 7970s

Again, no scaling.
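One way to put a number on "no scaling" is to compare each multi-GPU average against the single-GPU result. The sketch below is only an illustration: the function name is hypothetical and the frame rates are invented to mimic a game that stops scaling after two GPUs, not taken from the charts in this review.

```python
def scaling_report(avg_fps_by_gpu_count):
    """Return {gpu_count: (speedup, efficiency)} relative to the one-GPU result."""
    baseline = avg_fps_by_gpu_count[1]
    report = {}
    for count, fps in sorted(avg_fps_by_gpu_count.items()):
        speedup = fps / baseline      # how many times faster than one GPU
        efficiency = speedup / count  # 1.0 would be perfect linear scaling
        report[count] = (speedup, efficiency)
    return report


# Hypothetical average frame rates (fps), not measured data.
example = {1: 60.0, 2: 90.0, 3: 90.0, 4: 84.0}
for count, (speedup, efficiency) in scaling_report(example).items():
    print(f"{count} GPU(s): {speedup:.2f}x speedup, {efficiency:.0%} efficiency")
```

A speedup that stays flat or falls as the GPU count rises, as in the invented three- and four-GPU entries, is the pattern described above.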

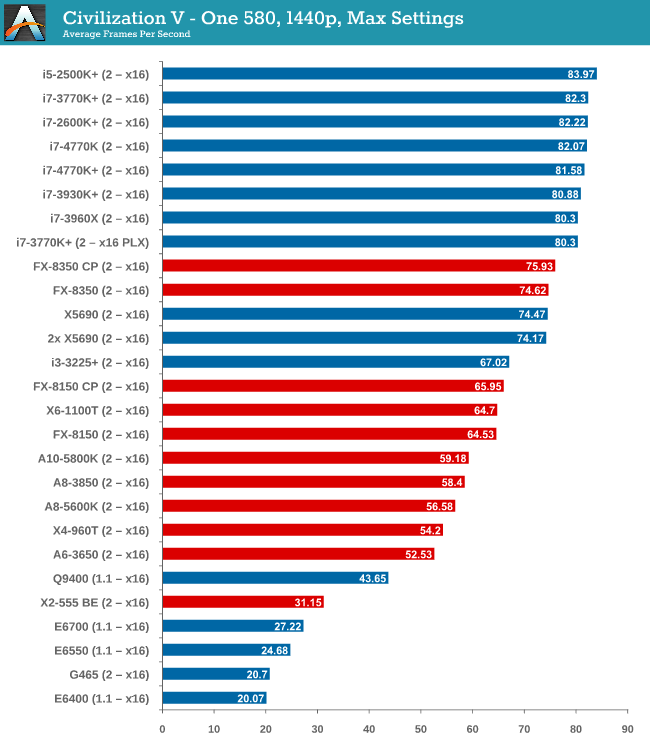

One 580

While the top-end Intel processors again take the lead, an interesting point is that, now that all the values are on PCIe 2.0 for comparison, the non-hyperthreaded 2500K takes the top spot, 10% higher than the FX-8350.

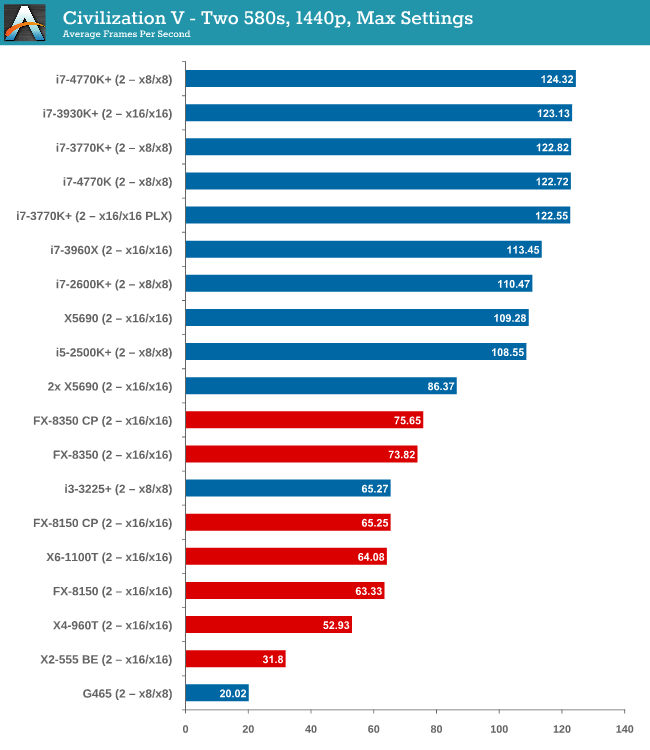

Two 580s

We have another Intel/AMD split, by virtue of the fact that none of the AMD processors scaled above the first GPU. On the Intel side, you need at least an i5-2500K to see scaling, similar to what we saw with the 7970s.

Civilization V conclusion

Intel processors are the clear winner here, though no single one stands out over the others. Having PCIe 3.0 seems to be the deciding advantage in Civilization V, but in most cases scaling is still out of the window unless you have a monster machine.

116 Comments


TheJian - Thursday, June 6, 2013 - link

http://www.tomshardware.com/reviews/a10-6700-a10-6...

Check the toms A10-6800 review. With only a 6670D card i3-3220 STOMPS the A10-6800k with the same 6670 radeon card in 1080p in F1 2012. 68fps to 40fps is a lot right? Both chips are roughly $145. Skyrim shows 6800k well, but you need 2133memory to do it. But faster Intel cpu's will leave this in the dust with a better gpu anyway.

http://www.guru3d.com/articles_pages/amd_a10_6800k...

You can say 100fps is a lot in far cry2 (it is) but you can see how a faster cpu is NOT limiting the 580 GTX here as all resolutions run faster. The i7-4770 allows GTX 580 to really stretch its legs to 183fps, and drops to 132fps at 1920x1200. The FX 8350 however is pegged at 104 for all 4 resolutions. Even a GTX 580 is held back, never mind what you'd be doing to a 7970ghz etc. All AMD cpu's here are limiting the 580GTX while the Intel's run up the fps. Sure there are gpu limited games, but I'd rather be using the chip that runs away from slower models when this isn't the case. From what all the data shows amongst various sites, you'll be caught with your pants down a lot more than anandtech is suggesting here. Hopefully that's enough games for everyone to see it's far more than Civ5 and even with different cards affecting things. If both gpu sides double their gpu cores, we could have a real cpu shootout in many things at 1440p (and of course below this they will all spread widely even more than I've shown with many links/games).

roedtogsvart - Tuesday, June 4, 2013 - link

Hey Ian, how come no Nehalem or Lynnfield data points? There are a lot of us on these platforms who are looking at this data to weigh vs. the cost of a Haswell upgrade. With the ol' 775 geezers represented it was disappointing not to see 1366 or 1156. Superb work overall however!

roedtogsvart - Tuesday, June 4, 2013 - link

Other than 6 core Xeon, I mean...

A5 - Tuesday, June 4, 2013 - link

Hasn't had time to test it yet, and hardware availability. He covers that point pretty well in this article and the first one.

chizow - Tuesday, June 4, 2013 - link

Yeah I understand and agree, would definitely like to see some X58 and Kepler results.

ThomasS31 - Tuesday, June 4, 2013 - link

Seems 1440p is too demanding on the GPU side to show the real gaming difference between these CPUs.

Is 1440p that common in gaming these days?

I have the impression (from my CPU change experiences) that we would see different differences at 1080p for example.

A5 - Tuesday, June 4, 2013 - link

Read the first page?

ThomasS31 - Tuesday, June 4, 2013 - link

Sure. Though still for single GPU, it would be a wiser choice to be "realistic" and do 1080p that is more common (on single monitor average Joe gamer type of scenario).

And go 1440p (or higher) for multi GPUs and enthusiast.

The purpose of the article is choosing a CPU and that needs to show some sort of scaling in near real life scenarios, but if the GPU kicks in from start it will not be possible to evaluate the CPU part of the performance equation in games.

Or maybe it would be good to show some sort of combined score from all the tests, so the Civ V and other games show some differentiation at last in the recommendation as well, sort of.

core4kansan - Tuesday, June 4, 2013 - link

The G2020 and G860 might well be the best bang-for-buck cpus, especially if you tested at 1080p, where most budget-conscious gamers would be anyway.

Termie - Tuesday, June 4, 2013 - link

Ian,

A couple of thoughts for you on methodology:

(1) While I understand the issue of MCT is a tricky one, I think you'd be better off just shutting it off, or if you test with it, noting the actual core speeds that your CPUs are operating at, which should be 200MHz above nominal Turbo.

(2) I don't understand the reference to an i3-3225+, as MCT should not have any effect on a dual-core chip, since it has no Turbo mode.

(3) I understand the benefit of using time demos for large-scale testing like what you're doing, but I do think you should use at least one modern game. I'd suggest replacing Metro2033, which has incredibly low fps results due to a lack of engine optimization, with Tomb Raider, which has a very simple, quick, and consistent built-in benchmark.

Thanks for all your hard work to add to the body of knowledge on CPUs and gaming.

Termie