The Xbox One: Hardware Analysis & Comparison to PlayStation 4

by Anand Lal Shimpi on May 22, 2013 8:00 AM EST

Power & Thermals

Microsoft made a point to focus on the Xbox One’s new power states during its introduction. Remember that when the Xbox 360 was introduced, power gating wasn’t present in any shipping CPU or GPU architectures. The Xbox One (and likely the PlayStation 4) can power gate unused CPU cores. AMD’s GCN architecture supports power gating, so I’d assume that parts of the GPU can be power gated as well. Dynamic frequency/voltage scaling is also supported. The result is that we should see a good dynamic range of power consumption on the Xbox One, compared to the Xbox 360’s more on/off nature.
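To get a feel for why dynamic frequency/voltage scaling widens the power range, here is a minimal sketch using the standard first-order model in which dynamic power scales with voltage squared times frequency. The operating points are hypothetical values chosen for illustration, not Xbox One measurements, and the model ignores leakage (which power gating addresses separately).

```python
def relative_dynamic_power(voltage_scale: float, frequency_scale: float) -> float:
    """Dynamic power relative to a baseline, using the first-order model P ~ C * V^2 * f."""
    return voltage_scale ** 2 * frequency_scale


# Hypothetical operating points, expressed as fractions of peak voltage and frequency.
operating_points = {
    "peak": (1.00, 1.00),
    "light load": (0.85, 0.60),
    "near idle": (0.70, 0.30),
}

for name, (v, f) in operating_points.items():
    share = relative_dynamic_power(v, f)
    print(f"{name:>10}: {share:.0%} of peak dynamic power")
```

Because voltage enters squared, lowering voltage and frequency together cuts power much faster than lowering frequency alone.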

AMD’s Jaguar is quite power efficient, capable of idle power in the low single digits of watts, so I would expect far lower idle power consumption than even the current slim Xbox 360 (50W would be easy, 20W should be doable for truly idle). Under heavy gaming load I’d expect to see higher power consumption than the current Xbox 360, but still less than the original 2005 Xbox 360.

Compared to the PlayStation 4, Microsoft should have the cooler running console under load. Fewer GPU ALUs and lower power memory don’t help with performance but do at least offer one side benefit.

OS

The Xbox One is powered by two independent OSes running on a custom version of Microsoft’s Hyper-V hypervisor. Microsoft made the hypervisor very lightweight, and created hard partitions of system resources for the two OSes that run on top of it: the Xbox OS and the Windows kernel.

The Xbox OS is used to play games, while the Windows kernel effectively handles all apps (as well as things like some of the processing for Kinect inputs). Since both OSes are just VMs on the same hypervisor, they are both running simultaneously all of the time, enabling seamless switching between the two. With much faster hardware and more cores (8 vs 3 in the Xbox 360), Microsoft can likely dedicate Xbox 360-like CPU performance to the Windows kernel while running games without any negative performance impact. Transitioning in/out of a game should be very quick thanks to this architecture. It makes a ton of sense.

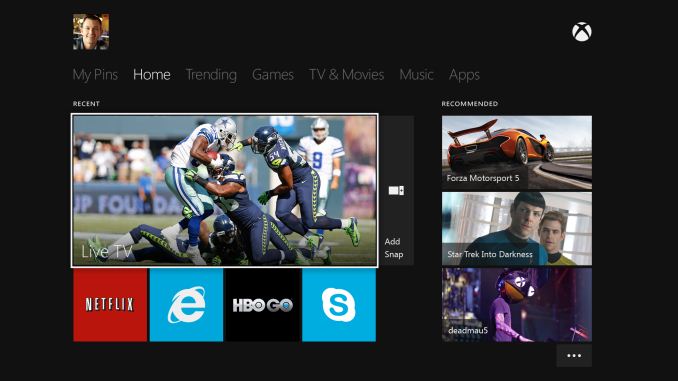

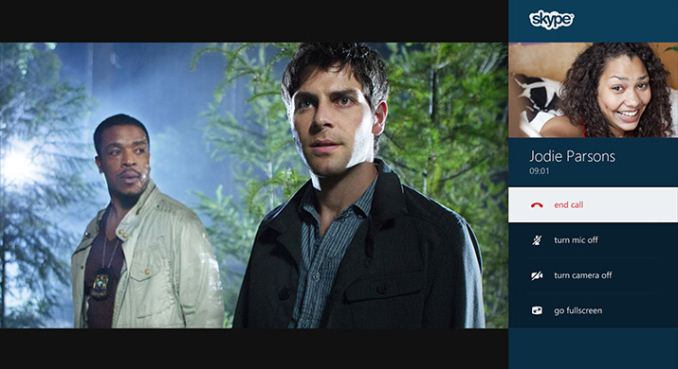

Similarly, you can now multitask with apps. Microsoft enabled Windows 8-like multitasking where you can snap an app to one side of the screen while watching a video or playing a game on the other.

I’d like to know more about how the hard partitioning of resources works. The easiest thing would be to dedicate a Jaguar compute module to each OS, but that might end up being overkill for the Windows kernel and insufficient for some gaming workloads. I suspect ~1GB of system memory ends up being carved off for Windows.
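As a rough illustration of what a hard partition means, the sketch below models the speculated split (one four-core Jaguar module per OS, about 1GB of memory for Windows) and checks that no core is shared and memory is not oversubscribed. Everything here is hypothetical: the `Partition` type, the `validate` helper, the 8GB memory total and the split itself are assumptions, not Microsoft's actual configuration.

```python
from dataclasses import dataclass

TOTAL_CORES = 8      # 8 CPU cores, per the comparison with the Xbox 360 above
TOTAL_MEMORY_GB = 8  # assumption: total system memory is not stated in this section


@dataclass(frozen=True)
class Partition:
    """A hypothetical fixed slice of the machine handed to one guest OS."""
    name: str
    cores: frozenset
    memory_gb: float


def validate(partitions):
    """Raise ValueError if partitions share a core or exceed the machine's resources."""
    valid_cores = set(range(TOTAL_CORES))
    claimed = set()
    for p in partitions:
        if not p.cores <= valid_cores:
            raise ValueError(f"{p.name}: core index out of range")
        overlap = claimed & p.cores
        if overlap:
            raise ValueError(f"{p.name}: cores {sorted(overlap)} already assigned")
        claimed |= p.cores
    if sum(p.memory_gb for p in partitions) > TOTAL_MEMORY_GB:
        raise ValueError("memory oversubscribed")


# Speculated split: one four-core module per OS, ~1GB carved off for Windows.
game_os = Partition("Xbox OS", frozenset(range(0, 4)), 7.0)
app_os = Partition("Windows kernel", frozenset(range(4, 8)), 1.0)
validate([game_os, app_os])
print("partitions are disjoint and fit in the machine")
```

The point of a hard partition is that this check is done once, up front: neither OS can borrow the other's cores or memory at runtime, which is what keeps game performance predictable while apps run.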

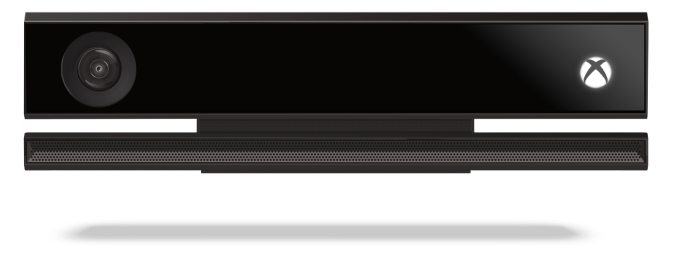

Kinect & New Controller

All Xbox One consoles will ship with a bundled Kinect sensor. Game console accessories generally don’t do all that well if they’re optional. Kinect seemed to be the exception to the rule, but Microsoft is very focused on Kinect being a part of the Xbox going forward so integration here makes sense.

The One’s introduction was done entirely via Kinect enabled voice and gesture controls. You can even wake the Xbox One from a sleep state using voice (say “Xbox on”), leveraging Kinect and good power gating at the silicon level. You can use large two-hand pinch and stretch gestures to quickly move in and out of the One’s home screen.

The Kinect sensor itself is one of 5 semi-custom silicon elements in the Xbox One - the other four are: SoC, PCH, Kinect IO chip and Blu-ray DSP (read: the end of optical drive based exploits). In the One’s Kinect implementation Microsoft goes from a 640 x 480 sensor to 1920 x 1080 (I’m assuming 1080p for the depth stream as well). The camera’s field of view was increased by 60%, allowing support for up to 6 recognized skeletons (compared to 2 in the original Kinect). Taller users can now get closer to the camera thanks to the larger FOV. Similarly, the sensor can be used in smaller rooms.

The Xbox One will also ship with a redesigned wireless controller with vibrating triggers.

Thanks to Kinect's higher resolution and more sensitive camera, the console should be able to identify who is gaming and automatically pair the user to the controller.

TV

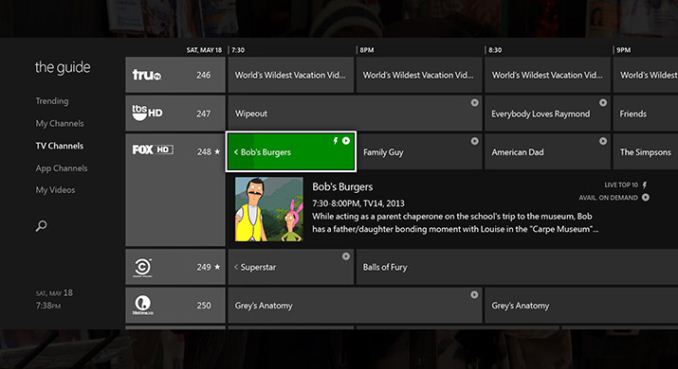

The Xbox One features an HDMI input for cable TV passthrough (from a cable box or some other tuner with HDMI out). Content passed through can be viewed with overlays from the Xbox or just as you would if the Xbox wasn’t present. Microsoft built its own electronic program guide that allows you to tune channels by name, not just channel number (e.g. say “Watch HBO”). The implementation looks pretty slick, and should hopefully keep you from having to switch inputs on your TV - the Xbox One should drive everything. Microsoft appears to be doing its best to merge legacy TV with the new world of buying/renting content via Xbox Live. It’s a smart move.

One area where Microsoft is being a bit more aggressive is in its work with the NFL. Microsoft demonstrated fantasy football integration while watching NFL passed through to the Xbox One.

245 Comments

tipoo - Wednesday, May 22, 2013 - link

I wonder how close the DDR3 plus small fast eSRAM can get to the GDDR5's peak performance from the PS4. The GDDR5 will be better in general for the GPU no doubt, but how much will be offset by the eSRAM? And how much will GDDR5's high latency hurt the CPU in the PS4?

Braincruser - Wednesday, May 22, 2013 - link

The CPU is running at a low frequency, ~1.6 GHz, which is half the frequency of most mainstream processors. And the GDDR5's latency shouldn't be more than double the DDR3 latency. So in effect the latency stays the same, relatively speaking.

MrMilli - Wednesday, May 22, 2013 - link

GDDR5 actually has around 8-10x worse latency compared to DDR3. So the CPU in the PS4 is going to be hurt. Everybody's talking about bandwidth but the Xbox One is going to have such a huge latency advantage that maybe in the end it's going to be better off.

mczak - Wednesday, May 22, 2013 - link

gddr5 having much worse latency is a myth. The underlying memory technology is all the same after all, just the interface is different. Though yes, memory controllers of gpus are more optimized for bandwidth rather than latency, but that's not inherent to gddr5. The latency may be very slightly higher, but it probably won't be significant enough to be noticeable (no way for a factor of even 2, let alone 8 as you're claiming).

I don't know anything about the specific memory controller implementations of the PS4 or Xbox One (well other than one using ddr3 the other gddr5...) but I'd have to guess latency will be similar.

shtldr - Thursday, May 23, 2013 - link

Are you talking latency in cycles (i.e. relative to memory's clock rate) or latency in seconds (absolute)? Latency in cycles is going to be worse, latency in seconds is going to be similar. If I understand it correctly, the absolute (objective) latency expressed in seconds is the deciding factor.

MrMilli - Thursday, May 23, 2013 - link

I got my info from Beyond3D but I went to dig into whitepapers from Micron and Hynix and it seems that my info was wrong.

Micron's DDR3 PC2133 has a CL14 read latency specification but possibly set as low as CL11 on the Xbox. Hynix's GDDR5 (I don't know which brand GDDR5 the PS4 will use but they'll all be more or less the same) has a CL18 up to CL20 for GDDR5-5500.

So even though this doesn't give actual latency information since that depends a lot on the memory controller, it probably won't be worse than 2x.
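To make the cycles-versus-seconds distinction in this thread concrete, here is a minimal sketch that converts the CL figures quoted above into nanoseconds. It assumes CL is counted in command-clock cycles, and that the command clock is the data rate divided by 2 for DDR3 and by 4 for GDDR5. The function name is hypothetical, and the result covers only the CAS component, not the memory controller or the rest of the access path.

```python
def cas_latency_ns(cl_cycles: int, data_rate_mtps: float, transfers_per_clock: int) -> float:
    """Convert a CAS latency in command-clock cycles into nanoseconds."""
    command_clock_mhz = data_rate_mtps / transfers_per_clock
    return cl_cycles / command_clock_mhz * 1000.0


# (label, CL in cycles, data rate in MT/s, transfers per command clock)
configs = [
    ("DDR3-2133 CL14", 14, 2133, 2),
    ("DDR3-2133 CL11", 11, 2133, 2),
    ("GDDR5-5500 CL18", 18, 5500, 4),
    ("GDDR5-5500 CL20", 20, 5500, 4),
]

for label, cl, rate, transfers in configs:
    print(f"{label}: {cas_latency_ns(cl, rate, transfers):.1f} ns")
```

Under these assumptions, CL14 on DDR3-2133 and CL18 on GDDR5-5500 both work out to roughly 13 ns, which is consistent with the point that absolute latency ends up similar even though the cycle counts differ.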

tipoo - Monday, May 27, 2013 - link

Nowhere near as bad as I thought GDDR5 would be given what everyone is saying about it to defend DDR3, and given that it runs at such a high clock rate the CL effect will be reduced even more (that's measured in clock cycles, right?).

Riseer - Sunday, June 23, 2013 - link

For game performance, GDDR5 has no equal atm. There is a reason why it's used in GPUs. MS is building a media center, while Sony is building a gaming console. Sony won't need to worry so much about latency for a console that puts games first and everything else second. Overall PS4 will play games better than Xbone. Also eSRAM isn't a good thing; the only reason why Sony didn't use it is because it would complicate things more than they should be. This is why Sony went with GDDR5: it's a much simpler design that will streamline everything. This time around it will be MS with the more complicated console.

Riseer - Sunday, June 23, 2013 - link

Also let's not forget you only have 32MB worth of eSRAM. At 1080p devs will push for more demanding effects. On PS4 they have 8 gigs of RAM that has around 70GB/s more bandwidth. Since DDR3 isn't good for doing graphics, that only leaves 32MB of true VRAM. That said, Xbone can use the DDR3 RAM for graphics, the issue being that DDR3 has low bandwidth. MS had no choice but to use eSRAM to claw back some performance.

CyanLite - Sunday, May 26, 2013 - link

I've been a long-term Xbox fan, but the silly Kinect requirement scares me. It's only a matter of time before somebody hacks that. And I'm a casual sit-down kind of gamer. Who wants to stand up and wave arm motions playing Call of Duty? Or shout multiple voice commands that are never recognized the first time around?

If PS4 eliminates the camera requirement, gets rid of the phone-home Internet connections, and lets me buy used games then I'm willing to reconsider my console loyalty.