Choosing a Gaming CPU: Single + Multi-GPU at 1440p, April 2013

by Ian Cutress on May 8, 2013 10:00 AM EST

Civilization V

A game that has plagued my testing over the past twelve months is Civilization V. Being on the older 12.3 Catalyst drivers was somewhat of a nightmare, giving no scaling, and as a result I dropped it from my test suite after only a couple of reviews. With the later drivers used for this review, the situation has improved but only slightly, as you will see below. Civilization V seems to run into a scaling bottleneck very early on, and any additional GPU allocation only causes worse performance.

Our Civilization V testing uses Ryan’s GPU benchmark test all wrapped up in a neat batch file. We test at 1440p, and report the average frame rate of a 5 minute test.

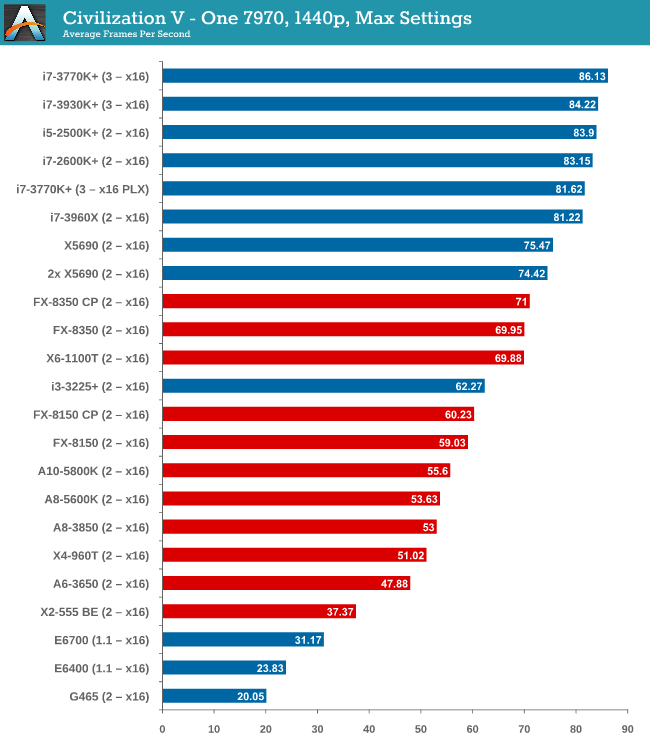

One 7970

Civilization V is the first game where we see a gap when comparing processor families. A big part of what makes Civ5 perform at the best rates seems to be PCIe 3.0, followed by CPU performance – our PCIe 2.0 Intel processors are a little behind the PCIe 3.0 models. By virtue of not having a PCIe 3.0 AMD motherboard in for testing, the bad rap falls on AMD until PCIe 3.0 becomes part of their main game.

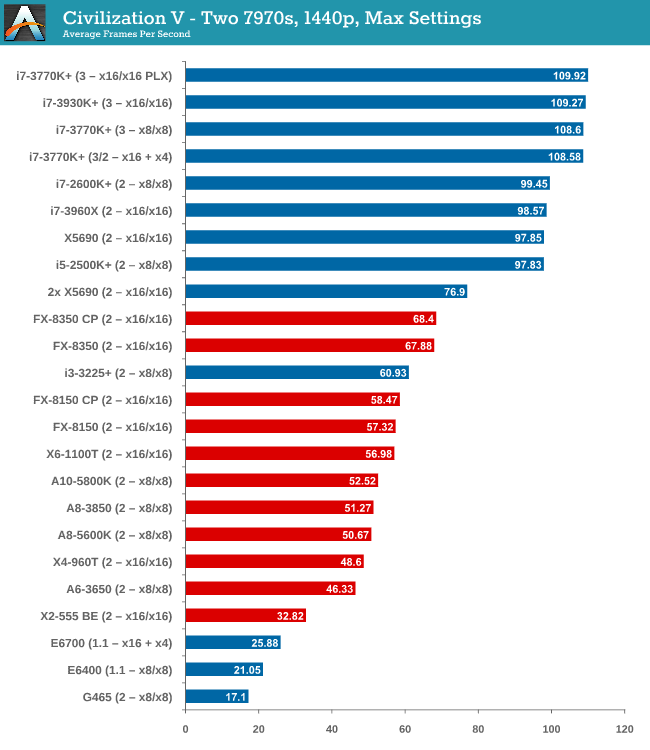

Two 7970s

The power of PCIe 3.0 is more apparent with two 7970 GPUs. However, it is worth noting that only the i5-2500K and faster processors actually improved their performance with the second GPU. Everything else stays relatively similar.

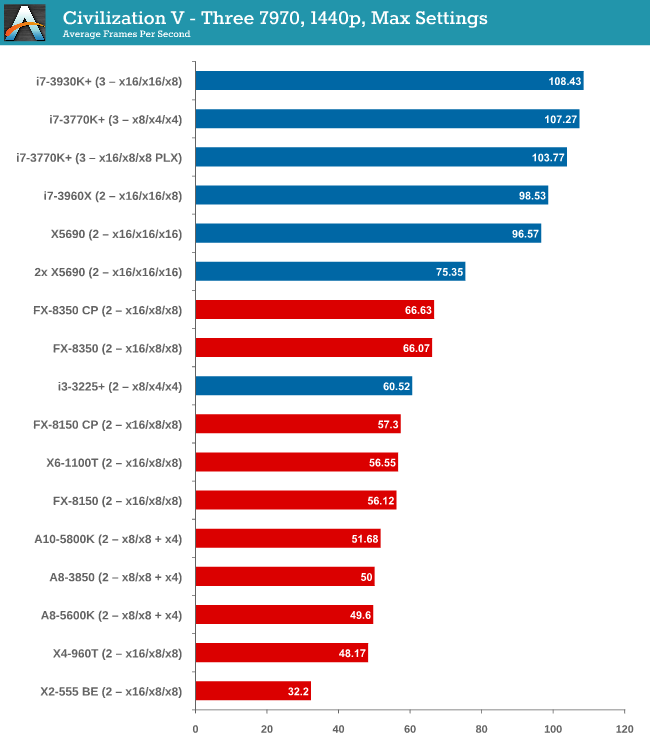

Three 7970s

More cores and PCIe 3.0 are winners here, but no GPU configuration has scaled above two GPUs.

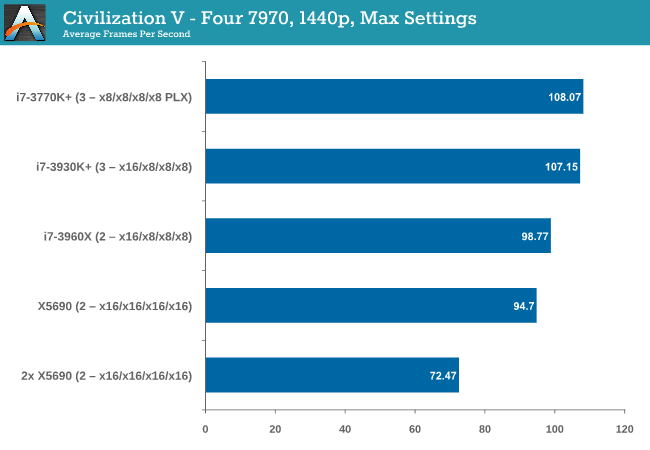

Four 7970s

Again, no scaling.

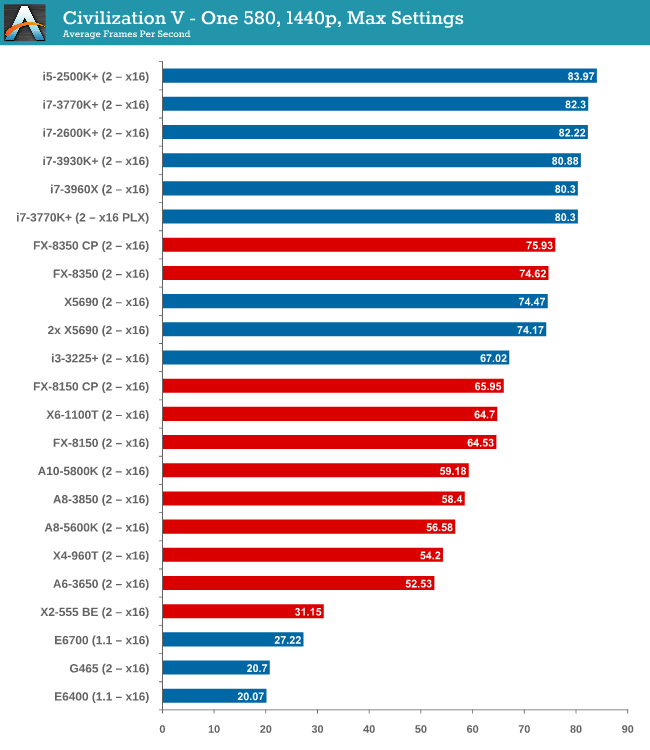

One 580

While the top-end Intel processors again take the lead, an interesting point is that now that we have all PCIe 2.0 values for comparison, the non-hyperthreaded 2500K takes the top spot, 10% higher than the FX-8350.

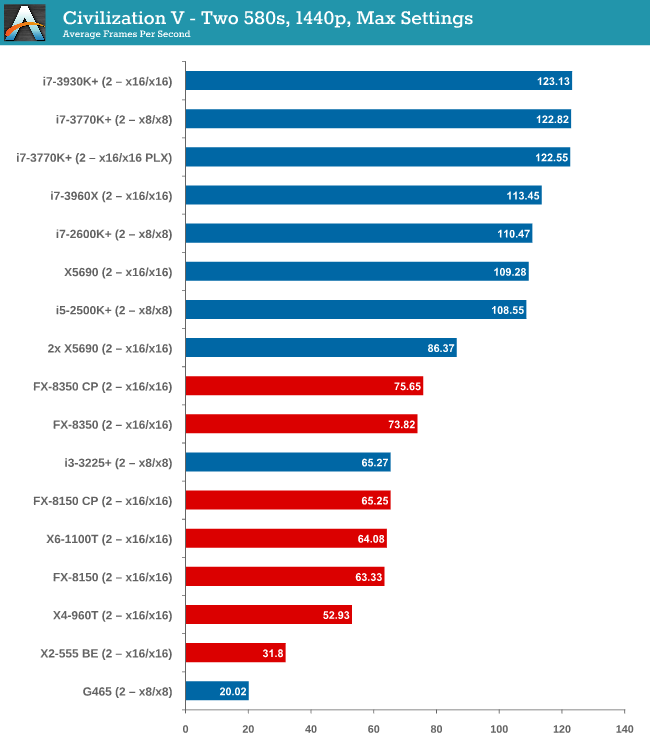

Two 580s

We have another Intel/AMD split, by virtue of the fact that none of the AMD processors scaled above the first GPU. On the Intel side, you need at least an i5-2500K to see scaling, similar to what we saw with the 7970s.

Civilization V conclusion

Intel processors are the clear winner here, though no single model stands out over the others. Having PCIe 3.0 seems to be the positive point for Civilization V, but in most cases scaling is still out of the window unless you have a monster machine under your belt.

242 Comments


TheQweaker - Friday, May 10, 2013 - link

Just in case, here is a pointer to the nVidia GPU AI Path finding in the developer zone: https://developer.nvidia.com/gpu-ai-path-finding

And here is the title of a 2011 GPU AI Planning paper (research; not yet in a game): "Exploiting the Computational Power of the Graphics Card: Optimal State Space Planning on the GPU". You should be able to find the PDF on the web.

My 2 cents is that it's a good topic for a final paper.

-- The Qweaker.

yougotkicked - Friday, May 10, 2013 - link

Thanks again, I think I will be doing GPU AI as my final paper, probably try to implement the A* family as massively parallel, or maybe a local beam search using hundreds of hill-climbing threads.

TheQweaker - Saturday, May 11, 2013 - link

Nice project. 2 more cents.

Keep it simple is the best advice. It's better to have a running algorithm than none, even if it's slow.

Also, ask your advisor whether he'd want you to compare with a CPU implementation of yours in order to evaluate the pros and cons between your sequential implementation and your // implementation. I did NOT write "evaluate gains from seq to //" as GPU programming is currently not fully understood, probably not even by nVidia engineers.

Finally, here is a book title: "CUDA Programming: A Developer's Guide to Parallel Computing with GPUs". But there are many others these days.

OK. That w

TheQweaker - Saturday, May 11, 2013 - link

as my last post.

-- The Qweaker.

(sorry for the cut, I wrongly clicked on submit)

yougotkicked - Monday, May 13, 2013 - link

Thanks a lot for all your input, I intend to evaluate not only the advantages of GPU computing, but its weak points as well, so I'll be sure to demonstrate the differences between a sequential algorithm, a parallel CPU algorithm, and a massively parallel GPU algorithm.

Azusis - Wednesday, May 8, 2013 - link

Could you test the Q6600 and i7-920 in your next roundup? I have many PC gaming friends, and we all seem to have a Q6600, i7-920, or 2500k in our rigs. Thanks! Great job on the article.

IanCutress - Wednesday, May 8, 2013 - link

I have a Q9400 coming in soon from family - Getting one of the Nehalem/Westmere range is definitely on my to-do list for the next update :)

sonofgodfrey - Thursday, May 9, 2013 - link

I too have a Q6600, but it would be interesting to see the high end (non-extreme edition) Core 2s as well: E8600 & Q9650. Just for yucks, perhaps a socket 775 Pentium 4 could also make an appearance? :)

gonks - Wednesday, May 8, 2013 - link

i knew it from some time ago, but this proves once again that it's time to upgrade my good old c2d (conroe) E6600 @ 3.2GHz

Quizzical - Wednesday, May 8, 2013 - link

You've got a lot of data there. And it's good data if your main purpose is to compare a Radeon HD 7970 to a GeForce GTX 580. Unfortunately, most of it is worthless if you're trying to isolate CPU performance, which is the ostensible purpose of the article. You've gone far out of your way to try to make games GPU-limited so that you wouldn't be able to tell what the various CPUs can do when they're the main limiting factors.

Loosely, the CPU has to do any work to run a game that isn't done by the GPU. The contents of this can vary wildly from game to game. Unless you're using DirectX 11 multithreaded rendering, only one thread can communicate with the video card at a time. But that one rendering thread mostly consists of passing data to the video card, so you don't do much in the way of real computations there. You do sort some things so that you don't have to switch programs, textures, and so forth more often than necessary, though you can have a separate sorting thread if you're (probably unreasonably) worried that this is going to mean too much work for the rendering thread.

Actually determining what data needs to be passed to the video card can comprise the bulk of the CPU work that a game needs to do. But this portion is mostly trivial to scale to as many threads as you care to--at least within reason. It's a completely straightforward producer-consumer queue with however many "producer" threads you want and the rendering thread as the single "consumer" thread that takes the data set up by other threads and passes it along to the video card.
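As a rough illustration of the producer-consumer arrangement described above, here is a minimal Python sketch. It is not taken from any real engine: the names `producer`, `render_thread`, and `run_frame` are hypothetical, the "draw command" is a placeholder tuple, and appending to a list stands in for the actual submission to the video card. Python threads also share one interpreter lock, so this shows the structure of the queue rather than a real speedup.

```python
import queue
import threading


def producer(worker_id, objects, out_queue):
    """Prepare placeholder draw commands for one slice of the scene."""
    for obj in objects:
        # Stand-in for real per-object CPU work (transforms, culling, ...).
        out_queue.put((worker_id, obj, obj * 2))
    out_queue.put(None)  # sentinel: this producer has finished


def render_thread(in_queue, num_producers, submitted):
    """Single consumer: the only thread that 'talks' to the video card."""
    finished = 0
    while finished < num_producers:
        item = in_queue.get()
        if item is None:
            finished += 1
            continue
        submitted.append(item)  # stand-in for a GPU submission call


def run_frame(scene, num_producers=4):
    """Split the scene across producers and funnel results to one consumer."""
    commands = queue.Queue()
    submitted = []
    slices = [scene[i::num_producers] for i in range(num_producers)]

    consumer = threading.Thread(
        target=render_thread, args=(commands, num_producers, submitted)
    )
    workers = [
        threading.Thread(target=producer, args=(i, part, commands))
        for i, part in enumerate(slices)
    ]

    consumer.start()
    for w in workers:
        w.start()
    for w in workers:
        w.join()
    consumer.join()
    return submitted
```

Calling `run_frame(list(range(100)))` returns one placeholder command per object. The order in which commands arrive depends on thread scheduling, which is why a real engine would still sort them before submission.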

Not quite all of the work of setting up data for the GPU is trivial to break into as many threads as necessary, though. At the start of a new frame, you have to figure out exactly where the camera is going to go in that frame. This is likely going to be very fast (e.g., tens or hundreds of microseconds), but it does need to be done before you go compute where everything else is relative to the camera.

While I haven't programmed AI, I'd expect that you could likewise break it up into as many threads as you cared to, as you could "save" the state of the game at some instant in time and have separate threads compute what all AI has to do based on the state of the game at that moment, without needing to know anything about what other game characters were choosing at the same time. Some games are heavy on AI computations, while online games may do essentially no AI computations client-side, so this varies wildly from game to game.
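The snapshot idea can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the one-dimensional "positions", the rule of stepping away from the nearest other agent, and the names `decide` and `ai_tick` are all invented for the example. It assumes at least two agents.

```python
from concurrent.futures import ThreadPoolExecutor


def decide(agent_index, snapshot):
    """Choose one agent's next position by reading only the frozen snapshot."""
    me = snapshot[agent_index]
    others = [p for i, p in enumerate(snapshot) if i != agent_index]
    nearest = min(others, key=lambda p: abs(p - me))
    # Hypothetical rule: step one unit away from the nearest other agent.
    step = 1 if me >= nearest else -1
    return me + step


def ai_tick(positions, workers=4):
    """Compute every agent's move in parallel from the same saved state."""
    snapshot = tuple(positions)  # immutable copy: the "saved" game state
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map returns results in agent order, whatever the thread timing.
        return list(pool.map(lambda i: decide(i, snapshot), range(len(snapshot))))
```

Because every call to `decide` reads the same immutable tuple and writes nothing shared, the result does not depend on how many workers are used or in what order they run.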

A game engine may do a lot of other things besides these, such as processing inputs, loading data off of the hard drive, sending data over the Internet, or whatever. Some such things can't be readily scaled to many CPU cores, but if you count by CPU work necessary, few games will have all that much stuff to do other than setting up data for the GPU and computing AI.

But most of the work that a CPU has to do doesn't care what graphical settings you're using. Anything that isn't part of the graphics engine certainly doesn't care. The only parts of the CPU side of a game engine that care what monitor resolution you're using are likely to be a handful of lines to set the resolution when you change it and a few lines to check whether an object is off the camera and therefore doesn't need to be processed in that particular frame--and culling such objects is likely done mostly to save on the GPU load. Any settings that can be adjusted in video drivers (e.g., anti-aliasing or anisotropic filtering) are done almost entirely on the video card and carry a negligible CPU load.

Thus, if you're trying to isolate CPU performance, you turn down or off settings that don't affect the CPU load. In particular, you want a very low monitor resolution, no anti-aliasing, no anisotropic filtering, and no post-processing effects of any sort. Otherwise, you're just trying to make the game mostly GPU bound, and end up with data that looks like most of what you've collected.

Furthermore, even if you do the measurements properly, there's also the question of whether the games you've chosen are representative of what most people will play. If you grab the games that you usually benchmark for video card reviews, then you're going out of your way to pick games that are unrepresentative. Tech sites like this that review hardware tend to gravitate toward badly-coded games that aren't representative of most of the games that people will play. If this video card gets 200 frames per second at max settings in one game and that video card gets 300, what's the difference in real-world game experience? If you want to differentiate between different video cards, you need games that are more demanding, and simply being really inefficient is one way to do that.

Of course, if you were trying to see how different CPUs affect performance in a mostly GPU-limited game, that can be interesting in an esoteric sense. It would probably tend to favor high single-threaded performance because the only differences you'd be able to pick out are due to things that happen between frames, which is the time that the video card is most likely to be forced to wait on the CPU briefly.

But if you were trying to do that, why not just use a Radeon HD 5450? The question answers itself.

If you would like to get some data that will be more representative of how games handle CPUs, then you'll need to do some things very differently. For starters, use just a single powerful GPU, to avoid any CrossFire or SLI weirdness. A GeForce GTX Titan is ideal, but a Radeon HD 7970 or GeForce GTX 680 would be fine. For that matter, if you're not stupid about picking graphical settings, something weaker like a Radeon HD 7870 or GeForce GTX 660 would probably work just fine. But you need to choose the graphical settings intelligently, by turning down or off any graphical settings that don't affect CPU load. In particular, anti-aliasing, anisotropic filtering, and all post-processing effects should be completely off. Use a fairly low monitor resolution; certainly no higher than 1920x1080, and you could make a good case for 1366x768.

And then don't pick your usual set of games that you use to do video card reviews. You chose those games precisely because they're outliers that won't give a good gauge of CPU performance, so they'll sabotage your measurements if you're trying to isolate CPU performance. Rather, pick games that you rejected from doing video card reviews because they were unable to distinguish between video cards very well. If the results are that in a typical game, this processor can deliver 200 frames per second and that one can do 300, then so be it. If a Core i5-3570K and an FX-6300 can deliver hundreds of frames per second in most games (as is likely if the game runs well on, say, a 2 GHz Core 2 Duo), then you shouldn't shy away from that conclusion.