The Great Equalizer 3: How Fast is Your Smartphone/Tablet in PC GPU Terms

by Anand Lal Shimpi on April 4, 2013 1:00 AM EST

Posted in: Tablets, Smartphones, Mobile, GPUs, SoCs

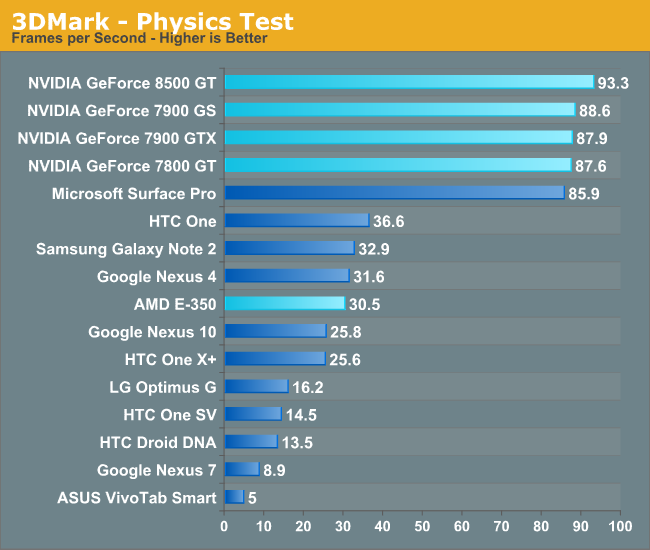

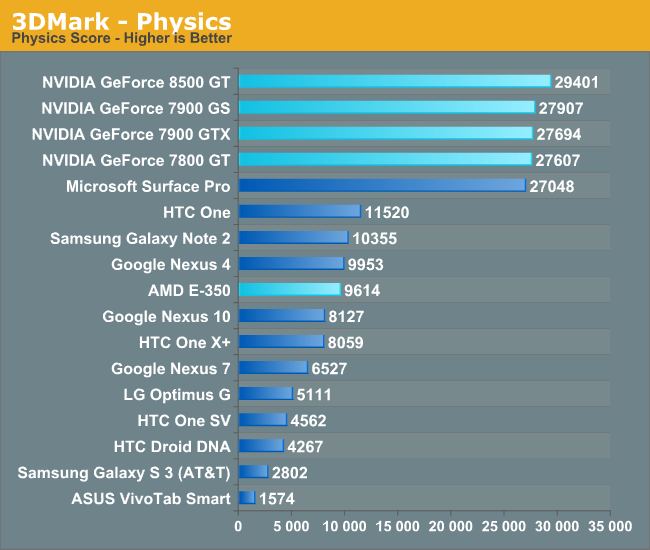

Choosing a Testbed CPU

Although I was glad I could put some of these old GPUs to use (somewhat justifying them occupying space for years in my parts closet), there was the question of what CPU to pair them with. Go too insane on the CPU and I may unfairly tilt performance in favor of these cards. What I decided to do was to simulate the performance of the Core i5-3317U in Microsoft's Surface Pro. That part is a dual-core Ivy Bridge with Hyper Threading enabled (4 threads). Its max turbo is 2.6GHz for a single core, 2.4GHz for two cores. I grabbed a desktop Core i3 2100, disabled turbo, and forced its default clock speed to 2.4GHz. In many cases these mobile CPUs spend a lot of time at or near their max turbo until things get a little too toasty in the chassis. To verify that I had picked correctly I ran the 3DMark Physics test to see how close I came to the performance of the Surface Pro. As the Physics test is multithreaded and should be completely CPU bound, it shouldn't matter what GPU I paired with my testbed - they should all perform the same as the Surface Pro:

Great success! With the exception of the 8500 GT, which for some reason is a bit of an overachiever here (7% faster than Surface Pro), the rest of the NVIDIA cards all score within 3% of the performance of the Surface Pro - despite being run on an open-air desktop testbed.
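The "within 3%" check above is simple percentage arithmetic. A minimal sketch follows, assuming placeholder scores: the numbers and the card names `card_a` through `card_c` are hypothetical and are not read off the chart, and the helper names are my own.

```python
def percent_delta(score, reference):
    """Signed difference of score relative to reference, in percent."""
    return (score - reference) / reference * 100.0


def within_tolerance(score, reference, tolerance_pct=3.0):
    """True if score is within +/- tolerance_pct of the reference score."""
    return abs(percent_delta(score, reference)) <= tolerance_pct


# Hypothetical Physics scores, for illustration only (not the measured results).
reference_score = 10000.0  # stand-in for the Surface Pro result
testbed_scores = {"card_a": 10250.0, "card_b": 9800.0, "card_c": 10700.0}

for name, score in testbed_scores.items():
    delta = percent_delta(score, reference_score)
    verdict = "ok" if within_tolerance(score, reference_score) else "outlier"
    print(f"{name}: {delta:+.1f}% vs reference ({verdict})")
```

With these placeholder values, `card_c` plays the role of the 8500 GT: it lands about 7% above the reference and is flagged, while the other two stay inside the 3% band.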

With these results we also get a quick look at how AMD's Bobcat cores compare against the ARM competitors it may eventually do battle with. With only two Bobcat cores running at 1.6GHz in the E-350, AMD actually does really well here. The E-350's performance is 18% better than the dual-core Cortex A15 based Nexus 10, but it's still not quite good enough to top some of the quad-core competitors here. We could be seeing differences in drivers and/or thermal management with some of these devices since they are far more thermally constrained than the E-350. Bobcat won't surface as a competitor to anything you see here, but its faster derivative (Jaguar) will. If AMD can get Temash's power under control, it could have a very compelling tablet platform on its hands. The sad part in all of this is the fact that AMD seems to have the right CPU (and possibly GPU) architectures to be quite competitive in the ultra mobile space today. If AMD had the capital and relationships with smartphone/tablet vendors, it could be a force to be reckoned with in the ultra mobile space. As we've seen from watching Intel struggle however, it takes more than just good architecture to break into the new mobile world. You need a good baseband strategy and you need the ability to get key design wins.

Enough about what could be, let's look at how these mobile devices stack up to some of the best GPUs from 2004 - 2007.

We'll start with 3DMark. Here we're looking at performance at 720p, which immediately stops some of the cards with 256-bit memory interfaces from flexing their muscles. Never fear, we will have GL/DXBenchmark's 1080p offscreen mode for that in a moment.

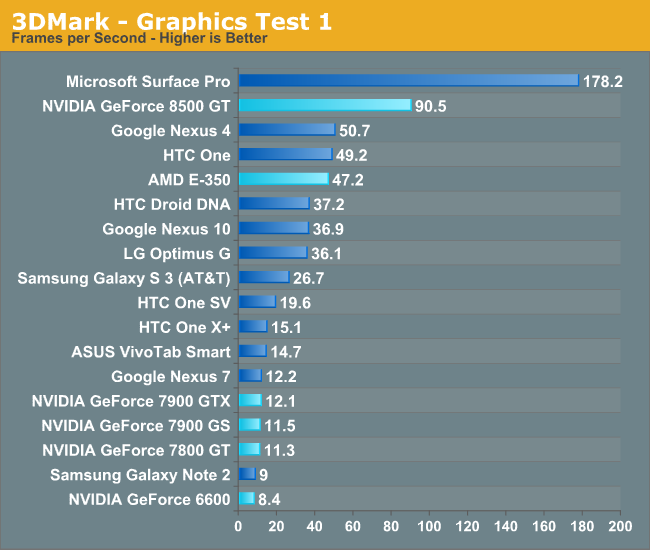

Graphics Test 1

Ice Storm Graphics test 1 stresses the hardware’s ability to process lots of vertices while keeping the pixel load relatively light. Hardware on this level may have dedicated capacity for separate vertex and pixel processing. Stressing both capacities individually reveals the hardware’s limitations in both aspects.

In an average frame, 530,000 vertices are processed leading to 180,000 triangles rasterized either to the shadow map or to the screen. At the same time, 4.7 million pixels are processed per frame.

Pixel load is kept low by excluding expensive post processing steps, and by not rendering particle effects.

Right off the bat you should notice something wonky. All of NVIDIA's G70 and earlier architectures do very poorly here. This test is very heavy on the vertex shaders, but the 7900 GTX and friends should do a lot better than they are. These workloads however were designed for a very different set of architectures. Looking at the unified 8500 GT, we get some perspective. The fastest mobile platforms here (Adreno 320) deliver a little over half the vertex processing performance of the GeForce 8500 GT. The Radeon HD 6310 featured in AMD's E-350 is remarkably competitive as well.

The praise goes both ways of course. The fact that these mobile GPUs can do as well as they are right now is very impressive.

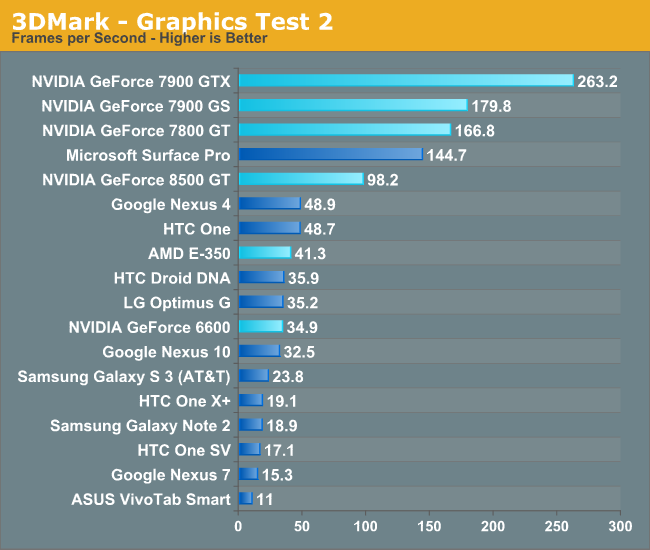

Graphics Test 2

Graphics test 2 stresses the hardware’s ability to process lots of pixels. It tests the ability to read textures, do per pixel computations and write to render targets.

On average, 12.6 million pixels are processed per frame. The additional pixel processing compared to Graphics test 1 comes from including particles and post processing effects such as bloom, streaks and motion blur.

In each frame, an average 75,000 vertices are processed. This number is considerably lower than in Graphics test 1 because shadows are not drawn and the processed geometry has a lower number of polygons.
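To put the two workload descriptions side by side, here is a rough sketch that derives a couple of ratios from the per-frame averages quoted above. The vertex and pixel counts come from the test descriptions; dividing by a 1280x720 frame is my own simplification (some of those pixels may land in shadow maps or intermediate render targets of other sizes), and the frame rate passed to `per_second` is an arbitrary example.

```python
# Per-frame averages quoted in the Ice Storm test descriptions.
WORKLOADS = {
    "Graphics test 1": {"vertices": 530_000, "pixels": 4_700_000},
    "Graphics test 2": {"vertices": 75_000, "pixels": 12_600_000},
}

# Assumption: relate pixel counts to a single 720p frame for scale.
SCREEN_PIXELS_720P = 1280 * 720


def pixels_per_vertex(workload):
    """How many pixels are processed for every vertex processed."""
    return workload["pixels"] / workload["vertices"]


def pixels_per_screen_pixel(workload):
    """Pixels processed relative to the number of pixels in a 720p frame."""
    return workload["pixels"] / SCREEN_PIXELS_720P


def per_second(per_frame, fps):
    """Scale a per-frame count to a per-second rate at a given frame rate."""
    return per_frame * fps


for name, workload in WORKLOADS.items():
    print(
        f"{name}: {pixels_per_vertex(workload):.1f} pixels per vertex, "
        f"{pixels_per_screen_pixel(workload):.1f}x a 720p frame, "
        f"{per_second(workload['vertices'], 30) / 1e6:.1f}M vertices/s at 30 fps"
    )
```

The pixel-to-vertex ratio is roughly 9:1 in Graphics test 1 and 168:1 in Graphics test 2, which is one way to see why the first test leans on vertex processing and the second on pixel processing.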

The data starts making a lot more sense when we look at the pixel shader bound graphics test 2. In this benchmark, Adreno 320 appears to deliver better performance than the GeForce 6600 and once again roughly half the performance of the GeForce 8500 GT. Compared to the 7800 GT (or perhaps 6800 Ultra), we're looking at a bit under 33% of the performance of those cards. The Radeon HD 6310 in AMD's E-350 appears to deliver performance competitive with the Adreno 320.

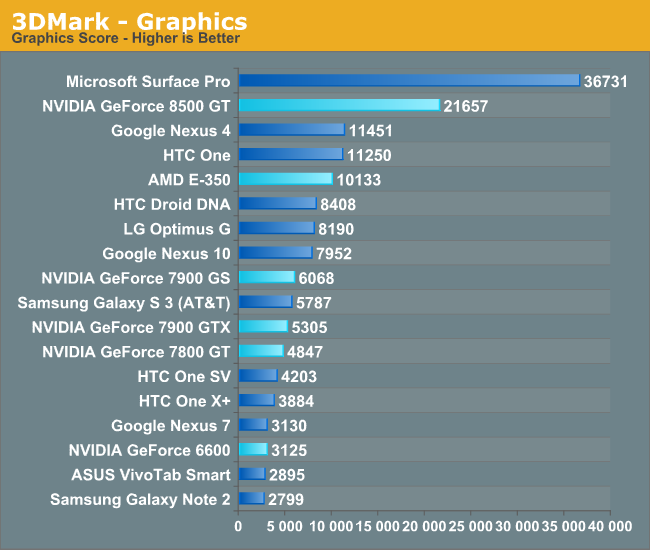

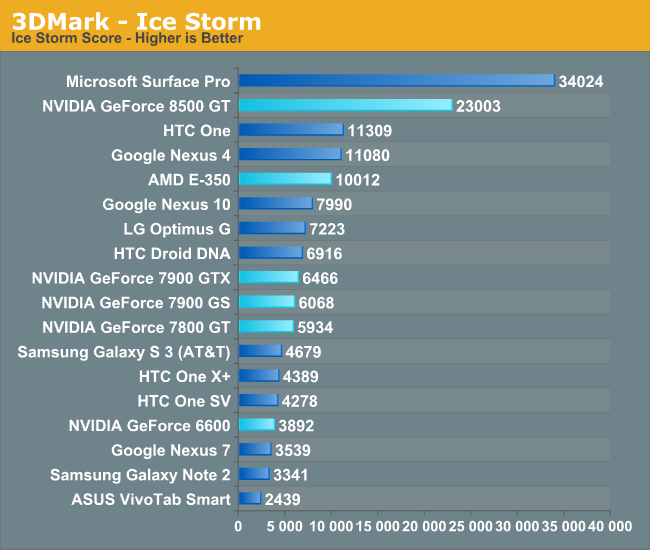

The overall graphics score is a bit misleading given how poorly the G7x and NV4x architectures did on the first graphics test. We can conclude that the E-350 has roughly the same graphics performance as Qualcomm's Snapdragon 600, while the 8500 GT appears to have roughly 2x that. The overall Ice Storm scores pretty much repeat what we've already seen:

Again, the new 3DMark appears to unfairly penalize the older non-unified NVIDIA GPU architectures. Keep in mind that the last NVIDIA driver drop for DX9 hardware (G7x and NV4x) is about a month older than the latest driver available for the 8500 GT.

It's worth pointing out that Ice Storm also makes Intel's HD 4000 look very good, when in reality we've seen varying degrees of competitiveness with discrete GPUs depending on the workload. If 3DMark's Ice Storm test could map to real world gaming performance, it would mean that devices like the Nexus 4 or HTC One would be able to run BioShock 2-like titles at 10x7 in the 20 fps range. As impressive as that would be, this is ultimately the downside of relying on these types of benchmarks to make comparisons - they fundamentally tell us how well these platforms would run the benchmark itself, not how well they would run other games.

At a high level, it looks like we're somewhat narrowing down the level of performance that today's high end ultra mobile GPUs deliver when put in discrete GPU terms. Let's see what GL/DXBenchmark 2.7 tells us.

128 Comments

SPBHM - Thursday, April 4, 2013 - link

Interesting stuff, the HD 4000 completely destroys the 7900 GTX. Considering the PlayStation 3 uses a crippled G70-based GPU, the results they can achieve on a more heavily optimized platform are fantastic...

Also, it would have been interesting to see something from ATI, an X1900 or X1950. I think it would better represent the early 2006 GPUs.

milli - Thursday, April 4, 2013 - link

Yeah agree. I've seen recent benchmarks of a X1950 and it aged much better than the 7900GTX.

Spunjji - Friday, April 5, 2013 - link

Definitely, it had a much more forward-looking shader arrangement; tomorrow's performance cannot sell today's GPU though and I'm not sure AMD have fully learned from that yet.

Th-z - Thursday, April 4, 2013 - link

I'll take these numbers with a grain of salt, 7900 GTX still has the memory bandwidth advantage, about 2x as much, maybe in actual games or certain situations it's faster. Perhaps Anand can do a retro discrete vs today's iGPU review just for the trend, it could be fun as well :)

Wilco1 - Thursday, April 4, 2013 - link

Anand, what exactly makes you claim: "Given that most of the ARM based CPU competitors tend to be a bit slower than Atom"? On the CPU bound physics test the Z-2760 scores way lower than any of the Cortex-A9, A15 and Snapdragon devices (A15 is more than 5 times as fast core for core despite the Atom running at a higher frequency). Even on Sunspider score Atom scores half that of an A15. I am confused, it's not faster on single threaded CPU tasks, not on multi-threaded CPU tasks, and clearly one of the worst on GPU tasks. So where exactly is Atom faster? Or are you talking about the next generation Atoms which aren't out yet???

tech4real - Thursday, April 4, 2013 - link

It's fairly odd to see such a big gap between Atom and A9/A15 in a supposedly CPU bound test. However, the score powerarmour showed several posts earlier on an Atom 330/Ion system seems to give us a good explanation. The physics score of 7951 and 25.2 FPS of his Atom 330/Ion system is pretty much in line with what you'd expect from the Atom CPU: faster than A9, a bit slower than A15. So I would guess that the extremely low Z2760 score is likely due to its driver implementation, and is not truly exercising the CPU on that platform.

Wilco1 - Thursday, April 4, 2013 - link

It may be a driver issue, however it doesn't mean that with better drivers a Z-2760 would be capable of achieving that same score. The Ion Atom uses a lot more power, not just the CPU, but also the separate higher-end GPU and chipset. Besides driver differences, a possibility is that the Z-2760 is thermally throttled to stay within a much lower TDP.

tech4real - Friday, April 5, 2013 - link

The scale of the drop does not look like a typical thermal throttle, so I would lean towards the "driver screw up" theory, particularly considering Intel's poor track record on PowerVR driver support. It would be interesting to see someone digging a bit deeper into this.

islade - Thursday, April 4, 2013 - link

I'd love to see an iPad 3 included in these results. I'm sure many readers have iPad 3s and couldn't justify upgrading to the 4, so I'd love to see where we fit in with all this :)

tipoo - Sunday, April 7, 2013 - link

CPU wise you'd be the exact same as the iPad 2, Mini, and just slightly above the 4S. GPU wise you'd be like the 5.