AMD Comments on GPU Stuttering, Offers Driver Roadmap & Perspective on Benchmarking

by Ryan Smith on March 26, 2013 2:28 AM EST

For as long as I can remember talking about video cards and GPU performance at AnandTech, there has been debate over the type of benchmarks used to represent that performance. In the old days, the debate was mostly manufacturer-driven. Curiously enough, the discourse usually fired up when one manufacturer was at a significant deficit in GPU performance. NVIDIA made a big deal about moving away from timedemos and average frame rates during the early GeForce FX (NV30) days, when its cards might have delivered a decent gaming experience but were slaughtered in most benchmarks. Even Intel advocated for a shift away from most CPU-bound gaming benchmarks back during the early years of the Pentium 4 - again, for obvious reasons.

It’s a shame that these revolutions in gaming performance testing were always associated with underperforming products (and later dropped once the product stack improved in the next generation or two). It’s a shame because there has always been merit in introducing additional metrics in order to provide the most complete picture when it came to gaming performance.

The issue lay mostly dormant over the past several years. Every now and then there’d be a new attempt to revolutionize GPU performance testing, but most failed to gain widespread traction for one reason or another. Broad repeatability, one of the basic tenets of the scientific method, was usually cast aside in pursuit of a lot of these new attempts at performance testing - which ultimately limited acceptance.

A year and a half ago, Scott Wasson over at the Tech Report did something no one since Dr. Pabst was able to do: he actually brought about a revolution in the 3D game benchmarking scene.

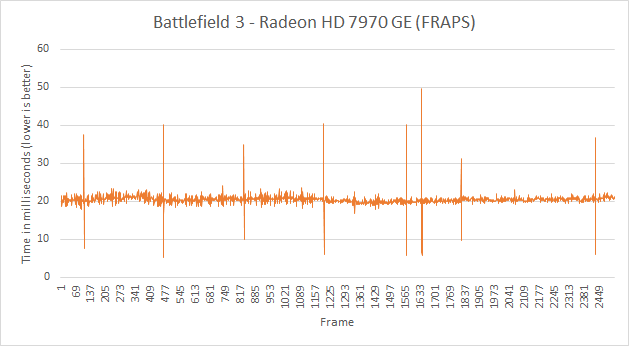

The approach seemed ridiculously simple - we’ve all had the tools for so very long. Scott used FRAPS to record frame times, and would calculate how long every frame in a benchmark took to render. By focusing on individual frame latencies, Scott’s method could better characterize the little hiccups and stutters that would get smoothed out in an average frame rate. With the new method came a bunch of nifty graphs, and the world changed.
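To make the difference between an average frame rate and per-frame latency concrete, here is a minimal Python sketch. It is not Scott's actual tooling: it assumes the input is a list of cumulative per-frame timestamps in milliseconds, the function names are hypothetical, and the two traces are synthetic data invented for illustration.

```python
import math


def frame_times(timestamps_ms):
    """Convert cumulative timestamps (ms) into per-frame durations (ms)."""
    return [b - a for a, b in zip(timestamps_ms, timestamps_ms[1:])]


def average_fps(durations_ms):
    """Classic average frame rate: frames rendered divided by elapsed seconds."""
    return 1000.0 * len(durations_ms) / sum(durations_ms)


def percentile(durations_ms, pct):
    """Nearest-rank percentile of frame time (e.g. pct=99)."""
    ordered = sorted(durations_ms)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def cumulative(durations_ms):
    """Helper for this demo only: build cumulative timestamps from durations."""
    stamps = [0.0]
    for d in durations_ms:
        stamps.append(stamps[-1] + d)
    return stamps


# Synthetic, hypothetical traces: both render 100 frames in 2000 ms.
smooth = [20.0] * 100                   # every frame takes 20 ms
stutter = [10.0] * 96 + [260.0] * 4     # mostly fast, with four long hitches

for name, trace in (("smooth", smooth), ("stutter", stutter)):
    durations = frame_times(cumulative(trace))
    print(name, average_fps(durations), percentile(durations, 99))
```

Both traces report the same 50 fps average, but the 99th percentile frame time is 20 ms for the smooth trace and 260 ms for the stuttering one, which is exactly the kind of hitch an average hides.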

The methodology wasn’t perfect, as FRAPS lacks a holistic view of the 3D rendering pipeline, but it did reveal some surprising issues (in addition to spawning further work that uncovered even more issues on the multi-GPU front). Interestingly enough, many of the issues uncovered by this focus on frame times/latency seemed to primarily impact AMD hardware.

AMD remained curiously quiet as to exactly why its hardware and drivers were so adversely impacted by these new testing methods. While our own foray into evolving GPU testing will come later this week, we had the opportunity to sit down with AMD to understand exactly what’s been going on.

What AMD offered was neither strictly a defense nor merely an explanation of what we’ve been seeing over the past year; the company wanted to sit down and better explain its position. This includes both why AMD’s products have been impacted in the manner they were, and why at the same time (and not unlike NVIDIA) AMD is worried about FRAPS being given more weight than it should be. Ultimately AMD believes that it’s to the benefit of buyers and journalists alike to better understand just what is happening, why it’s happening, and just what the most common tools can measure and are measuring.

What follows is based on our meeting with some of AMD's graphics hardware and driver architects, where they went into depth on all of these issues. In the following pages we’ll get into a high-level explanation of how the Windows rendering pipeline works, why this leads to single-GPU issues, why this leads to multi-GPU issues, and what various tools can measure and see in the rendering process.

103 Comments

Bilna - Saturday, April 6, 2013 - link

False definition of stutter. The main cause of stutter is a dropped frame (simply by moving the 3D camera to face the polygons, shaders, etc.). This can happen on any GPU/CPU. Did Nvidia buy you?

It can happen on video game console too...

It's also caused by a non-optimized engine that doesn't execute its instructions through a render cache to prevent stutter.

I can easily show you that the problem exists on every GPU/CPU, but the fact is that the problem doesn't necessarily come from the GPU/CPU; it's more about how the render path/engine works. Like I said, you can prevent this by using a render cache for the final render. It's a software problem, not a hardware problem...

What about explaining that Nvidia stole work from a certain person? No, you can't talk about that; it would cause problems for your website and your Nvidia contract.

AvonX - Friday, April 12, 2013 - link

You really are an idiot by supporting and defending a failing company.

Dangerous_Dave - Friday, May 10, 2013 - link

I bloody knew it. There were two reasons I moved away from AMD in spite of the on-the-surface better performance per $, and they were 1) Too many of my games wouldn't work properly with some random graphics feature switched on (many hours lost to this) and 2) Nvidia cards just seemed to be more smooth than AMD cards. Vindicated at last! My AMD gaming experience always looked choppy to me even if I was getting 100+fps, whereas Nvidia played more like a console in terms of chocolatey smoothness. Glad I stumped up the extra cash and went Nvidia instead of listening to people talking crap about there being no difference.