Gigabyte GA-7PESH1 Review: A Dual Processor Motherboard through a Scientist’s Eyes

by Ian Cutress on January 5, 2013 10:00 AM EST. Posted in: Motherboards, Gigabyte, C602

Many thanks to...

We must thank the following companies for kindly donating hardware for our test bed:

OCZ for donating the Power Supply and USB testing SSD

Micron for donating our SATA testing SSD

Kingston for donating our ECC Memory

ASUS for donating AMD GPUs

ECS for donating NVIDIA GPUs

Test Setup

| Test Setup | |
|---|---|
| Processor | 2x Intel Xeon E5-2690: 8 Cores, 16 Threads, 2.9 GHz (3.8 GHz Turbo) each |
| Motherboards | Gigabyte GA-7PESH1 |
| Cooling | Intel AIO Liquid Cooler, Corsair H100 |
| Power Supply | OCZ 1250W Gold ZX Series |
| Memory | Kingston 1600 C11 ECC 8x4GB Kit |
| Memory Settings | 1600 C11 |
| Video Cards | ASUS HD7970 3GB, ECS GTX 580 1536MB |
| Video Drivers | Catalyst 12.3, NVIDIA Drivers 296.10 WHQL |
| Hard Drive | Corsair Force GT 60 GB (CSSD-F60GBGT-BK) |
| Optical Drive | LG GH22NS50 |
| Case | Open Test Bed - DimasTech V2.5 Easy |
| Operating System | Windows 7 64-bit |
| SATA Testing | Micron RealSSD C300 256GB |
| USB 2/3 Testing | OCZ Vertex 3 240GB with SATA->USB Adaptor |

Power Consumption

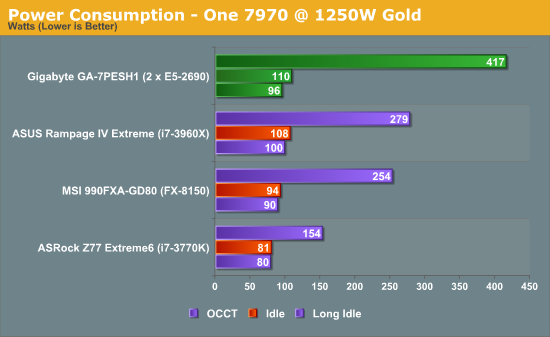

Power consumption was tested on the system as a whole with a wall meter connected to the OCZ 1250W power supply, with a single 7970 GPU installed. This power supply is Gold rated and, since I am in the UK on a 230-240 V supply, it delivers roughly 75% efficiency above 50 W and 90%+ efficiency at 250 W, which makes it suitable for both idle and multi-GPU loading. Reading power at the wall lets us compare how well the UEFI and the board manage power delivery to components under load, and it includes the typical PSU losses due to efficiency. These are the real-world values that consumers may expect from a typical system (minus the monitor) using this motherboard.

Using two E5-2690 processors means a combined TDP of 270 W. If we make the broad assumption that the two processors together draw 270 W under load, this places the rest of the system at around 110-130 W, which is consistent with our idle numbers (PSU efficiency notwithstanding).
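The estimate above is simple subtraction, and a short sketch makes it explicit. It assumes 135 W per processor (half of the 270 W combined figure) and takes the wall reading as an input, since the chart values are not reproduced in the text; the function names and the 400 W example reading are illustrative only.

```python
# Rough power budget: wall reading minus the combined CPU TDP.
# The 135 W per-CPU figure is half of the 270 W combined TDP quoted above.
XEON_E5_2690_TDP_W = 135


def combined_tdp(sockets: int, tdp_per_cpu: float = XEON_E5_2690_TDP_W) -> float:
    """Combined TDP for a multi-socket system, in watts."""
    return sockets * tdp_per_cpu


def remainder_at_wall(wall_watts: float, cpu_watts: float) -> float:
    """Power left for the rest of the system, ignoring PSU losses."""
    return wall_watts - cpu_watts


def remainder_dc(wall_watts: float, cpu_watts: float, efficiency: float) -> float:
    """Same estimate after converting the wall reading to PSU output power."""
    return wall_watts * efficiency - cpu_watts


if __name__ == "__main__":
    cpus = combined_tdp(2)
    wall = 400.0  # hypothetical wall reading under load, in watts
    print(f"CPUs: {cpus} W")
    print(f"Rest of system (no PSU correction): {remainder_at_wall(wall, cpus)} W")
    print(f"Rest of system (90% efficient PSU): {remainder_dc(wall, cpus, 0.90)} W")
```

Correcting for PSU efficiency shrinks the remainder noticeably, which is why the 110-130 W figure is best read as an upper bound.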

POST Time

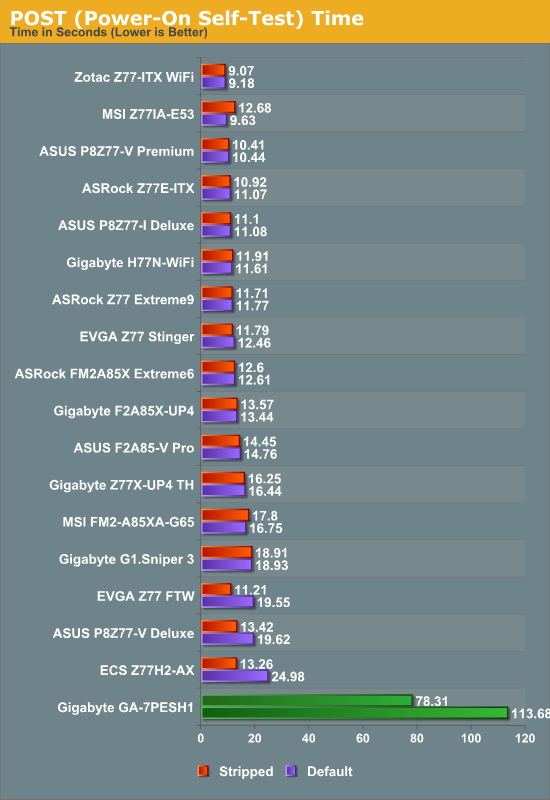

Different motherboards have different POST sequences before an operating system is initialized. A lot of this is dependent on the board itself, and POST boot time is determined by the controllers on board (and the sequence of how those extras are organized). As part of our testing, we are now going to look at the POST Boot Time - this is the time from pressing the ON button on the computer to when Windows starts loading. (We discount Windows loading as it is highly variable given Windows specific features.) These results are subject to human error, so please allow +/- 1 second in these results.

The boot time on this motherboard is far longer than anything I have experienced before. Firstly, when the power supply is switched on, there is a 30 second wait (indicated by a solid green light that turns into a flashing green light) before the motherboard can be switched on. This delay allows the management software to start. Then, after pressing the power switch, there is around 60 seconds before anything appears on the screen. Due to the Intel NICs, the LSI SAS RAID chips and other functionality, there is another 53 seconds before the OS actually starts loading. In total, that is roughly a 2.5 minute wait (about 143 seconds) from enabling power at the wall to a finished POST screen. Stripping the BIOS by disabling the extra controllers gives a sizeable boost, reducing the POST time by 35 seconds.
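As a quick sanity check on those figures, the sketch below adds up the phases quoted above. It assumes the 35 second saving applies to the interval between pressing the power button and the OS starting to load; the constant and function names are illustrative only.

```python
# Boot phases quoted in the text, in seconds.
STANDBY_INIT_S = 30      # PSU switched on -> board ready to be powered up
BUTTON_TO_VIDEO_S = 60   # power button -> first output on screen
VIDEO_TO_OS_S = 53       # first output -> OS starts loading
STRIPPED_SAVING_S = 35   # saving from disabling the extra controllers


def post_time(stripped: bool = False) -> int:
    """Seconds from pressing the power button to the OS starting to load."""
    total = BUTTON_TO_VIDEO_S + VIDEO_TO_OS_S
    return total - STRIPPED_SAVING_S if stripped else total


def wall_to_os(stripped: bool = False) -> int:
    """Seconds from enabling power at the wall to the OS starting to load."""
    return STANDBY_INIT_S + post_time(stripped)


if __name__ == "__main__":
    print(f"POST time, default: {post_time()} s")
    print(f"POST time, stripped: {post_time(stripped=True)} s")
    print(f"Wall to OS, default: {wall_to_os()} s ({wall_to_os() / 60:.1f} min)")
```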

64 Comments


dj christian - Monday, January 14, 2013

No, please! This article should be a one-time thing, or once every two years at most.

nadana23 - Sunday, January 6, 2013

From the looks of the results, some of the benchmarks are HIGHLY sensitive to effective bandwidth per thread (i.e., GDDR5 feeding a GPU stream processor >> DDR3 feeding a Xeon HT core).

However, it must be noted that 8x DIMMs is insufficient to achieve full memory bandwidth on Xeon E5 2S!

I'd suggest throwing a pure memory bandwidth test into the mix to make sure you're actually getting the rated number (51.2GB/s)...

http://ark.intel.com/products/64596/Intel-Xeon-Pro...

... as I strongly suspect your memory config is crippling results.

Dell's 12G config guidelines are as good a place as any to start on this :-

http://en.community.dell.com/cfs-file.ashx/__key/c...

Simply removing one E5-2690 and moving to 1-Package, 8 DIMM config may (counter-intuitively) bench(market) faster... for you.
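For reference, the 51.2 GB/s figure quoted above matches the usual peak-bandwidth formula for one socket: memory channels × transfer rate × bus width. A minimal sketch, assuming four DDR3 channels per E5-2690, DDR3-1600 and a 64-bit (8-byte) channel; the function name is hypothetical, and the result is a theoretical ceiling, not a measurement.

```python
def peak_bandwidth_gbs(channels: int, mega_transfers_per_s: int, bus_bytes: int = 8) -> float:
    """Theoretical peak memory bandwidth in GB/s (1 GB = 10^9 bytes)."""
    # MT/s * bytes per transfer gives MB/s per channel; divide by 1000 for GB/s.
    return channels * mega_transfers_per_s * bus_bytes / 1000


if __name__ == "__main__":
    # Four channels of DDR3-1600 on one socket.
    print(f"Per socket: {peak_bandwidth_gbs(4, 1600)} GB/s")
    # Only two channels populated on that socket.
    print(f"Two channels: {peak_bandwidth_gbs(2, 1600)} GB/s")
```

A measured result well below this ceiling would point to unpopulated channels or a lower memory speed than expected.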

dapple - Sunday, January 6, 2013

Great article, thanks! This is the sort of benchmark I've been wanting to see for quite some time now - simple, brute-force numerics where the code is visible and straightforward. Too many benchmarks are black boxes with processor- and compiler-specific tunes to make manufacturer "X" appear superior to "Y". That said, it would be most illustrative to perform a similar 'mark using vanilla gcc on both MS and *nix OS.

daosis - Sunday, January 6, 2013

It is a long-known issue that Windows may not start after a hardware change, especially a GPU swap (though not always). There is an equally long-known trick. Just before the last power-off, replace the GPU's own driver with Microsoft's basic one. For a GPU that is the "Standard VGA adapter" (Device Manager - Update driver - Browse my computer - Let me pick). In fact, one can replace all hardware-specific drivers in the OS with the equivalent basic ones from MS and then move the hard drive to virtually any system without a fresh install. Bear in mind that after the first boot the OS takes some time to find and install the specific drivers.

jamesf991 - Sunday, January 6, 2013

In the early '70s I was doing very similar simulations using a PDP 11/40 minicomputer. (I can send citations to my publications if anyone is interested.) At Texas Tech and later at Caltech, I simulated systems involving heterogeneous electron transfer kinetics, various chemical reactions in solution, coulostatics, galvanostatics, voltammetry, chronocoulometry, AC voltammetry, migration, double layer effects, solution hydrodynamics (laminar only), etc. Much of this was done on a PDP 11/40, originally with 8K words (= 16K bytes) of core memory. Later the machine was upgraded to 24K words (!), and we got a floating point board and a hard disk drive (5M words, IIRC). My research director probably paid in excess of $50K for the hardware. One cute project was to put a simulation "inside" a nonlinear regression routine to solve for electrode kinetic parameters such as k and alpha. Each iteration of the nonlinear solver required a new simulation -- hand-coding the innermost loops using floating point assembly instructions was a big speedup!

I wonder how the old PDP would stack up against the 3770?

flynace - Monday, January 7, 2013

Do you guys think that once Haswell moves the VRM on package that someone might do a 2 socket mATX board?

Even if it means giving up 2 of the 4 PCIe slots and/or 2 DIMMs per socket, it would be nice to have a high core count standard SFF board for those that need just that.

samsp99 - Monday, January 7, 2013

I found this review interesting, but I don't think this board is really targeted at the HPC market. It seems like it would be good as part of a 2U / 12 + 2 drive system, similar to the Dell C2100. It would make a good virtual host, SQL, active web server etc. Having the 3 mSAS connectors would enable 4 drives each without the need for a SAS expander.

Servers are designed for 99.999% uptime, remote management, and hands-off operation. To achieve that you need redundant power, UPS, networking, storage etc. They also require high airflow, which is noisy and not something you want sitting under your desk. Based on that, it makes sense that the MB is intended for sale to system builders, not your general build-your-own enthusiast.

HW manufacturers are faced with a similar problem to airlines - consumers gravitate to the cheapest price, and so the only real money to be made is selling higher profit margin products to businesses. Servers are where Intel etc. make their profits.

For the computational problems the author is trying to solve, to me it would seem to be better to consider:

a) At one point, I think Google was using commodity hardware, with custom shelving etc. Assuming the algorithms can be parallelized across different hosts, you shouldn't need the reliability of traditional servers, so why not use a number of commodity systems together, choosing the components that have the best perf/$?

b) There are machines designed for HPC scenarios, such as HPC Systems E5816 that supports 8x Xeon E7-8000 (10 core) processors, or the E4002G8 - that will take 8 nVidia Tesla cards.

c) What about developing and testing the software on cheap workstations, and then, when you are sure it's ready, buying compute time from Amazon cloud services etc.?

babysam - Monday, January 7, 2013

It is a delight to read your review on Anandtech (especially as I use software and computer configurations of a similar nature for my studies), since it is quite difficult for me to evaluate the performance gain of "real-life" software (i.e. science oriented in my case) on new hardware before buying.

From what I have seen in the code segments provided (especially for the n-body simulation part), there is a large number of floating-point divisions. Is there any possibility that the code is limited not only by the cache size (and thrashing), but also by the limited throughput of the floating-point divider? (i.e. the performance degradation when HT is enabled may also be caused by the two running threads competing for the only floating-point divider in the core.)

SanX - Tuesday, January 8, 2013

if you post zipped sources and exes for anyone to follow, learn, play, argue and eventually improve.

I'd also prefer to see Fortran sources and benchmarks when possible.

Intel/AMD should start promoting 2/4/8 socket monster mobos for enthusiasts and then the general public, since this is the beginning of the infinite in time era for multiprocessing.

Also, where are the game benchmarks, for example GTA4, which benefits a lot from multiple cores as well as from GPUs?

IanCutress - Wednesday, January 9, 2013

The n-body simulations are part of the C++ AMP example page, free for everyone to use. The rest of the code is part of a benchmark package I'm creating, hence I only give the loops in the code. Unfortunately I know no Fortran for benchmarks.

Most mainstream users (i.e. gamers) still debate whether 4 or 6 cores are even necessary, so moving to 2P/4P/8P is a big leap in that regard. Enthusiasts can still get the large machines (a few folders use quad AMD setups) if they're willing to buy from eBay, which may not always be wholly legal. You may see 2P/4P/8P becoming more mainstream when we start to hit process node limits.

Ian