The x86 Power Myth Busted: In-Depth Clover Trail Power Analysis

by Anand Lal Shimpi on December 24, 2012 5:00 PM EST

GPU Workload

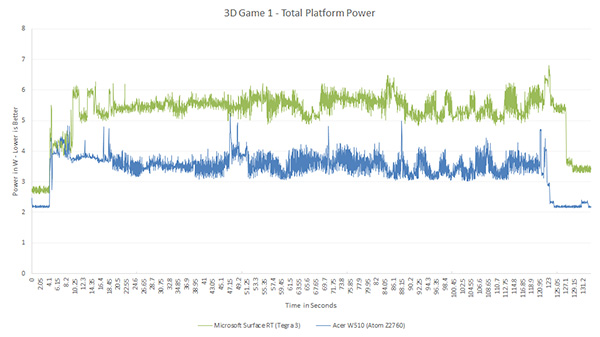

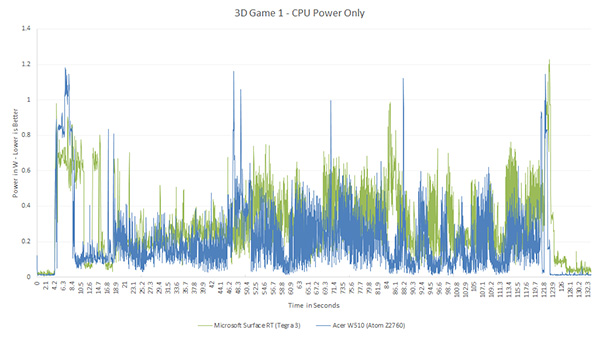

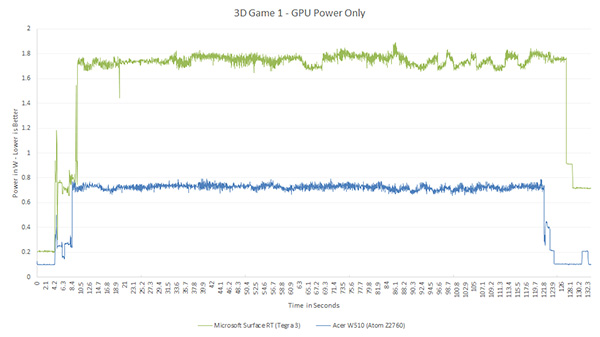

NVIDIA's only performance advantage on the SoC side compared to Clover Trail at this point is in its GPU. Tegra 3's GPU is faster than the high-clocked PowerVR SGX 545 in Clover Trail. While we don't yet have final GPU benchmarks under Windows RT/8 that we can share, the accompanying charts show power consumption in the same DX title running through roughly the same play path.

NVIDIA's GPU power consumption is more than double the PowerVR SGX 545's here, while its performance advantage isn't anywhere near double. I have heard that Imagination has been building the most power efficient GPUs on the market for quite a while now; this might be the first argument in favor of that hearsay.
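To make the efficiency argument concrete, here is a minimal sketch of the arithmetic. The function name and the ratios passed to it are hypothetical placeholders chosen for illustration, not measurements from this article. It only shows that a chip drawing twice the power needs twice the performance just to break even on performance per watt.

```python
def relative_perf_per_watt(perf_ratio: float, power_ratio: float) -> float:
    """Perf/W of chip A relative to chip B.

    perf_ratio  = performance of A / performance of B
    power_ratio = power draw of A / power draw of B
    A result below 1.0 means A is less efficient than B.
    """
    if perf_ratio <= 0 or power_ratio <= 0:
        raise ValueError("ratios must be positive")
    return perf_ratio / power_ratio


# Hypothetical placeholder ratios, NOT figures measured in the article:
# A is 1.5x faster than B but draws 2.0x the power.
print(relative_perf_per_watt(perf_ratio=1.5, power_ratio=2.0))
```

Any performance ratio below the power ratio gives a result under 1.0, which is the situation described above.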

163 Comments


karasaj - Monday, December 24, 2012 - link

All they need to do is either put Intel HD graphics (Haswell) in or license a better GPU from Imagination, I imagine. Although ARM (Samsung?) have really been developing better GPUs lately, they seem to be catching up.

jeffkibuule - Tuesday, December 25, 2012 - link

They've already stated they will be integrating a variant of their Intel HD 4000 GPU in their next-generation Atom SoC; the only question is how many Execution Units and what kind of power profile they will be targeting.

With Intel, the question isn't so much about performance, but maximizing profits. If they build an Atom SoC that's so great and also cost competitive with other ARM chips, who would buy their more expensive Core CPUs? This is one reason why I believe that the Atom and Core lines will eventually have to merge, just like how the Pentium and Pentium M lines had to converge into the original Core series back in 2006 (oh, the irony of history repeating itself).

lmcd - Tuesday, December 25, 2012 - link

It's more likely a variant of the 2500, which won't be enough. 4k doesn't even beat the 543MP3 does it?

jeffkibuule - Tuesday, December 25, 2012 - link

There haven't really been any comparisons of mobile and smartphone GPUs yet. We'll have to wait for 3DMark for Windows 8 to get our first reliable comparison.

wsw1982 - Tuesday, December 25, 2012 - link

I think it depends, 16 543MP3 cores should beat the 4K :) Single core 543MP3 is not better than 545.

mrdude - Wednesday, December 26, 2012 - link

It's also a matter of TDP, though. The ARM SoCs pack a lot of punch on the CPU side, often with better GPU performance, on an equal footing with respect to TDP (sub-2W for smartphones and roughly sub-5W for tablets).

As much as Intel wants to pound home the point that x86 is power efficient, it's an SoC and therefore a package deal. Intel still suffers from the lopsided design approach, dedicating far too much die space to the CPU with the GPU an afterthought. If you look at the more successful and popular/powerful ARM SoCs, it tends to be the other way around. A balanced approach with great efficiency is what makes the Snapdragon S4s such fantastic SoCs and why Qualcomm has now surpassed Intel in total market cap. The GPU is only going to become more and more important going forward due to PPI increasing drastically. At least for Apple, they've already reached a point where they're required to devote a huge portion of the die to the GPU, with smaller, incremental bumps in CPU performance.

This really seems like Intel is shoehorning their old Atom architecture into a lower TDP, saying: "Look! It's efficient! Just don't pay any attention to the fact that we're comparing it to a 40nm Tegra 3 and don't you dare do any GPU benchmarks." These things are meant for tablets. Is Intel not aware just how much MORE the GPU matters? Great perf-per-watt (maybe), but that's all for nothing if the SoC sucks.

somata - Monday, December 31, 2012 - link

As others have said, it'll be nice once we can do proper comparisons between tablet/notebook/desktop GPUs, but in the meantime just consider the peak shader performance of each:

Intel HD 4000 - 16(x4x2) @ 1.3GHz - 333 GFLOPS

Intel HD 3000 - 12(x4) @ 1.3GHz - 125 GFLOPS

PowerVR SGX 543MP4 - 16(x4) @ 300MHz - 38.4 GFLOPS

PowerVR SGX 554MP4 - 32(x4) @ 300MHz - 76.8 GFLOPS

The PowerVR numbers are based off of Anand's analysis. Obviously not exactly a fair comparison, but clearly Intel's mainstream integrated GPUs are substantially more powerful than any current PowerVR design. Of course that shouldn't be a surprise given the TDP of each platform.
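The figures in the list above can be reproduced with a simple product. This is a minimal sketch, assuming each shader lane retires one multiply-add per clock, counted as 2 FLOPs. That factor of 2 is an inference that happens to match the quoted numbers, not something stated in the comment. The function and dictionary names are hypothetical.

```python
def peak_gflops(units, lanes_per_unit, clock_ghz, flops_per_lane_per_clock=2):
    """Theoretical peak shader throughput in GFLOPS.

    flops_per_lane_per_clock=2 assumes one multiply-add per lane per clock
    (an assumption, chosen because it reproduces the quoted figures).
    """
    return units * lanes_per_unit * flops_per_lane_per_clock * clock_ghz


# (units, lanes per unit, clock in GHz), taken from the list above.
# "16(x4x2)" is read here as 16 units with 4 * 2 = 8 lanes each.
GPUS = {
    "Intel HD 4000": (16, 4 * 2, 1.3),
    "Intel HD 3000": (12, 4, 1.3),
    "PowerVR SGX 543MP4": (16, 4, 0.3),
    "PowerVR SGX 554MP4": (32, 4, 0.3),
}

for name, (units, lanes, clock) in GPUS.items():
    print(f"{name}: {peak_gflops(units, lanes, clock):.1f} GFLOPS")
```

These are theoretical peaks only. They say nothing about memory bandwidth, drivers, or sustained clocks within a given TDP.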

p3ngwin1 - Tuesday, December 25, 2012 - link

there are already smartphones with 1080P displays and Android tablets with even higher resolutions :)

coolhund - Tuesday, December 25, 2012 - link

Plus the Atom is not OoO; in-order (IO) is known to use much less power. Plus the OS is not the same.

Sorry, but for me this comparison is nonsense.

tipoo - Monday, December 24, 2012 - link

I'll be very interested to read the Cortex A15 follow up. From what I gather, if compared on the same lithography the A15 core is much larger than the A9, which likely means more power, all else being equal. It brings performance up to and sometimes over the prior generation Atom, but I wonder what power requirement sacrifices were made, if any.

I'm thinking in the coming years, Intel vs ARM will become a more interesting battle than Intel vs AMD.