LG 29EA93 Ultrawide Display - Rev. 1.09

by Chris Heinonen on December 11, 2012 1:20 AM EST

LG 29EA93: Brightness and Contrast

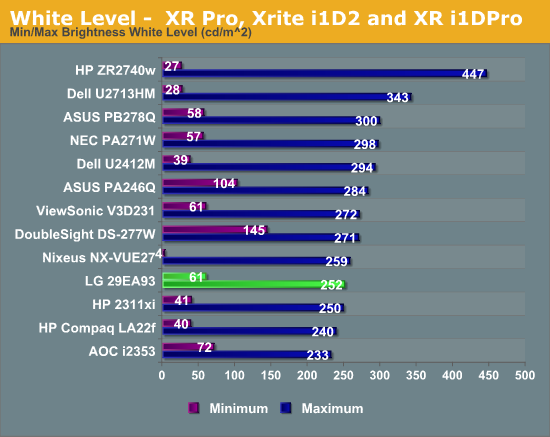

The LG 29EA93 uses a panel and backlighting setup that is totally different from any display I’ve tested, so for once I am coming into a review without any real idea of how it will perform. With the LED backlight set to maximum and a pure white screen, peak brightness measures 252 nits. This is a bit lower than I expected, but fine for users who don’t have direct sunlight on the screen. With the backlight at minimum, light output drops to 61 nits, which provides plenty of range for users in light-controlled environments who want a dimmer display.

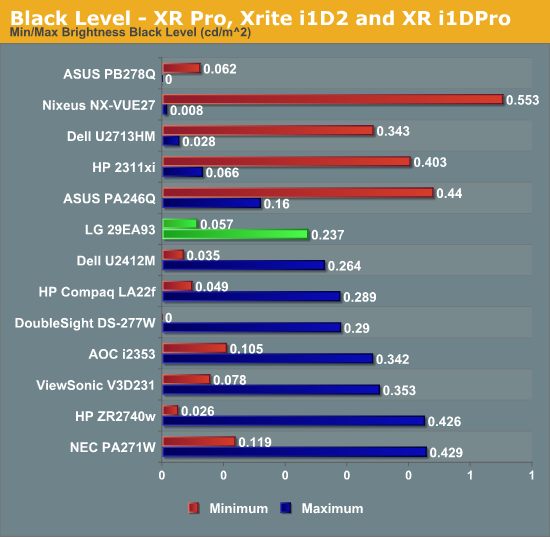

Black levels on the LG are pretty good compared to other IPS panels. With the backlight at maximum the black level is a nice 0.237 nits, and it drops all the way down to 0.057 nits with the backlight at minimum. Relative to the peak light levels, and compared with other IPS displays, these are good black levels to see.

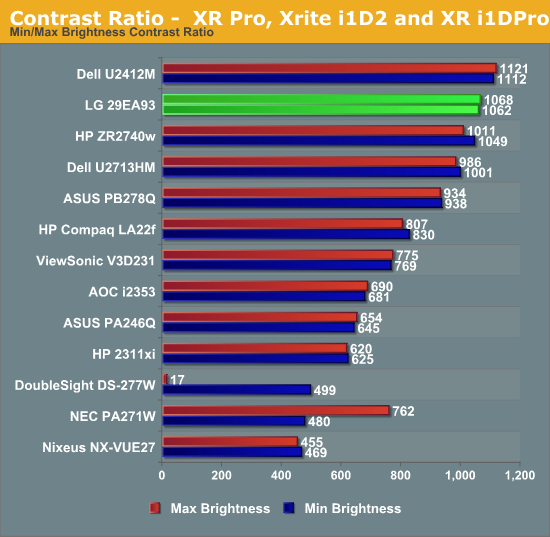

These numbers combine to give a contrast ratio above 1060:1 at both the minimum and maximum backlight levels. This puts the LG up with the best IPS contrast ratios we have measured on a display of any size. The best VA displays still do better, but IPS contrast ratios have improved considerably over the past few years.
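As a quick check on those figures, the sketch below recomputes the contrast ratios from the white and black levels measured above. It assumes static contrast is simply full-white luminance divided by full-black luminance (the usual definition); the meter software used for the review may round slightly differently, so treat this as illustrative arithmetic rather than the review's own tooling.

```python
# Luminance values measured in this review, in nits (cd/m^2).
measurements = {
    "maximum backlight": {"white": 252.0, "black": 0.237},
    "minimum backlight": {"white": 61.0, "black": 0.057},
}


def contrast_ratio(white_nits: float, black_nits: float) -> float:
    """Static contrast ratio: full-white luminance divided by full-black luminance."""
    if black_nits <= 0:
        raise ValueError("black level must be a positive luminance")
    return white_nits / black_nits


for setting, m in measurements.items():
    ratio = contrast_ratio(m["white"], m["black"])
    # Rounds to the nearest whole number and prints it in the usual N:1 form.
    print(f"{setting}: {ratio:.0f}:1")
# maximum backlight: 1063:1
# minimum backlight: 1070:1
```

Both settings land just above 1060:1, which is why the ratio stays essentially constant as the backlight is dimmed: white and black luminance scale down together.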

Overall, the peak brightness of the LG 29EA93 leaves a bit to be desired, but the black levels and contrast ratio help make up for it. They still won’t make it a good choice for someone who has to deal with direct sunlight on the display, but users without that problem will get plenty of brightness and a good contrast ratio from it.

90 Comments


Rick83 - Tuesday, December 11, 2012

So you say "In my very casual use this didn’t bother me"... and then use that as your main argument against the screen?

That is somewhat strange. If you can only quantify the issue, but not qualify it, then you shouldn't use it as such a strong argument.

Finally, it would be great to get a link back to the methodology used to measure monitor latency. Prad had a huuuge article on that, and I'm not even sure that the methodology you use is actually giving accurate results.

nathanddrews - Tuesday, December 11, 2012

I don't think he was unclear. Input lag is really only a big issue in very fast-paced action games. For slower-paced games and for cinephiles (assuming you can adjust the latency of your audio source to match), input lag is not as important. So while I can understand the appeal of an extra-wide display for immersive gaming, the high latency of this display cancels that out.

Prad? They established that lag testing applications are accurate enough. In the end, being accurate to within one frame is more than sufficient. Look at this chart showing the many different testing methods. They're all pretty close.

http://www.prad.de/en/monitore/specials/inputlag/i...

I will say that I am surprised the display was tested at 1920x1080 rather than at its native resolution. How do we know the non-native resolution didn't contribute to the observed latency? I would have liked to see the native resolution tested to be sure. As a gamer and "cinephile", I would certainly run the monitor at its native resolution whenever possible, using FOV hacks as necessary.

Rick83 - Tuesday, December 11, 2012

"Values which have been arrived using the old methods to date cannot be compared with these values, since their systematic errors alone often exceed the values from the new method multiple times."

So clearly, the method that is used matters.

nathanddrews - Tuesday, December 11, 2012

I understand, but the results - faulty or not - are roughly all within 1fps or real-world perception. Displays that have low latency using the old method still have low latency using the new methods, likewise with high latency. Other than objective purity, it doesn't seem to matter.

cheinonen - Tuesday, December 11, 2012

I haven't written up how SMTT is used, but TFT Central did a very thorough write-up of it, comparing it to other methods and showing how the results compared, here: http://www.tftcentral.co.uk/articles/input_lag.htm

I plan to write an article going over all of the testing methods in more detail soon.

jjj - Tuesday, December 11, 2012

Not really sure why you assume the target was movies; I see that more as an unintended benefit, and this as just an alternative to using two 1080p screens. Maybe you would be happier with a higher vertical resolution; I guess there is no reason for them not to do that too at some point. The pricing is rather unfortunate: the Dell was quite a bit cheaper on Black Friday, and the input lag takes away so much of the benefit of having this aspect ratio.

I wish someone (hint: Samsung) would make a 3420x1440 (more or less) flexible display where the curve can be adjusted. That would be way fun, and maybe even help revive PC gaming a bit.

cheinonen - Tuesday, December 11, 2012

The movie assumption comes more from the presence of dual HDMI inputs, a CMS, and an MHL input than from the aspect ratio. Those lean more towards it being a shared desktop and TV/gaming display, and once it's used for that, movies come more into play. Without the extra inputs and controls I'd think it's more likely a dual-monitor replacement. I think it's a bit of both, but it needs some work.

wsaenotsock - Tuesday, December 11, 2012

So this is how laptop screens will look in 5 years after this ratio catches on and homogenizes the global panel supply again?

madmilk - Wednesday, December 12, 2012

You could buy a Macbook.

peterfares - Wednesday, December 12, 2012

I really hope this doesn't happen. 16:10 was so much better for computer use, but 16:9 is still acceptable. This is just stupid. 27" WQHD screens are cheaper and much better, too.