The NVIDIA GeForce GTX 650 Ti Review, Feat. Gigabyte, Zotac, & EVGA

by Ryan Smith on October 9, 2012 9:00 AM EST

The 2GB Question & The Test

If it seems like 1GB+ video cards have been common for ages now, you wouldn’t be too far off. 1GB cards effectively became mainstream in 2008 with the release of the 1GB Radeon HD 4870. 2GB cards then pushed out 1GB cards as the common capacity for high-end cards in 2010 with the release of the Radeon HD 6970. Since then 2GB cards have been trickling down AMD and NVIDIA’s product stacks while at the same time iGPUs have been making the bottoms of those stacks redundant.

For this generation AMD decided to make their cutoff the 7800 series earlier this year; the 7700 series would be 1GB by default, while the 7800 series and above would be 2GB or more. AMD has since introduced the 7850 1GB as a niche product (in large part to combat the GTX 650 Ti), but the 7850 is still predominantly a 2GB card. For NVIDIA on the other hand the line is being drawn between the GTX 660 and GTX 650; the GTX 660 is entirely 2GB, while the GTX 650 and GTX 650 Ti are predominantly 1GB cards with some 2GB cards mixed in as a luxury option.

We bring this up because while the split is very clear from a video card family perspective, it doesn’t really address performance expectations. Simply put, at what point does a 2GB card become appropriate? When AMD or NVIDIA move a whole product line the decision is made for you, but when you’re looking at a split product like the GTX 650 Ti or the 7850, the decision is up to the buyer, and it’s not always an easy one.

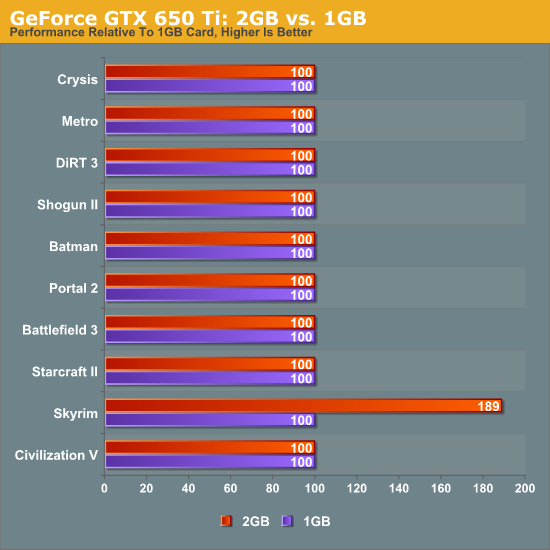

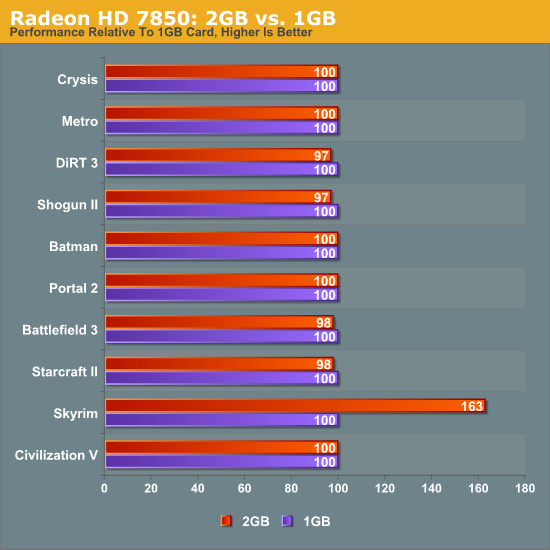

To try to help with that decision, we’ve broken down the performance of several games on both cards with both 1GB and 2GB models, listing the performance of 2GB cards relative to 1GB cards. By looking for performance advantages, we can hopefully better quantify the benefits of a 2GB card.
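For readers who want to run the same comparison on their own numbers, the relative figure is simply the 2GB card's frame rate expressed as a percentage of the 1GB card's. The following is a minimal sketch of that arithmetic, not the tooling used for this review; the function name and the frame rates in it are placeholders chosen for illustration and are not results from our benchmarks.

```python
def relative_performance(fps_2gb: float, fps_1gb: float) -> float:
    """Return the 2GB card's frame rate as a percentage of the 1GB card's."""
    if fps_1gb <= 0:
        raise ValueError("1GB frame rate must be positive")
    return 100.0 * fps_2gb / fps_1gb


# Placeholder frame rates for illustration only.
# These are NOT measurements from this review.
example_results = {
    "Game A (hypothetical)": (40.0, 50.0),  # (1GB fps, 2GB fps)
    "Game B (hypothetical)": (60.0, 60.6),
}

for game, (fps_1gb, fps_2gb) in example_results.items():
    pct = relative_performance(fps_2gb, fps_1gb)
    print(f"{game}: 2GB card at {pct:.1f}% of the 1GB card")
```

A value near 100% means the extra memory made no practical difference in that test, while a value well above 100% suggests the 1GB card was running out of VRAM.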

Regardless of whether we’re looking at AMD or NVIDIA cards, there’s only one benchmark where 2GB cards have a clear lead: Skyrim at 1920 with the high resolution texture pack. For our other 9 games the performance difference is minuscule at best.

But despite the open-and-shut nature of our data we’re not ready to put our weight behind these results. Among other issues, our benchmark suite is approaching a year old now, which means it doesn’t reflect some of the major games released in the past few months such as XCOM: Enemy Unknown, Borderlands 2, DiRT: Showdown, or for that matter any one of the number of games left to be released this year. While we have full confidence in our benchmark suite from a competitive performance perspective, the fact that it’s not forward looking (and mostly forward rendering) does a disservice to measuring the need for additional memory.

The fact of the matter is that while the benchmarks don’t necessarily show it, along with Skyrim we’ve seen Crysis clobber 1GB cards in the past, Shogun II’s highest settings won’t even run on a 1GB card, and meanwhile Battlefield 3 scales up render distance with available video memory. Nearly half of our benchmark games do benefit from 2GB cards, a subjective but important quality.

So despite the fact that our data doesn’t immediately show the benefits of 2GB cards, our thoughts go in the other direction. As 2012 comes to a close, cards that can hit the GTX 650 Ti’s performance level are not well equipped for the future with only 1GB of VRAM. 1GB is the cheaper option – and at these prices every penny counts – but it is our belief that by this time next year 1GB cards will be in the same place 512MB cards were in 2010: bottlenecked by a lack of VRAM. We have reached the point where if you’re going to be spending $150 or more, you shouldn’t be settling for a 1GB card; this is the time when 2GB cards are going to become the minimum for performance gaming video cards.

The Test

NVIDIA’s GTX 650 Ti launch driver is 306.38, which is a further continuation of the 304.xx branch. Compared to the previous two 304.xx drivers there are no notable performance changes or bug fixes that we’re aware of.

Meanwhile on the AMD side we’re using AMD’s newly released 12.9 betas. While these drivers are from a new branch, compared to the older 12.7 drivers the performance gains are minimal. We have updated our results for our 7000 series cards, but the only difference as it pertains to our test suite is that performance in Shogun II and DiRT 3 is slightly higher than with the 12.7 drivers.

On a final note, AMD sent over XFX’s Radeon HD 7850 1GB card so that we had a 1GB 7850 to test with (thanks guys). As this is not a new part from a performance perspective (see above) we’re not doing anything special with this card beyond including it in our charts as validation of the fact that the 1GB and 2GB 7850s are nearly identical outside of Skyrim.

| Component | Configuration |
|---|---|
| CPU: | Intel Core i7-3960X @ 4.3GHz |
| Motherboard: | EVGA X79 SLI |
| Chipset Drivers: | Intel 9.2.3.1022 |
| Power Supply: | Antec True Power Quattro 1200 |
| Hard Disk: | Samsung 470 (256GB) |
| Memory: | G.Skill Ripjaws DDR3-1867 4 x 4GB (8-10-9-26) |
| Case: | Thermaltake Spedo Advance |
| Monitor: | Samsung 305T |
| Video Cards: | AMD Radeon HD 5770, AMD Radeon HD 6850, AMD Radeon HD 7770, AMD Radeon HD 7850 1GB, AMD Radeon HD 7850 2GB, NVIDIA GeForce GTS 450, NVIDIA GeForce GTX 550 Ti, NVIDIA GeForce GTX 560, NVIDIA GeForce GTX 650, NVIDIA GeForce GTX 650 Ti, NVIDIA GeForce GTX 660 |
| Video Drivers: | NVIDIA ForceWare 305.37, NVIDIA ForceWare 306.23 Beta, NVIDIA ForceWare 306.38 Beta, AMD Catalyst 12.9 Beta |
| OS: | Windows 7 Ultimate 64-bit |

91 Comments


TheJian - Tuesday, October 9, 2012 - link

The 7850 is more money, it should perform faster. I'd expect nothing less. So what this person would end up with is 10-20% less perf (in the situation you describe) for 10-20% less money. ERGO, exactly what they should have got. So basically a free copy of AC3 :) Which is kind of the point. The 2GB beating the 650TI in that review is $20 more. It goes without saying you should get more perf for more $. What's your point?

You're wrong. In the page you point to (just looking at that, won't bother going through them all), the 650TI 1GB scores 32fps MIN, vs. 7770 25fps min. So unplayable on 7770, but playable on 650TI. Nuff said. Spin that all you want all day. The card is worth more than 7770. That's OVER 20% faster 1920x1080 4xAA in witcher 2. You could argue for $139 maybe, but not with the AC3 AAA title, and physx support in a lot of games and more to come.

http://www.geforce.com/games-applications/physx

All games with physx so far. Usually had for free, no hit, see hardocp etc. Borderlands 2, Batman AC & AAsylum, Alice Madness returns, Metro2033, sacred2FA, etc etc...The list of games is long and growing. This isn't talked about much, nor what these effects add to the visual experience. You just can't do that on AMD. Considering these big titles (and more head to the site) use it, any future revs of these games (sequels etc) will likely use it also and the devs now have great experience with physx. This will continue to become a bigger issue as we move forward. What happens when all new games support this, and there's no hit for having it on (hardocp showed they were winning WITH it on for free)? There's quite a good argument even now that a LOT of good games are different in a good way on NV based cards. Soon it won't be a good argument, it will be THE argument. Unfortunately for AMD/Intel havok never took off and AMD has no money to throw at devs to inspire them to support it. NV continues to make games more fun on their hardware (either on android tegrazone stuff, or PC stuff). Much tighter connections with devs on the NV side. Money talks, unfortunately for AMD debt can't talk for you (except to say don't buy my stock we're in massive debt) :)

jtenorj - Wednesday, October 10, 2012 - link

No, you are wrong. Lower end nvidia cards (whether this card falls into that category or not is debatable) generally cannot run physx on high, but require it to be set to medium, low or off. AMD cards can run physx in a number of games on medium by using the cpu without a massive performance hit. There hasn't been a lot of time since nvidia got physx tech from ageia for game developers to include it in titles because development cycles are getting longer and longer. Still, I think most devs shy away from physx because it hurts the bottom line (more time to implement = more money spent on salaries and later release, alienate 40% of potential market by making it so the full experience is not an option for them, losing more money). Take a look at the havok page on wikipedia vs the physx page (which is more extensive than what even nvidia lists on their own site). Havok and other software physics engines are used in the vast majority of released and soon to be released titles because they will work with anyone's card. I'm not saying HD7770 is better than gtx650ti (it is in fact worse than the new card), but the HD7850 is a far better value (especially the 2GB version). Finally, it is possible to add a low end geforce like gt610 to a higher end AMD primary as a dedicated physx card in some systems.

ocre - Thursday, October 11, 2012 - link

but it doesn't alienate 40% of the market.

You said this yourself:

"AMD cards can run physx in a number of games on medium by using the cpu without a massive performance hit."

Then try to turn it all around???? Clever? Doubtful!!

And this is what all the AMD fanboys cried about. Nvidia purposefully crippling physX on the CPU. Nvidia evil for making physX nvidia only. But now they have improved their physX code on the CPU and every single game as of late offers acceptable physX performance on AMD hardware via the CPU. Of course you will only get fully fledged GPU accelerated physX with Nvidia hardware but you cannot really expect more, can you?

Even if you're not capable of seeing the improvements Nvidia made, they are there. They have reached over and extended the branch to AMD users. They got physX to run better on multicore CPUs. They listened to complaints (even from AMD users) and made massive improvements.

This is the thing with nvidia. They are listening and steadily improving. Removing those negatives one at a time. It's gonna be hard for AMD fanboys to come up with negatives because nvidia is responding with every generation. PhysX is one example, the massive power efficiency improvement of kepler is another. Nvidia is proactive and looking for ways to improve their direction. All these complaints about Nvidia are getting addressed. There is nothing you can really say except they are making good progress. But that will not stop AMD fans from desperately searching for any negative that they can grasp on to. But more and more people are taking note of this progress, if you haven't noticed yourself.

CeriseCogburn - Friday, October 12, 2012 - link

Oh, so that's why the crybaby amd fans have shut their annoying traps on that, not to mention their holy god above all amd/radeon videocards apu holy trinity company after decades of foaming the fuming rage amd fanboys into mooing about "proprietary Physx! " like a sick monkey in heat and half dead, and extolling the pure glorious god like and friendly neighbor gamer love of "open source" and spewwwwwwwing OpenCL as if they had it sewed all over their private parts and couldn't stop staring and reading the teleprompter, their glorious god amd BLEW IT- and puked out their proprietary winzip !

R O F L

Suddenly the intense and insane constant moaning and complaining and attacking and dissing and spewing against nVidia "proprietary" was gone...

Now "winzip" is the big compute win for the freak fanboy of we know which company. LOL

P R O P R I E T A R Y ! ! ! ! ! ! ! ! ! ! 1 ! 1 100100000

JC said it well : Looooooooooooooooooooooseeerrrr !

(that's Jim Carrey not the Savior)

CeriseCogburn - Friday, October 12, 2012 - link

" You buy a GPU to play 100s of games not 1 game. "

Good for you, so the $50 games times 100 equals your $5,000.00 gaming budget for the card.

I guess you can stop moaning and wailing about 20 bucks in a card price now, you freaking human joke with the melted amd fanboy brain.

Denithor - Tuesday, October 9, 2012 - link

Hopefully your shiny new GTX 650 Ti will be able to run AC3 smoothly...:D

chizow - Thursday, October 11, 2012 - link

According to Nvidia, the 650Ti ran AC3 acceptably at 1080p with 4xMSAA on Medium settings: http://www.geforce.com/whats-new/articles/nvidia-g...

"In the case of Assassin’s Creed III, which is bundled with the GTX 650 Ti at participating e-tailers and retailers, we recorded 36.9 frames per second using medium settings."

That's not all that surprising to me though as the GTX 280 ran AC2/ACB Anvil engine games at around the same framerate. While AC3 will certainly be more demanding, the 650Ti is a good bit faster than the 280.

I'm not in the market though for a GTX 650Ti, I'm more interested in the AC3 bundle making its way to other GeForce parts as I'm interested in grabbing another 670. :D

HisDivineOrder - Tuesday, October 9, 2012 - link

Perhaps you might test without AA when dealing with cards in a sub-$200 price range as that would seem the more likely use for the card. Not saying you can't test with AA, too, but to have all tests include AA seems to be testing a new Volkswagen Bug with a raw speed test through a live fire training exercise you'd test a humvee with.

RussianSensation - Tuesday, October 9, 2012 - link

AA testing is often used to stress the ROP and memory bandwidth of GPUs. Also, it's what separates consoles from PCs. If a $150 GPU cannot handle AA but a $160-180 competitor can, it should be discussed. When GTX650Ti and its after-market versions are so closely priced to 7850 1GB/7850 2GB, and it's clear that 650Ti is so much slower, the only one to blame here is NV for setting the price at $149, not the reviewer for using AA.

GTX560/560Ti/6870/6950 were all tested with AA and this card not only competes against HD7850 but gives owners of older cards a perspective of how much progress there has been with new generation of GPUs. Not using AA would not allow for such a comparison to be made unless you dropped AA from all the cards in this review.

It sounds like you are trying to find a way to make this card look good but sub-$200 GPUs are capable of running AA as long as you get a faster card.

HD7850 is 34% faster than GTX650Ti with 4xAA at 1080P and 49% faster with 8xAA at 1080P

http://www.computerbase.de/artikel/grafikkarten/20...

All that for $20-40 more. Far better value.

Mr Perfect - Tuesday, October 9, 2012 - link

I thought GTX was reserved for high end cards, with lower tier cards being GT. I guess they gave up on that?