Intel's Haswell Architecture Analyzed: Building a New PC and a New Intel

by Anand Lal Shimpi on October 5, 2012 2:45 AM ESTDecoupled L3 Cache

With Nehalem Intel introduced an on-die L3 cache behind a smaller, low latency private L2 cache. At the time, Intel maintained two separate clock domains for the CPU (core + uncore) and a third for what was, at the time, an off-die integrated graphics core. The core clock referred to the CPU cores, while the uncore clock controlled the speed of the L3 cache. Intel believed that its L3 cache wasn't incredibly latency sensitive and could run at a lower frequency and burn less power. Core CPU performance typically mattered more to most workloads than L3 cache performance, so Intel was ok with the tradeoff.

In Sandy Bridge, Intel revised its beliefs and moved to a single clock domain for the core and uncore, while keeping a separate clock for the now on-die processor graphics core. Intel now felt that race to sleep was a better philosophy for dealing with the L3 cache and it would rather keep things simple by running everything at the same frequency. Obviously there are performance benefits, but there was one major downside: with the CPU cores and L3 cache running in lockstep, there was concern over what would happen if the GPU ever needed to access the L3 cache while the CPU (and thus L3 cache) was in a low frequency state. The options were either to force the CPU and L3 cache into a higher frequency state together, or to keep the L3 cache at a low frequency even when it was in demand to prevent waking up the CPU cores. Ivy Bridge saw the addition of a small graphics L3 cache to mitigate this situation, but ultimately giving the on-die GPU independent access to the big, primary L3 cache without worrying about power concerns was a big issue for the design team.

When it came time to define Haswell, the engineers once again went to Nehalem's three clock domains. Ronak (Nehalem & Haswell architect, insanely smart guy) tells me that the switching between designs is simply a product of the team learning more about the architecture and understanding the best balance. I think it tells me that these guys are still human and don't always have the right answer for the long term without some trial and error.

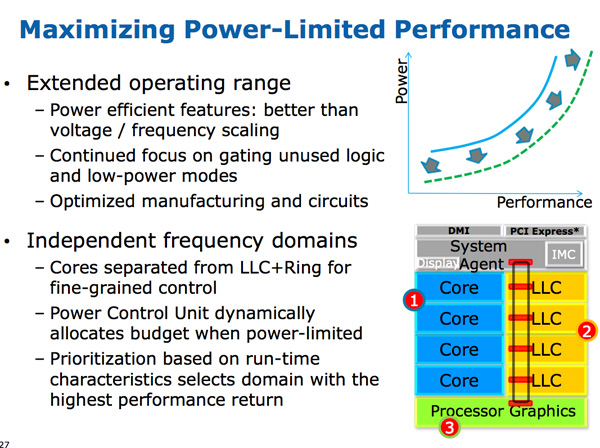

The three clock domains in Haswell are roughly the same as what they were in Nehalem, they just all happen to be on the same die. The CPU cores all run at the same frequency, the on-die GPU runs at a separate frequency and now the L3 + ring bus are in their own independent frequency domain.

Now that CPU requests to L3 cache have to cross a frequency boundary there will be a latency impact to L3 cache accesses. Sandy Bridge had an amazingly fast L3 cache, Haswell's L3 accesses will be slower.

The benefit is obviously power. If the GPU needs to fire up the ring bus to give/get data, it no longer has to drive up the CPU core frequency as well. Furthermore, Haswell's power control unit can dynamically allocate budget between all areas of the chip when power limited.

Although L3 latency is up in Haswell, there's more access bandwidth offered to each slice of the L3 cache. There are now dedicated pipes for data and non-data accesses to the last level cache.

Haswell's memory controller is also improved, with better write throughput to DRAM. Intel has been quietly telling the memory makers to push for even higher DDR3 frequencies in anticipation of Haswell.

245 Comments

View All Comments

FunBunny2 - Friday, October 5, 2012 - link

While not a hardware issue (and thus not an AnandTech major venue), I would be amused if one of your writers explored the implications on data storage design (normal form databases vs. traditional files) of small real estate mobile. My take is that small, consistent bites of bytes are required, and will eventually change how data is stored on the servers. Any takers?lukarak - Saturday, October 6, 2012 - link

In other words, "....all cars were trucks....."?BoloMKXXVIII - Friday, October 5, 2012 - link

Very well written article. Other sites should read Anandtech to see how it should be done.Thank you.

All this power saving in idle conditions is great (love the looping of frame buffer idea), but users aren't always reading text on their screens. When these chips are under load they are still going to draw very significant amounts of power. Unless battery technology improves by an order of magnatude I don't see Haswell (or its replacements) fitting into ultraportable devices like phones or "phablets". The other comments concerning AMD are on the mark. AMD is in big trouble. They are too far behind Intel right now and every indication is they will be falling further behind.

silverblue - Friday, October 5, 2012 - link

Steamroller will haul AMD back towards Intel. Not completely, but a lot closer than they have been, and potentially even ahead in some cases. Still, that process deficit has to be painful, as AMD can only win on idle power.I really hope GF don't mess up again, as delays really are costing AMD dearly. Steamroller is a good design, the sort that means AMD can have a cheaper but still decent part, but I fear it'll come too late.

Intel CPUs are looking even more tasty than ever.

overseer - Friday, October 5, 2012 - link

Great Article.Then I sincerely hope AMD can still survive and stride forward in this mobile tide. (R.R. and J.K., you reading this?)

It may look silly but I do like underdogs and their (solid) products, especially when they achieved something with less talents, capital and executiveness.

wumpus - Friday, October 5, 2012 - link

"To put it in perspective, you'll be able to get something faster than an Ivy Bridge Ultrabook or MacBook Air, in something the size of your smartphone, in fewer than 8 years". I can tell you right now, while this architecture is absolutely great on a motherboard, this isn't the right path to the mobile space."Haswell is the first step of a long term solution to the ARM problem." Unfortunately, anandtech is one of the few places left that can call intel on this marketing blather. Intel's ARM problem is that there is no more efficient way to execute instructions than on a in-order, single instruction issue, clean RISC design: all of which are standard features on an ARM. ARM's intel problem is that this limits you to about .5GIPS ([G]meanless indicator of processor speed) compared to over 6GIPS on an all out Intel design.

The choice isn't all or nothing, just that this time Intel choose performance over efficiency. MIPS, alpha, (to a large part) PowerPC all fell to high performance Intel chips that were vastly less complex than current designs. ARM could try to compete with Intel on performance, but if they are lucky they will end up like AMD, and if they can't out design Intel (remember Intel's process advantage) they will end up like MIPS, etc.

The reason this all appears to be built around speed (and not efficiency) can be found on pages 7 and 8 (despite protests listed on those pages). Intel needs to add wider execution paths to try to get a tiny few more instructions out per second, all the while holding even more (than ivy or sandy) instructions in flight in case it can execute one. All this means a much longer path for any instruction and many more things computed, more leaky transistors leaking picoamps, more latches burning nanowatts. All ARM has to do is execute one after another.

I am surprised that they bothered to toot their horn about the GPU. It might beat ARM, but any code that can be made to fit a GPU should be run on an AMD machine (or possibly discrete nVidia board). They have been pushing Intel graphics for at least 15 years, don't pretend they are ever going to get it right.

In conclusion, I want one of these in my desktop. A phone CPU should look much more like an early core (maybe core2) design, maybe even more like a pentium pro.

A5 - Friday, October 5, 2012 - link

If we're going to start a RISC/CISC battle, you should really look at a modern ARM architecture before talking.What you can fit in a phone today isn't going to be what you can fit in a phone 8 years from now (in terms of both TDP and die size).

Getting Haswell-class performance from a 2020 smartphone isn't that far-fetched...you can argue that modern smartphone SoCs are close to the performance of the Athlon 64 2800+ or the Prescott Pentium 4s of 2004 in a lot of tasks.

wumpus - Friday, October 5, 2012 - link

There is a reason Atom is getting creamed in the phone space by ARM. Also the only way TDP is going to change is with major increases in battery technology. X Joules (typically changed to W/hr in battery speak, but why not stick with SI units) means X seconds a 1 W or X/n seconds at n Watts.On the high end, everything that won the war for CISC (namely, Intel's manufacturing skills) is even more true than when they won. There isn't going to be another. That doesn't mean that a chip designed for all out performance is going to have any business competing with ARM on MIP/W. If they wanted to compete on battery life, they would have scaled down the depth and breadth of the queue, not increased it.

Actually, I was ready to go into full rant when I saw the opening. Then I checked that "ultrabook" meant 1.8GHz i3s. It is quite possible (although I still doubt it is a good way to use a battery) to build a chip that will do that and have low power. I just don't think that Haskel is anyway designed to be that chip

FunBunny2 - Friday, October 5, 2012 - link

-- everything that won the war for CISC (namely, Intel's manufacturing skills) is even more true than when they wonIt's been true since P4 that the "real" cpu is a RISC engine fronted by a x86 ISA translator. Intel tried to sell a ISA level RISC chip (twice). Not so hot. But Intel does know RISC. I've always wondered why they used all that transistor budget the way they did, rather than doing the entire instruction set in hardware, as they could have. It's as if IBM turned all the 370s into 360/30s.

Penti - Saturday, October 6, 2012 - link

It was Pentium Pro that switched to a modern out of order micro-ops powered CPU. I.e. P6. It's only the front end that speaks x86. Intel's own RISC designs like i960 ultimately failed and EPIC even more so when it failed to outdo AMD and Intel server processors in enterprise applications. In reality customers only switched to Itanium because they already had made up their mind before there even was any product thus killing at the time more appropriate Alpha, MIPS and PA-RISC processors. But as soon as those where fased out, Intel's x86 compatible chips had already gained the enterprise features that it missed previously and that set those older chips apart.The front end and x86 decode doesn't use that much space in modern processors at all. CPU architecture aren't really all that important it's today largely about the features it supports, the gpu, video decode/processor etc. ARM just made it into the out-of-order superscalar era in 2011 with A9, superscalar in-order in 2008 with Cortex A8. Atom is kinda designed like a P5 cpu. I.e. superscalar in-order, and moves to an out-of-order design next year. Intel's first superscalar design was in 1988.

ARM just needs to be fast enough, it was fairly easy to replace SH3, Motorola DragonBall, i386 design in the mobile space it was even Intel that did it to a large part. And earlier 8086-stuff had already been left behind by that time. Now what's impressive is the integration and finish of the ARM SoC's. It was Intel that didn't want companies like Research In Motion to continue use low-power Intel x86-chips in their handheld devices. That only changes when Intel sold off the StrongARM/XScale line in 2006. Intel has no reason to start create custom ARM ISA chips again as they can compete with them with x86 chips which they spend much larger time to adapt development tools and frameworks for any way. Atom as a whole has a much larger market then XScale had on it's own. Remember that Intel dropped stuff like RAID/Storage-processors too. Having Intel as a Marvell in ARM chips today wouldn't have changed anything radically.