Intel's Haswell Architecture Analyzed: Building a New PC and a New Intel

by Anand Lal Shimpi on October 5, 2012 2:45 AM EST

CPU Architecture Improvements: Background

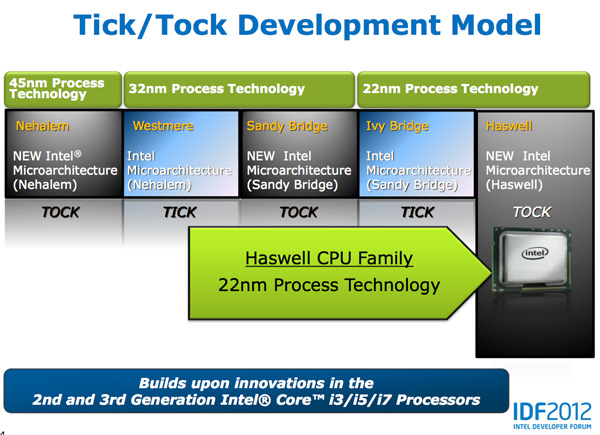

Despite all of this platform discussion, we must not forget that Haswell is the fourth tock since Intel instituted its tick-tock cadence. If you're not familiar with the terminology by now, a tock is a "new" microprocessor architecture on an existing manufacturing process. In this case we're talking about Intel's 22nm 3D transistors, which first debuted with Ivy Bridge. Although Haswell is clearly SoC focused, the designs we're talking about today all use Intel's 22nm CPU process - not the 22nm SoC process that has yet to debut for Atom. It's important not to give Intel too much credit on the manufacturing front. While it has a full node advantage over the competition in the PC space, it's currently only shipping a 32nm low power SoC process. Intel may still have a more power efficient process at 32nm than its competitors in the SoC space, but the full node advantage simply doesn't exist there yet.

Although Haswell is labeled as a new micro-architecture, it borrows heavily from those that came before it. Without going into the full details on how CPUs work I feel like we need a bit of a recap to really appreciate the changes Intel made to Haswell.

At a high level the goal of a CPU is to grab instructions from memory and execute those instructions. All of the tricks and improvements we see from one generation to the next just help to accomplish that goal faster.

The assembly line analogy for a pipelined microprocessor is overused, but that's because it is quite accurate. Rather than seeing one instruction worked on at a time, modern processors feature an assembly line of steps that breaks up the grab/execute process to allow for higher throughput.

The basic pipeline is as follows: fetch, decode, execute, commit to memory. You first fetch the next instruction from memory (there's a counter, the program counter or instruction pointer, that tells the CPU where to find the next instruction). You then decode that instruction into an internally understood format (this is key to enabling backwards compatibility). Next you execute the instruction (this stage, like most here, is itself split into smaller steps, such as fetching the data the instruction needs). Finally you commit the results of that instruction to memory and start the process over again.
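To put rough numbers on the assembly line idea, here is a minimal sketch of the four-stage pipeline described above. It assumes every stage takes exactly one cycle and that nothing ever stalls, which real hardware does not guarantee; the function names are made up for illustration.

```python
def unpipelined_cycles(n_instructions: int, n_stages: int) -> int:
    """Each instruction passes through every stage before the next one starts."""
    return n_instructions * n_stages


def pipelined_cycles(n_instructions: int, n_stages: int) -> int:
    """The first instruction fills the pipeline; after that one finishes per cycle."""
    if n_instructions == 0:
        return 0
    return n_stages + (n_instructions - 1)


STAGES = 4  # fetch, decode, execute, commit (idealized: one cycle each, no stalls)
for n in (1, 10, 1000):
    print(n, unpipelined_cycles(n, STAGES), pipelined_cycles(n, STAGES))
```

Under these assumptions 1,000 instructions take 4,000 cycles without pipelining and 1,003 with it. The latency of any single instruction is unchanged; only the throughput improves.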

Modern CPU pipelines feature many more stages than what I've outlined here. Conroe featured a 14 stage integer pipeline, Nehalem increased that to 16 stages, while Sandy Bridge saw a shift to a 14 - 19 stage pipeline (depending on hit/miss in the decoded uop cache).

The front end is responsible for fetching and decoding instructions, while the back end deals with executing them. The division between the two halves of the CPU pipeline also separates the part of the pipeline that must execute in order from the part that can execute out of order. Instructions have to be fetched and completed in program order (can't click Print until you click File first), but they can be executed in any order possible so long as the result is correct.
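The "complete in program order" rule can be sketched in a few lines. The hardware structure that enforces it is commonly called a reorder buffer; the toy below only models its effect, assuming any number of instructions can retire in the same cycle. The function name and the sample numbers are hypothetical.

```python
from itertools import accumulate


def retire_cycles(completion_cycles):
    """completion_cycles[i] is the cycle in which instruction i (in program
    order) finished executing. An instruction can only retire once every
    older instruction has retired, so its retire cycle is the running
    maximum of the completion cycles seen so far."""
    return list(accumulate(completion_cycles, max))


# Instruction 0 is slow (say, a cache miss); 1 and 2 finish early but must wait.
print(retire_cycles([10, 2, 3, 11, 12]))
```

Here the second and third instructions finish executing in cycles 2 and 3, but neither can retire before cycle 10, when the older instruction ahead of them is done.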

Why would you want to execute instructions out of order? It turns out that many instructions are either dependent on one another (e.g. C=A+B followed by E=C+D) or they need data that's not immediately available and has to be fetched from main memory (a process that can take hundreds of cycles, or an eternity in the eyes of the processor). Being able to reorder instructions before they're executed allows the processor to keep doing work rather than just sitting around waiting.
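A toy scheduler makes the benefit concrete. This is a sketch under loud assumptions, not a model of any real core: one instruction issues per cycle, each register is written only once (so there are no false dependencies to rename away), the out-of-order window covers the whole program, and in-order retirement is not modeled. The `Instr` type, the `run` function and the 10-cycle load latency are all invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Instr:
    dest: str          # register written (assumed written only once)
    srcs: tuple = ()   # registers read
    latency: int = 1   # cycles until the result is available


def run(program, out_of_order):
    """Return (cycle the last result is available, issue order by dest)."""
    produced = {ins.dest for ins in program}
    ready_at = {}      # dest register -> cycle its value becomes available
    pending = list(program)
    issue_order = []
    cycle = 0
    last_done = 0

    def is_ready(ins):
        # A source is ready if nothing in the program writes it (an initial
        # value) or if its producer has issued and its result has arrived.
        return all(
            s not in produced or ready_at.get(s, float("inf")) <= cycle
            for s in ins.srcs
        )

    while pending:
        # In-order: only the oldest instruction may issue.
        # Out-of-order: the oldest *ready* instruction may issue.
        window = pending if out_of_order else pending[:1]
        pick = next((ins for ins in window if is_ready(ins)), None)
        if pick is not None:
            pending.remove(pick)
            ready_at[pick.dest] = cycle + pick.latency
            last_done = max(last_done, cycle + pick.latency)
            issue_order.append(pick.dest)
        cycle += 1
    return last_done, issue_order


program = [
    Instr("A", (), latency=10),   # load A from memory (hypothetical 10-cycle miss)
    Instr("C", ("A", "B")),       # C = A + B, must wait for the load
    Instr("E", ("C", "D")),       # E = C + D, must wait for C
    Instr("F", ("G", "H")),       # F = G + H, independent of the load
    Instr("I", ("F", "G")),       # I = F + G, depends only on F
]

print(run(program, out_of_order=False))
print(run(program, out_of_order=True))
```

With these made-up latencies the in-order run finishes at cycle 14 and the out-of-order run at cycle 12, because F and I slip in while the load is outstanding. A longer miss or more independent work would widen the gap.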

Sidebar on Performance Modeling

Microprocessor design is one giant balancing act. You model application performance and build the best architecture you can in a given die area for those applications. Tradeoffs are inevitably made as designers are bound by power, area and schedule constraints. You do the best you can this generation and try to get the low hanging fruit next time.

Performance modeling includes current applications of value, future algorithms that you expect to matter when the chip ships as well as insight from key software developers (if Apple and Microsoft tell you that they'll be doing a lot of realistic fur rendering in 4 years, you better make sure your chip is good at what they plan on doing). Obviously you can't predict everything that will happen, so you continue to model and test as new applications and workloads emerge. You feed that data back into the design loop and it continues to influence architectures down the road.

During all of this modeling, even once a design is done, you begin to notice bottlenecks in your design in various workloads. Perhaps you notice that your L1 cache is too small for some newer workloads, or that for a bunch of popular games you're seeing a memory access pattern that your prefetchers don't do a good job of predicting. More fundamentally, maybe you notice that you're decode bound more often than you'd like - or alternatively that you need more integer ALUs or FP hardware. You take this data and feed it back to the team(s) working on future architectures.

The folks working on future architectures then prioritize the wish list and work on including what they can.

245 Comments


Anand Lal Shimpi - Friday, October 5, 2012 - link

It's the other way around: not talking about Apple using Intel in iPads, but rather Apple ditching Intel in the MacBook Air.

I do agree with Charlie in that there's a lot of pressure within Apple to move more designs away from Intel and to something home grown. I suspect what we'll see is the introduction of new ARM based form factors that might slowly shift revenue away from the traditional Macs rather than something as simple as dropping an Ax SoC in a MacBook Air.

Take care,

Anand

A5 - Friday, October 5, 2012 - link

Yeah. I knew what you were getting at, but I guess it wasn't that obvious for some people :-p.

Something like an iPad 3 with an Apple-made keyboard case + some changes in iOS would make Intel and notebook OEMs really scared.

tipoo - Friday, October 5, 2012 - link

So pretty much the Surface tablet. The keyboard case looks amazing, can't wait to try one.

Kevin G - Friday, October 5, 2012 - link

Apple is in the unique position that they could go either way on platform. They are capable of moving iOS to x86 or OS X to ARM on seemingly a whim. Their decision would be dictated not by current chips and those arriving in the short term (Haswell and the Cortex A15) but rather by long term road maps. Apple would be willing to ditch their own CPU design if it brought a clear power, performance and process advantage over what they could do themselves. The reason why Apple manufactured an ARM chip themselves is that they couldn't get the power and performance out of SoCs from other companies.

The message Intel wants to send to Apple is that Haswell (and then Broadwell) can compete in the ultra mobile market. Intel also knows the risk to them if Apple sticks to ARM: Apple is the dominant player in the tablet market, one of the major players in the cell phone market and pretty much the only success in the ultrabook segment. Apple's success is eating away at the PC market, which is Intel's bread and butter in x86 chip sales. So for the moment Intel is actively promoting Apple's competitors in the ultrabook segment and assisting in 10W Ivy Bridge and 10W Haswell tablet designs.

If Intel can't get anyone to beat Apple, they might as well join them over the long run. This would also explain Intel toying with the idea of becoming a foundry. If Intel doesn't get their x86 chips into the iPad/iPhone, they might as well manufacture the ARM chips that do. Apple is also one of the few companies who would be willing to pay a premium for Intel foundry access (and the extra ARM not x86 premium).

So there are four scenarios that could play out in the long term: the status quo of x86 for OS X + ARM for iOS, x86 for both OS X + iOS, ARM for both OS X + iOS and ARM built by Intel for OS X + iOS.

Peanutsrevenge - Friday, October 5, 2012 - link

I will LMAO if Apple switch macs back to RISC in the next few years.

Will be RISC, x86, RISC in the space of a decade.

Poor Crapple users having to keep swapping their software.

I laughed 6 years ago, and I'll laugh again :D

Kevin G - Saturday, October 6, 2012 - link

But it wouldn't be the same RISC. ARM isn't PowerPC.

And hey, Apple did go from CISC to RISC and back to CISC again for their Macs.

Penti - Saturday, October 6, 2012 - link

They would hardly want to be in the situation where they have to compete with Intel and Intel's performance again. Also, their PC/Mac lineup is just so much smaller than the mobile market they have, so why would they create teams of thousands of engineers (which they don't have) to create workstation processors for their mobile workstations and Mac Pros? They couldn't really do that with PowerPC designs despite having influence on chip architecture; they lost out in the race. They would just grow more dependent on other external suppliers, and those Macs would lose the ability to run Boot Camp'd or virtualized Windows. It's not the same x86 as it was in 2006 either.

A switch would turn Macs into toys rather than creative and engineering tools. It would create a disadvantage with all the tools developed for x86, and if they drop the high end they might as well turn themselves into a mobile computing company and port their development tools to Windows. It's not like they will replace all the client and server systems in the world or even aspire to.

I don't have anything against ARM creeping into desktops. But they really have no reason to segment their system into ARM or x86. It's much easier to keep the iOS vs OS X divide.

Haswell will give you ARM or Atom (Z2760) battery life for just some hundred dollars more or so. If they can support the software better, those machines will be loaded with software worth thousands of dollars per machine/user anyway, whereas the weaker machines simply can't run most of that. Casual users can still go with Atom if they want something weaker/cheaper or another ecosystem altogether.

Kevin G - Monday, October 8, 2012 - link

The market is less about performance now, as even after taking a few steps backward a user has 'good enough' performance. It is about gaining mobility, which is driven by reduction in power consumption.

Would Apple want to compete with Intel's Xeon lineup? No, and well, Apple isn't even trying to stay on the cutting edge there (their Mac Pros are essentially a 3 year old design with moderate processor speed bumps in 2010 and 2012). If Apple were serious about performance here, they'd have a dual LGA 2011 Xeon as their flagship system. The creative and engineering types have been eager for such a system, and Apple has effectively told them to look elsewhere for such a workstation.

With regard to virtualization, yes, it would be a step backward not to be able to run x86 based VMs, but ARM has defined their own VM extensions. So while OS X would lose the ability to host x86 based Windows VMs, their ARM hardware could natively run OS X with an iOS guest, an Android guest or a Windows RT guest. There is also brute force emulation to get the job done if need be.

Moving to pure ARM for iOS and OS X is a valid path for Apple, though it is not their only long term option.

Penti - Tuesday, October 9, 2012 - link

You will not be able to license Windows RT at all as an end-user. Apple has no interest whatsoever in supporting GNU/Linux based ARM VMs.

I'm sure they will update the Mac Pro, the reason behind it is largely thanks to Intel themselves. That's not their only workstation though, and yes, performance is important in the mobile (notebook) space, and performance per watt is really important too. If they want mobile workstations and engineering type machines they won't go with ARM, as it does mean they would have to compete with Intel. They could buy a firm with an x86 license and outdo Intel if they were really capable of that. ISA doesn't really matter here except when it comes to tools.

baba264 - Friday, October 5, 2012 - link

"Within 8 years many expect all mainstream computing to move to smartphones, or whatever other ultra portable form factor computing device we're carrying around at that point."

I don't know if I am in a minority or what, but I really don't see myself giving up my desktop anytime soon. I love my mechanical keyboard, my large screen and my computing power. So I have to wonder if I'm just an edge case or if analysts are reading too much into the rise of the smartphone.

Great article otherwise :).