Intel's Haswell Architecture Analyzed: Building a New PC and a New Intel

by Anand Lal Shimpi on October 5, 2012 2:45 AM EST

The Haswell Front End

Conroe was a very wide machine. It brought us the first 4-wide front end of any x86 micro-architecture, meaning it could fetch and decode up to 4 instructions in parallel. We've seen improvements to the front end since Conroe, but the overall machine width hasn't changed - even with Haswell.

Haswell leaves the overall pipeline untouched. It's still the same 14-19 stage pipeline we saw with Sandy Bridge, with the exact depth depending on whether the instruction is found in the µop cache (which happens around 80% of the time). L1/L2 cache latencies are unchanged as well. Since Nehalem, Intel's Core micro-architectures have supported execution of two instruction threads per core to improve execution hardware utilization, and Haswell also supports 2-way SMT (Hyper-Threading).
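As a back-of-the-envelope illustration of what the 14-19 stage range means on average, the sketch below weights the two pipeline lengths by the roughly 80% µop cache hit rate quoted above. The helper name is hypothetical, and it assumes a hit takes the short 14-stage path and a miss the full 19-stage path; real per-instruction behavior is more complicated.

```python
def expected_pipeline_depth(hit_rate: float, hit_stages: int = 14, miss_stages: int = 19) -> float:
    """Average pipeline length, weighting the µop cache hit and miss paths.

    Hypothetical helper: assumes a hit uses the short 14-stage path and a
    miss goes through the full 19-stage fetch/decode path.
    """
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    return hit_rate * hit_stages + (1.0 - hit_rate) * miss_stages


if __name__ == "__main__":
    # ~80% hit rate, as quoted in the text
    print(round(expected_pipeline_depth(0.80), 2))  # 15.0
```

In other words, under these assumptions the average instruction sees a pipeline about one stage longer than Conroe's 14.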

The front end remains 4-wide, although Haswell features a better branch predictor and hardware prefetcher, so we'll see better efficiency. Since the pipeline depth hasn't increased (so a mispredicted branch costs no more to recover from than before) but overall branch prediction accuracy is up, we'll see a positive impact on overall IPC (instructions executed per clock). Haswell is also more aggressive on the speculative memory access side.
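The link between prediction accuracy, pipeline depth and IPC can be made concrete with a simple additive stall model, a textbook approximation rather than Intel's own analysis: each mispredicted branch adds a penalty roughly proportional to the pipeline depth. All inputs below (base CPI, branch frequency, misprediction rates, penalty cycles) are hypothetical numbers chosen only to show the direction of the effect.

```python
def ipc(base_cpi: float, branch_fraction: float,
        mispredict_rate: float, penalty_cycles: float) -> float:
    """IPC under a simple additive model of branch-misprediction stalls."""
    # every mispredicted branch adds roughly one pipeline refill to the CPI
    cpi = base_cpi + branch_fraction * mispredict_rate * penalty_cycles
    return 1.0 / cpi


if __name__ == "__main__":
    # Hypothetical inputs: 20% of instructions are branches
    older = ipc(0.5, 0.20, 0.05, 15)   # ~1.54
    better = ipc(0.5, 0.20, 0.04, 15)  # ~1.61: better predictor, same depth
    deeper = ipc(0.5, 0.20, 0.04, 20)  # ~1.52: better predictor, deeper pipeline
    print(f"{older:.2f} {better:.2f} {deeper:.2f}")
```

With the depth held constant, the lower misprediction rate raises IPC; in this toy model a deeper pipeline would have eaten the whole gain.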

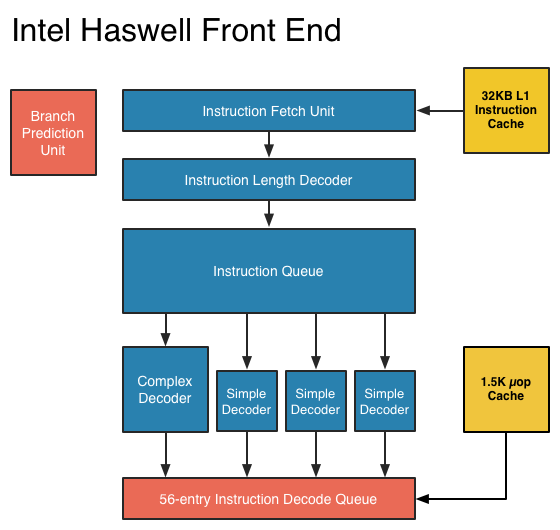

The image above is a crude representation I put together of the Haswell front end compared to the two previous tocks, Sandy Bridge and Nehalem, with major changes highlighted.

In short, there aren't many major, high-level changes to see here. Instructions are fetched at the top and sent through several stages before reaching the decoders, where they're converted from macro-ops (x86 instructions) into an internal format Intel calls micro-ops (or µops). The instruction fetcher can grab 4-5 x86 instructions at a time, and the decoders can output up to 4 micro-ops per clock.
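To see why a 4-µop-per-clock decode limit matters, here is a deliberately simplified throughput model: each clock the decoders accept up to 4 instructions, as long as their combined µops don't exceed 4. The function and its greedy packing rule are hypothetical; it ignores details such as macro-op fusion, the asymmetry between simple and complex decoders, and microcoded instructions, and it assumes every instruction decodes to between 1 and 4 µops.

```python
from typing import Sequence


def decode_cycles(uops_per_insn: Sequence[int], max_insns: int = 4, max_uops: int = 4) -> int:
    """Clocks needed to decode a run of x86 instructions under a simple width cap."""
    if any(u < 1 or u > max_uops for u in uops_per_insn):
        raise ValueError("each instruction must decode to 1..max_uops µops in this model")
    cycles, i, n = 0, 0, len(uops_per_insn)
    while i < n:
        insns = uops = 0
        # greedily pack instructions into this clock until a cap would be exceeded
        while i < n and insns < max_insns and uops + uops_per_insn[i] <= max_uops:
            uops += uops_per_insn[i]
            insns += 1
            i += 1
        cycles += 1
    return cycles


if __name__ == "__main__":
    print(decode_cycles([1] * 8))                   # 2: eight single-µop instructions
    print(decode_cycles([2, 1, 1, 1, 1, 1, 1, 1]))  # 3: one 2-µop instruction costs a clock
```

A single multi-µop instruction is enough to push the same eight instructions into an extra clock, which is part of why skipping the decoders via the µop cache is attractive.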

Sandy Bridge introduced the 1.5K µop cache that caches decoded micro-ops. When future instruction fetch requests are made, if the instructions are contained within the µop cache, everything north of the cache is powered down and the instructions are serviced from the µop cache. The decode stages are very power hungry, so being able to skip them is a boon to power efficiency. There are performance benefits too: a hit in the µop cache reduces the effective integer pipeline to 14 stages, the same length as it was in Conroe in 2006. Haswell retains all of these benefits. Even the µop cache size remains unchanged at 1.5K micro-ops (approximately 6KB in size).
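To illustrate the idea, here is a toy software model of a decoded-µop cache: fetches that hit return already-decoded µops and skip the power-hungry decode step, while misses decode and fill the cache. The class name, the LRU eviction policy, and keying entries by fetch address are simplifying assumptions for illustration, not a description of Intel's actual hardware organization; only the 1.5K-µop capacity comes from the text.

```python
from collections import OrderedDict
from typing import Callable, Hashable, List


class UopCache:
    """Toy model: decoded µops cached by fetch address; hits skip the decoders."""

    def __init__(self, capacity_uops: int, decode: Callable[[Hashable], List[str]]) -> None:
        self.capacity = capacity_uops
        self.decode = decode          # stands in for the legacy decode pipeline
        self.lines = OrderedDict()    # address -> list of decoded µops, in LRU order
        self.used = 0                 # µops currently held
        self.hits = 0
        self.misses = 0

    def fetch(self, addr: Hashable) -> List[str]:
        if addr in self.lines:
            self.hits += 1
            self.lines.move_to_end(addr)  # mark as most recently used
            return self.lines[addr]
        self.misses += 1
        uops = self.decode(addr)  # miss: pay for the full decode path
        # evict least recently used entries until the new µops fit
        while self.lines and self.used + len(uops) > self.capacity:
            _, old = self.lines.popitem(last=False)
            self.used -= len(old)
        if len(uops) <= self.capacity:
            self.lines[addr] = uops
            self.used += len(uops)
        return uops


if __name__ == "__main__":
    # hypothetical decoder: every address decodes to two µops
    cache = UopCache(1536, lambda addr: [f"{addr}:uop0", f"{addr}:uop1"])
    for addr in [0x40, 0x50, 0x40, 0x40]:
        cache.fetch(addr)
    print(cache.hits, cache.misses)  # 2 2
```

Every hit in this model corresponds to a fetch that never touched `decode`, which is the power and latency saving the text describes.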

Although it's highlighted in the diagram as a new/changed block, the updated instruction decode queue (aka allocation queue) was actually one of the changes made to improve single-threaded performance in Ivy Bridge.

The instruction decode queue (where instructions go after they've been decoded) is no longer statically partitioned between the two threads that each core can service.
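The difference between a statically partitioned and a shared queue shows up clearly in a toy model: with static partitioning, a lone active thread can only ever use its half of the entries, while a shared queue lets it use all of them. The class name and the 32-entry capacity are hypothetical values chosen for illustration, not the actual size of Haswell's queue.

```python
class DecodeQueue:
    """Toy model of a decode queue serving two SMT threads (0 and 1)."""

    def __init__(self, capacity: int, static_partition: bool) -> None:
        self.capacity = capacity
        self.static_partition = static_partition
        self.occupancy = [0, 0]  # entries currently held by thread 0 and thread 1

    def can_enqueue(self, thread: int) -> bool:
        if self.static_partition:
            # each thread is capped at a fixed half, even if the other thread is idle
            return self.occupancy[thread] < self.capacity // 2
        # shared queue: any free entry can go to either thread
        return sum(self.occupancy) < self.capacity

    def enqueue(self, thread: int) -> bool:
        if not self.can_enqueue(thread):
            return False
        self.occupancy[thread] += 1
        return True


def fill_single_thread(capacity: int, static_partition: bool) -> int:
    """How many entries thread 0 can occupy while thread 1 is idle."""
    queue = DecodeQueue(capacity, static_partition)
    while queue.enqueue(0):
        pass
    return queue.occupancy[0]


if __name__ == "__main__":
    print(fill_single_thread(32, static_partition=True))   # 16
    print(fill_single_thread(32, static_partition=False))  # 32
```

The single-threaded case is where sharing pays off. A real shared queue would likely also need some fairness policy so one busy thread cannot starve the other; this sketch ignores that.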

The big changes in Haswell are at the back end of the pipeline, in the execution engine.

245 Comments


tim851 - Friday, October 5, 2012

This is a perfect demonstration of the power of competition. With AMD struggling badly, Intel was content pushing Atom. They didn't want to innovate in that sector; they sold 10-year-old technology with horribly outdated chipsets. Yes, they were relatively cheap, but I was appalled.

Step in ARM, suddenly becoming a viable competitor. Now Intel moves its fat ass and tries to actually build something worthwhile.

Sadly, free markets are an illusion. Intel should pay dearly for the Atom fiasco, but they won't, just as they didn't pay for the Pentium 4 debacle. They will come 5 years late to the party, but with all their might, they will crush ARM. ARM will fall behind; they can't keep up with that vicious tick-tock cycle. Who can?

In 8 years, ARM will have been bought by some company, perhaps Apple. ARM will then no longer be a competitor; it will just be a different architecture, like x86. I don't see Apple having any long-term interest in designing their own hardware; it's way too unsexy. They will just cross-license ARM with Intel, and in 10 years' time Intel will rule supreme again.

UpSpin - Friday, October 5, 2012

You forget that Intel vs. ARM is something bigger than AMD vs. Intel. Behind ARM stand Qualcomm, Samsung, Apple, ...

All new software is written for ARM, not Intel (x86), any longer. Microsoft is releasing Windows RT, rewritten for ARM, along with a rewritten Office for ARM. Android runs on ARM and everyone supports the ARM version, while Intel alone has to keep it compatible with x86.

Haswell will get released when, exactly? In a year, while ARM's A15 arrives in maybe two months. Haswell has nice power savings, but it's still an Ultrabook design. The current Atom SoCs are much worse than current A9/Krait SoCs. Intel heavily optimized the software to make them look less bad (excellent SunSpider results), but they are still worse.

If Windows 8 is a success, Intel will be lucky. If it's not, as many expect, Intel has a real problem.

Intel is a single company building and developing its CPUs/SoCs. ARM SoCs are built and developed by a multitude of companies.

If Apple can design their own ARM-based SoC with the same performance as a Haswell CPU, they will be able to move Mac OS to ARM. That's easy in the GPU area (the iPad most probably already has a faster GPU than Intel's CPUs), and with A15 and Apple's A6 it's possible to get as fast with the CPU, too. This would allow them to build a very, very power-efficient, lightweight, silent MacBook. They could port apps from iOS to Mac OS and vice versa. Because they design their SoC in-house, they don't have to fear competition in the near term.

Apple always wants a monopoly, so it doesn't make sense for them to cross-license anything.

tuxRoller - Friday, October 5, 2012

Unless your app is doing some serious math, you can get by with just using a cross-platform toolchain. Frankly, the hard part is targeting the different APIs that currently predominate on each arch. However, assuming those don't change, and the form factor doesn't either, your new app should be just a compile away.

Kidster3001 - Monday, October 15, 2012

Current Atom SoCs are not "much worse" than A9/Krait. Most CPU benchmarks running in native code will favor the Intel SoC. It's the addition of Android/Dalvik that tips the balance back toward ARM. Android has been on ARM for a lot longer and is more optimized for ARM code; it still needs more tweaking to run optimally on x86.

Kidster3001 - Monday, October 15, 2012

"with A15 and Apples A6 it's possible to get as fast with the CPU, too"

Say what? A15 and A6 are a full order of magnitude slower than Haswell. omg

Dalamar6 - Sunday, May 12, 2013

Nearly all of the software on Android is junk. Apple blocks everything at a whim and gives no control.

I don't know about Windows RT, but I suspect it will suffer from the same kind of crap programs Android does, if it doesn't already.

Even if people are more focused on developing for ARM, the ARM OSes are still way behind in program availability (especially quality). And it's downright sad seeing people charging money for simple, poorly coded programs that can't even compare to existing open source x86 software.

jacobdrj - Friday, October 5, 2012

I agree competition is good/great. However, how you categorize Atom is just not true! Atom filled a very real niche: cheap mobile computing. Not powerful, but x86 and fast enough to do basic tasks. I loved my Atom netbook and used it until it bit the dust last week. Would I have liked more power? Sure, but not at the expense of battery life (at the time). Besides, once I maxed it out by putting in an SSD and 2 GB RAM, my netbook often outpaced many people's newer, more powerful Core-based laptops for basic tasks like word processing and web browsing.

Just because power users were unhappy does not mean Atom was a 'fiasco'. Those old chipsets allowed Atom netbooks to regularly sell, fully functional, for under $200, a price point that tablets of similar capability are only just starting to hit almost 4 years later...

Don't bash Atom just because you don't fit into its niche, and don't blame Intel for HP trying to oversell Atom to the wrong customers...

Peanutsrevenge - Friday, October 5, 2012

If competition is 'good/great', what does that make cooperation? Imagine the possibility of Intel and AMD working together along with Qualcomm, Imagination etc.....

Zeitgeist Movement.

Kidster3001 - Monday, October 15, 2012

Intel is not going this way because "ARM stepped in". Intel is going this way because it decided to go play in ARM's playground.

krumme - Friday, October 5, 2012

My Samsung Series 9 X3C (Ivy Bridge), viewing this page with Wi-Fi and Bluetooth on, draws from 4.9W to 9.9W going from the lowest to the highest screen brightness, and 7.2W in normal use at a good brightness (measured with Samsung's own tool).

So the screen is by far the most important component for power consumption on a modern machine. Looking at the complete ecosystem, I wonder whether it matters how efficient Haswell is. The benefit of a 10W TDP for, say, the same performance is nice, but does it really matter for its market impact? And idle power is already plenty low.

I doubt Haswell will have a significant impact, as nice as it is. It's just too late and way too expensive for the mass market. Those days are over.

By the time it hits the market, dirt-cheap TSMC 28nm A15 chips and the Bobcat successor will be selling for next to nothing, and they will give 99% of consumers the same benefits.