The NVIDIA GeForce GTX 660 Review: GK106 Fills Out The Kepler Family

by Ryan Smith on September 13, 2012 9:00 AM EST

Power, Temperature, & Noise

As always, we’re wrapping up our look at a video card’s stock performance with a look at power, temperature, and noise. Unlike GTX 660 Ti, which was a harvested GK104 GPU, GTX 660 is based on the brand-new GK106 GPU, which will have interesting repercussions for power consumption. Scaling down a GPU by disabling functional units often has diminishing returns, so GK106 will effectively “reset” NVIDIA’s position as far as power consumption goes. As a reminder, NVIDIA’s power target here is a mere 115W, while their TDP is 140W.

GeForce GTX 660 Series Voltages

| Ref GTX 660 Ti Load | Ref GTX 660 Ti Idle | Ref GTX 660 Load | Ref GTX 660 Idle |
|---|---|---|---|
| 1.175v | 0.975v | 1.175v | 0.875v |

Stopping to take a quick look at voltages, even with a new GPU nothing has changed at load. NVIDIA’s standard voltage remains at 1.175v, the same as we’ve seen with GK104. However, idle voltages are much lower, with the GK106-based GTX 660 idling at 0.875v versus 0.975v for the various GK104 desktop cards. As we’ll see later, this is an important distinction for GK106.

Up next, before we jump into our graphs, let’s take a look at the average core clockspeed during our benchmarks. Because of GPU Boost, the boost clock alone doesn’t give us the whole picture, so we’ve recorded the clockspeed of our GTX 660 during each of our benchmarks when running it at 1920x1200 and computed the average clockspeed over the duration of the benchmark.

GeForce GTX 600 Series Average Clockspeeds

| | GTX 670 | GTX 660 Ti | GTX 660 |
|---|---|---|---|
| Max Boost Clock | 1084MHz | 1058MHz | 1084MHz |
| Crysis | 1057MHz | 1058MHz | 1047MHz |
| Metro | 1042MHz | 1048MHz | 1042MHz |
| DiRT 3 | 1037MHz | 1058MHz | 1054MHz |
| Shogun 2 | 1064MHz | 1035MHz | 1045MHz |
| Batman | 1042MHz | 1051MHz | 1029MHz |
| Portal 2 | 988MHz | 1041MHz | 1033MHz |
| Battlefield 3 | 1055MHz | 1054MHz | 1065MHz |
| Starcraft II | 1084MHz | N/A | 1080MHz |
| Skyrim | 1084MHz | 1045MHz | 1084MHz |
| Civilization V | 1038MHz | 1045MHz | 1067MHz |

With an official boost clock of 1033MHz and a maximum boost of 1084MHz on our GTX 660, we see clockspeeds regularly vary between the two points. For the most part our average clockspeeds are slightly ahead of NVIDIA’s boost clock, while in CPU-heavy workloads (Starcraft II, Skyrim), we can almost sustain the maximum boost clock. Ultimately this means that the GTX 660 is spending most of its time near or above 1050MHz, which will have repercussions when it comes to overclocking.
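As a rough cross-check of the clockspeed table, the GTX 660 column can be averaged across games and compared against the two reference points. This is a minimal sketch, not the logging method used for the review; the dictionary and variable names are illustrative, and the values are simply copied from the table.

```python
from statistics import mean

# Per-game average core clocks (MHz) for the GTX 660, copied from the table.
gtx660_avg_clock_mhz = {
    "Crysis": 1047,
    "Metro": 1042,
    "DiRT 3": 1054,
    "Shogun 2": 1045,
    "Batman": 1029,
    "Portal 2": 1033,
    "Battlefield 3": 1065,
    "Starcraft II": 1080,
    "Skyrim": 1084,
    "Civilization V": 1067,
}

BOOST_CLOCK_MHZ = 1033      # NVIDIA's official boost clock
MAX_BOOST_CLOCK_MHZ = 1084  # maximum boost on this particular sample

# Unweighted mean of the per-game averages (each game counts equally).
overall_avg = mean(gtx660_avg_clock_mhz.values())

# Games whose average sits strictly above the official boost clock.
above_boost = [g for g, c in gtx660_avg_clock_mhz.items() if c > BOOST_CLOCK_MHZ]

# Games that sustained the maximum boost clock for the whole run.
at_max = [g for g, c in gtx660_avg_clock_mhz.items() if c == MAX_BOOST_CLOCK_MHZ]

print(f"Average across games: {overall_avg:.1f}MHz")
print(f"Games above {BOOST_CLOCK_MHZ}MHz: {len(above_boost)} of {len(gtx660_avg_clock_mhz)}")
print(f"Games pinned at {MAX_BOOST_CLOCK_MHZ}MHz: {at_max}")
```

The unweighted mean treats every game equally regardless of benchmark length, so it is only a summary of the table rather than a time-weighted average of the raw clock samples.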

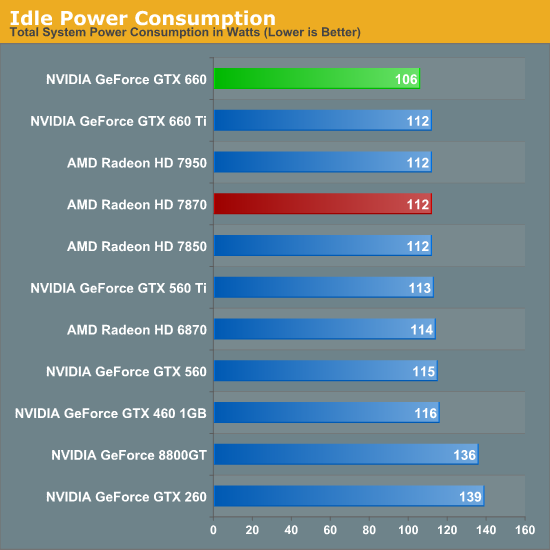

Starting as always with idle power, we immediately see an interesting outcome: GTX 660 has the lowest idle power usage. And it’s not just one or two watts either, but rather a 6W (at the wall) difference between the GTX 660 and both the Radeon HD 7800 series and the GTX 600 series. All of the current 28nm GPUs have offered refreshingly low idle power usage, but with the GTX 660 we’re seeing NVIDIA cut into what was already a relatively low idle power usage and shrink it even further.

NVIDIA’s claim is that their idle power usage is around 5W, and while our testing methodology doesn’t allow us to isolate the video card, our results corroborate a near-5W value. The biggest factors here seem to be a combination of die size and idle voltage; we naturally see a reduction in idle power usage as we move to smaller GPUs with fewer transistors to power up, but also NVIDIA’s idle voltage of 0.875v is nearly 0.1v below GK104’s idle voltage and 0.075v lower than GT 640 (GK107)’s idle voltage. The combination of these factors has pushed the GTX 660’s idle power usage to the lowest point we’ve ever seen for a GPU of this size, which is quite an accomplishment. Though I suspect the real payoff will be in the mobile space, as even with Optimus mobile GPUs have to spend some time idling, which is another opportunity to save power.
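To put the idle voltage figures in perspective, here is a small sketch using the common first-order approximation that switching power scales with the square of voltage (P ∝ C·V²·f). It assumes equal capacitance and frequency, which does not hold across different dies, and it ignores leakage, so treat the ratio as an illustration of why a 0.1v drop matters rather than as a prediction of measured idle power. The function and constant names are made up for this example.

```python
# Idle voltages (volts) quoted in the text.
GK106_IDLE_V = 0.875
GK104_IDLE_V = 0.975
# Derived from the "0.075v lower than GT 640 (GK107)" statement.
GK107_IDLE_V = GK106_IDLE_V + 0.075


def dynamic_power_ratio(v_new: float, v_old: float) -> float:
    """Switching-power ratio at v_new vs v_old, assuming P ~ C * V^2 * f
    with identical capacitance and frequency (a simplifying assumption)."""
    return (v_new / v_old) ** 2


delta_v = round(GK104_IDLE_V - GK106_IDLE_V, 3)
ratio = dynamic_power_ratio(GK106_IDLE_V, GK104_IDLE_V)

print(f"Idle voltage gap vs GK104: {delta_v}v")
print(f"Implied GK107 idle voltage: {GK107_IDLE_V:.3f}v")
print(f"V^2 scaling alone: {ratio:.3f}x the switching power of GK104 at idle")
```

Under those assumptions the voltage drop alone accounts for roughly a one-fifth reduction in switching power, before the smaller die is even considered.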

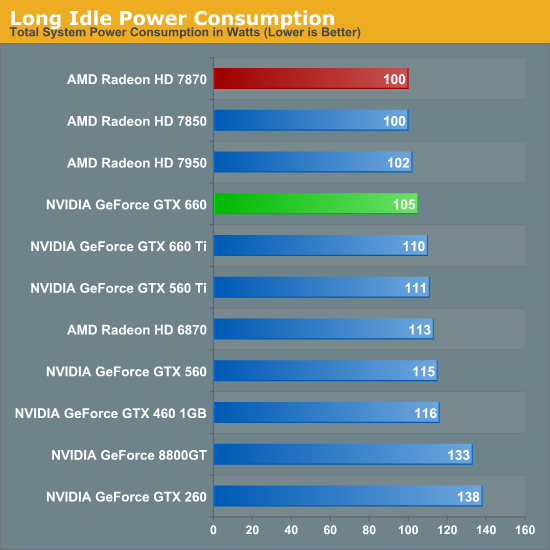

At this point the only area in which NVIDIA doesn’t outperform AMD is in the so-called “long idle” scenario, where AMD’s ZeroCore Power technology gets to kick in. 5W is nice, but next-to-0W is even better.

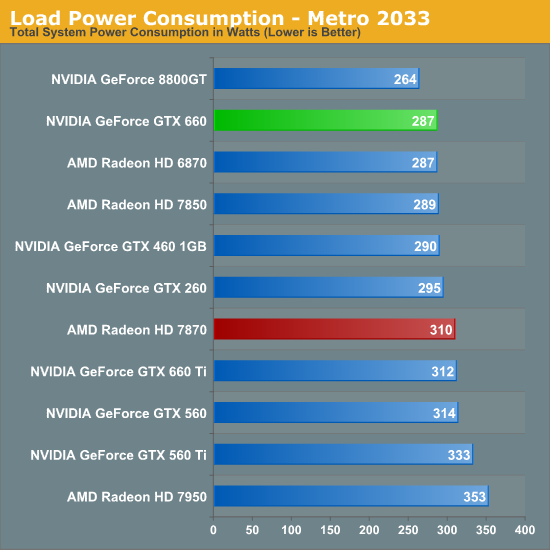

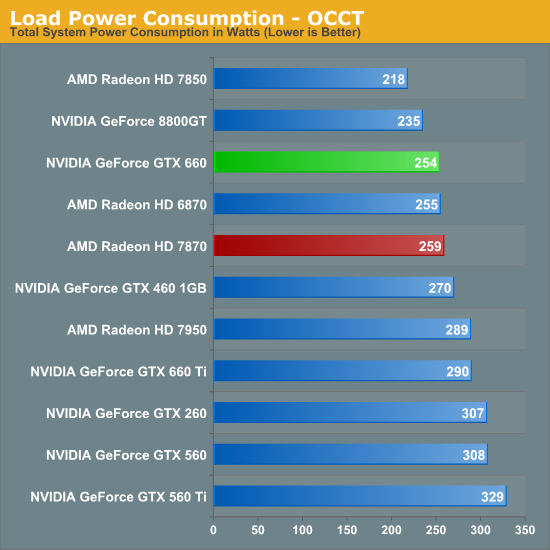

Moving on to load power consumption, given NVIDIA’s focus on efficiency with the Kepler family it comes as no great surprise that NVIDIA continues to hold the lead when it comes to load power consumption. The gap between GTX 660 and 7870 isn’t quite as large as the gap we saw between GTX 680 and 7970, but NVIDIA still has a convincing lead here, with the GTX 660 consuming 23W less at the wall than the 7870. This puts the GTX 660 at around the power consumption of the 7850 (a card with a similar TDP) or the GTX 460. On AMD’s part, Pitcairn is a more petite (and less compute-heavy) part than Tahiti, which means AMD doesn’t face nearly the disparity here that they do on the high-end.

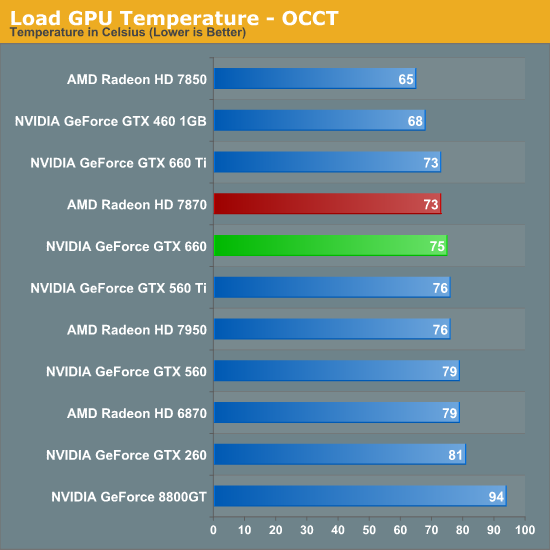

OCCT on the other hand has the GTX 660 and 7870 much closer, thanks to AMD’s much more aggressive throttling through PowerTune. This is one of the only times where the GTX 660 isn’t competitive with the 7850 in some fashion, though based on our experience our Metro results are more meaningful than our OCCT results right now.

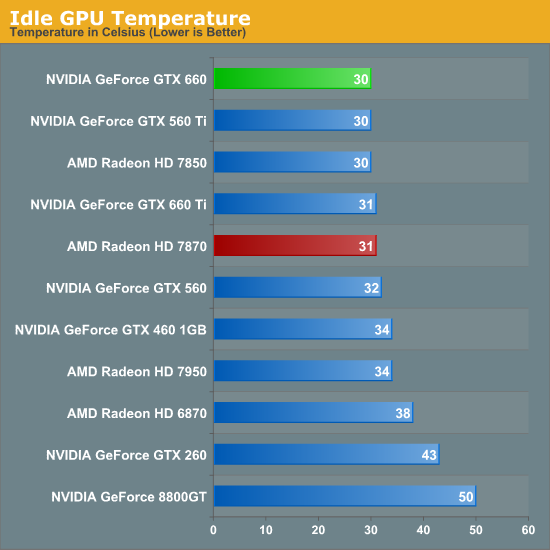

As for idle temperatures, there are no great surprises. A good blower can hit around 30C in our testbed, and that’s exactly what we see.

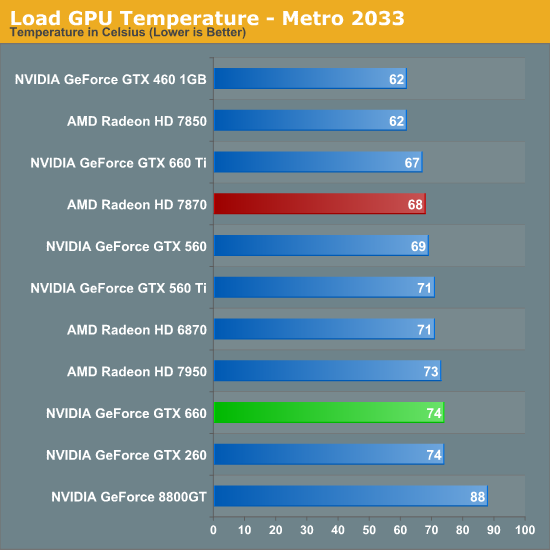

Temperatures under Metro look good enough, though despite their power advantage NVIDIA can’t keep up with the blower-equipped 7800 series. At the risk of spoiling our noise results, the 7800 series doesn’t do significantly worse for noise, so it’s not immediately clear why the GTX 660 is 6C warmer here. Our best guess would be that the GTX 660’s cooler just isn’t quite up to the potential of the 7800 series’ reference cooler.

OCCT actually closes the gap between the 7870 and the GTX 660 rather than widening it, which is the opposite of what we would expect given our earlier temperature data. Reaching the mid-70s, neither card is particularly cool, but both are still well below their thermal limits, meaning there’s plenty of thermal headroom to play with.

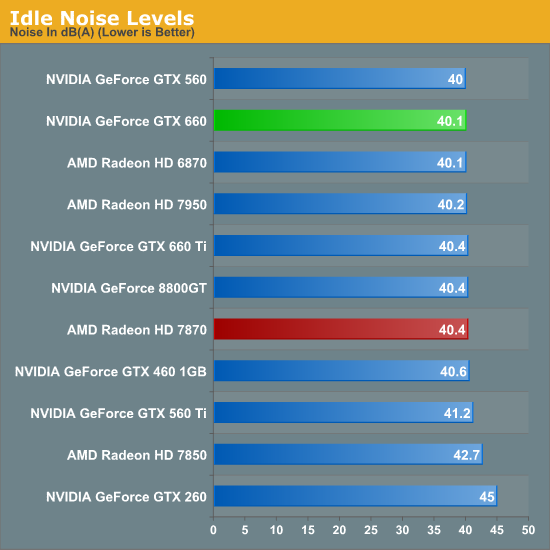

Last but not least we have our noise tests, starting with idle noise. Again there are no surprises here; the GTX 660’s blower is solid, producing no more noise than any other standard blower we’ve seen.

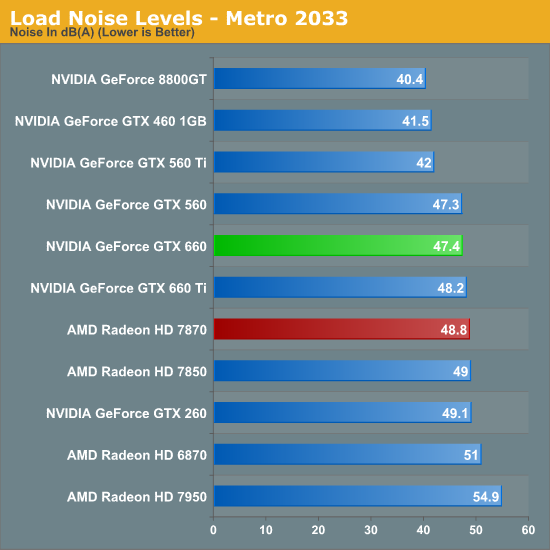

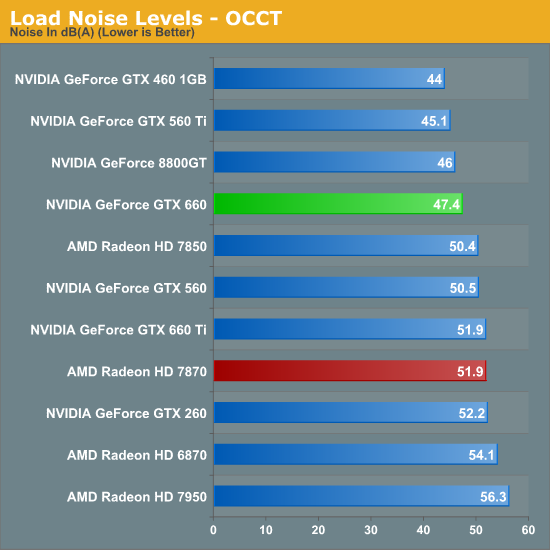

While the GTX 660 couldn’t beat the 7870 on temperatures under Metro, it can certainly beat the 7870 when it comes to noise. The difference isn’t particularly great – just 1.4dB – but every bit adds up, and 47.4dB is historically very good for a blower. However the use of a blower on the GTX 660 means that NVIDIA still can’t match the glory of the GTX 560 Ti or GTX 460; for that we’ll have to take a look at retail cards with open air coolers.

Similar to how AMD’s temperature lead eroded with OCCT, AMD’s slight loss in load noise testing becomes a much larger gap under OCCT. A 4.5dB difference is now solidly in the realm of noticeable, and further reinforces the fact that the GTX 660 is the quieter card under both normal and extreme situations.
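For readers who want to translate the decibel gaps into ratios, the sketch below applies the standard decibel definitions (10·log10 for sound power, 20·log10 for sound pressure). The helper names are illustrative, the 7870 figure is simply derived from the 47.4dB reading and the 1.4dB gap quoted above, and none of this says how loud a difference is perceived to be, which depends on the listener and the frequency content.

```python
def db_to_power_ratio(delta_db: float) -> float:
    """Sound power (intensity) ratio corresponding to a level difference in dB."""
    return 10 ** (delta_db / 10)


def db_to_pressure_ratio(delta_db: float) -> float:
    """Sound pressure ratio corresponding to a level difference in dB."""
    return 10 ** (delta_db / 20)


GTX660_METRO_DB = 47.4  # GTX 660 under Metro, from the text
METRO_GAP_DB = 1.4      # 7870 minus GTX 660 under Metro
OCCT_GAP_DB = 4.5       # 7870 minus GTX 660 under OCCT

# Implied 7870 reading under Metro, derived from the two figures above.
hd7870_metro_db = round(GTX660_METRO_DB + METRO_GAP_DB, 1)

for label, gap in (("Metro", METRO_GAP_DB), ("OCCT", OCCT_GAP_DB)):
    print(
        f"{label}: {gap}dB gap -> "
        f"{db_to_power_ratio(gap):.2f}x sound power, "
        f"{db_to_pressure_ratio(gap):.2f}x sound pressure"
    )
print(f"Implied 7870 Metro reading: {hd7870_metro_db}dB")
```

Because the scale is logarithmic, the OCCT gap corresponds to a much larger power ratio than the Metro gap, which fits the description of 1.4dB as small and 4.5dB as solidly noticeable.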

We’ll be taking an in-depth look at some retail cards later today with our companion retail card article, but with those results already in hand we can say that despite the use of a blower the “reference” GTX 660 holds up very well. Open air coolers can definitely beat a blower with the usual drawbacks (that heat has to go somewhere), but when a blower is only hitting 47dB, you already have a fairly quiet card. So even a reference GTX 660 (as unlikely as it is to appear in North America) looks good all things considered.

147 Comments


chizow - Monday, September 17, 2012

Yes, you chose to interject in this discussion and made a reference to the rebate in particular, continuing on as if the GTX 280 price was unwarranted. I think I corrected you by showing the GTX 280's price *WAS* warranted relative to last-gen, unlike the 7970, but even still, Nvidia cut prices and did right by their customers by issuing rebates. So, win-win for GTX 260/280 buyers, unlike this case of lose-lose for 7970/7950/7870 buyers.

"One comment about the rebate on the gtx 280, it's quite different from now. The 549$ radeon 7970 lost to a 499$ gtx 680 3 months after its launch.

The 650$ gtx 280 was on average 10% better and sometimes 10% worse than the 300$ radeon 4870 one month after its launch..."

And there you go again saying Nvidia was wrong to price the GTX 680 at $500, so you think it should be priced at $600 since it outperformed the $550 7970? And I guess the GTX 780 should be priced at $750 ad infinitum? This is what happens when you lack the perspective or understanding for a reasonable valuation or basis...I've already laid it out for you, this is why we use historical price and performance expectations....

Calling someone an idiot isn't disrespectful when they continually demonstrate a low level of intelligence and continually argue from a position of ignorance.

Galidou - Monday, September 17, 2012

I never said they were wrong in their price, did I? You take words I never used; do you see anywhere the sentence ''Nvidia was wrong to price it so high at launch''? I was just using this to show the situation is different, thus interjecting about the idea that AMD should issue rebates for the 7970 buyers, and this discussion went so far that I lost the beginning of it. The only reason I mentioned those 2 facts was to show the % relative to THIS gen comparing performance and price, that's IT. So to give a short answer, the price of the 7970 wasn't so bad even after the launch of the gtx 280, as opposed to the gtx 280 price when the radeon 4870 launched 1 month after for less than half the price of it, from a % of price difference and % of performance difference....

Sorry if I'm not making myself clear at all times, but this discussion is becoming so long and my English isn't as perfect as yours. French is my main language, so I tried to stay as clear as possible even if I know I made mistakes when explaining my OPINION. Not the facts, I won't say these are facts even if I took them from reliable websites, because to be a FACT I'd have to be sure 100% of the EARTH believes it the VERY SAME way I do, thanks.

And to this day you never told me you bought an AMD/ATI card and never refuted that you're an nvidia fanboy, thus proving you are. We all know when you have a chosen side, facts can be interpreted like you are doing, because the words you use are not the ones I hear from EVERYONE on earth and you can't prove everyone THINKS the way you do. It's not as simple as 2 + 2 = 4. If someone thought that every card above 500$, whatever the last gen was, is wrong, then the reasoning behind the gtx 280 pricing might not be as FACTUALLY good to everyone as you might think, even before the 4870..... as for the 7970.....

Galidou - Monday, September 17, 2012

''7970 wasn't so bad even after the launch of the gtx 280''

I meant even after the launch of the gtx 680.

chizow - Tuesday, September 18, 2012

You did say Nvidia was wrong to price at $500, which again is not accurate because if anything Nvidia's pricing was still too high relative to its softer than expected increase in performance relative to GTX 580.

The only reason Nvidia was able to get away with this tiny increase was due to the lackluster performance of Tahiti along with its ridiculous pricing, allowing Nvidia to beat AMD this round in both price and performance with only their 2nd tier midrange ASIC GK104.

You don't seem to think this or people's buying decisions has an impact on you, but it does, and it already has. It just means you pay more for performance today or you have to wait longer for that level of performance to trickle down to your pricepoint. I've already seen it, as has every single person who bought an AMD GPU since launch. The prices today are what they should've been at launch now that the market has corrected itself (due to Kepler's launches).

As for my buying decisions again...I have owned AMD in the past: a 9700pro, and a 5850 for my gf. There are some integral features Nvidia offers that I know AMD is deficient in, and that gap has only grown over the years to the point AMD products no longer satisfy my base expectations for graphics card purchases.

So while the two may technically compete in the same market, the products differ so much at this point for me that AMD is really no longer an option.

Some examples, since I'm sure you will ask, are features as basic as game-specific profiles and custom SLI and AA bit control. And no, AMD doesn't offer this, they just do what RadeonPro did for years by adding additional profiles without exposing the AA/SLI bits. Then there is 3D Vision support among many other less important features (PhysX, driver FXAA/AO, Vsync, better game bundles, better game support etc).

Btw, I had to end up selling the 5850 because it lacked support for something as simple as SM2.0 fur and native MSAA in Sims 3 Pets, bugs with AMD cards which my gf picked up on. That's when I threw the GTX 280 in that machine and she didn't even notice a difference (other than the new pet fur and AA). She's run GW2, Diablo3, Skyrim, and a bunch of newer games without AA at 1080p and they run great, think I could say the same for a 4870 4 years later?

Galidou - Monday, September 17, 2012

"End of the discussion, you're a disrespectful Nvidia fanboy."

Sorry, but saying you're a fanboy wasn't meant to disrespect you even if it was said in a harsh way, and for that I'm sorry, but you calling others idiots defending their honor... Nvidia fanboy in my language means you have a chosen side, and you do have one. I know you can't say you're not an Nvidia fanboy and you haven't refuted it either. Now that I've said it, I guess it will be easier for you to say in your answer: ''Well I'm not an nvidia fanboy because of X reasons''. But if you can't say it, it will mean I was right about the chosen side and the interpretation of the things you call ''facts'', because they're interpreted by the same eyes that favor this side.

I can't read what you're saying on any website in the same exact words you use, so it has in fact been interpreted by your brain, like my opinions are.

Your question is irrelevant, we were speaking of the pricing scheme at launch of their competitive parts to see if the asking price would have to force the company to issue rebates.

I'll answer you the way I remember I judged the card from my buyer perspective, because I can't judge for everyone else, not knowing what was in their head (I'll stop speaking like you do and saying THIS IS A FACT while I don't know what OTHER people thought in the whole world). Many of my friends back then had 8800gt because they got them dirt cheap (180$ CAD) and some of them had sli 8800gt running perfectly.

Seeing the gtx 280 at 650$ performance I was really shocked. We were in an era where Sli was becoming real popular as well as double gpu cards. And knowing you could already get easily the performance of a ''new and amazing card'' equalled on many levels by other CHEAPER solutions, I wasn't impressed but the price was totally out of what I pay for a card anyway.

Same for the 7970, I really think of both as not very good solutions. When I saw the benchmarks, I wasn't impressed at all, the only reason. I understand your point of view: the pricing of the new gen 28nm would normally drive down the price of all the generations before it, while the 7xxx series instead just placed itself around the price points corresponding to its performance. There was NO deal but there was no CROOK either. 650$ video cards and 800$ video cards (thinking about geforce 2 and 3 series) just never made any sense to me, and 550$ for a radeon 7970 doesn't make sense, but I KNEW it had to go up someday because of AMD driving the price down WAY too much. It's just unbearable to see people whine when AMD drives the prices down too much, telling they made a mistake, and then whine when they drive the prices back up to normal, putting all the fault on their shoulders again.....

You have to see the whole story sometimes and stop focusing on only one side of the medal IT HAD TO HAPPEN, while the 7970 wasn't priced right, 550$ to me, at launch WHATEVER the performance relative to the last gen is more acceptable than anything priced 600$ and above for gaming usage end of the line, per dollar performance was always and still remain TO ME in the 150-300$ range.

BTW you keep comparing the gtx 280 to its last gen counterpart ''8800gtx'', which it was (considering the 9xxx series was a refresh). But you keep comparing performance and price to the REFRESH of the last gen from ATI, because instead of just remaking the video cards and giving them new names, they made new more powerful parts (6950 and 6970).

If you compare the REAL last gen not refreshed parts, the 7970 would have to compare to the 5870 which it almost doubled the performance from. 300$ 4870, 380$ 5870, 550$ 7970, a return to ''normal things'', sorry if it did harm your eyes to the point you couldn't stop reminding everyone about the 4870 SO bad PRICING and hoping they keep it for the radeon 7970 BECAUSE when you make a mistake you cannot go back to normal after HEY? Without having some fanboys freaking out, HEY?

chizow - Monday, September 17, 2012

I don't need to refute anything, I'm a fan of "whatever is better" and Nvidia products are consistently better at meeting my needs and expectations.

As much as I'd love to go over all of that with you, I'm sure it's just a huge waste of time, but needless to say some people don't just look at FPS charts and sticker prices for buying guidance. Luckily for me however, Nvidia is still bound by this guidance in pricing their products, otherwise I might really be paying dearly for their parts. :)

In any case, if you can't even admit 7970 pricing was far worse than GTX 280 pricing at launch, there is no point in continuing this discussion with you. I won't even bother calling you a fanboy because honestly, it has nothing to do with fanboyism and everything to do with intellect, or lack thereof. These really are very simple metrics that everyone should use to make an informed buying decision.

Finally, you are right about the generational comparisons, but you can just as easily plug in the 5870 and see the 7970 is only 50% faster, ~40% faster than the 6970. Either way you can see the 7970 offers the worst increase in performance for the biggest increase in price of any new AMD or Nvidia generation or process in the last 10 years, and you really don't need to be a fanboy of either company to understand this. ;)

Galidou - Monday, September 17, 2012

''Calling someone an idiot isn't disrespectful when they continually demonstrate a low level of intelligence and continually argue from a position of ignorance.''

You don't even know me personally, English is not my main language, and you tell me I have a low level of intelligence. Who has a chosen side, and who's fit to speak of both sides of the medal for having PERSONALLY experimented with BOTH companies? I even apologized for calling you a fanboy in a harsh way and you have to push the insult further.

''7970 offers the worst increase in performance for the biggest increase in price''

That's right, but that doesn't justify, compared to the competition OUT NOW, the reason to issue rebates like for the 4870 case, that's all I meant from the freaking beginning... gosh it's hard. We just spoke about why this is happening: AMD back in the 4870 days had to regain popularity for being many years behind, WAY behind the pack. Right, they could have priced it higher but THEY DIDN'T, and good thing it put them back on track, bad thing for now because they have to spike the prices back to normal, gosh...

Galidou - Monday, September 17, 2012

''5870 and see the 7970 is only 50% faster''

Well that's a little more than that, I see from 40% to 110% faster but I'll go with your 50%, not bad considering that gtx 680 is 20-25% faster than gtx580....

http://www.techpowerup.com/reviews/NVIDIA/GeForce_...

But we all know these comparisons are useless because people mostly upgrade jumping 2-3 generations, except for heavy gamers, but no need to speak or discuss for them, they already know what they want.

chizow - Tuesday, September 18, 2012

I guess it depends on what review you prefer but yes, even your own preferred review shows ~50% just as I stated. So 150% performance for 150% pricing, terrible I know.

Also curious as to why you single out 680 performance over GTX 580, certainly 120-125% performance for 100% of the price is better than what AMD was asking....112% of the performance for 110% of the price.

Galidou - Thursday, September 20, 2012

Wait wait, AMD's 7970 price at launch was bad, but the gtx 680 will keep its price for a while. This was a TOCK in Intel's language, usually giving a huge increase in performance over last gen. The 7970 was the worst increase of performance for the worst, but Nvidia's 20-25% improvement over last gen is the worst improvement in performance for a TOCK in history, not speaking price-wise, just increase in performance. They went TOCK gtx 480, tick gtx 580, tick gtx 680, and we can guess the next one will be a TOCK with a big improvement in performance or another tick with a refresh of the 600 series. And add to that the series with an automatic overclock on all their cards, still it gave out 20-25% more than last gen.... Nothing amazing there either, sure the price seems better because it's the same as last gen.