The NVIDIA GeForce GTX 660 Review: GK106 Fills Out The Kepler Family

by Ryan Smith on September 13, 2012 9:00 AM EST

Power, Temperature, & Noise

As always, we’re wrapping up our look at a video card’s stock performance with a look at power, temperature, and noise. Unlike GTX 660 Ti, which was a harvested GK104 GPU, GTX 660 is based on the brand-new GK106 GPU, which will have interesting repercussions for power consumption. Scaling down a GPU by disabling functional units often has diminishing returns, so GK106 will effectively “reset” NVIDIA’s position as far as power consumption goes. As a reminder, NVIDIA’s power target here is a mere 115W, while their TDP is 140W.

GeForce GTX 660 Series Voltages

| Ref GTX 660 Ti Load | Ref GTX 660 Ti Idle | Ref GTX 660 Load | Ref GTX 660 Idle |
|---|---|---|---|
| 1.175v | 0.975v | 1.175v | 0.875v |

Stopping to take a quick look at voltages, even with a new GPU nothing has changed. NVIDIA’s standard voltage remains at 1.175v, the same as we’ve seen with GK104. However idle voltages are much lower, with the GK106 based GTX 660 idling at 0.875v versus 0.975v for the various GK104 desktop cards. As we’ll see later, this is an important distinction for GK106.

Up next, before we jump into our graphs, let’s take a look at the average core clockspeed during our benchmarks. Because of GPU Boost, the boost clock alone doesn’t give us the whole picture, so we’ve recorded the clockspeed of our GTX 660 during each of our benchmarks when running it at 1920x1200 and computed the average clockspeed over the duration of the benchmark.

GeForce GTX 600 Series Average Clockspeeds

| | GTX 670 | GTX 660 Ti | GTX 660 |
|---|---|---|---|
| Max Boost Clock | 1084MHz | 1058MHz | 1084MHz |
| Crysis | 1057MHz | 1058MHz | 1047MHz |
| Metro | 1042MHz | 1048MHz | 1042MHz |
| DiRT 3 | 1037MHz | 1058MHz | 1054MHz |
| Shogun 2 | 1064MHz | 1035MHz | 1045MHz |
| Batman | 1042MHz | 1051MHz | 1029MHz |
| Portal 2 | 988MHz | 1041MHz | 1033MHz |
| Battlefield 3 | 1055MHz | 1054MHz | 1065MHz |
| Starcraft II | 1084MHz | N/A | 1080MHz |
| Skyrim | 1084MHz | 1045MHz | 1084MHz |
| Civilization V | 1038MHz | 1045MHz | 1067MHz |

With an official boost clock of 1033MHz and a maximum boost of 1084MHz on our GTX 660, we see clockspeeds regularly vary between the two points. For the most part our average clockspeeds are slightly ahead of NVIDIA’s boost clock, while in CPU-heavy workloads (Starcraft II, Skyrim), we can almost sustain the maximum boost clock. Ultimately this means that the GTX 660 is spending most of its time near or above 1050MHz, which will have repercussions when it comes to overclocking.
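As a rough check on the "near or above 1050MHz" reading, the per-game GTX 660 averages from the clockspeed table can themselves be averaged. The following is a minimal sketch in Python, assuming every benchmark is weighted equally; the variable names are illustrative and not part of the original test setup.

```python
from statistics import mean

# Average GTX 660 core clock (MHz) per benchmark, copied from the table.
gtx660_avg_clocks = {
    "Crysis": 1047,
    "Metro": 1042,
    "DiRT 3": 1054,
    "Shogun 2": 1045,
    "Batman": 1029,
    "Portal 2": 1033,
    "Battlefield 3": 1065,
    "Starcraft II": 1080,
    "Skyrim": 1084,
    "Civilization V": 1067,
}

BOOST_CLOCK_MHZ = 1033  # NVIDIA's official boost clock for the GTX 660
MAX_BOOST_MHZ = 1084    # maximum boost clock of the tested sample

# Unweighted mean across benchmarks (an assumption: equal weight per game).
overall = mean(list(gtx660_avg_clocks.values()))

# Benchmarks whose average sits strictly above the official boost clock.
above_boost = [g for g, mhz in gtx660_avg_clocks.items() if mhz > BOOST_CLOCK_MHZ]

# Benchmarks that sustained the maximum boost clock on average.
at_max = [g for g, mhz in gtx660_avg_clocks.items() if mhz == MAX_BOOST_MHZ]

print(f"Mean of per-game averages: {overall:.1f} MHz")
print(f"Above the {BOOST_CLOCK_MHZ} MHz boost clock: {len(above_boost)} of {len(gtx660_avg_clocks)}")
print(f"Sustained {MAX_BOOST_MHZ} MHz: {', '.join(at_max)}")
```

An unweighted mean is only one way to summarize these numbers; weighting by benchmark duration would be equally defensible, but the per-benchmark durations are not given here.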

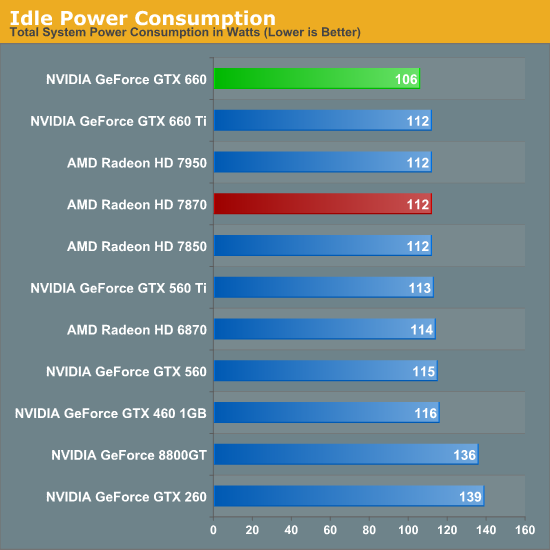

Starting as always with idle power, we immediately see an interesting outcome: GTX 660 has the lowest idle power usage. And it’s not just one or two watts either, but rather a 6W (at the wall) difference between the GTX 660 and both the Radeon HD 7800 series and the GTX 600 series. All of the current 28nm GPUs have offered refreshingly low idle power usage, but with the GTX 660 we’re seeing NVIDIA cut into what was already a relatively low idle power usage and shrink it even further.

NVIDIA’s claim is that their idle power usage is around 5W, and while our testing methodology doesn’t allow us to isolate the video card, our results corroborate a near-5W value. The biggest factors here seem to be a combination of die size and idle voltage; we naturally see a reduction in idle power usage as we move to smaller GPUs with fewer transistors to power up, but NVIDIA’s idle voltage of 0.875v is also 0.1v below GK104’s idle voltage and 0.075v lower than the GT 640 (GK107)’s idle voltage. The combination of these factors has pushed the GTX 660’s idle power usage to the lowest point we’ve ever seen for a GPU of this size, which is quite an accomplishment. Though I suspect the real payoff will be in the mobile space, as even with Optimus, mobile GPUs have to spend some time idling, which is another opportunity to save power.
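To get a feel for how much the lower idle voltage alone might contribute, the sketch below applies the common first-order approximation that dynamic (switching) power scales with the square of voltage. This is an assumption brought in for illustration, not something measured in this review: it ignores leakage, clock frequency, and die size, all of which also affect idle power. The function name is hypothetical.

```python
# Idle voltages (volts) quoted in the article.
GK106_IDLE_V = 0.875          # GTX 660
GK104_IDLE_V = 0.975          # GTX 660 Ti and other GK104 desktop cards
GK107_IDLE_V = 0.875 + 0.075  # GT 640, derived from the "0.075v lower" figure


def dynamic_power_ratio(v_new: float, v_old: float) -> float:
    """Ratio of dynamic power at v_new versus v_old, assuming P ~ V^2.

    Assumption: clock frequency and switched capacitance are held equal
    and leakage is ignored. Real idle power depends on all of these.
    """
    return (v_new / v_old) ** 2


for name, v_old in (("GK104", GK104_IDLE_V), ("GK107", GK107_IDLE_V)):
    ratio = dynamic_power_ratio(GK106_IDLE_V, v_old)
    print(f"GK106 idle voltage vs {name}: {ratio:.3f}x dynamic power "
          f"({(1 - ratio) * 100:.1f}% lower)")
```

Under that simplification, the voltage drop relative to GK104 would account for roughly a fifth less switching power before the smaller die is even considered.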

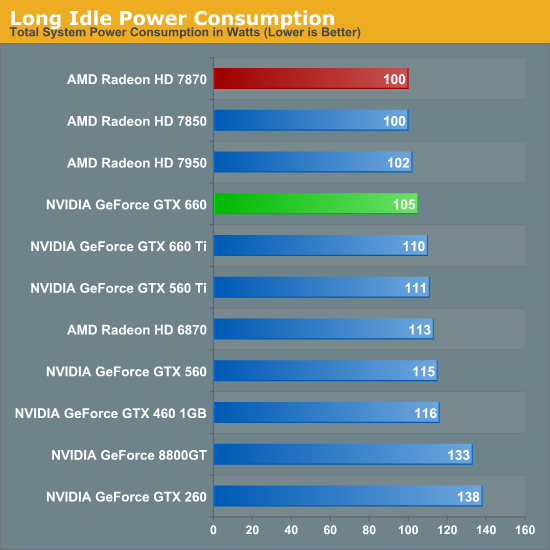

At this point the only area in which NVIDIA doesn’t outperform AMD is in the so-called “long idle” scenario, where AMD’s ZeroCore Power technology gets to kick in. 5W is nice, but next-to-0W is even better.

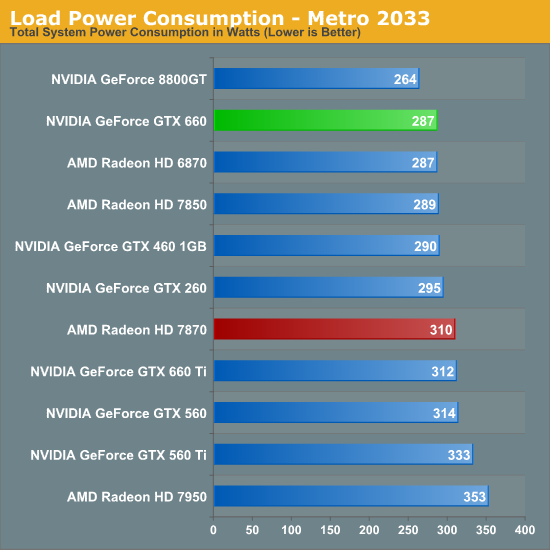

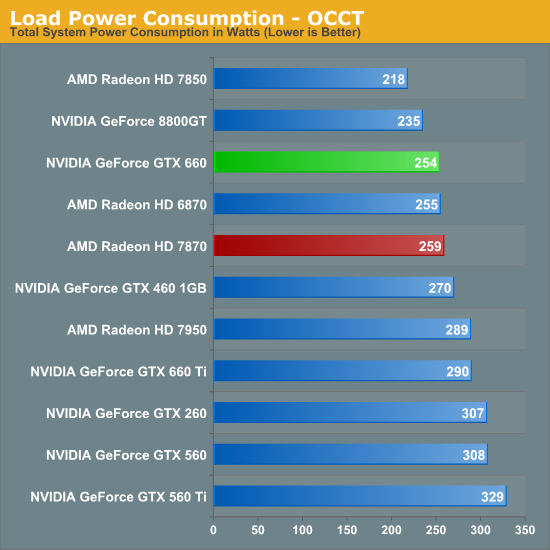

Moving on to load power consumption, given NVIDIA’s focus on efficiency with the Kepler family it comes as no great surprise that NVIDIA continues to hold the lead. The gap between GTX 660 and 7870 isn’t quite as large as the gap we saw between GTX 680 and 7970, but NVIDIA still has a convincing lead here, with the GTX 660 consuming 23W less at the wall than the 7870. This puts the GTX 660 at around the power consumption of the 7850 (a card with a similar TDP) or the GTX 460. On AMD’s part, Pitcairn is a more petite (and less compute-heavy) part than Tahiti, which means AMD doesn’t face nearly the disparity that they do at the high end.

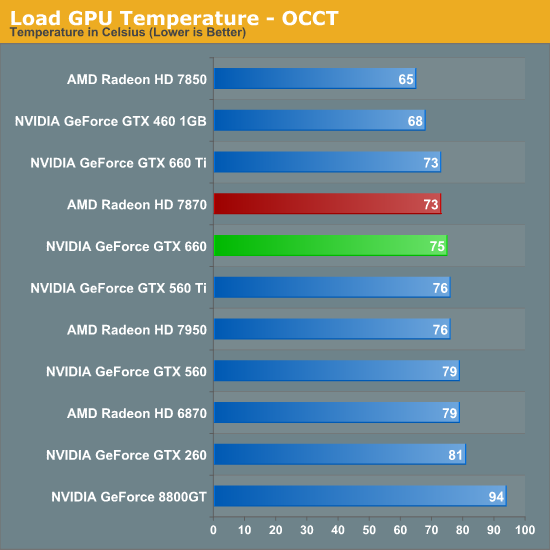

OCCT on the other hand has the GTX 660 and 7870 much closer, thanks to AMD’s much more aggressive throttling through PowerTune. This is one of the only times where the GTX 660 isn’t competitive with the 7850 in some fashion, though based on our experience our Metro results are more meaningful than our OCCT results right now.

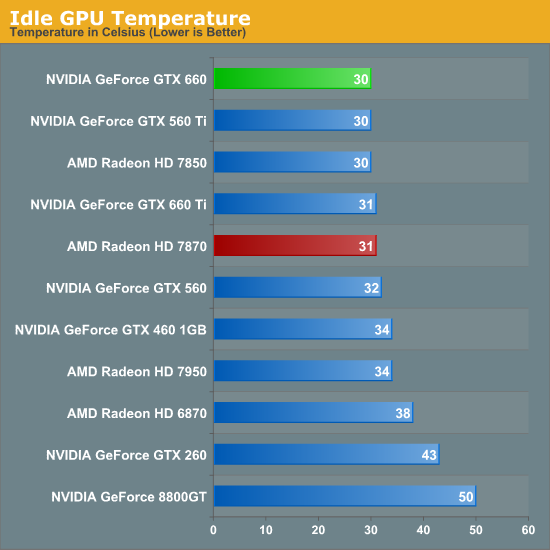

As for idle temperatures, there are no great surprises. A good blower can hit around 30C in our testbed, and that’s exactly what we see.

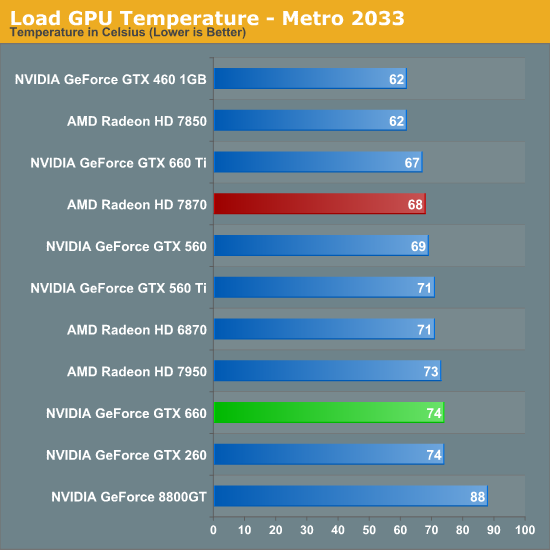

Temperatures under Metro look good enough, though despite their power advantage NVIDIA can’t keep up with the blower-equipped 7800 series. At the risk of spoiling our noise results, the 7800 series doesn’t do significantly worse for noise, so it’s not immediately clear why the GTX 660 is 6C warmer here. Our best guess would be that the GTX 660’s cooler just isn’t quite up to the potential of the 7800 series’ reference cooler.

OCCT actually closes the gap between the 7870 and the GTX 660 rather than widening it, which is the opposite of what we would expect given our earlier temperature data. Reaching the mid-70s, neither card is particularly cool, but both are still well below their thermal limits, meaning there’s plenty of thermal headroom to play with.

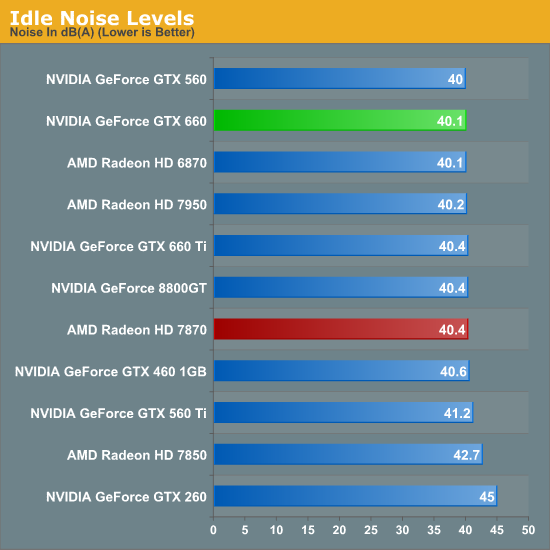

Last but not least we have our noise tests, starting with idle noise. Again there are no surprises here; the GTX 660’s blower is solid, producing no more noise than any other standard blower we’ve seen.

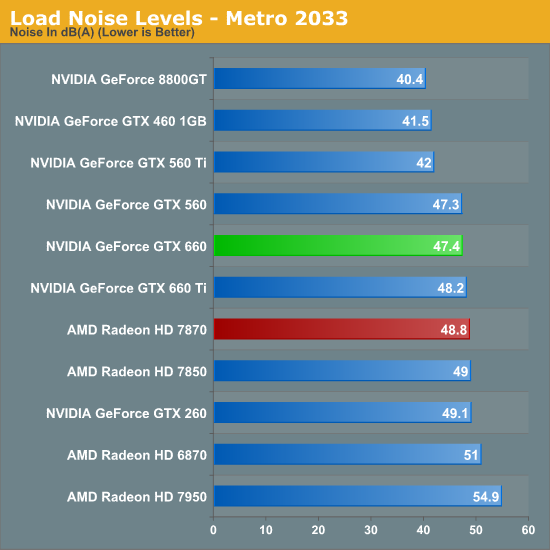

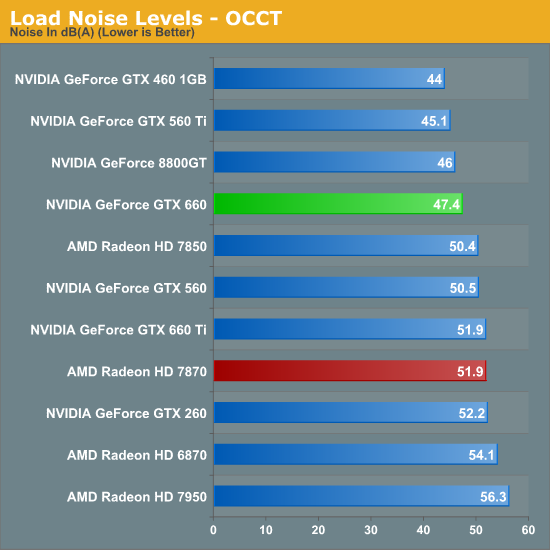

While the GTX 660 couldn’t beat the 7870 on temperatures under Metro, it can certainly beat the 7870 when it comes to noise. The difference isn’t particularly great – just 1.4dB – but every bit adds up, and 47.4dB is historically very good for a blower. However the use of a blower on the GTX 660 means that NVIDIA still can’t match the glory of the GTX 560 Ti or GTX 460; for that we’ll have to take a look at retail cards with open air coolers.

Similar to how AMD’s temperature lead eroded with OCCT, AMD’s slight loss in load noise testing becomes a much larger gap under OCCT. A 4.5dB difference is now solidly in the realm of noticeable, and further reinforces the fact that the GTX 660 is the quieter card under both normal and extreme situations.
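For a sense of scale on the 1.4dB and 4.5dB gaps, decibel differences can be converted back into linear ratios. The sketch below uses the standard definitions (a level difference of ΔL dB corresponds to an intensity ratio of 10^(ΔL/10) and a sound pressure ratio of 10^(ΔL/20)); the function names are illustrative, and perceived loudness does not track either ratio linearly.

```python
def intensity_ratio(delta_db: float) -> float:
    """Sound intensity (power) ratio for a level difference in dB."""
    return 10 ** (delta_db / 10)


def pressure_ratio(delta_db: float) -> float:
    """Sound pressure ratio for a level difference in dB."""
    return 10 ** (delta_db / 20)


# Level differences between the 7870 and the GTX 660 quoted in the text.
gaps_db = {"Metro": 1.4, "OCCT": 4.5}

for test, delta in gaps_db.items():
    print(f"{test}: {delta} dB -> {intensity_ratio(delta):.2f}x intensity, "
          f"{pressure_ratio(delta):.2f}x pressure")
```

On that scale the Metro gap is roughly a 1.4x difference in sound intensity and the OCCT gap roughly 2.8x, which is consistent with the first being subtle and the second being noticeable.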

We’ll be taking an in-depth look at some retail cards later today with our companion retail card article, but with those results already in hand we can say that despite the use of a blower the “reference” GTX 660 holds up very well. Open air coolers can definitely beat a blower with the usual drawbacks (that heat has to go somewhere), but when a blower is only hitting 47dB, you already have a fairly quiet card. So even a reference GTX 660 (as unlikely as it is to appear in North America) looks good all things considered.

147 Comments

TemjinGold - Thursday, September 13, 2012

"For today’s launch we were able to get a reference clocked card, but in order to do so we had to agree not to show the card or name the partner who supplied the card."

"Breaking open a GTX 660 (specifically, our EVGA 660 SC using the NV reference PCB),"

So... didn't you just break your promise as soon as you made it AND show a pic of the card right underneath?

Sufo - Thursday, September 13, 2012

Haha, shhhh!

Homeles - Thursday, September 13, 2012

Reading comprehension is such an endangered resource...

If it's the super clocked edition, it's obviously not a reference clocked card.

jonup - Thursday, September 13, 2012

Exactly my thoughts.

Ryan Smith - Thursday, September 13, 2012

Homeles is correct. That's one of the cards from the launch roundup we're publishing later today. The reference-clocked GTX 660 we tested is not in any way pictured (I'm not quite that daft).

knutjb - Saturday, September 15, 2012

No matter what you try to say it still reads poorly. It should be blatantly obvious about which card was which up front, which the article wasn't. I should have to dig when scanning through.

Also, your picking it as the better choice over a card that has been out how long, over slight differences... If nvivda really wanted to me to say wow I'll buy it now, the card would have been no more than 199 at launch. 10 bucks under is the best they can do for being late to the party? And you bought the strategy. I have been equally disappointed with AMD when they have done the same thing.

MrSpadge - Sunday, September 16, 2012

When reading Anadtech articles it's almost always safe to assume "he actually means what he's saying". Helps a lot with understanding.

thomp237 - Sunday, September 23, 2012

So where is this roundup? We are now 10 days on from your comment and still no signs of a roundup.

CeriseCogburn - Friday, October 12, 2012

I have been wondering where all the eyefinity amd fragglers have gone to, and now I know what has occurred.

Eyefinity is Dead.

These Kepler GPU's from nVidia all can do 4 monitors out of the box. Sure you might find a cheap version with 3 ports, whatever - that's the minority.

So all the amd fanboys have shut their fat traps about eyefinity, since nVidia surpassed them with A+ 4 easy monitors out of the box on all the Kelpers.

Thank you nVidia dearly for shutting the idiot pieholes of the amd fanboys.

It took me this long to comment on this matter because nVidia fanboys don't all go yelling in unison sheep fashion about stuff like the little angry losing amd fans do.

I have also noticed all the reviewers who are so used to being amd fan rave boys themselves almost never bring up multimonitor and abhor pointing out nVidia does 4 while amd only does 3 except in very expensive special cases.

Yeah that's notable too. As soon as amd got utterly and totally crushed, it was no longer a central topic and central theme for all the review sites like this place.

That 2 week Island vacation every year amd puts hundreds of these reporters on must be absolutely wonderful.

I do hope they are treated very well and have a great time.

EchoOne - Wednesday, November 21, 2012

LOL dude,the 660ti vs the 7950 in eyefinity would get destroyed.I know this because my friend has a comp build with a phenom 965be 4.2ghz and 660ti with 16gb of ram (i built this for him) and i have a fx 6100 4.7ghz,16gb ram and a 7950 i run a triple monitor setup

https://www.youtube.com/watch?v=ZRXGveviruw&fe...

And his 660ti DIED trying to play the games at that res and at the same settings as i do.He had to take down his graphics settings from say gta4 from max settings down to about medium and high (i run very high)

So yeah sure it can run a couple monitors out of the box but same with eyefinity.And trust me their nvidia surround is not as polished as eyefinity..But they get props for trying.