The GeForce GTX 660 Ti Review, Feat. EVGA, Zotac, and Gigabyte

by Ryan Smith on August 16, 2012 9:00 AM EST

Meet The Gigabyte GeForce GTX 660 Ti OC

Our final GTX 660 Ti of the day is Gigabyte’s entry, the Gigabyte GeForce GTX 660 Ti OC. Unlike the other cards in our review today, this is not a semi-custom card but rather a fully-custom card, which brings with it some interesting performance ramifications.

GeForce GTX 660 Ti Partner Card Specification Comparison

| | GeForce GTX 660 Ti (Ref) | EVGA GTX 660 Ti Superclocked | Zotac GTX 660 Ti AMP! | Gigabyte GTX 660 Ti OC |
|---|---|---|---|---|
| Base Clock | 915MHz | 980MHz | 1033MHz | 1033MHz |
| Boost Clock | 980MHz | 1059MHz | 1111MHz | 1111MHz |
| Memory Clock | 6008MHz | 6008MHz | 6608MHz | 6008MHz |
| Frame Buffer | 2GB | 2GB | 2GB | 2GB |
| TDP | 150W | 150W | 150W | ~170W |
| Width | Double Slot | Double Slot | Double Slot | Double Slot |
| Length | N/A | 9.5" | 7.5" | 10.5" |
| Warranty | N/A | 3 Year | 3 Year + Life | 3 Year |
| Price Point | $299 | $309 | $329 | $319 |

The big difference between a semi-custom and a fully-custom card is of course the PCB; fully-custom cards pair a custom cooler with a custom PCB instead of a reference PCB. Partners can go in a few different directions with custom PCBs, using them to reduce the BoM, to reduce the size of the card, or even to increase the capabilities of a product. For their GTX 660 Ti OC, Gigabyte has gone in the last of these directions, using a custom PCB to improve the card.

On the surface the specs of the Gigabyte GeForce GTX 660 Ti OC are relatively close to those of our other cards, primarily the Zotac. Like Zotac, Gigabyte is pushing the base clock to 1033MHz and the boost clock to 1111MHz, representing a sizable 118MHz (13%) base overclock and a 131MHz (13%) boost overclock respectively. Unlike the Zotac, however, there is no memory overclocking taking place, with Gigabyte shipping the card at the standard 6GHz.
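As a quick check on the percentages quoted above, here is a minimal Python sketch that derives each factory overclock from the clocks in the comparison table. The `factory_overclock` helper and the dictionary names are our own, for illustration only; the inputs are simply the reference and Gigabyte columns of the table.

```python
# Clocks in MHz from the comparison table above (memory is the effective data rate).
REFERENCE = {"base": 915, "boost": 980, "memory": 6008}
GIGABYTE_OC = {"base": 1033, "boost": 1111, "memory": 6008}


def factory_overclock(reference, card):
    """Return {clock_name: (delta_mhz, percent_over_reference)} for each clock."""
    result = {}
    for name, ref_mhz in reference.items():
        delta = card[name] - ref_mhz
        result[name] = (delta, 100.0 * delta / ref_mhz)
    return result


for name, (delta, pct) in factory_overclock(REFERENCE, GIGABYTE_OC).items():
    print(f"{name:>6}: +{delta} MHz ({pct:.1f}%)")
```

Run as-is, it reports a 12.9% base and a 13.4% boost overclock, both of which round to the 13% figures above, and no change to the memory clock.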

What sets Gigabyte apart here in the specs is that they’ve equipped their custom PCB with better VRM circuitry, which means NVIDIA is allowing them to increase their power target from the GTX 660 Ti standard of 134W to an estimated 141W. This may not sound like much (especially since we’re working with an estimate on the Gigabyte board), but as we’ve seen time and time again, GK104 is power-limited in most scenarios. A good GPU can reach higher boost bins than the available power will allow it to sustain, which means that raising the power target indirectly increases performance. We’ll see how this works in detail in our benchmarks, but for now it’s enough to say that even with the same GPU overclock as Zotac, the Gigabyte card is usually clocking higher.

Moving on, Gigabyte’s custom PCB measures 8.4” long, and it doesn’t bear a great resemblance to either the reference GTX 680 PCB or the reference GTX 670 PCB; as near as we can tell it’s completely custom. The design itself is nothing fancy (though like the reference GTX 670, the VRMs are located at the front), and as we’ve said before, its real significance is the higher power target it allows. Otherwise the memory layout is the same as the reference GTX 660 Ti, with 6 chips on the front and 2 on the back. Given its length we’d normally insist on some kind of stiffener for an open air card, but since Gigabyte has placed the GPU far enough back, the heatsink mounting alone provides enough rigidity to the card.

Sitting on top of Gigabyte’s PCB is a dual fan version of Gigabyte’s new Windforce cooler. The Windforce 2X cooler on their GTX 660 Ti is a bit of an odd dual fan cooler, pairing a relatively sparse aluminum heatsink with unusually large 100mm fans. This makes the card quite large and leaves it more fan than heatsink, which is not something we’ve seen before.

The heatsink itself is divided into three segments over the length of the card, with a pair of copper heatpipes connecting them. The bulk of the heatsink is over the GPU, while a smaller portion is at the rear and an even smaller portion is at the front, which is also attached to the VRMs. The frame holding the 100mm fans is then attached at the top, anchored at either end of the heatsink. Altogether this cooling contraption is both longer and taller than the PCB itself, bringing the card’s overall length to nearly 10”.

Finishing up the card we find the usual collection of ports and connections. This means 2 PCIe power sockets and 2 SLI connectors on the top, and 1 DL-DVI-D port, 1 DL-DVI-I port, 1 full size HDMI 1.4 port, and 1 full size DisplayPort 1.2 on the front. Meanwhile toolless case users will be happy to see that the heatsink is well clear of the bracket, so toolless clips are more or less guaranteed to work here.

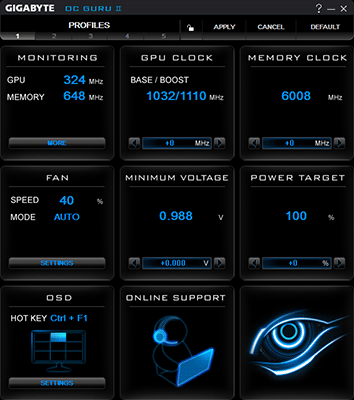

Rounding out the package is the usual collection of power adapters and a quick start guide. While it’s neither included in nor mentioned on the box, the Gigabyte GeForce GTX 660 Ti OC works with Gigabyte’s OC Guru II overclocking software, which is available on Gigabyte’s website. Gigabyte has had OC Guru for a number of years now, and with this being the first time we’ve seen OC Guru II, we can say it’s greatly improved from the functional and aesthetic mess that defined the previous versions.

While it won’t be winning any gold medals, in our testing OC Guru II gets the job done. Gigabyte offers all of the usual tweaking controls (including the necessary power target control), along with card monitoring/graphing and an OSD. Its only real sin is that Gigabyte hasn’t implemented sliders on their controls, meaning that you’ll need to press and hold buttons in order to dial in a setting. This is less than ideal, especially when you’re trying to crank up the 6000MHz memory clock by an appreciable amount.

Wrapping things up, the Gigabyte GeForce GTX 660 Ti OC comes with Gigabyte’s standard 3 year warranty. Gigabyte will be releasing it at an MSRP of $319, $20 over the price of a reference-clocked GTX 660 Ti and $10 less than the most expensive card in our roundup today.

313 Comments


claysm - Friday, August 24, 2012

I absolutely will ignore driver support for the 6 series cards. If you are using an AGP card, it's really REALLY time to upgrade. You are just as bad a fanboy for nVidia as any AMD guy here, moron. You are completely ignoring anything good about AMD just because it has AMD attached to it.

I'm completely confident that if AMD had introduced adaptive v-sync and PhysX, you would still say they suck, just because they came from AMD. If you read my post, it says that 660 Ti IS more powerful than the 7870. I was just pointing out that they are closer than they seem. I have no nVidia hatred, they have a lot of cool stuff.

And about the 660 Ti beating the 7950 at 5760x1080, look at the other three benchmarks, moron. The 7950 wins all of them, meaning BF3, Dirt 3, and Crysis 2. It only loses in Skyrim, by an average of 2 FPS. Why didn't you include those games in your response?

And when I left the games out, I said that they merely blew the average out of proportion, but that you can't leave them out because you want to. You still have to calculate them in the total. Moron.

And for the record, I'm running a GTX 570, moron.

CeriseCogburn - Friday, August 24, 2012

Look, the amd crew, you, talk your crap of lies, then I correct you. That's why.

Now, whatever you have that is "good by amd" go ahead and state it. Don't tell lies, don't spin, don't talk crap.

I'm waiting...

My guess is I'll have to correct your lies again, and your STUPID play dumb amnesia.

The reason I gave the one game where the 660Ti wins at that highest resolution is very obvious, isn't it? Your bud giradou or geradil or geritol, whatever his name is, was endlessly claiming that's the game he was buying the 7950 for...

LOL

ROFL

MHO

Whatever - do your worst.

CeriseCogburn - Saturday, August 25, 2012

" Fan noise of the card is very low in both idle and load, and temperatures are fine as well. Overall, MSI did an excellent job improving on the NVIDIA reference design, resulting in a significantly better card. The card's price of $330 is the same as all other GTX 660 Ti cards we reviewed today. At that price the card easily beats AMD's HD 7950 in all important criteria: performance, power, noise, heat, performance per Dollar, performance per Watt. "

LOL

power target 175W LOL

" It seems that MSI has added some secret sauce, no other board partner has, to their card's BIOS. One indicator of this is that they raised the card's default power limit from 130 W to 175 W, which will certainly help in many situations. During normal gaming, we see no increased power consumption due to this change. The card essentially uses the same power as other cards, but is faster - leading to improved performance per Watt.

Overclocking works great as well and reaches the highest real-life performance, despite not reaching the lowest GPU clock. This is certainly an interesting development. We will, hopefully, see more board partners pick up this change. "

Uh OH

bad news for you amd fanboys.....

HAHAHHAHAHAHAAAAAAAAAAAAAA

The MSI 660Ti is uncorked from the bios !

roflmao

Ambilogy - Friday, August 24, 2012

"I don't have a problem with that. 660Ti is hitting 1300+ on core and 7000+ on memory, and so you have a problem with that. The general idea you state, though I'M ALL FOR IT MAN!

A FEW FPS SHOULD NOT BE THE THING YOU FOCUS ON, ESPECIALLY WHEN #1 ! ALL FOR IT ! 100% !"

So you don't have a problem with performance? Good, because that actually means it's a competitive card, not an omfg card. And if you want to OC a 660, you could just OC a 7950 too, so I don't see the "omfg nvidia is so much better".

"Thus we get down to the added features- whoops ! nVidia is about 10 ahead on that now. That settles it.

Hello ? Can YOU accept THAT ?"

So essentially, when I ask how many people actually use 3D, because you seem to think 2% is unimportant in resolution, your answer is "well nvidia is 10 ahead because it has features ACCEPT BLINDLY". Not smart.

"Nope, it's already been proven it's a misnomer. Cores are gone , fps is too, before memory can be used. In the present, a bit faster now, cranked to the max, and FAILING on both sides with CURRENT GAMES - but some fantasy future is viable ? It's already been aborted.

You need to ACCEPT THAT FACT."

FPS are gone and the future is fantasy? AMD cards still perform. They are very GPGPU focused and they do excellently at that, and they still don't have bad gaming performance while doing it, because you can just buy a pre-OC'd version or something and still get awesome performance (very similar to your 660ti god). Tell me what is not enjoyable about playing with an AMD card, Mr. fanboy.

And the future, well, the future is GPGPU because it allows big improvements to computing, yet it's "fantasy"? It's only unimportant because nvidia had good GPGPU in the past and doesn't now?

"Okay, so whatever that means...all I see is insane amd fanboysim - that's the PR call of the loser - MARKETING to get their failure hyped.."

Yeah, calling fanboy before actually noticing that nvidia told the reviewers how to review the card so it looked better. Because get realistic: if they include a horrible AA technique for no reason at all, something is going on under the table, you know. Haven't you noticed? There's a lot of discrepancy in 660ti benchmarks around the web, from sites where the 660 loses to the 870 Radeons to sites where it beats the 970s. There is not a single reliable review now. Do you want to see the truth? Buy a 660ti, an 870 and a 950, and compare the 3. You will have the truth: that they perform like they are priced, and AMD cards are not shit.

CeriseCogburn - Saturday, August 25, 2012

Hey, I answered the guy's 3 questions. I made my points. I didn't say half of what you're talking about, but who cares.

The guy killed himself with point #1, so that's the end of it.

CeriseCogburn - Saturday, August 25, 2012

Oh stop the crap. nVidia is 10 features ahead. I'm not the one who talked about resolution usage, so you've got the wrong fellow there. 3D isn't the only feature... but then you know that, but will blabber like an idiot anyway.

Go away.

claysm - Saturday, August 25, 2012

"I'm not the one who talked about resolution usage". You can't fault him for mixing up his trolls. Since almost everything you and TheJian have said is complete shit, it's hard to keep track of who said what. And if you can objectively prove that I've lied about anything, I really would like to see it. And I mean objectively, not your usual response of entirely subjective 'AMD suckz lololol' presented in almost unreadably bad grammar.

I take that back, I won't read it anyways, since I already know it'll be an nVidia love fest regardless of what the facts state. And I'll reiterate that I'm using an nVidia card. Moron.

CeriseCogburn - Saturday, August 25, 2012

Oh it is not, he showed it all to be true and so does the review man. Get out of your freaking goggled amd fanboy gourd.

Look, I just realized another thing that doesn't bode well for you..

What nVidia did here was make a very good move, and the losses of amd on the Steam Hardware Survey at the top end are going to increase....

The amd fanboy is constantly crying about price - they're going to look at $299 with the excellent new game for free and PASS on the more expensive 7950 that Russian is promoting EVEN MORE now.

Here let me get you the little info you're now curious about. ( I hope but maybe you're just a scowling amd fanboy liar still completely uninterested because you never got 1 fact according to you LOL sad what you are it's sad)

Aug 15th 2012 prdola0

" Looking at Steam Survey, it is clear why AMD is so desperate. GTX680 has 0.90% share, while even the 7850 lineup has less, just 0.62%. If you look at the GTX670, it has 0.99%. The HD7970 has only 0.54%, about half of what GTX680 has, which is funny considering that the GTX680 is selling only half the time compared to HD7970. It means that GTX680 is selling 4 times faster."

ROFL...

No one is listening to you fools, Russian included... now it's going to GET WORSE for amd....

CeriseCogburn - Saturday, August 25, 2012

forgot link, sorry, page 2 comment http://www.anandtech.com/show/6152/amd-announces-n...

Okay, and that stupid 7950 boost REALLY IS CRAPPY CHIPS from the low end loser harvest they had to OVER VOLT to get to their boost...

LOL

LOL

OLO

I mean there it is man - the same JUNK amd fanboys always use to attack nVidia, talking about rejected chips for lower clocked down the line variants, has NOW COME TRUE IN FULL BLOWN REALITY FOR AMD....~!

HAHHAHAHAHAHAHAHHAHA

AHHAHAHAHAHAA

omg !

hahahahahahhahaha

ahhahaha

ahaha

Holy moly. hahahahahhahahha

CeriseCogburn - Thursday, August 23, 2012

Plus here the 660Ti wins at 5760x1080, beating the 7950, the 7950 Boost, and the 7970... http://www.bit-tech.net/hardware/2012/08/16/nvidia...

Skyrim. So, throw that out too - add Skyrim to your too short shortlist. Throw out your future mem whine. Throw out your OC whine readied for 660Ti...

Yep. So the argument left is " I wuv amd ! " - or the more usual " I OWS nVidia ! " ( angry face )